Content moderation

On Internet websites that invite users to post comments, content moderation is the process of detecting contributions that are irrelevant, obscene, illegal, harmful, or insulting, as opposed to useful or informative ones; it is also frequently used for censorship or the suppression of opposing viewpoints. Its purpose is to remove problematic content, apply a warning label to it, or allow users to block and filter content themselves.[1]

Various types of Internet sites permit user-generated content such as comments, including Internet forums, blogs, and news sites powered by software such as phpBB, a wiki, or PHP-Nuke. Depending on the site's content and intended audience, the site's administrators decide what kinds of user comments are appropriate, then delegate the responsibility of sifting through comments to subordinate moderators. Most often, they attempt to eliminate trolling, spamming, or flaming, although this varies widely from site to site.

Major platforms use a combination of algorithmic tools, user reporting and human review.[1] Social media sites may also employ content moderators to manually inspect or remove content flagged for hate speech or other objectionable content. Other content issues include revenge porn, graphic content, child abuse material and propaganda.[1] Some websites must also make their content hospitable to advertisements.[1]

In the United States, content moderation is governed by Section 230 of the Communications Decency Act, and several cases concerning the issue have reached the United States Supreme Court, such as Moody v. NetChoice, LLC.

Supervisor moderation

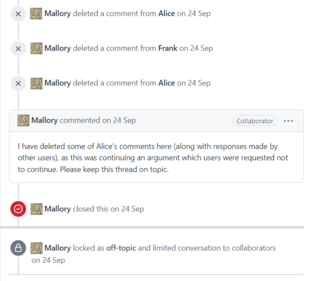

Also known as unilateral moderation, this kind of moderation system is often seen on Internet forums. A group of people are chosen by the site's administrators (usually on a long-term basis) to act as delegates, enforcing the community rules on their behalf. These moderators are given special privileges to delete or edit others' contributions, to exclude people based on their e-mail address or IP address, or both, and generally attempt to remove negative contributions throughout the community. They act as an invisible backbone, underpinning the social web in a crucial but undervalued role.[2]

Facebook

In 2017, Facebook increased its number of content moderators from 4,500 to 7,500 because of legal and other controversies. In Germany, Facebook was responsible for removing hate speech within 24 hours of its posting.[3] As of 2021, according to Frances Haugen, the number of Facebook employees responsible for content moderation was much smaller.[4]

If you have a new Page on Facebook, you can manage the following moderation settings:

- Comment ranking: See the most relevant comments on your public posts first.

- Profanity filter: You can choose whether to block profanity from your Page, and to what degree. Facebook determines what to block by using the most commonly reported words and phrases marked as offensive by the community.

- Country restrictions: You can choose to show your Page to people in certain countries or hide it from people in others. If no countries are listed, your Page will be visible to everyone.

- Age restrictions: When you select an age restriction for your Page, people younger than the age won't be able to see your Page or its content.

- Keyword blocklist: Hide comments containing certain words from your timeline.

- Tag review: Review tags people add to your posts before the tags appear on Facebook.

- Blocking: Once you block someone, that person can no longer see things that you post on your timeline, tag your Page, invite your Page to events or groups or start a conversation with your Page.

- Inbox comment moderation: Manage comment moderation for Inbox from Meta Business Suite.[5]
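
As a rough illustration of how a keyword blocklist of the kind listed above might work, the following Python sketch hides any comment that contains a blocked word. It does not describe Facebook's actual implementation; the function name `is_hidden`, the word-splitting rule, and the sample blocklist are all assumptions made for the example.

```python
import re

def is_hidden(comment: str, blocklist: set[str]) -> bool:
    """Return True if the comment contains any blocklisted word.

    Hypothetical helper: matching is case-insensitive and on whole
    words only, so "scammer" does not match the blocked word "scam".
    """
    words = re.findall(r"[a-z0-9']+", comment.lower())
    return any(word in blocklist for word in words)

# Sample data (invented for illustration).
blocklist = {"spamword", "scam"}
comments = ["Great post!", "This is a SCAM, click here"]

# Only comments that pass the filter remain visible on the timeline.
visible = [c for c in comments if not is_hidden(c, blocklist)]
```

Whole-word matching is a deliberate simplification here: it avoids hiding innocent words that merely contain a blocked string, at the cost of missing deliberate misspellings.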

Twitter

Social media site Twitter has a suspension policy. Between August 2015 and December 2017 it suspended over 1.2 million accounts for terrorist content in an effort to reduce the number of followers and amount of content associated with the Islamic State.[6] Following the acquisition of Twitter by Elon Musk in October 2022, content rules have been weakened across the platform in an attempt to prioritize free speech.[7] However, the effects of this campaign have been called into question.[8][9]

Commercial content moderation

Commercial Content Moderation is a term coined by Sarah T. Roberts to describe the practice of "monitoring and vetting user-generated content (UGC) for social media platforms of all types, in order to ensure that the content complies with legal and regulatory exigencies, site/community guidelines, user agreements, and that it falls within norms of taste and acceptability for that site and its cultural context."[10]

While at one time this work may have been done by volunteers within the online community, for commercial websites it is largely achieved by outsourcing the task to specialized companies, often in low-wage areas such as India and the Philippines. Outsourcing of content moderation jobs grew as a result of the social media boom. With the overwhelming growth of users and UGC, companies needed many more employees to moderate the content. In the late 1980s and early 1990s, tech companies began to outsource jobs to foreign countries with an educated workforce that was willing to work for low wages.[11]

As of 2010, the work consisted of viewing, assessing and deleting disturbing content.[12] Wired reported in 2014 that employees may suffer psychological damage.[13][14][15][2][16] In 2017, The Guardian reported that secondary trauma may arise, with symptoms similar to PTSD.[17] Some large companies such as Facebook offer psychological support[17] and increasingly rely on artificial intelligence to sort out the most graphic and inappropriate content, but critics claim that this is insufficient.[18] In 2019, NPR called it a job hazard.[19]

Facebook

Facebook has decided to create an oversight board that will decide which content remains and which is removed, an idea proposed in late 2018. This "Supreme Court" at Facebook is intended to replace ad hoc decision-making.[19]

Distributed moderation

User moderation

User moderation allows any user to moderate any other user's contributions. Billions of people are currently making decisions on what to share, forward or give visibility to on a daily basis.[20] On a large site with a sufficiently large active population, this usually works well, since relatively small numbers of troublemakers are screened out by the votes of the rest of the community.
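
The screening-by-votes idea described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the scheme of any particular site: the `Comment` class, the one-vote-per-user rule, and the visibility threshold of -2 are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Comment:
    """A user contribution with one up or down vote per user (hypothetical model)."""
    text: str
    votes: dict[str, int] = field(default_factory=dict)  # user id -> +1 or -1

    def vote(self, user_id: str, value: int) -> None:
        if value not in (1, -1):
            raise ValueError("vote must be +1 or -1")
        # A repeat vote by the same user replaces the earlier one.
        self.votes[user_id] = value

    @property
    def score(self) -> int:
        return sum(self.votes.values())

def visible_comments(comments: list[Comment], threshold: int = -2) -> list[Comment]:
    """Keep only comments whose net score is at or above the threshold."""
    return [c for c in comments if c.score >= threshold]

# Three community members vote the same way on two comments.
helpful = Comment("Useful link")
troll = Comment("Flamebait")
for user in ("alice", "bob", "carol"):
    helpful.vote(user, 1)
    troll.vote(user, -1)

shown = visible_comments([helpful, troll])
```

Limiting each user to a single vote per comment means a lone troublemaker cannot bury a contribution alone, which is one reason the approach depends on a sufficiently large active population.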

User moderation can also take the form of reactive moderation. This type of moderation depends on users of a platform or site to report content that is inappropriate and breaches community standards. In this process, when users encounter an image or video they deem unfit, they can click the report button. The complaint is then filed and queued for moderators to review.[21]
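
The report-and-queue flow can be illustrated with a short Python sketch. It is only a schematic under assumptions of our own: the `ReportQueue` class, its method names, and the rule that content already awaiting review is not queued twice are hypothetical and not drawn from any real platform.

```python
from collections import deque

class ReportQueue:
    """First-in, first-out queue of user reports awaiting moderator review."""

    def __init__(self) -> None:
        self._pending = deque()    # (content_id, reason) pairs, oldest first
        self._queued_ids = set()   # content ids currently awaiting review

    def report(self, content_id: str, reason: str) -> None:
        """File a complaint; content already in the queue is not added again."""
        if content_id not in self._queued_ids:
            self._queued_ids.add(content_id)
            self._pending.append((content_id, reason))

    def next_for_review(self):
        """Return the oldest pending report, or None if the queue is empty."""
        if not self._pending:
            return None
        content_id, reason = self._pending.popleft()
        self._queued_ids.discard(content_id)
        return content_id, reason

queue = ReportQueue()
queue.report("img-1", "graphic content")
queue.report("img-1", "spam")          # duplicate report, ignored
queue.report("vid-2", "harassment")

first = queue.next_for_review()
second = queue.next_for_review()
third = queue.next_for_review()
```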


See also

- Like button

- Meta-moderation system

- Moody v. NetChoice, LLC

- Recommender system

- Trust metric

- We Had to Remove This Post

References

1. Grygiel, Jennifer; Brown, Nina (June 2019). "Are social media companies motivated to be good corporate citizens? Examination of the connection between corporate social responsibility and social media safety". Telecommunications Policy. 43 (5): 2, 3. doi:10.1016/j.telpol.2018.12.003. S2CID 158295433. Retrieved 25 May 2022.

2. "Invisible Data Janitors Mop Up Top Websites - Al Jazeera America". aljazeera.com.

3. "Artificial intelligence will create new kinds of work". The Economist. Retrieved 2017-09-02.

4. Jan Böhmermann, ZDF Magazin Royale. Facebook Whistleblowerin Frances Haugen im Talk über die Facebook Papers.

5. "About moderation for new Pages on Facebook". Meta Business Help Centre. Retrieved 2023-08-21.

6. Gartenstein-Ross, Daveed; Koduvayur, Varsha (26 May 2022). "Texas's New Social Media Law Will Create a Haven for Global Extremists". foreignpolicy.com. Foreign Policy. Retrieved 27 May 2022.

7. "Elon Musk on X: "@essagar Suspending the Twitter account of a major news organization for publishing a truthful story was obviously incredibly inappropriate"". Twitter. Retrieved 2023-08-21.

8. Burel, Grégoire; Alani, Harith; Farrell, Tracie (2022-05-12). "Elon Musk could roll back social media moderation – just as we're learning how it can stop misinformation". The Conversation. Retrieved 2023-08-21.

9. Fung, Brian (June 2, 2023). "Twitter loses its top content moderation official at a key moment". CNN News.

10. "Behind the Screen: Commercial Content Moderation (CCM)". Sarah T. Roberts | The Illusion of Volition. 2012-06-20. Retrieved 2017-02-03.

11. Elliott, Vittoria; Parmar, Tekendra (22 July 2020). ""The darkness and despair of people will get to you"". Rest of World.

12. Stone, Brad (July 18, 2010). "Concern for Those Who Screen the Web for Barbarity". The New York Times.

13. Adrian Chen (23 October 2014). "The Laborers Who Keep Dick Pics and Beheadings Out of Your Facebook Feed". WIRED. Archived from the original on 2015-09-13.

14. "The Internet's Invisible Sin-Eaters". The Awl. Archived from the original on 2015-09-08.

15. "Western News - Professor uncovers the Internet's hidden labour force". Western News. March 19, 2014.

16. "Should Facebook Block Offensive Videos Before They Post?". WIRED. 26 August 2015.

17. Olivia Solon (2017-05-04). "Facebook is hiring moderators. But is the job too gruesome to handle?". The Guardian. Retrieved 2018-09-13.

18. Olivia Solon (2017-05-25). "Underpaid and overburdened: the life of a Facebook moderator". The Guardian. Retrieved 2018-09-13.

19. Gross, Terry. "For Facebook Content Moderators, Traumatizing Material Is A Job Hazard". NPR.org.

20. Hartmann, Ivar A. (April 2020). "A new framework for online content moderation". Computer Law & Security Review. 36: 3. doi:10.1016/j.clsr.2019.105376. S2CID 209063940. Retrieved 25 May 2022.

21. Grimes-Viort, Blaise (December 7, 2010). "6 types of content moderation you need to know about". Social Media Today.

Further reading

- Sarah T. Roberts (2019). Behind the Screen: Content Moderation in the Shadows of Social Media. Yale University Press. ISBN 978-0300235883.

External links

- Slashdot – A definitive example of user moderation

- Fundamental Basics of Content Moderation

- Cliff Lampe and Paul Resnick: Slash(dot) and burn: distributed moderation in a large online conversation space. Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Vienna, Austria 2005, 543–550.

- Hamed Alhoori, Omar Alvarez, Richard Furuta, Miguel Muñiz, Eduardo Urbina: Supporting the Creation of Scholarly Bibliographies by Communities through Online Reputation Based Social Collaboration. ECDL 2009: 180–191