Direct Rendering Infrastructure

| Original author(s) | Precision Insight, Tungsten Graphics |
|---|---|
| Developer(s) | freedesktop.org |
| Initial release | August 1998[1] |
| Stable release | 2.4.x / February 2009 |
| Written in | C |
| Platform | POSIX |
| Type | Framework / API |
| License | MIT and other licenses[2] |
| Website | dri |

| Original author(s) | Kristian Høgsberg et al. |
|---|---|
| Developer(s) | freedesktop.org |
| Initial release | September 4, 2008[3] |
| Stable release | 2.8 / July 11, 2012[4] |
| Written in | C |
| Platform | POSIX |
| Type | Framework / API |
| License | MIT and other licenses[2] |
| Website | dri |

| Original author(s) | Keith Packard et al. |
|---|---|
| Developer(s) | freedesktop.org |
| Initial release | November 1, 2013[5] |
| Stable release | 1.0 / November 1, 2013[5] |
| Written in | C |
| Platform | POSIX |
| Type | Framework / API |
| License | MIT and other licenses[2] |
| Website | dri |

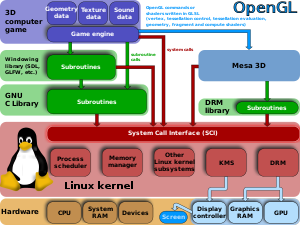

The Direct Rendering Infrastructure (DRI) is the framework comprising the modern Linux graphics stack which allows unprivileged user-space programs to issue commands to graphics hardware without conflicting with other programs.[6] The main use of DRI is to provide hardware acceleration for the Mesa implementation of OpenGL. DRI has also been adapted to provide OpenGL acceleration on a framebuffer console without a display server running.[7]

DRI implementation is scattered through the X Server and its associated client libraries, Mesa 3D and the Direct Rendering Manager kernel subsystem.[6] All of its source code is free software.

Overview

In the classic X Window System architecture the X Server is the only process with exclusive access to the graphics hardware, and therefore the one which does the actual rendering on the framebuffer. All that X clients do is communicate with the X Server to dispatch rendering commands. Those commands are hardware independent, meaning that the X11 protocol provides an API that abstracts the graphics device so the X clients don't need to know or worry about the specifics of the underlying hardware. Any hardware specific code lives inside the Device Dependent X, the part of the X Server that manages each type of video card or graphics adapter and which is also often called the video or graphics driver.

The rise of 3D rendering has shown the limits of this architecture. 3D graphics applications tend to produce large amounts of commands and data, all of which must be dispatched to the X Server for rendering. As the amount of inter-process communication (IPC) between the X client and X Server increased, the 3D rendering performance suffered to the point that X driver developers concluded that in order to take advantage of 3D hardware capabilities of the latest graphics cards a new IPC-less architecture was required. X clients should have direct access to graphics hardware rather than relying on a third party process to do so, saving all the IPC overhead. This approach is called "direct rendering" as opposed to the "indirect rendering" provided by the classical X architecture. The Direct Rendering Infrastructure was initially developed to allow any X client to perform 3D rendering using this "direct rendering" approach.

Nothing prevents DRI from being used to implement accelerated 2D direct rendering within an X client.[3] In practice, no one has needed to do so, because 2D indirect rendering performance was good enough.

Software architecture

The basic architecture of the Direct Rendering Infrastructure involves three main components:[8]

- the DRI client —for example, an X client performing "direct rendering"— needs a hardware specific "driver" able to manage the current video card or graphics adapter in order to render on it. These DRI drivers are typically provided as shared libraries to which the client is dynamically linked. Since DRI was conceived to take advantage of 3D graphics hardware, the libraries are normally presented to clients as hardware accelerated implementations of a 3D API such as OpenGL, provided by either the 3D hardware vendor itself or a third party such as the Mesa 3D free software project.

- the X Server provides an X11 protocol extension (the DRI extension) that the DRI clients use to coordinate with both the windowing system and the DDX driver.[9] As part of the DDX driver, it is quite common for the X Server process to also link dynamically to the same DRI driver as the DRI clients, but in order to provide hardware-accelerated 3D rendering to X clients that use the GLX extension for indirect rendering (for example, remote X clients that cannot use direct rendering). For 2D rendering, the DDX driver must also take into account the DRI clients using the same graphics device.

- the access to the video card or graphics adapter is regulated by a kernel component called the Direct Rendering Manager (DRM).[10] Both the X Server's DDX driver and each X client's DRI driver must use DRM to access the graphics hardware. DRM synchronizes access to the shared resources of the graphics hardware (such as the command queue, the card registers, the video memory and the DMA engines), ensuring that the concurrent accesses of the competing user-space processes do not interfere with each other. DRM also serves as a basic security enforcer that does not allow any X client to access the hardware beyond what it needs to perform the 3D rendering.

DRI1

In the original DRI architecture, due to the memory size of video cards at that time, there was a single instance of the screen front buffer and back buffer (and also of the ancillary depth buffer and stencil buffer), shared by all the DRI clients and the X Server.[11][12] All of them rendered directly onto the back buffer, which was swapped with the front buffer during the vertical blanking interval.[11] In order to render to the back buffer, a DRI process had to ensure that the rendering was clipped to the area reserved for its window.[11][12]

Synchronization with the X Server was done through signals and a shared memory buffer called the SAREA.[12] Access to the DRM device was exclusive, so any DRI client had to lock it at the beginning of a rendering operation. Other users of the device, including the X Server, were blocked in the meantime and had to wait until the lock was released at the end of the current rendering operation, even if there would have been no conflict between the two operations.[12] Another drawback was that memory allocations were not retained after the current DRI process released its lock on the device, so any data uploaded to graphics memory, such as textures, was lost for subsequent operations, with a significant impact on graphics performance.

Nowadays DRI1 is considered completely obsolete and must not be used.

DRI2

Due to the increasing popularity of compositing window managers like Compiz, the Direct Rendering Infrastructure had to be redesigned so that X clients could also support redirection to "offscreen pixmaps" while doing direct rendering. Regular X clients already respected the redirection to a separate pixmap provided by the X Server as a render target (the so-called offscreen pixmap), but DRI clients continued to render directly into the shared back buffer, effectively bypassing the compositing window manager.[11][13] The ultimate solution was to change the way DRI handled the render buffers, which led to a completely different DRI extension with a new set of operations, and also to major changes in the Direct Rendering Manager.[3] The new extension was named "DRI2", although it is not a later version of the original DRI extension but a different extension that is not even compatible with it; in fact, both coexisted within the X Server for a long time.

In DRI2, instead of a single shared (back) buffer, every DRI client gets its own private back buffer,[11][12] along with its associated depth and stencil buffers, in which to render its window content using hardware acceleration. The DRI client then swaps it with a false "front buffer",[12] which is used by the compositing window manager as one of the sources to compose (build) the final screen back buffer to be swapped at the VBLANK interval with the real front buffer.

To handle all these new buffers, the Direct Rendering Manager had to incorporate new functionality, specifically a graphics memory manager. DRI2 was initially developed using the experimental TTM memory manager,[11][13] but it was later rewritten to use GEM after it was chosen as the definitive DRM memory manager.[14] The new DRI2 internal buffer management model also solved two major performance bottlenecks present in the original DRI implementation:

- DRI2 clients no longer lock the entire DRM device while using it for rendering, since now each client gets a separate render buffer independent from the other processes.[12]

- DRI2 clients can allocate their own buffers (with textures, vertex lists, ...) in the video memory and keep them as long as they want, which significantly reduces video memory bandwidth consumption.
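The effect of the second point can be illustrated with a deliberately simplified model. The sketch below is not a real DRM or DRI API: `ToyDevice`, `render_frame` and `uploads_for` are hypothetical names invented for this illustration, and "video memory" is just a dictionary. It only counts how many texture uploads a client performs when allocations are discarded after every operation (DRI1-like) compared with when they are retained (DRI2-like).

```python
class ToyDevice:
    """Toy stand-in for a graphics device; hypothetical, not a real DRM interface."""

    def __init__(self, retain_allocations):
        self.retain_allocations = retain_allocations
        self.video_memory = {}   # (client, texture name) -> data
        self.uploads = 0         # number of uploads performed so far

    def render_frame(self, client, textures):
        # Upload only the textures that are not already resident.
        for name, data in textures.items():
            if (client, name) not in self.video_memory:
                self.video_memory[(client, name)] = data
                self.uploads += 1
        # (Drawing would happen here.)
        if not self.retain_allocations:
            # DRI1-like behaviour: nothing survives the end of the operation.
            self.video_memory.clear()


def uploads_for(frames, retain_allocations):
    """Render `frames` frames with two textures and return the upload count."""
    device = ToyDevice(retain_allocations)
    textures = {"wall": b"wall-pixels", "floor": b"floor-pixels"}
    for _ in range(frames):
        device.render_frame("client_a", textures)
    return device.uploads


if __name__ == "__main__":
    print(uploads_for(100, retain_allocations=False))  # 200
    print(uploads_for(100, retain_allocations=True))   # 2
```

In this model the DRI1-like device re-uploads both textures on every frame, while the DRI2-like device uploads each of them once.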

In DRI2, the allocation of the private offscreen buffers (back buffer, fake front buffer, depth buffer, stencil buffer, ...) for a window is done by the X Server itself.[15][16] DRI clients retrieve those buffers to render into the window by calling operations such as DRI2GetBuffers and DRI2GetBuffersWithFormat, available in the DRI2 extension.[3] Internally, DRI2 uses GEM names (a type of global handle provided by the GEM API that allows two processes accessing a DRM device to refer to the same buffer) to pass "references" to those buffers through the X11 protocol.[16] The X Server is in charge of allocating the render buffers of a window because the GLX extension allows multiple X clients to do OpenGL rendering cooperatively in the same window.[15] This way, the X Server manages the whole lifecycle of the render buffers throughout the rendering process and knows when it can safely recycle or discard them. When a window is resized, the X Server is also responsible for allocating new render buffers matching the new window size and for notifying the DRI client(s) rendering into the window of the change with an InvalidateBuffers event, so that they can retrieve the GEM names of the new buffers.[15]
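The buffer flow described above can be sketched as a small toy model. Everything in it is an assumption made for illustration: `ToyGem`, `ToyServer` and `ToyClient` are hypothetical classes, not the DRI2 wire protocol or the GEM ioctls. The model shows the server allocating the back buffer, the client resolving a GEM name into a buffer, and an InvalidateBuffers-style notification forcing the client to fetch the new name after a resize.

```python
import itertools


class ToyGem:
    """Toy model of device-global GEM names (hypothetical, not the real ioctls)."""

    def __init__(self):
        self._buffers = {}
        self._next_name = itertools.count(1)

    def flink(self, buffer):
        # Publish a buffer under a small, device-global integer name.
        name = next(self._next_name)
        self._buffers[name] = buffer
        return name

    def open(self, name):
        # Any process that knows (or guesses) the integer gets the buffer.
        return self._buffers[name]


class ToyServer:
    """Stands in for the X Server, which owns buffer allocation in DRI2."""

    def __init__(self, gem, size):
        self.gem = gem
        self.listeners = []
        self._allocate(size)

    def _allocate(self, size):
        self.back_name = self.gem.flink({"kind": "back", "size": size})

    def get_buffers(self):
        # Only the GEM name travels over the (simulated) X11 connection.
        return self.back_name

    def resize(self, size):
        self._allocate(size)
        for callback in self.listeners:
            callback()  # stands in for the InvalidateBuffers event


class ToyClient:
    """Stands in for a DRI client that caches its back buffer."""

    def __init__(self, server, gem):
        self.server = server
        self.gem = gem
        self.back = None
        server.listeners.append(self.invalidate)

    def invalidate(self):
        self.back = None

    def back_buffer(self):
        if self.back is None:
            self.back = self.gem.open(self.server.get_buffers())
        return self.back


if __name__ == "__main__":
    gem = ToyGem()
    server = ToyServer(gem, (640, 480))
    client = ToyClient(server, gem)
    print(client.back_buffer()["size"])  # (640, 480)
    server.resize((800, 600))
    print(client.back_buffer()["size"])  # (800, 600)
```

Because the names in this model are plain integers in a shared namespace, an unrelated caller can obtain a buffer with `gem.open(1)`.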

The DRI2 extension provides other core operations for the DRI clients, such as finding out which DRM device and driver they should use (DRI2Connect) or getting authenticated by the X Server in order to be able to use the rendering and buffer facilities of the DRM device (DRI2Authenticate).[3] The presentation of the rendered buffers on the screen is performed using the DRI2CopyRegion and DRI2SwapBuffers requests. DRI2CopyRegion can be used to do a copy between the fake front buffer and the real front buffer, but it does not provide any synchronization with the vertical blanking interval, so it can cause tearing. DRI2SwapBuffers, on the other hand, performs a VBLANK-synchronized swap between the back and front buffers if swapping is supported and both buffers have the same size, or a copy (blit) otherwise.[3][15]

DRI3
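The swap-or-copy rule stated for DRI2SwapBuffers can be written down as a one-line decision. The function below is only a restatement of that rule; `swap_buffers_action` and its parameters are hypothetical names, and real implementations may consider further conditions that are not covered here.

```python
def swap_buffers_action(swap_supported, back_size, front_size):
    """Return "swap" when a buffer swap is possible, otherwise "blit"."""
    if swap_supported and back_size == front_size:
        return "swap"
    return "blit"


if __name__ == "__main__":
    print(swap_buffers_action(True, (800, 600), (800, 600)))   # swap
    print(swap_buffers_action(True, (640, 480), (800, 600)))   # blit
    print(swap_buffers_action(False, (800, 600), (800, 600)))  # blit
```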

Although DRI2 was a significant improvement over the original DRI, the new extension also introduced some new issues.[15][16] In 2013, a third iteration of the Direct Rendering Infrastructure known as DRI3 was developed in order to fix those issues.[17]

The main differences of DRI3 compared to DRI2 are:

- DRI3 clients allocate their render buffers themselves instead of relying on the X Server to do the allocation, which was the method used by DRI2.[15][16]

- DRI3 gets rid of the old, insecure buffer sharing mechanism based on GEM names (global GEM handles) for passing buffer objects between a DRI client and the X Server, in favor of the more secure and versatile mechanism based on PRIME DMA-BUFs, which uses file descriptors instead.[15][16]
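The general idea of sharing an object by passing a file descriptor, rather than a global name, can be demonstrated without any graphics hardware. The sketch below is an analogy, not DRI3 code: an anonymous temporary file stands in for a DMA-BUF, a Unix socket pair stands in for the connection between client and server, and `share_buffer_by_fd` is a hypothetical helper name. It assumes a Unix-like system and Python 3.9 or later, where `socket.send_fds` and `socket.recv_fds` are available.

```python
import os
import socket
import tempfile


def share_buffer_by_fd():
    """Pass an open file descriptor across a Unix socket and read through it."""
    # A connected pair of Unix sockets stands in for the client/server link.
    client_sock, server_sock = socket.socketpair(socket.AF_UNIX, socket.SOCK_STREAM)

    # An anonymous temporary file stands in for the buffer; it has no
    # global name that another process could guess.
    with tempfile.TemporaryFile() as buffer:
        buffer.write(b"pixels")
        buffer.flush()
        # The descriptor itself travels as ancillary data on the socket.
        socket.send_fds(client_sock, [b"buffer"], [buffer.fileno()])

    # The sender has closed its copy; the descriptor in flight keeps the
    # underlying object alive until the receiver is done with it.
    msg, fds, _flags, _addr = socket.recv_fds(server_sock, 1024, 1)
    received = fds[0]
    try:
        os.lseek(received, 0, os.SEEK_SET)
        data = os.read(received, 6)
    finally:
        os.close(received)  # last reference dropped; the kernel can clean up
        client_sock.close()
        server_sock.close()
    return msg, data


if __name__ == "__main__":
    print(share_buffer_by_fd())
```

Only a process that was explicitly handed the descriptor can reach the object, and the object disappears once every descriptor referring to it has been closed.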

Buffer allocation on the client side breaks GLX assumptions in the sense that it is no longer possible for multiple GLX applications to render cooperatively in the same window. On the plus side, the fact that the DRI client is in charge of its own buffers throughout their lifetime brings many advantages. For example, it is easy for the DRI3 client to ensure that the size of the render buffers always matches the current size of the window, and thereby eliminate the artifacts due to the lack of synchronization of buffer sizes between client and server that plagued window resizing in DRI2.[15][16][18] Performance is also better because DRI3 clients save the extra round trip spent waiting for the X Server to send the render buffers.[16] DRI3 clients, and especially compositing window managers, can keep older buffers of previous frames and reuse them as the basis on which to render only the damaged parts of a window, as another performance optimization.[15][16] The DRI3 extension no longer needs to be modified to support particular new buffer formats, since they are now handled directly between the DRI client driver and the DRM kernel driver.[15] The use of file descriptors, on the other hand, allows the kernel to perform a safe cleanup of any unused GEM buffer object, that is, one with no reference to it.[15][16]

Technically, DRI3 consists of two different extensions, the "DRI3" extension and the "Present" extension.[17][19] The main purpose of the DRI3 extension is to implement the mechanism to share direct rendered buffers between DRI clients and the X Server.[18][19][20] DRI clients allocate and use GEM buffer objects as rendering targets, while the X Server represents these render buffers using a type of X11 object called a "pixmap". DRI3 provides two operations, DRI3PixmapFromBuffer and DRI3BufferFromPixmap, one to create a pixmap (in "X Server space") from a GEM buffer object (in "DRI client space"), and the other to do the reverse and get a GEM buffer object from an X pixmap.[18][19][20] In these DRI3 operations, GEM buffer objects are passed as DMA-BUF file descriptors instead of GEM names. DRI3 also provides a way to share synchronization objects between the DRI client and the X Server, allowing both of them serialized access to the shared buffer.[19] Unlike in DRI2, the initial DRI3Open operation (the first one every DRI client must request, in order to know which DRM device to use) returns an already open file descriptor to the device node instead of the device node filename, with any required authentication procedure already performed in advance by the X Server.[18][19]

DRI3 provides no mechanism to show the rendered buffers on the screen, but relies on another extension, the Present extension, to do so.[20] Present is so named because its main task is to "present" buffers on the screen, meaning that it handles the update of the framebuffer using the contents of the rendered buffers delivered by client applications.[19] Screen updates have to be done at the proper time, normally during the VBLANK interval in order to avoid display artifacts such as tearing. Present also handles the synchronization of screen updates to the VBLANK interval.[21] It also keeps the X client informed about the instant each buffer is really shown on the screen using events, so the client can synchronize its rendering process with the current screen refresh rate.

Present accepts any X pixmap as the source for a screen update.[21] Since pixmaps are standard X objects, Present can be used not only by DRI3 clients performing direct rendering, but also by any X client rendering on a pixmap by any means.[18] For example, most existing non-GL based GTK+ and Qt applications used to do double buffered pixmap rendering using XRender. The Present extension can also be used by these applications to achieve efficient and non-tearing screen updates. This is the reason why Present was developed as a separate standalone extension instead of being part of DRI3.[18]

Apart from allowing non-GL X clients to synchronize with VBLANK, Present brings other advantages. DRI3 graphics performance is better because Present is more efficient than DRI2 in swapping buffers.[19] A number of OpenGL extensions that weren't available with DRI2 are now supported based on new features provided by Present.[19]

Present provides two main operations to X clients: update a region of a window using part of or all the contents of a pixmap (PresentPixmap) and set the type of presentation events related to a certain window that the client wants to be notified about (PresentSelectInput).[19][21] There are three presentation events about which a window can notify an X client: when an ongoing presentation operation —normally from a call to PresentPixmap— has been completed (PresentCompleteNotify), when a pixmap used by a PresentPixmap operation is ready to be reused (PresentIdleNotify) and when the window configuration —mostly window size— changes (PresentConfigureNotify).[19][21] Whether a PresentPixmap operation performs a direct copy (blit) onto the front buffer or a swap of the entire back buffer with the front buffer is an internal detail of the Present extension implementation, instead of an explicit choice of the X client as it was in DRI2.
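The relationship between PresentPixmap and the completion and idle events can be pictured with a toy event model. This is an illustrative sketch only, not the Present wire protocol: `ToyPresent` and its methods are hypothetical, a presentation is assumed to complete at the next simulated VBLANK, and a pixmap is assumed to become idle as soon as another one replaces it on screen.

```python
from collections import deque


class ToyPresent:
    """Toy model of the Present event flow (hypothetical, heavily simplified)."""

    def __init__(self):
        self.pending = deque()  # pixmaps queued by present_pixmap
        self.on_screen = None   # pixmap currently shown
        self.selected = set()   # event types the client asked for
        self.events = []        # events delivered to the client

    def select_input(self, *event_names):
        # Stands in for PresentSelectInput.
        self.selected = set(event_names)

    def present_pixmap(self, pixmap):
        # Stands in for PresentPixmap: queue the pixmap for the next VBLANK.
        self.pending.append(pixmap)

    def _notify(self, name, pixmap):
        if name in self.selected:
            self.events.append((name, pixmap))

    def vblank(self):
        # At each simulated VBLANK, show the oldest queued pixmap, if any.
        if not self.pending:
            return
        previous = self.on_screen
        self.on_screen = self.pending.popleft()
        self._notify("PresentCompleteNotify", self.on_screen)
        if previous is not None:
            self._notify("PresentIdleNotify", previous)


if __name__ == "__main__":
    present = ToyPresent()
    present.select_input("PresentCompleteNotify", "PresentIdleNotify")
    present.present_pixmap("A")
    present.vblank()
    present.present_pixmap("B")
    present.vblank()
    for event in present.events:
        print(event)
```

In this model a client that alternates between two pixmaps would wait for the idle event of one pixmap before rendering into it again.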

Adoption


Several open source DRI drivers have been written, including ones for ATI Mach64, ATI Rage128, ATI Radeon, 3dfx Voodoo3 through Voodoo5, Matrox G200 through G400, SiS 300-series, Intel i810 through i965, S3 Savage, VIA UniChrome graphics chipsets, and nouveau for Nvidia. Some graphics vendors have written closed-source DRI drivers, including ATI and PowerVR Kyro.

The various versions of DRI have been implemented by various operating systems, amongst others by the Linux kernel, FreeBSD, NetBSD, OpenBSD, and OpenSolaris.

History

The project was started by Jens Owen and Kevin E. Martin from Precision Insight (funded by Silicon Graphics and Red Hat).[1][22] It was first made widely available as part of XFree86 4.0[1][23] and is now part of the X.Org Server. It is currently maintained by the free software community.

Work on DRI2 started at the 2007 X Developers' Summit from a proposal by Kristian Høgsberg.[24][25] Høgsberg himself wrote the new DRI2 extension and the modifications to Mesa and GLX.[26] In March 2008 DRI2 was mostly done,[27][28][29] but it could not make it into X.Org Server version 1.5[14] and had to wait until version 1.6, released in February 2009.[30] The DRI2 extension was officially included in the X11R7.5 release of October 2009.[31] The first public version of the DRI2 protocol (2.0) was announced in April 2009.[32] Since then there have been several revisions, the most recent being version 2.8 from July 2012.[4]

Due to several limitations of DRI2, a new extension called DRI-Next was proposed by Keith Packard and Emma Anholt at the X.Org Developer's Conference 2012.[15] The extension was proposed again as DRI3000 at Linux.conf.au 2013.[16][17] DRI3 and Present extensions were developed during 2013 and merged into the X.Org Server 1.15 release from December 2013.[33][34] The first and only version of the DRI3 protocol (1.0) was released in November 2013.[5]

- 2D drivers inside of the X server

- Early DRI: Mode setting is still being performed by the X display server, which forces it to be run as root

- Finally all access goes through the Direct Rendering Manager

- In Linux kernel 3.12 render nodes were introduced; DRM and the KMS driver were split. Wayland implements direct rendering over EGL


References

1. Owen, Jens. "The DRI project history". DRI project wiki. Retrieved 16 April 2016.

2. Mesa DRI License / Copyright Information - The Mesa 3D Graphics Library

3. Høgsberg, Kristian (4 September 2008). "The DRI2 Extension - Version 2.0". X.Org. Retrieved 29 May 2016.

4. Airlie, Dave (11 July 2012). "[ANNOUNCE] dri2proto 2.8". xorg-announce (Mailing list).

5. Packard, Keith (1 November 2013). "[ANNOUNCE] dri3proto 1.0". xorg-announce (Mailing list).

6. "Mesa 3D and Direct Rendering Infrastructure wiki". Retrieved 15 July 2014.

7. "DRI for Framebuffer Consoles". Retrieved January 4, 2019.

8. Martin, Kevin E.; Faith, Rickard E.; Owen, Jens; Akin, Allen (11 May 1999). "Direct Rendering Infrastructure, Low-Level Design Document". Retrieved 18 May 2016.

9. Owen, Jens; Martin, Kevin (11 May 1999). "DRI Extension for supporting Direct Rendering - Protocol Specification". Retrieved 18 May 2016.

10. Faith, Rickard E. (11 May 1999). "The Direct Rendering Manager: Kernel Support for the Direct Rendering Infrastructure". Retrieved 18 May 2016.

11. Packard, Keith (21 July 2008). "X output status july 2008". Retrieved 26 May 2016.

12. Packard, Keith (24 April 2009). "Sharpening the Intel Driver Focus". Retrieved 26 May 2016.

13. Høgsberg, Kristian (8 August 2007). "Redirected direct rendering". Retrieved 25 May 2016.

14. Høgsberg, Kristian (4 August 2008). "Backing out DRI2 from server 1.5". xorg (Mailing list).

15. Packard, Keith (28 September 2012). "Thoughts about DRI.Next". Retrieved 26 May 2016.

16. Willis, Nathan (11 February 2013). "LCA: The X-men speak". LWN.net. Retrieved 26 May 2016.

17. Packard, Keith (19 February 2013). "DRI3000 — Even Better Direct Rendering". Retrieved 26 May 2016.

18. Packard, Keith (4 June 2013). "Completing the DRI3 Extension". Retrieved 31 May 2016.

19. Edge, Jake (9 October 2013). "DRI3 and Present". LWN.net. Retrieved 26 May 2016.

20. Packard, Keith (4 June 2013). "The DRI3 Extension - Version 1.0". Retrieved 30 May 2016.

21. Packard, Keith (6 June 2013). "The Present Extension - Version 1.0". Retrieved 1 June 2016.

22. Owen, Jens; Martin, Kevin E. (15 September 1998). "A Multipipe Direct Rendering Architecture for 3D". Retrieved 16 April 2016.

23. "Release Notes for XFree86 4.0". XFree86 Project. 7 March 2000. Retrieved 16 April 2016.

24. "X Developers' Summit 2007 - Notes". X.Org. Retrieved 7 March 2016.

25. Høgsberg, Kristian (3 October 2007). "DRI2 Design Wiki Page". xorg (Mailing list).

26. Høgsberg, Kristian (4 February 2008). "Plans for merging DRI2 work". xorg (Mailing list).

27. Høgsberg, Kristian (15 February 2008). "DRI2 committed". xorg (Mailing list).

28. Høgsberg, Kristian (31 March 2008). "DRI2 direct rendering". xorg (Mailing list).

29. Høgsberg, Kristian (31 March 2008). "DRI2 Direct Rendering". Retrieved 20 April 2016.

30. "Server 1.6 branch". X.org. Retrieved 7 February 2015.

31. "Release Notes for X11R7.5". X.Org. Retrieved 20 April 2016.

32. Høgsberg, Kristian (20 April 2009). "[ANNOUNCE] dri2proto 2.0". xorg-announce (Mailing list).

33. Packard, Keith (November 2013). "[ANNOUNCE] xorg-server 1.14.99.901". X.org. Retrieved 9 February 2015.

34. Larabel, Michael. "X.Org Server 1.15 Release Has Several New Features". Phoronix. Retrieved 9 February 2015.

External links

- Direct Rendering Infrastructure project home page

- Current specification documents (always updated to the most recent version):

  - The DRI2 Extension (Kristian Høgsberg, 2008)

  - The DRI3 Extension (Keith Packard, 2013)

  - The Present Extension (Keith Packard, 2013)