Discrete wavelet transform

In numerical analysis and functional analysis, a discrete wavelet transform (DWT) is any wavelet transform for which the wavelets are discretely sampled. As with other wavelet transforms, a key advantage it has over Fourier transforms is temporal resolution: it captures both frequency and location information (location in time).

Examples

Haar wavelets

The first DWT was invented by Hungarian mathematician Alfréd Haar. For an input represented by a list of $2^n$ numbers, the Haar wavelet transform may be considered to pair up input values, storing the difference and passing the sum. This process is repeated recursively, pairing up the sums to provide the next scale, which leads to $2^n - 1$ differences and a final sum.
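As a small worked illustration (the input values are arbitrary), the unnormalized pair-sum/pair-difference scheme applied to the four numbers (4, 2, 5, 5) produces three differences and one final sum, listed here from coarse to fine:

$$
\begin{aligned}
(4,\,2,\,5,\,5) &\;\longrightarrow\; \text{sums } (6,\,10),\quad \text{differences } (2,\,0)\\
(6,\,10) &\;\longrightarrow\; \text{sum } 16,\quad \text{difference } -4\\
\text{result} &= (16,\,-4,\,2,\,0)
\end{aligned}
$$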

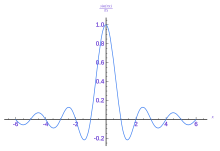

Daubechies wavelets

The most commonly used set of discrete wavelet transforms was formulated by the Belgian mathematician Ingrid Daubechies in 1988. This formulation is based on the use of recurrence relations to generate progressively finer discrete samplings of an implicit mother wavelet function; each resolution is twice that of the previous scale. In her seminal paper, Daubechies derives a family of wavelets, the first of which is the Haar wavelet. Interest in this field has exploded since then, and many variations of Daubechies' original wavelets were developed.[1][2][3]
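To make the family slightly more concrete, here is a hedged sketch of its second member, the four-tap Daubechies filter (commonly labelled D4 or db2). The closed-form coefficients are the standard ones; the class name and helper methods are illustrative, and the high-pass sign convention $h[n] = (-1)^n g[L-1-n]$ is one of several in use. The helpers check the properties that make it an orthogonal wavelet filter: unit energy, orthogonality to its own even shifts, and two vanishing moments of the high-pass filter (the Haar filter has only one).

```java
/** Four-tap Daubechies filters (D4 / db2) with a few property checks; names are illustrative. */
final class Daubechies4 {
    private Daubechies4() {}

    /** Low-pass (scaling) filter g[0..3] in closed form. */
    static double[] lowPass() {
        double r3 = Math.sqrt(3.0);
        double norm = 4.0 * Math.sqrt(2.0);
        return new double[] {(1 + r3) / norm, (3 + r3) / norm, (3 - r3) / norm, (1 - r3) / norm};
    }

    /** High-pass filter via the alternating flip h[n] = (-1)^n g[L-1-n] (one sign convention of several). */
    static double[] highPass(double[] g) {
        int len = g.length;
        double[] h = new double[len];
        for (int n = 0; n < len; n++) {
            h[n] = (n % 2 == 0 ? 1.0 : -1.0) * g[len - 1 - n];
        }
        return h;
    }

    /** Discrete moment sum_n n^p f[n]; p = 0 gives the plain sum of the taps. */
    static double moment(double[] f, int p) {
        double m = 0.0;
        for (int n = 0; n < f.length; n++) {
            m += Math.pow(n, p) * f[n];
        }
        return m;
    }

    /** Inner product of f with a copy of itself shifted by 2k samples. */
    static double evenShiftProduct(double[] f, int k) {
        double s = 0.0;
        for (int n = 0; n + 2 * k < f.length; n++) {
            s += f[n] * f[n + 2 * k];
        }
        return s;
    }
}
```

Up to rounding, `moment(g, 0)` is √2, `evenShiftProduct(g, 0)` is 1 and `evenShiftProduct(g, 1)` is 0, while `moment(h, 0)` and `moment(h, 1)` both vanish.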

The dual-tree complex wavelet transform (DCWT)

The dual-tree complex wavelet transform (ℂWT) is a relatively recent enhancement to the discrete wavelet transform (DWT), with important additional properties: it is nearly shift-invariant and directionally selective in two and higher dimensions. It achieves this with a redundancy factor of only $2^d$ for $d$-dimensional signals, substantially lower than that of the undecimated DWT. The multidimensional (M-D) dual-tree ℂWT is nonseparable but is based on a computationally efficient, separable filter bank (FB).[4]

Others

Other forms of discrete wavelet transform include the Le Gall–Tabatabai (LGT) 5/3 wavelet developed by Didier Le Gall and Ali J. Tabatabai in 1988 (used in JPEG 2000 and JPEG XS),[5][6][7] the Binomial QMF developed by Ali Naci Akansu in 1990,[8] the set partitioning in hierarchical trees (SPIHT) algorithm developed by Amir Said with William A. Pearlman in 1996,[9] the non- or undecimated wavelet transform (where downsampling is omitted), and the Newland transform (where an orthonormal basis of wavelets is formed from appropriately constructed top-hat filters in frequency space). Wavelet packet transforms are also related to the discrete wavelet transform. The complex wavelet transform is another form.

Properties

The Haar DWT illustrates the desirable properties of wavelets in general. First, it can be performed in $O(n)$ operations; second, it captures not only a notion of the frequency content of the input, by examining it at different scales, but also its temporal content, i.e. the times at which these frequencies occur. Combined, these two properties make the fast wavelet transform (FWT) an alternative to the conventional fast Fourier transform (FFT).

Time issues

Due to the rate-change operators in the filter bank, the discrete WT is not time-invariant; it is in fact very sensitive to the alignment of the signal in time. To address this time-variance, Mallat and Zhong proposed a new algorithm for the wavelet representation of a signal that is invariant to time shifts.[10] In this algorithm, called TI-DWT, only the scale parameter is sampled along the dyadic sequence $2^j$ ($j \in \mathbb{Z}$), and the wavelet transform is calculated for each point in time.[11][12]
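The following Java sketch illustrates the idea of computing the transform at every point in time. It is an undecimated (stationary) Haar transform with circular boundary handling, chosen here for simplicity; it is not Mallat and Zhong's algorithm, which uses different wavelets, and the class and method names are illustrative. Because no samples are discarded, shifting the input by any number of samples simply shifts every level of coefficients by the same amount, whereas the ordinary decimated level-1 coefficients change in a way that is not a shift.

```java
/** Undecimated ("stationary") Haar sketch with circular extension; not Mallat and Zhong's exact method. */
final class UndecimatedHaar {
    private UndecimatedHaar() {}

    /** Level-j Haar detail (j >= 1) evaluated at every time index t, with no downsampling. */
    static double[] detail(double[] x, int j) {
        int n = x.length;
        int half = 1 << (j - 1);                 // each lobe of the level-j wavelet spans 2^(j-1) samples
        double norm = Math.sqrt(2.0 * half);     // 1/sqrt(2^j) normalization
        double[] d = new double[n];
        for (int t = 0; t < n; t++) {
            double left = 0.0;
            double right = 0.0;
            for (int m = 0; m < half; m++) {
                left += x[Math.floorMod(t + m, n)];
                right += x[Math.floorMod(t + half + m, n)];
            }
            d[t] = (left - right) / norm;
        }
        return d;
    }

    /** Ordinary (decimated) level-1 Haar detail: one coefficient per pair (x[2k], x[2k+1]). */
    static double[] decimatedDetail(double[] x) {
        double[] d = new double[x.length / 2];
        for (int k = 0; k < d.length; k++) {
            d[k] = (x[2 * k] - x[2 * k + 1]) / Math.sqrt(2.0);
        }
        return d;
    }

    /** Circular shift: result[t] = x[t - s]. */
    static double[] shift(double[] x, int s) {
        double[] y = new double[x.length];
        for (int t = 0; t < x.length; t++) {
            y[t] = x[Math.floorMod(t - s, x.length)];
        }
        return y;
    }
}
```

For the impulse (0, 1, 0, 0, 0, 0, 0, 0), `decimatedDetail` gives −1/√2 at index 0; after a one-sample circular shift, the only nonzero coefficient becomes +1/√2 at index 1, which is not a shifted copy of the original. The price of the undecimated version is redundancy: each level stores as many coefficients as there are samples.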

Applications

The discrete wavelet transform has a wide range of applications in science, engineering, mathematics and computer science. Most notably, it is used for signal coding, to represent a discrete signal in a more redundant form, often as a preconditioning for data compression. Practical applications can also be found in signal processing of accelerations for gait analysis,[13][14] image processing,[15][16] digital communications and many other fields.[17][18][19]

It has been shown that the discrete wavelet transform (discrete in scale and shift, and continuous in time) can be successfully implemented as an analog filter bank in biomedical signal processing, for the design of low-power pacemakers, and in ultra-wideband (UWB) wireless communications.[20]

Example in image processing

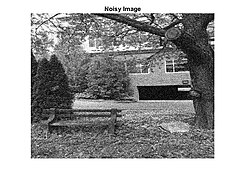

Wavelets are often used to denoise two-dimensional signals, such as images. The following example provides three steps to remove unwanted white Gaussian noise from the noisy image shown. MATLAB was used to import and filter the image.

The first step is to choose a wavelet type and a level N of decomposition. In this case, biorthogonal 3.5 wavelets were chosen with a level N of 10. Biorthogonal wavelets are commonly used in image processing to detect and filter white Gaussian noise,[21] due to their high contrast of neighboring pixel intensity values. Using these wavelets, a wavelet transform is performed on the two-dimensional image.

Following the decomposition of the image file, the next step is to determine threshold values for each level from 1 to N. The Birgé–Massart strategy[22] is a fairly common method for selecting these thresholds. Using this process, individual thresholds are made for N = 10 levels. Applying these thresholds constitutes most of the actual filtering of the signal.

The final step is to reconstruct the image from the modified levels. This is accomplished using an inverse wavelet transform. The resulting image, with white Gaussian noise removed, is shown below the original image. When filtering any form of data, it is important to quantify the signal-to-noise ratio (SNR) of the result.[citation needed] In this case, the SNR of the noisy image in comparison to the original was 30.4958%, and the SNR of the denoised image is 32.5525%. The resulting improvement of the wavelet filtering is an SNR gain of 2.0567%.[23]

It is important to note that choosing other wavelets, levels, and thresholding strategies can result in different types of filtering. In this example, white Gaussian noise was chosen to be removed; with different thresholding, it could just as easily have been amplified.
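The three steps can be sketched in one dimension as follows. This is a simplified stand-in, not the procedure used for the image above: it uses orthonormal Haar wavelets instead of biorthogonal 3.5 wavelets, and a single hypothetical `threshold` parameter applied to every detail coefficient (hard thresholding) instead of per-level Birgé–Massart thresholds. The signal length is assumed to be a power of two, and the class name is illustrative.

```java
/** 1-D Haar denoising sketch: forward transform, hard threshold, inverse transform. */
final class HaarDenoise {
    private HaarDenoise() {}

    private static final double INV_SQRT2 = 1.0 / Math.sqrt(2.0);

    /** Step 1: multilevel orthonormal Haar analysis; layout [approximation, coarsest details, ..., finest details]. */
    static double[] forward(double[] x) {
        if (x.length == 0 || Integer.bitCount(x.length) != 1) {
            throw new IllegalArgumentException("length must be a power of two");
        }
        double[] c = x.clone();
        double[] tmp = new double[c.length];
        for (int len = c.length; len >= 2; len /= 2) {
            int half = len / 2;
            for (int i = 0; i < half; i++) {
                tmp[i] = (c[2 * i] + c[2 * i + 1]) * INV_SQRT2;        // approximation
                tmp[half + i] = (c[2 * i] - c[2 * i + 1]) * INV_SQRT2; // detail
            }
            System.arraycopy(tmp, 0, c, 0, len);
        }
        return c;
    }

    /** Step 3: inverse of forward(), rebuilding from the coarsest level upward. */
    static double[] inverse(double[] c) {
        double[] x = c.clone();
        double[] tmp = new double[x.length];
        for (int len = 2; len <= x.length; len *= 2) {
            int half = len / 2;
            for (int i = 0; i < half; i++) {
                tmp[2 * i] = (x[i] + x[half + i]) * INV_SQRT2;
                tmp[2 * i + 1] = (x[i] - x[half + i]) * INV_SQRT2;
            }
            System.arraycopy(tmp, 0, x, 0, len);
        }
        return x;
    }

    /** Step 2 (simplified): zero every detail coefficient whose magnitude is below the threshold. */
    static double[] denoise(double[] noisy, double threshold) {
        double[] c = forward(noisy);
        for (int i = 1; i < c.length; i++) {      // index 0 is the overall approximation; keep it
            if (Math.abs(c[i]) < threshold) {
                c[i] = 0.0;
            }
        }
        return inverse(c);
    }
}
```

In a call such as `denoise(new double[] {4.1, 3.9, 4.1, 3.9, 8.1, 7.9, 8.1, 7.9}, 0.5)`, the alternating ±0.1 perturbation lands entirely in the finest detail coefficients (each about 0.14), which fall below the threshold, so the reconstruction is the step signal (4, 4, 4, 4, 8, 8, 8, 8) up to rounding. Real noise spreads over all levels, which is one motivation for level-dependent thresholds such as the Birgé–Massart strategy.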

To illustrate the differences and similarities between the discrete wavelet transform and the discrete Fourier transform, consider the DWT and DFT of the following sequence: (1,0,0,0), a unit impulse.

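The DFT has orthogonal basis (DFT matrix):

$$
\begin{bmatrix}
1 & 1 & 1 & 1\\
1 & -i & -1 & i\\
1 & -1 & 1 & -1\\
1 & i & -1 & -i
\end{bmatrix}
$$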

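while the DWT with Haar wavelets for length 4 data has orthogonal basis in the rows of:

$$
\begin{bmatrix}
1 & 1 & 1 & 1\\
1 & 1 & -1 & -1\\
1 & -1 & 0 & 0\\
0 & 0 & 1 & -1
\end{bmatrix}
$$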

(To simplify notation, whole numbers are used, so the bases are orthogonal but not orthonormal.)

Preliminary observations include:

- Sinusoidal waves differ only in their frequency. The first does not complete any cycles, the second completes one full cycle, the third completes two cycles, and the fourth completes three cycles (which is equivalent to completing one cycle in the opposite direction). Differences in phase can be represented by multiplying a given basis vector by a complex constant.

- Wavelets, by contrast, have both frequency and location. As before, the first completes zero cycles, and the second completes one cycle. However, the third and fourth both have the same frequency, twice that of the second. Rather than differing in frequency, they differ in location: the third is nonzero over the first two elements, and the fourth is nonzero over the second two elements.

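The DWT demonstrates the localization: the (1,1,1,1) term gives the average signal value, the (1,1,−1,−1) term places the signal in the left side of the domain, and the (1,−1,0,0) term places it at the left side of the left side:

$$(1,0,0,0) = \tfrac{1}{4}(1,1,1,1) + \tfrac{1}{4}(1,1,-1,-1) + \tfrac{1}{2}(1,-1,0,0)$$

Truncating at any stage yields a downsampled version of the signal:

$$
\begin{aligned}
&\left(\tfrac14,\tfrac14,\tfrac14,\tfrac14\right) && \text{(1 term)}\\
&\left(\tfrac12,\tfrac12,0,0\right) && \text{(2 terms)}\\
&(1,0,0,0) && \text{(3 terms)}
\end{aligned}
$$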

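The DFT, by contrast, expresses the sequence by the interference of waves of various frequencies, so truncating the series yields a low-pass filtered version of the series:

$$(1,0,0,0) = \tfrac14(1,1,1,1) + \tfrac14(1,i,-1,-i) + \tfrac14(1,-1,1,-1) + \tfrac14(1,-i,-1,i)$$

$$
\begin{aligned}
&\left(\tfrac14,\tfrac14,\tfrac14,\tfrac14\right) && \text{(1 term)}\\
&\left(\tfrac34,\tfrac14,-\tfrac14,\tfrac14\right) = \tfrac14(1,1,1,1) + \tfrac12(1,0,-1,0) && \text{(2 terms)}\\
&(1,0,0,0) && \text{(all terms)}
\end{aligned}
$$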

Notably, the middle (2-term) approximation differs. From the frequency domain perspective, this is a better approximation, but from the time domain perspective it has drawbacks: it exhibits undershoot (one of the values is negative, though the original series is non-negative everywhere) and ringing (the right side is non-zero, unlike in the wavelet transform). On the other hand, the Fourier approximation correctly shows a peak, and all points are within $1/4$ of their correct value, though all points have error. The wavelet approximation, by contrast, places a peak on the left half, but has no peak at the first point, and while it is exactly correct for half the values (reflecting location), it has an error of $1/2$ for the other values.

This illustrates the kinds of trade-offs between these transforms, and how in some respects the DWT provides preferable behavior, particularly for the modeling of transients.
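The impulse comparison can be reproduced numerically. The sketch below (class and method names are illustrative) projects (1,0,0,0) onto the first few rows of the unnormalized Haar basis and, separately, keeps only the DFT terms whose frequency $\min(k, N-k)$ does not exceed a cut-off, returning the real part of the inverse DFT. A cut-off of 1 keeps the constant term together with the conjugate pair $k = 1, 3$, i.e. one real cosine, which is the 2-term Fourier approximation discussed above.

```java
/** Numerical check of the Haar vs. DFT truncations of a length-4 impulse; names are illustrative. */
final class ImpulseComparison {
    private ImpulseComparison() {}

    // Rows of the unnormalized Haar basis for length 4 (orthogonal, not orthonormal).
    static final double[][] HAAR = {
        {1, 1, 1, 1}, {1, 1, -1, -1}, {1, -1, 0, 0}, {0, 0, 1, -1}
    };

    /** Keeps the first `terms` Haar rows r: sum of (<x, r> / <r, r>) * r. */
    static double[] haarPartial(double[] x, int terms) {
        double[] out = new double[x.length];
        for (int r = 0; r < terms; r++) {
            double num = 0.0;
            double den = 0.0;
            for (int n = 0; n < x.length; n++) {
                num += x[n] * HAAR[r][n];
                den += HAAR[r][n] * HAAR[r][n];
            }
            for (int n = 0; n < x.length; n++) {
                out[n] += num / den * HAAR[r][n];
            }
        }
        return out;
    }

    /** Inverse DFT restricted to frequencies k with min(k, N - k) <= maxFreq; returns the real part. */
    static double[] dftLowPass(double[] x, int maxFreq) {
        int len = x.length;
        double[] out = new double[len];
        for (int k = 0; k < len; k++) {
            if (Math.min(k, len - k) > maxFreq) {
                continue;
            }
            // Forward coefficient X[k] = sum_n x[n] e^{-2 pi i k n / N}.
            double re = 0.0;
            double im = 0.0;
            for (int n = 0; n < len; n++) {
                double a = -2.0 * Math.PI * k * n / len;
                re += x[n] * Math.cos(a);
                im += x[n] * Math.sin(a);
            }
            // Add the real part of (1/N) X[k] e^{+2 pi i k n / N}.
            for (int n = 0; n < len; n++) {
                double b = 2.0 * Math.PI * k * n / len;
                out[n] += (re * Math.cos(b) - im * Math.sin(b)) / len;
            }
        }
        return out;
    }
}
```

Keeping 1, 2 and 3 Haar rows gives (¼, ¼, ¼, ¼), (½, ½, 0, 0) and (1, 0, 0, 0); cut-offs 0, 1 and 2 give (¼, ¼, ¼, ¼), (¾, ¼, −¼, ¼) and (1, 0, 0, 0), up to floating-point rounding.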

Definition

One level of the transform

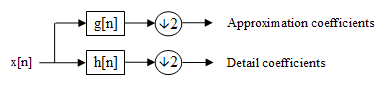

The DWT of a signal $x$ is calculated by passing it through a series of filters. First the samples are passed through a low-pass filter with impulse response $g$, resulting in a convolution of the two:

$$y[n] = (x * g)[n] = \sum_{k=-\infty}^{\infty} x[k]\, g[n-k]$$

The signal is also decomposed simultaneously using a high-pass filter $h$. The outputs give the detail coefficients (from the high-pass filter) and approximation coefficients (from the low-pass filter). The two filters are related to each other and are known as a quadrature mirror filter.

However, since half the frequencies of the signal have now been removed, half the samples can be discarded according to Nyquist's rule. The filter outputs are therefore subsampled by 2:

$$y_{\mathrm{low}}[n] = \sum_{k=-\infty}^{\infty} x[k]\, g[2n-k]$$

$$y_{\mathrm{high}}[n] = \sum_{k=-\infty}^{\infty} x[k]\, h[2n-k]$$

The subsampled low-pass output $y_{\mathrm{low}}$ is then further processed by passing it again through a new low-pass filter $g$ and a high-pass filter $h$ with half the cut-off frequency of the previous one.

This decomposition has halved the time resolution since only half of each filter output characterises the signal. However, each output has half the frequency band of the input, so the frequency resolution has been doubled.

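With the subsampling operator

$$(y \downarrow k)[n] = y[kn]$$

the above summation can be written more concisely:

$$y_{\mathrm{low}} = (x * g) \downarrow 2$$

$$y_{\mathrm{high}} = (x * h) \downarrow 2$$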

However, computing a complete convolution $x * g$ with subsequent downsampling would waste computation time, because every second output sample is computed only to be discarded.
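A direct way to avoid the wasted work is to evaluate the downsampled sum $y[n] = \sum_k x[k]\,f[2n-k]$ only at the retained indices. The sketch below does this for an arbitrary finite filter $f$, assuming periodic (circular) extension of a finite, even-length signal; the boundary handling and names are assumptions, and practical codecs often use symmetric extension instead. With the Haar filters $g = [\tfrac{\sqrt2}{2}, \tfrac{\sqrt2}{2}]$ and $h = [-\tfrac{\sqrt2}{2}, \tfrac{\sqrt2}{2}]$, this indexing pairs $x[2n-1]$ with $x[2n]$, one sample earlier than the pairing used by the Java routine later in the article.

```java
/** Filtering and downsampling by 2 in a single pass; names and boundary handling are illustrative. */
final class AnalysisStep {
    private AnalysisStep() {}

    /**
     * Computes y[n] = sum_m f[m] * x[(2n - m) mod N] for n = 0 .. N/2 - 1, i.e. the
     * convolution x * f evaluated only at even indices, with periodic extension of x.
     */
    static double[] filterDown(double[] x, double[] f) {
        int len = x.length;
        if (len % 2 != 0) {
            throw new IllegalArgumentException("signal length must be even");
        }
        double[] y = new double[len / 2];
        for (int n = 0; n < y.length; n++) {
            double acc = 0.0;
            for (int m = 0; m < f.length; m++) {
                acc += f[m] * x[Math.floorMod(2 * n - m, len)];
            }
            y[n] = acc;
        }
        return y;
    }
}
```

For x = (1, 2, 3, 4), the Haar low-pass output is (5s, 5s) and the high-pass output is (3s, −s) with s = √2/2; the squared outputs sum to 30, the same energy as the input, as expected for an orthonormal filter pair under periodic extension.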

The lifting scheme is an optimization in which these two computations are interleaved.
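For the Haar wavelet, one common lifting factorization splits the signal into even and odd samples, predicts each odd sample from its even neighbour, and then updates the even sample so that it carries the pair average. The sketch below is an integer-to-integer variant with floor rounding; class and method names are illustrative. Each output is computed from the two samples it needs, so no filter output is produced and then thrown away, and the inverse runs the steps backwards with opposite signs, giving exact reconstruction.

```java
/** One level of integer Haar lifting (predict, then update); names are illustrative. */
final class HaarLifting {
    private HaarLifting() {}

    /** Returns {s, d}: s[i] = floor((x[2i] + x[2i+1]) / 2) and d[i] = x[2i+1] - x[2i]. */
    static int[][] forward(int[] x) {
        if (x.length % 2 != 0) {
            throw new IllegalArgumentException("length must be even");
        }
        int half = x.length / 2;
        int[] s = new int[half];
        int[] d = new int[half];
        for (int i = 0; i < half; i++) {
            int even = x[2 * i];
            int odd = x[2 * i + 1];
            d[i] = odd - even;            // predict: odd sample from its even neighbour
            s[i] = even + (d[i] >> 1);    // update: >> rounds toward negative infinity
        }
        return new int[][] {s, d};
    }

    /** Undoes the lifting steps in reverse order with the signs flipped. */
    static int[] inverse(int[] s, int[] d) {
        int[] x = new int[2 * s.length];
        for (int i = 0; i < s.length; i++) {
            int even = s[i] - (d[i] >> 1);   // undo update
            x[2 * i] = even;
            x[2 * i + 1] = d[i] + even;      // undo predict
        }
        return x;
    }
}
```

For example, `forward(new int[] {5, 2, 7, 7})` returns s = (3, 7) and d = (−3, 0); the first average is ⌊7/2⌋ = 3 because Java's `>>` on the negative difference −3 yields −2.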

Cascading and filter banks

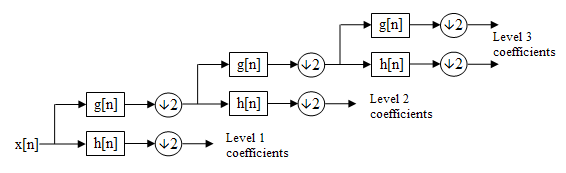

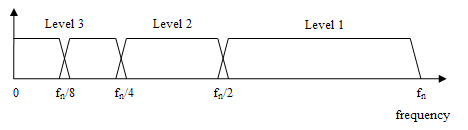

This decomposition is repeated to further increase the frequency resolution: the approximation coefficients are decomposed with high- and low-pass filters and then downsampled. This is represented as a binary tree with nodes representing a sub-space with a different time-frequency localisation. The tree is known as a filter bank.

At each level in the above diagram the signal is decomposed into low and high frequencies. Due to the decomposition process, the input signal length must be a multiple of $2^n$, where $n$ is the number of levels.

For example, a signal with 32 samples, frequency range 0 to $f_n$ and 3 levels of decomposition produces 4 output scales:

| Level | Frequencies | Samples |
|---|---|---|
| 3 | 0 to $f_n/8$ | 4 |
| 3 | $f_n/8$ to $f_n/4$ | 4 |
| 2 | $f_n/4$ to $f_n/2$ | 8 |
| 1 | $f_n/2$ to $f_n$ | 16 |
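A minimal sketch of the cascade, assuming orthonormal Haar filters and a hypothetical `decompose` method: only the approximation (low-pass) branch is split again at each level, and the bands are returned coarsest first, in the same order as the rows of the table above. For 32 samples and 3 levels it returns bands of 4, 4, 8 and 16 coefficients, and it rejects inputs whose length is not a multiple of $2^{\text{levels}}$.

```java
import java.util.ArrayList;
import java.util.List;

/** Multilevel Haar filter-bank cascade; names are illustrative. */
final class HaarCascade {
    private HaarCascade() {}

    private static final double INV_SQRT2 = 1.0 / Math.sqrt(2.0);

    /** Returns {a_J, d_J, d_(J-1), ..., d_1}; x.length must be a multiple of 2^levels. */
    static List<double[]> decompose(double[] x, int levels) {
        if (levels < 1 || x.length % (1 << levels) != 0) {
            throw new IllegalArgumentException("length must be a multiple of 2^levels");
        }
        List<double[]> bands = new ArrayList<>();
        double[] approx = x.clone();
        for (int j = 1; j <= levels; j++) {
            int half = approx.length / 2;
            double[] a = new double[half];
            double[] d = new double[half];
            for (int i = 0; i < half; i++) {
                a[i] = (approx[2 * i] + approx[2 * i + 1]) * INV_SQRT2;  // low-pass + downsample
                d[i] = (approx[2 * i] - approx[2 * i + 1]) * INV_SQRT2;  // high-pass + downsample
            }
            bands.add(0, d);   // coarser details go in front
            approx = a;        // only the low-pass branch is split again
        }
        bands.add(0, approx);
        return bands;
    }
}
```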

Relationship to the mother wavelet

The filterbank implementation of wavelets can be interpreted as computing the wavelet coefficients of a discrete set of child wavelets for a given mother wavelet $\psi(t)$. In the case of the discrete wavelet transform, the mother wavelet is shifted and scaled by powers of two:

$$\psi_{j,k}(t) = \frac{1}{\sqrt{2^j}}\, \psi\!\left(\frac{t - k 2^j}{2^j}\right)$$

where $j$ is the scale parameter and $k$ is the shift parameter, both of which are integers.

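Recall that the wavelet coefficient $\gamma$ of a signal $x(t)$ is the projection of $x(t)$ onto a wavelet, and let $x(t)$ be a signal of length $2^N$. In the case of a child wavelet in the discrete family above,

$$\gamma_{jk} = \int_{-\infty}^{\infty} x(t)\, \frac{1}{\sqrt{2^j}}\, \psi\!\left(\frac{t - k 2^j}{2^j}\right) dt$$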

Now fix $j$ at a particular scale, so that $\gamma_{jk}$ is a function of $k$ only. In light of the above equation, $\gamma_{jk}$ can be viewed as a convolution of $x(t)$ with a dilated, reflected, and normalized version of the mother wavelet, $h(t) = \frac{1}{\sqrt{2^j}}\,\psi\!\left(\frac{-t}{2^j}\right)$, sampled at the points $t = k 2^j$ (that is, $2^j, 2 \cdot 2^j, \ldots, 2^N$). But this is precisely what the detail coefficients give at level $j$ of the discrete wavelet transform. Therefore, for an appropriate choice of $h[n]$ and $g[n]$, the detail coefficients of the filter bank correspond exactly to a wavelet coefficient of a discrete set of child wavelets for a given mother wavelet $\psi(t)$.

As an example, consider the discrete Haar wavelet, whose mother wavelet is $\psi = [1, -1]$. Then the dilated, reflected, and normalized version of this wavelet is $h[n] = \frac{1}{\sqrt{2}}[-1, 1]$, which is, indeed, the high-pass decomposition filter for the discrete Haar wavelet transform.
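The reflection-and-normalization step for the Haar case can be checked in a few lines. This sketch assumes the sampled mother wavelet is given as an array and applies only the level-1 factor $1/\sqrt{2}$ (no further dilation); the class name is illustrative. Reflecting $[1, -1]$ reverses it to $[-1, 1]$, and scaling gives $[-\tfrac{\sqrt2}{2}, \tfrac{\sqrt2}{2}]$.

```java
/** Reflect-and-normalize step for a sampled mother wavelet at level 1; names are illustrative. */
final class HaarFromMother {
    private HaarFromMother() {}

    /** Returns h[n] = psi[-n] / sqrt(2), re-indexed so that h starts at index 0. */
    static double[] reflectAndNormalize(double[] psi) {
        double scale = 1.0 / Math.sqrt(2.0);
        double[] h = new double[psi.length];
        for (int n = 0; n < psi.length; n++) {
            h[n] = scale * psi[psi.length - 1 - n];   // reversal = reflection n -> -n plus a shift
        }
        return h;
    }
}
```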

Time complexity

The filterbank implementation of the discrete wavelet transform takes only O(N) time in certain cases, as compared to O(N log N) for the fast Fourier transform.

Note that if $g[n]$ and $h[n]$ both have constant length (i.e. their length is independent of N), then $x * h$ and $x * g$ each take O(N) time. The wavelet filterbank does each of these two O(N) convolutions, then splits the signal into two branches of size N/2. But it only recursively splits the upper branch convolved with $g[n]$ (as contrasted with the FFT, which recursively splits both the upper branch and the lower branch). This leads to the following recurrence relation

$$T(N) = 2N + T\left(\frac{N}{2}\right)$$

which leads to an O(N) time for the entire operation, as can be shown by a geometric series expansion of the above relation.
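Unrolling the recurrence $T(N) = 2N + T(N/2)$ makes the geometric series explicit. Assuming $N = 2^m$ and a constant cost $T(1)$ for a single sample:

$$
\begin{aligned}
T(N) &= 2N + T\!\left(\tfrac{N}{2}\right) = 2N + N + T\!\left(\tfrac{N}{4}\right) = \cdots\\
     &= 2N \sum_{i=0}^{m-1} 2^{-i} + T(1) = 4N\left(1 - \tfrac{1}{N}\right) + T(1) = O(N).
\end{aligned}
$$

By contrast, the FFT recurrence $T(N) = 2T(N/2) + O(N)$ has $\log_2 N$ levels, each costing $O(N)$, which gives $O(N \log N)$.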

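As an example, the discrete Haar wavelet transform runs in linear time, since in that case $h[n]$ and $g[n]$ have constant length 2:

$$h[n] = \left[-\tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2}\right], \qquad g[n] = \left[\tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2}\right]$$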

The locality of wavelets, coupled with the O(N) complexity, guarantees that the transform can be computed online (on a streaming basis). This property is in sharp contrast to the FFT, which requires access to the entire signal at once. It also applies to the multi-scale transform and to multi-dimensional transforms (e.g., the 2-D DWT).[24]
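To illustrate the online property, the following sketch (class and method names are hypothetical) computes orthonormal Haar coefficients as samples arrive. It keeps at most one unpaired value per level, emits a level-1 detail as soon as a pair is complete, and passes the pair's approximation up to the next level. This relies on the Haar filters having length 2; longer filters would need a small buffer of recent samples per level, but the memory would still not grow with the signal length.

```java
import java.util.ArrayList;
import java.util.List;

/** Online orthonormal Haar transform: coefficients are emitted as soon as their inputs are available. */
final class StreamingHaar {
    private static final double INV_SQRT2 = 1.0 / Math.sqrt(2.0);

    private final Double[] pending;                        // at most one unpaired value per level (null = none)
    private final List<List<Double>> details = new ArrayList<>();
    private final List<Double> approximations = new ArrayList<>();

    StreamingHaar(int levels) {
        pending = new Double[levels];
        for (int j = 0; j < levels; j++) {
            details.add(new ArrayList<>());
        }
    }

    /** Feeds one sample; may emit details at several levels and, every 2^levels samples, one approximation. */
    void push(double sample) {
        double value = sample;
        for (int j = 0; j < pending.length; j++) {
            if (pending[j] == null) {                      // first half of a pair: wait for its partner
                pending[j] = value;
                return;
            }
            double first = pending[j];
            pending[j] = null;
            details.get(j).add((first - value) * INV_SQRT2);
            value = (first + value) * INV_SQRT2;           // approximation moves up one level
        }
        approximations.add(value);                         // coarsest approximation of this block
    }

    List<Double> detailsAt(int level) {                    // level 1 = finest
        return details.get(level - 1);
    }

    List<Double> approximations() {
        return approximations;
    }
}
```

Pushing 1, 2, 3, 4 into `new StreamingHaar(2)` produces level-1 details (−1/√2, −1/√2), the level-2 detail −2 and the approximation 5, the same values a batch orthonormal Haar transform gives for that block.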

Other transforms

- The Adam7 algorithm, used for interlacing in the Portable Network Graphics (PNG) format, is a multiscale model of the data which is similar to a DWT with Haar wavelets. Unlike the DWT, it has a specific scale: it starts from an 8×8 block, and it downsamples the image rather than decimating it (low-pass filtering, then downsampling). It thus offers worse frequency behavior, showing artifacts (pixelation) at the early stages, in return for simpler implementation.

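- The multiplicative (or geometric) discrete wavelet transform[25] is a variant that applies to an observation model $y = f \cdot \varepsilon$ involving interactions of a positive regular function $f$ and a multiplicative independent positive noise $\varepsilon$, with $\mathbb{E}\,\varepsilon = 1$. Denote $\mathcal{W}$ a wavelet transform. Since $f \cdot \varepsilon = f + f(\varepsilon - 1)$, the standard (additive) discrete wavelet transform is such that $\mathcal{W} y = \mathcal{W} f + \mathcal{W} f(\varepsilon - 1)$, where the detail coefficients $\mathcal{W} f(\varepsilon - 1)$ cannot be considered sparse in general, due to the contribution of $f$ in the latter expression. In the multiplicative framework, the wavelet transform $\mathcal{W}^{\star}$ is such that $\mathcal{W}^{\star} y = \mathcal{W}^{\star} f \times \mathcal{W}^{\star} \varepsilon$. This 'embedding' of wavelets in a multiplicative algebra involves generalized multiplicative approximations and detail operators. For instance, in the case of the Haar wavelets, up to the normalization coefficient $\alpha$, the standard $\mathcal{W}$ approximations (arithmetic means) $c_k = \alpha(y_k + y_{k-1})$ and details (arithmetic differences) $d_k = \alpha(y_k - y_{k-1})$ become, respectively, geometric mean approximations $c^{\star}_k = (y_k \times y_{k-1})^{\alpha}$ and geometric differences (details) $d^{\star}_k = \left(\frac{y_k}{y_{k-1}}\right)^{\alpha}$ when using $\mathcal{W}^{\star}$.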
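A small sketch of the geometric Haar operators, assuming strictly positive samples and non-overlapping pairs in which $y_{2k}$ plays the role of $y_{k-1}$ and $y_{2k+1}$ the role of $y_k$; the pairing, the class name and the free parameter `alpha` are illustrative choices, not taken from the cited paper. Because powers and ratios are multiplicative, both operators factor over $y = f \cdot \varepsilon$, and a constant $f$ contributes only the neutral value 1 to the details.

```java
/** Geometric (multiplicative) Haar operators on non-overlapping pairs; assumes strictly positive samples. */
final class GeometricHaar {
    private GeometricHaar() {}

    /** Geometric-mean approximation c*_k = (y_k * y_{k-1})^alpha, with (y_{k-1}, y_k) = (y[2k], y[2k+1]). */
    static double[] approx(double[] y, double alpha) {
        double[] c = new double[y.length / 2];
        for (int k = 0; k < c.length; k++) {
            c[k] = Math.pow(y[2 * k + 1] * y[2 * k], alpha);
        }
        return c;
    }

    /** Geometric difference d*_k = (y_k / y_{k-1})^alpha; equals 1 wherever the pair is constant. */
    static double[] detail(double[] y, double alpha) {
        double[] d = new double[y.length / 2];
        for (int k = 0; k < d.length; k++) {
            d[k] = Math.pow(y[2 * k + 1] / y[2 * k], alpha);
        }
        return d;
    }
}
```

With the additive Haar detail $\alpha(y_k - y_{k-1})$, by contrast, the same noise on a constant $f$ yields details proportional to $f$.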

Code example

In its simplest form, the DWT is remarkably easy to compute.

The Haar wavelet in Java:

public static int[] discreteHaarWaveletTransform(int[] input) {
    // This function assumes that input.length = 2^n with n >= 1.
    if (input.length < 2 || Integer.bitCount(input.length) != 1) {
        throw new IllegalArgumentException("input.length must be a power of two >= 2");
    }
    int[] work = input.clone(); // work on a copy so the caller's array is not modified
    int[] output = new int[input.length];
    for (int length = input.length / 2; ; length = length / 2) {
        // length is the current length of the working area of the output array.
        // length starts at half of the array size and is halved every iteration until it is 1.
        for (int i = 0; i < length; ++i) {
            int sum = work[i * 2] + work[i * 2 + 1];
            int difference = work[i * 2] - work[i * 2 + 1];
            output[i] = sum;
            output[length + i] = difference;
        }
        if (length == 1) {
            return output;
        }
        // Copy the sums back into the working array for the next, coarser iteration.
        System.arraycopy(output, 0, work, 0, length);
    }
}
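Because each (sum, difference) pair determines its two inputs, the routine above can be inverted exactly in integer arithmetic. The following sketch assumes the coefficient layout that routine produces: the total sum first, then the differences from the coarsest level to the finest. The class and method names are illustrative and not part of any library.

```java
/** Exact integer inverse of the unnormalized Haar sum/difference transform; names are illustrative. */
final class HaarInverse {
    private HaarInverse() {}

    /** Expects [total sum, coarsest difference, ..., finest differences], length a power of two. */
    static int[] inverseDiscreteHaarWaveletTransform(int[] coefficients) {
        int n = coefficients.length;
        int[] sums = new int[n];
        sums[0] = coefficients[0];
        for (int length = 1; length < n; length *= 2) {
            int[] next = new int[2 * length];
            for (int i = 0; i < length; i++) {
                int sum = sums[i];
                int difference = coefficients[length + i];
                // sum = a + b and difference = a - b have the same parity, so both divisions are exact.
                next[2 * i] = (sum + difference) / 2;
                next[2 * i + 1] = (sum - difference) / 2;
            }
            System.arraycopy(next, 0, sums, 0, 2 * length);
        }
        return sums;
    }
}
```

For example, the input (4, 2, 5, 5) transforms to (16, −4, 2, 0), and `inverseDiscreteHaarWaveletTransform` maps (16, −4, 2, 0) back to (4, 2, 5, 5).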

Complete Java code for a 1-D and 2-D DWT using Haar, Daubechies, Coiflet, and Legendre wavelets is available from the open source project: JWave. Furthermore, a fast lifting implementation of the discrete biorthogonal CDF 9/7 wavelet transform in C, used in the JPEG 2000 image compression standard can be found here (archived 5 March 2012).

Example of above code

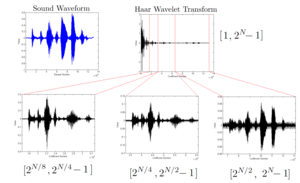

This figure shows an example of applying the above code to compute the Haar wavelet coefficients on a sound waveform. This example highlights two key properties of the wavelet transform:

- Natural signals often have some degree of smoothness, which makes them sparse in the wavelet domain. There are far fewer significant components in the wavelet domain in this example than there are in the time domain, and most of the significant components are towards the coarser coefficients on the left. Hence, natural signals are compressible in the wavelet domain.

- The wavelet transform is a multiresolution, bandpass representation of a signal. This can be seen directly from the filterbank definition of the discrete wavelet transform given in this article. For a signal of length $2^N$, the coefficients in the range $[2^{N-j}, 2^{N-j+1}]$ represent a version of the original signal which is in the pass-band $\left[\frac{\pi}{2^j}, \frac{\pi}{2^{j-1}}\right]$. This is why zooming in on these ranges of the wavelet coefficients looks so similar in structure to the original signal. Ranges which are closer to the left (larger $j$ in the above notation) are coarser representations of the signal, while ranges to the right represent finer details.
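Both properties can be checked on a toy signal. The sketch below repeats the same integer sum/difference scheme as the routine above (as a self-contained copy) on a 16-sample piecewise-constant signal, an idealized, Haar-friendly stand-in for a smooth sound waveform, and adds helpers giving the index range of the level-$j$ detail band for a signal of length $2^N$, with level 1 as the finest. Class and method names are illustrative.

```java
/** Haar sum/difference transform plus helpers for locating each level's detail band. */
final class HaarBands {
    private HaarBands() {}

    /** Same unnormalized scheme as the article's routine; input length must be a power of two >= 2. */
    static int[] haar(int[] input) {
        if (input.length < 2 || Integer.bitCount(input.length) != 1) {
            throw new IllegalArgumentException("length must be a power of two >= 2");
        }
        int[] work = input.clone();
        int[] out = new int[input.length];
        for (int length = input.length / 2; length >= 1; length /= 2) {
            for (int i = 0; i < length; i++) {
                out[i] = work[2 * i] + work[2 * i + 1];
                out[length + i] = work[2 * i] - work[2 * i + 1];
            }
            System.arraycopy(out, 0, work, 0, length);   // sums feed the next, coarser level
        }
        return out;
    }

    /** First index of the level-j detail band for a signal of length 2^bigN (level 1 = finest). */
    static int bandStart(int bigN, int j) {
        return 1 << (bigN - j);
    }

    /** One past the last index of the level-j detail band. */
    static int bandEndExclusive(int bigN, int j) {
        return 1 << (bigN - j + 1);
    }

    static int countNonZero(int[] a) {
        int count = 0;
        for (int v : a) {
            if (v != 0) {
                count++;
            }
        }
        return count;
    }
}
```

For the step signal made of eleven 3s followed by five 7s, only 5 of the 16 coefficients are nonzero (68, −20, −12, −4 and −4), and the finest level occupies indices 8 to 15.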

See also

- Discrete cosine transform (DCT)

- Wavelet

- Wavelet transform

- Wavelet compression

- List of wavelet-related transforms

References

- A.N. Akansu, R.A. Haddad and H. Caglar, Perfect Reconstruction Binomial QMF-Wavelet Transform, Proc. SPIE Visual Communications and Image Processing, pp. 609–618, vol. 1360, Lausanne, Sept. 1990.

- Akansu, Ali N.; Haddad, Richard A. (1992), Multiresolution signal decomposition: transforms, subbands, and wavelets, Boston, MA: Academic Press, ISBN 978-0-12-047141-6

- A.N. Akansu, Filter Banks and Wavelets in Signal Processing: A Critical Review, Proc. SPIE Video Communications and PACS for Medical Applications (Invited Paper), pp. 330–341, vol. 1977, Berlin, Oct. 1993.

- Selesnick, I.W.; Baraniuk, R.G.; Kingsbury, N.C., 2005, The dual-tree complex wavelet transform

- Sullivan, Gary (8–12 December 2003). "General characteristics and design considerations for temporal subband video coding". ITU-T. Video Coding Experts Group. Retrieved 13 September 2019.

- Bovik, Alan C. (2009). The Essential Guide to Video Processing. Academic Press. p. 355. ISBN 9780080922508.

- Gall, Didier Le; Tabatabai, Ali J. (1988). "Sub-band coding of digital images using symmetric short kernel filters and arithmetic coding techniques". ICASSP-88., International Conference on Acoustics, Speech, and Signal Processing. pp. 761–764 vol.2. doi:10.1109/ICASSP.1988.196696. S2CID 109186495.

- Ali Naci Akansu, An Efficient QMF-Wavelet Structure (Binomial-QMF Daubechies Wavelets), Proc. 1st NJIT Symposium on Wavelets, April 1990.

- Said, A.; Pearlman, W. A. (1996). "A new, fast, and efficient image codec based on set partitioning in hierarchical trees". IEEE Transactions on Circuits and Systems for Video Technology. 6 (3): 243–250. doi:10.1109/76.499834. ISSN 1051-8215. Retrieved 18 October 2019.

- S. Mallat, A Wavelet Tour of Signal Processing, 2nd ed. San Diego, CA: Academic, 1999.

- S. G. Mallat and S. Zhong, "Characterization of signals from multiscale edges," IEEE Trans. Pattern Anal. Mach. Intell., vol. 14, no. 7, pp. 710–732, Jul. 1992.

- Ince, Kiranyaz, Gabbouj, 2009, A generic and robust system for automated patient-specific classification of ECG signals

- "Novel method for stride length estimation with body area network accelerometers", IEEE BioWireless 2011, pp. 79–82

- Nasir, V.; Cool, J.; Sassani, F. (October 2019). "Intelligent Machining Monitoring Using Sound Signal Processed With the Wavelet Method and a Self-Organizing Neural Network". IEEE Robotics and Automation Letters. 4 (4): 3449–3456. doi:10.1109/LRA.2019.2926666. ISSN 2377-3766. S2CID 198474004.

- Broughton, S. Allen. "Wavelet Based Methods in Image Processing". www.rose-hulman.edu. Retrieved 2017-05-02.

- Chervyakov, N. I.; Lyakhov, P. A.; Nagornov, N. N. (2018-11-01). "Quantization Noise of Multilevel Discrete Wavelet Transform Filters in Image Processing". Optoelectronics, Instrumentation and Data Processing. 54 (6): 608–616. Bibcode:2018OIDP...54..608C. doi:10.3103/S8756699018060092. ISSN 1934-7944. S2CID 128173262.

- Akansu, Ali N.; Smith, Mark J. T. (31 October 1995). Subband and Wavelet Transforms: Design and Applications. Kluwer Academic Publishers. ISBN 0792396456.

- Akansu, Ali N.; Medley, Michael J. (6 December 2010). Wavelet, Subband and Block Transforms in Communications and Multimedia. Kluwer Academic Publishers. ISBN 978-1441950864.

- A.N. Akansu, P. Duhamel, X. Lin and M. de Courville Orthogonal Transmultiplexers in Communication: A Review, IEEE Trans. On Signal Processing, Special Issue on Theory and Applications of Filter Banks and Wavelets. Vol. 46, No.4, pp. 979–995, April, 1998.

- A.N. Akansu, W.A. Serdijn, and I.W. Selesnick, Wavelet Transforms in Signal Processing: A Review of Emerging Applications, Physical Communication, Elsevier, vol. 3, issue 1, pp. 1–18, March 2010.

- Pragada, S.; Sivaswamy, J. (2008-12-01). "Image Denoising Using Matched Biorthogonal Wavelets". 2008 Sixth Indian Conference on Computer Vision, Graphics Image Processing: 25–32. doi:10.1109/ICVGIP.2008.95. S2CID 15516486.

- "Thresholds for wavelet 1-D using Birgé-Massart strategy - MATLAB wdcbm". www.mathworks.com. Retrieved 2017-05-03.

- "how to get SNR for 2 images - MATLAB Answers - MATLAB Central". www.mathworks.com. Retrieved 2017-05-10.

- Barina, David (2020). "Real-time wavelet transform for infinite image strips". Journal of Real-Time Image Processing. 18 (3). Springer: 585–591. doi:10.1007/s11554-020-00995-8. S2CID 220396648. Retrieved 2020-07-09.

- Atto, Abdourrahmane M.; Trouvé, Emmanuel; Nicolas, Jean-Marie; Lê, Thu Trang (2016). "Wavelet Operators and Multiplicative Observation Models—Application to SAR Image Time-Series Analysis" (PDF). IEEE Transactions on Geoscience and Remote Sensing. 54 (11): 6606–6624. Bibcode:2016ITGRS..54.6606A. doi:10.1109/TGRS.2016.2587626. S2CID 1860049.

External links

- Stanford's WaveLab in matlab

- libdwt, a cross-platform DWT library written in C

- Concise Introduction to Wavelets by René Puschinger

- ^ Prasad, Akhilesh; Maan, Jeetendrasingh; Verma, Sandeep Kumar (2021). "Wavelet transforms associated with the index Whittaker transform". Mathematical Methods in the Applied Sciences. 44 (13): 10734–10752. Bibcode:2021MMAS...4410734P. doi:10.1002/mma.7440. ISSN 1099-1476. S2CID 235556542.

![{\displaystyle y[n]=(x*g)[n]=\sum \limits _{k=-\infty }^{\infty }{x[k]g[n-k]}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/f4eb91f7893c66437b324aa633b004bdab8fe35e)

![{\displaystyle y_{\mathrm {low} }[n]=\sum \limits _{k=-\infty }^{\infty }{x[k]g[2n-k]}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2888626ff63016f7500fcd46ca830fc9a4257f23)

![{\displaystyle y_{\mathrm {high} }[n]=\sum \limits _{k=-\infty }^{\infty }{x[k]h[2n-k]}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/0771b3bacd7a8fe2f620d96abd981d1867c31269)

![{\displaystyle (y\downarrow k)[n]=y[kn]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c85edbf80c21cb06f68ccbb1048db49557999c0e)

![{\displaystyle h[n]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/89981bbbb05ffd469eeadb828c18359965985e46)

![{\displaystyle g[n]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3c5e1d771a2385e9aeb71838a40425bb07c89525)

![{\displaystyle \psi =[1,-1]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/184cea2b9e81c07ceb47b147fef04a19a2c79048)

![{\displaystyle h[n]={\frac {1}{\sqrt {2}}}[-1,1]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9bab290b077bb173832a55e0d0e9790f96d054d6)

![{\displaystyle h[n]=\left[{\frac {-{\sqrt {2}}}{2}},{\frac {\sqrt {2}}{2}}\right]g[n]=\left[{\frac {\sqrt {2}}{2}},{\frac {\sqrt {2}}{2}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/040faee166a5945c0fd99b632808e2143c978b0f)

![{\displaystyle [2^{N-j},2^{N-j+1}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/5a62478da394c615e67c78a0816606c4400c2543)

![{\displaystyle \left[{\frac {\pi }{2^{j}}},{\frac {\pi }{2^{j-1}}}\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1818a598dac087031bfd7681f2aa03ee59a3dca5)