Disinformation

Disinformation is false information deliberately spread to deceive people.[1][2][3] Disinformation is an orchestrated adversarial activity in which actors employ strategic deceptions and media manipulation tactics to advance political, military, or commercial goals.[4] Disinformation is implemented through attacks that "weaponize multiple rhetorical strategies and forms of knowing—including not only falsehoods but also truths, half-truths, and value judgements—to exploit and amplify culture wars and other identity-driven controversies."[5]

In contrast, misinformation refers to inaccuracies that stem from inadvertent error.[6] Misinformation can be used to create disinformation when known misinformation is deliberately disseminated.[7] "Fake news" has sometimes been categorized as a type of disinformation, but scholars have advised against using the two terms interchangeably, and against using "fake news" at all in academic writing, since politicians have weaponized the term to describe any unfavorable news coverage or information.[8]

Etymology

The English word disinformation comes from the application of the Latin prefix dis- to information, giving the meaning "reversal or removal of information". The rarely used word had appeared with this usage in print at least as far back as 1887.[10][11][12][13]

Some consider it a loan translation of the Russian дезинформация, transliterated as dezinformatsiya,[1][2][3] apparently derived from the title of a KGB black propaganda department.[14][2][15][1] Soviet planners in the 1950s defined disinformation as "dissemination (in the press, on the radio, etc.) of false reports intended to mislead public opinion."[16]

Disinformation first appeared in dictionaries in 1985, specifically Webster's New College Dictionary and the American Heritage Dictionary.[17] In 1986, the term disinformation was not defined in Webster's New World Thesaurus or New Encyclopædia Britannica.[1] After the Soviet term became widely known in the 1980s, native speakers of English broadened the term to mean "any government communication (either overt or covert) containing intentionally false and misleading material, often combined selectively with true information, which seeks to mislead and manipulate either elites or a mass audience."[3]

By 1990, use of the term disinformation had fully established itself in the English language within the lexicon of politics.[18] By 2001, the term disinformation had come to be known as simply a more civil phrase for saying someone was lying.[19] Stanley B. Cunningham wrote in his 2002 book The Idea of Propaganda that disinformation had become pervasively used as a synonym for propaganda.[20]

Operationalization

The Shorenstein Center at Harvard University defines disinformation research as an academic field that studies “the spread and impacts of misinformation, disinformation, and media manipulation,” including “how it spreads through online and offline channels, and why people are susceptible to believing bad information, and successful strategies for mitigating its impact.”[21] According to a 2023 research article published in New Media & Society,[4] disinformation circulates on social media through deception campaigns implemented in multiple ways, including astroturfing, conspiracy theories, clickbait, culture wars, echo chambers, hoaxes, fake news, propaganda, pseudoscience, and rumors.

To distinguish between similar terms, including misinformation and malinformation, scholars collectively agree on the following definitions: (1) disinformation is the strategic dissemination of false information with the intention to cause public harm;[22] (2) misinformation is the unintentional spread of false information; and (3) malinformation is factual information disseminated with the intention to cause harm.[23][24] Together, these terms are abbreviated 'DMMI'.[25]
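The three definitions turn on two questions: whether the information is false, and whether it is spread with the intention to cause harm. The following is a minimal illustrative sketch of that two-way distinction in Python, not an established classification tool. The names `InfoType` and `classify` are hypothetical, and the fourth label, plain "information" for true content spread without harmful intent, is an assumption added only to complete the grid.

```python
from enum import Enum


class InfoType(Enum):
    """Labels for the two-by-two grid of falsity and harmful intent."""
    DISINFORMATION = "disinformation"   # false, intended to harm
    MISINFORMATION = "misinformation"   # false, no intent to harm
    MALINFORMATION = "malinformation"   # factual, intended to harm
    INFORMATION = "information"         # factual, no intent to harm (assumed label)


def classify(is_false: bool, intends_harm: bool) -> InfoType:
    """Map the two yes/no judgments onto one of the four labels."""
    if is_false and intends_harm:
        return InfoType.DISINFORMATION
    if is_false:
        return InfoType.MISINFORMATION
    if intends_harm:
        return InfoType.MALINFORMATION
    return InfoType.INFORMATION


if __name__ == "__main__":
    # Print the label for every combination of the two judgments.
    for is_false in (True, False):
        for intends_harm in (True, False):
            label = classify(is_false, intends_harm)
            print(f"false={is_false}, harm={intends_harm}: {label.value}")
```

In practice both inputs are judgments that are hard to establish, intent in particular, so the sketch shows only how the terms relate to one another, not how a given message would be assessed.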

Comparisons with propaganda

Whether and to what degree disinformation and propaganda overlap is subject to debate. Some (such as the U.S. Department of State) define propaganda as the use of non-rational arguments to either advance or undermine a political ideal, and use disinformation as an alternative name for undermining propaganda,[26] while others consider them to be separate concepts altogether.[27] One popular distinction holds that disinformation also describes politically motivated messaging designed explicitly to engender public cynicism, uncertainty, apathy, distrust, and paranoia, all of which disincentivize citizen engagement and mobilization for social or political change.[16]

Practice

Disinformation is the label often given to foreign information manipulation and interference (FIMI).[28][29] Studies on disinformation are often concerned with the content of activity whereas the broader concept of FIMI is more concerned with the "behaviour of an actor" that is described through the military doctrine concept of tactics, techniques, and procedures (TTPs).[28]

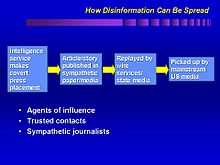

Disinformation is primarily carried out by government intelligence agencies, but has also been used by non-governmental organizations and businesses.[30] Front groups are a form of disinformation, as they mislead the public about their true objectives and who their controllers are.[31] Most recently, disinformation has been deliberately spread through social media in the form of "fake news", disinformation masked as legitimate news articles and meant to mislead readers or viewers.[32] Disinformation may include distribution of forged documents, manuscripts, and photographs, or spreading dangerous rumours and fabricated intelligence. Use of these tactics can lead to blowback, however, causing unintended consequences such as defamation lawsuits or damage to the dis-informer's reputation.[31]

Worldwide

Soviet disinformation

Russian disinformation

American disinformation

The United States Intelligence Community appropriated use of the term disinformation in the 1950s from the Russian dezinformatsiya, and began to use similar strategies[43][44] during the Cold War and in conflict with other nations.[15] The New York Times reported in 2000 that during the CIA's effort to substitute Mohammed Reza Pahlavi for then-Prime Minister of Iran Mohammad Mossadegh, the CIA placed fictitious stories in the local newspaper.[15] Reuters documented how, subsequent to the 1979 Soviet Union invasion of Afghanistan during the Soviet–Afghan War, the CIA put false articles in newspapers of Islamic-majority countries, inaccurately stating that Soviet embassies had "invasion day celebrations".[15] Reuters noted a former U.S. intelligence officer said they would attempt to gain the confidence of reporters and use them as secret agents, to affect a nation's politics by way of their local media.[15]

In October 1986, the term gained increased currency in the U.S. when it was revealed that two months previously, the Reagan Administration had engaged in a disinformation campaign against then-leader of Libya, Muammar Gaddafi.[45] White House representative Larry Speakes said reports of a planned attack on Libya as first broken by The Wall Street Journal on August 25, 1986, were "authoritative", and other newspapers including The Washington Post then wrote articles saying this was factual.[45] U.S. State Department representative Bernard Kalb resigned from his position in protest over the disinformation campaign, and said: "Faith in the word of America is the pulse beat of our democracy."[45]

The executive branch of the Reagan administration kept watch on disinformation campaigns through three yearly publications by the Department of State: Active Measures: A Report on the Substance and Process of Anti-U.S. Disinformation and Propaganda Campaigns (1986); Report on Active Measures and Propaganda, 1986–87 (1987); and Report on Active Measures and Propaganda, 1987–88 (1989).[43]

Response

Responses from cultural leaders

Pope Francis condemned disinformation in a 2016 interview, after being made the subject of a fake news website during the 2016 U.S. election cycle which falsely claimed that he supported Donald Trump.[46][47][48] He said the worst thing the news media could do was spread disinformation. He said the act was a sin,[49][50] comparing those who spread disinformation to individuals who engage in coprophilia.[51][52]

Ethics in warfare

In a contribution to the 2014 book Military Ethics and Emerging Technologies, writers David Danks and Joseph H. Danks discuss the ethical implications in using disinformation as a tactic during information warfare.[53] They note there has been a significant degree of philosophical debate over the issue as related to the ethics of war and use of the technique.[53] The writers describe a position whereby the use of disinformation is occasionally allowed, but not in all situations.[53] Typically the ethical test to consider is whether the disinformation was performed out of a motivation of good faith and acceptable according to the rules of war.[53] By this test, the tactic during World War II of putting fake inflatable tanks in visible locations on the Pacific Islands in order to falsely present the impression that there were larger military forces present would be considered as ethically permissible.[53] Conversely, disguising a munitions plant as a healthcare facility in order to avoid attack would be outside the bounds of acceptable use of disinformation during war.[53]

Research

Research related to disinformation studies is increasing as an applied area of inquiry.[54][55] Advocates have called for disinformation to be formally classified as a cybersecurity threat because of its growth on social networking sites.[56] Researchers working for the University of Oxford found that over a three-year period the number of governments engaging in online disinformation rose from 28 in 2017, to 40 in 2018, and 70 in 2019. Despite the proliferation of social media websites, Facebook and Twitter showed the most activity in terms of active disinformation campaigns. Techniques reported on included the use of bots to amplify hate speech, the illegal harvesting of data, and paid trolls to harass and threaten journalists.[57]

Whereas disinformation research focuses primarily on how actors orchestrate deceptions on social media, primarily via fake news, new research investigates how people take what started as deceptions and circulate them as their personal views.[5] As a result, research shows that disinformation can be conceptualized as a program that encourages engagement in oppositional fantasies (i.e., culture wars), through which disinformation circulates as rhetorical ammunition for never-ending arguments.[5] As disinformation entangles with culture wars, identity-driven controversies constitute a vehicle through which disinformation disseminates on social media. This means that disinformation thrives, not despite raucous grudges but because of them. The reason is that controversies provide fertile ground for never-ending debates that solidify points of view.[5]

Scholars have pointed out that disinformation is not only a foreign threat, as domestic purveyors of disinformation are also leveraging traditional media outlets such as newspapers, radio stations, and television news media to disseminate false information.[58] Current research suggests right-wing online political activists in the United States may be more likely to use disinformation as a strategy and tactic.[59] Governments have responded with a wide range of policies to address concerns about the potential threats that disinformation poses to democracy; however, there is little agreement in elite policy discourse or academic literature as to what it means for disinformation to threaten democracy, and how different policies might help to counter its negative implications.[60]

Consequences of exposure to disinformation online

There is a broad consensus amongst scholars that there is a high degree of disinformation, misinformation, and propaganda online; however, it is unclear what effect such disinformation has on political attitudes in the public and, therefore, on political outcomes.[61] This conventional wisdom has come mostly from investigative journalists, with a particular rise during the 2016 U.S. election: some of the earliest work came from Craig Silverman at BuzzFeed News.[62] Cass Sunstein supported this in #Republic, arguing that the internet would become rife with echo chambers and informational cascades of misinformation leading to a highly polarized and ill-informed society.[63]

Research after the 2016 election found: (1) for 14 percent of Americans social media was their "most important" source of election news; (2) known false news stories "favoring Trump were shared a total of 30 million times on Facebook, while those favoring Clinton were shared 8 million times"; (3) the average American adult saw fake news stories, "with just over half of those who recalled seeing them believing them"; and (4) people are more likely to "believe stories that favor their preferred candidate, especially if they have ideologically segregated social media networks."[64] Correspondingly, whilst there is wide agreement that the digital spread and uptake of disinformation during the 2016 election was massive and very likely facilitated by foreign agents, there is an ongoing debate on whether all this had any actual effect on the election. For example, a double-blind randomized controlled experiment by researchers from the London School of Economics (LSE) found that exposure to online fake news about either Trump or Clinton had no significant effect on intentions to vote for those candidates. Researchers who examined the influence of Russian disinformation on Twitter during the 2016 US presidential campaign found that exposure to disinformation was (1) concentrated among a tiny group of users, (2) primarily among Republicans, and (3) eclipsed by exposure to legitimate political news media and politicians. Finally, they find "no evidence of a meaningful relationship between exposure to the Russian foreign influence campaign and changes in attitudes, polarization, or voting behavior."[65] As such, despite its mass dissemination during the 2016 presidential election, online fake news or disinformation probably did not cost Hillary Clinton the votes needed to secure the presidency.[66]

Research on this topic is continuing, and some evidence is less clear. For example, internet access and time spent on social media do not appear to be correlated with polarisation.[67] Further, misinformation appears not to significantly change the political knowledge of those exposed to it.[68] There seems to be a higher level of diversity of news sources that users are exposed to on Facebook and Twitter than conventional wisdom would dictate, as well as a higher frequency of cross-spectrum discussion.[69][70] Other evidence has found that disinformation campaigns rarely succeed in altering the foreign policies of the targeted states.[71]

Research is also challenging because disinformation is meant to be difficult to detect and some social media companies have discouraged outside research efforts.[72] For example, researchers found disinformation made "existing detection algorithms from traditional news media ineffective or not applicable...[because disinformation] is intentionally written to mislead readers...[and] users' social engagements with fake news produce data that is big, incomplete, unstructured, and noisy."[72] Facebook, the largest social media company, has been criticized by analytical journalists and scholars for preventing outside research of disinformation.[73][74][75][76]

Alternative perspectives and critiques

Researchers have criticized the framing of disinformation as being limited to technology platforms, removed from its wider political context and inaccurately implying that the media landscape was otherwise well-functioning.[77] "The field possesses a simplistic understanding of the effects of media technologies; overemphasizes platforms and underemphasizes politics; focuses too much on the United States and Anglocentric analysis; has a shallow understanding of political culture and culture in general; lacks analysis of race, class, gender, and sexuality as well as status, inequality, social structure, and power; has a thin understanding of journalistic processes; and, has progressed more through the exigencies of grant funding than the development of theory and empirical findings."[78]

Alternative perspectives have been proposed:

- Moving beyond fact-checking and media literacy to study a pervasive phenomenon as something that involves more than news consumption.

- Moving beyond technical solutions including AI-enhanced fact checking to understand the systemic basis of disinformation.

- Develop a theory that goes beyond Americentrism to develop a global perspective, understand cultural imperialism and Third World dependency on Western news,[79] and understand disinformation in the Global South.[80]

- Develop market-oriented disinformation research that examines the financial incentives and business models that nudge content creators and digital platforms to circulate disinformation online.[4]

- Include a multidisciplinary approach, involving history, political economy, ethnic studies, feminist studies, and science and technology studies.

- Develop understandings of gender-based disinformation (GBD), defined as "the dissemination of false or misleading information attacking women (especially political leaders, journalists and public figures), basing the attack on their identity as women."[81][82]

Strategies for spreading disinformation

Disinformation attack

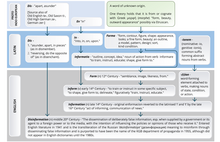

The research literature on how disinformation spreads is growing.[61] Studies show that the spread of disinformation in social media can be classified into two broad stages: seeding and echoing.[5] "Seeding" is when malicious actors strategically insert deceptions, such as fake news, into a social media ecosystem, and "echoing" is when the audience disseminates disinformation argumentatively as their own opinions, often by incorporating it into a confrontational fantasy.

Internet manipulation

Studies show four main methods of seeding disinformation online:[61]

- Selective censorship

- Manipulation of search rankings

- Hacking and releasing

- Directly sharing disinformation

See also

- Active Measures Working Group

- Agitprop

- Black propaganda

- Censorship

- Chinese information operations and information warfare

- Counter Misinformation Team

- COVID-19 misinformation

- Deepfakes

- Demoralization (warfare)

- Denial and deception

- Disinformation attack

- Disinformation in the 2022 Russian invasion of Ukraine

- Fake news

- False flag

- Fear, uncertainty and doubt

- Gaslighting

- Internet manipulation

- Knowledge falsification

- Kompromat

- Manufacturing Consent

- Media manipulation

- Military deception

- Post-truth politics

- Propaganda in the Soviet Union

- Sharp power

- Social engineering (political science)

- The Disinformation Project

Notes

References

- ^ a b c d Ion Mihai Pacepa and Ronald J. Rychlak (2013), Disinformation: Former Spy Chief Reveals Secret Strategies for Undermining Freedom, Attacking Religion, and Promoting Terrorism, WND Books, pp. 4–6, 34–39, 75, ISBN 978-1-936488-60-5

- ^ a b c Bittman, Ladislav (1985), The KGB and Soviet Disinformation: An Insider's View, Pergamon-Brassey's, pp. 49–50, ISBN 978-0-08-031572-0

- ^ a b c Shultz, Richard H.; Godson, Roy (1984), Dezinformatsia: Active Measures in Soviet Strategy, Pergamon-Brassey's, pp. 37–38, ISBN 978-0-08-031573-7

- ^ a b c Diaz Ruiz, Carlos (2023). "Disinformation on digital media platforms: A market-shaping approach". New Media & Society. Online first: 1–24. doi:10.1177/14614448231207644. S2CID 264816011 – via SAGE.

- ^ a b c d e f Diaz Ruiz, Carlos; Nilsson, Tomas (16 May 2022). "Disinformation and Echo Chambers: How Disinformation Circulates in Social Media Through Identity-Driven Controversies". Journal of Public Policy & Marketing. 42: 18–35. doi:10.1177/07439156221103852. S2CID 248934562. Archived from the original on 20 June 2022. Retrieved 20 June 2022.

- ^ "Ireton, C & Posetti, J (2018) "Journalism, fake news & disinformation: handbook for journalism education and training" UNESCO". Archived from the original on 6 April 2023. Retrieved 7 August 2021.

- ^ Golbeck, Jennifer, ed. (2008), Computing with Social Trust, Human-Computer Interaction Series, Springer, pp. 19–20, ISBN 978-1-84800-355-2

- ^ Freelon, Deen; Wells, Chris (3 March 2020). "Disinformation as Political Communication". Political Communication. 37 (2): 145–156. doi:10.1080/10584609.2020.1723755. ISSN 1058-4609. S2CID 212897113. Archived from the original on 17 July 2023. Retrieved 17 July 2023.

- ^ a b Hadley, Newman (2022). "Author". Journal of Information Warfare. Archived from the original on 28 December 2022. Retrieved 28 December 2022.

Strategic communications advisor working across a broad range of policy areas for public and multilateral organisations. Counter-disinformation specialist and published author on foreign information manipulation and interference (FIMI).

- ^ "City & County Cullings (Early use of the word "disinformation" 1887)". Medicine Lodge Cresset. 17 February 1887. p. 3. Archived from the original on 24 May 2021. Retrieved 24 May 2021.

- ^ "Professor Young on Mars and disinformation (1892)". The Salt Lake Herald. 18 August 1892. p. 4. Archived from the original on 24 May 2021. Retrieved 24 May 2021.

- ^ "Pure nonsense (early use of the word disinformation) (1907)". The San Bernardino County Sun. 26 September 1907. p. 8. Archived from the original on 24 May 2021. Retrieved 24 May 2021.

- ^ "Support for Red Cross helps U.S. boys abroad, Rotary Club is told (1917)". The Sheboygan Press. 18 December 1917. p. 4. Archived from the original on 24 May 2021. Retrieved 24 May 2021.

- ^ Garth Jowett; Victoria O'Donnell (2005), "What Is Propaganda, and How Does It Differ From Persuasion?", Propaganda and Persuasion, Sage Publications, pp. 21–23, ISBN 978-1-4129-0898-6,

In fact, the word disinformation is a cognate for the Russian dezinformatsia, taken from the name of a division of the KGB devoted to black propaganda.

- ^ a b c d e Taylor, Adam (26 November 2016), "Before 'fake news,' there was Soviet 'disinformation'", The Washington Post, archived from the original on 14 May 2019, retrieved 3 December 2016

- ^ a b Jackson, Dean (2018), DISTINGUISHING DISINFORMATION FROM PROPAGANDA, MISINFORMATION, AND "FAKE NEWS" (PDF), National Endowment for Democracy, archived (PDF) from the original on 7 April 2022, retrieved 31 May 2022

- ^ Bittman, Ladislav (1988), The New Image-Makers: Soviet Propaganda & Disinformation Today, Brassey's Inc, pp. 7, 24, ISBN 978-0-08-034939-8

- ^ Martin, David (1990), The Web of Disinformation: Churchill's Yugoslav Blunder, Harcourt Brace Jovanovich, p. xx, ISBN 978-0-15-180704-8

- ^ Barton, Geoff (2001), Developing Media Skills, Heinemann, p. 124, ISBN 978-0-435-10960-8

- ^ Cunningham, Stanley B. (2002), "Disinformation (Russian: dezinformatsiya)", The Idea of Propaganda: A Reconstruction, Praeger, pp. 67–68, 110, ISBN 978-0-275-97445-9

- ^ "Disinformation". Shorenstein Center. Archived from the original on 30 October 2023. Retrieved 30 October 2023.

- ^ Center for Internet Security (3 October 2022). "Essential Guide to Election Security: Managing Mis-, Dis-, and Malinformation". CIS website. Archived from the original on 18 December 2023. Retrieved 18 December 2023.

- ^ Baines, Darrin; Elliott, Robert J. R. (April 2020). "Defining misinformation, disinformation and malinformation: An urgent need for clarity during the COVID-19 infodemic". Discussion Papers. Archived from the original on 14 December 2022. Retrieved 14 December 2022.

- ^ "Information disorder: Toward an interdisciplinary framework for research and policy making". Council of Europe Publishing. Archived from the original on 14 December 2022. Retrieved 14 December 2022.

- ^ Newman, Hadley. "Understanding the Differences Between Disinformation, Misinformation, Malinformation and Information – Presenting the DMMI Matrix". Draft Online Safety Bill (Joint Committee). UK: UK Government. Archived from the original on 4 January 2023. Retrieved 4 January 2023.

- ^ Can public diplomacy survive the internet? (PDF), May 2017, archived from the original (PDF) on 30 March 2019

- ^ The Menace of Unreality: How the Kremlin Weaponizes Information, Culture and Money (PDF), Institute of Modern Russia, 2014, archived from the original (PDF) on 3 February 2019

- ^ a b Newman, Hadley (2022). Foreign information manipulation and interference defence standards: Test for rapid adoption of the common language and framework 'DISARM' (PDF). NATO Strategic Communications Centre of Excellence. Latvia. p. 60. ISBN 978-952-7472-46-0. Archived from the original on 28 December 2022. Retrieved 28 December 2022 – via European Centre of Excellence for Countering Hybrid Threats.

- ^ European External Action Service (EEAS) (27 October 2021). "Tackling Disinformation, Foreign Information Manipulation & Interference".

- ^ Goldman, Jan (2006), "Disinformation", Words of Intelligence: A Dictionary, Scarecrow Press, p. 43, ISBN 978-0-8108-5641-7

- ^ a b Samier, Eugene A. (2014), Secrecy and Tradecraft in Educational Administration: The Covert Side of Educational Life, Routledge Research in Education, Routledge, p. 176, ISBN 978-0-415-81681-6

- ^ Tandoc, Edson C; Lim, Darren; Ling, Rich (7 August 2019). "Diffusion of disinformation: How social media users respond to fake news and why". Journalism. 21 (3): 381–398. doi:10.1177/1464884919868325. ISSN 1464-8849. S2CID 202281476.

- ^ Taylor, Adam (26 November 2016), "Before 'fake news,' there was Soviet 'disinformation'", The Washington Post, retrieved 3 December 2016

- ^ Martin J. Manning; Herbert Romerstein (2004), "Disinformation", Historical Dictionary of American Propaganda, Greenwood, pp. 82–83, ISBN 978-0-313-29605-5

- ^ Nicholas John Cull; David Holbrook Culbert; David Welch (2003), "Disinformation", Propaganda and Mass Persuasion: A Historical Encyclopedia, 1500 to the Present, ABC-CLIO, p. 104, ISBN 978-1610690713

- ^ Stukal, Denis; Sanovich, Sergey; Bonneau, Richard; Tucker, Joshua A. (February 2022). "Why Botter: How Pro-Government Bots Fight Opposition in Russia" (PDF). American Political Science Review. 116 (1). Cambridge and New York: Cambridge University Press on behalf of the American Political Science Association: 843–857. doi:10.1017/S0003055421001507. ISSN 1537-5943. LCCN 08009025. OCLC 805068983. S2CID 247038589. Retrieved 10 March 2022.

- ^ Sultan, Oz (Spring 2019). "Tackling Disinformation, Online Terrorism, and Cyber Risks into the 2020s". The Cyber Defense Review. 4 (1). West Point, New York: Army Cyber Institute: 43–60. ISSN 2474-2120. JSTOR 26623066.

- ^ Anne Applebaum; Edward Lucas (6 May 2016), "The danger of Russian disinformation", The Washington Post, retrieved 9 December 2016

- ^ "Russian state-sponsored media and disinformation on Twitter". ZOiS Spotlight. Retrieved 16 September 2020.

- ^ "Russian Disinformation Is Taking Hold in Africa". CIGI. 17 November 2021. Retrieved 3 March 2022.

The Kremlin's effectiveness in seeding its preferred vaccine narratives among African audiences underscores its wider concerted effort to undermine and discredit Western powers by pushing or tapping into anti-Western sentiment across the continent.

- ^ "Leaked documents reveal Russian effort to exert influence in Africa". The Guardian. 11 June 2019. Retrieved 3 March 2022.

The mission to increase Russian influence on the continent is being led by Yevgeny Prigozhin, a businessman based in St Petersburg who is a close ally of the Russian president, Vladimir Putin. One aim is to 'strong-arm' the US and the former colonial powers the UK and France out of the region. Another is to see off 'pro-western' uprisings, the documents say.

- ^ MacFarquhar, Neil (28 August 2016), "A Powerful Russian Weapon: The Spread of False Stories", The New York Times, p. A1, retrieved 9 December 2016,

Moscow adamantly denies using disinformation to influence Western public opinion and tends to label accusations of either overt or covert threats as 'Russophobia.'

- ^ a b Martin J. Manning; Herbert Romerstein (2004), "Disinformation", Historical Dictionary of American Propaganda, Greenwood, pp. 82–83, ISBN 978-0-313-29605-5

- ^ Murray-Smith, Stephen (1989), Right Words, Viking, p. 118, ISBN 978-0-670-82825-8

- ^ a b c Biagi, Shirley (2014), "Disinformation", Media/Impact: An Introduction to Mass Media, Cengage Learning, p. 328, ISBN 978-1-133-31138-6

- ^ "Pope Warns About Fake News-From Experience", The New York Times, Associated Press, 7 December 2016, archived from the original on 7 December 2016, retrieved 7 December 2016

- ^ Alyssa Newcomb (15 November 2016), "Facebook, Google Crack Down on Fake News Advertising", NBC News, archived from the original on 6 April 2019, retrieved 16 November 2016

- ^ Schaede, Sydney (24 October 2016), "Did the Pope Endorse Trump?", FactCheck.org, archived from the original on 19 April 2019, retrieved 7 December 2016

- ^ Pullella, Philip (7 December 2016), "Pope warns media over 'sin' of spreading fake news, smearing politicians", Reuters, archived from the original on 23 November 2020, retrieved 7 December 2016

- ^ "Pope Francis compares fake news consumption to eating faeces", The Guardian, 7 December 2016, archived from the original on 7 March 2021, retrieved 7 December 2016

- ^ Zauzmer, Julie (7 December 2016), "Pope Francis compares media that spread fake news to people who are excited by feces", The Washington Post, archived from the original on 4 February 2021, retrieved 7 December 2016

- ^ Griffin, Andrew (7 December 2016), "Pope Francis: Fake news is like getting sexually aroused by faeces", The Independent, archived from the original on 26 January 2021, retrieved 7 December 2016

- ^ a b c d e f Danks, David; Danks, Joseph H. (2014), "The Moral Responsibility of Automated Responses During Cyberwarfare", in Timothy J. Demy; George R. Lucas Jr.; Bradley J. Strawser (eds.), Military Ethics and Emerging Technologies, Routledge, pp. 223–224, ISBN 978-0-415-73710-4

- ^ Spies, Samuel (14 August 2019). "Defining "Disinformation", V1.0". MediaWell, Social Science Research Council. Archived from the original on 30 October 2020. Retrieved 9 November 2019.

- ^ Tandoc, Edson C. (2019). "The facts of fake news: A research review". Sociology Compass. 13 (9): e12724. doi:10.1111/soc4.12724. ISSN 1751-9020. S2CID 201392983.

- ^ Caramancion, Kevin Matthe (2020). "An Exploration of Disinformation as a Cybersecurity Threat". 2020 3rd International Conference on Information and Computer Technologies (ICICT). pp. 440–444. doi:10.1109/ICICT50521.2020.00076. ISBN 978-1-7281-7283-5. S2CID 218651389.

- ^ "Samantha Bradshaw & Philip N. Howard. (2019) The Global Disinformation Disorder: 2019 Global Inventory of Organised Social Media Manipulation. Working Paper 2019.2. Oxford, UK: Project on Computational Propaganda" (PDF). comprop.oii.ox.ac.uk. Archived (PDF) from the original on 25 May 2022. Retrieved 17 November 2022.

- ^ Miller, Michael L.; Vaccari, Cristian (July 2020). "Digital Threats to Democracy: Comparative Lessons and Possible Remedies". The International Journal of Press/Politics. 25 (3): 333–356. doi:10.1177/1940161220922323. ISSN 1940-1612. S2CID 218962159. Archived from the original on 14 December 2022. Retrieved 14 December 2022.

- ^ Freelon, Deen; Marwick, Alice; Kreiss, Daniel (4 September 2020). "False equivalencies: Online activism from left to right". Science. 369 (6508): 1197–1201. Bibcode:2020Sci...369.1197F. doi:10.1126/science.abb2428. PMID 32883863. S2CID 221471947. Archived from the original on 21 October 2021. Retrieved 2 February 2022.

- ^ Tenove, Chris (July 2020). "Protecting Democracy from Disinformation: Normative Threats and Policy Responses". The International Journal of Press/Politics. 25 (3): 517–537. doi:10.1177/1940161220918740. ISSN 1940-1612. S2CID 219437151. Archived from the original on 14 December 2022. Retrieved 14 December 2022.

- ^ a b c Tucker, Joshua; Guess, Andrew; Barbera, Pablo; Vaccari, Cristian; Siegel, Alexandra; Sanovich, Sergey; Stukal, Denis; Nyhan, Brendan (2018). "Social Media, Political Polarization, and Political Disinformation: A Review of the Scientific Literature". SSRN Working Paper Series. doi:10.2139/ssrn.3144139. ISSN 1556-5068. Archived from the original on 21 February 2021. Retrieved 29 October 2019.

- ^ "This Analysis Shows How Viral Fake Election News Stories Outperformed Real News On Facebook". BuzzFeed News. 16 November 2016. Archived from the original on 17 July 2018. Retrieved 29 October 2019.

- ^ Sunstein, Cass R. (14 March 2017). #Republic: Divided Democracy in the Age of Social Media. Princeton University Press. ISBN 978-0691175515. OCLC 958799819.

- ^ Allcott, Hunt; Gentzkow, Matthew (May 2017). "Social Media and Fake News in the 2016 Election". Journal of Economic Perspectives. 31 (2): 211–236. doi:10.1257/jep.31.2.211. ISSN 0895-3309. S2CID 32730475.

- ^ Eady, Gregory; Paskhalis, Tom; Zilinsky, Jan; Bonneau, Richard; Nagler, Jonathan; Tucker, Joshua A. (9 January 2023). "Exposure to the Russian Internet Research Agency Foreign Influence Campaign on Twitter in the 2016 US Election and its Relationship to Attitudes and Voting Behavior". Nature Communications. 14 (1): 62. Bibcode:2023NatCo..14...62E. doi:10.1038/s41467-022-35576-9. PMC 9829855. PMID 36624094.

- ^ Leyva, Rodolfo (2020). "Testing and unpacking the effects of digital fake news: on presidential candidate evaluations and voter support". AI & Society. 35 (4): 970. doi:10.1007/s00146-020-00980-6. S2CID 218592685.

- ^ Boxell, Levi; Gentzkow, Matthew; Shapiro, Jesse M. (3 October 2017). "Greater Internet use is not associated with faster growth in political polarization among US demographic groups". Proceedings of the National Academy of Sciences. 114 (40): 10612–10617. Bibcode:2017PNAS..11410612B. doi:10.1073/pnas.1706588114. ISSN 0027-8424. PMC 5635884. PMID 28928150.

- ^ Allcott, Hunt; Gentzkow, Matthew (May 2017). "Social Media and Fake News in the 2016 Election". Journal of Economic Perspectives. 31 (2): 211–236. doi:10.1257/jep.31.2.211. ISSN 0895-3309.

- ^ Bakshy, E.; Messing, S.; Adamic, L. A. (5 June 2015). "Exposure to ideologically diverse news and opinion on Facebook". Science. 348 (6239): 1130–1132. Bibcode:2015Sci...348.1130B. doi:10.1126/science.aaa1160. ISSN 0036-8075. PMID 25953820. S2CID 206632821.

- ^ Wojcieszak, Magdalena E.; Mutz, Diana C. (1 March 2009). "Online Groups and Political Discourse: Do Online Discussion Spaces Facilitate Exposure to Political Disagreement?". Journal of Communication. 59 (1): 40–56. doi:10.1111/j.1460-2466.2008.01403.x. ISSN 0021-9916. S2CID 18865773.

- ^ Lanoszka, Alexander (2019). "Disinformation in international politics". European Journal of International Security. 4 (2): 227–248. doi:10.1017/eis.2019.6. ISSN 2057-5637. S2CID 211312944.

- ^ a b Shu, Kai; Sliva, Amy; Wang, Suhang; Tang, Jiliang; Liu, Huan (1 September 2017). "Fake News Detection on Social Media: A Data Mining Perspective". ACM SIGKDD Explorations Newsletter. 19 (1): 22–36. arXiv:1708.01967. doi:10.1145/3137597.3137600. ISSN 1931-0145. S2CID 207718082. Archived from the original on 5 February 2022. Retrieved 1 February 2022.

- ^ Edelson, Laura; McCoy, Damon. "How Facebook Hinders Misinformation Research". Scientific American. Archived from the original on 2 February 2022. Retrieved 1 February 2022.

- ^ Edelson, Laura; McCoy, Damon (14 August 2021). "Facebook shut down our research into its role in spreading disinformation". The Guardian. Archived from the original on 24 March 2022. Retrieved 1 February 2022.

- ^ Krishnan, Nandita; Gu, Jiayan; Tromble, Rebekah; Abroms, Lorien C. (15 December 2021). "Research note: Examining how various social media platforms have responded to COVID-19 misinformation". Harvard Kennedy School Misinformation Review. doi:10.37016/mr-2020-85. S2CID 245256590. Archived from the original on 3 February 2022. Retrieved 1 February 2022.

- ^ "Only Facebook knows the extent of its misinformation problem. And it's not sharing, even with the White House". Washington Post. ISSN 0190-8286. Archived from the original on 5 February 2022. Retrieved 1 February 2022.

- ^ Kuo, Rachel; Marwick, Alice (12 August 2021). "Critical disinformation studies: History, power, and politics". Harvard Kennedy School Misinformation Review. doi:10.37016/mr-2020-76. Archived from the original on 15 October 2023.

- ^ "What Comes After Disinformation Studies?". Center for Information, Technology, & Public Life (CITAP), University of North Carolina at Chapel Hill. Archived from the original on 3 February 2023. Retrieved 16 January 2024.

- ^ Tworek, Heidi (2 August 2022). "Can We Move Beyond Disinformation Studies?". Centre for International Governance Innovation. Archived from the original on 1 June 2023. Retrieved 16 January 2024.

- ^ Wasserman, Herman; Madrid‐Morales, Dani, eds. (12 April 2022). Disinformation in the Global South (1 ed.). Wiley. doi:10.1002/9781119714491. ISBN 978-1-119-71444-6.

- ^ Sessa, Maria Giovanna (4 December 2020). "Misogyny and Misinformation: An analysis of gendered disinformation tactics during the COVID-19 pandemic". EU DisinfoLab. Archived from the original on 19 September 2023. Retrieved 16 January 2024.

- ^ Sessa, Maria Giovanna (26 January 2022). "What is Gendered Disinformation?". Heinrich Böll Foundation. Archived from the original on 21 July 2022. Retrieved 16 January 2024.

- ^ Woolley, Samuel; Howard, Philip N. (2019). Computational Propaganda: Political Parties, Politicians, and Political Manipulation on Social Media. Oxford University Press. ISBN 978-0190931414.

- ^ Diaz Ruiz, Carlos (30 October 2023). "Disinformation on digital media platforms: A market-shaping approach". New Media & Society. doi:10.1177/14614448231207644. ISSN 1461-4448. S2CID 264816011.

- ^ Marchal, Nahema; Neudert, Lisa-Maria (2019). "Polarisation and the use of technology in political campaigns and communication" (PDF). European Parliamentary Research Service.

- ^ Kreiss, Daniel; McGregor, Shannon C (11 April 2023). "A review and provocation: On polarization and platforms". New Media & Society. 26: 556–579. doi:10.1177/14614448231161880. ISSN 1461-4448. S2CID 258125103.

- ^ Diaz Ruiz, Carlos; Nilsson, Tomas (2023). "Disinformation and Echo Chambers: How Disinformation Circulates on Social Media Through Identity-Driven Controversies". Journal of Public Policy & Marketing. 42 (1): 18–35. doi:10.1177/07439156221103852. ISSN 0743-9156. S2CID 248934562.

- ^ Di Domenico, Giandomenico; Ding, Yu (23 October 2023). "Between Brand attacks and broader narratives: how direct and indirect misinformation erode consumer trust". Current Opinion in Psychology. 54: 101716. doi:10.1016/j.copsyc.2023.101716. ISSN 2352-250X. PMID 37952396. S2CID 264474368.

- ^ Castells, Manuel (4 June 2015). Networks of Outrage and Hope: Social Movements in the Internet Age. John Wiley & Sons. ISBN 9780745695792. Retrieved 4 February 2017.

- ^ "Condemnation over Egypt's internet shutdown". Financial Times. Retrieved 4 February 2017.

- ^ "Net neutrality wins in Europe – a victory for the internet as we know it". ZME Science. 31 August 2016. Retrieved 4 February 2017.

Further reading

- Bittman, Ladislav (1985), The KGB and Soviet Disinformation: An Insider's View, Pergamon-Brassey's, ISBN 978-0-08-031572-0

- Boghardt, Thomas (26 January 2010), "Operation INFEKTION – Soviet Bloc Intelligence and Its AIDS Disinformation Campaign" (PDF), Studies in Intelligence, 53 (4), retrieved 9 December 2016

- Golitsyn, Anatoliy (1984), New Lies for Old: The Communist Strategy of Deception and Disinformation, Dodd, Mead & Company, ISBN 978-0-396-08194-4

- O'Connor, Cailin, and James Owen Weatherall, "Why We Trust Lies: The most effective misinformation starts with seeds of truth", Scientific American, vol. 321, no. 3 (September 2019), pp. 54–61.

- Pacepa, Ion Mihai; Rychlak, Ronald J. (2013), Disinformation: Former Spy Chief Reveals Secret Strategies for Undermining Freedom, Attacking Religion, and Promoting Terrorism, WND Books, ISBN 978-1-936488-60-5

- Schoen, Fletcher; Lamb, Christopher J. (1 June 2012), "Deception, Disinformation, and Strategic Communications: How One Interagency Group Made a Major Difference" (PDF), Strategic Perspectives, 11, retrieved 9 December 2016

- Shultz, Richard H.; Godson, Roy (1984), Dezinformatsia: Active Measures in Soviet Strategy, Pergamon-Brassey's, ISBN 978-0080315737

- Taylor, Adam (26 November 2016), "Before 'fake news,' there was Soviet 'disinformation'", The Washington Post, retrieved 3 December 2016

- Legg, Heidi; Kerwin, Joe (1 November 2018), The Fight Against Disinformation in the U.S.: A Landscape Analysis, Harvard Kennedy School, Shorenstein Center, retrieved 10 August 2020

External links

- Disinformation Archived 25 October 2007 at the Wayback Machine – a learning resource from the British Library including an interactive movie and activities.

- MediaWell – an initiative of the nonprofit Social Science Research Council seeking to track and curate disinformation, misinformation, and fake news research.