FFmpeg

| |

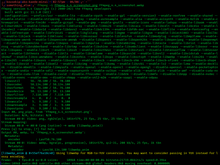

FFmpeg being used to convert a file from the PNG file format to the WebP format | |

| Original author(s) | Fabrice Bellard Bobby Bingham (libavfilter)[1] |

|---|---|

| Developer(s) | FFmpeg team |

| Initial release | December 20, 2000[2] |

| Stable release | 7.0[3] |

| Repository | git |

| Written in | C and Assembly[4] |

| Operating system | Various, including Windows, macOS, and Linux (executable programs are only available from third parties, as the project only distributes source code)[5][6] |

| Platform | x86, ARM, PowerPC, MIPS, RISC-V, DEC Alpha, Blackfin, AVR32, SH-4, and SPARC; may be compiled for other desktop computers |

| Type | Multimedia framework |

| License | LGPL-2.1-or-later, GPL-2.0-or-later Unredistributable if compiled with any software with a license incompatible with the GPL[7] |

| Website | ffmpeg |

FFmpeg is a free and open-source software project consisting of a suite of libraries and programs for handling video, audio, and other multimedia files and streams. At its core is the command-line ffmpeg tool itself, designed for processing of video and audio files. It is widely used for format transcoding, basic editing (trimming and concatenation), video scaling, video post-production effects and standards compliance (SMPTE, ITU).

FFmpeg also includes other tools: ffplay, a simple media player, and ffprobe, a command-line tool to display media information. Among the included libraries are libavcodec, an audio/video codec library used by many commercial and free software products; libavformat (Lavf),[8] an audio/video container mux and demux library; and libavfilter, a library for enhancing and editing media with filters arranged in a GStreamer-like filtergraph.[9]

FFmpeg is part of the workflow of many other software projects. Its libraries are a core part of software media players such as VLC, and they have been included in core processing for YouTube and Bilibili.[10] Encoders and decoders for many audio and video file formats are included, making it highly useful for the transcoding of common and uncommon media files.

FFmpeg is published under the LGPL-2.1-or-later or GPL-2.0-or-later, depending on which options are enabled.[11]

History

The project was started by Fabrice Bellard[11] (using the pseudonym "Gérard Lantau") in 2000, and was led by Michael Niedermayer from 2004 until 2015.[12] Some FFmpeg developers were also part of the MPlayer project.

The name of the project is inspired by the MPEG video standards group, together with "FF" for "fast forward", so FFmpeg stands for "Fast Forward Moving Picture Experts Group".[13] The logo represents a zigzag scan pattern that shows how MPEG video codecs handle entropy encoding.[14]
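To make the zigzag scan that the logo alludes to concrete, the following Python sketch lists the order in which the coefficients of a square block are visited. It is a generic textbook construction rather than code taken from FFmpeg, and the function name `zigzag_order` is chosen here purely for illustration.

```python
def zigzag_order(n: int) -> list[tuple[int, int]]:
    """Return the (row, col) positions of an n x n block in zigzag scan order."""
    cells = [(r, c) for r in range(n) for c in range(n)]

    def key(cell: tuple[int, int]) -> tuple[int, int]:
        r, c = cell
        diagonal = r + c  # cells on the same anti-diagonal share r + c
        # Odd diagonals are walked with the row increasing, even diagonals
        # with the column increasing, which yields the back-and-forth path.
        return (diagonal, r if diagonal % 2 else c)

    return sorted(cells, key=key)


order = zigzag_order(8)
print(order[:6])  # [(0, 0), (0, 1), (1, 0), (2, 0), (1, 1), (0, 2)]
```

Scanning in this order groups the low-frequency coefficients at the start of the sequence, so the long runs of zeros that typically follow can be coded compactly by the entropy coder.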

On March 13, 2011, a group of FFmpeg developers decided to fork the project under the name Libav.[15][16][17] The event was related to an issue in project management, in which developers disagreed with the leadership of FFmpeg.[18][19][20]

On January 10, 2014, two Google employees announced that over 1000 bugs had been fixed in FFmpeg during the previous two years by means of fuzz testing.[21]

In January 2018, the ffserver command-line program – a long-time component of FFmpeg – was removed.[22] The developers had previously deprecated the program citing high maintenance efforts due to its use of internal application programming interfaces.[23]

The project publishes a new release every three months on average. While release versions are available from the website for download, FFmpeg developers recommend that users compile the software from the latest source code in the project's Git repository.[24]

Codec history

Two video coding formats with corresponding codecs and one container format have been created within the FFmpeg project so far. The two video codecs are the lossless FFV1, and the lossless and lossy Snow codec. Development of Snow has stalled, while its bit-stream format has not been finalized yet, making it experimental since 2011. The multimedia container format called NUT is no longer being actively developed, but still maintained.[25]

In summer 2010, FFmpeg developers Fiona Glaser, Ronald Bultje, and David Conrad announced the ffvp8 decoder. Through testing, they determined that ffvp8 was faster than Google's own libvpx decoder.[26][27] Starting with version 0.6, FFmpeg also supported WebM and VP8.[28]

In October 2013, a native VP9[29] decoder and OpenHEVC, an open source High Efficiency Video Coding (HEVC) decoder, were added to FFmpeg.[30] In 2016 the native AAC encoder was considered stable, and support for the two external AAC encoders from VisualOn and FAAC was removed. FFmpeg 3.0 (nicknamed "Einstein") retained build support for the Fraunhofer FDK AAC encoder.[31] Since version 3.4 "Cantor", FFmpeg has supported the FITS image format.[32] Since version 4.1 "al-Khwarizmi", released in November 2018, AV1 can be muxed in MP4 and Matroska, including WebM.[33][34]

Components

Command line tools

- ffmpeg is a command-line tool that converts audio or video formats. It can also capture and encode in real-time from various hardware and software sources[35] such as a TV capture card.

- ffplay is a simple media player utilizing SDL and the FFmpeg libraries.

- ffprobe is a command-line tool that displays media information in text, CSV, XML, or JSON form; see also MediaInfo.

Libraries
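The tools above are driven entirely by command-line arguments. The sketch below builds two such argument lists in Python: a PNG-to-WebP conversion like the one shown in the infobox image, and an ffprobe call that requests JSON output. The file names and helper names are hypothetical; the options used (`-i`, `-v`, `-print_format`, `-show_format`, `-show_streams`) are standard, but whether a given conversion works depends on how the local build was configured.

```python
import shlex


def convert_command(src: str, dst: str) -> list[str]:
    """ffmpeg infers the output format from the destination file extension."""
    return ["ffmpeg", "-i", src, dst]


def probe_command(path: str) -> list[str]:
    """ffprobe invocation that reports container and stream details as JSON."""
    return ["ffprobe", "-v", "error", "-print_format", "json",
            "-show_format", "-show_streams", path]


# Print the commands instead of running them, so the sketch works even
# on a machine where FFmpeg is not installed.
print(shlex.join(convert_command("input.png", "output.webp")))
print(shlex.join(probe_command("output.webp")))
```

Either list can be passed to `subprocess.run` on a system where the tools are on the `PATH`.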

- libswresample is a library containing audio resampling routines.

- libavresample is a library containing audio resampling routines from the Libav project, similar to libswresample from FFmpeg.

- libavcodec is a library containing all of the native FFmpeg audio/video encoders and decoders. Most codecs were developed from scratch to ensure best performance and high code reusability.

- libavformat (Lavf)[8] is a library containing demuxers and muxers for audio/video container formats.

- libavutil is a helper library containing routines common to different parts of FFmpeg. This library includes hash functions, ciphers, LZO decompressor and Base64 encoder/decoder.

- libpostproc is a library containing older H.263 based video postprocessing routines.

- libswscale is a library containing video image scaling and colorspace/pixelformat conversion routines.

- libavfilter is the substitute for vhook. It allows the video/audio to be modified or examined (for debugging) between the decoder and the encoder. Filters have been ported from many projects, including MPlayer and AviSynth.

- libavdevice is a library containing audio/video I/O through internal and external devices.

Supported hardware

CPUs

FFmpeg encompasses software implementations of video and audio compressing and decompressing algorithms. These can be compiled and run on diverse instruction sets.

Many widespread instruction sets are supported by FFmpeg, including x86 (IA-32 and x86-64), PPC (PowerPC), ARM, DEC Alpha, SPARC, and MIPS.[36]

Special purpose hardware

There are a variety of application-specific integrated circuits (ASICs) for audio/video compression and decompression. These ASICs can partially or completely offload the computation from the host CPU. Instead of a complete implementation of an algorithm, only the API is required to use such an ASIC.[37]

| Firm | ASIC | Purpose | Supported by FFmpeg | Details |
|---|---|---|---|---|
| AMD | UVD | decoding | via VDPAU API and VAAPI | |
| AMD | VCE | encoding | via VAAPI, considered experimental[38] | |
| Amlogic | Amlogic Video Engine | decoding | ? | |
| Blackmagic | DeckLink | encoding/decoding | real-time ingest and playout | |
| Broadcom | Crystal HD | decoding | | |
| Qualcomm | Hexagon | encoding/decoding | hwaccel[39] | |
| Intel | Intel Clear Video | decoding | (libmfx, VAAPI) | |
| Intel | Intel Quick Sync Video | encoding/decoding | (libmfx, VAAPI) | |
| Nvidia | PureVideo / NVDEC | decoding | via the VDPAU API as of FFmpeg v1.2 (deprecated); via the CUVID API as of FFmpeg v3.1[40] | |
| Nvidia | NVENC | encoding | as of FFmpeg v2.6 | |

The following APIs are also supported: DirectX Video Acceleration (DXVA2, Windows), Direct3D 11 (D3D11VA, Windows), Media Foundation (Windows), VideoToolbox (macOS), RockChip MPP, OpenCL, OpenMAX, MMAL (Raspberry Pi), MediaCodec (Android OS), V4L2 (Linux). Depending on the environment, these APIs may lead to specific ASICs, to GPGPU routines, or to SIMD CPU code.[41]
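As a rough illustration of how such an API is selected from the command line, the Python sketch below pairs a value for ffmpeg's `-hwaccel` option with a matching H.264 encoder name. The profile table and file names are assumptions made for this example. Real pipelines often need extra device or pixel-format options, and whether a given combination works depends on the build, the drivers, and the hardware.

```python
import shlex

# Hypothetical profile table: (-hwaccel value, H.264 encoder name).
HW_PROFILES = {
    "nvidia": ("cuda", "h264_nvenc"),
    "intel": ("qsv", "h264_qsv"),
    "apple": ("videotoolbox", "h264_videotoolbox"),
}


def hw_transcode_command(profile: str, src: str, dst: str) -> list[str]:
    """Build an ffmpeg call that asks for hardware decoding and encoding."""
    hwaccel, encoder = HW_PROFILES[profile]
    # -hwaccel is an input option, so it has to appear before -i.
    return ["ffmpeg", "-hwaccel", hwaccel, "-i", src, "-c:v", encoder, dst]


print(shlex.join(hw_transcode_command("nvidia", "input.mkv", "output.mp4")))
```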

Supported codecs and formats

Image formats

FFmpeg supports many common and some uncommon image formats.

The PGMYUV image format is a homebrew variant of the binary (P5) PGM Netpbm format. FFmpeg also supports 16-bit depths of the PGM and PPM formats, and the binary (P7) PAM format with or without alpha channel, depth 8 bit or 16 bit for pix_fmts monob, gray, gray16be, rgb24, rgb48be, ya8, rgba, rgb64be.
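For readers unfamiliar with the Netpbm family, the following Python sketch serializes grayscale samples as a standard binary (P5) PGM file, including the 16-bit case mentioned above. It shows plain PGM only, not the PGMYUV variant, and the helper name `pgm_bytes` is invented for this example.

```python
import struct


def pgm_bytes(width: int, height: int, pixels: list[int], maxval: int = 255) -> bytes:
    """Serialize grayscale samples as a binary (P5) PGM image."""
    if len(pixels) != width * height:
        raise ValueError("pixel count does not match the image dimensions")
    header = f"P5\n{width} {height}\n{maxval}\n".encode("ascii")
    # Netpbm stores one byte per sample while maxval is below 256; larger
    # maxvals use two bytes per sample, most significant byte first.
    fmt = "B" if maxval < 256 else ">H"
    body = b"".join(struct.pack(fmt, p) for p in pixels)
    return header + body


data = pgm_bytes(2, 1, [0, 65535], maxval=65535)
print(data)  # b'P5\n2 1\n65535\n\x00\x00\xff\xff'
```

The big-endian 16-bit layout is what the `gray16be` pixel format name refers to.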

Supported formats

In addition to FFV1 and Snow formats, which were created and developed from within FFmpeg, the project also supports the following formats:

| Group | Format type | Format name |
|---|---|---|
| ISO/IEC/ITU-T | Video | MPEG-1 Part 2, H.261 (Px64),[42] H.262/MPEG-2 Part 2, H.263,[42] MPEG-4 Part 2, H.264/MPEG-4 AVC, HEVC/H.265[30] (MPEG-H Part 2), MPEG-4 VCB (a.k.a. VP8), Motion JPEG, IEC DV video and CD+G |
| ISO/IEC/ITU-T | Audio | MP1, MP2, MP3, AAC, HE-AAC, MPEG-4 ALS, G.711 μ-law, G.711 A-law, G.721 (a.k.a. G.726 32k), G.722, G.722.2 (a.k.a. AMR-WB), G.723 (a.k.a. G.726 24k and 40k), G.723.1, G.726, G.729, G.729D, IEC DV audio and Direct Stream Transfer |
| ISO/IEC/ITU-T | Subtitle | MPEG-4 Timed Text (a.k.a. 3GPP Timed Text) |
| ISO/IEC/ITU-T | Image | JPEG, Lossless JPEG, JPEG-LS, JPEG 2000, JPEG XL,[43] PNG, CCITT G3 and CCITT G4 |
| Alliance for Open Media | Video | AV1[44] |
| Alliance for Open Media | Image | AVIF[45] |
| EIA | Subtitle | EIA-608 |
| CEA | Subtitle | CEA-708 |
| SMPTE | Video | SMPTE 314M (a.k.a. DVCAM and DVCPRO), SMPTE 370M (a.k.a. DVCPRO HD), VC-1 (a.k.a. WMV3), VC-2 (a.k.a. Dirac Pro), VC-3 (a.k.a. AVID DNxHD) |
| SMPTE | Audio | SMPTE 302M |
| SMPTE | Image | DPX |
| ATSC/ETSI/DVB | Audio | Full Rate (GSM 06.10), AC-3 (Dolby Digital), Enhanced AC-3 (Dolby Digital Plus) and DTS Coherent Acoustics (a.k.a. DTS or DCA) |
| ATSC/ETSI/DVB | Subtitle | DVB Subtitling (ETSI 300 743) |
| DVD Forum/Dolby | Audio | MLP / Dolby TrueHD |
| DVD Forum/Dolby | Subtitle | DVD-Video subtitles |
| Xperi/DTS, Inc/QDesign | Audio | DTS Coherent Acoustics (a.k.a. DTS or DCA), DTS Extended Surround (a.k.a. DTS-ES), DTS 96/24, DTS-HD High Resolution Audio, DTS Express (a.k.a. DTS-HD LBR), DTS-HD Master Audio, QDesign Music Codec 1 and 2 |
| Blu-ray Disc Association | Subtitle | PGS (Presentation Graphics Stream) |
| 3GPP | Audio | AMR-NB, AMR-WB (a.k.a. G.722.2) |
| 3GPP2 | Audio | QCELP-8 (a.k.a. SmartRate or IS-96C), QCELP-13 (a.k.a. PureVoice or IS-733) and Enhanced Variable Rate Codec (EVRC, a.k.a. IS-127) |
| World Wide Web Consortium | Video | Animated GIF[46] |
| World Wide Web Consortium | Subtitle | WebVTT |
| World Wide Web Consortium | Image | GIF, and SVG (via librsvg) |
| IETF | Video | FFV1 |
| IETF | Audio | iLBC (via libilbc), Opus and Comfort noise |
| International Voice Association | Audio | DSS-SP |
| SAC | Video | AVS video, AVS2 video[47] (via libdavs2), and AVS3 video (via libuavs3d) |
| Microsoft | Video | Microsoft RLE, Microsoft Video 1, Cinepak, Microsoft MPEG-4 v1, v2 and v3, Windows Media Video (WMV1, WMV2, WMV3/VC-1), WMV Screen and Mimic codec |
| Microsoft | Audio | Windows Media Audio (WMA1, WMA2, WMA Pro and WMA Lossless), XMA (XMA1 and XMA2),[48] MSN Siren, MS-GSM and MS-ADPCM |
| Microsoft | Subtitle | SAMI |
| Microsoft | Image | Windows Bitmap, WMV Image (WMV9 Image and WMV9 Image v2), DirectDraw Surface, and MSP[49] |
| Interactive Multimedia Association | Audio | IMA ADPCM |
| Intel / Digital Video Interactive | Video | RTV 2.1 (Indeo 2), Indeo 3, 4 and 5,[42] and Intel H.263 |
| Intel / Digital Video Interactive | Audio | DVI4 (a.k.a. IMA DVI ADPCM), Intel Music Coder, and Indeo Audio Coder |
| RealNetworks | Video | RealVideo Fractal Codec (a.k.a. Iterated Systems ClearVideo), 1, 2, 3 and 4 |
| RealNetworks | Audio | RealAudio v1 – v10, and RealAudio Lossless[50] |
| RealNetworks | Subtitle | RealText |
| Apple / Spruce Technologies | Video | Cinepak (Apple Compact Video), ProRes, Sorenson 3 Codec, QuickTime Animation (Apple Animation), QuickTime Graphics (Apple Graphics), Apple Video, Apple Intermediate Codec and Pixlet[51] |
| Apple / Spruce Technologies | Audio | ALAC |
| Apple / Spruce Technologies | Image | QuickDraw PICT |
| Apple / Spruce Technologies | Subtitle | Spruce subtitle (STL) |
| Adobe Flash Player (SWF) | Video | Screen video, Screen video 2, Sorenson Spark and VP6 |
| Adobe Flash Player (SWF) | Audio | Adobe SWF ADPCM and Nellymoser Asao |
| Adobe / Aldus | Image | TIFF, PSD,[51] and DNG |
| Xiph.Org | Video | Theora |
| Xiph.Org | Audio | Speex,[52] Vorbis, Opus and FLAC |
| Xiph.Org | Subtitle | Ogg Writ |
| Sony | Audio | Adaptive Transform Acoustic Coding (ATRAC1, ATRAC3, ATRAC3Plus,[53] and ATRAC9[47])[42] and PSX ADPCM |
| NTT | Audio | TwinVQ |
| Google / On2 / GIPS | Video | Duck TrueMotion 1, Duck TrueMotion 2, Duck TrueMotion 2.0 Real Time, VP3, VP4,[54] VP5,[42] VP6,[42] VP7, VP8,[55] VP9[29] and animated WebP |
| Google / On2 / GIPS | Audio | DK ADPCM Audio 3/4, On2 AVC and iLBC (via libilbc) |
| Google / On2 / GIPS | Image | WebP[56] |
| Epic Games / RAD Game Tools | Video | Smacker video and Bink video |
| Epic Games / RAD Game Tools | Audio | Bink audio |
| CRI Middleware | Audio | ADX ADPCM, and HCA |
| Nintendo / NERD | Video | Mobiclip video |
| Nintendo / NERD | Audio | GCADPCM (a.k.a. ADPCM THP), FastAudio, and ADPCM IMA MOFLEX |
| Synaptics / DSP Group | Audio | Truespeech |
| Electronic Arts / Criterion Games / Black Box Games / Westwood Studios | Video | RenderWare TXD,[57] Madcow, CMV, TGV, TGQ, TQI, Midivid VQ (MVDV), MidiVid 3.0 (MV30), Midivid Archival (MVHA), and Vector Quantized Animation (VQA) |
| Electronic Arts / Criterion Games / Black Box Games / Westwood Studios | Audio | Electronic Arts ADPCM variants |
| Netpbm | Image | PBM, PGM, PPM, PNM, PAM, PFM and PHM |
| MIT/X Consortium/The Open Group | Image | XBM,[50] XPM and xwd |
| HPE / SGI / Silicon Graphics | Video | Silicon Graphics RLE 8-bit video,[46] Silicon Graphics MVC1/2[46] |
| HPE / SGI / Silicon Graphics | Image | Silicon Graphics Image |
| Oracle/Sun Microsystems | Image | Sun Raster |
| IBM | Video | IBM UltiMotion |
| Avid Technology / Truevision | Video | Avid 1:1x, Avid Meridien,[50] Avid DNxHD, Avid DNx444,[53] and DNxHR |
| Avid Technology / Truevision | Image | Targa[46] |
| Autodesk / Alias | Video | Autodesk Animator Studio Codec and FLIC |
| Autodesk / Alias | Image | Alias PIX |
| Activision Blizzard / Activision / Infocom | Audio | ADPCM Zork |
| Konami / Hudson Soft | Video | HVQM4 Video |
| Konami / Hudson Soft | Audio | Konami MTAF, and ADPCM IMA HVQM4 |
| Grass Valley / Canopus | Video | HQ, HQA, HQX and Lossless |
| Vizrt / NewTek | Video | SpeedHQ |
| Vizrt / NewTek | Image | Vizrt Binary Image[45] |
| Academy Software Foundation / ILM | Image | OpenEXR[50] |
| Mozilla Corporation | Video | APNG[56] |
| Matrox | Video | Matrox Uncompressed SD (M101) / HD (M102) |
| AMD/ATI | Video | ATI VCR1/VCR2 |
| Asus | Video | ASUS V1/V2 codec |
| Commodore | Video | CDXL codec |
| Kodak | Image | Photo CD |
| Blackmagic Design / Cintel | Image | Cintel RAW |
| Houghton Mifflin Harcourt / The Learning Company / ZSoft Corporation | Image | PCX |
| Australian National University | Image | X-Face[46] |
| Bluetooth Special Interest Group | Audio | SBC, and mSBC |
| Qualcomm / CSR | Audio | QCELP, aptX, and aptX HD |
| Open Mobile Alliance / WAP Forum | Image | Wireless Bitmap |

Muxers

Output formats (container formats and other ways of creating output streams) in FFmpeg are called "muxers". FFmpeg supports, among others, the following:

- AIFF

- ASF

- AVI and also input from AviSynth

- BFI[58]

- CAF

- FLV

- GIF

- GXF, General eXchange Format, SMPTE 360M

- HLS, HTTP Live Streaming

- IFF[59]

- ISO base media file format (including QuickTime, 3GP and MP4)

- Matroska (including WebM)

- Maxis XA[60]

- MPEG-DASH[61]

- MPEG program stream

- MPEG transport stream (including AVCHD)

- MXF, Material eXchange Format, SMPTE 377M

- MSN Webcam stream[62]

- NUT[25]

- Ogg

- OMA[63]

- RL2[64]

- Segment, for creating segmented video streams

- Smooth Streaming

- TXD[57]

- WTV
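On the command line, a muxer is normally picked from the output file extension, and it can be forced with the `-f` option. The Python sketch below builds a remuxing command that copies the streams unchanged into a new container. The helper name and file names are hypothetical, and `mpegts` is used as an example muxer name.

```python
import shlex
from typing import Optional


def remux_command(src: str, dst: str, muxer: Optional[str] = None) -> list[str]:
    """Copy all selected streams into a new container without re-encoding."""
    cmd = ["ffmpeg", "-i", src, "-c", "copy"]
    if muxer is not None:
        # -f is an output option here, so it is placed before the output file.
        cmd += ["-f", muxer]
    cmd.append(dst)
    return cmd


print(shlex.join(remux_command("input.mp4", "output.mkv")))
print(shlex.join(remux_command("input.mp4", "output.bin", muxer="mpegts")))
```

Stream copying only succeeds when the target container can hold the source codecs; otherwise the streams have to be re-encoded.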

Pixel formats

| Type | Color | Packed, without alpha | Packed, with alpha | Planar, without alpha | Planar, with alpha | Planar, chroma-interleaved | Palette, with alpha |
|---|---|---|---|---|---|---|---|
| Monochrome | Binary (1-bit monochrome) | monoblack, monowhite | — | — | — | — | — |
| Monochrome | Grayscale | 8/9/10/12/14/16bpp | — | — | 16/32bpp | — | — |
| RGB | RGB 1:2:1 (4-bit color) | 4bpp | — | — | — | — | — |
| RGB | RGB 3:3:2 (8-bit color) | 8bpp | — | — | — | — | — |
| RGB | RGB 5:5:5 (High color) | 16bpp | — | — | — | — | — |
| RGB | RGB 5:6:5 (High color) | 16bpp | — | — | — | — | — |
| RGB | RGB/BGR | 24/30[p 1]/48bpp | 32[p 2]/64bpp | — | — | — | 8bit->32bpp |
| RGB | GBR[p 3] | — | — | 8/9/10/12/14/16bpc | 8/10/12/16bpc | — | — |
| RGB Float | RGB | 32bpc | 16/32bpc | — | — | — | — |
| RGB Float | GBR | — | — | 32bpc | 32bpc | — | — |
| YUV | YVU 4:1:0 | — | — | (9bpp (YVU9))[p 4] | — | — | — |
| YUV | YUV 4:1:0 | — | — | 9bpp | — | — | — |
| YUV | YUV 4:1:1 | 8bpc (UYYVYY) | — | 8bpc | — | (8bpc (NV11)) | — |
| YUV | YVU 4:2:0 | — | — | (8bpc (YV12))[p 4] | — | 8 (NV21) | — |
| YUV | YUV 4:2:0 | — | — | 8[p 5]/9/10/12/14/16bpc | 8/9/10/16bpc | 8 (NV12)/10 (P010)/12 (P012)/16bpc (P016) | — |
| YUV | YVU 4:2:2 | — | — | (8bpc (YV16))[p 4] | — | (8bpc (NV61)) | — |
| YUV | YUV 4:2:2 | 8 (YUYV[p 6] and UYVY)/10 (Y210)/12bpc (Y212)[p 7] | — | 8[p 8]/9/10/12/14/16bpc | 8/9/10/12/16bpc | 8 (NV16)/10 (NV20 and P210)/16bpc (P216) | — |
| YUV | YUV 4:4:0 | — | — | 8/10/12bpc | — | — | — |
| YUV | YVU 4:4:4 | — | — | (8bpc (YV24))[p 4] | — | 8bpc (NV42) | — |
| YUV | YUV 4:4:4 | 8 (VUYX)/10[p 9]/12bpc[p 10] | 8[p 11] / 16bpc (AYUV64)[p 12] | 8[p 13]/9/10/12/14/16bpc | 8/9/10/12/16bpc | 8 (NV24)/10 (P410)/ 16bpc (P416) | — |
| XYZ | XYZ 4:4:4[p 14] | 12bpc | — | — | — | — | — |
| Bayer | BGGR/RGGB/GBRG/GRBG | 8/16bpp | — | — | — | — | — |

- [p 1] 10-bit color components with 2-bit padding (X2RGB10)

- [p 2] RGBx (rgb0) and xBGR (0bgr) are also supported

- [p 3] Used in YUV-centric codecs such as H.264

- [p 4] YVU9, YV12, YV16, and YV24 are supported as rawvideo codec in FFmpeg.

- [p 5] I420 a.k.a. YUV420P

- [p 6] a.k.a. YUY2 in Windows

- [p 7] UYVY 10bpc without padding is supported as bitpacked codec in FFmpeg. UYVY 10bpc with 2-bit padding is supported as v210 codec in FFmpeg. 16bpc (Y216) is supported as targa_y216 codec in FFmpeg.

- [p 8] I422 a.k.a. YUV422P

- [p 9] XV30 a.k.a. XVYU2101010

- [p 10] XV36

- [p 11] VUYA a.k.a. AYUV

- [p 12] 10bpc (Y410), 12bpc (Y412), and Y416 (16bpc) are not supported.

- [p 13] I444 a.k.a. YUV444P

- [p 14] Used in JPEG2000

FFmpeg does not support IMC1-IMC4, AI44, CMYK, RGBE, Log RGB and other formats. It also does not yet support ARGB 1:5:5:5, 2:10:10:10, or other BMP bitfield formats that are not commonly used.

Supported protocols

Open standards

De facto standards

Supported filters
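To relate the chroma subsampling notation in the table to memory use, the Python sketch below estimates the size of one raw frame for three planar YUV layouts. The helper and its table are written for this example only, and the result ignores any row alignment or padding that a real buffer allocator may add.

```python
# (horizontal, vertical) chroma subsampling factors per planar layout
SUBSAMPLING = {"yuv420p": (2, 2), "yuv422p": (2, 1), "yuv444p": (1, 1)}


def planar_yuv_frame_bytes(pix_fmt: str, width: int, height: int,
                           bytes_per_sample: int = 1) -> int:
    """Estimate the unpadded size in bytes of one planar YUV frame."""
    sx, sy = SUBSAMPLING[pix_fmt]
    chroma_w = -(-width // sx)   # ceiling division handles odd dimensions
    chroma_h = -(-height // sy)
    luma = width * height
    chroma = 2 * chroma_w * chroma_h  # one U plane plus one V plane
    return (luma + chroma) * bytes_per_sample


print(planar_yuv_frame_bytes("yuv420p", 1920, 1080))  # 3110400
```

For 8-bit 4:2:0 this works out to 12 bits per pixel on average. Higher bit depths are commonly stored in two bytes per sample, which doubles the figure.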

FFmpeg supports, among others, the following filters.[68]

Audio

- Resampling (aresample)

- Pass/Stop filters

- Low-pass filter (lowpass)

- High-pass filter (highpass)

- All-pass filter (allpass)

- Butterworth Band-pass filter (bandpass)

- Butterworth Band-stop filter (bandreject)

- Arbitrary Finite Impulse Response Filter (afir)

- Arbitrary Infinite Impulse Response Filter (aiir)

- Equalizer

- Peak Equalizer (equalizer)

- Butterworth/Chebyshev Type I/Type II Multiband Equalizer (anequalizer)

- Low Shelving filter (bass)

- High Shelving filter (treble)

- Xbox 360 equalizer

- FIR equalizer (firequalizer)

- Biquad filter (biquad)

- Remove/Add DC offset (dcshift)

- Expression evaluation

- Time domain expression evaluation (aeval)

- Frequency domain expression evaluation (afftfilt)

- Dynamics

- Limiter (alimiter)

- Compressor (acompressor)

- Dynamic range expander (crystalizer)

- Side-chain Compressor (sidechaincompress)

- Compander (compand)

- Noise gate (agate)

- Side-chain Noise gate (sidechaingate)

- Distortion

- Bitcrusher (acrusher)

- Emphasis (aemphasis)

- Amplify/Normalizer

- Volume (volume)

- Dynamic Audio Normalizer (dynaudnorm)

- EBU R 128 loudness normalizer (loudnorm)

- Modulation

- Sinusoidal Amplitude Modulation (tremolo)

- Sinusoidal Phase Modulation (vibrato)

- Phaser (aphaser)

- Chorus (chorus)

- Flanger (flanger)

- Pulsator (apulsator)

- Echo/Reverb

- Echo (aecho)

- Routing/Panning

- Stereo widening (stereowiden)

- Increase channel differences (extrastereo)

- M/S to L/R (stereotools)

- Channel mapping (channelmap)

- Channel splitting (channelsplit)

- Channel panning (pan)

- Channel merging (amerge)

- Channel joining (join)

- for Headphones

- Stereo to Binaural (earwax, ported from SoX)[69]

- Bauer Stereo to Binaural (bs2b, via libbs2b)

- Crossfeed (crossfeed)

- Multi-channel to Binaural (sofalizer, requires libnetcdf)

- Delay

- Delay (adelay)

- Delay by distance (compensationdelay)

- Fade

- Fader (afade)

- Crossfader (acrossfade)

- Audio time stretching and pitch scaling

- Time stretching (atempo)

- Time-stretching and Pitch-shifting (rubberband, via librubberband)

- Editing

- Trim (atrim)

- Silence-padding (apad)

- Silence remover (silenceremove)

- Show frame/channel information

- Show frame information (ashowinfo)

- Show channel information (astats)

- Show silence ranges (silencedetect)

- Show audio volumes (volumedetect)

- ReplayGain scanner (replaygain)

- Modify frame/channel information

- Set output format (aformat)

- Set number of samples (asetnsamples)

- Set sampling rate (asetrate)

- Mixer (amix)

- Synchronization (asyncts)

- HDCD data decoder (hdcd)

- Plugins

- Do nothing (anull)
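Filters from the list above are combined into a chain by separating them with commas and passing the result to ffmpeg's `-af` option. The following Python sketch assembles one such chain. The parameter values and file names are arbitrary choices for illustration, not recommended settings.

```python
import shlex


def filter_chain(*filters: str) -> str:
    """Join filter descriptions into a linear, comma-separated chain."""
    return ",".join(filters)


def audio_filter_command(src: str, dst: str, chain: str) -> list[str]:
    """Apply an audio filter chain while converting src to dst."""
    return ["ffmpeg", "-i", src, "-af", chain, dst]


# Band-limit the signal, speed it up by 25 %, then normalize loudness.
chain = filter_chain("highpass=f=200", "lowpass=f=3000", "atempo=1.25", "loudnorm")
print(shlex.join(audio_filter_command("speech.wav", "speech_clean.wav", chain)))
```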

Video

- Transformations

- Temporal editing

- Framerate (fps, framerate)

- Looping (loop)

- Trimming (trim)

- Deinterlacing (bwdif, idet, kerndeint, nnedi, yadif, w3fdif)

- Inverse Telecine

- Filtering

- Blurring (boxblur, gblur, avgblur, sab, smartblur)

- Convolution filters

- Convolution (convolution)

- Edge detection (edgedetect)

- Sobel Filter (sobel)

- Prewitt Filter (prewitt)

- Unsharp masking (unsharp)

- Denoising (atadenoise, bitplanenoise, dctdnoiz, owdenoise, removegrain)

- Logo removal (delogo, removelogo)

- Subtitles (ASS, subtitles)

- Alpha channel editing (alphaextract, alphamerge)

- Keying (chromakey, colorkey, lumakey)

- Frame detection

- Black frame detection (blackdetect, blackframe)

- Thumbnail selection (thumbnail)

- Frame Blending (blend, tblend, overlay)

- Video stabilization (vidstabdetect, vidstabtransform)

- Color and Level adjustments

- Balance and levels (colorbalance, colorlevels)

- Channel mixing (colorchannelmixer)

- Color space (colorspace)

- Parametric adjustments (curves, eq)

- Histograms and visualization

- CIE Scope (ciescope)

- Vectorscope (vectorscope)

- Waveform monitor (waveform)

- Color histogram (histogram)

- Drawing

- OCR

- Quality measures

- Lookup Tables

- lut, lutrgb, lutyuv, lut2, lut3d, haldclut
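Video filters use the same textual syntax as audio filters: a filter name, optionally followed by `=` and a colon-separated list of `key=value` options. The Python sketch below shows one way to generate such descriptions. The helper name and the chosen option values are assumptions for this example, and it does not escape special characters, which real filter strings may require.

```python
def describe_filter(name: str, **options: object) -> str:
    """Render a filter as name=key=value:key=value (no escaping applied)."""
    if not options:
        return name
    args = ":".join(f"{key}={value}" for key, value in options.items())
    return f"{name}={args}"


chain = ",".join([
    describe_filter("yadif"),                               # deinterlace
    describe_filter("fps", fps=30),                         # fix the frame rate
    describe_filter("eq", brightness=0.05, contrast=1.1),   # parametric adjustment
    describe_filter("unsharp"),                             # sharpen with defaults
])
command = ["ffmpeg", "-i", "input.mkv", "-vf", chain, "output.mkv"]
print(chain)  # yadif,fps=fps=30,eq=brightness=0.05:contrast=1.1,unsharp
```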

Supported test patterns

- SMPTE color bars (smptebars and smptehdbars)

- EBU color bars (pal75bars and pal100bars)

Supported LUT formats

- cineSpace LUT format

- Iridas Cube

- Adobe After Effects 3dl

- DaVinci Resolve dat

- Pandora m3d
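A 3D LUT in one of these formats is applied with the `lut3d` filter. The Python sketch below checks a file extension against the formats listed above and builds the corresponding filter string. The extension table is an assumption based on the usual file suffixes for these formats, and the helper does not escape special characters in the path.

```python
from pathlib import Path

# Assumed mapping from file suffix to the LUT formats listed above.
LUT_EXTENSIONS = {
    ".csp": "cineSpace",
    ".cube": "Iridas Cube",
    ".3dl": "Adobe After Effects",
    ".dat": "DaVinci Resolve",
    ".m3d": "Pandora",
}


def lut3d_filter(lut_path: str) -> str:
    """Return a lut3d filter description for a LUT file with a known suffix."""
    suffix = Path(lut_path).suffix.lower()
    if suffix not in LUT_EXTENSIONS:
        raise ValueError(f"unrecognized LUT extension: {suffix!r}")
    return f"lut3d=file={lut_path}"


print(lut3d_filter("grade.cube"))  # lut3d=file=grade.cube
```

The resulting string can be passed to ffmpeg with `-vf`, on its own or as part of a longer chain.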

Supported media and interfaces

FFmpeg supports the following devices via external libraries.[70]

Media

- Compact disc (via libcdio; input only)

Physical interfaces

- IEEE 1394 (a.k.a. FireWire; via libdc1394 and libraw1394; input only)

- IEC 61883 (via libiec61883; input only)

- DeckLink

- Brooktree video capture chip (via bktr driver; input only)

Audio IO

- Advanced Linux Sound Architecture (ALSA)

- Open Sound System (OSS)

- PulseAudio

- JACK Audio Connection Kit (JACK; input only)

- OpenAL (input only)

- sndio

- Core Audio (for macOS)

- AVFoundation (input only)

- AudioToolbox (output only)

Video IO

- Video4Linux2

- Video for Windows (input only)

- Windows DirectShow

- Android Camera (input only)

Screen capture and output

- Simple DirectMedia Layer 2 (output only)

- OpenGL (output only)

- Linux framebuffer (fbdev)

- Graphics Device Interface (GDI; input only)

- X Window System (X11; via XCB; input only)

- X video extension (XV; via Xlib; output only)

- Kernel Mode Setting (via libdrm; input only)

Others

- ASCII art (via libcaca; output only)
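Devices are addressed on the command line by naming an input (or output) device format with `-f` and passing a device-specific identifier in place of a file name. The Python sketch below collects a few common capture inputs. The device identifiers (`:0.0`, `/dev/video0`, `default`, `desktop`) are typical examples rather than universal values, and each device is only available when the build includes it.

```python
import shlex

# Hypothetical lookup table: (device format, example device identifier).
CAPTURE_INPUTS = {
    "x11": ("x11grab", ":0.0"),        # X Window System screen capture
    "v4l2": ("v4l2", "/dev/video0"),   # Video4Linux2 camera
    "alsa": ("alsa", "default"),       # ALSA audio capture
    "gdi": ("gdigrab", "desktop"),     # Windows GDI screen capture
}


def capture_command(kind: str, dst: str, seconds: int = 10) -> list[str]:
    """Record from a capture device for a fixed number of seconds."""
    fmt, device = CAPTURE_INPUTS[kind]
    return ["ffmpeg", "-f", fmt, "-i", device, "-t", str(seconds), dst]


print(shlex.join(capture_command("x11", "screen.mkv")))
```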

Applications

Legal aspects

FFmpeg contains more than 100 codecs,[71] most of which use compression techniques of one kind or another. Many such compression techniques may be subject to legal claims relating to software patents.[72] Such claims may be enforceable in countries like the United States which have implemented software patents, but are considered unenforceable or void in member countries of the European Union, for example.[73] Patents for many older codecs, including AC3 and all MPEG-1 and MPEG-2 codecs, have expired.

FFmpeg is licensed under the LGPL, but if a particular build of FFmpeg is linked against any GPL libraries (notably x264), the entire binary is licensed under the GPL.

Projects using FFmpeg
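The licensing rule can be expressed as a small decision function over the build's configure options. The Python sketch below is a simplified model written for this article. It uses the `--enable-gpl`, `--enable-version3`, and `--enable-nonfree` configure switches, of which the version 3 case is not discussed above, and it is no substitute for the project's own license documentation.

```python
def build_license(configure_flags: list[str]) -> str:
    """Simplified model of how configure switches affect the build's license."""
    flags = set(configure_flags)
    if "--enable-nonfree" in flags:
        # Non-free components make the resulting binary unredistributable.
        return "nonfree and unredistributable"
    gpl = "--enable-gpl" in flags
    version3 = "--enable-version3" in flags
    if gpl:
        return "GPL version 3 or later" if version3 else "GPL version 2 or later"
    return "LGPL version 3 or later" if version3 else "LGPL version 2.1 or later"


print(build_license([]))                                  # LGPL version 2.1 or later
print(build_license(["--enable-gpl", "--enable-libx264"]))  # GPL version 2 or later
```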

FFmpeg is used by software such as Blender, Cinelerra-GG Infinity, HandBrake, Kodi, MPC-HC, Plex, Shotcut, VirtualDub2 (a VirtualDub fork),[74] VLC media player, xine and YouTube.[75][76] It handles video and audio playback in Google Chrome[76] and the Linux version of Firefox.[77] GUI front-ends for FFmpeg have been developed, including Multimedia Xpert[78] and XMedia Recode.

FFmpeg is used by ffdshow, FFmpegInterop, the GStreamer FFmpeg plug-in, LAV Filters and OpenMAX IL to expand the encoding and decoding capabilities of their respective multimedia platforms.

As part of NASA's Mars 2020 mission, FFmpeg is used by the Perseverance rover on Mars for image and video compression before footage is sent to Earth.[79]

See also

- MEncoder, a similar project

- List of open-source codecs

- List of video editing software

References[edit]

- ^ "Bobby announces work on libavfilter as GsOC project". 2008-02-09. Archived from the original on 2021-10-07. Retrieved 2021-10-07.

- ^ "Initial revision - git.videolan.org/ffmpeg.git/commit". git.videolan.org. 2000-12-20. Archived from the original on 2013-12-25. Retrieved 2013-05-11.

- ^ "Releases FFmpeg".

- ^ "Developer Documentation". ffmpeg.org. 2011-12-08. Archived from the original on 2012-02-04. Retrieved 2012-01-04.

- ^ "Platform Specific Information". FFmpeg.org. Archived from the original on 25 February 2020. Retrieved 25 February 2020.

- ^ "Download". ffmpeg.org. FFmpeg. Archived from the original on 2011-10-06. Retrieved 2012-01-04.

- ^ FFmpeg can be compiled with various external libraries, some of which have licenses that are incompatible with the FFmpeg's primary license, the GNU GPL.

- ^ a b "FFmpeg: Lavf: I/O and Muxing/Demuxing Library". ffmpeg.org. Archived from the original on 3 December 2016. Retrieved 21 October 2016.

- ^ "Libavfilter Documentation". ffmpeg.org. Archived from the original on 2021-10-07. Retrieved 2021-10-07.

- ^ ijkplayer, bilibili, 2021-10-05, archived from the original on 2021-10-05, retrieved 2021-10-05

- ^ a b "FFmpeg License and Legal Considerations". ffmpeg.org. Archived from the original on 2012-01-03. Retrieved 2012-01-04.

- ^ Niedermayer, Michael (31 July 2015). "[FFmpeg-devel] FFmpegs future and resigning as leader". Archived from the original on 2015-08-15. Retrieved 2015-09-22.

- ^ Bellard, Fabrice (18 February 2006). "FFmpeg naming and logo". FFmpeg developer mailing list. FFmpeg website. Archived from the original on 26 April 2012. Retrieved 24 December 2011.

- ^ Carlsen, Steve (1992-06-03). "TIFF 6.0 specification" (PS). Aldus Corporation. p. 98. Retrieved 2016-08-14.

Zig-Zag Scan

[dead link] Alt URL Archived 2012-07-03 at the Wayback Machine - ^ Libav project site, archived from the original on 2012-01-03, retrieved 2012-01-04

- ^ Ronald S. Bultje (2011-03-14), Project renamed to Libav, archived from the original on 2016-11-07, retrieved 2012-01-04

- ^ A group of FFmpeg developers just forked as Libav, Phoronix, 2011-03-14, archived from the original on 2011-09-15, retrieved 2012-01-04

- ^ What happened to FFmpeg, 2011-03-30, archived from the original on 2018-09-02, retrieved 2012-05-19

- ^ FFMpeg turmoil, 2011-01-19, archived from the original on 2012-01-12, retrieved 2012-01-04

- ^ "The FFmpeg/Libav situation". blog.pkh.me. Archived from the original on 2012-07-01. Retrieved 2015-09-22.

- ^ "FFmpeg and a thousand fixes". googleblog.com. January 10, 2014. Archived from the original on 22 October 2016. Retrieved 21 October 2016.

- ^ "ffserver – FFmpeg". trac.ffmpeg.org. Archived from the original on 2018-02-04. Retrieved 2018-02-03.

- ^ "ffserver program being dropped". ffmpeg.org. 2016-07-10. Archived from the original on 2016-07-16. Retrieved 2018-02-03.

- ^ "ffmpeg.org/download.html#releases". ffmpeg.org. Archived from the original on 2011-10-06. Retrieved 2015-04-27.

- ^ a b "NUT". Multimedia Wiki. 2012. Archived from the original on 2014-01-03. Retrieved 2014-01-03.

- ^ Glaser, Fiona (2010-07-23), Diary Of An x264 Developer: Announcing the world's fastest VP8 decoder, archived from the original on 2010-09-30, retrieved 2012-01-04

- ^ FFmpeg Announces High-Performance VP8 Decoder, Slashdot, 2010-07-24, archived from the original on 2011-12-21, retrieved 2012-01-04

- ^ "FFmpeg Goes WebM, Enabling VP8 for Boxee & Co". newteevee.com. 2010-06-17. Archived from the original on 2010-06-20. Retrieved 2012-01-04.

...with VLC, Boxee, MythTV, Handbrake and MPlayer being some of the more popular projects utilizing FFmpeg...

- ^ a b "Native VP9 decoder is now in the Git master branch". Launchpad. 2013-10-03. Archived from the original on 2013-10-22. Retrieved 2013-10-21.

- ^ a b "FFmpeg Now Features Native HEVC/H.265 Decoder Support". Softpedia. 2013-10-16. Archived from the original on 2014-06-15. Retrieved 2013-10-16.

- ^ FFmpeg (2016-02-15). "February 15th, 2016, FFmpeg 3.0 "Einstein"". Archived from the original on 2016-07-16. Retrieved 2016-04-02.

- ^ FFmpeg (2017-10-15). "October 15th, 2017, FFmpeg 3.4 "Cantor"". Archived from the original on 2016-07-16. Retrieved 2019-05-10.

- ^ FFmpeg (2018-11-06). "November 6th, 2018, FFmpeg 4.1 "al-Khwarizmi"". Archived from the original on 2016-07-16. Retrieved 2019-05-10.

- ^ Jan Ozer (2019-03-04). "Good News: AV1 Encoding Times Drop to Near-Reasonable Levels". StreamingMedia.com. Archived from the original on 2021-05-14. Retrieved 2019-05-10.

- ^ This video of Linux desktop (X11) was captured by ffmpeg and encoded in realtime[circular reference]

- ^ "FFmpeg Automated Testing Environment". Fate.multimedia.cx. Archived from the original on 2016-04-10. Retrieved 2012-01-04.

- ^ "FFmpeg Hardware Acceleration". ffmpeg.org Wiki. Archived from the original on 2016-12-04. Retrieved 2016-11-12.

- ^ "Hardware/VAAPI – FFmpeg". trac.ffmpeg.org. Archived from the original on 2017-10-16. Retrieved 2017-10-16.

- ^ "HEVC Video Encoder User Manual" (PDF). Qualcomm Developer Network. Archived (PDF) from the original on 2021-04-16. Retrieved 2021-02-23.

- ^ "FFmpeg Changelog". GitHub. Archived from the original on 2017-03-21. Retrieved 2016-11-12.

- ^ "HWAccelIntro – FFmpeg". trac.ffmpeg.org.

- ^ a b c d e f "Changelog". FFmpeg trunk SVN. FFmpeg. 17 April 2007. Retrieved 26 April 2007.[permanent dead link]

- ^ "FFmpeg Lands JPEG-XL Support". www.phoronix.com. Retrieved 2022-04-26.

- ^ "git.ffmpeg.org Git - ffmpeg.git/commit". git.ffmpeg.org. Archived from the original on 2018-04-23. Retrieved 2018-04-23.

- ^ a b "FFmpeg 5.1 Released With Many Improvements To This Important Multimedia Project". Phoronix. July 22, 2022.

- ^ a b c d e "FFmpeg 1.1 Brings New Support, Encoders/Decoders". Phoronix. January 7, 2013.

- ^ a b "FFmpeg 4.1 Brings AV1 Parser & Support For AV1 In MP4". Phoronix. November 6, 2018.

- ^ "FFmpeg 3.0 Released, Supports VP9 VA-API Acceleration". Phoronix. February 15, 2016.

- ^ "FFmpeg 4.4 Released With AV1 VA-API Decoder, SVT-AV1 Encoding". Phoronix. April 9, 2021.

- ^ a b c d "FFmpeg 0.11 Has Blu-Ray Protocol, New Encoders". Phoronix. May 26, 2012.

- ^ a b "FFmpeg 3.3 Brings Native Opus Encoder, Support For Spherical Videos". Phoronix. April 17, 2017.

- ^ "FFmpeg 5.0 Released For This Popular, Open-Source Multimedia Library". Phoronix. January 14, 2022.

- ^ a b "FFmpeg 2.2 Release Adds The Libx265 Encoder". Phoronix. March 23, 2014.

- ^ "FFmpeg 4.2 Released With AV1 Decoding Support, GIF Parser". Phoronix. August 6, 2019.

- ^ "FFmpeg 0.6 Released With H.264, VP8 Love". Phoronix. June 16, 2010.

- ^ a b "FFmpeg 2.5 Brings Animated PNG, WebP Decoding Support". Phoronix. December 4, 2014.

- ^ a b "FFmpeg development mailing list". FFmpeg development. FFmpeg. 7 May 2007. Archived from the original on 11 August 2007. Retrieved 24 December 2010.

- ^ vitor (13 April 2008). "FFmpeg development mailing list". FFmpeg development. FFmpeg website. Retrieved 14 April 2008.[permanent dead link]

- ^ vitor (30 March 2008). "FFmpeg development mailing list". FFmpeg development. FFmpeg website. Retrieved 30 March 2008.[permanent dead link]

- ^ "FFmpeg: MaxisXADemuxContext Struct Reference". FFmpeg development. FFmpeg website. Retrieved 17 March 2024.

- ^ Michael Niedermayer, Timothy Gu (2014-12-05). "RELEASE NOTES for FFmpeg 2.5 'Bohr'". VideoLAN. Archived from the original on 2014-12-08. Retrieved 2014-12-05.

- ^ ramiro (18 March 2008). "FFmpeg development mailing list". FFmpeg development. FFmpeg website. Archived from the original on 17 August 2008. Retrieved 18 March 2008.

- ^ banan (8 June 2008). "FFmpeg development mailing list". FFmpeg development. FFmpeg website. Archived from the original on 14 January 2009. Retrieved 8 June 2008.

- ^ faust3 (21 March 2008). "FFmpeg development mailing list". FFmpeg development. FFmpeg website. Archived from the original on 25 April 2008. Retrieved 21 March 2008.

- ^ van Kesteren, Anne (2010-09-01). "Internet Drafts are not Open Standards". annevankesteren.nl. Self-published. Archived from the original on 2010-09-02. Retrieved 2015-03-22.

- ^ "Real Time Streaming Protocol 2.0 (RTSP)". p. 231. Archived 2023-10-25 at the Wayback Machine.

- ^ "rtsp: Support tls-encapsulated RTSP - git.videolan.org Git - ffmpeg.git/commit". videolan.org. Archived from the original on 18 October 2016. Retrieved 21 October 2016.

- ^ "FFmpeg Filters". ffmpeg.org. Archived from the original on 2017-03-28. Retrieved 2017-03-27.

- ^ "How it works". earwax.ca.

- ^ "FFmpeg Devices Documentation". ffmpeg.org. Archived from the original on 2021-10-25. Retrieved 2021-10-25.

- ^ "Codecs list". ffmpeg.org. Archived from the original on 2012-01-06. Retrieved 2012-01-01.

- ^ "Legal information on FFmpeg's website". ffmpeg.org. Archived from the original on 2012-01-03. Retrieved 2012-01-04.

- ^ "The European Patent Convention". www.epo.org. European Patent Office. 2020-11-29. Archived from the original on 2021-11-19. Retrieved 2021-11-24.

- ^ "VirtualDub2". Archived from the original on 2020-08-07. Retrieved 2020-08-15.

- ^ "Google's YouTube Uses FFmpeg | Breaking Eggs And Making Omelettes". Multimedia.cx. 2011-02-08. Archived from the original on 2012-08-14. Retrieved 2012-08-06.

- ^ a b "FFmpeg-based Projects". ffmpeg.org. Archived from the original on 2016-02-20. Retrieved 2012-01-04.

- ^ "Firefox Enables FFmpeg Support By Default". Phoronix. 2015-11-15. Archived from the original on 2017-09-25. Retrieved 2015-11-18.

- ^ "Multimedia Xpert". Atlas Informatik. Retrieved 2022-05-26.

- ^ Maki, J. N.; Gruel, D.; McKinney, C.; Ravine, M. A.; Morales, M.; Lee, D.; Willson, R.; Copley-Woods, D.; Valvo, M.; Goodsall, T.; McGuire, J.; Sellar, R. G.; Schaffner, J. A.; Caplinger, M. A.; Shamah, J. M.; Johnson, A. E.; Ansari, H.; Singh, K.; Litwin, T.; Deen, R.; Culver, A.; Ruoff, N.; Petrizzo, D.; Kessler, D.; Basset, C.; Estlin, T.; Alibay, F.; Nelessen, A.; Algermissen, S. (2020). "The Mars 2020 Engineering Cameras and Microphone on the Perseverance Rover: A Next-Generation Imaging System for Mars Exploration". Space Science Reviews. 216 (8). Springer Nature Switzerland AG.: 137. Bibcode:2020SSRv..216..137M. doi:10.1007/s11214-020-00765-9. PMC 7686239. PMID 33268910.

External links

- FFmpeg
