OmniVision Technologies


| | |
|---|---|
| Company type | Subsidiary |
| Industry | Semiconductors |
| Founded | 1995 |
| Founder | Aucera Technology (Taiwan) |
| Headquarters | Santa Clara, California, U.S. |
| Area served | Worldwide |
| Key people | Renrong Yu, Shaw Hong |
| Products | Image sensor technologies |
| Revenue | $1.379B |
| Owner | Will Semiconductor |
| Number of employees | 2,200 (2015)[1] |
| Website | www.ovt.com |

OmniVision Technologies Inc. is an American subsidiary of Will Semiconductor, a Chinese semiconductor device and mixed-signal integrated circuit design house.[2][3] The company designs and develops digital imaging products for mobile phones, laptops, netbooks, webcams, security and surveillance cameras, entertainment devices, and automotive and medical imaging systems. Headquartered in Santa Clara, California, OmniVision Technologies has offices in the US, Western Europe and Asia.[4]

In 2016, OmniVision was acquired by a consortium of Chinese investors consisting of Hua Capital Management Co., Ltd., CITIC Capital and Goldstone Investment Co., Ltd.[5]

History

OmniVision was founded in 1995 by the Taiwanese company Aucera Technology (奧斯來科技).

Company milestones include:

- 1999: First application-specific integrated circuit (ASIC)

- 2000: Initial public offering (IPO)

- 2005: Acquired CDM-Optics, a company founded to commercialize wavefront coding.[6]

- 2010: Acquired Aurora Systems, adding liquid crystal on silicon (LCOS) to its product line[7]

- 2011: Acquired Kodak patents[8]

- 2015: In April, signed an agreement to be acquired for about $1.9 billion in cash by a group of Chinese investors including Hua Capital Management, CITIC Capital Holdings and GoldStone Investment.[9]

- 2016: Became a private company following a buyout by the Chinese private equity consortium[10]

- 2018/2019: Will Semiconductor acquired OmniVision Technologies (for $2.178 billion) and SuperPix Micro Technology, merging them to form OmniVision Group[11][12]

- 2019: Received a Guinness World Record for the smallest commercially available image sensor, the OV6948, which is used in the CameraCubeChip.[13]

Technologies

OmniPixel3-HS

OmniPixel3-HS is OmniVision's front-side illumination (FSI) pixel technology. It is used to make compact cameras for mobile handsets, notebook computers and other applications that require good low-light performance without a flash.

OmniPixel3-GS, which builds on its predecessor, is a global shutter pixel technology used for eye tracking, facial authentication[14] and other computer vision applications.

OmniBSI

Backside illumination (BSI) technology differs from FSI architectures in how light reaches the photosensitive area of the sensor. In FSI architectures, light must first pass through transistors, dielectric layers and metal circuitry. OmniBSI technology instead turns the image sensor upside down and applies the color filters and microlenses to the backside of the pixels, so that light is collected through the backside of the sensor.
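The difference in light path can be illustrated with a crude multiplicative model in which each layer the light crosses passes only a fraction of it. This is a hypothetical sketch: the layer names and transmittance values are assumptions chosen for illustration, not OmniVision data, and real sensors also lose light to fill-factor and angle-of-incidence effects that the model ignores.

```python
from math import prod  # available in Python 3.8+


def fraction_reaching_photodiode(layer_transmittances):
    """Return the fraction of incident light that survives every layer on the path.

    Each value is an assumed transmittance between 0 and 1; layers are treated
    as independent, so their fractions simply multiply.
    """
    return prod(layer_transmittances.values())


# Hypothetical, illustrative values only (not measured OmniVision figures).
fsi_path = {
    "microlens": 0.95,
    "color_filter": 0.90,
    "metal_and_dielectric_stack": 0.70,  # FSI: light crosses the wiring layers
}
bsi_path = {
    "microlens": 0.95,
    "color_filter": 0.90,  # BSI: wiring sits behind the photodiode, out of the path
}

fsi = fraction_reaching_photodiode(fsi_path)
bsi = fraction_reaching_photodiode(bsi_path)
print(f"FSI: {fsi:.3f}  BSI: {bsi:.3f}  gain: {bsi / fsi:.2f}x")
```

In this toy model the BSI gain equals the inverse of the assumed stack transmittance; the point is only that removing layers from the optical path raises the light that reaches the silicon.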

OmniBSI-2

The second-generation BSI technology, developed in cooperation with Taiwan Semiconductor Manufacturing Company Limited (TSMC), is built using custom 65 nm design rules and 300 mm copper processes. These changes were intended to improve low-light sensitivity, reduce dark current, increase full-well capacity and produce sharper images.
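Full-well capacity and dark current matter because, together with read noise, they bound a pixel's dynamic range. A common textbook approximation is DR = 20·log10(full-well capacity / noise floor), where dark current adds shot noise to the floor. The sketch below applies that approximation with hypothetical pixel parameters. It is not a model of OmniBSI-2 itself, and real sensors have additional noise sources.

```python
import math


def noise_floor_e(read_noise_e, dark_current_e_per_s=0.0, exposure_s=0.0):
    """Temporal noise floor in electrons: read noise plus dark-current shot noise.

    Dark current generates on average dark_current_e_per_s * exposure_s electrons,
    whose shot noise is the square root of that count; independent noise sources
    add in quadrature.
    """
    dark_electrons = dark_current_e_per_s * exposure_s
    return math.sqrt(read_noise_e ** 2 + dark_electrons)


def dynamic_range_db(full_well_e, read_noise_e, dark_current_e_per_s=0.0, exposure_s=0.0):
    """Dynamic range in dB as 20*log10(full-well capacity / noise floor)."""
    floor = noise_floor_e(read_noise_e, dark_current_e_per_s, exposure_s)
    return 20.0 * math.log10(full_well_e / floor)


# Hypothetical pixel parameters (not OmniVision specifications).
baseline = dynamic_range_db(5000, read_noise_e=3.0, dark_current_e_per_s=16.0, exposure_s=1.0)
more_well = dynamic_range_db(10000, read_noise_e=3.0, dark_current_e_per_s=16.0, exposure_s=1.0)
less_dark = dynamic_range_db(5000, read_noise_e=3.0, dark_current_e_per_s=0.0, exposure_s=1.0)
print(f"baseline {baseline:.1f} dB, 2x full well {more_well:.1f} dB, no dark current {less_dark:.1f} dB")
```

Doubling the full-well capacity adds about 6 dB in this approximation, while lowering dark current helps most at long exposures, where its shot noise dominates.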

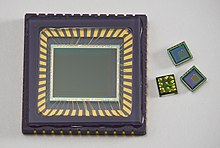

CameraCubeChip

In the CameraCubeChip camera module, sensor and lens manufacturing processes are combined using a semiconductor stacking methodology. Wafer-level optical elements are fabricated in a single step by combining CMOS image sensors, chip-scale packaging (CSP) processes and wafer-level optics (WLO). The resulting fully integrated chips provide camera functionality and are intended for thin, compact devices.

RGB-IR technology

RGB-IR technology uses a color filter process to improve color fidelity. By dedicating 25% of its pixel array pattern to infrared (IR) pixels and 75% to RGB pixels, it can capture RGB and IR images simultaneously, which allows the same sensor to capture both day and night images. It is used in battery-powered home security cameras and for biometric authentication, such as gesture and facial recognition.[15]
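The 25%/75% split can be illustrated with a repeating color filter cell. The sketch below assumes a hypothetical 2×2 unit cell containing one R, one G, one B and one IR pixel. Commercial RGB-IR sensors, including OmniVision's, may use other layouts such as larger cells, so the layout here is an assumption. The sketch also shows how IR and visible samples can be separated from a single raw frame.

```python
from collections import Counter

# Hypothetical 2x2 RGB-IR unit cell (one R, G, B and IR pixel each).
# Chosen only because it reproduces a 25% IR / 75% RGB split.
UNIT_CELL = [
    ["R", "G"],
    ["IR", "B"],
]


def cfa_fractions(cell):
    """Fraction of pixels assigned to each channel in a repeating CFA cell."""
    counts = Counter(ch for row in cell for ch in row)
    total = sum(counts.values())
    return {ch: n / total for ch, n in counts.items()}


def split_planes(raw, cell=UNIT_CELL):
    """Split a raw mosaic (list of equal-length rows) into per-channel sample lists.

    Each sample is assigned to the channel the repeating cell places at its
    position, so IR and visible samples come from the same exposure.
    """
    cell_h, cell_w = len(cell), len(cell[0])
    planes = {}
    for y, row in enumerate(raw):
        for x, value in enumerate(row):
            channel = cell[y % cell_h][x % cell_w]
            planes.setdefault(channel, []).append(value)
    return planes


fractions = cfa_fractions(UNIT_CELL)
ir_share = fractions["IR"]   # 0.25
rgb_share = 1.0 - ir_share   # 0.75
raw = [[4 * y + x for x in range(4)] for y in range(4)]  # toy 4x4 frame of sample values
planes = split_planes(raw)
print(fractions, planes["IR"])
```

A full pipeline would then interpolate each plane back to full resolution and typically correct the visible channels for IR leakage; both steps are omitted here.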

PureCel technologies

OmniVision developed its PureCel and PureCel Plus image sensor technology to provide added camera functionality to smartphones and action cameras. The technical goal was to provide smaller camera modules that enable larger optical formats and offer improved image quality, especially in low-light conditions.[16]

Both technologies are also offered in a stacked-die format (PureCel-S and PureCelPlus-S). The stacked-die approach separates the imaging array from the image signal processing pipeline by placing them on separate, stacked dies. This allows additional functionality to be implemented on the sensor while keeping the die much smaller than that of a non-stacked sensor. PureCelPlus-S uses partial backside deep trench isolation (B-DTI) structures comprising an interfacial oxide, a first-deposited HfO layer, TaO, an oxide, a Ti-based liner and a tungsten core. This is OmniVision's first DTI structure, and the first metal-filled B-DTI trench since 2013.[17]

PureCel Plus uses a buried color filter array (BCFA) to collect light arriving at a range of incident angles, improving angular tolerance. Deep trench isolation reduces crosstalk by forming isolation walls between pixels inside the silicon. With PureCel Plus Gen 2, OmniVision set out to improve deep trench isolation for better pixel isolation and low-light performance. Its target application is smartphone video cameras.[18]
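The effect of isolation on fine detail can be sketched with a simple symmetric crosstalk model, in which each pixel leaks a fraction c of its signal into each of its four nearest neighbors. This is a hypothetical, idealized model (the leakage parameter c and the wrap-around edges are assumptions), not a description of OmniVision's DTI. On a pixel-level checkerboard it reduces contrast from 1 to 1 - 8c, so lower leakage preserves more detail.

```python
def apply_crosstalk(image, c):
    """Leak a fraction c of every pixel's signal into each of its 4 neighbors.

    Each pixel keeps 1 - 4c of its own signal, so the total signal is conserved.
    Edges wrap around (periodic boundary) to keep the example short.
    """
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            v = image[y][x]
            out[y][x] += v * (1 - 4 * c)
            for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                out[(y + dy) % h][(x + dx) % w] += v * c
    return out


def michelson_contrast(image):
    """(max - min) / (max + min) over all pixel values."""
    values = [v for row in image for v in row]
    hi, lo = max(values), min(values)
    return (hi - lo) / (hi + lo)


# Finest possible pattern: alternating bright and dark pixels.
checkerboard = [[(x + y) % 2 for x in range(8)] for y in range(8)]
for c in (0.0, 0.01, 0.05):
    blurred = apply_crosstalk(checkerboard, c)
    print(f"leakage c={c:.2f}: contrast {michelson_contrast(blurred):.2f}")
```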

Nyxel

Developed to meet the low-light and night-vision performance requirements of advanced machine vision, surveillance and automotive camera applications, OmniVision's Nyxel near-infrared (NIR) imaging technology combines thick-silicon pixel architectures with careful management of the wafer surface texture to improve quantum efficiency (QE). In addition, extended deep trench isolation helps retain the modulation transfer function without affecting the sensor's dark current, further improving night vision capabilities.[19] The resulting improvements include better image quality, a longer image-detection range and a reduced light-source requirement, which lowers overall system power consumption.[20]
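The link between silicon thickness and NIR quantum efficiency follows from the Beer-Lambert law. Silicon absorbs NIR photons weakly, so the absorbed fraction, 1 - exp(-αd), grows with the absorbing depth d. The sketch below uses an assumed, order-of-magnitude absorption coefficient and a hypothetical path-length factor standing in for surface texturing. It estimates absorption only, which is an upper bound on QE because reflection and charge-collection losses are ignored. It does not use OmniVision's figures.

```python
import math


def absorbed_fraction(alpha_per_um, thickness_um, path_factor=1.0):
    """Fraction of photons entering the silicon that are absorbed (Beer-Lambert law).

    alpha_per_um: absorption coefficient of silicon at the wavelength of interest (1/um).
    thickness_um: thickness of the light-absorbing silicon layer (um).
    path_factor:  hypothetical multiplier (>= 1) for the longer optical path that
                  surface texturing or scattering can produce; 1.0 is a straight pass.
    """
    return 1.0 - math.exp(-alpha_per_um * thickness_um * path_factor)


# Assumed order-of-magnitude absorption coefficient for silicon near 940 nm;
# consult measured optical data for real estimates.
ALPHA_940NM = 0.02  # 1/um (hypothetical)

thin = absorbed_fraction(ALPHA_940NM, thickness_um=3.0)
thick = absorbed_fraction(ALPHA_940NM, thickness_um=6.0)
textured = absorbed_fraction(ALPHA_940NM, thickness_um=6.0, path_factor=1.5)
print(f"3 um: {thin:.3f}, 6 um: {thick:.3f}, 6 um textured: {textured:.3f}")
```

Because α is small in the NIR, absorption stays far from saturation, so both a thicker layer and a longer effective path raise it almost proportionally.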

Nyxel 2

This second-generation near-infrared technology improves on the first generation by increasing the silicon thickness to improve imaging sensitivity. Deep trench isolation was extended to reduce crosstalk without impacting the modulation transfer function. The wafer surface was refined to extend the photon path and increase photon-to-electron conversion. Compared with the first-generation technology, the sensor achieves a 25% improvement at the invisible 940 nm NIR wavelength and a 17% improvement at the barely visible 850 nm NIR wavelength.[21]

LED flicker mitigation and high dynamic range

High-dynamic-range (HDR) imaging relies on algorithms that combine several image captures into one, producing a higher-quality image than a single native capture. With HDR, LED lighting can produce a flicker effect. This is a problem for machine vision systems, such as those used in autonomous vehicles, because LEDs are ubiquitous in automotive environments, from headlights to traffic lights, road signs and beyond. While the human eye can adapt to LED flickering, machine vision cannot. To mitigate this effect, OmniVision uses split-pixel technology: a large photodiode captures the scene using a short exposure time, while a small photodiode simultaneously captures the LED signal using a long exposure. The two images are then combined into a final, flicker-free picture.[22]
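A simple timing model shows why a short exposure can miss an LED while a long one cannot. Many LEDs are driven by pulse-width modulation (PWM), switching on and off faster than the eye notices, and an exposure shorter than the LED's off interval can fall entirely within a dark gap. The sketch assumes an ideal square-wave LED and an exposure that starts at a random phase. The frequency and duty cycle in the example are hypothetical, and the code does not model OmniVision's split-pixel readout.

```python
def led_capture_probability(exposure_s, pwm_hz, duty_cycle):
    """Probability that an exposure overlaps at least part of an LED's 'on' pulse.

    Models the LED as an ideal square wave (frequency pwm_hz, 0 < duty_cycle <= 1)
    and assumes the exposure starts at a uniformly random phase of that wave.
    """
    period_s = 1.0 / pwm_hz
    off_time_s = (1.0 - duty_cycle) * period_s
    if exposure_s >= off_time_s:
        return 1.0  # the window is longer than the dark gap, so it always sees light
    # The LED is missed only when the whole window fits inside the dark gap.
    miss_probability = (off_time_s - exposure_s) / period_s
    return 1.0 - miss_probability


# Hypothetical example: a 100 Hz PWM-driven LED that is on 10% of the time.
for exposure_ms in (0.1, 1.0, 5.0, 10.0):
    p = led_capture_probability(exposure_ms / 1000.0, pwm_hz=100.0, duty_cycle=0.1)
    print(f"exposure {exposure_ms:4.1f} ms -> LED captured in {p:.0%} of frames")
```

Under these assumptions, any exposure at least as long as the off interval captures the LED in every frame. This is consistent with using a long-exposure photodiode for the LED signal.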

Products

CMOS image sensors

OmniVision CMOS image sensors range in resolution from below one megapixel to 64 megapixels.[23] In 2009, the company received orders from Apple for 3.2-megapixel and 5-megapixel CMOS image sensors (CIS).[24]

ASIC

OmniVision also manufactures application-specific integrated circuits (ASICs) as companion products for its image sensors used in automotive, medical, augmented reality and virtual reality (AR/VR), and IoT applications.[25]

CameraCubeChip

OmniVision's CameraCubeChip is a fully packaged, wafer-level camera module measuring 0.65 mm × 0.65 mm. It is being integrated into disposable endoscopes and catheters with diameters as small as 1.0 mm, which are used in procedures ranging from diagnostics to minimally invasive surgery. The OV6948 sensor it uses measures 0.575 mm × 0.575 mm and has a resolution of 200 × 200 pixels.[26]
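The stated dimensions allow a few quick checks, sketched below using only the figures given in this section. The computed pitch is an upper bound rather than the sensor's actual pixel size, because the die also holds readout circuitry. The diagonal check ignores the wall thickness of the endoscope or catheter housing.

```python
import math

SENSOR_SIDE_MM = 0.575  # OV6948 sensor side length, as stated in this section
MODULE_SIDE_MM = 0.65   # CameraCubeChip module side length, as stated in this section
PIXELS_PER_SIDE = 200   # 200 x 200 resolution

total_pixels = PIXELS_PER_SIDE ** 2
# The die also contains readout and periphery circuitry, so dividing the die width
# by the pixel count gives only an upper bound on the pixel pitch.
max_pitch_um = SENSOR_SIDE_MM * 1000 / PIXELS_PER_SIDE
# A square module fits inside a round tube only if its diagonal does.
module_diagonal_mm = MODULE_SIDE_MM * math.sqrt(2)

print(f"{total_pixels} pixels ({total_pixels / 1e6:.2f} MP)")
print(f"pixel pitch at most {max_pitch_um:.3f} um")
print(f"module diagonal {module_diagonal_mm:.3f} mm")
```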

LCOS

OmniVision manufactures liquid crystal on silicon (LCOS) projection technology for display applications.[27]

In 2018, Magic Leap used OmniVision's LCOS technology and its sensor bridge ASIC in the Magic Leap One augmented reality headset.[28]

Markets and applications

The digital imaging market has split into two main paths: digital photography and machine vision. Smartphone cameras drove the market for some time, but since 2017 machine vision applications have driven new developments. Autonomous vehicles, medical devices, miniaturized security cameras and internet of things (IoT) devices all rely on advanced imaging technologies.[29] OmniVision designs image sensors for a range of imaging market segments, including:

- Mobile

- Automotive

- Security

- IoT/emerging

- Computing

- Medical

Examples of end-user products that incorporate OmniVision components include:

- The iPhone 5 front-facing camera is an OV2C3B unit.[30]

- The official 5-megapixel camera module for the Raspberry Pi, released in 2013, uses an OV5647.[31]

- In 2014, Google developed Project Tango, a 3D mapping technology intended to bring AR/VR to mobile applications.[32] Tango contains several OmniVision products, including a 4 MP RGB-IR sensor that provides high-resolution photo and video as well as depth perception in its standard camera, and a low-power CameraChip.[33]

- Netgear's Arlo is a battery-operated, wireless home security camera system. It contains several OmniVision products, including the OV00788 image signal processor and the OV9712, a 1 MP progressive-scan CMOS image sensor with video capture capability.[34]

- The Ring Video Doorbell uses an HD camera containing an OmniVision OV9712 1 MP image sensor and an OmniVision H.264 video compression chip for video processing.[35][36]

- The PlayStation Camera for Sony's PlayStation 4 contains two OV9713 CMOS image sensors and two USB bridge ASICs. It also appears to use an OV580 ASIC made specifically for Sony.[37]

- Automotive system supplier ZF included OmniVision CMOS image sensors in its fourth-generation S-Cam (S-Cam 4), in both the mono camera and tri-camera configurations.[38]

- As of June 2020, the rear Autopilot camera on the Tesla Model S, X, 3 and Y uses the OV10635 720p CMOS sensor.[39]

- All five models in Asus's ZenFone 4 smartphone line include dual-camera setups. The mid-range model uses an 8-megapixel OV8856 for both the front camera and the secondary sensor, providing a 120-degree super-wide view. The ZenFone 4 Selfie uses a lower-resolution 5-megapixel OV5670 as its secondary sensor, also for a super-wide view.[40]

- The Microsoft Surface Pro 4 has an 8 MP rear camera with the OV8865 image sensor and a 5 MP front-facing camera with the OV5693 image sensor. The rear camera has 1.4 µm pixels and an f/2 aperture for better low-light performance. The front camera has a wider field of view for video conferencing, although its image quality is somewhat grainy.[41]

- Qualcomm's virtual reality design kit (VRDK) was developed to give consumer electronics manufacturers a foundation for building VR headsets based on Qualcomm's Snapdragon VR hardware. For positional tracking, the company designed in on-board cameras built around the OV9282 global shutter image sensor, which can capture 1,280 × 800 images at 120 Hz or 640 × 480 images at 180 Hz. Qualcomm chose the sensor because its low latency reportedly makes it well suited to VR headsets.[42]
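The two OV9282 modes quoted above trade resolution for frame rate. The arithmetic below uses only those figures. The higher-rate mode delivers a new frame more often, which shortens one component of tracking latency, while reading out fewer pixels per second. Treating the frame interval as a proxy for latency is a simplification, since exposure, readout and processing time also contribute.

```python
def pixel_rate(width, height, fps):
    """Pixels read out per second in a given sensor mode."""
    return width * height * fps


def frame_interval_ms(fps):
    """Time between successive frames, in milliseconds."""
    return 1000.0 / fps


# The two OV9282 modes quoted above: (width, height, frame rate in Hz).
MODES = {"1280x800": (1280, 800, 120), "640x480": (640, 480, 180)}

for name, (w, h, fps) in MODES.items():
    print(f"{name} @ {fps} Hz: {pixel_rate(w, h, fps):,} px/s, "
          f"new frame every {frame_interval_ms(fps):.2f} ms")
```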

References

- "Corporate Fact Sheet" (PDF). 2014. Retrieved 24 September 2015.

- "603501:Shanghai Stock Quote - Will Semiconductor Ltd". Bloomberg.com. Retrieved 9 October 2020.

- "OmniVision bought quietly by China's Will Semiconductor". eeNews Analog. 24 May 2019. Retrieved 9 October 2020.

- "OmniVision Locations". Retrieved 13 September 2010.

- "Corporate Releases | News & Events | OmniVision". www.ovt.com. Retrieved 11 January 2020.

- Tomkins, Michael R. (28 March 2005). "OmniVision acquires CDM Optics". The Imaging Resource. Retrieved 25 September 2010.

- "OmniVision Technologies, Inc. Completes Acquisition of Aurora Systems, Inc". www.prnewswire.com (Press release). OmniVision Technologies Inc. Retrieved 24 June 2020.

- Koifman, Vladimir (11 April 2011). "OmniVision Acquires 850 Kodak Patents for $65M". Image Sensors World. Retrieved 15 November 2015.

- "OmniVision to Be Bought by Chinese Investors in $1.9 Billion Deal". re/code. 1 May 2015. Retrieved 4 May 2015.

- Stahl, George (30 April 2015). "OmniVision Agrees to $1.9 Billion Buyout". Wall Street Journal. ISSN 0099-9660. Retrieved 18 June 2020.

- Koifman, Vladimir (19 January 2020). "Omnivision Aims to Close the Gap with Sony and Samsung and Lead the Market in 1 Year". Image Sensors World. Retrieved 24 January 2020.

- "OmniVision bought quietly by China's Will Semiconductor | eeNews Analog". www.eenewsanalog.com. 24 May 2019. Retrieved 24 January 2020.

- "Smallest commercially available image sensor". Guinness World Records. Retrieved 18 June 2020.

- "OmniVision announces global shutter image sensors for facial authentication - asmag.com Rankings". www.asmag.com. Retrieved 19 June 2020.

- "OmniVision Releases First 5MP RGB-IR Image Sensor". www.embedded-computing.com. Retrieved 18 June 2020.

- Triggs, Rob (10 July 2015). "Who's Who in the smartphone camera business". Android Authority. Retrieved 4 April 2016.

- Fontaine, Ray. "A Survey of Enabling Technologies in Successful Consumer Digital Imaging Products (Part 3: Pixel Isolation Structures)". TechInsights. Retrieved 4 July 2017.

- "OmniVision Introduces OV02K Image Sensor That is Built on PureCel Plus Pixel Technology". news.thomasnet.com. Retrieved 18 June 2020.

- Carroll, James. "Nyxel near infrared technology from OmniVision offers increased quantum efficiency". Vision Systems Design. Retrieved 9 October 2017.

- Dirjish, Matthew. "Near-Infrared Technology Clears Up Night Vision Apps". Sensors Online. Questex LLC. Retrieved 9 October 2017.

- Roos, Gina (9 March 2020). "OmniVision NIR image sensing achieves new quantum efficiency record". Electronic Products. Retrieved 18 June 2020.

- Yoshida, Junko (12 December 2020). "OmniVision Cuts LED Flicker in HDR". EE Times. Retrieved 18 June 2020.

- "Image Sensors | OmniVision". www.ovt.com. Retrieved 23 June 2020.

- Wu, Hans (3 April 2009). "OmniVision lands CIS orders for next-generation iPhone". DigiTimes. Retrieved 8 October 2020.

- "CameraCubeChip™ | OmniVision". www.ovt.com. Retrieved 23 June 2020.

- "New Miniature Camera Module Emerges for Disposable Medical Endoscopes". mddionline.com. 22 October 2019. Retrieved 23 June 2020.

- "LCOS | OmniVision". www.ovt.com. Retrieved 23 June 2020.

- "iFixit's Magic Leap One Teardown Confirms KGOnTech's Analysis From November 2016". KGOnTech. 23 August 2018. Retrieved 9 October 2020.

- "Beyond the Smartphone: How Digital Imaging is Becoming Ubiquitous". 3D InCites. 2 May 2018. Retrieved 19 June 2020.

- "iPhone 5 Cameras: Sony and Omnivision Win". iFixit. 23 June 2020. Retrieved 23 June 2020.

- "Camera Documentation". raspberrypi.org. Retrieved 7 December 2020.

- "Project Tango Teardown". iFixit. 16 April 2014. Retrieved 23 June 2020.

- "Prototype of Google's Project Tango Goes Under the Knife". iFixit. 23 June 2020. Retrieved 23 June 2020.

- Unifore. "Disassemble/teardown Netgear Arlo Smart Wireless WiFI cameras security system". www.burglaryalarmsystem.com. Retrieved 23 June 2020.

- "Ring Video Doorbell". benchmarking.ihsmarkit.com. 16 May 2016. Retrieved 23 June 2020.

- "Ring Doorbell - Exploitee.rs". www.exploitee.rs. Retrieved 23 June 2020.

- "PlayStation 4 Camera - PS4 Developer wiki". www.psdevwiki.com. Retrieved 23 June 2020.

- Research and Markets Ltd. "ZF S-Cam 4 - Forward Automotive Mono and Tri Camera for Advanced Driver Assistance Systems". www.researchandmarkets.com. Retrieved 23 June 2020.

- "Undocumented | TeslaTap". Retrieved 23 June 2020.

- "ASUS goes dual-camera crazy for its ZenFone 4 series". Engadget. 17 August 2017. Retrieved 23 June 2020.

- Howse, Brett. "The Microsoft Surface Pro 4 Review: Raising The Bar". www.anandtech.com. Retrieved 23 June 2020.

- Lang, Ben (29 June 2017). "HTC Vive & Lenovo Standalone Headsets to be Based on Qualcomm Reference Design, Components Detailed". Road to VR. Retrieved 23 June 2020.