Reinforcement learning


Reinforcement learning (RL) is an interdisciplinary area of machine learning and optimal control concerned with how an intelligent agent ought to take actions in a dynamic environment in order to maximize the cumulative reward. Reinforcement learning is one of three basic machine learning paradigms, alongside supervised learning and unsupervised learning.

Reinforcement learning differs from supervised learning in not needing labelled input/output pairs to be presented, and in not needing sub-optimal actions to be explicitly corrected. Instead, the focus is on finding a balance between exploration (of uncharted territory) and exploitation (of current knowledge) with the goal of maximizing the long-term reward, whose feedback might be incomplete or delayed.[1]

The environment is typically stated in the form of a Markov decision process (MDP), because many reinforcement learning algorithms for this context use dynamic programming techniques.[2] The main difference between the classical dynamic programming methods and reinforcement learning algorithms is that the latter do not assume knowledge of an exact mathematical model of the Markov decision process and they target large Markov decision processes where exact methods become infeasible.[3]

Introduction

Due to its generality, reinforcement learning is studied in many disciplines, such as game theory, control theory, operations research, information theory, simulation-based optimization, multi-agent systems, swarm intelligence, and statistics. In the operations research and control literature, reinforcement learning is called approximate dynamic programming, or neuro-dynamic programming. The problems of interest in reinforcement learning have also been studied in the theory of optimal control, which is concerned mostly with the existence and characterization of optimal solutions, and algorithms for their exact computation, and less with learning or approximation, particularly in the absence of a mathematical model of the environment.

Basic reinforcement learning is modeled as a Markov decision process:

- a set of environment and agent states, $\mathcal{S}$;

- a set of actions, $\mathcal{A}$, of the agent;

- $P_a(s,s') = \Pr(S_{t+1} = s' \mid S_t = s, A_t = a)$, the probability of transition (at time $t$) from state $s$ to state $s'$ under action $a$;

- $R_a(s,s')$, the immediate reward after transition from $s$ to $s'$ with action $a$.
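For a small, finite problem these four ingredients can be held in plain tables. The following is a minimal Python sketch; the class name `FiniteMDP`, the nested-dictionary layout, and the two-state example are illustrative assumptions rather than any standard API.

```python
from dataclasses import dataclass


@dataclass
class FiniteMDP:
    """Hypothetical tabular MDP container.

    P[s][a] maps each next state s' to the probability P_a(s, s');
    R[s][a][s'] is the immediate reward R_a(s, s').
    """
    states: list
    actions: list
    P: dict
    R: dict

    def is_valid(self, tol: float = 1e-9) -> bool:
        """Check that every P[s][a] is a probability distribution over known states."""
        for s in self.states:
            for a in self.actions:
                row = self.P[s][a]
                if any(s2 not in self.states or p < 0 for s2, p in row.items()):
                    return False
                if abs(sum(row.values()) - 1.0) > tol:
                    return False
        return True

    def expected_reward(self, s, a) -> float:
        """Expected immediate reward: sum over s' of P_a(s, s') * R_a(s, s')."""
        return sum(p * self.R[s][a][s2] for s2, p in self.P[s][a].items())


# Two states; "move" switches state with probability 0.9, "stay" never does.
mdp = FiniteMDP(
    states=["A", "B"],
    actions=["stay", "move"],
    P={"A": {"stay": {"A": 1.0}, "move": {"B": 0.9, "A": 0.1}},
       "B": {"stay": {"B": 1.0}, "move": {"A": 0.9, "B": 0.1}}},
    R={"A": {"stay": {"A": 0.0}, "move": {"B": 1.0, "A": 0.0}},
       "B": {"stay": {"B": 0.0}, "move": {"A": 0.0, "B": 0.0}}},
)
```

Here `mdp.expected_reward("A", "move")` is 0.9 × 1.0 + 0.1 × 0.0 = 0.9.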

The purpose of reinforcement learning is for the agent to learn an optimal, or nearly-optimal, policy that maximizes the "reward function" or other user-provided reinforcement signal that accumulates from the immediate rewards. This is similar to processes that appear to occur in animal psychology. For example, biological brains are hardwired to interpret signals such as pain and hunger as negative reinforcements, and interpret pleasure and food intake as positive reinforcements. In some circumstances, animals can learn to engage in behaviors that optimize these rewards. This suggests that animals are capable of reinforcement learning.[4][5]

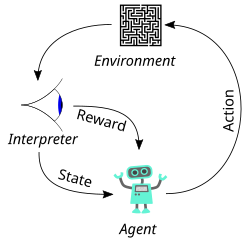

A basic reinforcement learning agent interacts with its environment in discrete time steps. At each time step $t$, the agent receives the current state $S_t$ and reward $R_t$. It then chooses an action $A_t$ from the set of available actions, which is subsequently sent to the environment. The environment moves to a new state $S_{t+1}$ and the reward $R_{t+1}$ associated with the transition $(S_t, A_t, S_{t+1})$ is determined. The goal of a reinforcement learning agent is to learn a policy $\pi: \mathcal{S} \times \mathcal{A} \to [0,1]$, $\pi(s,a) = \Pr(A_t = a \mid S_t = s)$, that maximizes the expected cumulative reward.
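The interaction loop just described can be sketched in Python as follows. `ChainEnv`, its `reset`/`step` methods, and `run_episode` are hypothetical names chosen for illustration. The reset/step shape mirrors a common convention but is not any specific library's API. The environment is a five-position corridor that pays a reward of 1 on reaching the right end.

```python
import random


class ChainEnv:
    """Hypothetical corridor: positions 0..n-1, start at 0, reward 1 on reaching n-1 (terminal)."""

    def __init__(self, n: int = 5):
        self.n = n
        self.state = 0

    def reset(self) -> int:
        self.state = 0
        return self.state

    def step(self, action: int):
        """Apply action -1 (left) or +1 (right); return (next state, reward, episode done)."""
        self.state = min(max(self.state + action, 0), self.n - 1)
        done = self.state == self.n - 1
        return self.state, (1.0 if done else 0.0), done


def run_episode(env, policy, max_steps: int = 1000):
    """At each step t: observe S_t, choose A_t = policy(S_t), receive R_{t+1} and S_{t+1}."""
    state = env.reset()
    total_reward, steps = 0.0, 0
    while steps < max_steps:
        action = policy(state)
        state, reward, done = env.step(action)
        total_reward += reward
        steps += 1
        if done:
            break
    return total_reward, steps


rng = random.Random(0)


def random_policy(state):
    """Ignores the state and picks left or right uniformly at random."""
    return rng.choice((-1, 1))


total, steps = run_episode(ChainEnv(), random_policy)
```

An always-right policy, `run_episode(ChainEnv(), lambda s: 1)`, reaches the goal in four steps and returns `(1.0, 4)`.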

Formulating the problem as a Markov decision process assumes the agent directly observes the current environmental state; in this case, the problem is said to have full observability. If the agent only has access to a subset of states, or if the observed states are corrupted by noise, the agent is said to have partial observability, and formally the problem must be formulated as a partially observable Markov decision process (POMDP). In both cases, the set of actions available to the agent can be restricted. For example, the state of an account balance could be restricted to be positive; if the current value of the state is 3 and the state transition attempts to reduce the value by 4, the transition will not be allowed.

When the agent's performance is compared to that of an agent that acts optimally, the difference in performance gives rise to the notion of regret. In order to act near optimally, the agent must reason about the long-term consequences of its actions (i.e., maximize future income), although the immediate reward associated with this might be negative.

Thus, reinforcement learning is particularly well-suited to problems that include a long-term versus short-term reward trade-off. It has been applied successfully to various problems, including energy storage operation,[6] robot control,[7] photovoltaic generator dispatch,[8] backgammon, checkers,[9] Go (AlphaGo), and autonomous driving systems.[10]

Two elements make reinforcement learning powerful: the use of samples to optimize performance and the use of function approximation to deal with large environments. Thanks to these two key components, reinforcement learning can be used in large environments in the following situations:

- A model of the environment is known, but an analytic solution is not available;

- Only a simulation model of the environment is given (the subject of simulation-based optimization);[11]

- The only way to collect information about the environment is to interact with it.

The first two of these problems could be considered planning problems (since some form of model is available), while the last one could be considered to be a genuine learning problem. However, reinforcement learning converts both planning problems to machine learning problems.

Exploration

The exploration vs. exploitation trade-off has been most thoroughly studied through the multi-armed bandit problem and for finite state space Markov decision processes in Burnetas and Katehakis (1997).[12]

Reinforcement learning requires clever exploration mechanisms; randomly selecting actions, without reference to an estimated probability distribution, shows poor performance. The case of (small) finite Markov decision processes is relatively well understood. However, due to the lack of algorithms that scale well with the number of states (or scale to problems with infinite state spaces), simple exploration methods are the most practical.

One such method is $\varepsilon$-greedy, where $0 < \varepsilon < 1$ is a parameter controlling the amount of exploration vs. exploitation. With probability $1 - \varepsilon$, exploitation is chosen, and the agent chooses the action that it believes has the best long-term effect (ties between actions are broken uniformly at random). Alternatively, with probability $\varepsilon$, exploration is chosen, and the action is chosen uniformly at random. $\varepsilon$ is usually a fixed parameter but can be adjusted either according to a schedule (making the agent explore progressively less), or adaptively based on heuristics.[13]
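A minimal sketch of epsilon-greedy selection over a list of estimated action values, together with one possible schedule that makes the agent explore progressively less. The linear decay and its constants are assumptions for illustration, and the function names are hypothetical.

```python
import random


def epsilon_greedy(q_values, epsilon, rng):
    """Return an action index: explore with probability epsilon, otherwise exploit."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))          # explore: uniformly random action
    best = max(q_values)
    ties = [a for a, q in enumerate(q_values) if q == best]
    return rng.choice(ties)                          # exploit, breaking ties uniformly at random


def linear_epsilon(step, start=1.0, end=0.05, decay_steps=1000):
    """Hypothetical schedule: interpolate from start to end, then hold at end."""
    frac = min(step / decay_steps, 1.0)
    return start + frac * (end - start)


rng = random.Random(0)
actions = [epsilon_greedy([0.1, 0.5, 0.2], linear_epsilon(t), rng) for t in range(5000)]
```

Early on the choices are nearly uniform; once epsilon has decayed to 0.05, action 1 (the highest estimate) is picked about 97% of the time.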

Algorithms for control learning

Even if the issue of exploration is disregarded and even if the state were observable (assumed hereafter), the problem remains of using past experience to find out which actions lead to higher cumulative rewards.

Criterion of optimality

Policy

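The agent's action selection is modeled as a map called policy:

$\pi: \mathcal{S} \times \mathcal{A} \to [0,1], \qquad \pi(s,a) = \Pr(A_t = a \mid S_t = s)$

The policy map gives the probability of taking action $a$ when in state $s$.[14]: 61 There are also deterministic policies, which select a single action in each state.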

State-value function

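The state-value function $V^\pi(s)$ is defined as the expected discounted return starting with state $s$, i.e. $S_0 = s$, and successively following policy $\pi$. Hence, roughly speaking, the value function estimates "how good" it is to be in a given state.[14]: 60

$V^\pi(s) = \mathbb{E}[G \mid S_0 = s] = \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^t R_{t+1} \mid S_0 = s\right],$

where the random variable $G$ denotes the discounted return, and is defined as the sum of future discounted rewards:

$G = \sum_{t=0}^{\infty} \gamma^t R_{t+1} = R_1 + \gamma R_2 + \gamma^2 R_3 + \cdots,$

where $R_{t+1}$ is the reward for transitioning from state $S_t$ to $S_{t+1}$, and $0 \le \gamma < 1$ is the discount rate. $\gamma$ is less than 1, so rewards in the distant future are weighted less than rewards in the immediate future.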
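For a finite reward sequence the discounted return can be computed by accumulating backwards, because each return equals the next reward plus gamma times the return that follows it. A minimal sketch; the function name `discounted_return` is hypothetical.

```python
def discounted_return(rewards, gamma):
    """G = R_1 + gamma*R_2 + gamma^2*R_3 + ... for a finite list [R_1, R_2, ...]."""
    g = 0.0
    for r in reversed(rewards):    # Horner-style accumulation from the last reward
        g = r + gamma * g
    return g
```

For example, `discounted_return([1, 1, 1], 0.5)` is 1 + 0.5 + 0.25 = 1.75. With a constant reward of 1 and many steps, the result approaches 1 / (1 − gamma), which is 10 for gamma = 0.9.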

The algorithm must find a policy with maximum expected discounted return. From the theory of Markov decision processes it is known that, without loss of generality, the search can be restricted to the set of so-called stationary policies. A policy is stationary if the action-distribution returned by it depends only on the last state visited (from the observing agent's history). The search can be further restricted to deterministic stationary policies. A deterministic stationary policy deterministically selects actions based on the current state. Since any such policy can be identified with a mapping from the set of states to the set of actions, these policies can be identified with such mappings with no loss of generality.

Brute force

The brute force approach entails two steps:

- For each possible policy, sample returns while following it

- Choose the policy with the largest expected discounted return

One problem with this is that the number of policies can be large, or even infinite. Another is that the variance of the returns may be large, which requires many samples to accurately estimate the discounted return of each policy.
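On a toy problem the brute-force approach can be carried out literally: enumerate every deterministic stationary policy (there are |A|^|S| of them), estimate each one's expected discounted return by averaging sampled, truncated returns, and keep the best. The two-state MDP, the fixed start state, the horizon, and the sample count below are illustrative assumptions. The exponential policy count is exactly why this does not scale.

```python
import itertools
import random

# Hypothetical 2-state, 2-action MDP: P[s][a] is a list of (probability, next_state, reward).
P = {
    0: {0: [(1.0, 0, 0.0)], 1: [(0.8, 1, 1.0), (0.2, 0, 0.0)]},
    1: {0: [(1.0, 1, 2.0)], 1: [(1.0, 0, 0.0)]},
}
STATES, ACTIONS = [0, 1], [0, 1]


def sample_step(s, a, rng):
    """Draw (next_state, reward) from P[s][a]."""
    u, cumulative = rng.random(), 0.0
    for p, s_next, r in P[s][a]:
        cumulative += p
        if u < cumulative:
            return s_next, r
    _, s_next, r = P[s][a][-1]          # guard against floating-point round-off
    return s_next, r


def sample_return(policy, gamma, horizon, rng, start=0):
    """One sampled discounted return, truncated after `horizon` steps."""
    s, g, discount = start, 0.0, 1.0
    for _ in range(horizon):
        s, r = sample_step(s, policy[s], rng)
        g += discount * r
        discount *= gamma
    return g


def brute_force_search(gamma=0.9, horizon=100, n_samples=200, seed=0):
    """Evaluate every deterministic stationary policy by sampling; return the best one."""
    rng = random.Random(seed)
    best_policy, best_value = None, float("-inf")
    for choice in itertools.product(ACTIONS, repeat=len(STATES)):   # |A|^|S| policies
        policy = dict(zip(STATES, choice))
        value = sum(sample_return(policy, gamma, horizon, rng)
                    for _ in range(n_samples)) / n_samples
        if value > best_value:
            best_policy, best_value = policy, value
    return best_policy, best_value
```

Here the search settles on taking action 1 in state 0 and action 0 in state 1, whose exact value from state 0 is about 18.5.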

These problems can be ameliorated if we assume some structure and allow samples generated from one policy to influence the estimates made for others. The two main approaches for achieving this are value function estimation and direct policy search.

Value function

Value function approaches attempt to find a policy that maximizes the discounted return by maintaining a set of estimates of expected discounted returns for some policy (usually either the "current" [on-policy] or the optimal [off-policy] one).

These methods rely on the theory of Markov decision processes, where optimality is defined in a sense stronger than the one above: A policy is optimal if it achieves the best expected discounted return from any initial state (i.e., initial distributions play no role in this definition). Again, an optimal policy can always be found among stationary policies.

To define optimality in a formal manner, define the state-value of a policy $\pi$ by

$V^{\pi}(s) = \mathbb{E}[G \mid s, \pi],$

where $G$ stands for the discounted return associated with following $\pi$ from the initial state $s$. Defining $V^*(s)$ as the maximum possible state-value of $V^\pi(s)$, where $\pi$ is allowed to change,

$V^*(s) = \max_{\pi} V^{\pi}(s).$

A policy that achieves these optimal state-values in each state is called optimal. Clearly, a policy that is optimal in this strong sense is also optimal in the sense that it maximizes the expected discounted return $\rho^\pi$, since $\rho^\pi = \mathbb{E}[V^\pi(S)]$, where $S$ is a state randomly sampled from the distribution $\mu$ of initial states (so $\mu(s) = \Pr(S = s)$).

Although state-values suffice to define optimality, it is useful to define action-values. Given a state $s$, an action $a$ and a policy $\pi$, the action-value of the pair $(s,a)$ under $\pi$ is defined by

$Q^{\pi}(s,a) = \mathbb{E}[G \mid s, a, \pi],$

where $G$ now stands for the random discounted return associated with first taking action $a$ in state $s$ and following $\pi$ thereafter.

The theory of Markov decision processes states that if $\pi^*$ is an optimal policy, we act optimally (take the optimal action) by choosing the action from $Q^{\pi^*}(s,\cdot)$ with the highest action-value at each state, $s$. The action-value function of such an optimal policy ($Q^{\pi^*}$) is called the optimal action-value function and is commonly denoted by $Q^*$. In summary, the knowledge of the optimal action-value function alone suffices to know how to act optimally.

Assuming full knowledge of the Markov decision process, the two basic approaches to compute the optimal action-value function are value iteration and policy iteration. Both algorithms compute a sequence of functions $Q_k$ ($k = 0, 1, 2, \ldots$) that converge to $Q^*$. Computing these functions involves computing expectations over the whole state-space, which is impractical for all but the smallest (finite) Markov decision processes. In reinforcement learning methods, expectations are approximated by averaging over samples and using function approximation techniques to cope with the need to represent value functions over large state-action spaces.
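With the model known, value iteration on action values repeatedly applies the Bellman optimality backup until successive iterates stop changing; acting greedily with respect to the result then recovers an optimal policy. A minimal sketch, assuming a small MDP stored as lists of (probability, next state, reward) triples. The example MDP, the tolerance, and the function names are hypothetical.

```python
# Hypothetical 2-state, 2-action MDP: P[s][a] is a list of (probability, next_state, reward).
P = {
    0: {0: [(1.0, 0, 0.0)], 1: [(0.8, 1, 1.0), (0.2, 0, 0.0)]},
    1: {0: [(1.0, 1, 2.0)], 1: [(1.0, 0, 0.0)]},
}


def value_iteration_q(P, gamma=0.9, tol=1e-10, max_iter=10_000):
    """Iterate Q_{k+1}(s,a) = sum_{s'} P_a(s,s') * (R_a(s,s') + gamma * max_{a'} Q_k(s',a'))."""
    Q = {s: {a: 0.0 for a in P[s]} for s in P}
    for _ in range(max_iter):
        new_Q = {
            s: {a: sum(p * (r + gamma * max(Q[s2].values())) for p, s2, r in P[s][a])
                for a in P[s]}
            for s in P
        }
        delta = max(abs(new_Q[s][a] - Q[s][a]) for s in P for a in P[s])
        Q = new_Q
        if delta < tol:
            break
    return Q


def greedy_policy(Q):
    """Act optimally: in each state pick the action with the highest Q-value."""
    return {s: max(Q[s], key=Q[s].get) for s in Q}


Q = value_iteration_q(P)
```

For this MDP the greedy policy takes action 1 in state 0 and action 0 in state 1, and the optimal value of staying in state 1 is 2 / (1 − 0.9) = 20.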

Monte Carlo methods

Monte Carlo methods can be used in an algorithm that mimics policy iteration. Policy iteration consists of two steps: policy evaluation and policy improvement.

Monte Carlo is used in the policy evaluation step. In this step, given a stationary, deterministic policy $\pi$, the goal is to compute the function values $Q^\pi(s,a)$ (or a good approximation to them) for all state-action pairs $(s,a)$. Assume (for simplicity) that the Markov decision process is finite, that sufficient memory is available to accommodate the action-values and that the problem is episodic and after each episode a new one starts from some random initial state. Then, the estimate of the value of a given state-action pair $(s,a)$ can be computed by averaging the sampled returns that originated from $(s,a)$ over time. Given sufficient time, this procedure can thus construct a precise estimate $Q$ of the action-value function $Q^\pi$. This finishes the description of the policy evaluation step.

In the policy improvement step, the next policy is obtained by computing a greedy policy with respect to $Q$: Given a state $s$, this new policy returns an action that maximizes $Q(s,\cdot)$. In practice lazy evaluation can defer the computation of the maximizing actions to when they are needed.
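A minimal sketch of one round of this procedure on a hypothetical episodic corridor where every step costs a reward of −1. Each episode starts from a randomly chosen state-action pair ("exploring starts", an assumption that lets every pair be sampled even though the evaluated policy is deterministic). As in the basic procedure, only the starting pair is credited with the episode's return, and the improvement step acts greedily on the estimates. The environment and function names are illustrative.

```python
import random
from collections import defaultdict

# Hypothetical episodic corridor: states 0..3, state 3 is terminal; action 0 = left, 1 = right.
TERMINAL, ACTIONS = 3, (0, 1)


def step(s, a):
    """Deterministic move; every step yields a reward of -1."""
    return (max(s - 1, 0) if a == 0 else s + 1), -1.0


def episode_from(s, a, policy, max_steps=100):
    """Take action a in state s, then follow the deterministic policy until termination."""
    trajectory = []
    for _ in range(max_steps):
        s_next, r = step(s, a)
        trajectory.append((s, a, r))
        if s_next == TERMINAL:
            break
        s, a = s_next, policy[s_next]
    return trajectory


def mc_evaluate(policy, n_episodes=600, seed=0):
    """Average the returns that originated from each (randomly chosen) starting pair."""
    rng = random.Random(seed)
    totals, counts = defaultdict(float), defaultdict(int)
    for _ in range(n_episodes):
        s0, a0 = rng.randrange(TERMINAL), rng.choice(ACTIONS)
        g = sum(r for _, _, r in episode_from(s0, a0, policy))   # undiscounted episodic return
        totals[(s0, a0)] += g        # only the pair that started the trajectory is credited
        counts[(s0, a0)] += 1
    return {sa: totals[sa] / counts[sa] for sa in counts}


def improve(Q):
    """Policy improvement: act greedily with respect to the estimate Q."""
    return {s: max(ACTIONS, key=lambda a: Q.get((s, a), float("-inf")))
            for s in range(TERMINAL)}
```

Evaluating the always-right policy gives, for instance, −3 for moving right from state 0 and −4 for moving left from it, and the greedy improvement keeps moving right everywhere. Because the corridor is deterministic every sampled return for a pair is identical, so the averages are exact; with stochastic transitions they would only approach the true values.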

Problems with this procedure include:

- The procedure may spend too much time evaluating a suboptimal policy.

- It uses samples inefficiently in that a long trajectory improves the estimate only of the single state-action pair that started the trajectory.

- When the returns along the trajectories have high variance, convergence is slow.

- It works in episodic problems only.

- It works in small, finite Markov decision processes only.

Temporal difference methods

The first problem is corrected by allowing the procedure to change the policy (at some or all states) before the values settle. This too may be problematic as it might prevent convergence. Most current algorithms do this, giving rise to the class of generalized policy iteration algorithms. Many actor-critic methods belong to this category.

The second issue can be corrected by allowing trajectories to contribute to any state-action pair in them. This may also help to some extent with the third problem, although a better solution when returns have high variance is Sutton's temporal difference (TD) methods that are based on the recursive Bellman equation.[15][16] The computation in TD methods can be incremental (when after each transition the memory is changed and the transition is thrown away), or batch (when the transitions are batched and the estimates are computed once based on the batch). Batch methods, such as the least-squares temporal difference method,[17] may use the information in the samples better, while incremental methods are the only choice when batch methods are infeasible due to their high computational or memory complexity. Some methods try to combine the two approaches. Methods based on temporal differences also overcome the fourth issue.
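A minimal incremental TD(0) sketch. Each transition moves the value estimate of the state it left toward the observed reward plus the discounted estimate of the state it reached, and the transition is then discarded. The five-state random walk (start in the middle, reward 1 for exiting on the right) and the step size are assumptions, chosen because the walk's true values, 1/6 through 5/6, are known.

```python
import random


def td0_random_walk(n_episodes=10_000, alpha=0.01, gamma=1.0, seed=0):
    """TD(0) prediction of V under a uniformly random policy on a 5-state random walk.

    Non-terminal states are 1..5; 0 and 6 are terminal. Stepping into 6 pays 1, else 0.
    """
    rng = random.Random(seed)
    V = [0.0] + [0.5] * 5 + [0.0]        # terminal values stay fixed at 0
    for _ in range(n_episodes):
        s = 3                            # every episode starts in the middle state
        while s not in (0, 6):
            s_next = s + rng.choice((-1, 1))
            r = 1.0 if s_next == 6 else 0.0
            # Incremental update from one transition, which is then thrown away.
            V[s] += alpha * (r + gamma * V[s_next] - V[s])
            s = s_next
    return V[1:6]
```

A batch method would instead keep the transitions and recompute the estimates from all of them at once.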

Another problem specific to TD comes from their reliance on the recursive Bellman equation. Most TD methods have a so-called $\lambda$ parameter $(0 \le \lambda \le 1)$ that can continuously interpolate between Monte Carlo methods that do not rely on the Bellman equations and the basic TD methods that rely entirely on the Bellman equations. This can be effective in palliating this issue.
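One standard way to write this interpolation is the λ-return, a weighted average of n-step returns that each bootstrap from the current value estimate $V$ after $n$ steps. For an episode that terminates at time $T$, with $G_t$ the full return from time $t$ onward:

```latex
G_t^{(n)} = R_{t+1} + \gamma R_{t+2} + \cdots + \gamma^{n-1} R_{t+n} + \gamma^{n} V(S_{t+n}),
\qquad
G_t^{\lambda} = (1-\lambda) \sum_{n=1}^{T-t-1} \lambda^{n-1} G_t^{(n)} + \lambda^{T-t-1} G_t .
```

Setting $\lambda = 0$ leaves only the one-step target $R_{t+1} + \gamma V(S_{t+1})$ of basic TD, while $\lambda = 1$ leaves only the full Monte Carlo return $G_t$.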

Function approximation methods

In order to address the fifth issue, function approximation methods are used. Linear function approximation starts with a mapping $\phi$ that assigns a finite-dimensional vector to each state-action pair. Then, the action values of a state-action pair $(s,a)$ are obtained by linearly combining the components of $\phi(s,a)$ with some weights $\theta$:

$Q(s,a) = \sum_{i=1}^{d} \theta_i \phi_i(s,a).$
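A minimal sketch of linear action-value approximation. Only the three weights are stored, however many state-action pairs there are, and each update adjusts the weights rather than a table entry. The feature map `[1, s, a]`, the function names, and the regression targets are hypothetical; in a reinforcement learning method the target would be a sampled return or a bootstrapped TD target.

```python
def features(s, a):
    """Hypothetical feature map phi(s, a) = [1, s, a] (bias, state, action)."""
    return [1.0, float(s), float(a)]


def q_value(theta, s, a):
    """Linear action value: Q(s, a) = sum_i theta_i * phi_i(s, a)."""
    return sum(t * f for t, f in zip(theta, features(s, a)))


def sgd_step(theta, s, a, target, alpha):
    """One gradient step on (target - Q(s, a))^2 / 2; for linear Q, dQ/dtheta = phi(s, a)."""
    error = target - q_value(theta, s, a)
    return [t + alpha * error * f for t, f in zip(theta, features(s, a))]


# Fit the weights to hypothetical targets 1 + 2*s - a on a small grid of state-action pairs.
theta = [0.0, 0.0, 0.0]
for _ in range(2000):
    for s in range(3):
        for a in range(2):
            theta = sgd_step(theta, s, a, 1 + 2 * s - a, alpha=0.05)
```

Because these targets are exactly linear in the features, the weights converge to approximately `[1.0, 2.0, -1.0]`.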

The algorithms then adjust the weights, instead of adjusting the values associated with the individual state-action pairs. Methods based on ideas from nonparametric statistics (which can be seen to construct their own features) have been explored.

Value iteration can also be used as a starting point, giving rise to the Q-learning algorithm and its many variants,[18] including deep Q-learning methods, in which a neural network is used to represent Q, with various applications in stochastic search problems.[19]
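A minimal tabular Q-learning sketch on a hypothetical five-position corridor (reward 1 for reaching the right end). The update target bootstraps from the largest estimated action value of the next state. That is what makes the method off-policy: the behaviour policy here is epsilon-greedy, but the values learned are those of the greedy policy. Step size, discount, and exploration rate are illustrative choices.

```python
import random

# Hypothetical corridor: positions 0..4, start at 0, position 4 is terminal and pays reward 1.
N, ACTIONS = 5, (-1, 1)


def step(s, a):
    s_next = min(max(s + a, 0), N - 1)
    done = s_next == N - 1
    return s_next, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning with an epsilon-greedy behaviour policy."""
    rng = random.Random(seed)
    Q = {(s, a): 0.0 for s in range(N) for a in ACTIONS}
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)                                    # explore
            else:
                best = max(Q[(s, b)] for b in ACTIONS)
                a = rng.choice([b for b in ACTIONS if Q[(s, b)] == best])  # exploit
            s_next, r, done = step(s, a)
            # Off-policy target: best next action value, no bootstrapping at termination.
            target = r if done else r + gamma * max(Q[(s_next, b)] for b in ACTIONS)
            Q[(s, a)] += alpha * (target - Q[(s, a)])
            s = s_next
    return Q


Q = q_learning()
```

Deep Q-learning replaces the table `Q` with a neural network trained toward the same kind of target, which in practice needs additional stabilising machinery not shown here.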

The problem with using action-values is that they may need highly precise estimates of the competing action values that can be hard to obtain when the returns are noisy, though this problem is mitigated to some extent by temporal difference methods. Using the so-called compatible function approximation method compromises generality and efficiency.

Direct policy search

An alternative method is to search directly in (some subset of) the policy space, in which case the problem becomes a case of stochastic optimization. The two approaches available are gradient-based and gradient-free methods.

Gradient-based methods (policy gradient methods) start with a mapping from a finite-dimensional (parameter) space to the space of policies: given the parameter vector $\theta$, let $\pi_\theta$ denote the policy associated to $\theta$. Defining the performance function by $\rho(\theta) = \rho^{\pi_\theta}$, under mild conditions this function will be differentiable as a function of the parameter vector $\theta$. If the gradient of $\rho$ were known, one could use gradient ascent. Since an analytic expression for the gradient is not available, only a noisy estimate is available. Such an estimate can be constructed in many ways, giving rise to algorithms such as Williams' REINFORCE method[20] (which is known as the likelihood ratio method in the simulation-based optimization literature).[21]
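On one-step episodes (a multi-armed bandit) the REINFORCE estimate reduces to the sampled reward times the gradient of the log-probability of the chosen action. For a softmax policy that gradient is the one-hot vector of the chosen action minus the action probabilities. The sketch below also subtracts a running-average reward baseline, a variance-reduction device that depends only on past rewards and so leaves the estimate unbiased. The arm means, noise level, and step sizes are hypothetical.

```python
import math
import random


def softmax(prefs):
    """Action probabilities pi_theta(a) proportional to exp(theta_a)."""
    m = max(prefs)                       # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_bandit(arm_means, steps=3000, lr=0.1, baseline_lr=0.01, noise=0.1, seed=0):
    """Likelihood-ratio (REINFORCE-style) gradient ascent on expected reward."""
    rng = random.Random(seed)
    theta = [0.0] * len(arm_means)
    baseline = 0.0
    for _ in range(steps):
        probs = softmax(theta)
        a = rng.choices(range(len(theta)), weights=probs)[0]   # sample A ~ pi_theta
        reward = arm_means[a] + rng.gauss(0.0, noise)          # hypothetical noisy reward
        advantage = reward - baseline
        for i in range(len(theta)):
            grad_log_pi = (1.0 if i == a else 0.0) - probs[i]  # d log pi_theta(a) / d theta_i
            theta[i] += lr * advantage * grad_log_pi
        baseline += baseline_lr * (reward - baseline)           # running average of rewards
    return softmax(theta)


probs = reinforce_bandit([0.0, 0.5, 1.0])
```

Only sampled rewards are used; the gradient of the expected reward is never computed analytically.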

A large class of methods avoids relying on gradient information. These include simulated annealing, cross-entropy search or methods of evolutionary computation. Many gradient-free methods can achieve (in theory and in the limit) a global optimum.
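The cross-entropy method is one such gradient-free search: sample parameter vectors from a Gaussian, keep the best-scoring fraction, and refit the Gaussian to them. In the minimal sketch below the objective `J` stands in for an estimated return of the policy with those parameters. The quadratic objective used in the test, the population size, and the elite fraction are illustrative assumptions.

```python
import random
import statistics


def cross_entropy_search(J, dim, iters=50, pop=100, elite_frac=0.2, seed=0):
    """Maximize J by repeatedly refitting a diagonal Gaussian to the top-scoring samples."""
    rng = random.Random(seed)
    mean, std = [0.0] * dim, [5.0] * dim
    n_elite = max(2, int(pop * elite_frac))
    for _ in range(iters):
        samples = [[rng.gauss(m, s) for m, s in zip(mean, std)] for _ in range(pop)]
        elite = sorted(samples, key=J, reverse=True)[:n_elite]  # only scores used, no gradients
        mean = [statistics.fmean(col) for col in zip(*elite)]
        std = [statistics.pstdev(col) + 1e-3 for col in zip(*elite)]  # floor keeps some spread
    return mean
```

The small floor on the standard deviation is a design choice that prevents the sampling distribution from collapsing completely.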

Policy search methods may converge slowly given noisy data. For example, this happens in episodic problems when the trajectories are long and the variance of the returns is large. Value-function based methods that rely on temporal differences might help in this case. In recent years, actor–critic methods have been proposed and performed well on various problems.[22]

Policy search methods have been used in the robotics context.[23] Many policy search methods may get stuck in local optima (as they are based on local search).

Model-based algorithms

Finally, all of the above methods can be combined with algorithms that first learn a model of the Markov decision process, the probability of each next state given an action taken from an existing state. For instance, the Dyna algorithm[24] learns a model from experience, and uses that to provide more modelled transitions for a value function, in addition to the real transitions. Such methods can sometimes be extended to the use of non-parametric models, such as when the transitions are simply stored and 'replayed'[25] to the learning algorithm.
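A minimal Dyna-Q-style sketch. After each real transition the agent performs an ordinary Q-learning update, records the transition in a learned model, and then performs extra updates on transitions replayed from that model. Storing observed outcomes in a lookup table works only because the hypothetical corridor environment here is deterministic. The number of planning steps and the other constants are illustrative.

```python
import random

# Hypothetical deterministic corridor: positions 0..5, start at 0, position 5 is terminal (reward 1).
N, ACTIONS = 6, (-1, 1)


def env_step(s, a):
    s_next = min(max(s + a, 0), N - 1)
    done = s_next == N - 1
    return s_next, (1.0 if done else 0.0), done


def dyna_q(episodes=50, planning_steps=20, alpha=0.5, gamma=0.95, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    Q = {(s, a): 0.0 for s in range(N) for a in ACTIONS}
    model = {}  # (s, a) -> (reward, next_state, done); a table suffices for a deterministic env

    def greedy(s):
        best = max(Q[(s, b)] for b in ACTIONS)
        return rng.choice([b for b in ACTIONS if Q[(s, b)] == best])

    def q_update(s, a, r, s_next, done):
        target = r if done else r + gamma * max(Q[(s_next, b)] for b in ACTIONS)
        Q[(s, a)] += alpha * (target - Q[(s, a)])

    for _ in range(episodes):
        s, done = 0, False
        while not done:
            a = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(s)
            s_next, r, done = env_step(s, a)
            q_update(s, a, r, s_next, done)        # learn from the real transition
            model[(s, a)] = (r, s_next, done)      # model learning: remember the outcome
            for _ in range(planning_steps):        # planning: replay modelled transitions
                ps, pa = rng.choice(list(model))
                q_update(ps, pa, *model[(ps, pa)])
            s = s_next
    return Q


Q = dyna_q()
```

Setting `planning_steps=0` reduces this sketch to plain Q-learning; the extra replayed updates are what let value information propagate with fewer real interactions.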

Model-based methods can be more computationally intensive than model-free approaches, and their utility can be limited by the extent to which the Markov decision process can be learnt.[26]

There are other ways to use models than to update a value function.[27] For instance, in model predictive control the model is used to update the behavior directly.

Theory

Both the asymptotic and finite-sample behaviors of most algorithms are well understood. Algorithms with provably good online performance (addressing the exploration issue) are known.

Efficient exploration of Markov decision processes is given in Burnetas and Katehakis (1997).[12] Finite-time performance bounds have also appeared for many algorithms, but these bounds are expected to be rather loose and thus more work is needed to better understand the relative advantages and limitations.

For incremental algorithms, asymptotic convergence issues have been settled. Temporal-difference-based algorithms converge under a wider set of conditions than was previously possible (for example, when used with arbitrary, smooth function approximation).

Research


Research topics include:

- actor-critic architecture

- actor-critic-scenery architecture [3]

- adaptive methods that work with fewer (or no) parameters under a large number of conditions

- bug detection in software projects[28]

- continuous learning

- combinations with logic-based frameworks[29]

- exploration in large Markov decision processes

- human feedback[30]

- interaction between implicit and explicit learning in skill acquisition

- intrinsic motivation which differentiates information-seeking, curiosity-type behaviours from task-dependent goal-directed behaviours

- large-scale empirical evaluations

- large (or continuous) action spaces

- modular and hierarchical reinforcement learning[31]

- multiagent/distributed reinforcement learning is a topic of interest. Applications are expanding.[32]

- occupant-centric control

- optimization of computing resources[33][34][35]

- partial information (e.g., using predictive state representation)

- reward function based on maximising novel information[36][37][38]

- sample-based planning (e.g., based on Monte Carlo tree search).

- securities trading[39]

- transfer learning[40]

- TD learning modeling dopamine-based learning in the brain. Dopaminergic projections from the substantia nigra to the basal ganglia function as the prediction error.

- value-function and policy search methods

Comparison of key algorithms

| Algorithm | Description | Policy | Action space | State space | Operator |
|---|---|---|---|---|---|
| Monte Carlo | Every visit to Monte Carlo | Either | Discrete | Discrete | Sample-means of state-values or action-values |
| TD learning | State–action–reward–state | Off-policy | Discrete | Discrete | State-value |
| Q-learning | State–action–reward–state | Off-policy | Discrete | Discrete | Action-value |
| SARSA | State–action–reward–state–action | On-policy | Discrete | Discrete | Action-value |
| DQN | Deep Q Network | Off-policy | Discrete | Continuous | Action-value |
| DDPG | Deep Deterministic Policy Gradient | Off-policy | Continuous | Continuous | Action-value |
| A3C | Asynchronous Advantage Actor-Critic Algorithm | On-policy | Discrete | Continuous | Advantage (=action-value - state-value) |
| TRPO | Trust Region Policy Optimization | On-policy | Continuous or Discrete | Continuous | Advantage |
| PPO | Proximal Policy Optimization | On-policy | Continuous or Discrete | Continuous | Advantage |
| TD3 | Twin Delayed Deep Deterministic Policy Gradient | Off-policy | Continuous | Continuous | Action-value |
| SAC | Soft Actor-Critic | Off-policy | Continuous | Continuous | Advantage |
| DSAC[41][42][43] | Distributional Soft Actor Critic | Off-policy | Continuous | Continuous | Action-value distribution |

Associative reinforcement learning

Associative reinforcement learning tasks combine facets of stochastic learning automata tasks and supervised learning pattern classification tasks. In associative reinforcement learning tasks, the learning system interacts in a closed loop with its environment.[44]

Deep reinforcement learning

This approach extends reinforcement learning by using a deep neural network and without explicitly designing the state space.[45] The work on learning Atari games by Google DeepMind increased attention to deep reinforcement learning or end-to-end reinforcement learning.[46]

Adversarial deep reinforcement learning

Adversarial deep reinforcement learning is an active area of research in reinforcement learning focusing on vulnerabilities of learned policies. In this research area some studies initially showed that reinforcement learning policies are susceptible to imperceptible adversarial manipulations.[47][48][49] While some methods have been proposed to overcome these susceptibilities, in the most recent studies it has been shown that these proposed solutions are far from providing an accurate representation of current vulnerabilities of deep reinforcement learning policies.[50]

Fuzzy reinforcement learning

By introducing fuzzy inference in reinforcement learning,[51] approximating the state-action value function with fuzzy rules in continuous space becomes possible. The IF-THEN form of fuzzy rules makes this approach suitable for expressing the results in a form close to natural language. Extending fuzzy reinforcement learning (FRL) with Fuzzy Rule Interpolation[52] allows the use of reduced size sparse fuzzy rule-bases to emphasize cardinal rules (most important state-action values).

Inverse reinforcement learning

In inverse reinforcement learning (IRL), no reward function is given. Instead, the reward function is inferred given an observed behavior from an expert. The idea is to mimic observed behavior, which is often optimal or close to optimal.[53] One popular IRL paradigm is named maximum entropy inverse reinforcement learning (MaxEnt IRL). [54] MaxEnt IRL estimates the parameters of a linear model of the reward function by maximizing the entropy of the probability distribution of observed trajectories subject to constraints related to matching expected feature counts. Recently it has been shown that MaxEnt IRL is a particular case of a more general framework named random utility inverse reinforcement learning (RU-IRL). [55] RU-IRL is based on random utility theory and Markov decision processes. While prior IRL approaches assume that the apparent random behavior of an observed agent is due to it following a random policy, RU-IRL assumes that the observed agent follows a deterministic policy but randomness in observed behavior is due to the fact that an observer only has partial access to the features the observed agent uses in decision making. The utility function is modeled as a random variable to account for the ignorance of the observer regarding the features the observed agent actually considers in its utility function.

Safe reinforcement learning

Safe reinforcement learning (SRL) can be defined as the process of learning policies that maximize the expectation of the return in problems in which it is important to ensure reasonable system performance and/or respect safety constraints during the learning and/or deployment processes.[56]

See also

- Temporal difference learning

- Q-learning

- State–action–reward–state–action (SARSA)

- Reinforcement learning from human feedback

- Optimal control

- Error-driven learning

- Multi-agent reinforcement learning

- Apprenticeship learning

- Model-free (reinforcement learning)

- Model-based reinforcement learning

- Direct reinforcement learning[3] [57]

- Indirect reinforcement learning[3] [57]

- active learning (machine learning)

References

- ^ Kaelbling, Leslie P.; Littman, Michael L.; Moore, Andrew W. (1996). "Reinforcement Learning: A Survey". Journal of Artificial Intelligence Research. 4: 237–285. arXiv:cs/9605103. doi:10.1613/jair.301. S2CID 1708582. Archived from the original on 2001-11-20.

- ^ van Otterlo, M.; Wiering, M. (2012). "Reinforcement Learning and Markov Decision Processes". Reinforcement Learning. Adaptation, Learning, and Optimization. Vol. 12. pp. 3–42. doi:10.1007/978-3-642-27645-3_1. ISBN 978-3-642-27644-6.

- ^ a b c d Li, Shengbo (2023). Reinforcement Learning for Sequential Decision and Optimal Control (First ed.). Springer Verlag, Singapore. pp. 1–460. doi:10.1007/978-981-19-7784-8. ISBN 978-9-811-97783-1. S2CID 257928563.

- ^ Russell, Stuart J.; Norvig, Peter (2010). Artificial intelligence: a modern approach (Third ed.). Upper Saddle River, New Jersey. pp. 830, 831. ISBN 978-0-13-604259-4.

- ^ Lee, Daeyeol; Seo, Hyojung; Jung, Min Whan (21 July 2012). "Neural Basis of Reinforcement Learning and Decision Making". Annual Review of Neuroscience. 35 (1): 287–308. doi:10.1146/annurev-neuro-062111-150512. PMC 3490621. PMID 22462543.

- ^ Salazar Duque, Edgar Mauricio; Giraldo, Juan S.; Vergara, Pedro P.; Nguyen, Phuong; Van Der Molen, Anne; Slootweg, Han (2022). "Community energy storage operation via reinforcement learning with eligibility traces". Electric Power Systems Research. 212. doi:10.1016/j.epsr.2022.108515. S2CID 250635151.

- ^ Xie, Zhaoming; Hung Yu Ling; Nam Hee Kim; Michiel van de Panne (2020). "ALLSTEPS: Curriculum-driven Learning of Stepping Stone Skills". arXiv:2005.04323 [cs.GR].

- ^ Vergara, Pedro P.; Salazar, Mauricio; Giraldo, Juan S.; Palensky, Peter (2022). "Optimal dispatch of PV inverters in unbalanced distribution systems using Reinforcement Learning". International Journal of Electrical Power & Energy Systems. 136. doi:10.1016/j.ijepes.2021.107628. S2CID 244099841.

- ^ Sutton & Barto 2018, Chapter 11.

- ^ Ren, Yangang; Jiang, Jianhua; Zhan, Guojian; Li, Shengbo Eben; Chen, Chen; Li, Keqiang; Duan, Jingliang (2022). "Self-Learned Intelligence for Integrated Decision and Control of Automated Vehicles at Signalized Intersections". IEEE Transactions on Intelligent Transportation Systems. 23 (12): 24145–24156. arXiv:2110.12359. doi:10.1109/TITS.2022.3196167.

- ^ Gosavi, Abhijit (2003). Simulation-based Optimization: Parametric Optimization Techniques and Reinforcement. Operations Research/Computer Science Interfaces Series. Springer. ISBN 978-1-4020-7454-7.

- ^ a b Burnetas, Apostolos N.; Katehakis, Michael N. (1997), "Optimal adaptive policies for Markov Decision Processes", Mathematics of Operations Research, 22 (1): 222–255, doi:10.1287/moor.22.1.222, JSTOR 3690147

- ^ Tokic, Michel; Palm, Günther (2011), "Value-Difference Based Exploration: Adaptive Control Between Epsilon-Greedy and Softmax" (PDF), KI 2011: Advances in Artificial Intelligence, Lecture Notes in Computer Science, vol. 7006, Springer, pp. 335–346, ISBN 978-3-642-24455-1

- ^ a b "Reinforcement learning: An introduction" (PDF). Archived from the original (PDF) on 2017-07-12. Retrieved 2017-07-23.

- ^ Sutton, Richard S. (1984). Temporal Credit Assignment in Reinforcement Learning (PhD thesis). University of Massachusetts, Amherst, MA. Archived from the original on 2017-03-30. Retrieved 2017-03-29.

- ^ Sutton & Barto 2018, §6. Temporal-Difference Learning.

- ^ Bradtke, Steven J.; Barto, Andrew G. (1996). "Linear least-squares algorithms for temporal difference learning". Machine Learning. 22: 33–57. CiteSeerX 10.1.1.143.857. doi:10.1023/A:1018056104778. S2CID 20327856.

- ^ Watkins, Christopher J.C.H. (1989). Learning from Delayed Rewards (PDF) (PhD thesis). King’s College, Cambridge, UK.

- ^ Matzliach, Barouch; Ben-Gal, Irad; Kagan, Evgeny (2022). "Detection of Static and Mobile Targets by an Autonomous Agent with Deep Q-Learning Abilities". Entropy. 24 (8): 1168. Bibcode:2022Entrp..24.1168M. doi:10.3390/e24081168. PMC 9407070. PMID 36010832.

- ^ Williams, Ronald J. (1987). "A class of gradient-estimating algorithms for reinforcement learning in neural networks". Proceedings of the IEEE First International Conference on Neural Networks. CiteSeerX 10.1.1.129.8871.

- ^ Peters, Jan; Vijayakumar, Sethu; Schaal, Stefan (2003). Reinforcement Learning for Humanoid Robotics (PDF). IEEE-RAS International Conference on Humanoid Robots. Archived from the original (PDF) on 2013-05-12.

- ^ Juliani, Arthur (2016-12-17). "Simple Reinforcement Learning with Tensorflow Part 8: Asynchronous Actor-Critic Agents (A3C)". Medium. Retrieved 2018-02-22.

- ^ Deisenroth, Marc Peter; Neumann, Gerhard; Peters, Jan (2013). A Survey on Policy Search for Robotics (PDF). Foundations and Trends in Robotics. Vol. 2. NOW Publishers. pp. 1–142. doi:10.1561/2300000021. hdl:10044/1/12051.

- ^ Sutton, Richard (1990). "Integrated Architectures for Learning, Planning and Reacting based on Dynamic Programming". Machine Learning: Proceedings of the Seventh International Workshop.

- ^ Lin, Long-Ji (1992). "Self-improving reactive agents based on reinforcement learning, planning and teaching" (PDF). Machine Learning. 8. doi:10.1007/BF00992699.

- ^ Zou, Lan (2023-01-01), Zou, Lan (ed.), "Chapter 7 - Meta-reinforcement learning", Meta-Learning, Academic Press, pp. 267–297, doi:10.1016/b978-0-323-89931-4.00011-0, ISBN 978-0-323-89931-4, retrieved 2023-11-08

- ^ van Hasselt, Hado; Hessel, Matteo; Aslanides, John (2019). "When to use parametric models in reinforcement learning?" (PDF). Advances in Neural Information Processing Systems 32.

- ^ "On the Use of Reinforcement Learning for Testing Game Mechanics : ACM - Computers in Entertainment". cie.acm.org. Retrieved 2018-11-27.

- ^ Riveret, Regis; Gao, Yang (2019). "A probabilistic argumentation framework for reinforcement learning agents". Autonomous Agents and Multi-Agent Systems. 33 (1–2): 216–274. doi:10.1007/s10458-019-09404-2. S2CID 71147890.

- ^ Yamagata, Taku; McConville, Ryan; Santos-Rodriguez, Raul (2021-11-16). "Reinforcement Learning with Feedback from Multiple Humans with Diverse Skills". arXiv:2111.08596 [cs.LG].

- ^ Kulkarni, Tejas D.; Narasimhan, Karthik R.; Saeedi, Ardavan; Tenenbaum, Joshua B. (2016). "Hierarchical Deep Reinforcement Learning: Integrating Temporal Abstraction and Intrinsic Motivation". Proceedings of the 30th International Conference on Neural Information Processing Systems. NIPS'16. USA: Curran Associates Inc.: 3682–3690. arXiv:1604.06057. Bibcode:2016arXiv160406057K. ISBN 978-1-5108-3881-9.

- ^ "Reinforcement Learning / Successes of Reinforcement Learning". umichrl.pbworks.com. Retrieved 2017-08-06.

- ^ Dey, Somdip; Singh, Amit Kumar; Wang, Xiaohang; McDonald-Maier, Klaus (March 2020). "User Interaction Aware Reinforcement Learning for Power and Thermal Efficiency of CPU-GPU Mobile MPSoCs". 2020 Design, Automation & Test in Europe Conference & Exhibition (DATE) (PDF). pp. 1728–1733. doi:10.23919/DATE48585.2020.9116294. ISBN 978-3-9819263-4-7. S2CID 219858480.

- ^ Quested, Tony. "Smartphones get smarter with Essex innovation". Business Weekly. Retrieved 2021-06-17.

- ^ Williams, Rhiannon (2020-07-21). "Future smartphones 'will prolong their own battery life by monitoring owners' behaviour'". i. Retrieved 2021-06-17.

- ^ Kaplan, F.; Oudeyer, P. (2004). "Maximizing Learning Progress: An Internal Reward System for Development". In Iida, F.; Pfeifer, R.; Steels, L.; Kuniyoshi, Y. (eds.). Embodied Artificial Intelligence. Lecture Notes in Computer Science. Vol. 3139. Berlin; Heidelberg: Springer. pp. 259–270. doi:10.1007/978-3-540-27833-7_19. ISBN 978-3-540-22484-6. S2CID 9781221.

- ^ Klyubin, A.; Polani, D.; Nehaniv, C. (2008). "Keep your options open: an information-based driving principle for sensorimotor systems". PLOS ONE. 3 (12): e4018. Bibcode:2008PLoSO...3.4018K. doi:10.1371/journal.pone.0004018. PMC 2607028. PMID 19107219.

- ^ Barto, A. G. (2013). "Intrinsic motivation and reinforcement learning". Intrinsically Motivated Learning in Natural and Artificial Systems (PDF). Berlin; Heidelberg: Springer. pp. 17–47.

- ^ Dabérius, Kevin; Granat, Elvin; Karlsson, Patrik (2020). "Deep Execution - Value and Policy Based Reinforcement Learning for Trading and Beating Market Benchmarks". The Journal of Machine Learning in Finance. 1. SSRN 3374766.

- ^ George Karimpanal, Thommen; Bouffanais, Roland (2019). "Self-organizing maps for storage and transfer of knowledge in reinforcement learning". Adaptive Behavior. 27 (2): 111–126. arXiv:1811.08318. doi:10.1177/1059712318818568. ISSN 1059-7123. S2CID 53774629.

- ^ J Duan; Y Guan; S Li (2021). "Distributional Soft Actor-Critic: Off-policy reinforcement learning for addressing value estimation errors". IEEE Transactions on Neural Networks and Learning Systems. 33 (11): 6584–6598. arXiv:2001.02811. doi:10.1109/TNNLS.2021.3082568. PMID 34101599. S2CID 211259373.

- ^ Y Ren; J Duan; S Li (2020). "Improving Generalization of Reinforcement Learning with Minimax Distributional Soft Actor-Critic". 2020 IEEE 23rd International Conference on Intelligent Transportation Systems (ITSC). pp. 1–6. arXiv:2002.05502. doi:10.1109/ITSC45102.2020.9294300. ISBN 978-1-7281-4149-7. S2CID 211096594.

- ^ Duan, J; Wang, W; Xiao, L (2023-10-26). "DSAC-T: Distributional Soft Actor-Critic with Three Refinements". arXiv:2310.05858 [cs.LG].

- ^ Soucek, Branko (6 May 1992). Dynamic, Genetic and Chaotic Programming: The Sixth-Generation Computer Technology Series. John Wiley & Sons, Inc. p. 38. ISBN 0-471-55717-X.

- ^ Francois-Lavet, Vincent; et al. (2018). "An Introduction to Deep Reinforcement Learning". Foundations and Trends in Machine Learning. 11 (3–4): 219–354. arXiv:1811.12560. Bibcode:2018arXiv181112560F. doi:10.1561/2200000071. S2CID 54434537.

- ^ Mnih, Volodymyr; et al. (2015). "Human-level control through deep reinforcement learning". Nature. 518 (7540): 529–533. Bibcode:2015Natur.518..529M. doi:10.1038/nature14236. PMID 25719670. S2CID 205242740.

- ^ Goodfellow, Ian; Shlens, Jonathan; Szegedy, Christian (2015). "Explaining and Harnessing Adversarial Examples". International Conference on Learning Representations. arXiv:1412.6572.

- ^ Behzadan, Vahid; Munir, Arslan (2017). "Vulnerability of Deep Reinforcement Learning to Policy Induction Attacks". Machine Learning and Data Mining in Pattern Recognition. Lecture Notes in Computer Science. Vol. 10358. pp. 262–275. arXiv:1701.04143. doi:10.1007/978-3-319-62416-7_19. ISBN 978-3-319-62415-0. S2CID 1562290.

- ^ Huang, Sandy; Papernot, Nicolas; Goodfellow, Ian; Duan, Yan; Abbeel, Pieter (2017-02-07). Adversarial Attacks on Neural Network Policies. OCLC 1106256905.

- ^ Korkmaz, Ezgi (2022). "Deep Reinforcement Learning Policies Learn Shared Adversarial Features Across MDPs". Thirty-Sixth AAAI Conference on Artificial Intelligence (AAAI-22). 36 (7): 7229–7238. arXiv:2112.09025. doi:10.1609/aaai.v36i7.20684. S2CID 245219157.

- ^ Berenji, H.R. (1994). "Fuzzy Q-learning: A new approach for fuzzy dynamic programming". Proceedings of 1994 IEEE 3rd International Fuzzy Systems Conference. Orlando, FL, USA: IEEE. pp. 486–491. doi:10.1109/FUZZY.1994.343737. ISBN 0-7803-1896-X. S2CID 56694947.

- ^ Vincze, David (2017). "Fuzzy rule interpolation and reinforcement learning" (PDF). 2017 IEEE 15th International Symposium on Applied Machine Intelligence and Informatics (SAMI). IEEE. pp. 173–178. doi:10.1109/SAMI.2017.7880298. ISBN 978-1-5090-5655-2. S2CID 17590120.

- ^ Ng, A. Y.; Russell, S. J. (2000). "Algorithms for Inverse Reinforcement Learning" (PDF). Proceedings of the Seventeenth International Conference on Machine Learning (ICML '00). pp. 663–670. ISBN 1-55860-707-2.

- ^ Ziebart, Brian D.; Maas, Andrew; Bagnell, J. Andrew; Dey, Anind K. (2008-07-13). "Maximum entropy inverse reinforcement learning". Proceedings of the 23rd National Conference on Artificial Intelligence, Volume 3. AAAI'08. Chicago, Illinois: AAAI Press: 1433–1438. ISBN 978-1-57735-368-3. S2CID 336219.

- ^ Pitombeira-Neto, Anselmo R.; Santos, Helano P.; Coelho da Silva, Ticiana L.; de Macedo, José Antonio F. (March 2024). "Trajectory modeling via random utility inverse reinforcement learning". Information Sciences. 660: 120128. arXiv:2105.12092. doi:10.1016/j.ins.2024.120128. ISSN 0020-0255. S2CID 235187141.

- ^ García, Javier; Fernández, Fernando (1 January 2015). "A comprehensive survey on safe reinforcement learning" (PDF). The Journal of Machine Learning Research. 16 (1): 1437–1480.

- ^ a b Guan, Yang; Li, Shengbo; Duan, Jingliang (2021). "Direct and indirect reinforcement learning". International Journal of Intelligent Systems. 36 (8): 4439–4467. arXiv:1912.10600. doi:10.1002/int.22466.

Sources[edit]

- Sutton, Richard S.; Barto, Andrew G. (2018) [1998]. Reinforcement Learning: An Introduction (2nd ed.). MIT Press. ISBN 978-0-262-03924-6.

- Li, Shengbo Eben (2023). Reinforcement Learning for Sequential Decision and Optimal Control (1st ed.). Springer Verlag, Singapore. doi:10.1007/978-981-19-7784-8. ISBN 978-9-811-97783-1.

- Bertsekas, Dimitri P. (2023) [2019]. Reinforcement Learning and Optimal Control (1st ed.). Athena Scientific. ISBN 978-1-886-52939-7.

Further reading[edit]

- Annaswamy, Anuradha M. (3 May 2023). "Adaptive Control and Intersections with Reinforcement Learning". Annual Review of Control, Robotics, and Autonomous Systems. 6 (1): 65–93. doi:10.1146/annurev-control-062922-090153. ISSN 2573-5144. S2CID 255702873.

- Auer, Peter; Jaksch, Thomas; Ortner, Ronald (2010). "Near-optimal regret bounds for reinforcement learning". Journal of Machine Learning Research. 11: 1563–1600.

- Busoniu, Lucian; Babuska, Robert; De Schutter, Bart; Ernst, Damien (2010). Reinforcement Learning and Dynamic Programming using Function Approximators. Taylor & Francis CRC Press. ISBN 978-1-4398-2108-4.

- François-Lavet, Vincent; Henderson, Peter; Islam, Riashat; Bellemare, Marc G.; Pineau, Joelle (2018). "An Introduction to Deep Reinforcement Learning". Foundations and Trends in Machine Learning. 11 (3–4): 219–354. arXiv:1811.12560. Bibcode:2018arXiv181112560F. doi:10.1561/2200000071. S2CID 54434537.

- Powell, Warren (2011). Approximate Dynamic Programming: Solving the Curses of Dimensionality. Wiley-Interscience. Archived from the original on 2016-07-31. Retrieved 2010-09-08.

- Sutton, Richard S. (1988). "Learning to predict by the method of temporal differences". Machine Learning. 3: 9–44. doi:10.1007/BF00115009.

- Szita, Istvan; Szepesvari, Csaba (2010). "Model-based Reinforcement Learning with Nearly Tight Exploration Complexity Bounds" (PDF). ICML 2010. Omnipress. pp. 1031–1038. Archived from the original (PDF) on 2010-07-14.

External links[edit]

- Reinforcement Learning Repository

- Reinforcement Learning and Artificial Intelligence (RLAI, Rich Sutton's lab at the University of Alberta)

- Autonomous Learning Laboratory (ALL, Andrew Barto's lab at the University of Massachusetts Amherst)

- Real-world reinforcement learning experiments Archived 2018-10-08 at the Wayback Machine at Delft University of Technology

- Stanford University Andrew Ng Lecture on Reinforcement Learning

- Dissecting Reinforcement Learning: a series of blog posts on reinforcement learning with Python code

- A (Long) Peek into Reinforcement Learning
