Settling time

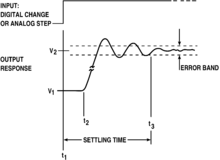

In control theory, the settling time of a dynamical system, such as an amplifier or other output device, is the time elapsed from the application of an ideal instantaneous step input to the time at which the output has entered and remained within a specified error band.

Settling time includes a propagation delay plus the time required for the output to slew to the vicinity of the final value, recover from the overload condition associated with slewing, and finally settle to within the specified error band.

Systems with energy storage cannot respond instantaneously and will exhibit transient responses when they are subjected to inputs or disturbances.[1]

Definition

Tay, Mareels and Moore (1998) defined settling time as "the time required for the response curve to reach and stay within a range of certain percentage (usually 5% or 2%) of the final value."[2]
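This definition translates directly into a procedure for measured or simulated data: find the last sample that lies outside the error band, and the response has settled from the following sample on. The Python sketch below is illustrative only. The helper name `settling_time` is hypothetical and not from any library. It assumes the step is applied at the first timestamp, that the band is ±`tolerance` × |final value| (one common convention; some texts size the band relative to the step amplitude instead), and that the final value is either supplied or taken as the last sample.

```python
import math


def settling_time(t, y, tolerance=0.02, final_value=None):
    """Settling time of a sampled step response (hypothetical helper).

    Returns the time, measured from t[0] (assumed to be the instant the
    step is applied), after which every sample stays within
    +/- tolerance * |final_value| of final_value. If final_value is None,
    the last sample is used as the final value.
    Returns math.inf if the record ends outside the band.
    """
    if len(t) == 0 or len(t) != len(y):
        raise ValueError("t and y must be non-empty and of equal length")
    if final_value is None:
        final_value = y[-1]
    band = tolerance * abs(final_value)

    # Scan backwards for the last sample that lies outside the error band.
    for i in range(len(y) - 1, -1, -1):
        if abs(y[i] - final_value) > band:
            if i + 1 == len(t):
                return math.inf  # never settled within the recorded data
            return t[i + 1] - t[0]  # settled from the next sample onward
    return 0.0  # every sample is already inside the band
```

For a first-order response $y(t) = 1 - e^{-t}$ sampled every 0.001 s, the 2% settling time is $-\ln(0.02) \approx 3.91$ time constants, and the sketch returns a value within one sample of that. The result can only be as precise as the sampling interval.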

Mathematical detail

For a second-order system, settling time depends on the damping ratio and the natural frequency.

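The settling time $T_s$ of a second-order, underdamped system responding to a step input can be approximated, if the damping ratio $\zeta \ll 1$, by

$$T_s = -\frac{\ln(\text{tolerance fraction})}{\zeta \omega_n}$$

where $\omega_n$ is the natural frequency. A general form, valid for $0 < \zeta < 1$, is

$$T_s = -\frac{\ln\left(\text{tolerance fraction} \times \sqrt{1 - \zeta^2}\right)}{\zeta \omega_n}$$

Thus, if the damping ratio $\zeta \ll 1$, the settling time to within 2% (a tolerance fraction of 0.02) is:

$$T_s = -\frac{\ln(0.02)}{\zeta \omega_n} \approx \frac{3.9}{\zeta \omega_n}$$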
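The underdamped second-order estimates can be checked numerically. The Python sketch below uses hypothetical function names and assumes the normalized system $\omega_n^2 / (s^2 + 2\zeta\omega_n s + \omega_n^2)$ with a unit step input. It evaluates the $\zeta \ll 1$ approximation $-\ln(\text{tol})/(\zeta\omega_n)$ and the general form $-\ln(\text{tol}\sqrt{1-\zeta^2})/(\zeta\omega_n)$, then compares them with a brute-force settling time taken from the exact step response. The general form is the time at which the exponential envelope $e^{-\zeta\omega_n t}/\sqrt{1-\zeta^2}$ of that response falls to the tolerance fraction. It is therefore a conservative bound, and the brute-force value should never exceed it.

```python
import math


def settling_time_approx(zeta, wn, tol=0.02):
    """Approximation -ln(tol) / (zeta * wn), intended for zeta << 1."""
    return -math.log(tol) / (zeta * wn)


def settling_time_general(zeta, wn, tol=0.02):
    """General form -ln(tol * sqrt(1 - zeta^2)) / (zeta * wn), 0 < zeta < 1."""
    if not 0 < zeta < 1:
        raise ValueError("underdamped formula requires 0 < zeta < 1")
    return -math.log(tol * math.sqrt(1 - zeta**2)) / (zeta * wn)


def step_response(t, zeta, wn):
    """Exact unit-step response of wn^2 / (s^2 + 2*zeta*wn*s + wn^2)."""
    wd = wn * math.sqrt(1 - zeta**2)  # damped natural frequency
    phi = math.acos(zeta)             # phase so that y(0) = 0
    envelope = math.exp(-zeta * wn * t) / math.sqrt(1 - zeta**2)
    return 1 - envelope * math.sin(wd * t + phi)


def settling_time_simulated(zeta, wn, tol=0.02, dt=1e-3):
    """Brute force: last sampled time the response is outside the band."""
    t_end = 2 * settling_time_general(zeta, wn, tol)  # generous horizon
    last_outside = 0.0
    for i in range(int(t_end / dt) + 1):
        t = i * dt
        if abs(step_response(t, zeta, wn) - 1) > tol:
            last_outside = t
    return last_outside  # accurate to within one step dt
```

For example, with $\zeta = 0.1$ the two formulas differ by well under 1%, consistent with the $\zeta \ll 1$ approximation. As $\zeta$ grows, the $\sqrt{1-\zeta^2}$ factor matters more, and the true settling time can sit noticeably below the envelope bound because the oscillating response may stay inside the band before the envelope does.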


References

- [1] Ogata, Katsuhiko. Modern Control Engineering (5th ed.).

- [2] Tay, Teng-Tiow; Mareels, Iven; Moore, John B. (1998). High performance control. Birkhäuser. p. 93. ISBN 978-0-8176-4004-0.

External links

- Second-Order System Example

- Op Amp Settling Time

- Graphical tutorial of Settling time and Risetime

- MATLAB function for computing settling time, rise time, and other step response characteristics

- Settling Time Calculator