Single-precision floating-point format

Single-precision floating-point format (sometimes called FP32 or float32) is a computer number format, usually occupying 32 bits in computer memory; it represents a wide dynamic range of numeric values by using a floating radix point.

A floating-point variable can represent a wider range of numbers than a fixed-point variable of the same bit width at the cost of precision. A signed 32-bit integer variable has a maximum value of $2^{31} - 1$ = 2,147,483,647, whereas an IEEE 754 32-bit base-2 floating-point variable has a maximum value of $(2 - 2^{-23}) \times 2^{127} \approx 3.4028235 \times 10^{38}$. All integers with 7 or fewer decimal digits, and any $2^n$ for a whole number $-149 \le n \le 127$, can be converted exactly into an IEEE 754 single-precision floating-point value.
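The range and exactness claims above can be checked with a small sketch. It assumes Python's standard `struct` module as a way to round a binary64 value to binary32; the helper name `to_f32` is made up for illustration and is not part of any standard.

```python
import struct

def to_f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

INT32_MAX = 2**31 - 1                  # largest signed 32-bit integer
F32_MAX = (2 - 2**-23) * 2.0**127      # largest finite binary32 value

# The largest binary32 value survives the round trip unchanged.
print(to_f32(F32_MAX) == F32_MAX)                      # True

# Every integer with at most 7 decimal digits is exact.
print(to_f32(9_999_999.0) == 9_999_999.0)              # True

# 2**31 - 1 needs 31 significant bits, so it is not exact in binary32.
print(to_f32(float(INT32_MAX)) == float(INT32_MAX))    # False

# Powers of two down to 2**-149 are exact.
print(to_f32(2.0**-149) == 2.0**-149)                  # True
```

The trade-off is visible in the third line: the floating-point format reaches far beyond the integer range but cannot hold every 32-bit integer.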

In the IEEE 754-2008 standard, the 32-bit base-2 format is officially referred to as binary32; it was called single in IEEE 754-1985. IEEE 754 specifies additional floating-point types, such as 64-bit base-2 double precision and, more recently, base-10 representations.

One of the first programming languages to provide single- and double-precision floating-point data types was Fortran. Before the widespread adoption of IEEE 754-1985, the representation and properties of floating-point data types depended on the computer manufacturer and computer model, and upon decisions made by programming-language designers. For example, GW-BASIC's single-precision data type was the 32-bit MBF floating-point format.

Single precision is termed REAL in Fortran;[1] SINGLE-FLOAT in Common Lisp;[2] float in C, C++, C# and Java;[3] Float in Haskell[4] and Swift;[5] and Single in Object Pascal (Delphi), Visual Basic, and MATLAB. However, float in Python, Ruby, PHP, and OCaml and single in versions of Octave before 3.2 refer to double-precision numbers. In most implementations of PostScript, and some embedded systems, the only supported precision is single.

IEEE 754 standard: binary32

The IEEE 754 standard specifies a binary32 as having:

- Sign bit: 1 bit

- Exponent width: 8 bits

- Significand precision: 24 bits (23 explicitly stored)

This gives 6 to 9 significant decimal digits of precision. If a decimal string with at most 6 significant digits is converted to the IEEE 754 single-precision format, giving a normal number, and then converted back to a decimal string with the same number of digits, the final result should match the original string. If an IEEE 754 single-precision number is converted to a decimal string with at least 9 significant digits, and then converted back to single-precision representation, the final result must match the original number.[6]
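Both round-trip guarantees can be tried out with a minimal sketch. It assumes Python's `struct` module for rounding to binary32; the helper names are hypothetical. Note that the decimal string is first parsed as binary64 and then rounded to binary32, which is an approximation of a direct decimal-to-binary32 conversion.

```python
import struct

def to_f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def decimal_round_trip(text: str, digits: int = 6) -> str:
    """decimal string -> binary32 -> decimal string with `digits` digits."""
    return "%.*g" % (digits, to_f32(float(text)))

def binary_round_trip(value: float, digits: int = 9) -> float:
    """binary32 -> decimal string with `digits` digits -> binary32."""
    return to_f32(float("%.*g" % (digits, value)))

# A 6-digit decimal string survives the trip through binary32.
print(decimal_round_trip("0.333333"))      # 0.333333

# A binary32 value survives the trip through a 9-digit decimal string.
x = to_f32(0.1)
print("%.9g" % x)                          # 0.100000001
print(binary_round_trip(x) == x)           # True
```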

The sign bit determines the sign of the number, which is the sign of the significand as well. The exponent field is an 8-bit unsigned integer from 0 to 255, in biased form: a value of 127 represents the actual exponent zero. Exponents range from −126 to +127 (thus 1 to 254 in the exponent field), because the biased exponent values 0 (all 0s) and 255 (all 1s) are reserved for special numbers (subnormal numbers, signed zeros, infinities, and NaNs).

The true significand of normal numbers includes 23 fraction bits to the right of the binary point and an implicit leading bit (to the left of the binary point) with value 1. Subnormal numbers and zeros (which are the floating-point numbers smaller in magnitude than the least positive normal number) are represented with the biased exponent value 0, giving the implicit leading bit the value 0. Thus only 23 fraction bits of the significand appear in the memory format, but the total precision is 24 bits (equivalent to $\log_{10}(2^{24}) \approx 7.225$ decimal digits).

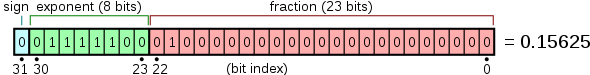

The bits are laid out as follows:

The real value assumed by a given 32-bit binary32 data with a given sign, biased exponent e (the 8-bit unsigned integer), and a 23-bit fraction is

- ,

which yields

In this example:

- ,

- ,

- ,

- ,

- .

thus:

- .

Note:

- ,

- ,

- ,

- .
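The value formula for normal numbers can be sketched directly in code. The function name `decode_normal` is hypothetical, and the input pattern `0x3E200000` is an assumed example chosen so that the sign is 0, the biased exponent is 124 and the significand is 1.25; zeros, subnormals, infinities and NaNs are deliberately rejected.

```python
def decode_normal(bits: int) -> float:
    """Evaluate (-1)^sign * 2^(E-127) * (1 + fraction) for a normal binary32 pattern."""
    sign = (bits >> 31) & 0x1          # b31
    e = (bits >> 23) & 0xFF            # b30..b23, the biased exponent
    fraction = bits & 0x7FFFFF         # b22..b0
    if not 1 <= e <= 254:
        raise ValueError("not a normal number (exponent field is 0 or 255)")
    significand = 1 + fraction / 2**23  # implicit leading 1 plus fraction bits
    return (-1) ** sign * 2.0 ** (e - 127) * significand

print(decode_normal(0x3E200000))   # 0.15625
print(decode_normal(0xC0000000))   # -2.0
```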

Exponent encoding

The single-precision binary floating-point exponent is encoded using an offset-binary representation, with the zero offset being 127, known as the exponent bias in the IEEE 754 standard.

- $E_{\min}$ = 01H − 7FH = −126

- $E_{\max}$ = FEH − 7FH = 127

- Exponent bias = 7FH = 127

Thus, in order to get the true exponent as defined by the offset-binary representation, the offset of 127 has to be subtracted from the stored exponent.

The stored exponents 00H and FFH are interpreted specially.

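| Exponent | fraction = 0 | fraction ≠ 0 | Equation |
|---|---|---|---|
| 00H = 00000000₂ | ±zero | subnormal number | $(-1)^{\text{sign}} \times 2^{-126} \times 0.\text{fraction}$ |
| 01H, ..., FEH = 00000001₂, ..., 11111110₂ | normal value | normal value | $(-1)^{\text{sign}} \times 2^{\text{exponent}-127} \times 1.\text{fraction}$ |
| FFH = 11111111₂ | ±infinity | NaN (quiet, signalling) | |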

The minimum positive normal value is $2^{-126} \approx 1.18 \times 10^{-38}$ and the minimum positive (subnormal) value is $2^{-149} \approx 1.4 \times 10^{-45}$.
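The special treatment of the stored exponents 00H and FFH can be sketched as a small classifier. The function names are hypothetical; `struct` is used only to turn a bit pattern into a value.

```python
import struct

def classify(bits: int) -> str:
    """Classify a binary32 bit pattern by its exponent and fraction fields."""
    e = (bits >> 23) & 0xFF        # stored (biased) exponent
    fraction = bits & 0x7FFFFF     # 23 fraction bits
    if e == 0x00:
        return "zero" if fraction == 0 else "subnormal"
    if e == 0xFF:
        return "infinity" if fraction == 0 else "NaN"
    return "normal"

def bits_to_f32(bits: int) -> float:
    """Reinterpret a 32-bit pattern as a binary32 value."""
    return struct.unpack("<f", struct.pack("<I", bits))[0]

print(classify(0x00000000))                  # zero
print(classify(0x00000001))                  # subnormal
print(classify(0x00800000))                  # normal
print(classify(0x7F800000))                  # infinity
print(classify(0x7FC00000))                  # NaN

# Smallest positive normal and subnormal values.
print(bits_to_f32(0x00800000) == 2.0**-126)  # True
print(bits_to_f32(0x00000001) == 2.0**-149)  # True
```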

Converting decimal to binary32

In general, refer to the IEEE 754 standard itself for the strict conversion (including the rounding behaviour) of a real number into its equivalent binary32 format.

Here we can show how to convert a base-10 real number into an IEEE 754 binary32 format using the following outline:

- Consider a real number with an integer and a fraction part such as 12.375

- Convert and normalize the integer part into binary

- Convert the fraction part using the following technique as shown here

- Add the two results and adjust them to produce a proper final conversion

Conversion of the fractional part: Consider 0.375, the fractional part of 12.375. To convert it into a binary fraction, multiply the fraction by 2, take the integer part as the next binary digit, and repeat with the remaining fraction until the fraction becomes zero or the precision limit is reached, which is 23 fraction digits for the IEEE 754 binary32 format.

- $0.375 \times 2 = 0.750 = 0 + 0.750 \Rightarrow b_{-1} = 0$; the integer part represents the binary fraction digit. Re-multiply 0.750 by 2 to proceed

- $0.750 \times 2 = 1.500 = 1 + 0.500 \Rightarrow b_{-2} = 1$

- $0.500 \times 2 = 1.000 = 1 + 0.000 \Rightarrow b_{-3} = 1$; fraction = 0.011, terminate

We see that $(0.375)_{10}$ can be exactly represented in binary as $(0.011)_2$. Not all decimal fractions can be represented in a finite digit binary fraction. For example, decimal 0.1 cannot be represented in binary exactly, only approximated. Therefore:

$$(12.375)_{10} = (12)_{10} + (0.375)_{10} = (1100)_2 + (0.011)_2 = (1100.011)_2$$

Since the IEEE 754 binary32 format requires real values to be represented in $(1.x_1x_2\ldots x_{23})_2 \times 2^{e}$ format (see Normalized number, Denormalized number), 1100.011 is shifted to the right by 3 digits to become $(1.100011)_2 \times 2^{3}$.

Finally we can see that:

$$(12.375)_{10} = (1.100011)_2 \times 2^{3}$$

From which we deduce:

- The exponent is 3 (and in the biased form it is therefore $(127 + 3)_{10} = (130)_{10} = (1000\,0010)_2$)

- The fraction is 100011 (looking to the right of the binary point)

From these we can form the resulting 32-bit IEEE 754 binary32 format representation of 12.375:

$$(12.375)_{10} = (0\ 10000010\ 10001100000000000000000)_2 = (41460000)_{16}$$

Note: consider converting 68.123 into IEEE 754 binary32 format: Using the above procedure you expect to get $(42883\text{EF}9)_{16}$ with the last 4 bits being 1001. However, due to the default rounding behaviour of IEEE 754 format, what you get is $(42883\text{EFA})_{16}$, whose last 4 bits are 1010.
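The repeated-doubling technique and the 68.123 rounding note can be reproduced with a short sketch. All function names are hypothetical; exact rational arithmetic from Python's `fractions` module stands in for the hand calculation, and `struct` is assumed to apply the default round-to-nearest behaviour when packing to binary32.

```python
from fractions import Fraction
import struct

def fraction_bits(frac: Fraction, max_bits: int = 23) -> str:
    """Binary digits of 0 <= frac < 1, produced by repeated doubling."""
    digits = []
    while frac != 0 and len(digits) < max_bits:
        frac *= 2
        bit = int(frac)            # the integer part is the next binary digit
        digits.append(str(bit))
        frac -= bit                # continue with the remaining fraction
    return "".join(digits)

def truncated_bits(text: str) -> int:
    """binary32 pattern of a positive decimal in the normal range, extra bits chopped off."""
    value = Fraction(text)
    exponent = 0
    while value >= 2:              # normalise to 1 <= value < 2
        value /= 2
        exponent += 1
    while value < 1:
        value *= 2
        exponent -= 1
    fraction = int((value - 1) * 2**23)   # keep 23 fraction bits, drop the rest
    return ((exponent + 127) << 23) | fraction

def rounded_bits(text: str) -> int:
    """binary32 pattern as produced by struct (round to nearest)."""
    return struct.unpack("<I", struct.pack("<f", float(text)))[0]

print(fraction_bits(Fraction("0.375")))        # 011
print(fraction_bits(Fraction("0.1"), 12))      # 000110011001 (never terminates)
print(f"{truncated_bits('12.375'):08X}")       # 41460000
print(f"{truncated_bits('68.123'):08X}")       # 42883EF9
print(f"{rounded_bits('68.123'):08X}")         # 42883EFA
```

For 12.375 the two functions agree because no bits are lost; for 68.123 the discarded bits exceed half a unit in the last place, so rounding moves the last hexadecimal digit from 9 to A.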

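Example 1: Consider decimal 1. We can see that:

$$(1)_{10} = (1.0)_2 \times 2^{0}$$

From which we deduce:

- The exponent is 0 (and in the biased form it is therefore $(127 + 0)_{10} = (127)_{10} = (0111\,1111)_2$)

- The fraction is 0 (everything to the right of the binary point in 1.0 is $0 = 000\ldots0$)

From these we can form the resulting 32-bit IEEE 754 binary32 format representation of real number 1:

$$(1)_{10} = (0\ 01111111\ 00000000000000000000000)_2 = (3\text{F}800000)_{16}$$

Example 2: Consider a value 0.25. We can see that:

$$(0.25)_{10} = (1.0)_2 \times 2^{-2}$$

From which we deduce:

- The exponent is −2 (and in the biased form it is $(127 + (-2))_{10} = (125)_{10} = (0111\,1101)_2$)

- The fraction is 0 (everything to the right of the binary point in 1.0 is zeroes)

From these we can form the resulting 32-bit IEEE 754 binary32 format representation of real number 0.25:

$$(0.25)_{10} = (0\ 01111101\ 00000000000000000000000)_2 = (3\text{E}800000)_{16}$$

Example 3: Consider a value of 0.375. We saw that $0.375 = (0.011)_2 = (1.1)_2 \times 2^{-2}$.

Hence after determining a representation of 0.375 as $(1.1)_2 \times 2^{-2}$ we can proceed as above:

- The exponent is −2 (and in the biased form it is $(127 + (-2))_{10} = (125)_{10} = (0111\,1101)_2$)

- The fraction is 1 (to the right of the binary point in 1.1 is a single $1 = x_1$)

From these we can form the resulting 32-bit IEEE 754 binary32 format representation of real number 0.375:

$$(0.375)_{10} = (0\ 01111101\ 10000000000000000000000)_2 = (3\text{EC}00000)_{16}$$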
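The worked examples can be cross-checked mechanically. This is a small sketch that assumes Python's `struct` module produces IEEE 754 binary32 when packing with the `f` format; the helper names `f32_hex` and `f32_fields` are made up for illustration.

```python
import struct

def f32_hex(x: float) -> str:
    """Hexadecimal binary32 encoding of x (most significant byte first)."""
    return struct.pack(">f", x).hex().upper()

def f32_fields(x: float) -> tuple:
    """Split the encoding into (sign, exponent, fraction) bit strings."""
    bits = format(struct.unpack(">I", struct.pack(">f", x))[0], "032b")
    return bits[0], bits[1:9], bits[9:]

for value in (1.0, 0.25, 0.375, 12.375):
    print(value, f32_hex(value), *f32_fields(value))
# 1.0 3F800000 0 01111111 00000000000000000000000
# 0.25 3E800000 0 01111101 00000000000000000000000
# 0.375 3EC00000 0 01111101 10000000000000000000000
# 12.375 41460000 0 10000010 10001100000000000000000
```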

Converting binary32 to decimal

If the binary32 value, 41C80000 in this example, is in hexadecimal we first convert it to binary:

$$41\text{C}8\,0000_{16} = 0100\,0001\,1100\,1000\,0000\,0000\,0000\,0000_2$$

then we break it down into three parts: sign bit, exponent, and significand.

- Sign bit: $0_2$

- Exponent: $1000\,0011_2 = 83_{16} = 131_{10}$

- Significand: $100\,1000\,0000\,0000\,0000\,0000_2 = 480000_{16}$

We then add the implicit 24th bit to the significand:

- Significand: $1100\,1000\,0000\,0000\,0000\,0000_2 = \text{C}80000_{16}$

and decode the exponent value by subtracting 127:

- Raw exponent: $83_{16} = 131_{10}$

- Decoded exponent: $131 - 127 = 4$

Each of the 24 bits of the significand (including the implicit 24th bit), bit 23 to bit 0, represents a value, starting at 1 and halving for each subsequent bit, as follows:

| Bit | Value |
|---|---|
| 23 | 1 |
| 22 | 0.5 |
| 21 | 0.25 |
| 20 | 0.125 |
| 19 | 0.0625 |
| 18 | 0.03125 |
| 17 | 0.015625 |
| ... | ... |
| 6 | 0.00000762939453125 |
| 5 | 0.000003814697265625 |
| 4 | 0.0000019073486328125 |
| 3 | 0.00000095367431640625 |
| 2 | 0.000000476837158203125 |
| 1 | 0.0000002384185791015625 |
| 0 | 0.00000011920928955078125 |

The significand in this example has three bits set: bit 23, bit 22, and bit 19. We can now decode the significand by adding the values represented by these bits.

- Decoded significand: $1 + 0.5 + 0.0625 = 1.5625 = \text{C}80000_{16} / 2^{23}$

Then we need to multiply by the base, 2, raised to the power of the exponent, to get the final result:

$$1.5625 \times 2^{4} = 25$$

Thus

$$41\text{C}8\,0000_{16} = 25$$

This is equivalent to:

$$n = (-1)^{s} \times (m \cdot 2^{-23}) \times 2^{x - 127}$$

where s is the sign bit, x is the stored (biased) exponent, and m is the 24-bit significand (including the implicit bit) read as an integer.
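The decoding steps for 41C80000 can be followed in code. This is a sketch under the assumption that `m` denotes the 24-bit significand read as an integer (implicit bit included) and `x` the stored exponent; the variable and function names are illustrative only, and only normal numbers are handled.

```python
import struct

def decode_steps(bits: int) -> float:
    """Decode a normal binary32 pattern by summing the weights of the set significand bits."""
    s = bits >> 31                         # sign bit
    x = (bits >> 23) & 0xFF                # raw (biased) exponent
    m = (bits & 0x7FFFFF) | (1 << 23)      # add the implicit 24th bit
    # Bit 23 is worth 1, bit 22 is worth 0.5, and so on down to bit 0 = 2**-23.
    set_bits = [i for i in range(23, -1, -1) if (m >> i) & 1]
    significand = sum(2.0 ** (i - 23) for i in set_bits)
    print("set bits:", set_bits, "significand:", significand, "exponent:", x - 127)
    return (-1) ** s * significand * 2.0 ** (x - 127)

value = decode_steps(0x41C80000)
# set bits: [23, 22, 19] significand: 1.5625 exponent: 4
print(value)                               # 25.0

# Cross-check against a direct reinterpretation of the same four bytes.
print(struct.unpack(">f", bytes.fromhex("41C80000"))[0])   # 25.0
```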

Precision limitations on decimal values (between 1 and 16777216)

- Decimals between 1 and 2: fixed interval $2^{-23}$ ($1 + 2^{-23}$ is the next largest float after 1)

- Decimals between 2 and 4: fixed interval $2^{-22}$

- Decimals between 4 and 8: fixed interval $2^{-21}$

- ...

- Decimals between $2^{n}$ and $2^{n+1}$: fixed interval $2^{n-23}$

- ...

- Decimals between $2^{22} = 4194304$ and $2^{23} = 8388608$: fixed interval $2^{-1} = 0.5$

- Decimals between $2^{23} = 8388608$ and $2^{24} = 16777216$: fixed interval $2^{0} = 1$
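The spacing between neighbouring values can be measured by incrementing the raw bit pattern by one. The sketch below assumes its argument is a positive, finite value that is exactly representable in binary32; the helper name `spacing_above` is hypothetical.

```python
import struct

def spacing_above(x: float) -> float:
    """Gap between the binary32 value x and the next larger binary32 value."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    next_value = struct.unpack("<f", struct.pack("<I", bits + 1))[0]
    return next_value - x          # exact, since both are binary64-representable

print(spacing_above(1.0) == 2.0**-23)        # True
print(spacing_above(2.0) == 2.0**-22)        # True
print(spacing_above(4.0) == 2.0**-21)        # True
print(spacing_above(4194304.0))              # 0.5
print(spacing_above(8388608.0))              # 1.0
```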

Precision limitations on integer values

- Integers between 0 and 16777216 can be exactly represented (also applies for negative integers between −16777216 and 0)

- Integers between $2^{24} = 16777216$ and $2^{25} = 33554432$ round to a multiple of 2 (even number)

- Integers between $2^{25}$ and $2^{26}$ round to a multiple of 4

- ...

- Integers between $2^{n}$ and $2^{n+1}$ round to a multiple of $2^{n-23}$

- ...

- Integers between $2^{127}$ and $2^{128}$ round to a multiple of $2^{104}$

- Integers greater than or equal to $2^{128}$ are rounded to "infinity".
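A few of these integer cases can be observed directly. The sketch assumes Python's `struct` module rounds to nearest (ties to even) when packing to binary32; `to_f32` is a hypothetical helper. The overflow-to-infinity case is left out, because `struct` raises `OverflowError` for finite values that are too large instead of returning infinity.

```python
import struct

def to_f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

# Up to 2**24 every integer is exact.
print(to_f32(16777215.0))    # 16777215.0
print(to_f32(16777216.0))    # 16777216.0

# Between 2**24 and 2**25 only even integers exist; ties go to the even significand.
print(to_f32(16777217.0))    # 16777216.0
print(to_f32(16777219.0))    # 16777220.0

# Between 2**25 and 2**26 only multiples of 4 exist.
print(to_f32(33554435.0))    # 33554436.0
```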

Notable single-precision cases

These examples are given in bit representation, in hexadecimal and binary, of the floating-point value. This includes the sign, (biased) exponent, and significand.

0 00000000 00000000000000000000001₂ = 0000 0001₁₆ = $2^{-126} \times 2^{-23} = 2^{-149} \approx 1.4012984643 \times 10^{-45}$

(smallest positive subnormal number)

0 00000000 11111111111111111111111₂ = 007f ffff₁₆ = $2^{-126} \times (1 - 2^{-23}) \approx 1.1754942107 \times 10^{-38}$

(largest subnormal number)

0 00000001 00000000000000000000000₂ = 0080 0000₁₆ = $2^{-126} \approx 1.1754943508 \times 10^{-38}$

(smallest positive normal number)

0 11111110 11111111111111111111111₂ = 7f7f ffff₁₆ = $2^{127} \times (2 - 2^{-23}) \approx 3.4028234664 \times 10^{38}$

(largest normal number)

0 01111110 11111111111111111111111₂ = 3f7f ffff₁₆ = $1 - 2^{-24} \approx 0.999999940395355225$

(largest number less than one)

0 01111111 00000000000000000000000₂ = 3f80 0000₁₆ = 1 (one)

0 01111111 00000000000000000000001₂ = 3f80 0001₁₆ = $1 + 2^{-23} \approx 1.00000011920928955$

(smallest number larger than one)

1 10000000 00000000000000000000000₂ = c000 0000₁₆ = −2

0 00000000 00000000000000000000000₂ = 0000 0000₁₆ = 0

1 00000000 00000000000000000000000₂ = 8000 0000₁₆ = −0

0 11111111 00000000000000000000000₂ = 7f80 0000₁₆ = infinity

1 11111111 00000000000000000000000₂ = ff80 0000₁₆ = −infinity

0 10000000 10010010000111111011011₂ = 4049 0fdb₁₆ ≈ 3.14159274101257324 ≈ π (pi)

0 01111101 01010101010101010101011₂ = 3eaa aaab₁₆ ≈ 0.333333343267440796 ≈ 1/3

x 11111111 10000000000000000000001₂ = ffc0 0001₁₆ = qNaN (on x86 and ARM processors)

x 11111111 00000000000000000000001₂ = ff80 0001₁₆ = sNaN (on x86 and ARM processors)

By default, 1/3 rounds up, instead of down like double precision, because of the even number of bits in the significand. The bits of 1/3 beyond the rounding point are 1010... which is more than 1/2 of a unit in the last place.
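The difference in rounding direction between the two precisions can be confirmed with exact rational arithmetic. This sketch assumes Python floats are binary64 and uses `struct` to round to binary32; `to_f32` is a hypothetical helper.

```python
from fractions import Fraction
import struct

def to_f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

exact_third = Fraction(1, 3)
single = Fraction(to_f32(1 / 3))   # exact rational value of the binary32 result
double = Fraction(1 / 3)           # exact rational value of the binary64 result

print(single > exact_third)        # True: binary32 rounds 1/3 up
print(double < exact_third)        # True: binary64 rounds 1/3 down
print(struct.pack(">f", 1 / 3).hex())   # 3eaaaaab
```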

Encodings of qNaN and sNaN are not specified in IEEE 754 and are implemented differently on different processors. The x86 and ARM processor families use the most significant bit of the significand field to indicate a quiet NaN, whereas PA-RISC processors use that bit to indicate a signalling NaN.
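The x86 and ARM convention can be expressed as a bit test on the raw pattern. The function names below are hypothetical, and the sketch works on integers only, since passing a signalling NaN through floating-point operations may quieten it on some platforms.

```python
QUIET_BIT = 1 << 22   # most significant bit of the 23-bit fraction field

def is_nan(bits: int) -> bool:
    """True if the binary32 pattern encodes any NaN."""
    return (bits >> 23) & 0xFF == 0xFF and bits & 0x7FFFFF != 0

def is_quiet_nan(bits: int) -> bool:
    """True for a quiet NaN under the x86/ARM convention (quiet bit set)."""
    return is_nan(bits) and bool(bits & QUIET_BIT)

def is_signalling_nan(bits: int) -> bool:
    """True for a signalling NaN under the x86/ARM convention (quiet bit clear)."""
    return is_nan(bits) and not bits & QUIET_BIT

print(is_quiet_nan(0xFFC00001))        # True
print(is_signalling_nan(0xFF800001))   # True
print(is_nan(0x7F800000))              # False: this pattern is +infinity
```

On a processor that follows the PA-RISC convention the roles of the two predicates would be swapped.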

Optimizations

The design of floating-point format allows various optimisations, resulting from the easy generation of a base-2 logarithm approximation from an integer view of the raw bit pattern. Integer arithmetic and bit-shifting can yield an approximation to reciprocal square root (fast inverse square root), commonly required in computer graphics.
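As an illustration, the following is a minimal sketch of the fast inverse square root idea. The constant `0x5F3759DF` is the widely circulated value from the Quake III Arena source code and does not come from this article; the single Newton-Raphson step is likewise the customary refinement. The refinement here runs in Python's binary64 arithmetic rather than binary32, so results differ slightly from a C implementation, and the input is assumed to be a positive normal number.

```python
import math
import struct

def fast_inv_sqrt(x: float) -> float:
    """Approximate 1/sqrt(x) using the integer view of the binary32 pattern."""
    i = struct.unpack("<I", struct.pack("<f", x))[0]   # reinterpret float as integer
    i = 0x5F3759DF - (i >> 1)                          # halve and negate the approximate log2
    y = struct.unpack("<f", struct.pack("<I", i))[0]   # reinterpret integer as float
    return y * (1.5 - 0.5 * x * y * y)                 # one Newton-Raphson refinement

for x in (0.25, 1.0, 2.0, 100.0):
    approx = fast_inv_sqrt(x)
    exact = 1.0 / math.sqrt(x)
    print(x, approx, abs(approx - exact) / exact)      # relative error stays below 0.2%
```

The trick works because the integer value of a positive binary32 pattern is roughly a scaled and shifted base-2 logarithm of the number, so shifting right by one bit approximately halves the logarithm.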

See also

- IEEE 754

- ISO/IEC 10967, language independent arithmetic

- Primitive data type

- Numerical stability

- Scientific notation

References

1. "REAL Statement". scc.ustc.edu.cn. Archived from the original on 2021-02-24. Retrieved 2013-02-28.

2. "CLHS: Type SHORT-FLOAT, SINGLE-FLOAT, DOUBLE-FLOAT..."

3. "Primitive Data Types". Java Documentation.

4. "6 Predefined Types and Classes". haskell.org. 20 July 2010.

5. "Float". Apple Developer Documentation.

6. William Kahan (1 October 1997). "Lecture Notes on the Status of IEEE Standard 754 for Binary Floating-Point Arithmetic" (PDF). p. 4.
