Time-Sensitive Networking

Time-Sensitive Networking (TSN) is a set of standards under development by the Time-Sensitive Networking task group of the IEEE 802.1 working group.[1] The TSN task group was formed in November 2012 by renaming the existing Audio Video Bridging Task Group[2] and continuing its work. The name changed as a result of the extension of the working area of the standardization group. The standards define mechanisms for the time-sensitive transmission of data over deterministic Ethernet networks.

The majority of projects define extensions to the IEEE 802.1Q – Bridges and Bridged Networks, which describes virtual LANs and network switches.[3] These extensions in particular address transmission with very low latency and high availability. Applications include converged networks with real-time audio/video streaming and real-time control streams which are used in automotive applications and industrial control facilities.

Background

Standard information technology network equipment has no concept of "time" and cannot provide synchronization and precision timing. Delivering data reliably is more important than delivering within a specific time, so there are no constraints on delay or synchronization precision. Even if the average hop delay is very low, individual delays can be unacceptably high. Network congestion is handled by throttling and retransmitting dropped packets at the transport layer, but there are no means to prevent congestion at the link layer. Data can be lost when the buffers are too small or the bandwidth is insufficient, but excessive buffering adds to the delay, which is unacceptable when low deterministic delays are required.

The AVB/TSN standards documents specified by IEEE 802.1 can be grouped into three key component categories that are required for a complete real-time communication solution based on switched Ethernet networks with deterministic quality of service (QoS) for point-to-point connections. Each standard can be used on its own and is mostly self-sufficient. However, TSN as a communication system achieves its full potential only when the standards are used together in a concerted way. The three basic components are:

- Time synchronization: All devices that are participating in real-time communication need to have a common understanding of time

- Scheduling and traffic shaping: All devices that are participating in real-time communication adhere to the same rules in processing and forwarding communication packets

- Selection of communication paths, path reservations and fault-tolerance: All devices that are participating in real-time communication adhere to the same rules in selecting communication paths and in reserving bandwidth and time slots, possibly utilizing more than one simultaneous path to achieve fault-tolerance

Applications which need a deterministic network that behaves in a predictable fashion include audio and video, initially defined in Audio Video Bridging (AVB); control networks that accept inputs from sensors, perform control loop processing, and initiate actions; safety-critical networks that implement packet and link redundancy; and mixed media networks that handle data with varying levels of timing sensitivity and priority, such as vehicle networks that support climate control, infotainment, body electronics, and driver assistance. The IEEE AVB/TSN suite serves as the foundation for deterministic networking to satisfy the common requirements of these applications.

AVB/TSN can handle rate-constrained traffic, where each stream has a bandwidth limit defined by minimum inter-frame intervals and maximum frame size, and time-triggered traffic, where each frame has an exact time at which it is to be sent. Low-priority traffic is passed on a best-effort basis, with no timing or delivery guarantees.

Time Synchronization

In contrast to standard Ethernet according to IEEE 802.3 and Ethernet bridging according to IEEE 802.1Q, time is very important in TSN networks. For real-time communication with hard, non-negotiable time boundaries for end-to-end transmission latencies, all devices in the network need a common time reference and therefore need to synchronize their clocks with each other. This is true not only for the end devices of a communication stream, such as an industrial controller and a manufacturing robot, but also for network components, such as Ethernet switches. Only with synchronized clocks can all network devices operate in unison and execute the required operation at exactly the required point in time.

Although time synchronization in TSN networks can be achieved with a GPS clock, this is costly and there is no guarantee that the endpoint device has access to the radio or satellite signal at all times. Due to these constraints, time in TSN networks is usually distributed from one central time source directly through the network itself using the IEEE 1588 Precision Time Protocol, which utilizes Ethernet frames to distribute time synchronization information. IEEE 802.1AS is a tightly constrained subset of IEEE 1588 with sub-microsecond precision and extensions to support synchronization over Wi-Fi radio (IEEE 802.11). The idea behind this profile is to narrow the long list of IEEE 1588 options down to a manageable few critical options that are applicable to home networks or networks in automotive or industrial automation environments.

IEEE 802.1AS Timing and Synchronization for Time-Sensitive Applications

IEEE 802.1AS-2011 defines the Generalized Precision Time Protocol (gPTP) profile which, like all profiles of IEEE 1588, selects among the options of 1588, but also generalizes the architecture to allow PTP to apply beyond wired Ethernet networks.

To account for data path delays, the gPTP protocol measures the frame residence time within each bridge (the time required for receiving, processing, queuing and transmission of timing information from the ingress to egress ports), and the link latency of each hop (a propagation delay between two adjacent bridges). These calculated delays are then referenced to the GrandMaster (GM) clock in a bridge elected by the Best Master Clock Algorithm, a clock spanning tree protocol to which all Clock Master (CM) and endpoint devices attempt to synchronize. Any device which does not synchronize to timing messages is outside of the timing domain boundaries (Figure 2).

Synchronization accuracy depends on precise measurements of link delay and frame residence time. 802.1AS uses 'logical syntonization', where a ratio between local clock and GM clock frequencies is used to calculate synchronized time, and a ratio between local and CM clock frequencies is used to calculate propagation delay.
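As a rough illustration of how such frequency ratios enter the calculation, the sketch below estimates a link delay from the four timestamps of a peer-delay exchange and then extrapolates grandmaster time from a local clock. The function and parameter names are hypothetical, and the formulas are a simplified reading of gPTP that assumes the delay measurement uses the ratio to the directly attached neighbor; it is not the normative algorithm.

```python
def mean_link_delay(t1, t2, t3, t4, neighbor_rate_ratio=1.0):
    """Estimate one-way propagation delay from a peer-delay exchange.

    t1: request sent (local clock)      t2: request received (neighbor clock)
    t3: response sent (neighbor clock)  t4: response received (local clock)
    neighbor_rate_ratio: neighbor clock frequency / local clock frequency
    (assumed to be known from earlier measurements). Times in nanoseconds.
    """
    # Express the locally measured round trip in the neighbor's time base,
    # then remove the time the neighbor held the message.
    round_trip = (t4 - t1) * neighbor_rate_ratio
    turnaround = t3 - t2
    return (round_trip - turnaround) / 2.0


def gm_time_now(gm_time_at_sync_rx, local_rx, local_now, gm_rate_ratio):
    """Extrapolate grandmaster time from the last received Sync.

    gm_rate_ratio: GM clock frequency / local clock frequency.
    """
    return gm_time_at_sync_rx + (local_now - local_rx) * gm_rate_ratio


# Example: 500 ns cable delay, neighbor clock offset by 10 us, 400 ns turnaround.
delay = mean_link_delay(t1=0, t2=10_500, t3=10_900, t4=1_400)
print(delay)  # 500.0
```

The clock offset between the two devices cancels out because each interval is measured on a single clock; only the frequency ratio is needed to compare them.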

IEEE 802.1AS-2020 introduces improved time measurement accuracy and support for multiple time domains for redundancy.

Scheduling and traffic shaping

Scheduling and traffic shaping allow different traffic classes with different priorities to coexist on the same network, each with its own requirements for available bandwidth and end-to-end latency.

Traffic shaping refers to the process of distributing frames/packets evenly in time to smooth out the traffic. Without traffic shaping at sources and bridges, the packets will "bunch", i.e. agglomerate into bursts of traffic, overwhelming the buffers in subsequent bridges/switches along the path.

Standard bridging according to IEEE 802.1Q uses a strict priority scheme with eight distinct priorities. On the protocol level, these priorities are visible in the Priority Code Point (PCP) field in the 802.1Q VLAN tag of a standard Ethernet frame. These priorities already distinguish between more important and less important network traffic, but even with the highest of the eight priorities, no absolute guarantee for an end-to-end delivery time can be given. The reason for this is buffering effects inside the Ethernet switches. If a switch has started the transmission of an Ethernet frame on one of its ports, even the highest priority frame has to wait inside the switch buffer for this transmission to finish. With standard Ethernet switching, this non-determinism cannot be avoided.

This is not an issue in environments where applications do not depend on the timely delivery of single Ethernet frames, such as office IT infrastructures. In these environments, file transfers, emails or other business applications have limited time sensitivity themselves and are usually protected by other mechanisms further up the protocol stack, such as the Transmission Control Protocol. In industrial automation (for example, a programmable logic controller (PLC) with an industrial robot) and automotive environments, where closed-loop control or safety applications use the Ethernet network, reliable and timely delivery is of utmost importance.

AVB/TSN enhances standard Ethernet communication by adding mechanisms to provide different time slices for different traffic classes and to ensure timely delivery for the soft and hard real-time requirements of control system applications. The mechanism of utilizing the eight distinct VLAN priorities is retained, to ensure complete backward compatibility with non-TSN Ethernet.

To achieve transmission times with guaranteed end-to-end latency, one or several of the eight Ethernet priorities can be individually assigned to already existing methods (such as the IEEE 802.1Q strict priority scheduler) or new processing methods, such as the IEEE 802.1Qav credit-based traffic shaper, IEEE 802.1Qbv time-aware shaper,[4] or IEEE 802.1Qcr asynchronous shaper.
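For readers who want to see where the eight priorities live on the wire, the following minimal sketch extracts the PCP from the 802.1Q tag of a raw Ethernet frame. The function name and the sample frame are made up for illustration, and only the single-tag case with TPID 0x8100 is handled.

```python
import struct


def parse_vlan_tag(frame: bytes):
    """Return (pcp, dei, vid) for a single 802.1Q-tagged frame, else None."""
    if len(frame) < 18:  # 2 x 6-byte MAC + 4-byte tag + 2-byte EtherType
        return None
    # The tag follows the destination and source MAC addresses (offset 12).
    tpid, tci = struct.unpack_from("!HH", frame, 12)
    if tpid != 0x8100:
        return None
    pcp = tci >> 13          # 3-bit Priority Code Point: 0..7
    dei = (tci >> 12) & 0x1  # 1-bit Drop Eligible Indicator
    vid = tci & 0x0FFF       # 12-bit VLAN identifier
    return pcp, dei, vid


# Hypothetical frame header: priority 3, VLAN 100, EtherType IPv4.
sample = (
    bytes.fromhex("ffffffffffff")    # destination MAC
    + bytes.fromhex("001b21aabbcc")  # source MAC
    + struct.pack("!HH", 0x8100, (3 << 13) | 100)
    + struct.pack("!H", 0x0800)
)
print(parse_vlan_tag(sample))  # (3, 0, 100)
```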

Time-sensitive traffic has several priority classes. For the 802.1Qav credit-based shaper, Stream Reservation Class A is the highest priority with a transmission period of 125 μs; Class B has the second-highest priority with a maximum transmission period of 250 μs. Traffic classes shall not exceed their preconfigured maximum bandwidth (75% for audio and video applications). The maximum number of hops is 7. The worst-case latency requirement is defined as 2 ms for Class A and 50 ms for Class B, but has been shown to be unreliable.[5][6] The per-port peer delay provided by gPTP and the network bridge residence delay are added to calculate the accumulated delays and ensure the latency requirement is met. Control traffic has the third-highest priority and includes gPTP and SRP traffic. The 802.1Qbv time-aware scheduler introduces Class CDT for real-time control data from sensors and command streams to actuators, with a worst-case latency of 100 μs over 5 hops and a maximum transmission period of 0.5 ms. Class CDT takes the highest priority over classes A, B, and control traffic.

AVB credit-based scheduler

IEEE 802.1Qav Forwarding and queuing enhancements for time-sensitive streams

IEEE 802.1Qav Forwarding and Queuing Enhancements for Time-Sensitive Streams defines traffic shaping using priority classes, which is based on a simple form of "leaky bucket" credit-based fair queuing. 802.1Qav is designed to reduce buffering in receiving bridges and endpoints.

The credit-based shaper defines credits in bits for two separate queues, dedicated to Class A and Class B traffic. Frame transmission is only allowed when credit is non-negative; during transmission the credit decreases at a rate called sendSlope:

$$\text{sendSlope} = \text{idleSlope} - \text{portTransmitRate}$$

The credit increases at a rate called idleSlope while frames in the queue are waiting for frames from other queues to be transmitted.

Thus the idleSlope is the bandwidth reserved for the queue by the bridge, and the sendSlope is the (negative) difference between that reserved bandwidth and the transmission rate of the port MAC service.

If the credit is negative and no frames are transmitted, credit increases at the idleSlope rate until zero is reached. If an AVB frame cannot be transmitted because a non-AVB frame is in transmission, credit accumulates at the idleSlope rate and is allowed to become positive.

Additional limits hiCredit and loCredit are derived from the maximum frame size and maximum interference size, the idleSlope/sendSlope, and the maximum port transmission rate.
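The credit rules above can be traced with a small simulation. This is a minimal sketch, not the normative state machine: the event model (`"wait"`, `"send"`, `"idle"`) and function name are invented for illustration, the hiCredit and loCredit limits are not enforced, and resetting positive credit to zero when the queue empties is an assumption taken from the usual description of the shaper rather than from the text above.

```python
def simulate_cbs(events, idle_slope, port_rate):
    """Trace credit (in bits) through a sequence of (state, seconds) events.

    "wait": a frame is queued but the port is busy with other traffic
    "send": the queue is transmitting its own frame
    "idle": the queue is empty
    """
    send_slope = idle_slope - port_rate  # negative while transmitting
    credit = 0.0
    trace = []
    for state, duration in events:
        if state == "wait":
            credit += idle_slope * duration
        elif state == "send":
            if credit < 0:
                raise ValueError("transmission requires non-negative credit")
            credit += send_slope * duration
        elif state == "idle":
            if credit < 0:
                # Recover toward zero, but never above it.
                credit = min(0.0, credit + idle_slope * duration)
            else:
                credit = 0.0  # assumption: unused positive credit is dropped
        else:
            raise ValueError(f"unknown state: {state}")
        trace.append(credit)
    return trace


# 100 Mbit/s port with 75 Mbit/s reserved for the queue.
trace = simulate_cbs(
    [("wait", 100e-6), ("send", 400e-6), ("idle", 100e-6)],
    idle_slope=75e6,
    port_rate=100e6,
)
print(trace)  # approximately [7500.0, -2500.0, 0.0]
```

In the example, the queue gains about 7500 bits of credit while blocked, spends 10,000 bits' worth during its own transmission, and then recovers to zero while idle.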

Reserved AV stream traffic frames are forwarded with high priority over non-reserved best-effort traffic, subject to credit-based traffic shaping rules which may require them to wait for a certain amount of credit. This protects best-effort traffic by limiting the maximum AV stream burst. The frames are scheduled very evenly, though only on an aggregate basis, to smooth out the delivery times and reduce bursting and bunching, which can lead to buffer overflows and packet drops that trigger retransmissions. The increased buffering delay makes retransmitted packets obsolete by the time they arrive, resulting in frame drops which reduce the quality of AV applications.

Although the credit-based shaper provides fair scheduling for low-priority packets and smooths out traffic to eliminate congestion, the average delay increases up to 250 μs per hop, which is too high for control applications. By comparison, a time-aware shaper (IEEE 802.1Qbv) has a fixed cycle delay from 30 μs to several milliseconds, with a typical delay of 125 μs. Deriving guaranteed upper bounds on delays in TSN is non-trivial and is currently being researched, e.g., by using the mathematical framework Network Calculus.[7]

IEEE 802.1Qat Stream Reservation Protocol

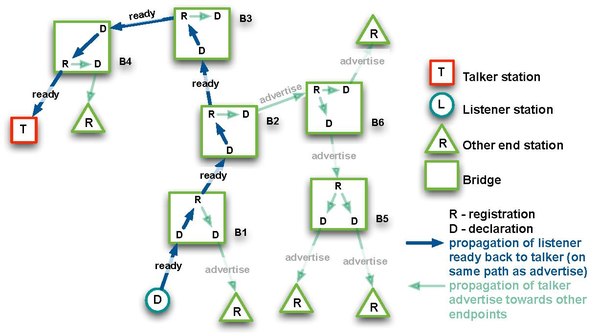

IEEE 802.1Qat Stream Reservation Protocol (SRP) is a distributed peer-to-peer protocol that specifies admission controls based on resource requirements of the flow and available network resources.

SRP reserves resources and advertises streams from the sender/source (talker) to the receivers/destinations (listeners); it works to satisfy QoS requirements for each stream and guarantee the availability of sufficient network resources along the entire flow transmission path.

The traffic streams are identified and registered with a 64-bit StreamID, made up of the 48-bit MAC address (EUI) and 16-bit UniqueID to identify different streams from the one source.
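The layout of the identifier can be sketched as follows. The helper names are hypothetical, and placing the MAC address in the upper 48 bits with the UniqueID in the lower 16 bits is an assumption consistent with the order given above.

```python
def make_stream_id(mac: str, unique_id: int) -> int:
    """Pack a 48-bit MAC address and a 16-bit UniqueID into a 64-bit StreamID."""
    mac_int = int(mac.replace(":", "").replace("-", ""), 16)
    if not 0 <= mac_int < (1 << 48):
        raise ValueError("MAC address must fit in 48 bits")
    if not 0 <= unique_id < (1 << 16):
        raise ValueError("UniqueID must fit in 16 bits")
    return (mac_int << 16) | unique_id


def split_stream_id(stream_id: int):
    """Return (mac_int, unique_id) from a 64-bit StreamID."""
    return stream_id >> 16, stream_id & 0xFFFF


# Two streams from the same (made-up) talker differ only in the UniqueID.
first = make_stream_id("00:1b:21:aa:bb:cc", 1)
second = make_stream_id("00:1b:21:aa:bb:cc", 2)
print(f"{first:016x}")   # 001b21aabbcc0001
print(f"{second:016x}")  # 001b21aabbcc0002
```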

SRP employs variants of Multiple Registration Protocol (MRP) to register and de-register attribute values on switches/bridges/devices - the Multiple MAC Registration Protocol (MMRP), the Multiple VLAN Registration Protocol (MVRP), and the Multiple Stream Registration Protocol (MSRP).

The SRP protocol essentially works in the following sequence:

- Advertise a stream from a talker

- Register the paths along data flow

- Calculate worst-case latency

- Create an AVB domain

- Reserve the bandwidth

Resources are allocated and configured in both the end nodes of the data stream and the transit nodes along the data flow path, with an end-to-end signaling mechanism to detect the success/failure. Worst-case latency is calculated by querying every bridge.

Reservation requests use the general MRP application with MRP attribute propagation mechanism. All nodes along the flow path pass the MRP Attribute Declaration (MAD) specification which describes the stream characteristics so that bridges could allocate the necessary resources.

If a bridge is able to reserve the required resources, it propagates the advertisement to the next bridge; otherwise, a 'talker failed' message is raised. When the advertise message reaches the listener, it replies with 'listener ready' message that propagates back to the talker.
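The advertise and ready exchange can be pictured with a highly simplified model. In the sketch below, each bridge is reduced to a dictionary holding a name and the bandwidth still available on its egress port; these structures, the function name, and the status strings are illustrative assumptions and do not reflect actual MSRP message formats or attribute handling.

```python
def reserve_stream(bridges, stream_bandwidth):
    """Walk a talker advertisement along the path, then commit the reservation.

    bridges: list of {"name": str, "available": float} ordered from talker
    to listener. Returns (status, name_of_failing_bridge_or_None).
    """
    # Forward pass: each bridge checks whether it could carry the stream.
    for bridge in bridges:
        if bridge["available"] < stream_bandwidth:
            return "talker failed", bridge["name"]

    # Backward pass: 'listener ready' travels toward the talker and each
    # bridge on the way locks in the bandwidth.
    for bridge in reversed(bridges):
        bridge["available"] -= stream_bandwidth
    return "listener ready", None


path = [
    {"name": "B1", "available": 50e6},
    {"name": "B2", "available": 10e6},
]
print(reserve_stream(path, 20e6))  # ('talker failed', 'B2')
print(reserve_stream(path, 5e6))   # ('listener ready', None)
```

Nothing is reserved in the failing case, which mirrors the all-or-nothing nature of the end-to-end reservation described in the text.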

Talker advertise and listener ready messages can be de-registered, which terminates the stream.

Successful reservation is only guaranteed when all intermediate nodes support SRP and respond to advertise and ready messages; in Figure 2 above, AVB domain 1 is unable to connect with AVB domain 2.

SRP is also used by TSN/AVB standards for frame priorities, frame scheduling, and traffic shaping.

Enhancements to AVB scheduling

IEEE 802.1Qcc Enhancements to SRP

Because SRP uses a decentralized registration and reservation procedure, multiple requests can introduce delays for critical traffic. The IEEE 802.1Qcc-2018 "Stream Reservation Protocol (SRP) Enhancements and Performance Improvements" amendment reduces the size of reservation messages and redefines timers so they trigger updates only when the link state or a reservation changes. To improve TSN administration on large-scale networks, each User Network Interface (UNI) provides methods for requesting Layer 2 services, supplemented by Centralized Network Configuration (CNC) to provide centralized reservation and scheduling, and remote management using NETCONF/RESTCONF protocols and IETF YANG data modeling.

CNC implements a per-stream request-response model, where the SR class is not explicitly used: end stations send requests for a specific stream (via an edge port) without knowledge of the network configuration, and CNC performs stream reservation centrally. MSRP only runs on the link to end stations as an information carrier between CNC and end stations, not for stream reservation. Centralized User Configuration (CUC) is an optional node that discovers end stations, their capabilities and user requirements, and configures delay-optimized TSN features (for closed-loop IACS applications). Seamless interoperability with Resource Reservation Protocol (RSVP) transport is provided. 802.1Qcc allows centralized configuration management to coexist with decentralized, fully distributed configuration of the SRP protocol, and also supports hybrid configurations for legacy AVB devices.

802.1Qcc can be combined with IEEE 802.1Qca Path Control and Reservation (PCR) and TSN traffic shapers.

IEEE 802.1Qch Cyclic Queuing and Forwarding (CQF)

While the 802.1Qav FQTSS/CBS works very well with soft real-time traffic, worst-case delays are both hop count and network topology dependent. Pathological topologies introduce delays, so buffer size requirements have to consider network topology.

IEEE 802.1Qch Cyclic Queuing and Forwarding (CQF), also known as the Peristaltic Shaper (PS), introduces double buffering which allows bridges to synchronize transmission (frame enqueue/dequeue operations) in a cyclic manner, with bounded latency depending only on the number of hops and the cycle time, completely independent of the network topology.
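To illustrate how a bound can depend only on hop count and cycle time, the sketch below uses a commonly cited rule of thumb for CQF, in which a frame is delayed by between (h − 1) and (h + 1) cycles over h hops. The text above does not state this formula, so treat it as an assumption; the function name is hypothetical.

```python
def cqf_latency_bounds(hops: int, cycle_time_us: float):
    """Return (min_latency_us, max_latency_us) under the assumed CQF bound.

    A frame sent in one cycle is forwarded by each bridge in the following
    cycle, so the end-to-end delay varies by at most two cycle times.
    """
    if hops < 1:
        raise ValueError("at least one hop is required")
    return (hops - 1) * cycle_time_us, (hops + 1) * cycle_time_us


print(cqf_latency_bounds(hops=5, cycle_time_us=125))  # (500, 750)
```

No topology parameter appears in the function, which is the point of the mechanism: shortening the cycle time or reducing the hop count are the only ways to tighten the bound.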

CQF can be used with the IEEE 802.1Qbv time-aware scheduler, IEEE 802.1Qbu frame preemption, and IEEE 802.1Qci ingress traffic policing.

IEEE 802.1Qci Per-Stream Filtering and Policing (PSFP)

IEEE 802.1Qci Per-Stream Filtering and Policing (PSFP) improves network robustness by filtering individual traffic streams. It prevents traffic overload conditions that may affect bridges and the receiving endpoints due to malfunction or Denial of Service (DoS) attacks. The stream filter uses rule matching to allow frames with specified stream IDs and priority levels and apply policy actions otherwise. All streams are coordinated at their gates, similarly to the 802.1Qch signaling. The flow metering applies predefined bandwidth profiles for each stream.

TSN scheduling and traffic shaping

IEEE 802.1Qbv Enhancements to Traffic Scheduling: Time-Aware Shaper (TAS)

The IEEE 802.1Qbv time-aware scheduler is designed to separate the communication on the Ethernet network into fixed length, repeating time cycles. Within these cycles, different time slices can be configured that can be assigned to one or several of the eight Ethernet priorities. By doing this, it is possible to grant exclusive use - for a limited time - to the Ethernet transmission medium for those traffic classes that need transmission guarantees and can't be interrupted. The basic concept is a time-division multiple access (TDMA) scheme. By establishing virtual communication channels for specific time periods, time-critical communication can be separated from non-critical background traffic.

The time-aware scheduler introduces Stream Reservation Class CDT for time-critical control data, with a worst-case latency of 100 μs over 5 hops and a maximum transmission period of 0.5 ms, in addition to classes A and B defined for the IEEE 802.1Qav credit-based traffic shaper. By granting exclusive access to the transmission medium and devices to time-critical traffic classes, the buffering effects in the Ethernet switch transmission buffers can be avoided and time-critical traffic can be transmitted without non-deterministic interruptions. One example of an IEEE 802.1Qbv scheduler configuration is visible in figure 1.

In this example, each cycle consists of two time slices. Time slice 1 only allows the transmission of traffic tagged with VLAN priority 3, and time slice 2 in each cycle allows for the rest of the priorities to be sent. Since the IEEE 802.1Qbv scheduler requires all clocks on all network devices (Ethernet switches and end devices) to be synchronized and the identical schedule to be configured, all devices understand which priority can be sent to the network at any given point in time. Since time slice 2 has more than one priority assigned to it, within this time slice, the priorities are handled according to standard IEEE 802.1Q strict priority scheduling.
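The two-slice example can be written down as a small gate control list. The cycle length, slice durations, and all names below are hypothetical choices made for illustration; a real bridge would hold this schedule in hardware and drive it from the synchronized clock.

```python
CYCLE_NS = 1_000_000  # assumed 1 ms cycle

# Each entry: (duration in ns, bitmask of open gates; bit p = priority p).
GATE_CONTROL_LIST = [
    (200_000, 0b00001000),  # time slice 1: only priority 3
    (800_000, 0b11110111),  # time slice 2: every other priority
]


def open_priorities(time_ns: int):
    """Return the priorities whose gates are open at a synchronized time."""
    offset = time_ns % CYCLE_NS
    for duration, mask in GATE_CONTROL_LIST:
        if offset < duration:
            return [p for p in range(8) if mask & (1 << p)]
        offset -= duration
    return []


def select_priority(time_ns: int, queued):
    """Strict-priority choice among queues that are non-empty and open."""
    candidates = [p for p in open_priorities(time_ns) if p in queued]
    return max(candidates) if candidates else None


print(open_priorities(100_000))               # [3]
print(select_priority(500_000, {1, 3, 5}))    # 5
print(select_priority(500_000, {3}))          # None
```

The last call shows the cost of exclusivity: a priority 3 frame that arrives during time slice 2 has to wait for the next cycle.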

This separation of Ethernet transmissions into cycles and time slices can be enhanced further by the inclusion of other scheduling or traffic shaping algorithms, such as the IEEE 802.1Qav credit-based traffic shaper. IEEE 802.1Qav supports soft real-time. In this particular example, IEEE 802.1Qav could be assigned to one or two of the priorities that are used in time slice two to distinguish further between audio/video traffic and background file transfers. The Time-Sensitive Networking Task Group specifies a number of different schedulers and traffic shapers that can be combined to achieve the nonreactive coexistence of hard real-time, soft real-time and background traffic on the same Ethernet infrastructure.

IEEE 802.1Qbv in more detail: Time slices and guard bands

When an Ethernet interface has started the transmission of a frame to the transmission medium, this transmission has to be completely finished before another transmission can take place. This includes the transmission of the CRC32 checksum at the end of the frame to ensure a reliable, fault-free transmission. This inherent property of Ethernet networks again poses a challenge to the TDMA approach of the IEEE 802.1Qbv scheduler. This is visible in figure 2.

Just before the end of time slice 2 in cycle n, a new frame transmission is started. Unfortunately, this frame is too large to fit into its time slice. Since the transmission of this frame cannot be interrupted, the frame infringes the following time slice 1 of the next cycle n+1. By partially or completely blocking a time-critical time slice, real-time frames can be delayed up to the point where they cannot meet the application requirements any longer. This is very similar to the actual buffering effects that happen in non-TSN Ethernet switches, so TSN has to specify a mechanism to prevent this from happening.

The IEEE 802.1Qbv time-aware scheduler has to ensure that the Ethernet interface is not busy with the transmission of a frame when the scheduler changes from one time slice into the next. The time-aware scheduler achieves this by putting a guard band in front of every time slice that carries time-critical traffic. During this guard band time, no new Ethernet frame transmission may be started; only already ongoing transmissions may be finished. The duration of this guard band has to be as long as it takes the maximum frame size to be safely transmitted. For an Ethernet frame according to IEEE 802.3 with a single IEEE 802.1Q VLAN tag and including interframe spacing, the total length is: 1500 byte (frame payload) + 18 byte (Ethernet addresses, EtherType and CRC) + 4 byte (VLAN Tag) + 12 byte (Interframe spacing) + 8 byte (preamble and SFD) = 1542 byte.

The total time needed for sending this frame is dependent on the link speed of the Ethernet network. With Fast Ethernet and 100 Mbit/s transmission rate, the transmission duration is as follows:

$$t = \frac{1542\ \text{byte} \times 8\ \text{bit/byte}}{100 \times 10^{6}\ \text{bit/s}} = 123.36\ \mu\text{s}$$

In this case, the guard band has to be at least 123.36 μs long. With the guard band, the total bandwidth or time that is usable within a time slice is reduced by the length of the guard band. This is visible in figure 3.

Note: to facilitate the presentation of the topic, the actual size of the guard band in figure 3 is not to scale, but is significantly smaller than indicated by the frame in figure 2.

In this example, the time slice 1 always contains high priority data (e.g. for motion control), while time slice 2 always contains best-effort data. Therefore, a guard band needs to be placed at every transition point into time slice 1 to protect the time slice of the critical data stream(s).

While the guard bands manage to protect the time slices with high priority, critical traffic, they also have some significant drawbacks:

- The time that is consumed by a guard band is lost: it cannot be used to transmit any data, as the Ethernet port needs to be silent. Therefore, the lost time directly translates into lost bandwidth for background traffic on that particular Ethernet link.

- A single time slice can never be configured smaller than the size of the guard band. Especially with lower speed Ethernet connections and growing guard band size, this has a negative impact on the lowest achievable time slice length and cycle time.
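To make the dependence on link speed concrete, the following sketch repeats the guard band arithmetic for the 1542-byte worst-case frame at several common Ethernet rates. The function name and the choice of rates are illustrative only.

```python
MAX_FRAME_ON_WIRE_BYTES = 1500 + 18 + 4 + 12 + 8  # 1542, as derived above


def guard_band_us(frame_bytes: int, link_rate_bps: float) -> float:
    """Time in microseconds needed to put frame_bytes on the wire."""
    return frame_bytes * 8 * 1e6 / link_rate_bps


for name, rate in [("10 Mbit/s", 10e6), ("100 Mbit/s", 100e6), ("1 Gbit/s", 1e9)]:
    print(f"{name}: {guard_band_us(MAX_FRAME_ON_WIRE_BYTES, rate):.2f} us")
# 10 Mbit/s: 1233.60 us
# 100 Mbit/s: 123.36 us
# 1 Gbit/s: 12.34 us
```

Since no time slice can be shorter than the guard band, these values are also lower limits for the slice length at each speed.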

To partially mitigate the loss of bandwidth through the guard band, the standard IEEE 802.1Qbv includes a length-aware scheduling mechanism. This mechanism is used when store-and-forward switching is utilized: after the full reception of an Ethernet frame that needs to be transmitted on a port where the guard band is in effect, the scheduler checks the overall length of the frame. If the frame can fit completely inside the guard band, without any infringement of the following high priority slice, the scheduler can send this frame, despite an active guard band, and reduce the waste of bandwidth. This mechanism, however, cannot be used when cut-through switching is enabled, since the total length of the Ethernet frame needs to be known a priori. Therefore, when cut-through switching is used to minimize end-to-end latency, the waste of bandwidth will still occur. Also, this does not help with the minimum achievable cycle time. Therefore, length-aware scheduling is an improvement, but cannot mitigate all drawbacks that are introduced by the guard band.
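The length-aware decision amounts to a single comparison once the frame length is known. The sketch below is one possible formulation under stated assumptions: the function and parameter names are hypothetical, and the 20 bytes of overhead stand for the preamble, SFD and interframe spacing counted in the frame length calculation earlier in this section.

```python
def may_start_transmission(now_ns, next_critical_slice_ns, frame_bytes,
                           link_rate_bps, overhead_bytes=20):
    """Return True if the frame finishes before the critical slice begins.

    frame_bytes: frame length from destination address through CRC, known
    only after full reception (store-and-forward).
    overhead_bytes: preamble + SFD (8) and interframe spacing (12).
    """
    wire_bits = (frame_bytes + overhead_bytes) * 8
    tx_time_ns = wire_bits * 1_000_000_000 / link_rate_bps
    return now_ns + tx_time_ns <= next_critical_slice_ns


# 10 us left before the critical slice on a 100 Mbit/s link.
print(may_start_transmission(0, 10_000, 64, 100_000_000))    # True
print(may_start_transmission(0, 10_000, 1522, 100_000_000))  # False
```

A cut-through switch cannot evaluate `frame_bytes` before it starts forwarding, which is why the check is unavailable in that mode.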

IEEE 802.3br and 802.1Qbu Interspersing Express Traffic (IET) and Frame Preemption

To further mitigate the negative effects from the guard bands, the IEEE working groups 802.1 and 802.3 have specified the frame pre-emption technology. The two working groups collaborated in this endeavour since the technology required both changes in the Ethernet Media Access Control (MAC) scheme that is under the control of IEEE 802.3, as well as changes in the management mechanisms that are under the control of IEEE 802.1. Due to this fact, frame pre-emption is described in two different standards documents: IEEE 802.1Qbu[8] for the bridge management component and IEEE 802.3br[9] for the Ethernet MAC component.

Frame preemption defines two MAC services for an egress port, preemptable MAC (pMAC) and express MAC (eMAC). Express frames can interrupt the transmission of preemptable frames. When transmission resumes, the MAC merge sublayer in the next bridge reassembles the frame fragments.

Preemption causes computational overhead in the link interface, as the operational context shall be transitioned to the express frame.

Figure 4 gives a basic example how frame pre-emption works. During the process of sending a best effort Ethernet frame, the MAC interrupts the frame transmission just before the start of the guard band. The partial frame is completed with a CRC and will be stored in the next switch to wait for the second part of the frame to arrive. After the high priority traffic in time slice 1 has passed and the cycle switches back to time slice 2, the interrupted frame transmission is resumed. Frame pre-emption always operates on a pure link-by-link basis and only fragments from one Ethernet switch to the next Ethernet switch, where the frame is reassembled. In contrast to fragmentation with the Internet Protocol (IP), no end-to-end fragmentation is supported.

Each partial frame is completed by a CRC32 for error detection. In contrast to the regular Ethernet CRC32, the last 16 bits are inverted to make a partial frame distinguishable from a regular Ethernet frame. In addition, the start of frame delimiter (SFD) is changed.

The support for frame pre-emption has to be activated on each link between devices individually. To signal the capability for frame pre-emption on a link, an Ethernet switch announces this capability through the LLDP (Link Layer Discovery Protocol). When a device receives such an LLDP announcement on a network port and supports frame pre-emption itself, it may activate the capability. There is no direct negotiation and activation of the capability on adjacent devices. Any device that receives the LLDP pre-emption announcement assumes that on the other end of the link, a device is present that can understand the changes in the frame format (changed CRC32 and SFD).

Frame pre-emption allows for a significant reduction of the guard band. The length of the guard band is now dependent on the precision of the frame pre-emption mechanism: how small is the minimum size of the frame that the mechanism can still pre-empt. IEEE 802.3br specifies the best accuracy for this mechanism at 64 byte - due to the fact that this is the minimum size of a still valid Ethernet frame. In this case, the guard band can be reduced to a total of 127 byte: 64 byte (minimum frame) + 63 byte (remaining length that cannot be pre-empted). All larger frames can be pre-empted again and therefore, there is no need to protect against this size with a guard band.

This minimizes the best effort bandwidth that is lost and also allows for much shorter cycle times at slower Ethernet speeds, such as 100 Mbit/s and below. Since the pre-emption takes place in hardware in the MAC, as the frame passes through, cut-through switching can be supported as well, since the overall frame size is not needed a priori. The MAC interface just checks in regular 64 byte intervals whether the frame needs to be pre-empted or not.
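The effect on short cycles can be estimated by comparing the share of a cycle consumed by the guard band with and without pre-emption. This sketch assumes exactly one guard band per cycle and uses a made-up 250 μs cycle; the function name is hypothetical, and the 127-byte figure is taken as stated above without adding preamble or interframe spacing.

```python
def guard_band_share(guard_bytes: int, link_rate_bps: float,
                     cycle_time_us: float) -> float:
    """Fraction of one cycle occupied by a single guard band."""
    guard_us = guard_bytes * 8 * 1e6 / link_rate_bps
    return guard_us / cycle_time_us


LINK = 100e6    # 100 Mbit/s
CYCLE = 250.0   # assumed cycle time in microseconds

without_preemption = guard_band_share(1542, LINK, CYCLE)
with_preemption = guard_band_share(64 + 63, LINK, CYCLE)

print(f"without pre-emption: {without_preemption:.1%}")  # 49.3%
print(f"with pre-emption:    {with_preemption:.1%}")     # 4.1%
```

Under these assumptions nearly half of the cycle would be silent without pre-emption, which is why short cycles on slow links are only practical with it.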

The combination of time synchronization, the IEEE 802.1Qbv scheduler and frame pre-emption already constitutes an effective set of standards that can be utilized to guarantee the coexistence of different traffic categories on a network while also providing end-to-end latency guarantees. This will be enhanced further as new IEEE 802.1 specifications, such as 802.1Qch, are finalized.

Shortcomings of IEEE 802.1Qbv/bu

Overall, the time-aware scheduler has high implementation complexity and its use of bandwidth is not efficient. Task and event scheduling in endpoints has to be coupled with the gate scheduling of the traffic shaper in order to lower the latencies. A critical shortcoming is some delay incurred when an end-point streams unsynchronized data, due to the waiting time for the next time-triggered window.

The time-aware scheduler requires tight synchronization of its time-triggered windows, so all bridges on the stream path must be synchronized. However, synchronizing TSN bridge frame selection and transmission time is nontrivial even in moderately sized networks and requires a fully managed solution.

Frame preemption is hard to implement and has not seen wide industry support.

IEEE 802.1Qcr Asynchronous Traffic Shaping

Credit-based, time-aware and cyclic (peristaltic) shapers require network-wide coordinated time and utilize network bandwidth inefficiently, as they enforce packet transmission at periodic cycles. The IEEE 802.1Qcr Asynchronous Traffic Shaper (ATS) operates asynchronously based on local clocks in each bridge, improving link utilization for mixed traffic types, such as periodic with arbitrary periods, sporadic (event driven), and rate-constrained.

ATS employs the urgency-based scheduler (UBS), which prioritizes urgent traffic using per-class queuing and per-stream reshaping. Asynchronicity is achieved by interleaved shaping with traffic characterization based on Token Bucket Emulation (TBE), to eliminate the burstiness cascade effects of per-class shaping. The TBE shaper controls the traffic by average transmission rate, but allows a certain level of burst traffic. When there is a sufficient number of tokens in the bucket, transmission starts immediately; otherwise the queue's gate closes for the time needed to accumulate enough tokens.
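The token bucket behaviour described above can be sketched in a few lines. This is a minimal illustration of a generic token bucket, not the eligibility-time computation specified in IEEE 802.1Qcr; the class name, the byte-based units, and the assumption that the bucket starts full are all hypothetical.

```python
class TokenBucketShaper:
    """Token bucket sketch: average rate with a bounded burst allowance."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float):
        self.rate = rate_bytes_per_s
        self.burst = burst_bytes
        self.tokens = burst_bytes  # bucket starts full (assumption)
        self.last_time = 0.0       # time of the last token update

    def eligibility_time(self, arrival: float, frame_bytes: int) -> float:
        """Return the earliest time at which the frame may be transmitted.

        Frames are assumed to arrive in order and to fit into the bucket
        (frame_bytes <= burst_bytes).
        """
        now = max(arrival, self.last_time)  # keep FIFO order within the queue
        # Refill for the elapsed time, capped at the bucket size.
        self.tokens = min(self.burst,
                          self.tokens + (now - self.last_time) * self.rate)
        if self.tokens >= frame_bytes:
            send_time = now  # enough tokens: transmit immediately
            self.tokens -= frame_bytes
        else:
            # Gate stays closed until the missing tokens have accumulated.
            send_time = now + (frame_bytes - self.tokens) / self.rate
            self.tokens = 0.0
        self.last_time = send_time
        return send_time


shaper = TokenBucketShaper(rate_bytes_per_s=1000.0, burst_bytes=1500.0)
for arrival in (0.0, 0.0, 2.0):
    print(arrival, shaper.eligibility_time(arrival, 1000))
```

In this example the first frame is sent at once from the initial burst allowance, the second has to wait for tokens, and the third arrives after the bucket has refilled.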

The UBS is an improvement on Rate-Controlled Service Disciplines (RCSDs). It controls the selection and transmission of each individual frame at each hop, decoupling stream bandwidth from the delay bound by separating rate control from packet scheduling, and uses static priorities with First Come, First Served and Earliest Due Date First queuing.

UBS queuing has two levels of hierarchy: per-flow shaped queues, with fixed priority assigned by the upstream sources according to application-defined packet transmission times, allowing an arbitrary transmission period for each stream, and shared queues that merge streams with the same internal priority from several shapers. This separation of queuing has low implementation complexity while ensuring that frames with higher priority bypass lower-priority frames.

The shared queues are highly isolated, with policies for separate queues for frames from different transmitters, the same transmitter but different priority, and the same transmitter and priority but a different priority at the receiver. Queue isolation prevents propagation of malicious data, assuring that ordinary streams will get no interference, and enables flexible stream or transmitter blocking by administrative action. The minimum number of shared queues is the number of ports minus one, and more with additional isolation policies. Shared queues have scheduler-internal fixed priority, and frames are transmitted on a First Come, First Served basis.
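One way to picture the isolation policies is as a queue key built from the three attributes listed above. The sketch below is illustrative only: the class, its method names, and the use of the ingress port to identify the transmitter are assumptions, not part of IEEE 802.1Qcr.

```python
from collections import defaultdict, deque


class SharedQueues:
    """Shared queues of one egress port, keyed by the isolation criteria."""

    def __init__(self):
        self.queues = defaultdict(deque)

    def enqueue(self, frame, transmitter, tx_priority, rx_priority):
        # Frames share a queue only if transmitter, transmitter priority,
        # and receiver priority all match; each queue is served FIFO.
        self.queues[(transmitter, tx_priority, rx_priority)].append(frame)

    def queue_count(self):
        return len(self.queues)


# Egress port 0 of a hypothetical 4-port bridge: traffic from ports 1 to 3.
egress = SharedQueues()
for ingress_port in (1, 2, 3):
    egress.enqueue(f"frame-from-{ingress_port}", ingress_port, 5, 5)
print(egress.queue_count())  # one queue per other port
```

With a single priority everywhere, the number of queues equals the number of ports minus one; every additional priority combination adds further queues.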

Unlike time-triggered approaches such as TAS (Qbv) and CQF (Qch), worst-case clock synchronization inaccuracy does not decrease link utilization.

Selection of communication paths and fault-tolerance

IEEE 802.1Qca Path Control and Reservation (PCR)

IEEE 802.1Qca Path Control and Reservation (PCR) specifies extensions to the Intermediate System to Intermediate System (IS-IS) protocol to configure multiple paths in bridged networks.

The IEEE 802.1Qca standard uses Shortest Path Bridging (SPB) with a software-defined networking (SDN) hybrid mode: the IS-IS protocol handles basic functions, while the SDN controller manages explicit paths using Path Computation Elements (PCEs) at dedicated server nodes. IEEE 802.1Qca integrates control protocols to manage multiple topologies, configure an explicit forwarding path (a predefined path for each stream), reserve bandwidth, provide data protection and redundancy, and distribute flow synchronization and flow control messages. These are derived from Equal Cost Tree (ECT), Multiple Spanning Tree Instance (MSTI) and Internal Spanning Tree (IST), and Explicit Tree (ET) protocols.

IEEE 802.1CB Frame Replication and Elimination for Reliability (FRER)

IEEE 802.1CB Frame Replication and Elimination for Reliability (FRER) sends duplicate copies of each frame over multiple disjoint paths, to provide proactive seamless redundancy for control applications that cannot tolerate packet losses.

The packet replication can use traffic class and path information to minimize network congestion. Each replicated frame has a sequence identification number, used to re-order and merge frames and to discard duplicates.
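Duplicate elimination by sequence number can be sketched as below. This is a deliberately simplified illustration and not one of the recovery algorithms defined in IEEE 802.1CB: it only discards duplicates, performs no re-ordering, and ignores sequence number wrap-around. The class name and the history length are assumptions.

```python
class SequenceRecovery:
    """Discard duplicate frames of one stream by sequence number."""

    def __init__(self, history_len: int = 16):
        self.history_len = history_len  # how far back duplicates are tracked
        self.highest = None             # highest sequence number accepted
        self.seen = set()               # recently accepted sequence numbers

    def accept(self, seq: int) -> bool:
        """Return True if the frame should be passed on, False if dropped."""
        if self.highest is None:
            self.highest = seq
            self.seen = {seq}
            return True
        if seq <= self.highest - self.history_len:
            return False  # too old to tell apart from a duplicate
        if seq in self.seen:
            return False  # copy already received over another path
        self.seen.add(seq)
        if seq > self.highest:
            self.highest = seq
            # Forget sequence numbers that fell out of the history window.
            self.seen = {s for s in self.seen
                         if s > self.highest - self.history_len}
        return True


recovery = SequenceRecovery(history_len=4)
print([recovery.accept(s) for s in (1, 1, 2, 3, 2, 5, 4)])
```

The first copy of each frame is passed on regardless of the path it arrived on, and later copies are dropped, so the loss of a single path does not interrupt the stream.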

FRER requires centralized configuration management and needs to be used with 802.1Qcc and 802.1Qca. The industrial fault-tolerance protocols HSR and PRP, specified in IEC 62439-3, are supported.

Current projects

IEEE 802.1CS Link-Local Registration Protocol

MRP state data for a stream takes 1500 bytes. With additional traffic streams and larger networks, the size of the database proportionally increases and MRP updates between bridge neighbors significantly slow down. The Link-Local Registration Protocol (LRP) is optimized for a larger database size of about 1 Mbyte with efficient replication that allows incremental updates. Unresponsive nodes with stale data are automatically discarded. While MRP is application specific, with each registered application defining its own set of operations, LRP is application neutral.
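To put these figures in proportion, the short calculation below relates the per-stream state size to the database size. It assumes that "about 1 Mbyte" means 10^6 bytes and that per-stream state would occupy the same 1500 bytes in either protocol; both are simplifications for illustration, and the names are hypothetical.

```python
MRP_STATE_BYTES_PER_STREAM = 1500  # figure quoted in the text
LRP_DATABASE_BYTES = 1_000_000     # "about 1 Mbyte", taken as 10**6 (assumption)


def database_bytes(num_streams: int) -> int:
    """State size grows linearly with the number of registered streams."""
    return num_streams * MRP_STATE_BYTES_PER_STREAM


# Number of 1500-byte stream records that fit into the assumed database size.
streams_in_lrp_db = LRP_DATABASE_BYTES // MRP_STATE_BYTES_PER_STREAM
print(database_bytes(100), streams_in_lrp_db)
```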

IEEE 802.1Qdd Resource Allocation Protocol

SRP and MSRP are primarily designed for AV applications: their distributed configuration model is limited to Stream Reservation (SR) Classes A and B defined by the Credit-Based Shaper (CBS), whereas IEEE 802.1Qcc includes a more centralized CNC configuration model supporting all new TSN features such as additional shapers, frame preemption, and path redundancy.

The IEEE P802.1Qdd project updates the distributed configuration model by defining new peer-to-peer Resource Allocation Protocol (RAP) signaling built upon the P802.1CS Link-Local Registration Protocol. RAP will improve scalability and provide dynamic reservation for a larger number of streams, with support for redundant transmission over multiple paths in 802.1CB FRER and autoconfiguration of sequence recovery.

RAP supports the 'topology-independent per-hop latency calculation' capability of TSN shapers such as 802.1Qch Cyclic Queuing and Forwarding (CQF) and P802.1Qcr Asynchronous Traffic Shaping (ATS). It will also improve performance under high load and support proxying and enhanced diagnostics, all while maintaining backward compatibility and interoperability with MSRP.

IEEE 802.1ABdh Link Layer Discovery Protocol v2

IEEE P802.1ABdh Station and Media Access Control Connectivity Discovery - Support for Multiframe Protocol Data Units (LLDPv2)[10][11] updates LLDP to support the IETF Link State Vector Routing protocol[12] and to improve the efficiency of protocol messages.

YANG data models

The IEEE 802.1Qcp standard implements the YANG data model to provide a Universal Plug-and-Play (UPnP) framework for status reporting and configuration of equipment such as Media Access Control (MAC) Bridges, Two-Port MAC Relays (TPMRs), Customer Virtual Local Area Network (VLAN) Bridges, and Provider Bridges, and to support the 802.1X Security and 802.1AX Link Aggregation standards.

YANG is a data modeling language for configuration and state data, notifications, and remote procedure calls, used to set up device configuration with network management protocols such as NETCONF and RESTCONF.

DetNet

The IETF Deterministic Networking (DetNet) Working Group focuses on defining deterministic data paths with high reliability and bounds on latency, loss, and packet delay variation (jitter), for applications such as audio and video streaming, industrial automation, and vehicle control.

The goal of Deterministic Networking is to migrate time-critical, high-reliability industrial and audio-video applications from special-purpose Fieldbus networks to IP packet networks. To achieve this, DetNet uses resource allocation to manage buffer sizes and transmission rates in order to satisfy end-to-end latency requirements. Service protection against failures is provided by redundancy over multiple paths, and explicit routes reduce packet loss and reordering. The same physical network shall handle both time-critical reserved traffic and regular best-effort traffic, and unused reserved bandwidth shall be released for best-effort traffic.

DetNet operates on IP Layer 3 routed segments, using a software-defined networking layer to provide IntServ and DiffServ integration, and delivers services over lower Layer 2 bridged segments using technologies such as MPLS and IEEE 802.1 AVB/TSN.[13]

Traffic Engineering (TE) routing protocols translate the DetNet flow specification to AVB/TSN controls for queuing, shaping, and scheduling algorithms, such as the IEEE 802.1Qav credit-based shaper, the IEEE 802.1Qbv time-triggered shaper with a rotating time scheduler, IEEE 802.1Qch synchronized double buffering, 802.1Qbu/802.3br Ethernet packet pre-emption, and 802.1CB frame replication and elimination for reliability. In addition, protocol interworking defined by IEEE 802.1CB is used to advertise TSN sub-network capabilities to DetNet flows via the Active Destination MAC and VLAN Stream identification functions. DetNet flows are matched by destination MAC address, VLAN ID and priority parameters to Stream ID and QoS requirements for talkers and listeners in the AVB/TSN sub-network.[14]
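The matching step in the last sentence can be pictured as a table lookup. The sketch below is purely illustrative: the table contents, the type and field names, and the latency figure are invented for the example and do not come from IEEE 802.1CB or the DetNet documents.

```python
from typing import NamedTuple, Optional


class FlowKey(NamedTuple):
    dst_mac: str
    vlan_id: int
    priority: int


class StreamEntry(NamedTuple):
    stream_id: str
    max_latency_us: int  # hypothetical QoS requirement


# Hypothetical mapping from flow parameters to a TSN stream.
STREAM_TABLE = {
    FlowKey("01:00:5e:00:00:01", 100, 6): StreamEntry("stream-1", 500),
}


def identify_stream(dst_mac: str, vlan_id: int,
                    priority: int) -> Optional[StreamEntry]:
    """Return the stream matching the frame's parameters, or None."""
    return STREAM_TABLE.get(FlowKey(dst_mac.lower(), vlan_id, priority))


print(identify_stream("01:00:5E:00:00:01", 100, 6))
print(identify_stream("01:00:5E:00:00:01", 100, 0))  # no matching stream
```

Frames that match no entry would be handled as ordinary traffic in this model.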

Standards

| Standard | Title | Status | Publication Date |
|---|---|---|---|
| IEEE 802.1BA-2021 | TSN profile for Audio Video Bridging (AVB) Systems | Current[16][17] | 12 December 2021 |
| IEEE 802.1AS-2020 | Timing and Synchronization for Time-Sensitive Applications (gPTP) | Current,[18][19] amended by Cor 1-2021[20] | 30 January 2020 |
| IEEE 802.1AS-rev | Timing and Synchronization for Time-Sensitive Applications (gPTP) | In preparation[21] | 5 June 2023 |
| IEEE 802.1ASdm | Timing and Synchronization for Time-Sensitive Applications - Hot Standby | Draft 2.0[22] | 29 January 2024 |
| IEEE 802.1ASds | IEEE 802.3 Clause 4 Media Access Control (MAC) operating in half-duplex | Draft 0.2[23] | 6 February 2024 |
| IEEE 802.1Qav-2009 | Forwarding and Queuing Enhancements for Time-Sensitive Streams | Incorporated into IEEE 802.1Q | 5 January 2010 |
| IEEE 802.1Qat-2010 | Stream Reservation Protocol (SRP) | Incorporated into IEEE 802.1Q | 30 September 2010 |
| IEEE 802.1aq-2012 | Shortest Path Bridging (SPB) | Incorporated into IEEE 802.1Q | 29 March 2012 |
| IEEE 802.1Qbp-2014 | Equal Cost Multiple Paths (for Shortest Path Bridging) | Incorporated into IEEE 802.1Q | 27 March 2014 |
| IEEE 802.1Qbv-2015 | Enhancements for Scheduled Traffic | Incorporated into IEEE 802.1Q | 18 March 2016 |
| IEEE 802.1Qbu-2016 | Frame Preemption (requires IEEE 802.3br Interspersing Express Traffic) | Incorporated into IEEE 802.1Q | 30 August 2016 |
| IEEE 802.1Qca-2015 | Path Control and Reservation | Incorporated into IEEE 802.1Q | 11 March 2016 |
| IEEE 802.1Qch-2017 | Cyclic Queuing and Forwarding | Incorporated into IEEE 802.1Q | 28 June 2017 |
| IEEE 802.1Qci-2017 | Per-Stream Filtering and Policing | Incorporated into IEEE 802.1Q | 28 September 2017 |
| IEEE 802.1Qcc-2018 | Stream Reservation Protocol (SRP) Enhancements and Performance Improvements[24] | Incorporated into IEEE 802.1Q | 31 October 2018 |
| IEEE 802.1Qcy-2019 | Virtual Station Interface (VSI) Discovery and Configuration Protocol (VDP)[25] | Incorporated into IEEE 802.1Q | 4 June 2018 |
| IEEE 802.1Qcr-2020 | Asynchronous Traffic Shaping[26][27] | Incorporated into IEEE 802.1Q | 6 November 2020 |
| IEEE 802.1Q-2022 | Bridges and Bridged Networks (incorporates 802.1Qav/at/aq/bp/bv/bu/ca/ci/ch/cc/cy/cr and other amendments) | Current[28][29] | 22 December 2022 |
| IEEE 802.1Q-Rev | Bridges and Bridged Networks (incorporates 802.1Qcz/Qcj and other amendments) | In preparation[30] | 5 June 2023 |
| IEEE 802.1Qcj | Automatic Attachment to Provider Backbone Bridging (PBB) services | Current[31][32] | 17 November 2023 |
| IEEE 802.1Qcz | Congestion Isolation | Current[33][34] | 4 August 2023 |
| IEEE 802.1Qdd | Resource Allocation Protocol | Draft 0.8[35] | 5 December 2023 |
| IEEE 802.1Qdj | Configuration enhancements for TSN | Draft 1.2[36] | 16 August 2023 |
| IEEE 802.1Qdq | Shaper Parameter Settings for Bursty Traffic Requiring Bounded Latency | Draft 0.4[37] | 17 May 2023 |
| IEEE 802.1Qdt | Priority-based Flow Control Enhancements | Draft 0.2[38] | 15 September 2022 |
| IEEE 802.1Qdv | Enhancements to Cyclic Queuing and Forwarding | Draft 0.4[39] | 14 November 2023 |
| IEEE 802.1Qdw | Source Flow Control | In preparation[40] | 21 September 2023 |
| IEEE 802.1AB-2016 | Station and Media Access Control Connectivity Discovery (Link Layer Discovery Protocol (LLDP)) | Current[41] | 11 March 2016 |
| IEEE 802.1ABdh-2021 | Station and Media Access Control Connectivity Discovery - Support for Multiframe Protocol Data Units (LLDPv2) | Current[42][43] | 21 September 2021 |
| IEEE 802.1AX-2020 | Link Aggregation | Current[44][45] | 29 May 2020 |
| IEEE 802.1CB-2017 | Frame Replication and Elimination for Reliability | Current[46] | 27 October 2017 |
| IEEE 802.1CBdb-2021 | FRER Extended Stream Identification Functions | Current[47][48] | 22 September 2021 |
| IEEE 802.1CM-2018 | Time-Sensitive Networking for Fronthaul | Current[49][50] | 8 June 2018 |
| IEEE 802.1CMde-2020 | Enhancements to Fronthaul Profiles to Support New Fronthaul Interface, Synchronization, and Syntonization Standards | Current[51] | 16 October 2020 |
| IEEE 802.1CS-2020 | Link-Local Registration Protocol | Current[52][53] | 3 December 2020 |
| IEEE 802.1CQ | Multicast and Local Address Assignment | Draft 0.8[54] | 31 July 2022 |
| IEEE 802.1DC | Quality of Service Provision by Network Systems | Draft 3.1[55] | 1 March 2024 |
| IEEE 802.1DF | TSN Profile for Service Provider Networks | Draft 0.1[56] | 21 December 2020 |
| IEEE 802.1DG | TSN Profile for Automotive In-Vehicle Ethernet Communications | Draft 2.4[57] | 13 February 2024 |
| IEEE 802.1DP / SAE AS 6675 | TSN for Aerospace Onboard Ethernet Communications | Draft 1.1[58] | 29 December 2023 |
| IEEE 802.1DU | Cut-Through Forwarding Bridges and Bridged Networks | Draft 0.2[59] | 4 October 2023 |
| IEC/IEEE 60802 | TSN Profile for Industrial Automation | Draft 2.2[60] | 22 February 2024 |

Related projects:

| Standard | Title | Status | Updated date |
|---|---|---|---|
| IEEE 802.3br | Interspersing Express Traffic[61] | Published | June 30, 2016 |

References

- ^ "IEEE 802.1 Time-Sensitive Networking Task Group". www.ieee802.org.

- ^ "IEEE 802.1 AV Bridging Task Group". www.ieee802.org.

- ^ "802.1Q-2018 Bridges and Bridged Networks – Revision". 1.ieee802.org.

- ^ "IEEE 802.1: 802.1Qbv - Enhancements for Scheduled Traffic". www.ieee802.org.

- ^ "On the Validity of Credit-Based Shaper Delay Guarantees in Decentralized Reservation Protocols" (PDF). www.ieee802.org.

- ^ "Class A Bridge Latency Calculations" (PDF). www.ieee802.org.

- ^ Maile, Lisa; Hielscher, Kai-Steffen; German, Reinhard (May 2020). "Network Calculus Results for TSN: An Introduction". 2020 Information Communication Technologies Conference (ICTC). pp. 131–140. doi:10.1109/ICTC49638.2020.9123308. ISBN 978-1-7281-6776-3. S2CID 220072988. Retrieved 25 March 2021.

- ^ "IEEE 802.1: 802.1Qbu - Frame Preemption". www.ieee802.org.

- ^ "IEEE P802.3br Interspersing Express Traffic Task Force". www.ieee802.org.

- ^ "P802.1ABdh – Support for Multiframe Protocol Data Units".

- ^ "IEEE 802 PARs under consideration". www.ieee802.org.

- ^ "Link State Vector Routing (lsvr)". datatracker.ietf.org.

- ^ "Deterministic Networking (detnet) - Documents". datatracker.ietf.org.

- ^ Varga, Balazs; Farkas, János; Malis, Andrew G.; Bryant, Stewart (8 June 2021). "draft-ietf-detnet-ip-over-tsn-01 - DetNet Data Plane: IP over IEEE 802.1 Time Sensitive Networking (TSN)". datatracker.ietf.org.

- ^ https://www.ieee802.org/1/files/public/docs2021/admin-TSN-summary-1121-v01.pdf

- ^ "IEEE Sa - IEEE 802.1Ba-2011".

- ^ "802.1BA-Rev – Revision to IEEE STD 802.1BA-2011".

- ^ "IEEE 802.1AS-2020 Local and Metropolitan Area Networks - Timing and Synchronization for Time-Sensitive Applications". standards.ieee.org. IEEE.

- ^ "P802.1AS-2020 – Timing and Synchronization for Time-Sensitive Applications". 1.ieee802.org.

- ^ "IEEE 802.1AS-2020 Local and Metropolitan Area Networks - Timing and Synchronization for Time-Sensitive Applications - Corrigendum 1: Technical and Editorial Correction". standards.ieee.org. IEEE.

- ^ "802.1AS-2020-Revision – Timing and Synchronization for Time-Sensitive Applications". 1.ieee802.org.

- ^ "P802.1ASdm – Hot Standby".

- ^ "P802.1ASds – Support for the IEEE Std 802.3 Clause 4 Media Access Control (MAC) operating in half-duplex".

- ^ "802.1Qcc-2018 - IEEE Standard for Local and Metropolitan Area Networks--Bridges and Bridged Networks -- Amendment 31: Stream Reservation Protocol (SRP) Enhancements and Performance Improvements". standards.ieee.org.

- ^ "802.1Qcy-2019 - IEEE Standard for Local and Metropolitan Area Networks--Bridges and Bridged Networks Amendment 32: Virtual Station Interface (VSI) Discovery and Configuration Protocol (VDP) Extension to Support Network Virtualization Overlays Over Layer 3 (NVO3)". standards.ieee.org.

- ^ "IEEE 802.1Qcr-2020 Local and Metropolitan Area Networks - Bridges and Bridged Networks - Amendment 34: Asynchronous Traffic Shaping". standards.ieee.org.

- ^ "P802.1Qcr – Bridges and Bridged Networks Amendment: Asynchronous Traffic Shaping". 1.ieee802.org.

- ^ "IEEE 802.1Q-2022 Local and Metropolitan Area Networks - Bridges and Bridged Networks". standards.ieee.org.

- ^ "802.1Q-2022 – Bridges and Bridged Networks".

- ^ "P802.1Q-Rev – Revision to IEEE Standard 802.1Q-2022".

- ^ "IEEE 802.1Qcj-2023 Local and Metropolitan Area Networks - Bridges and Bridged Networks - Amendment 37: Automatic Attachment to Provider Backbone Bridging (PBB) Services". standards.ieee.org.

- ^ "P802.1Qcj – Automatic Attachment to Provider Backbone Bridging (PBB) services". 1.ieee802.org.

- ^ "IEEE 802.1Qcz-2023 Local and Metropolitan Area Networks - Bridges and Bridged Networks - Amendment 35: Congestion Isolation". standards.ieee.org.

- ^ "P802.1Qcz – Congestion Isolation". 1.ieee802.org.

- ^ "P802.1Qdd – Resource Allocation Protocol". 1.ieee802.org.

- ^ "P802.1Qdj – Configuration enhancements for TSN". 1.ieee802.org.

- ^ "P802.1Qdq – Shaper Parameter Settings for Bursty Traffic Requiring Bounded Latency".

- ^ "P802.1Qdt – Priority-based Flow Control Enhancements".

- ^ "P802.1Qdv – Enhancements to Cyclic Queuing and Forwarding".

- ^ "P802.1Qdw – Source Flow Control".

- ^ "IEEE Sa - IEEE 802.1Ab-2016".

- ^ "IEEE 802.1ABdh-2021 Station and Media Access Control Connectivity Discovery — Amendment: Support for Multiframe Protocol Data Units". standards.ieee.org.

- ^ "P802.1ABdh – Support for Multiframe Protocol Data Units".

- ^ "IEEE 802.1AX-2020 Local and Metropolitan Area Networks - Link Aggregation".

- ^ "802.1AX-2020 – Link Aggregation". 1.ieee802.org.

- ^ "IEEE Sa - IEEE 802.1Cb-2017".

- ^ "IEEE 802.1CBdb Local and Metropolitan Area Networks - Frame Replication and Elimination for Reliability - Amendment 2: Extended Stream Identification Functions". standards.ieee.org.

- ^ "P802.1CBdb – FRER Extended Stream Identification Functions". 1.ieee802.org.

- ^ "IEEE Sa - IEEE 802.1Cm-2018".

- ^ "802.1CM-2018 – Time-Sensitive Networking for Fronthaul".

- ^ "P802.1CMde – Enhancements to Fronthaul Profiles to Support New Fronthaul Interface, Synchronization, and Syntonization Standards". 1.ieee802.org.

- ^ "IEEE Sa - IEEE 802.1Cs-2020".

- ^ "P802.1CS – Link-local Registration Protocol". 1.ieee802.org.

- ^ "P802.1CQ: Multicast and Local Address Assignment". 1.ieee802.org.

- ^ "P802.1DC – Quality of Service Provision by Network Systems". 1.ieee802.org.

- ^ "P802.1DF – TSN Profile for Service Provider Networks". 1.ieee802.org.

- ^ "P802.1DG – TSN Profile for Automotive In-Vehicle Ethernet Communications". 1.ieee802.org.

- ^ "P802.1DP – TSN for Aerospace Onboard Ethernet Communications". 1.ieee802.org.

- ^ "P802.1DU – Cut-Through Forwarding Bridges and Bridged Networks". 1.ieee802.org.

- ^ "IEC/IEEE 60802 TSN Profile for Industrial Automation". 1.ieee802.org.

- ^ "Interspersing Express Traffic Task Force".

External links

- IEEE 802.1 Time-Sensitive Networking Task Group

- IEEE 802.1 public document archive

- Specification for IEEE TSN Standards from Avnu Alliance

- Real-time Ethernet – redefined (in German)

- Time Sensitive Networking (TSN) Vision: Unifying Business & Industrial Automation

- Is TSN Activity Igniting Another Fieldbus WAR?

- research project related to TSN applications in aircraft

- TSN Training, Marc Boyer (ONERA), Pierre Julien Chaine (Airbus Defence and Space)