Transitive closure

| Transitive binary relations | ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

All definitions tacitly require the homogeneous relation be transitive: for all if and then |

In mathematics, the transitive closure R+ of a homogeneous binary relation R on a set X is the smallest relation on X that contains R and is transitive. For finite sets, "smallest" can be taken in its usual sense, of having the fewest related pairs; for infinite sets R+ is the unique minimal transitive superset of R.

For example, if X is a set of airports and x R y means "there is a direct flight from airport x to airport y" (for x and y in X), then the transitive closure of R on X is the relation R+ such that x R+ y means "it is possible to fly from x to y in one or more flights".

More formally, the transitive closure of a binary relation R on a set X is the smallest (w.r.t. ⊆) transitive relation R+ on X such that R ⊆ R+; see Lidl & Pilz (1998, p. 337). We have R+ = R if, and only if, R itself is transitive.

Conversely, transitive reduction adduces a minimal relation S from a given relation R such that they have the same closure, that is, S+ = R+; however, many different S with this property may exist.

Both transitive closure and transitive reduction are also used in the closely related area of graph theory.

Transitive relations and examples[edit]

A relation R on a set X is transitive if, for all x, y, z in X, whenever x R y and y R z then x R z. Examples of transitive relations include the equality relation on any set, the "less than or equal" relation on any linearly ordered set, and the relation "x was born before y" on the set of all people. Symbolically, this can be denoted as: if x < y and y < z then x < z.

One example of a non-transitive relation is "city x can be reached via a direct flight from city y" on the set of all cities. Simply because there is a direct flight from one city to a second city, and a direct flight from the second city to the third, does not imply there is a direct flight from the first city to the third. The transitive closure of this relation is a different relation, namely "there is a sequence of direct flights that begins at city x and ends at city y". Every relation can be extended in a similar way to a transitive relation.

An example of a non-transitive relation with a less meaningful transitive closure is "x is the day of the week after y". The transitive closure of this relation is "some day x comes after a day y on the calendar", which is trivially true for all days of the week x and y (and thus equivalent to the Cartesian square, which is "x and y are both days of the week").

Existence and description[edit]

For any relation R, the transitive closure of R always exists. To see this, note that the intersection of any family of transitive relations is again transitive. Furthermore, there exists at least one transitive relation containing R, namely the trivial one: X × X. The transitive closure of R is then given by the intersection of all transitive relations containing R.

For finite sets, we can construct the transitive closure step by step, starting from R and adding transitive edges. This gives the intuition for a general construction. For any set X, we can prove that transitive closure is given by the following expression

where is the i-th power of R, defined inductively by

and, for ,

where denotes composition of relations.

To show that the above definition of R+ is the least transitive relation containing R, we show that it contains R, that it is transitive, and that it is the smallest set with both of those characteristics.

- : contains all of the , so in particular contains .

- is transitive: If , then and for some by definition of . Since composition is associative, ; hence by definition of and .

- is minimal, that is, if is any transitive relation containing , then : Given any such , induction on can be used to show for all as follows: Base: by assumption. Step: If holds, and , then and for some , by definition of . Hence, by assumption and by induction hypothesis. Hence by transitivity of ; this completes the induction. Finally, for all implies by definition of .

Properties[edit]

The intersection of two transitive relations is transitive.

The union of two transitive relations need not be transitive. To preserve transitivity, one must take the transitive closure. This occurs, for example, when taking the union of two equivalence relations or two preorders. To obtain a new equivalence relation or preorder one must take the transitive closure (reflexivity and symmetry—in the case of equivalence relations—are automatic).

In graph theory[edit]

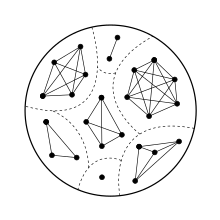

In computer science, the concept of transitive closure can be thought of as constructing a data structure that makes it possible to answer reachability questions. That is, can one get from node a to node d in one or more hops? A binary relation tells you only that node a is connected to node b, and that node b is connected to node c, etc. After the transitive closure is constructed, as depicted in the following figure, in an O(1) operation one may determine that node d is reachable from node a. The data structure is typically stored as a Boolean matrix, so if matrix[1][4] = true, then it is the case that node 1 can reach node 4 through one or more hops.

The transitive closure of the adjacency relation of a directed acyclic graph (DAG) is the reachability relation of the DAG and a strict partial order.

The transitive closure of an undirected graph produces a cluster graph, a disjoint union of cliques. Constructing the transitive closure is an equivalent formulation of the problem of finding the components of the graph.[1]

In logic and computational complexity[edit]

The transitive closure of a binary relation cannot, in general, be expressed in first-order logic (FO). This means that one cannot write a formula using predicate symbols R and T that will be satisfied in any model if and only if T is the transitive closure of R. In finite model theory, first-order logic (FO) extended with a transitive closure operator is usually called transitive closure logic, and abbreviated FO(TC) or just TC. TC is a sub-type of fixpoint logics. The fact that FO(TC) is strictly more expressive than FO was discovered by Ronald Fagin in 1974; the result was then rediscovered by Alfred Aho and Jeffrey Ullman in 1979, who proposed to use fixpoint logic as a database query language.[2] With more recent concepts of finite model theory, proof that FO(TC) is strictly more expressive than FO follows immediately from the fact that FO(TC) is not Gaifman-local.[3]

In computational complexity theory, the complexity class NL corresponds precisely to the set of logical sentences expressible in TC. This is because the transitive closure property has a close relationship with the NL-complete problem STCON for finding directed paths in a graph. Similarly, the class L is first-order logic with the commutative, transitive closure. When transitive closure is added to second-order logic instead, we obtain PSPACE.

In database query languages[edit]

Since the 1980s Oracle Database has implemented a proprietary SQL extension CONNECT BY... START WITH that allows the computation of a transitive closure as part of a declarative query. The SQL 3 (1999) standard added a more general WITH RECURSIVE construct also allowing transitive closures to be computed inside the query processor; as of 2011 the latter is implemented in IBM Db2, Microsoft SQL Server, Oracle, PostgreSQL, and MySQL (v8.0+). SQLite released support for this in 2014.

Datalog also implements transitive closure computations.[4]

MariaDB implements Recursive Common Table Expressions, which can be used to compute transitive closures. This feature was introduced in release 10.2.2 of April 2016.[5]

Algorithms[edit]

Efficient algorithms for computing the transitive closure of the adjacency relation of a graph can be found in Nuutila (1995). Reducing the problem to multiplications of adjacency matrices achieves the least[citation needed] time complexity, viz. that of matrix multiplication (Munro 1971, Fischer & Meyer 1971), which is as of December 2020[update]. However, this approach is not practical since both the constant factors and the memory consumption for sparse graphs are high (Nuutila 1995, pp. 22–23, sect.2.3.3). The problem can also be solved by the Floyd–Warshall algorithm in , or by repeated breadth-first search or depth-first search starting from each node of the graph.

For directed graphs, Purdom's algorithm solves the problem by first computing its condensation DAG and its transitive closure, then lifting it to the original graph. Its runtime is , where is the number of edges between its strongly connected components.[6][7][8][9]

More recent research has explored efficient ways of computing transitive closure on distributed systems based on the MapReduce paradigm.[10]

See also[edit]

- Ancestral relation

- Deductive closure

- Reflexive closure

- Symmetric closure

- Transitive reduction (a smallest relation having the transitive closure of R as its transitive closure)

References[edit]

- ^ McColl, W. F.; Noshita, K. (1986), "On the number of edges in the transitive closure of a graph", Discrete Applied Mathematics, 15 (1): 67–73, doi:10.1016/0166-218X(86)90020-X, MR 0856101

- ^ (Libkin 2004:vii)

- ^ (Libkin 2004:49)

- ^ (Silberschatz et al. 2010:C.3.6)

- ^ "Recursive Common Table Expressions Overview". mariadb.com.

- ^ Purdom Jr., Paul (Mar 1970). "A transitive closure algorithm". BIT Numerical Mathematics. 10 (1): 76–94. doi:10.1007/BF01940892.

- ^ Paul W. Purdom Jr. (Jul 1968). A transitive closure algorithm (Computer Sciences Technical Report). Vol. 33. University of Wisconsin-Madison.

- ^ ""Purdom's algorithm" on AlgoWiki".

- ^ ""Transitive closure of a directed graph" on AlgoWiki".

- ^ (Afrati et al. 2011)

- Foto N. Afrati, Vinayak Borkar, Michael Carey, Neoklis Polyzotis, Jeffrey D. Ullman, Map-Reduce Extensions and Recursive Queries, EDBT 2011, March 22–24, 2011, Uppsala, Sweden, ISBN 978-1-4503-0528-0

- Aho, A. V.; Ullman, J. D. (1979). "Universality of data retrieval languages". Proceedings of the 6th ACM SIGACT-SIGPLAN Symposium on Principles of programming languages - POPL '79. pp. 110–119. doi:10.1145/567752.567763.

- Benedikt, M.; Senellart, P. (2011). "Databases". In Blum, Edward K.; Aho, Alfred V. (eds.). Computer Science. The Hardware, Software and Heart of It. pp. 169–229. doi:10.1007/978-1-4614-1168-0_10. ISBN 978-1-4614-1167-3.

- Heinz-Dieter Ebbinghaus; Jörg Flum (1999). Finite Model Theory (2nd ed.). Springer. pp. 123–124, 151–161, 220–235. ISBN 978-3-540-28787-2.

- Fischer, M.J.; Meyer, A.R. (Oct 1971). "Boolean matrix multiplication and transitive closure" (PDF). In Raymond E. Miller and John E. Hopcroft (ed.). Proc. 12th Ann. Symp. on Switching and Automata Theory (SWAT). IEEE Computer Society. pp. 129–131. doi:10.1109/SWAT.1971.4.

- Erich Grädel; Phokion G. Kolaitis; Leonid Libkin; Maarten Marx; Joel Spencer; Moshe Y. Vardi; Yde Venema; Scott Weinstein (2007). Finite Model Theory and Its Applications. Springer. pp. 151–152. ISBN 978-3-540-68804-4.

- Keller, U., 2004, Some Remarks on the Definability of Transitive Closure in First-order Logic and Datalog (unpublished manuscript)* Libkin, Leonid (2004), Elements of Finite Model Theory, Springer, ISBN 978-3-540-21202-7

- Lidl, R.; Pilz, G. (1998), Applied abstract algebra, Undergraduate Texts in Mathematics (2nd ed.), Springer, ISBN 0-387-98290-6

- Munro, Ian (Jan 1971). "Efficient determination of the transitive closure of a directed graph". Information Processing Letters. 1 (2): 56–58. doi:10.1016/0020-0190(71)90006-8.

- Nuutila, Esko (1995). Efficient transitive closure computation in large digraphs. Finnish Academy of Technology. ISBN 951-666-451-2. OCLC 912471702.

- Abraham Silberschatz; Henry Korth; S. Sudarshan (2010). Database System Concepts (6th ed.). McGraw-Hill. ISBN 978-0-07-352332-3. Appendix C (online only)

External links[edit]

- "Transitive closure and reduction", The Stony Brook Algorithm Repository, Steven Skiena.