User:Thermochap

| This is a Wikipedia user page. This is not an encyclopedia article or the talk page for an encyclopedia article. If you find this page on any site other than Wikipedia, you are viewing a mirror site. Be aware that the page may be outdated and that the user whom this page is about may have no personal affiliation with any site other than Wikipedia. The original page is located at https://en.wikipedia.org/wiki/User:Thermochap. |

Greetings!

I'm relatively new here, and not yet quite sure what to do with a user page. For the most part so far I've found the mechanics of interaction fairly self-explanatory, and the community by and large welcoming. I am particularly excited about the educational potential of Wikipedia. Thermochap (talk) 14:44, 21 January 2008 (UTC)

Perhaps I should note here that author contributions to Wikipedia are, by default, licensed under the GNU Free Documentation License, discussed in more detail here.

- Since the note above was posted, I've become even more interested in the potential of limited-access or scoped collaboration spaces built on platforms like that provided by MediaWiki software. One reason is that such spaces serve as platforms for locally implemented, non-byline evolution of shared idea-codes. Circulatory systems in multi-celled organisms similarly implemented methods for local, non-byline distribution of (e.g. enzymatic) molecule-codes, like the request for clotting agents local to the site of a wound, so this development might make new things possible in the world of ideas too. Thermochap (talk) 19:52, 7 April 2011 (UTC)

Can you imagine living with a ton of air sitting on you,

Wikipedia topics...

...to which I've contributed might include some of the entries in this table...

...and what else? Thermochap (talk) 10:36, 25 October 2013 (UTC)

Background

My academic home is in physics & astronomy, although I'm an applied mathematician at heart. The label thermochap is a contraction of "thermo chapters", which in most modern introductory-physics books (Tom Moore's text[1] being one exception) still introduce entropy, and hence the pivotal role of accessible-state uncertainty, last. This is despite the fact that the "entropy-first" approach took over senior-undergraduate thermal-physics texts nearly 50 years ago and, by virtue of its connection to information theory and Bayesian inference, makes the strengths and limits of relationships like the ideal gas law, equipartition, and mass action clear from the start.

With help from regional employers & facilities for nanocharacterization, I've been fortunate to have chances to explore nature on varied scales of space and time. Applications include development of mathematical tools for nano-microscopy, laboratory study of circumstellar dust, high-temperature materials for electronic or aerospace applications, and Bayesian informatic studies of data analysis, complex systems, & algorithmic simplicity.

Key developments include:

- robust log-probability tools for assessing community health that include species-diversity & available work as special cases,

- evidence for carbon droplets from laboratory study of unlayered-graphene condensed in red-giant atmospheres,

- other research involves digital darkfield decompositions, experimental detection of icosahedral twins, proper kinematics from the metric equation, electron zero-loss vs. fraction-deflection studies of nano-carbon, real-time sheet music, lattice fringe visibility analysis, effects of sample-bias on error in the mean, AFM study of alpha-recoil tracks in anisotropic minerals, industrially-important semiconductor crystal defects, empirical observations that lead to time-scale and size-scale awareness, solid-interface modeling of metal-oxide nanowire growth, ways to translate knowledge codes into live structures like idea-streams and attention-slice update reporting-mechanisms internal to a variety of organization-types (e.g. local communities, schools, families), and

- a proposal that led to a $10M Center for NanoScience building filled with 6&7-figure instruments for atom-scale detective work.

| Topic | Web Sites | Tools For Interaction | Papers |
|---|---|---|---|
| Labs at Home | Matt's Physics@Home | Don't Just Look: Measure!; Picture The Sound!; NanoExploration w/Electrons | A Fast Track Simulation; Openworld Experiment Draft |
| Model Selection & Net Surprisal | Bayesian Model Selection | Phil Gregory's book voicethread | Metric/Entropy-1st Surprises; Avogadro\Boltzmann Constants |
| Metric-First motion | Traveler Point Site | Introducing Newton voicethread; Metric relativity voicethread | One Map Two Clocks; One Class Period Draft |
| Evolving Complexity | Correlation-1st Informatics | Quantifying Risk Sampler; Fraction Infectious voicethread | Heat Capacity in Bits; Units for Coldness; Thermal Roots |
| Task-layer Multiplicity | Old Google Site; Ellie's Video Contest Page; New Google Site | Attention-Slice Sampler; Augmented Like Button | 1989 MSL-8857; Simplex Model; Community Health |

Single phrase project overviews

A few of our "small project" (and possible talk) topics highlighting "one-new-fact" might include traveler point dynamics, task layer multiplicity, moon baseball, single-slice electron optics, dimensionless units in cycles/radians & mole/molecule, coldness in GB/nJ, concept-selection, organism-centricity, correlation-based complexity, carbon rain & Milky Way nannites, total-sound videography, and what else? Thermochap (talk) 20:51, 24 December 2021 (UTC)

For instance, the "new fact" for:

- our TPD project[2][3][4][5][6] says e.g. that metric-first relativity opens the door to curved spacetime & accelerated frames in everyday life as well as to physics engines for accelerated interstellar travel,

- our TLM project[7][8][9] says e.g. that activity time looking in/out from skin, family & culture can quantify community-level health to help guide the development of public policy downstream,

- our ET baseball project says e.g. that one can keep field size + ball forces/energies unchanged by making the ball's mass proportional to 1/g (although ball momentum is then ∝ $g^{-1/2}$ and speed is ∝ $g^{1/2}$),

- our SSEO project says e.g. that single-slice electron optics[10] can give everyone a view of the nanoworld through "electron eyes" and help train operators as well as refine user/algorithm interfaces,

- our dimensionless units project[11] says e.g. that confusion is reduced if we make dimensionless units like cycles/radians, moles/molecules, and bits/nats explicit (wavevector is usually in radians/meter, angular momentum in Js/radian, Planck's constant in Js/cycle, Boltzmann constant in J/K per nat, heat capacity's E/kT in correlation-bits lost per 2-fold increase in thermal energy[12], etc.),

- our coldness (reciprocal temperature in GB/nJ) project[12][13][3][11] says e.g. that bits of subsystem correlation lost per unit thermal energy underlie temperature's wide-ranging utility and make inverted population (negative coldness) states easier to understand,

- our concept-selection project[3] says e.g. that the model least-surprised by available data (minimizing both complexity & disagreement) is likely the best bet even if your "soldier over scout" mindset tells you otherwise,

- our "o-centricity" project says e.g. that an awareness of the dynamics of molecule & idea codes may be obscured by our paleolithic obsession with the "bad vs. good guy" problem as a survival trait that has become a liability,

- our correlation-based complexity project[14] says e.g. that layers of complexity bloom with the breaking of symmetries relative to emergent gradients, boundaries, and pool edges thus integrating insights from observation of physical, biological as well as cultural evolution,

- our carbon rain project (detailed further below) says e.g. that slow-cooled carbon vapor droplets that brought your C-atoms to earth solidify as an unlayered-graphene composite diffusion barrier able to trap atoms in place for aeons,

- our Milky Way nannite project says e.g. that radiation pressure ejection from our solar system can seed many new star systems in a few galactic revolutions if we are clever and also able to take the long view,

- our TSV[15] patent[16] may be telling folks that real-time sheet-music apps offer a musician-accessible visual guide to any sound you encounter, including information not accessible by ear,

and what else? pFraundorf (talk) 12:48, 20 December 2021 (UTC)
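The ET baseball scaling can be checked with a few lines of arithmetic. The sketch below assumes, for illustration only, that field size tracks the projectile range v²/g and that "ball energies" means the kinetic energy ½mv²; the Earth reference values (g = 9.81 m/s², a 0.145 kg ball launched at 40 m/s) and the function name `scaled_ball` are hypothetical choices, not part of the project itself.

```python
import math

EARTH_G = 9.81  # m/s^2, assumed Earth reference gravity


def scaled_ball(g: float, mass_earth: float = 0.145, speed_earth: float = 40.0) -> dict:
    """Scale a ball's mass and launch speed from Earth gravity to surface gravity g.

    Hypothetical assumptions: field size tracks the range v**2 / g (so v ∝ sqrt(g)),
    and kinetic energy 0.5*m*v**2 is held fixed (so m ∝ 1/g).
    """
    ratio = g / EARTH_G
    mass = mass_earth / ratio               # m ∝ 1/g
    speed = speed_earth * math.sqrt(ratio)  # v ∝ g**0.5
    return {
        "mass": mass,
        "speed": speed,
        "range_scale": speed**2 / g,              # proportional to field size
        "kinetic_energy": 0.5 * mass * speed**2,
        "weight": mass * g,                       # gravitational force on the ball
        "momentum": mass * speed,                 # ∝ g**-0.5
    }


if __name__ == "__main__":
    for label, g in (("Earth", EARTH_G), ("Moon", 1.62)):
        ball = scaled_ball(g)
        print(label, {key: round(value, 3) for key, value in ball.items()})
```

Comparing `scaled_ball(EARTH_G)` with `scaled_ball(1.62)` (roughly lunar gravity) leaves range, kinetic energy and weight unchanged, while the mass grows by a factor of about 6 and the speed shrinks by a factor of about 0.41, so the momentum grows by about 2.5.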

Specialized areas of interest impacted in our carbon-rain project, on the other hand, are broader and include:

- (i) meteoritics esp. the laboratory study of presolar particles from meteorite dissolution residues,

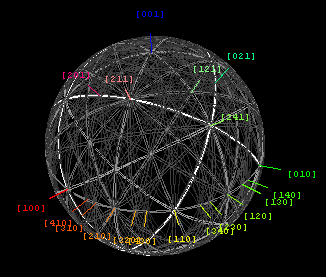

- (ii) transmission electron microscopy esp. electron powder diffraction & high resolution imaging,

- (iii) carbon esp. the study of metastable elemental liquid at low pressure,

- (iv) nucleation theory esp. nucleation and growth of 2D solid precipitates from a liquid phase,

- (v) atomistic modeling esp. VASP studies of nucleation & LCBOP studies of energies & growth, and

- (vi) stellar astrophysics esp. T[R] and R[t] (ejection) for particles in carbon star photospheres.

See more on this project below.

paths in from where folks are

A key to dialog is to begin from where your target audience is. This is important with editors, with specialist peers, and also with the general public. Observations and simple true assertions, by contrast, won't work by themselves, particularly if they are unexpected: the typical reaction in that case is "likely story", followed by moving on.

Messages to try introducing in this context might include e.g.:

- Task layer multiplicity as a measure of community-level health,

- Nano-liquid C as a low pressure source of hi-quality diffusion barrier material,

- J/K as an information measure, & coldness in GB/nJ as reluctance to share heat,

- Traveler point dynamics as a 3-vector bridge to curved spacetimes,

and what else?

task layer multiplicity & community-level health

We like to tell stories of how humans invented our world, but let's momentarily imagine that human community is a natural phenomenon that (like microbial and multi-celled life in general) has self-assembled on earth's surface. Like all forms of layered complexity, it is: (a) constructed from a hierarchy of broken symmetries with respect to gradients, boundaries, or pool edges, and (b) expanded and maintained by flows of thermodynamic availability (e.g. available work and/or correlation information), for example from processes running lower in the hierarchy. The substrate for human community of course includes our galaxy arm, our star, our planet's surface, and the living cells and cell communities, on a wide range of size scales, that serve up the environment in which our ancestors (and we ourselves) have emerged.

Within human communities (and metazoan ecosystems in general) we also find a layered hierarchy of subsystem correlations that look inward and outward from the metazoan skin; then inward and outward from the family, e.g. the edges of our gene and amino acid (molecular) code pools; and then inward and outward from the boundary of culture, e.g. the edges of our language and idea pools. At its simplest, we might therefore model any single-species metazoan community in terms of the fraction of its attention directed toward maintaining these six layers of organizational structure, i.e. toward buffering subsystem correlations that look inward and outward from the boundaries of skin, family, and culture. There is nothing fundamental about this choice, except that for simplicity it puts these disparate layers of organization on an equal footing.

Modern astronomy as well as paleontology now show us that, just as these amazing things can emerge on the surface of a planet, so the conditions that allow them to remain or even thrive are fragile, and likely to eventually end either gradually or abruptly. Therefore models of the decline as well as the bloom of complexity in metazoan communities are probably worth exploring.

There is a great deal of literature about individual and even "public" health in communities. However, the focus tends to be on the individual perspective, e.g. on the mental and physical health of individuals within the community. To address well-being at the community level, it might be interesting to use the simple community-level model of task layer multiplicity (TLM) above to examine the fraction of effort in any given community directed toward those six layers: self-health, pair interactions, family, community, culture, and extra-cultural knowledge[7][9]. Perhaps we will thereby gain some insight into guiding social policy as well as our own behavior as we attempt to make the most of our blessings. Along the way, we may encounter some surprises that our focus on individual perspectives has helped us to miss!

- For example if truth refers to the correlation between a message and external reality, then autocrats may not need to know what truth means. Neither do wolf packs, because their communities have closer to 4 than to 6 layers of subsystem correlation, and idea (as distinct from molecule) codes in particular play a diminished role.

- Moreover, as free energy per capita decreases[17], our human communities may be expected to lower their center-of-mass multiplicity from around 6 down toward 5 or less, especially given that our population is largely composed of folks who spend most of their time buffering correlations on only 4 or 5 of the 6 layers that look in/out from the boundaries of skin, family & culture. The good news is that an individual (i.e. geometric-average) multiplicity of only around 4¼ layers may be all that's needed to maximize task layer diversity, should we decide to focus on community-level well-being in this context.

- To be more specific here, note that the focus of the community as a whole is represented by the center of mass of the 5-simplex associated with the 6 attention-slice fractions (one for each layer), which add to 1. The breadth of effort by individuals, on the other hand, is represented by the geometric-average multiplicity (rather than the linear average), because multiplicities multiply when uncertainties (log-multiplicities) add, and because this has the simple analytical value $e^{29/20}$ ≈ 4¼ for niches uniformly distributed across the simplex. That value follows because the mean uncertainty of a uniformly random point on the simplex of n fractions is $H_n - 1$ nats, where $H_n = 1 + 1/2 + \dots + 1/n$ is the n-th harmonic number, and for n = 6 this is 49/20 − 1 = 29/20.
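The $e^{29/20}$ figure can be checked numerically. The sketch below assumes that "niches uniformly distributed across the simplex" means attention-fraction vectors drawn uniformly from the 5-simplex (a flat Dirichlet distribution), that a niche's multiplicity is the exponential of its Shannon uncertainty in nats, and that the center-of-mass multiplicity is the multiplicity of the averaged fractions; all function names are hypothetical. It compares a Monte Carlo estimate with the closed form $H_n - 1$ for the mean uncertainty.

```python
import math
import random
from fractions import Fraction


def exact_mean_uncertainty_nats(n_layers: int) -> Fraction:
    """Mean Shannon uncertainty (nats) of attention fractions drawn uniformly from
    the (n_layers - 1)-simplex: the harmonic number H_n minus 1."""
    return sum(Fraction(1, k) for k in range(1, n_layers + 1)) - 1


def uniform_simplex_point(n_layers: int, rng: random.Random) -> list[float]:
    """Uniform draw on the simplex via normalized unit-rate exponentials (flat Dirichlet)."""
    draws = [rng.expovariate(1.0) for _ in range(n_layers)]
    total = sum(draws)
    return [d / total for d in draws]


def uncertainty_nats(fractions: list[float]) -> float:
    """Shannon uncertainty -sum(p ln p); zero fractions contribute nothing."""
    return -sum(p * math.log(p) for p in fractions if p > 0.0)


def sample_multiplicities(n_layers: int = 6, trials: int = 100_000, seed: int = 2022):
    """Monte Carlo (M_geo, M_cm) for niches spread uniformly over the simplex."""
    rng = random.Random(seed)
    total_uncertainty = 0.0
    center = [0.0] * n_layers
    for _ in range(trials):
        p = uniform_simplex_point(n_layers, rng)
        total_uncertainty += uncertainty_nats(p)
        for i, x in enumerate(p):
            center[i] += x
    m_geo = math.exp(total_uncertainty / trials)                     # geometric average
    m_cm = math.exp(uncertainty_nats([c / trials for c in center]))  # center of mass
    return m_geo, m_cm


if __name__ == "__main__":
    exact = exact_mean_uncertainty_nats(6)
    m_geo, m_cm = sample_multiplicities()
    print(exact, math.exp(float(exact)), m_geo, m_cm, m_cm / m_geo)
```

With 6 layers the exact mean uncertainty is 29/20 nats, so the geometric-average multiplicity is about 4.26, while the averaged fractions sit at the center of the simplex, giving a center-of-mass multiplicity near 6 and a specialization index $R = M_{cm}/M_{geo}$ of about 1.41 for this uniform case.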

In summary:

- Planetary (and exo-planetary) science is showing that earth is blessed with a fragile friendliness to life rare elsewhere in the universe.

- Plant and animal life, including human communities, are maintained by ordered energy flows past and future, mostly from the sun.

- This layered complexity has self-assembled, and continues to adapt, by a repeated process of variation followed by selection.

- Digital correlation-information storage in the form of molecular, and more recently idea, codes plays an important role in this process.

Do we next need to discuss the importance of the 6 different "broken-symmetry" layers of organization in multi-celled lifeform communities, namely layers that look in (←|) and out (|→) from the boundaries of metazoan-skin (←1|2→), gene-pool (←3|4→) and idea-pool (←5|6→)?

- What are some ways to explore fitness (←1), pair bonds (2→), family (←3), community (4→), culture (←5) & profession (6→) as separate elements of a healthy human world? Moreover, how do these connect to issues of shared interest that we face today, like those listed in the section below?

- For starters, the real challenge (a balanced approach considering all 6 levels) is generally much more ambitious than the challenge associated with any one of the issues normally covered in the news. Hence one initial strategy might be to examine the balance of any given argument, and then to explore strategies that consider not just one layer at a time but all six.

- For instance, does a law that prevents a raped 10-year-old from getting an abortion serve all 6 layers well, or does it serve a mainly cultural mandate (←5) but help replicate the gene-pool (←3) of rapists (2→) while boosting the population of uncared-for children (4→), at the same time promoting governmental infringement on an innocent 10-year-old's personal space (←1)? If this law is not well balanced, then it may help lead toward the decline (rather than the bloom) of layered complexity, especially if our planet is having trouble providing all 6 layers of opportunity to the folks already here (6→).

Another sobering aspect of this story that might grab some attention is precisely how one might expect complexity's decline to unfold, namely as a loss of the distinction between these 6 layers. For instance, the higher-numbered (and later-developed) ones may, at least in the long run, lose steam first.

finding what works

If you are serious about any particular problem, ranging from how to fix a dripping faucet or a broken electrical circuit to making gerrymanders constructive, minimizing abortions, or reducing gun deaths, consider adopting a scout (versus soldier) mindset and embark on the cycle: Observe → selectModel → predictOptions → Implement → Observe....

This "V↔S" cycle (vary then select then vary etc.) is not only how (for example) science works (although it often also gets bogged down in doctrine), but also how life on earth has always worked. In other words it's part of perhaps our oldest constitution, namely that on the basis of which life itself continues to adapt and survive.

- In this context, how might we get media to focus on problems that folks could agree to collaborate on solving, using model strategies and data-based observations that can track progress and thereby lead to making things better? For instance, folks on both local and global scales might agree to work toward: (i) fewer gun deaths, (ii) fewer abortions, (iii) fewer COVID infections, (iv) constructive redistricting, (v) community-oriented policing, (vi) minimizing unconstructive use of tax dollars, (vii) a healthier middle class, etc.

- Also, how might we create some enthusiasm for pursuing shared goals of even less cultural specificity? These might include: (a) keeping center-of-mass task layer multiplicity[9] up near its maximum of $M_{cm} \approx 6$, or (b) keeping geometric-average task layer multiplicity for individuals at $M_{geo} \geq 4\tfrac{1}{4}$ (thereby also reducing the specialization index $R = M_{cm}/M_{geo}$) to maximize individual opportunity (a measure of effective freedom) as well as the survival value associated with task layer diversity, and what else? Thermochap (talk) 13:24, 1 June 2022 (UTC)

nano-liquid C & diffusion barriers to beat all

It was long thought that diamond could only be produced under conditions of high pressure and temperature. We now know that metastable diamond can be grown at low pressure and temperature by chemical vapor deposition, perhaps because diamond is the stable phase for nanoscale carbon at low temperature and pressure.

In the same way, liquid carbon is thought to exist only at high temperature and high pressure, although low-pressure stability below the sublimation temperature on the nanoscale has also been predicted[18]. We now report observations, both in nature and in the laboratory, which suggest that metastable liquid condenses at low pressure from carbon vapor, perhaps because nanoscale liquid is stable at low pressure and hence able to grow metastably from the vapor in containerless settings to temperatures well below the melting point, as metallic liquids generally do[19].

Moreover, when this low-pressure liquid is cooled slowly it appears to yield an unlayered graphene composite whose diffusion-barrier properties may be otherwise unrivaled in nature. Synthesizing practical quantities of this material, however, may require finding a way to "slow cool" condensed carbon vapor (e.g. at rates ≪ $10^5$ K/s) from temperatures in the 3000 K range without triggering premature solidification.

To be more specific, on-going work addresses these topics:

- (i) pent-first nucleation of graphene sheet growth[20][21],

- (ii) TEM study of presolar grains extracted from meteorites[22][23][24][25],

- (iii) laboratory synthesis of carbon droplets at low pressure[26][27][28][29],

- (iv) carbon's nanoparticle phase diagram at low pressures but high temperature,

- (v) models of graphene sheet growth over a wide range of droplet cooling rates[30][31][32], and

- (vi) modeling of stellar atmosphere cooling rates for condensed core\rim carbon spheres[33].

J/K in bits & coldness in GB/nJ

Temperature units are seldom expressed in terms of natural or fundamental quantities (historical units named after people are normally used instead), because temperature was discovered to be a useful concept long before folks understood that temperature's reciprocal takes on negative values for inverted-population systems, and that it is most generally a measure of energy's uncertainty slope 1/T = δS/δE (AKA coldness), e.g. in nats of correlation information lost per joule of thermal energy added to any internally equilibrated system[34].

Hence Boltzmann's constant is the number of joules of heat energy for each nat of correlation information lost per kelvin of temperature available in the ambient heat reservoir, and J/K is therefore simply a measure of correlation information in units like bits, bytes, or nats. In the new SI, the number of bits of correlation information in one J/K, i.e. exactly $10^{29}/(1{,}380{,}649\ln 2)$, is also the number of bits of correlation information potentially gained per joule of thermal energy lost for each reciprocal kelvin of coldness in the world around.

Here positive absolute zero represents infinite coldness, which of course can never be reached for a finite system. The reciprocal of this, $1{,}380{,}649\ln 2/10^{29} \approx 9.5699296\times10^{-24}$, is the number of joules per bit of state-uncertainty increase associated with each kelvin of temperature, and is also Boltzmann's constant expressed in J/(K·bit) rather than the more familiar $1{,}380{,}649/10^{29}$ J/(K·nat). The available work associated with correlation information about the state of a system plays a particularly important role in forms of reversible (including quantum) computing[35] as well as in the study of correlation-based complexity[36].
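The conversions in this section reduce to a few lines of arithmetic. The sketch below takes k = 1.380649×10⁻²³ J/K per nat (exact in the 2019 SI) and assumes, as a labeled choice, decimal gigabytes (1 GB = 10⁹ bytes of 8 bits each) for the GB/nJ coldness unit; the function names are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant: J/K per nat (exact in the 2019 SI)


def bits_per_joule_per_kelvin() -> float:
    """Bits of correlation information in 1 J/K, i.e. 10**29 / (1,380,649 ln 2)."""
    return 1.0 / (K_B * math.log(2))


def coldness_bits_per_joule(temperature_k: float) -> float:
    """Coldness 1/(kT) in bits per joule; negative for inverted-population (T < 0) systems."""
    return bits_per_joule_per_kelvin() / temperature_k


def coldness_gb_per_nj(temperature_k: float) -> float:
    """Coldness in gigabytes per nanojoule, assuming 1 GB = 1e9 bytes of 8 bits each."""
    bits_per_nanojoule = coldness_bits_per_joule(temperature_k) * 1e-9
    return bits_per_nanojoule / 8e9


if __name__ == "__main__":
    print(f"1 J/K = {bits_per_joule_per_kelvin():.6e} bits")
    print(f"k = {1 / bits_per_joule_per_kelvin():.7e} J/(K bit)")
    for temperature in (300.0, 3.0, -300.0):
        print(f"T = {temperature:g} K -> coldness {coldness_gb_per_nj(temperature):.2f} GB/nJ")
```

Under these assumptions, room temperature (300 K) corresponds to a coldness of roughly 43.5 GB/nJ, and a negative temperature returns a negative coldness, as expected for an inverted population.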

traveler point 3-vectors in curved spacetimes

In an excellent 1968 article, Robert W. Brehme discussed the advantage of teaching relativity with four-vectors. This is not done in introductory physics courses. One reason may be that spacetime's 4-vector symmetry is broken locally into (3+1)D, so that engineers working in curved spacetimes (including the one here on earth) will naturally want to describe time intervals in seconds and space intervals in, e.g., meters. In this note[4], we therefore explore in depth the use of minimally frame-variant scalars and 3-vectors, an idea perhaps first hinted at in Percy W. Bridgman's 1928 ruminations about the wisdom of defining 3-vector velocity using an "extended" time system rather than using the traveler's proper time.

The problem with the latter, of course, is that minimally frame-variant quantities like proper time, 3-vector proper velocity, and 3-vector proper acceleration are inherently local to one traveler's perspective; hence we refer to them as "traveler-point" quantities. The advantage is that they are either frame-invariant (in the case of proper time and the magnitude of proper acceleration) or synchrony-free (i.e. they do not require an extended network of synchronized clocks, which of course is not always available in curved spacetime and accelerated frames).

In a larger context, general relativity revealed a century ago why we can get by with using Newton's laws in spacetime so curvy that it's tough to jump higher than a meter: those laws work locally in all frames of reference, provided we recognize that motion is generally affected by both proper and "geometric" (i.e. accelerometer-invisible, connection-coefficient) forces.

In that context, it's probably time to give introductory students the good news. Here we discuss a way to do this without telling them to measure time and distance in, e.g., kilograms, and without asking them to juggle more than one concurrent definition of simultaneity.

The metric equation of course avoids these things (cf. Fig. 1) by specifying locally-defined frame invariants like proper-time, and contenting itself with a single definition of simultaneity i.e. that associated with the set of book-keeper (or map) coordinates that one chooses to describe the spacetime metric. Hence the focus here is on a metric-first, as distinct from transform-first, strategy for describing motion in accelerated frames, in curved spacetime, as well as at high speeds[2][3][4][5][6].

Dynamics in flat space-time

This "expanded" table is meant to move from more familiar relations to relations that are useful not just at high speeds but also (with help from the "geometric force" approximation) in curved spacetimes and accelerated frames. Here green backgrounds involve purely kinematic integrals of constant proper acceleration, blue backgrounds involve dynamical quantities connected to motion's causes, and red backgrounds flag more technical relationships that may come in handy for doing calculations.

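| relation | $w \ll c$ | (1+1)D | (3+1)D |
|---|---|---|---|
| map time $t$ | $t \approx \tau$ | $t = \frac{c}{\alpha}\sinh\frac{\alpha\tau}{c}$ | $t = \tfrac{1}{2}\frac{(w_o/c)^2}{\gamma_o+1}\,\tau + \left(\frac{\alpha\tau_o}{c}\right)^2\tau_o\sinh\frac{\tau}{\tau_o}$ |
| map position $\vec r$ | $\vec r \approx \vec v_o t + \tfrac{1}{2}\vec a t^2$ | $x = \frac{c^2}{\alpha}\left(\cosh\frac{\alpha\tau}{c} - 1\right)$ | $\vec r = \tfrac{1}{2}\left(\tau + \tau_o\sinh\frac{\tau}{\tau_o}\right)\vec w_o + \tau_o^2\left(\cosh\frac{\tau}{\tau_o} - 1\right)\vec\alpha$ |
| aging factor $\gamma \equiv \delta t/\delta\tau$ | $\gamma \approx 1 + \tfrac{1}{2}(v/c)^2$ | $\gamma = \cosh\frac{\alpha\tau}{c}$ | $\gamma = \tfrac{1}{2}\frac{(w_o/c)^2}{\gamma_o+1} + \left(\frac{\alpha\tau_o}{c}\right)^2\cosh\frac{\tau}{\tau_o}$ |
| proper velocity $\vec w \equiv \delta\vec r/\delta\tau$ | $\vec w \approx \vec v \approx \vec v_o + \vec a t$ | $w = c\sinh\frac{\alpha\tau}{c}$ | $\vec w = \tfrac{1}{2}\left(1 + \cosh\frac{\tau}{\tau_o}\right)\vec w_o + \tau_o\sinh\frac{\tau}{\tau_o}\,\vec\alpha$ |
| relative velocity $\vec w_{AC}$ | $\vec v_{AC} \approx \vec v_{AB} + \vec v_{BC}$ | $w_{AC} = \gamma_{AB}\gamma_{BC}(v_{AB} + v_{BC})$ | $\vec w_{AC} = \vec w_{AB^*} + \vec w_{B^*C}$, where $\vec w_{B^*C} \equiv \gamma_{AB}\vec w_{BC}$ and $\vec w_{AB^*} \equiv \vec w_{AB\perp w_{BC}} + \gamma_{BC}\vec w_{AB\parallel w_{BC}}$ |
| momentum $\vec p$ | $\vec p \approx m\vec v$ | $p = mw = m\gamma v$ | $\vec p = m\vec w = m\gamma\vec v$ |
| energy $E$ | $E \approx mc^2 + \tfrac{1}{2}mv^2$ | $E = \gamma mc^2$ | $E = \gamma mc^2$ |
| felt ($\vec\xi$) ↔ map-based ($\vec f$) force conversions | $\vec f \approx \vec\xi$ | $f = \xi$ | $\vec\xi = \vec f_{\parallel w} + \gamma\vec f_{\perp w}$; $\vec f = \vec\xi_{\parallel w} + \vec\xi_{\perp w}/\gamma$ |
| work-energy | $\delta E \approx \sum\vec f\cdot\delta\vec r$ | $\delta E = (\sum f)\,\delta x$ | $\delta E = \sum\vec\xi\cdot\delta\vec r$ |
| action-reaction | $\vec f_{AB} = -\vec f_{BA}$ | $f_{AB} = -f_{BA}$ | $\vec f_{AB} = -\vec f_{BA}$ |
| map-based force ($\vec f$) & momentum ($\vec p$); felt force ($\vec\xi$) & acceleration ($\vec\alpha$) | $\sum\vec f = \delta\vec p/\delta t \approx m\vec a$ | $\sum f = \delta p/\delta t = m\alpha$ | $\sum\vec f = \delta\vec p/\delta t$; $\sum\vec\xi = m\vec\alpha$ |
| "purely felt" static/kinetic breakdown $\vec f = \vec f_E + \vec f_B$ | $\vec f_E \approx \vec\xi \approx m\vec a$; $\vec f_B \approx 0$ | $f_E = \xi = \gamma^3 ma$; $f_B = 0$ | $\vec f_E = \vec\xi_{\parallel w} + \gamma\vec\xi_{\perp w} = \gamma^3 m\vec a$; $\vec f_B = (1/\gamma - \gamma)\vec\xi_{\perp w} = -\gamma(v/c)^2\vec\xi_{\perp w}$ |
| electromagnetic static/kinetic field breakdown $\vec f = \vec f_E + \vec f_B$ | $\vec f_E = q\vec E$; $\vec f_B = q\vec v\times\vec B$ | $f_E = qE = qE'$; $f_B = 0$ | $\vec f_E = q\vec E = q(\vec E'_{\parallel w} + \gamma\vec E'_{\perp w}) - q\vec w\times\vec B'$; $\vec f_B = q\vec v\times\vec B = q(1/\gamma - \gamma)\vec E'_{\perp w} + q\vec w\times\vec B'$ |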
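The (1+1)D column of the table can be evaluated directly. This sketch assumes motion that starts from rest at τ = 0 under constant proper acceleration α (function names are hypothetical), and uses two checks that follow from those relations: γ² − (w/c)² = 1, and the hyperbolic-worldline condition (ct)² − (x + c²/α)² = −(c²/α)². It also implements the (1+1)D relative-velocity rule w_AC = γ_AB γ_BC (v_AB + v_BC).

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact in SI)


def constant_proper_acceleration(alpha: float, tau: float) -> dict:
    """(1+1)D motion from rest under constant proper acceleration alpha (m/s^2),
    at traveler proper time tau (s), using the table's (1+1)D relations."""
    phi = alpha * tau / C  # dimensionless argument alpha*tau/c
    return {
        "t": (C / alpha) * math.sinh(phi),             # map time
        "x": (C**2 / alpha) * (math.cosh(phi) - 1.0),  # map position
        "gamma": math.cosh(phi),                       # aging factor dt/dtau
        "w": C * math.sinh(phi),                       # proper velocity dx/dtau
    }


def lorentz_factor(v: float) -> float:
    """gamma = 1/sqrt(1 - (v/c)^2) for a coordinate speed |v| < c."""
    return 1.0 / math.sqrt(1.0 - (v / C) ** 2)


def relative_proper_velocity(v_ab: float, v_bc: float) -> float:
    """(1+1)D composition rule w_AC = gamma_AB * gamma_BC * (v_AB + v_BC)."""
    return lorentz_factor(v_ab) * lorentz_factor(v_bc) * (v_ab + v_bc)


if __name__ == "__main__":
    year = 365.25 * 24 * 3600.0                        # one Julian year, in s
    state = constant_proper_acceleration(9.81, year)   # assumed 1 g for one year
    v = state["w"] / state["gamma"]                    # coordinate speed v = w/gamma
    print(f"map time {state['t'] / year:.3f} yr, distance {state['x'] / (C * year):.3f} ly")
    print(f"gamma {state['gamma']:.3f}, v/c {v / C:.3f}")
```

The example run assumes one year of proper time at 1 g (α = 9.81 m/s²); the coordinate speed is recovered as v = w/γ, which stays below c however long the traveler keeps accelerating, while the proper velocity w has no such cap.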

The foregoing velocity parameters, especially the Lorentz factor γ ≡ dt/dτ and proper velocity w ≡ dx/dτ, also connect simply to dynamically conserved quantities like the relativistic total energy E and the relativistic momentum p, via:

- $E = \gamma mc^2 = mc^2 + K$, and

- $p = mw = m\gamma v$,

where m is rest mass, c is lightspeed, w is proper velocity dx/dτ, v is coordinate velocity dx/dt, K is kinetic energy, and the Lorentz factor γ may be written:

- $\gamma = \dfrac{1}{\sqrt{1-(v/c)^2}} = \sqrt{1+(w/c)^2} = 1 + \dfrac{K}{mc^2}$.

These equations may also work locally in any spacetime, provided that we approximate connection-coefficient effects with "geometric forces" (like gravity and inertial forces), which are undetectable by on-board accelerometers, act on every ounce of an object's being, and can be made to disappear from the vantage point of a free-float frame.
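A short sketch of the standard relations $E = \gamma mc^2 = mc^2 + K$, $p = mw = m\gamma v$ and $\gamma = 1/\sqrt{1-(v/c)^2} = \sqrt{1+(w/c)^2} = 1 + K/(mc^2)$, starting from coordinate velocity. The function name is hypothetical; passing c = 1 uses units in which lightspeed is 1, which makes a v = 0.8c example come out in simple fractions.

```python
import math


def dynamics_from_coordinate_velocity(m: float, v: float, c: float = 299_792_458.0) -> dict:
    """Lorentz factor, proper velocity, momentum, total and kinetic energy for rest
    mass m moving at coordinate speed v (|v| < c); pass c=1.0 for natural units."""
    gamma = 1.0 / math.sqrt(1.0 - (v / c) ** 2)
    w = gamma * v                  # proper velocity dx/dtau = gamma dx/dt
    p = m * w                      # p = m w = m gamma v
    energy = gamma * m * c**2      # E = gamma m c^2
    kinetic = energy - m * c**2    # K = E - m c^2
    return {"gamma": gamma, "w": w, "p": p, "E": energy, "K": kinetic}


if __name__ == "__main__":
    d = dynamics_from_coordinate_velocity(m=1.0, v=0.8, c=1.0)
    print(d)  # gamma = 5/3, w = p = 4/3, E = 5/3, K = 2/3 (up to float rounding)
    # Cross-check the alternative forms of gamma and the energy-momentum relation.
    print(math.sqrt(1.0 + d["w"] ** 2), 1.0 + d["K"], math.sqrt(d["p"] ** 2 + 1.0))
```

Computing γ from v and then checking it against the w- and K-based forms is a quick way to catch unit slips, since all three expressions must agree for any |v| < c.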

Notes on broken symmetry

Symmetry-breaking is an integrative-theme[37] which cuts across disciplines[38]. On the molecular level[39], for instance, the relatively-featureless isotropic-symmetry of liquid water[40] may first be broken by local translational pair-correlations (resulting in spherical reciprocal-lattice shells) as the liquid turns to polycrystal ice, and eventually by global translational and rotational ordering (resulting in reciprocal-lattice spots) as the ice becomes a single crystal.

Partway to single-crystal form, a quasicrystal phase might have rotational without translational ordering, while a random-layer lattice might have rotational and translational ordering in one "layering" direction only. Thus even within a single layer of organization, broken symmetries play a role in the (at least temporary) development of order.

Hierarchical ordering in the layer just above a pair-correlated level (e.g. interacting organisms) may generally require a higher-level symmetry-break (e.g. recognition of differing organism groups), which in turn gives rise to processes that select for inward-looking (e.g. from the group boundary) post-pair correlations as well as outward-looking pair-correlations on the next level up (e.g. between groups).

Thus shared-electrons break the symmetry between in-molecule and extra-molecule interactions, bi-layer membranes allow symmetry between in-cell and out-cell chemistry to be broken, shared resources (like steady-state flows) may break the symmetry between in-tissue and external processes, metazoan skins allow symmetry between in-organism and out-organism processes to be broken, bias toward family breaks the symmetry between in-family and extra-family processes, membership-rules break the symmetry between in-culture and multi-cultural processes, etc.

- Broken symmetry also plays an important role in the Higgs mechanism, which of course is another topic in the modern physics news. In this context, a fascinating modern example of "mechanical models" for the building blocks of matter is highlighted in the coupled-pendulum analogy here on the subject of tachyonic field. Thermochap (talk) 10:48, 25 October 2013 (UTC)

Forward-looking themes

Although modern physics courses often concentrate on history, the very name of the course suggests that we should also be on the lookout for unfolding themes. For instance:

The temporal vs. spatial frequency theme includes stuff like the relationship ν = c/λ between broadcast-frequency & wavelength/antenna-size, resonant oscillations, waves, kinetic-energy vs. momentum plots since E = hν and p = h/λ, metric-1st relativity with proper-velocity w ≡ dx/dτ = p/m and proper/geometric accelerations, radar-time simultaneity, x-cτ & x-ct plots, reciprocal-lattices in diffraction & scattering theory, dispersion-relations e.g. of ω vs. k, band-structure, pair & pair-pair correlations, least action, and what else?

The number of choices = $2^{\#\text{bits}} = e^{S/k}$ theme instead leads us through stuff like Bayesian data analysis including parameter-estimation (e.g. least-squares fits) & model-selection (e.g. AIC), surprisal-based risk-estimation, entropy-1st and correlation-1st studies[3] of analog & digital order-disorder transitions, Boltzmann & quantum occupancy-factors, natural units for temperature, heat-capacities as multiplicity-exponents, phase-changes, symmetry-breaks, information-engines including organisms & quantum computers, data compression, clade analysis, (d)evolving complexity in context of both cosmic and biological evolution, task layer-multiplicity, and what else?

Unhiding dimensionless units

When dimensionless units take on multiple forms, it is often helpful to be explicit about which of those forms are being used in any given situation. Some examples of these are shown in the table below, using the most recent "new-SI" definitions to make two of them rational as well as exact:

| larger unit | × conversion factor | = smaller unit |
|---|---|---|
| mole | Avogadro constant, 6.02214076×10²³ | molecule |
| cycle | 2π ≈ 6.2831853071… | radian |
| nat | 1/ln 2 ≈ 1.4426950408… | bit |
| J/K | 1/(Boltzmann constant) = 10²³/1.380649 | nat |

In this note we address applications involving angle units and information units where the dimensionless units are not always explicit. Although this is often not a problem within the jargon of a given field, being explicit about one's choice should help in communication between disciplines. In some areas, it may also provide insight into physical connections which might otherwise be missed. Thermochap (talk) 11:08, 28 September 2021 (UTC)
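The conversion table can be written as a small lookup. This is a sketch: the dictionary, the `convert` helper and the constant names are hypothetical, each factor is read as 1 larger unit = factor × smaller unit (so 1 J/K = 1/k nats), and the Planck constant h = 6.62607015×10⁻³⁴ J s (exact in the 2019 SI) is taken, following the discussion of Planck's constant in this section, as implicitly per cycle.

```python
import math

# Each entry reads: 1 larger unit = factor × smaller unit.
CONVERSIONS = {
    ("mole", "molecule"): 6.02214076e23,   # Avogadro constant (exact in the 2019 SI)
    ("cycle", "radian"): 2 * math.pi,
    ("nat", "bit"): 1 / math.log(2),
    ("J/K", "nat"): 1 / 1.380649e-23,      # 1/Boltzmann constant (exact in the 2019 SI)
}


def convert(value: float, from_unit: str, to_unit: str) -> float:
    """Convert an amount between the two units of a single row, in either direction."""
    if (from_unit, to_unit) in CONVERSIONS:
        return value * CONVERSIONS[(from_unit, to_unit)]
    if (to_unit, from_unit) in CONVERSIONS:
        return value / CONVERSIONS[(to_unit, from_unit)]
    raise KeyError(f"no single-row conversion from {from_unit!r} to {to_unit!r}")


PLANCK_H = 6.62607015e-34  # J s per cycle (exact in the 2019 SI)

if __name__ == "__main__":
    # A per-cycle quantity becomes per-radian by dividing by radians per cycle: h -> hbar.
    hbar = PLANCK_H / convert(1.0, "cycle", "radian")
    # Bits of correlation information in 1 J/K: J/K -> nat -> bit.
    bits = convert(convert(1.0, "J/K", "nat"), "nat", "bit")
    print(f"hbar = {hbar:.9e} J s/radian; 1 J/K = {bits:.7e} bits")
```

Note the direction of "per" quantities: converting an amount of cycles into radians multiplies by 2π, but converting a quantity expressed per cycle (like h) into one expressed per radian divides by 2π, which is exactly how ħ arises.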

Application areas

cycle ⇔ radian

- Temporal frequencies are routinely described in either cycles per second (Hertz) or radians per second, whether or not rotation angles are involved. Spatial frequencies are similarly described (e.g. in the MKS/SI, i.e. meter-kilogram-second/Système International d'Unités, system) either as g-vectors (g = 1/λ in cycles per meter) or as wave-vectors (k = 2π/λ in radians per meter), although in discussions of spatial frequency the cycle/radian descriptors frequently go unmentioned. John Cowley pointed out this problem, e.g. in diffraction studies, in his 1975 book[41]. Likewise, the parallel between E = hν = ħω and de Broglie's hypothesis p = h/λ is much clearer when the latter is written as p = hg = ħk. Thermochap (talk) 11:08, 28 September 2021 (UTC)

- By convention, MKS/SI units for angular momentum L ≈ mvr are implicitly [kg m²/s], but explicitly [kg m²/s per radian] rather than [kg m²/s per cycle], since after all radius r is implicitly the number of [meters per radian] and angular momentum does involve angles. However, the Planck constant h is implicitly in [joule seconds per cycle], as you can see by noting that photon energy E = hν = ħω. Since ν is frequency in [cycles per second], [joule seconds per cycle] times [cycles per second] naturally gives [joules] of photon energy. Purely as a result of this switch in conventions, angular momentum is traditionally quantized not in units of h but of h/(2π) ≡ ħ. Thermochap (talk) 11:08, 28 September 2021 (UTC)

- Fourier transform and Fourier power units are a related problem. There are so many conventions for measuring spectral amplitude and intensity that electrical engineers often just use decibel ratios to sidestep absolute values. The problem is again complicated by the implicit cycles/radians ambiguity, even though physical angles are seldom involved. One result is that some conventions yield physically sensible units for spectral amplitude and/or power (like amplitude and/or power per unit frequency interval), while others (like √(2π) times amplitude and/or power per unit frequency interval) don't. Thermochap (talk) 11:08, 28 September 2021 (UTC)
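The sketch below shows how the cycle/radian choice moves factors of 2π around. It is an illustration, not a prescribed convention. It checks Parseval's theorem for a sampled Gaussian under three common continuous-transform conventions, approximating each integral with NumPy's FFT. The test pulse, grid spacing, and variable names are assumptions chosen for illustration.

```python
import numpy as np

# Sampled test pulse (an assumption for illustration): x(t) = exp(-t^2/2).
dt = 0.01
t = np.arange(-20.0, 20.0, dt)
x = np.exp(-t**2 / 2.0)

# Time-domain "energy", the integral of |x|^2 dt (sqrt(pi) for this pulse).
energy_t = np.sum(np.abs(x)**2) * dt

# Ordinary-frequency (cycles) convention: X(f) = integral of x(t) exp(-2 pi i f t) dt.
# The FFT times dt approximates it; the shifted time origin only changes the phase.
X = np.fft.fft(x) * dt
df = 1.0 / (t.size * dt)                     # frequency step, cycles per unit time
energy_cycles = np.sum(np.abs(X)**2) * df    # no 2 pi factors needed

# Angular (radians) convention without normalization: X(omega) takes the same values
# at omega = 2 pi f, but the integration step becomes d(omega) = 2 pi df.
domega = 2 * np.pi * df
energy_radians = np.sum(np.abs(X)**2) * domega     # comes out 2 pi too large

# "Unitary" angular convention: divide the transform by sqrt(2 pi).
energy_unitary = np.sum(np.abs(X / np.sqrt(2 * np.pi))**2) * domega

print(energy_t, energy_cycles, energy_radians / energy_t, energy_unitary)
```

Only the per-cycle convention gets power per unit frequency interval without any 2π bookkeeping. The radian conventions either pick up a stray 2π or hide it in a √(2π) normalization.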

nat ⇔ J/K

- Information and entropy S are logarithmic measures of the number of accessible states W (i.e. S = k ln W). The derivative of S with respect to energy is reciprocal temperature, and the units of S are determined by one's choice of the constant k. Hence the natural (as distinct from historical) units for temperature[12] are energy per unit of correlation information, e.g. 22 °C = 295.15 K ≈ 22.5965 picojoules/gigabyte. Correspondingly, the Boltzmann constant has MKS/SI units of J/K per nat of correlation information, so Boltzmann's constant converts between J/K and traditional information units. This is nothing more than, e.g., the reversible-computing available-work cost in joules per kelvin of ambient temperature, for each nat of correlation between two data-storage locations. It is also in part why, e.g., the dynamic range of CCD recording goes up at cryogenic temperatures. Thermochap (talk) 11:08, 28 September 2021 (UTC)
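The picojoules-per-gigabyte figure can be checked with a few lines of arithmetic. Multiply kT (joules per nat) by ln 2 nats per bit and by the number of bits in a gigabyte. This sketch assumes a decimal gigabyte of 8×10⁹ bits, which reproduces the 22.5965 pJ/GB quoted above. A binary gibibyte would give a slightly larger number. The function names are hypothetical.

```python
import math

K_B = 1.380649e-23          # J/K per nat (exact, 2019 SI)
EV = 1.602176634e-19        # joules per electron-volt (exact, 2019 SI)
NATS_PER_BIT = math.log(2)

def kt_joules_per_nat(t_kelvin):
    """Temperature expressed as energy per nat of correlation information."""
    return K_B * t_kelvin

def kt_picojoules_per_gigabyte(t_kelvin, bits_per_gigabyte=8e9):
    """Same temperature in pJ per (decimal) gigabyte; 8e9 bits/GB is an assumption."""
    joules_per_bit = kt_joules_per_nat(t_kelvin) * NATS_PER_BIT
    return joules_per_bit * bits_per_gigabyte / 1e-12

print(f"{kt_picojoules_per_gigabyte(295.15):.4f} pJ/GB")   # 22.5965 pJ/GB
print(f"{kt_joules_per_nat(295.15) / EV:.4f} eV/nat")
```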

An "unhiding" table

Here's a reference table of "sometimes hidden" dimensionless units that may be worth making explicit. Can you think of any others that we might mention?

| Quantity | Version w/unit hidden | Version w/unit exposed |
|---|---|---|
| temporal frequency f or ν | reciprocal seconds | cycles per second or Hertz |
| angular frequency ω | reciprocal seconds | radians per second |
| period τ | seconds | seconds per cycle |
| spatial frequency or g-vector | reciprocal meters | cycles per meter |
| wave vector or k | reciprocal meters | radians per meter |
| wavelength λ | meters | meters per cycle |
| angular momentum L | kilogram meter²/second | kg m²/s per radian |
| Planck constant h | joule seconds = kg m²/s | J s per cycle = J/Hz |
| "h-bar" or ħ ≡ h/(2π) | joule seconds = kg m²/s | J s/rad = kg m²/s per radian |
| Boltzmann constant kB | joules per kelvin | J/K per nat |
| Avogadro constant NA | reciprocal moles | molecules per mole (≠ but ≈ daltons/gram) |

Note that per-cycle units are more general than per-radian units (which may however simplify some expressions), since not all applications of the quantities above involve a physical angle. Note also that wavelength, angular momentum, and the Boltzmann constant are generally fixed in the dimensionless unit that they assume, even though other choices are available.

Correlations & uncertainty

One may think of the familiar uncertainty-relation in QM as a statement about subsystem-correlations between features of an object (like position or momentum) and an external knower. In the same way that shortening the duration of a musical note expands the range of frequencies that you hear (i.e. Δtime⇓ means Δfreq⇑) regardless of how well the musical instrument is tuned, measurement in general correlates one parameter's value with the knower's state of mind while unavoidably de-correlating that parameter's Fourier transform e.g. its frequency-space footprint.

A special case of this arises in the study of small systems, because energy and momentum are proportional to temporal and spatial frequency through Planck's constant, i.e. since h = E/ν = p/g = 2πL. In fact, some groups assert that all of quantum theory (not to mention other sciences) can be reduced not to studies of the state of stand-alone systems but to studies of our relationship, as knower, to structures in the world around us.
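The musical-note trade-off described above can be checked numerically. The sketch below is an illustration under assumed parameters. It builds Gaussian pulses of different durations and measures the rms spread of |x(t)|² in time and of the FFT power spectrum in frequency. Shortening the pulse widens the spectrum, while the product Δt·Δf stays at 1/(4π) in cycles (equivalently Δt·Δω = 1/2 in radians), the minimum for any signal. The pulse widths, grid, and function names are assumptions.

```python
import numpy as np

def rms_width(axis, weight):
    """RMS spread of `axis` values weighted by a non-negative distribution."""
    w = weight / weight.sum()
    mean = np.sum(axis * w)
    return np.sqrt(np.sum((axis - mean)**2 * w))

def duration_and_bandwidth(sigma, dt=0.01, half_span=50.0):
    """RMS time and frequency spreads of a Gaussian pulse exp(-t^2 / (2 sigma^2))."""
    t = np.arange(-half_span, half_span, dt)
    x = np.exp(-t**2 / (2 * sigma**2))
    f = np.fft.fftfreq(t.size, d=dt)           # frequency axis in cycles per unit time
    delta_t = rms_width(t, np.abs(x)**2)
    delta_f = rms_width(f, np.abs(np.fft.fft(x))**2)
    return delta_t, delta_f

for sigma in (0.5, 1.0, 2.0):
    delta_t, delta_f = duration_and_bandwidth(sigma)
    print(f"sigma={sigma}: dt={delta_t:.4f}, df={delta_f:.4f}, product={delta_t * delta_f:.5f}")
# Each product should be close to 1/(4*pi) ≈ 0.07958.
```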

- For instance these papers[42][43] discuss quantum-relativistic applications of the insight behind correlation-based complexity, namely that science is less about stand-alone-states than (as Lee Smolin[44] puts it) about "information that one subsystem of the universe can have about another". As a result quantum state models, like other forms of information[3] relevant to the 2nd law's assertion[45] that the external world's knowledge of an isolated system is unlikely to increase, "live not in one part or the other but on the boundary between".

Is this related to recent interest in thermodynamic explanation of e.g. spring & gravity forces in terms of information gradients on holographic surfaces, or to this 2013 note on time flow from sub-system correlations which, at least at first glance, follows a similar pattern?

- Put another way, the idea of state-collapse may be an artifact of our passion for stand-alone or observer-free models of our world i.e. models of world subsystems with the world removed. Sometimes we can get by with it, but not always because our models of an external subsystem may in practical ways be constrained by the world's (and hence our) access to knowledge about it. Thermochap (talk) 13:10, 25 October 2013 (UTC)

Bitwise re-imaginings

It's probably worth putting together an overview on use of log-probability measures to provide quantitative insight into:

- risks as surprisals[46] s_p ≡ log₂[1/p] in bits,

- true-false bits of evidence[47] via log₂[odds ratio] = s_{1−p} − s_p,

- curve-fit & Ω_occam ≈ e^{−K} model-selection[48][49] w/ S_{q/p} ≡ Σ p_i log₂[1/q_i],

- entropy & available-work[50] via KL-divergence I_{q/p} ≡ Σ p_i log₂[p_i/q_i],

- heat-capacities as multiplicity-exponents[34] for U and T,

- quantum-entanglements as mutual-information[51] I_{p_i p_j/p_ij},

- information & complexity[14] via KL-divergence I_{q/p},

- I_{p_i p_j/p_ij} compression studies[52] of cladistics & plagiarism,

- molecular sequence-recognition[53] via KL-divergence I_{q/p},

- individual-equality & community-health via NNLM[8],

- and what else?
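Several of the log-probability measures in the list above reduce to a few lines of code. The sketch below is a hedged illustration, not the author's code. It uses base-2 logarithms throughout, with p as the assumed "true" distribution and q as the model, following the cross-entropy and KL-divergence definitions listed. The function names are hypothetical.

```python
import math

def surprisal_bits(p):
    """s_p = log2(1/p): surprise, in bits, at an outcome of probability p."""
    return math.log2(1.0 / p)

def evidence_bits(p):
    """Bits of evidence for a true-false claim: log2[p/(1-p)] = s_(1-p) - s_p."""
    return surprisal_bits(1.0 - p) - surprisal_bits(p)

def cross_entropy_bits(p, q):
    """S_q/p = sum_i p_i log2(1/q_i): average surprisal of model q when p holds."""
    return sum(pi * math.log2(1.0 / qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence_bits(p, q):
    """I_q/p = sum_i p_i log2(p_i/q_i) >= 0, and zero only when q matches p."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def mutual_information_bits(joint):
    """KL-divergence of the product of marginals p_i p_j from the joint p_ij."""
    p_row = [sum(row) for row in joint]
    p_col = [sum(col) for col in zip(*joint)]
    return sum(pij * math.log2(pij / (p_row[i] * p_col[j]))
               for i, row in enumerate(joint)
               for j, pij in enumerate(row) if pij > 0)

print(surprisal_bits(1 / 8))                               # 3.0 bits, like three coin flips
print(evidence_bits(0.8))                                  # ≈ 2 bits, i.e. 4-to-1 odds
print(mutual_information_bits([[0.5, 0.0], [0.0, 0.5]]))   # 1 bit for a perfectly correlated pair
```

Cross-entropy splits into the entropy of p plus the KL-divergence, so S_{q/p} ≥ S_{p/p}. This is one reason that minimizing it serves as a model-selection criterion.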

Aside: One advantage of this integrative notation is that we deal exclusively in positive quantities, except of course when we are calculating differences between them.

We'll gradually flesh in details on this topic, which are presently available only on limited-access mediawiki sites. If you are interested in more on any particular item in the list above, leave a note for me on my talk page here: Thermochap (talk) 14:28, 13 November 2011 (UTC)

Useful modern physics numbers

| item ⇒ | ⇐ value | item ⇒ | ⇐ value |
|---|---|---|---|
| Elementary charge −q_e | ≈ 1.6×10⁻¹⁹ coulomb or J/eV | Electron mass m_e ≈ m_p/1836 | ≈ 9.1×10⁻³¹ kg ≈ 1/1823 u ≈ 511 keV/c² |
| Selected isotopes ^A_Z X_N | ⁴⁰₁₈Ar₂₂, ¹⁹⁷₇₉Au₁₁₈, ²³⁸₉₂U₁₄₆ | Unified mass unit u or dalton Da | ≈ 1.66×10⁻²⁷ kg ≈ 1/(6×10²³) gram |
| Lightspeed constant c | = 1 ly/y ≈ 3×10⁸ m/s ≈ 0.98 ft/ns | Atom radii are near 1 Ångstrom | ≡ 10⁻¹⁰ meter = 10⁵ femtometer |
| Boltzmann constant k_B | ≈ 1.38×10⁻²³ J/K ≈ 8.6×10⁻⁵ eV/K | Room temperature 20 °C = 68 °F | ≈ 293 K ≈ 4×10⁻²¹ J/nat ≈ 1/40 eV/nat |
| Planck constant h ≡ 2πħ | ≈ 6.626×10⁻³⁴ J s ≈ 4.14×10⁻¹⁵ eV s | Coulomb constant k_e ≡ 1/(4πε₀) | = c²μ₀/(4π) = αħc/q_e² ≈ 9×10⁹ N m²/C² |
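A few entries in the table above can be cross-checked from the exact 2019 SI constants. The sketch below does so. The fine-structure constant is the CODATA 2018 recommended value, which is assumed current enough for these few-figure checks. The variable names are illustrative.

```python
import math

Q_E = 1.602176634e-19     # elementary charge, C (exact, 2019 SI)
H = 6.62607015e-34        # Planck constant, J s (exact)
K_B = 1.380649e-23        # Boltzmann constant, J/K (exact)
C = 299792458.0           # speed of light, m/s (exact)
ALPHA = 7.2973525693e-3   # fine-structure constant (CODATA 2018; assumed value)
HBAR = H / (2 * math.pi)

kt_room_ev = K_B * 293.15 / Q_E     # ≈ 0.0253 eV per nat, i.e. about 1/40
h_ev_s = H / Q_E                    # ≈ 4.14e-15 eV s
k_e = ALPHA * HBAR * C / Q_E**2     # ≈ 8.99e9 N m^2/C^2, from k_e = alpha hbar c / q_e^2
c_ft_per_ns = C * 1e-9 / 0.3048     # ≈ 0.98 ft/ns (0.3048 m per foot, exact)

print(kt_room_ev, h_ev_s, k_e, c_ft_per_ns)
```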

A curious resonance involving the re-framing of variables shows up in each of the 4 parts of today's modern physics course. Lastly (in part 4) decay-constant λ ≡ ln[2]/τ½ e.g. in [e-folds/second] is the dog that wags half-life's tail e.g. in [seconds/2-fold], much as (in part 2) vector spatial-frequency g ≡ k/(2π) = p/h e.g. in [cycles/Ångstrom] is the dog that wags wavelength and d-spacing's tail e.g. in [Ångstroms/cycle].

Later (in part 3) coldness or energy's uncertainty-slope, i.e. reciprocal-temperature 1/kT ≡ d(S/k)/dE, e.g. in [nats/eV], turns out to be the dog that wags temperature's tail, e.g. in [kelvin] or [eV/nat]. One may also point out (in part 1) that proper-velocity w ≡ dx/dτ = p/m = γv, e.g. in [lightyears/traveler-year], is the dog that wags coordinate-velocity's tail, e.g. in [lightyears/map-year], but that relationship is not similarly reciprocal.
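The four "dog and tail" pairs can be written as small conversion functions. This is a minimal sketch under assumed units: c = 1 for the velocities, and eV and kelvin for coldness. The function names are hypothetical, and the 10-year half-life in the usage line is an arbitrary example value.

```python
import math

K_B_EV = 1.380649e-23 / 1.602176634e-19   # Boltzmann constant in eV/K per nat

def decay_constant(half_life):
    """Part 4: lambda = ln 2 / t_half, in e-folds per unit time."""
    return math.log(2) / half_life

def g_from_wavelength(wavelength):
    """Part 2: spatial frequency g = 1/lambda in cycles per unit length (k = 2 pi g)."""
    return 1.0 / wavelength

def coldness(t_kelvin):
    """Part 3: 1/kT in nats per eV."""
    return 1.0 / (K_B_EV * t_kelvin)

def proper_velocity(v):
    """Part 1: w = gamma v in lightyears per traveler-year, for v in lightyears per map-year."""
    return v / math.sqrt(1.0 - v * v)

def coordinate_velocity(w):
    """Inverse of proper_velocity: v = w / sqrt(1 + w^2), which always stays below 1."""
    return w / math.sqrt(1.0 + w * w)

print(decay_constant(10.0))    # e-folds per year, for a hypothetical 10-year half-life
print(coldness(293.0))         # ≈ 39.6 nats/eV at room temperature
print(proper_velocity(0.8))    # ≈ 1.33 ly per traveler-year, even though v < 1
```

The first three pairs are reciprocals up to a constant (ln 2, 1, or k). The last pair is not, because w = v/√(1 − v²) can grow without bound while v stays below lightspeed.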


Footnotes

- ^ Thomas A. Moore (2003 2nd ed) Six ideas that shaped physics (McGraw-Hill, NY).

- ^ P. Fraundorf (1996) "A one-map two-clock approach to teaching relativity in introductory physics" (arXiv:physics/9611011)

- ^ P. Fraundorf (2011/2012) "Metric-first & entropy-first surprises", arXiv:1106.4698 [physics.gen-ph].

- ^ P. Fraundorf (2016/2017) Traveler-point dynamics, hal-01503971 working draft on-line discussion.

- ^ P. Fraundorf and Matt Wentzel-Long (2019) "Parameterizing the proper-acceleration 3-vector", Hyper Articles on Line operated by Centre National de la Recherche Scientifique, hal-02087094, working version.

- ^ Matt Wentzel-Long and P. Fraundorf (2019) "A class period on spacetime-smart 3-vectors with familiar approximates", laTeX draft with supplementary notes in an appendix (HAL-02196765), Word version in preparation for a physics education journal, Mobile-friendly supplementary notes.

- ^ P. Fraundorf (2008) "A simplex model for layered niche-networks", Complexity 13:6, 29-39 abstract e-print.

- ^ P. Fraundorf (2013) "Layer-multiplicity as a community order-parameter", arXiv:1306.5185 [physics.gen-ph] mobile-ready version current draft.

- ^ P. Fraundorf (2019) "Task layer multiplicity as a measure of community level health", Complexity 2019, 1082412, 8 pages, hal-01503096, laTeX pdf, new/earlier google sites.

- ^ P. Fraundorf (2016) "Piecewise-continuous nanoworlds online." UM-StL Dept. of Physics & Astronomy/Center for Nanoscience, hal-01364382 current pdf.

- ^ P. Fraundorf and Melanie Lipp (2016) "Molar standards & information units in the `new-SI'" hal-01381003 current pdf.

- ^ P. Fraundorf (2003) "Heat capacity in bits", Amer. J. Phys. 71:11, 1142-1151 (link).

- ^ P. Fraundorf (2006) "Friendly units for coldness" (arXiv:physics/0606227).

- ^ P. Fraundorf (2008) "The thermal roots of correlation-based complexity", Complexity 13:3, 16-26 abstract.

- ^ Stephen Wedekind and P. Fraundorf (Sept 2016) "Log complex color for visual pattern recognition of total sound" Audio Engineering Society Convention 141, paper 9647 AES library mobile-ready link.

- ^ Philip Fraundorf, Stephen Wedekind and Wayne Garver, Log complex color for visual pattern recognition of total sound, U.S. Patent 10,341,795 filed November 28, 2017 and issued July 2, 2019, WIPO, Justia, FPO.

- ^ Eric J. Chaisson (2004) "Complexity: An Energetics Agenda", Complexity 9, pp 14-21.

- ^ C.C. Yang, S. Li, "Size-dependent temperature–pressure phase diagram of carbon". J. Phys. Chem. 112, 1423–1426 (2008)

- ^ K. F. Kelton of Washington University in St. Louis, USA and A. L. Greer of University of Cambridge, UK (2010) Nucleation in Condensed Matter: Applications in Materials and Biology (Elsevier Science & Technology, Amsterdam) link.

- ^ C. Silva, P. Chrostoski & P. Fraundorf (2021) "DFT study of “unlayered-graphene solid” formation, in liquid carbon droplets at low pressures", MRS Advances 6:203-208 (Materials Research Society, Warrendale, PA) pdf.

- ^ Chathuri Silva, "COMPUTATIONAL STUDIES OF CARBON NANOCLUSTER SOLIDIFICATION" (2021) is the topic of a dissertation in partial fulfillment of the requirements for a Ph.D. in Physics and Astronomy at UM-StL and MST (UM-Rolla).

- ^ Phil Fraundorf and Martin Wackenhut (20 Oct 2002) "The core structure of pre-solar graphite onions" Ap. J. Lett. 578(2):L153-156 (American Astronomical Society). PDF here

- ^ P. Fraundorf, Melanie Lipp and Taylor Savage (2016) "Analogs for Unlayered-Graphene Droplet-Formation in Stellar Atmospheres", Microscopy and Microanalysis 22:S3, 1816-1817 pdf HAL-01356394.

- ^ Melanie Lipp, Taylor Savage, David Osborn and P. Fraundorf (2017) "Laboratory evidence of slow-cooling for carbon droplets from red-giant atmospheres", Microscopy and Microanalysis 23:S1, 2192-2193 pdf discussion poster.

- ^ P. Fraundorf, C. Silva, P. Chrostoski, M. Lipp (2021) "Characterization, synthesis, & modeling of unlayered graphene nucleation/growth in solidified carbon raindrops from presolar AGB atmospheres" (43rd COSPAR meeting) talk.

- ^ T. J. Hundley and P. Fraundorf (2018) "Lab simulation of carbon droplet cooling in AGB star atmospheres", 49th Lunar and Planetary Science Conference 2018 (LPI Contrib. No. 2083), 2154-2155 pdf.

- ^ Tristan Hundley and P. Fraundorf (2018) "Characterizing unlayered-graphene in homemade core\rim carbon raindrops", Microscopy and Microanalysis 24:S1, 2056-2057, pdf.

- ^ P. Fraundorf, Tristan Hundley and Melanie Lipp (2019) "Synthesis of unlayered graphene from carbon droplets: In stars and in the lab", HAL-02238804, draft for ApJLett, discussion.

- ^ P. Fraundorf, M. Lipp, T. Hundley, C. Silva and P. Chrostoski (2020) "Fraction crystalline from electron powder patterns of unlayered graphene in solidified carbon rain.", Microscopy and Microanalysis 26:S2 pp. 2838-2840 pdf.

- ^ Chrostoski, P.; Silva, C. & Fraundorf, P. (01 June 2021) "The rates of unlayered graphene formation in a supercooled carbon melt at low pressure", MRS Advances (Materials Research Society, Warrendale, PA) abstract.

- ^ Philip C. Chrostoski, "SEMI-EMPIRICAL MODELING OF LIQUID CARBON’S CONTAINERLESS SOLIDIFICATION" (2021) is the topic of a dissertation in partial fulfillment of the requirements for a Ph.D. in Physics and Astronomy at UM-StL and MST (UM-Rolla).

- ^ P. Chrostoski, P. Fraundorf, R. Molitor and C. Silva, "TEM Data & Thermal History Models for Presolar Core\Rim Carbon Spheres" 53rd Lunar and Planetary Science Conference 2022 (Lunar & Planetary Inst. Contrib. No. 2220), pdf.

- ^ R. J. Molitor, P. Fraundorf and P. Chrostoski (2022) "Stellar Atmosphere Cooling Rates For Presolar Core\Rim Carbon Spheres", 53rd Lunar and Planetary Science Conference 2022 (Lunar & Planetary Inst. Contrib. No. 2250), pdf.

- ^ P. Fraundorf (2003) "Heat capacity in bits", Amer. J. Phys. 71:11, 1142-1151 (link).

- ^ Seth Lloyd (1989) "Use of mutual information to decrease entropy: Implications for the second law of thermodynamics", Physical Review A 39, 5378-5386 (link).

- ^ Elad Schneidman, Susanne Still, Michael J. Berry II, and William Bialek (2003) "Network information and connected correlations", Phys. Rev. Lett. 91:23, 238701 (link).

- ^ P. W. Anderson (1972) "More is different", Science 177:4047, 393-396 pdf.

- ^ James P. Sethna (2006) Entropy, order parameters and complexity (Oxford U. Press, Oxford UK) (e-book pdf).

- ^ J. M. Ziman (1979) Models of disorder: The theoretical physics of homogeneously disordered systems (Cambridge U. Press, Cambridge UK).

- ^ One might object that liquid water at the atomic-scale has neither translational nor rotational invariance but, referring again to the importance of subsystem-correlations, our mutual-information about the state of liquid water molecules generally does have translational and rotational invariance.

- ^ John M. Cowley (1975) Diffraction Physics (North-Holland, Amsterdam) preview.

- ^ Fotini Markopoulou (2000) "An insider's guide to quantum causal histories", Nucl.Phys.Proc.Suppl. 88, 308-313 arxiv:hep-th/9912137.

- ^ Louis Crane (1995) "Clock and Category; IS QUANTUM GRAVITY ALGEBRAIC" J.Math.Phys. 36 6180-6193 arxiv:gr-qc/9504038.

- ^ Lee Smolin (2007) The Trouble with Physics (Houghton Mifflin) Interspersed with the story of modern physics, the author argues that (1) theoretical physics has been prejudiced in favor of string theory to the point that other promising approaches have been entirely neglected and disdained by the mainstream theoretical physics culture and that (2) there are two kinds of physicists, the calculative and the imaginative. He further argues that the system is very biased toward the calculative. See here preview.

- ^ Seth Lloyd (1989) "Use of mutual information to decrease entropy: Implications for the second law of thermodynamics", Physical Review A 39, 5378-5386 (link).

- ^ Tribus, Myron (1961) Thermodynamics and Thermostatics: An Introduction to Energy, Information and States of Matter, with Engineering Applications (D. Van Nostrand Company Inc., 24 West 40 Street, New York 18, New York, U.S.A) ASIN: B000ARSH5S.

- ^ E. T. Jaynes (2003) Probability theory: The logic of science (Cambridge University Press, UK) preview.

- ^ Phil C. Gregory (2005) Bayesian logical data analysis for the physical sciences: A comparative approach with Mathematica support (Cambridge U. Press, Cambridge UK) preview.

- ^ Burnham, K. P. and Anderson D. R. (2002) Model Selection and Multimodel Inference: A Practical Information-Theoretic Approach, Second Edition (Springer Science, New York) ISBN 978-0-387-95364-9.

- ^ M. Tribus and E. C. McIrvine (1971) "Energy and information", Scientific American 224:179-186.

- ^ Seth Lloyd (1989) "Use of mutual information to decrease entropy: Implications for the second law of thermodynamics", Physical Review A 39, 5378-5386 (link).

- ^ Charles H. Bennett, Ming Li and Bin Ma (June 2003) "Chain letters and evolutionary histories" Scientific American.

- ^ Thomas D. Schneider (2000) "Evolution of biological information", Nucleic Acids Research 28:14, 2794-2799 pdf movie.