User talk:Ancheta Wis/sandbox

https://hackage.haskell.org/package/compose-ltr left-to-right composition

https://stackoverflow.com/tags?page=2&tab=popular

cf: Ernst von Salomon https://www.worldcat.org/title/answers-of-ernst-von-salomon-to-the-131-questions-in-the-allied-military-government-fragebogen/oclc/1509800

"You are accessing a U.S. Government (USG) Information System (IS) that is provided for USG-authorized use only.

By using this IS (which includes any device attached to this IS), you consent to the following conditions:

The USG routinely intercepts and monitors communications on this IS for purposes including, but not limited to, penetration testing, COMSEC monitoring, network operations and defense, personnel misconduct (PM), law enforcement (LE), and counterintelligence (CI) investigations.

At any time, the USG may inspect and seize data stored on this IS.

Communications using, or data stored on, this IS are not private, are subject to routine monitoring, interception, and search, and may be disclosed or used for USG-authorized purpose.

This IS includes security measures (e.g., authentication and access controls) to protect USG interests--not for your personal benefit or privacy.

Notwithstanding the above, using this IS does not constitute consent to PM, LE or CI investigative searching or monitoring of the content of privileged communications, or work product, related to personal representation or services by attorneys, psychotherapists, or clergy, and their assistants. Such communications and work products are private and confidential."

"Power: capacity for A to influence the behavior of B so that B acts in accordance with A's intent." --Robbins & Judge 14th ed.

- https://othjournal.com/2019/03/04/vulnerabilities-of-multi-domain-command-and-control-part-1/

- https://othjournal.com/2019/03/06/vulnerabilities-of-multi-domain-command-and-control-part-2/

- http://c4i-technology-news.blogspot.com/2011/12/us-armys-common-operating-environment.html

- https://breakingdefense.com/2019/12/from-the-baltic-to-black-seas-defender-exercise-goes-big-with-a-big-price-tag/

- https://www.sciencedirect.com/science/article/pii/0957417496000309 A hybrid expert system for scheduling the U.S. Army's Close Combat Tactical Trainer (CCTT)

- https://asc.army.mil/web/portfolio-item/close-combat-tactical-trainer-cctt/ CLOSE COMBAT TACTICAL TRAINER (CCTT)

- https://www.realcleardefense.com/articles/2017/12/06/the_next_revolution_in_military_affairs_multi-domain_command_and_control_112741.html

- https://www.japcc.org/multi-domain-command-and-control/

- https://www.dvidshub.net/feature/rapidforge2019

- https://www.jcs.mil/Portals/36/Documents/Doctrine/pubs/jp1_ch1.pdf?ver=2019-02-11-174350-967

- https://breakingdefense.com/2019/01/hack-jam-sense-shoot-army-creates-1st-multi-domain-unit/

- https://www.dvidshub.net/image/5932060/i2cews-factsheet

- https://www.dvidshub.net/news/306986/new-space-cyber-battalion-activates-jblm

- https://www3.nd.edu/~sbernste/LewisTCTI.pdf

- https://v4.chriskrycho.com/2016/the-covering-law-model.html

- https://perso.uclouvain.be/peter.verdee/counterfactuals/lewis3.pdf

- https://www.jstor.org/stable/25592003?seq=33#metadata_info_tab_contents How Science Textbooks Treat Scientific Method: A Philosopher's Perspective

- https://link.springer.com/chapter/10.1007/978-94-011-1735-7_1 The Deductive-Nomological Model Briefly Revisited

At the cost of restating the points above (I retain the abbreviations listed above, and the names of the sources from the Scientific method article), I summarize in one thread:

- There is no canonical list of steps which applies to all researchers, because everyone begins from a different basis for understanding.[1] (This somewhat corroborates Feyerabend, who seems to be more nihilistic in his statements.) Pólya suggests that students attempt to restate the problem in their own words. This restatement serves the teacher as a check that students understand enough to capture a problem in their own words, from their own experience. In other words, silence denotes noncomprehension, by Pólya's lights.

- Knowing that we do not understand something is a step along the path toward our understanding it. The next step beyond our inability to express it in our own words is to acquire more basic blocks so that we can make a plan.

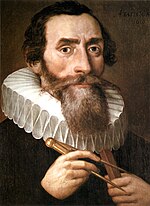

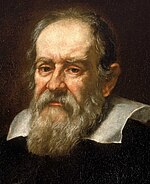

- That said, scientific method requires that students already have command of the basics of their subject of study, such as an understanding of mathematical functions[2] (enough to understand the law of falling bodies, for example). A teacher could then review Galileo's experimental setup to show how he discovered the law of falling bodies.

- The hammer and feather drop on the Moon could then be displayed as a counter-example to Aristotle's earthbound feather drop demonstration, to show the limits of a purely empiricist philosophy. The demonstration could also clearly limn the science and its limits of certainty.

- The need to teach from a shared base of knowledge (for example, Andrew Carberry (Updated: March 28, 2019) How to Build a Compost Pile) depends on a common shared experience. The shared experience allows the teacher and the student to communicate to each other that which needs to be established to achieve the goals (for example, improving the landscaping in a park using its natural resources).

- Pólya's dictum "Use your own brain first" is a restatement of his "First, you have to understand the problem," as a researcher. This dictum applies to a covering-law (CL) viewpoint which models a law of nature, or an institution, to cover the answer needed (profession of a CL thus implies that a researcher has accepted the risk of renouncing that CL, should it fail to cover the problem domain). At most, a CL is for an established institution or science (See Feynman's lectures in physics Vol I, ch. 2 -- his references to "law known, law partially known, law unknown"). Pólya's "make a plan" thus has 3 variants:

- Law known: one plan might be: use deductive-nomological method (DN) to search for a systematic explanation — when a law is known, it entails known resources, such as lab equipment, time to use some scientific instrument, some location in space to observe some phenomenon, some expertise possessed by people at some institution such as a lab, a library, and so forth. Results are thus available from those resources, and Pólya's "execute the plan" has a predictable mission. This is the simplest path for rote problems, because it saves a teacher's time, a scarce resource.

- Law partially known: one plan in the face of partial understanding might be: exploit the Five Ws to narrow the search for a systematic explanation, to learn what resources, mechanisms, or equipment are needed, at what cost. It may be that the will to know the answer depends on a marketplace for interested parties, who then have a stake in the quest for the answer by the researcher. Even if the problem has only been partially solved, Pólya's "look back" is a way for a researcher to consolidate any lessons learned during the progress that has been made.

- Law unknown: currently our best-understood model for scientific method is the hypothetico-deductive model (HD), used in the search for a systematic explanation: we posit a hypothetical explanation and seek disproof (it is a logical error to seek proof of some hypothesis; see confirmation bias). Blachowicz 2009 notes that only 3 out of 70 texts use the hypothetico-deductive model for teaching scientific method. Again, there is no guarantee that the process will in fact converge on a systematic answer. Pólya cautions that "there may be an easier problem for you to solve. Find it." Experiment to learn a cause and effect relationship in resources of the system under study. Eventually, researchers can marshal mechanisms and equipment to institute systematic effects on the system under study.

- "Mathematics presented with rigor is a systematic deductive science, but mathematics in the making is an experimental inductive science." --Pólya, How to Solve It

References

- ^ Subjective theory of value

- ^ "I do not think [causality modelled as a mathematical function] can be further analyzed" —paraphrase of Max Born (1949) Natural Philosophy of Cause and Chance

Black hole: accretion disk, far side, shadow, underside, and photon ring

OK, I am going to write out a tutorial here which is not meant to go into the article; it is written in terms of understanding the imaging problem for M87*, and hopefully Sagittarius A*, which is the next step.

The Event Horizon Telescope (EHT) is a radio telescope, meaning that the electromagnetic wavelengths (millimeters) which EHT observes are much longer than visible light's wavelengths. The analog to the beam of light we are used to, for visible light, is spread out, like the flashlight beam we use in summer camp. The central bright beam is surrounded by successive dark and bright rings (called phases), like a searchlight. Instead of pointing the searchlight by hand, EHT uses a basic technique for interferometry; the bright and dark rings of sensitivity of EHT are built up of individual beams received by each individual telescope. Instead of emitting light, EHT observes light (in the radio spectrum rather than the visible spectrum of light). This lets EHT form an observation beam almost as wide as Earth. By summing up the dark and bright rings of sensitivity, EHT can be pointed at M87*. This synthetic aperture allows EHT to be pointed at M87* much more precisely than its component telescopes. When EHT turns on its receivers the radio signal is synchronized to the same clock standard for each individual telescope.
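As a rough worked estimate of why an Earth-sized synthetic aperture can be pointed so precisely, the usual diffraction relation for angular resolution can be applied. The numbers are assumptions that do not appear in the tutorial above: a wavelength of about 1.3 mm (the text says only "millimeters") and Earth's diameter, about 1.27 × 10^7 m, as an upper limit on the separation D between telescopes.

```latex
\theta \approx \frac{\lambda}{D}
  \approx \frac{1.3 \times 10^{-3}\,\mathrm{m}}{1.27 \times 10^{7}\,\mathrm{m}}
  \approx 1.0 \times 10^{-10}\,\mathrm{rad}
  \approx 21\,\mu\mathrm{as}
```

This is only an order-of-magnitude sketch; the separations actually achieved between sites are somewhat shorter than Earth's diameter, so the achieved resolution would be somewhat coarser than this figure.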

EHT observed M87* for four successive nights, with this synchronized network of telescopes. The observational problem was to distinguish M87* from its background. One data processing problem was to handle the empty spaces between the point sources which make up galaxy M87, while keeping the relevant signal from M87*.

Clarence K.K. Chinn

- https://www.dvidshub.net/news/259736/usarpac-retirement-ceremoy

- https://www.army.mil/article/156496/us_army_south_advancing_existing_relationships_forging_new_ones

- https://www.army.mil/article/152867/army_south_completes_ninth_bilateral_staff_talks_with_el_salvador

- https://www.army.mil/article/50262/chinn_takes_command_of_joint_readiness_training_center_and_fort_polk

- https://ar.usembassy.gov/wp-content/uploads/sites/26/2016/08/Clarence-K.pdf

- 1st Theater Sustainment Command CENTCOM

- 8th Theater Sustainment Command USFK

- 21st Theater Sustainment Command[5] EUCOM

- 377th Theater Sustainment Command Reserve

- 3rd Sustainment Command (Expeditionary)[6] Airborne

- 13th Sustainment Command (Expeditionary) CONUS West

- 19th Sustainment Command (Expeditionary) Daegu South Korea[7]

- 10th Army Air and Missile Defense Command EUCOM

- 32nd Army Air and Missile Defense Command[8] CONUS etc

- 94th Army Air and Missile Defense Command USFK

- 263rd Army Air and Missile Defense Command Reserve

References

- ^ (1997) History of Sandia Labs

- ^ Office of the Chief of Public Affairs, US Army (10.16.2019) 2019 AUSA Warriors Corner - TacticalSpace: Delivering Future Force Space Capabilities

- ^ Kenneth J. Bocam, Kenneth J. Hyatt, Stephen J. Kalasky, Dominick D. Risaliti, Krystal Arroyo-Flores, Fred Eckert, Gregory Gallant, Glen E. Cameron and Stephen J. Fujikawa --Adcole Maryland Aerospace (Jun 28, 2018); Wheeler K. Hardy, Mark E. Ray, Christian J. Reyes, Matthew A. Hitt, Mason E. Nixon --USASMDC/ARSTRAT --pre publication release #8018, cleared for publication: (08 Feb 2018) Kestrel Eye Block II 32nd Annual AIAA/USU Conference on Small Satellites 2018 pdf

- (9 August 2012) USAF Kestrel Eye

- (2012) Kestrel Eye Block 2A

- LEO John London (26 March 2015) SMDC space initiatives pdf

- John Keller (Oct 2nd, 2014) Army taps Quantum Research to build imaging nano-satellites for front-line warfighters

- Elizabeth Howell (October 24, 2017) The Army Has a New Eye in the Sky

- Stephanie Mills (Oct 24, 2017) Kestrel Eye satellite sent into space from ISS

- US Army (Oct 25, 2017) US Army deploys its own Kestrel Eye satellite

- Jason Cutshaw (Oct 24, 2017) Army deploys Kestrel Eye satellite

- Jason B. Cutshaw (SMDC/ARSTRAT) (October 25, 2017) Army deploys Kestrel Eye satellite

- Steve Johnson (November 3, 2017) A new satellite in orbit has the attention of Redstone Arsenal

- Sandra Erwin (February 21, 2018) Army’s imaging satellite up and running, but its future is TBD

- Sydney J. Freedberg Jr. (November 22, 2019 at 7:00 AM) SecArmy’s Multi-Domain Kill Chain: Space-Cloud-AI

- (2019-11-23) kestrel eye orbit history

- ^ Todd South (5 Jun 2019) Tactics, tech and work of close combat experts is turning warfare ‘upside down’

- ^ Maj. Gen. Steven A. Shapiro and Maj. Oliver Davis (January 2, 2019) Mission command of sustainment operations https://www.army.mil/article/215302/mission_command_of_sustainment_operations

- ^ Maj. Daniel J. N. Belzer (January 2, 2019) Command relationships between corps and ESCs ESC = (Expeditionary Support Command); TSC = (Theater Support Command)

- ^ (December 14, 2017) "Team 19" begins new chapter

- ^ Army Regulation 220–1 15 April 2010

- The 32d Army Air and Missile Defense Command (AAMDC) is a theater level Army air and missile defense multi-component organization with a worldwide, 72-hour deployment mission. 32d AAMDC consists of four brigades, 11th ADA, 31st ADA, 69th ADA and 108th ADA

- Well, what you are saying is the right editor (not me, I'm not the right one) needs to look at the exterior derivative of the Hodge star operator on the electromagnetic tensor and search for a citation about how E and B ought to behave in the physical case I am alluding to above (the prediction). I am specifically not calling for that editor to deduce and then publish that prediction here. That would be WP:OR and not suitable for the encyclopedia in that form. Intuition tells me there could be some off-diagonal components (for the EM case, something akin to polarization) in another kind of spacetime.

- I urge you to self-revert; it's OK to discuss this on the talk page, though, as the kind of citation we are seeking would materially improve the article after some editor finds it.

- It is customary to sign our contributions on this talk page with --~~~~ --Ancheta Wis (talk | contribs) 19:41, 11 May 2019 (UTC)

The basis of the Haskell programming language can be found in Miranda, a proprietary language of David Turner. The aim was to concentrate functional programming concepts as open source without reinventing functional programming over and over. As a result, Haskell has a small cohesive core which has remained stable for thirty years. Discussing the Girard-Reynolds isomorphism can make the Haskell Core understandable;[1] Core is really the implementation of the Girard-Reynolds type system.

- Constructivism (philosophy of mathematics) was chosen because it is the philosophy-put-into-practice of a software developer, as is Intuitionistic type theory and Intuitionistic logic. They are quite practical, and more than theoretical, in other words.

- Alpha equivalence, Beta reduction, and Eta conversion are basic for Haskell; you can't proceed without them, as Chris Allen has shown.

- Anonymous functions, or better, lambdas are part of Haskell.

- Continuation-passing style is a part of Haskell which means that functional reactive programming can be implemented in Haskell.

- Curry-Howard isomorphism and the Girard-Reynolds isomorphism are fundamental to Haskell

- Currying is a part of Haskell.

- Declarative programming and Denotational semantics are only part of what is needed to code in Haskell

- Domain-specific language -- covered above

- Effect systems can be coded in Haskell. See Oleg Kiselyov's work, coded in Haskell, on extensible effects

- The Exponential object is a notation to express higher level type application to other types, which is allowed in Girard-Reynolds (the Haskell core)[1]

- Expression (computer science) and Expression (mathematics) are integrated into one concept in this language, which points to the implementation of Girard-Reynolds (the Haskell core).[1]

Expr is about seven lines of Haskell code (a sum type) in Haskell Core.[1]

- Function types are First-class citizens in Haskell

- Hash consing

- Higher-order abstract syntax -- covered above, but Higher-order functions and Higher-order logic are part and parcel of any Haskell implementation of some Domain-specific language (some specific situation -- you can think of this as a Business Case)

- The Hindley–Milner type system is decidable, and has been part of Miranda and Haskell since their early days. This is the reason that Haskell can automate type inference.

- Intuitionistic type theory -- covered above

- Intuitionistic logic -- covered above

- Kan extensions allow us to carry along the data so that we can call by need, a practical part of both effectful programming (i.e. monads) and lazy evaluation.

- Lambda calculus definition, Lambda calculus, and Lambda lifting allow us to exploit Lazy evaluation when we need it along some axis of the Lambda cube. Note that the specialization to some Topos allows us to skip past the infinities that some other languages would force us to step into, but which lazy evaluation allows us to skip.

- Linear logic is Girard's resource-oriented logic, which is a basis for Effect systems

- Monad (functional programming) was first implemented in Haskell

- Natural deduction -- covered above. Note that it is groundwork for the normal forms which get produced by alpha, beta, and eta transformations covered above.

- Normal form (abstract rewriting) is a fundamental part of a Haskell thunk, but weak head normal form doesn't even have an article yet

- Partial application and Partial function are discussed above. They are built into the Haskell language with a consistent grammar which doesn't even need to identify them as special cases; they are first-class citizens of the language. Now compare to other functional languages which have to create special machinery. 16:44, 24 March 2019 (UTC)

- Partially ordered sets are fundamental to any Haskell type. They need to be derived via natural deduction, bottom-up for each topos.

- Pointfree programming is either very high-level (via Higher-order type classes) or very low-level (via natural deduction). As an example, the point-free implementation of Map-reduce (call it mr) of Google fame is one line in Haskell:

mr = (. map).(.).foldr

--credit: Udo Stenzel's derivation. ghci consumes this without complaint. Other languages implement it with thousandfold (or more) increases in lines of code. --16:44, 24 March 2019 (UTC)

- Polymorphism (computer science) can be implemented by the Girard-Reynolds isomorphism, implemented largely in Haskell Core,[1] which is typed (colloquially termed Big Lambda) in less than 20 lines of Haskell. --Ancheta Wis (talk | contribs) 16:44, 24 March 2019 (UTC)

- Purely functional data structures, and Purely functional programming can exploit lazy evaluation while using some interpreter such as ghci to prove that the Haskell code is correct for the denoted cases (which could be infinite in number).

- Rewriting -- the transformations from one form to another (e.g., the alpha, beta, eta discussed above) while remaining assured of correctness. This in particular is a difficult concept to grok because the different forms can morph from one to the other, and the skilled practitioners seem to have this ability of fluent translation, while remaining mathematically correct, to the despair of anyone trying to follow their code. --Ancheta Wis (talk | contribs) 16:44, 24 March 2019 (UTC)

- Scott continuity describes a mapping between posets, which are the elements of the type system being implemented in the Haskell code. For example, "two particularly notable examples of Scott-continuous functions are curry and apply." Their cartesian closed category cannot be a foreign concept to a Haskeller. --Ancheta Wis (talk | contribs) 13:53, 24 March 2019 (UTC)

- Sequent -- discussed above

- Sequent calculus -- discussed above, as the framework for expressions which surround a Turnstile --Ancheta Wis (talk | contribs) 16:44, 24 March 2019 (UTC)

- SECD machine (an abstract machine) for implementing Lambda calculus

- Side effect (computer science) -- not a part of Haskell, unless you seek one

- Strictness analysis -- there is an operator which tells the compiler to reduce the expression right away, absolving the coder of the responsibility of carrying along its weak head normal form (the lazy instance). --16:44, 24 March 2019 (UTC)

- Subobject classifier -- a necessary part of a topos. A classifier might be reducible to a Boolean function, but the Subobject classifier is allowed to be even more general (à la homotopy type theory (HoTT)).[2] This kind of classifier is a predicate in Gottlob Frege's sense as it includes semantic meaning. --Ancheta Wis (talk | contribs) 13:44, 25 March 2019 (UTC)

- Supercombinator

- Syntactic closure

- System F -- see Girard-Reynolds

- Tail call -- one strategy for handling thunks

- Thunk -- a fundamental part of Haskell, discussed above

- Turnstile (symbol) used in sequents above

- Type classes were first used in Haskell

- Type constructors reside on the left hand side of a declaration, while data constructors reside on the right hand side of a declaration, and can be sum types or product types. --Ancheta Wis (talk | contribs) 16:44, 24 March 2019 (UTC)

- The kind of Type inference which Haskell offers depends on the Type system it implements; Haskell at one time supported only Hindley-Milner, but now offers a version of System F --Ancheta Wis (talk | contribs) 16:44, 24 March 2019 (UTC)

- Intuitionistic Type theory is a basis for Haskell

- Unified Modeling Language can depict Haskell code, because it has arrows. This defines graphs and allows Graph rewriting.
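The point-free one-liner `mr` quoted in the list above can be unfolded by hand to see what it computes. The sketch below is only an illustration: the type signatures, the names `mrPointful` and `mapReduce1`, and the `foldr1` variant are assumptions added here, not part of the quoted derivation. Unfolding the compositions suggests that, as written with `foldr`, the mapped list lands in the seed position of the fold, while swapping in `foldr1` gives the more familiar "map, then reduce" shape.

```haskell
-- The expression as quoted, with an explicit signature added
-- (the signature is an assumption; the source gives none).
mr :: (a -> [c] -> [c]) -> (b -> c) -> [b] -> [a] -> [c]
mr = (. map) . (.) . foldr

-- Hand-unfolded, pointful reading of the same expression:
-- the mapped list (map g xs) becomes the seed of the fold over ys.
mrPointful :: (a -> [c] -> [c]) -> (b -> c) -> [b] -> [a] -> [c]
mrPointful f g xs ys = foldr f (map g xs) ys

-- Hypothetical variant using foldr1, which needs no seed:
-- map g over the input, then reduce the results with f.
-- Like foldr1 itself, it fails on an empty input list.
mapReduce1 :: (c -> c -> c) -> (b -> c) -> [b] -> c
mapReduce1 = (. map) . (.) . foldr1
```

For instance, `mr (:) (+ 1) [1, 2] [10, 20]` evaluates to `[10, 20, 2, 3]`, and `mapReduce1 (+) (* 2) [1, 2, 3]` evaluates to `12`.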

15:32, 13 March 2019

The scientific method is an empirical method of acquiring knowledge that has characterized the development of science since at least the 17th century. It involves careful observation, applying rigorous skepticism about what is observed, given that cognitive assumptions can distort how one interprets the observation. It involves formulating hypotheses, via induction, based on such observations; experimental and measurement-based testing of deductions drawn from the hypotheses; and refinement (or elimination) of the hypotheses based on the experimental findings. These are principles of the scientific method, as distinguished from a definitive series of steps applicable to all scientific enterprises.[3][4][5]

Though diverse models for the scientific method are available, there is in general a continuous process that includes observations about the natural world. People are naturally inquisitive, so they often come up with questions about things they see or hear, and they often develop ideas or hypotheses about why things are the way they are. The best hypotheses lead to predictions that can be tested in various ways. The most conclusive testing of hypotheses comes from reasoning based on carefully controlled experimental data. Depending on how well additional tests match the predictions, the original hypothesis may require refinement, alteration, expansion or even rejection. If a particular hypothesis becomes very well supported, a general theory may be developed.[6]

Although procedures vary from one field of inquiry to another, the underlying process is frequently the same. The process of the scientific method involves making conjectures (hypotheses), deriving predictions from them as logical consequences, and then carrying out experiments or empirical observations based on those predictions.[7][8] A hypothesis is a conjecture, based on knowledge obtained while seeking answers to the question. The hypothesis might be very specific, or it might be broad. Scientists then test hypotheses by conducting experiments or studies. A scientific hypothesis must be falsifiable, implying that it is possible to identify a possible outcome of an experiment or observation that conflicts with predictions deduced from the hypothesis; otherwise, the hypothesis cannot be meaningfully tested.[9]

The purpose of an experiment is to determine whether observations agree with or conflict with the predictions derived from a hypothesis.[10] Experiments can take place anywhere from a garage to CERN's Large Hadron Collider. There are difficulties in a formulaic statement of method, however. Though the scientific method is often presented as a fixed sequence of steps, it represents rather a set of general principles.[11] Not all steps take place in every scientific inquiry (nor to the same degree), and they are not always in the same order.[12][13] Some philosophers and scientists have argued that there is no scientific method; they include physicist Lee Smolin[14] and philosopher Paul Feyerabend (in his Against Method). Robert Nola and Howard Sankey remark that "For some, the whole idea of a theory of scientific method is yester-year's debate, the continuation of which can be summed up as yet more of the proverbial deceased equine castigation. We beg to differ."[15]

History

Important debates in the history of science concern rationalism, especially as advocated by René Descartes; inductivism and/or empiricism, as argued for by Francis Bacon, and rising to particular prominence with Isaac Newton and his followers; and hypothetico-deductivism, which came to the fore in the early 19th century.

The term "scientific method" emerged in the 19th century, when a significant institutional development of science was taking place and terminologies establishing clear boundaries between science and non-science, such as "scientist" and "pseudoscience", appeared.[21] Throughout the 1830s and 1850s, by which time Baconianism was popular, naturalists like William Whewell, John Herschel, and John Stuart Mill engaged in debates over "induction" and "facts" and were focused on how to generate knowledge.[21] In the late 19th century, a debate over realism vs. antirealism was conducted as powerful scientific theories extended beyond the realm of the observable.[22]

The term "scientific method" came into popular use in the twentieth century, popping up in dictionaries and science textbooks, although there was little scientific consensus over its meaning.[21] Although there was a growth through the middle of the twentieth century, by the end of that century numerous influential philosophers of science like Thomas Kuhn and Paul Feyerabend had questioned the universality of the "scientific method" and in doing so largely replaced the notion of science as a homogeneous and universal method with that of it being a heterogeneous and local practice.[21] In particular, Paul Feyerabend argued against there being any universal rules of science.[22] Historian of science Daniel Thurs maintains that the scientific method is a myth or, at best, an idealization.[23]

Overview

The scientific method is the process by which science is carried out.[24] As in other areas of inquiry, science (through the scientific method) can build on previous knowledge and develop a more sophisticated understanding of its topics of study over time.[25][26][27][28][29][30] This model can be seen to underlie the scientific revolution.[31]

The ubiquitous element in the model of the scientific method is empiricism, or more precisely, epistemologic sensualism. This is in opposition to stringent forms of rationalism: the scientific method embodies the position that reason alone cannot solve a particular scientific problem. A strong formulation of the scientific method is not always aligned with a form of empiricism in which the empirical data is put forward in the form of experience or other abstracted forms of knowledge; in current scientific practice, however, the use of scientific modelling and reliance on abstract typologies and theories is normally accepted. The scientific method is of necessity also an expression of an opposition to claims that e.g. revelation, political or religious dogma, appeals to tradition, commonly held beliefs, common sense, or, importantly, currently held theories, are the only possible means of demonstrating truth.

Different early expressions of empiricism and the scientific method can be found throughout history, for instance with the ancient Stoics, Epicurus,[32] Alhazen,[33] Roger Bacon, and William of Ockham. From the 16th century onwards, experiments were advocated by Francis Bacon, and performed by Giambattista della Porta,[34] Johannes Kepler,[35] and Galileo Galilei.[36] There was particular development aided by theoretical works by Francisco Sanches,[37] John Locke, George Berkeley, and David Hume.

The hypothetico-deductive model,[38] formulated in the 20th century, is the ideal, although it has undergone significant revision since first proposed (for a more formal discussion, see below). Staddon (2017) argues it is a mistake to try following rules,[39] which are best learned through careful study of examples of scientific investigation.

Process

The overall process involves making conjectures (hypotheses), deriving predictions from them as logical consequences, and then carrying out experiments based on those predictions to determine whether the original conjecture was correct.[7] There are difficulties in a formulaic statement of method, however. Though the scientific method is often presented as a fixed sequence of steps, these actions are better considered as general principles.[12] Not all steps take place in every scientific inquiry (nor to the same degree), and they are not always done in the same order. As noted by scientist and philosopher William Whewell (1794–1866), "invention, sagacity, [and] genius"[13] are required at every step.

Formulation of a question

The question can refer to the explanation of a specific observation, as in "Why is the sky blue?" but can also be open-ended, as in "How can I design a drug to cure this particular disease?" This stage frequently involves finding and evaluating evidence from previous experiments, personal scientific observations or assertions, as well as the work of other scientists. If the answer is already known, a different question that builds on the evidence can be posed. When applying the scientific method to research, determining a good question can be very difficult and it will affect the outcome of the investigation.[40]

Hypothesis

A hypothesis is a conjecture, based on knowledge obtained while formulating the question, that may explain any given behavior. The hypothesis might be very specific; for example, Einstein's equivalence principle or Francis Crick's "DNA makes RNA makes protein",[41] or it might be broad; for example, unknown species of life dwell in the unexplored depths of the oceans. A statistical hypothesis is a conjecture about a given statistical population. For example, the population might be people with a particular disease. The conjecture might be that a new drug will cure the disease in some of those people. Terms commonly associated with statistical hypotheses are null hypothesis and alternative hypothesis. A null hypothesis is the conjecture that the statistical hypothesis is false; for example, that the new drug does nothing and that any cure is caused by chance. Researchers normally want to show that the null hypothesis is false. The alternative hypothesis is the desired outcome, that the drug does better than chance. A final point: a scientific hypothesis must be falsifiable, meaning that one can identify a possible outcome of an experiment that conflicts with predictions deduced from the hypothesis; otherwise, it cannot be meaningfully tested.
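The null-hypothesis reasoning in the drug example can be made concrete with a small exact calculation. The following is a minimal sketch, assuming a simple binomial model in which each patient recovers independently with some chance-alone probability under the null hypothesis. The function names and any figures passed to them (for example 20 patients, 9 recoveries, a 25% spontaneous recovery rate) are hypothetical and not taken from the text above.

```haskell
-- Binomial coefficient "n choose k", in exact Integer arithmetic.
choose :: Integer -> Integer -> Integer
choose n k
  | k < 0 || k > n = 0
  | otherwise      = product [n - k + 1 .. n] `div` product [1 .. k]

-- Probability of exactly k recoveries among n patients when each
-- recovers independently with probability p (the null hypothesis).
binomialPmf :: Integer -> Double -> Integer -> Double
binomialPmf n p k
  | k < 0 || k > n = 0
  | otherwise      = fromIntegral (choose n k) * p ^ k * (1 - p) ^ (n - k)

-- One-sided p-value: probability, under the null hypothesis, of
-- seeing at least k recoveries among n patients.
pValueAtLeast :: Integer -> Double -> Integer -> Double
pValueAtLeast n p k = sum [binomialPmf n p j | j <- [max 0 k .. n]]
```

A call such as `pValueAtLeast 20 0.25 9` then gives the chance of seeing nine or more recoveries if the drug did nothing; a small value counts as evidence against the null hypothesis, under the stated model assumptions.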

Prediction

This step involves determining the logical consequences of the hypothesis. One or more predictions are then selected for further testing. The less likely a prediction is to be correct simply by coincidence, the more convincing it is if the prediction is fulfilled; evidence is also stronger if the answer to the prediction is not already known, due to the effects of hindsight bias (see also postdiction). Ideally, the prediction must also distinguish the hypothesis from likely alternatives; if two hypotheses make the same prediction, observing the prediction to be correct is not evidence for either one over the other. (These statements about the relative strength of evidence can be mathematically derived using Bayes' theorem.)[42]
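The statements about the strength of evidence can be checked numerically. The sketch below applies Bayes' theorem to three hypothetical predictions; the prior of 0.5 and all the likelihood values are assumptions chosen only for illustration.

```python
def posterior(prior: float, p_obs_if_true: float, p_obs_if_false: float) -> float:
    """Bayes' theorem: probability of the hypothesis after the prediction is observed."""
    evidence = prior * p_obs_if_true + (1 - prior) * p_obs_if_false
    return prior * p_obs_if_true / evidence


prior = 0.5  # assumed starting credence in the hypothesis

# A risky prediction: unlikely to come true unless the hypothesis holds.
risky = posterior(prior, p_obs_if_true=0.9, p_obs_if_false=0.01)

# A safe prediction: likely to come true whether or not the hypothesis holds.
safe = posterior(prior, p_obs_if_true=0.9, p_obs_if_false=0.8)

# A prediction shared with the alternatives: equally likely either way.
shared = posterior(prior, p_obs_if_true=0.9, p_obs_if_false=0.9)

print(f"risky: {risky:.3f}  safe: {safe:.3f}  shared: {shared:.3f}")
```

Under these assumptions the fulfilled risky prediction raises the probability of the hypothesis far more than the safe one, and the shared prediction leaves it at the prior.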

Testing

This is an investigation of whether the real world behaves as predicted by the hypothesis. Scientists (and other people) test hypotheses by conducting experiments. The purpose of an experiment is to determine whether observations of the real world agree with or conflict with the predictions derived from a hypothesis. If they agree, confidence in the hypothesis increases; otherwise, it decreases. Agreement does not assure that the hypothesis is true; future experiments may reveal problems. Karl Popper advised scientists to try to falsify hypotheses, i.e., to search for and test those experiments that seem most doubtful. Large numbers of successful confirmations are not convincing if they arise from experiments that avoid risk.[10] Experiments should be designed to minimize possible errors, especially through the use of appropriate scientific controls. For example, tests of medical treatments are commonly run as double-blind tests. Test personnel, who might unwittingly reveal to test subjects which samples are the desired test drugs and which are placebos, are kept ignorant of which are which. Such hints can bias the responses of the test subjects. Furthermore, failure of an experiment does not necessarily mean the hypothesis is false. Experiments always depend on several hypotheses, e.g., that the test equipment is working properly, and a failure may be a failure of one of the auxiliary hypotheses. (See the Duhem–Quine thesis.) Experiments can be conducted in a college lab, on a kitchen table, at CERN's Large Hadron Collider, at the bottom of an ocean, on Mars (using one of the working rovers), and so on. Astronomers do experiments, searching for planets around distant stars. Finally, most individual experiments address highly specific topics for reasons of practicality. As a result, evidence about broader topics is usually accumulated gradually.

Analysis

This involves determining what the results of the experiment show and deciding on the next actions to take. The predictions of the hypothesis are compared to those of the null hypothesis, to determine which is better able to explain the data. In cases where an experiment is repeated many times, a statistical analysis such as a chi-squared test may be required. If the evidence has falsified the hypothesis, a new hypothesis is required; if the experiment supports the hypothesis but the evidence is not strong enough for high confidence, other predictions from the hypothesis must be tested. Once a hypothesis is strongly supported by evidence, a new question can be asked to provide further insight on the same topic. Evidence from other scientists and experience are frequently incorporated at any stage in the process. Depending on the complexity of the experiment, many iterations may be required to gather sufficient evidence to answer a question with confidence, or to build up many answers to highly specific questions in order to answer a single broader question.
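As an illustration of the kind of statistical analysis mentioned above, the following sketch computes a chi-squared goodness-of-fit statistic by hand. The observed counts and the predicted 3:1 ratio are hypothetical; 3.841 is the standard 5% critical value of the chi-squared distribution with one degree of freedom.

```python
def chi_squared_statistic(observed, expected):
    """Sum of (observed - expected)^2 / expected over all categories."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


# Hypothetical counts from a repeated experiment with two outcomes.
observed = [290, 110]
total = sum(observed)

# The hypothesis under test predicts a 3:1 ratio between the outcomes.
expected = [total * 0.75, total * 0.25]

statistic = chi_squared_statistic(observed, expected)

# Critical value for 1 degree of freedom at the 5% significance level.
CRITICAL_VALUE_DF1_5PCT = 3.841
consistent_with_prediction = statistic < CRITICAL_VALUE_DF1_5PCT
print(f"chi-squared = {statistic:.3f}, consistent: {consistent_with_prediction}")
```

A statistic below the critical value means the data do not contradict the predicted ratio at that significance level; it does not show that the hypothesis is true.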

DNA example

The basic elements of the scientific method are illustrated by the following example from the discovery of the structure of DNA:

- Question: Previous investigation of DNA had determined its chemical composition (the four nucleotides), the structure of each individual nucleotide, and other properties. It had been identified as the carrier of genetic information by the Avery–MacLeod–McCarty experiment in 1944,[43] but the mechanism of how genetic information was stored in DNA was unclear.

- Hypothesis: Linus Pauling, Francis Crick and James D. Watson hypothesized that DNA had a helical structure.[44]

- Prediction: If DNA had a helical structure, its X-ray diffraction pattern would be X-shaped.[45][46] This prediction was determined using the mathematics of the helix transform, which had been derived by Cochran, Crick and Vand[47] (and independently by Stokes). This prediction was a mathematical construct, completely independent from the biological problem at hand.

- Experiment: Rosalind Franklin crystallized pure DNA and performed X-ray diffraction to produce Photo 51. The results showed an X-shape.

- Analysis: When Watson saw the detailed diffraction pattern, he immediately recognized it as a helix.[48][49] He and Crick then produced their model, using this information along with the previously known information about DNA's composition and about molecular interactions such as hydrogen bonds.[50]

The discovery became the starting point for many further studies involving the genetic material, such as the field of molecular genetics, and it was awarded the Nobel Prize in 1962. Each step of the example is examined in more detail later in the article.

Other components

The scientific method also includes other components required even when all the iterations of the steps above have been completed:[51]

Replication

If an experiment cannot be repeated to produce the same results, this implies that the original results might have been in error. As a result, it is common for a single experiment to be performed multiple times, especially when there are uncontrolled variables or other indications of experimental error. For significant or surprising results, other scientists may also attempt to replicate the results for themselves, especially if those results would be important to their own work.[52]

External review

The process of peer review involves evaluation of the experiment by experts, who typically give their opinions anonymously. Some journals request that the experimenter provide lists of possible peer reviewers, especially if the field is highly specialized. Peer review does not certify correctness of the results, only that, in the opinion of the reviewer, the experiments themselves were sound (based on the description supplied by the experimenter). If the work passes peer review, which occasionally may require new experiments requested by the reviewers, it will be published in a peer-reviewed scientific journal. The specific journal that publishes the results indicates the perceived quality of the work.[53]

Data recording and sharing

Scientists typically are careful in recording their data, a requirement promoted by Ludwik Fleck (1896–1961) and others.[54] Though not typically required, they might be requested to supply this data to other scientists who wish to replicate their original results (or parts of their original results), extending to the sharing of any experimental samples that may be difficult to obtain.[55]

Scientific inquiry

Scientific inquiry generally aims to obtain knowledge in the form of testable explanations that scientists can use to predict the results of future experiments. This allows scientists to gain a better understanding of the topic under study, and later to use that understanding to intervene in its causal mechanisms (such as to cure disease). The better an explanation is at making predictions, the more useful it frequently can be, and the more likely it will continue to explain a body of evidence better than its alternatives. The most successful explanations – those which explain and make accurate predictions in a wide range of circumstances – are often called scientific theories.

Most experimental results do not produce large changes in human understanding; improvements in theoretical scientific understanding typically result from a gradual process of development over time, sometimes across different domains of science.[56] Scientific models vary in the extent to which they have been experimentally tested and for how long, and in their acceptance in the scientific community. In general, explanations become accepted over time as evidence accumulates on a given topic, and the explanation in question proves more powerful than its alternatives at explaining the evidence. Subsequent researchers often reformulate the explanations over time, or combine explanations to produce new ones.

Tow sees the scientific method in terms of an evolutionary algorithm applied to science and technology.[57]

Properties of scientific inquiry

Scientific knowledge is closely tied to empirical findings, and can remain subject to falsification if new experimental observation incompatible with it is found. That is, no theory can ever be considered final, since new problematic evidence might be discovered. If such evidence is found, a new theory may be proposed, or (more commonly) it is found that modifications to the previous theory are sufficient to explain the new evidence. The strength of a theory can be argued to relate to how long it has persisted without major alteration to its core principles.

Theories can also become subsumed by other theories. For example, Newton's laws explained thousands of years of scientific observations of the planets almost perfectly. However, these laws were then determined to be special cases of a more general theory (relativity), which explained both the (previously unexplained) exceptions to Newton's laws and predicted and explained other observations such as the deflection of light by gravity. Thus, in certain cases independent, unconnected, scientific observations can be connected to each other, unified by principles of increasing explanatory power.[58][59]

Since new theories might be more comprehensive than what preceded them, and thus be able to explain more than previous ones, successor theories might be able to meet a higher standard by explaining a larger body of observations than their predecessors.[58] For example, the theory of evolution explains the diversity of life on Earth, how species adapt to their environments, and many other patterns observed in the natural world;[60][61] its most recent major modification was unification with genetics to form the modern evolutionary synthesis. In subsequent modifications, it has also subsumed aspects of many other fields such as biochemistry and molecular biology.

Beliefs and biases

Scientific methodology often directs that hypotheses be tested in controlled conditions wherever possible. This is frequently possible in certain areas, such as in the biological sciences, and more difficult in other areas, such as in astronomy.

The practice of experimental control and reproducibility can have the effect of diminishing the potentially harmful effects of circumstance, and to a degree, personal bias. For example, pre-existing beliefs can alter the interpretation of results, as in confirmation bias; this is a heuristic that leads a person with a particular belief to see things as reinforcing their belief, even if another observer might disagree (in other words, people tend to observe what they expect to observe).

A historical example is the belief that the legs of a galloping horse are splayed at the point when none of the horse's legs touches the ground, to the point of this image being included in paintings by its supporters. However, the first stop-action pictures of a horse's gallop by Eadweard Muybridge showed this to be false, and that the legs are instead gathered together.[62]

Another important human bias that plays a role is a preference for new, surprising statements (see appeal to novelty), which can result in a search for evidence that the new is true.[63] Poorly attested beliefs can be believed and acted upon via a less rigorous heuristic.[64]

Goldhaber and Nieto published in 2010 the observation that if theoretical structures with "many closely neighboring subjects are described by connecting theoretical concepts then the theoretical structure .. becomes increasingly hard to overturn".[59] When a narrative is constructed its elements become easier to believe.[65] For more on the narrative fallacy, see also Fleck 1979, p. 27: "Words and ideas are originally phonetic and mental equivalences of the experiences coinciding with them. ... Such proto-ideas are at first always too broad and insufficiently specialized. ... Once a structurally complete and closed system of opinions consisting of many details and relations has been formed, it offers enduring resistance to anything that contradicts it." Sometimes, these have their elements assumed a priori, or contain some other logical or methodological flaw in the process that ultimately produced them. Donald M. MacKay has analyzed these elements in terms of limits to the accuracy of measurement and has related them to instrumental elements in a category of measurement.[66]

Elements of the scientific method

There are different ways of outlining the basic method used for scientific inquiry. The scientific community and philosophers of science generally agree on the following classification of method components. These methodological elements and organization of procedures tend to be more characteristic of natural sciences than social sciences. Nonetheless, the cycle of formulating hypotheses, testing and analyzing the results, and formulating new hypotheses, will resemble the cycle described below.

The scientific method is an iterative, cyclical process through which information is continually revised.[67][68] It is generally recognized to develop advances in knowledge through the following elements, in varying combinations or contributions:[69][70]

- Characterizations (observations, definitions, and measurements of the subject of inquiry)

- Hypotheses (theoretical, hypothetical explanations of observations and measurements of the subject)

- Predictions (inductive and deductive reasoning from the hypothesis or theory)

- Experiments (tests of all of the above)

Each element of the scientific method is subject to peer review for possible mistakes. These activities do not describe all that scientists do (see below) but apply mostly to experimental sciences (e.g., physics, chemistry, and biology). The elements above are often taught in the educational system as "the scientific method".[71]

The scientific method is not a single recipe: it requires intelligence, imagination, and creativity.[72] In this sense, it is not a mindless set of standards and procedures to follow, but is rather an ongoing cycle, constantly developing more useful, accurate and comprehensive models and methods. For example, when Einstein developed the Special and General Theories of Relativity, he did not in any way refute or discount Newton's Principia. On the contrary, if the astronomically large, the vanishingly small, and the extremely fast are removed from Einstein's theories – all phenomena Newton could not have observed – Newton's equations are what remain. Einstein's theories are expansions and refinements of Newton's theories and, thus, increase confidence in Newton's work.

A linearized, pragmatic scheme of the four points above is sometimes offered as a guideline for proceeding:[73]

1. Define a question

2. Gather information and resources (observe)

3. Form an explanatory hypothesis

4. Test the hypothesis by performing an experiment and collecting data in a reproducible manner

5. Analyze the data

6. Interpret the data and draw conclusions that serve as a starting point for new hypotheses

7. Publish results

8. Retest (frequently done by other scientists)

The iterative cycle inherent in this step-by-step method goes from point 3 to 6 back to 3 again.

While this schema outlines a typical hypothesis/testing method,[74] a number of philosophers, historians, and sociologists of science, including Paul Feyerabend, claim that such descriptions of scientific method have little relation to the ways that science is actually practiced.

Characterizations

The scientific method depends upon increasingly sophisticated characterizations of the subjects of investigation. (The subjects can also be called unsolved problems or the unknowns.) For example, Benjamin Franklin conjectured, correctly, that St. Elmo's fire was electrical in nature, but it has taken a long series of experiments and theoretical changes to establish this. While seeking the pertinent properties of the subjects, careful thought may also entail some definitions and observations; the observations often demand careful measurements and/or counting.

The systematic, careful collection of measurements or counts of relevant quantities is often the critical difference between pseudo-sciences, such as alchemy, and science, such as chemistry or biology. Scientific measurements are usually tabulated, graphed, or mapped, and statistical manipulations, such as correlation and regression, performed on them. The measurements might be made in a controlled setting, such as a laboratory, or made on more or less inaccessible or unmanipulatable objects such as stars or human populations. The measurements often require specialized scientific instruments such as thermometers, spectroscopes, particle accelerators, or voltmeters, and the progress of a scientific field is usually intimately tied to their invention and improvement.

I am not accustomed to saying anything with certainty after only one or two observations.

— Andreas Vesalius, (1546)[75]

Uncertainty

Measurements in scientific work are also usually accompanied by estimates of their uncertainty. The uncertainty is often estimated by making repeated measurements of the desired quantity. Uncertainties may also be calculated by consideration of the uncertainties of the individual underlying quantities used. Counts of things, such as the number of people in a nation at a particular time, may also have an uncertainty due to data collection limitations. Or counts may represent a sample of desired quantities, with an uncertainty that depends upon the sampling method used and the number of samples taken.
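Both ways of estimating uncertainty described above can be sketched briefly. The example below is illustrative only: the readings, the mass and volume values, and the helper name `density_with_uncertainty` are hypothetical, and the propagation rule shown (relative uncertainties added in quadrature) assumes the underlying quantities are independent.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical repeated measurements of the same quantity.
readings = [9.79, 9.82, 9.81, 9.78, 9.80]

best_estimate = mean(readings)
# Standard error of the mean: sample standard deviation / sqrt(n).
standard_error = stdev(readings) / sqrt(len(readings))
print(f"measured value: {best_estimate:.3f} +/- {standard_error:.3f}")


def density_with_uncertainty(mass, mass_unc, volume, volume_unc):
    """Propagate uncertainties through density = mass / volume.

    For a quotient of independent quantities, the relative
    uncertainties add in quadrature.
    """
    density = mass / volume
    relative_unc = sqrt((mass_unc / mass) ** 2 + (volume_unc / volume) ** 2)
    return density, density * relative_unc


# Hypothetical underlying quantities with their individual uncertainties.
density, density_unc = density_with_uncertainty(10.0, 0.1, 2.0, 0.04)
print(f"density: {density:.2f} +/- {density_unc:.2f}")
```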

Definition

Measurements demand the use of operational definitions of relevant quantities. That is, a scientific quantity is described or defined by how it is measured, as opposed to some more vague, inexact or "idealized" definition. For example, electric current, measured in amperes, may be operationally defined in terms of the mass of silver deposited in a certain time on an electrode in an electrochemical device that is described in some detail. The operational definition of a thing often relies on comparisons with standards: the operational definition of "mass" ultimately relies on the use of an artifact, such as a particular kilogram of platinum-iridium kept in a laboratory in France.

The scientific definition of a term sometimes differs substantially from its natural language usage. For example, mass and weight overlap in meaning in common discourse, but have distinct meanings in mechanics. Scientific quantities are often characterized by their units of measure which can later be described in terms of conventional physical units when communicating the work.

New theories are sometimes developed after realizing certain terms have not previously been sufficiently clearly defined. For example, Albert Einstein's first paper on relativity begins by defining simultaneity and the means for determining length. These ideas were skipped over by Isaac Newton with, "I do not define time, space, place and motion, as being well known to all." Einstein's paper then demonstrates that they (viz., absolute time and length independent of motion) were approximations. Francis Crick cautions us that when characterizing a subject, however, it can be premature to define something when it remains ill-understood.[76] In Crick's study of consciousness, he actually found it easier to study awareness in the visual system, rather than to study free will, for example. His cautionary example was the gene; the gene was much more poorly understood before Watson and Crick's pioneering discovery of the structure of DNA; it would have been counterproductive to spend much time on the definition of the gene, before them.

DNA-characterizations

The history of the discovery of the structure of DNA is a classic example of the elements of the scientific method: in 1950 it was known that genetic inheritance had a mathematical description, starting with the studies of Gregor Mendel, and that DNA contained genetic information (Oswald Avery's transforming principle).[43] But the mechanism of storing genetic information (i.e., genes) in DNA was unclear. Researchers in Bragg's laboratory at Cambridge University made X-ray diffraction pictures of various molecules, starting with crystals of salt, and proceeding to more complicated substances. Using clues painstakingly assembled over decades, beginning with its chemical composition, it was determined that it should be possible to characterize the physical structure of DNA, and the X-ray images would be the vehicle.[77]

Another example: precession of Mercury

The characterization element can require extended and extensive study, even centuries. It took thousands of years of measurements, from the Chaldean, Indian, Persian, Greek, Arabic and European astronomers, to fully record the motion of planet Earth. Newton was able to include those measurements into consequences of his laws of motion. But the perihelion of the planet Mercury's orbit exhibits a precession that cannot be fully explained by Newton's laws of motion, as Leverrier pointed out in 1859. The observed difference for Mercury's precession between Newtonian theory and observation was one of the things that occurred to Albert Einstein as a possible early test of his theory of general relativity. His relativistic calculations matched observation much more closely than did Newtonian theory. The difference is approximately 43 arc-seconds per century.
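The figure of about 43 arc-seconds per century can be reproduced with a short calculation. The sketch below uses the standard first-order general-relativistic expression for the perihelion advance per orbit, 6πGM / (c²a(1 − e²)). The formula and the orbital constants are not taken from this article; they are approximate reference values supplied here as assumptions.

```python
from math import pi

# Approximate reference values (assumptions, not from the article).
GM_SUN = 1.32712440018e20      # Sun's gravitational parameter, m^3/s^2
SPEED_OF_LIGHT = 299_792_458.0  # m/s
SEMI_MAJOR_AXIS = 5.7909e10     # Mercury's semi-major axis, m
ECCENTRICITY = 0.2056           # Mercury's orbital eccentricity
ORBITAL_PERIOD_DAYS = 87.969    # Mercury's orbital period
DAYS_PER_CENTURY = 36_525.0     # Julian century
ARCSEC_PER_RADIAN = 180.0 * 3600.0 / pi

# Relativistic perihelion advance per orbit, in radians.
advance_per_orbit = (6.0 * pi * GM_SUN) / (
    SPEED_OF_LIGHT**2 * SEMI_MAJOR_AXIS * (1.0 - ECCENTRICITY**2)
)

orbits_per_century = DAYS_PER_CENTURY / ORBITAL_PERIOD_DAYS
advance_arcsec_per_century = (
    advance_per_orbit * orbits_per_century * ARCSEC_PER_RADIAN
)
print(f"{advance_arcsec_per_century:.1f} arc-seconds per century")
```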

Hypothesis development

A hypothesis is a suggested explanation of a phenomenon, or alternatively a reasoned proposal suggesting a possible correlation between or among a set of phenomena.

Normally hypotheses have the form of a mathematical model. Sometimes, but not always, they can also be formulated as existential statements, stating that some particular instance of the phenomenon being studied has some characteristic, or as causal explanations, which have the general form of universal statements, stating that every instance of the phenomenon has a particular characteristic.

Scientists are free to use whatever resources they have – their own creativity, ideas from other fields, inductive reasoning, Bayesian inference, and so on – to imagine possible explanations for a phenomenon under study. Albert Einstein once observed that "there is no logical bridge between phenomena and their theoretical principles."[78] Charles Sanders Peirce, borrowing a page from Aristotle (Prior Analytics, 2.25) described the incipient stages of inquiry, instigated by the "irritation of doubt" to venture a plausible guess, as abductive reasoning. The history of science is filled with stories of scientists claiming a "flash of inspiration", or a hunch, which then motivated them to look for evidence to support or refute their idea. Michael Polanyi made such creativity the centerpiece of his discussion of methodology.

William Glen observes that[79]

the success of a hypothesis, or its service to science, lies not simply in its perceived "truth", or power to displace, subsume or reduce a predecessor idea, but perhaps more in its ability to stimulate the research that will illuminate ... bald suppositions and areas of vagueness.

In general scientists tend to look for theories that are "elegant" or "beautiful". In contrast to the usual English use of these terms, they here refer to a theory in accordance with the known facts, which is nevertheless relatively simple and easy to handle. Occam's Razor serves as a rule of thumb for choosing the most desirable amongst a group of equally explanatory hypotheses.

To minimize the confirmation bias which results from entertaining a single hypothesis, strong inference emphasizes the need for entertaining multiple alternative hypotheses.[80]

DNA-hypotheses

Linus Pauling proposed that DNA might be a triple helix.[81] This hypothesis was also considered by Francis Crick and James D. Watson but discarded. When Watson and Crick learned of Pauling's hypothesis, they understood from existing data that Pauling was wrong[82] and that Pauling would soon admit his difficulties with that structure. So, the race was on to figure out the correct structure (except that Pauling did not realize at the time that he was in a race).

Predictions from the hypothesis

Any useful hypothesis will enable predictions, by reasoning including deductive reasoning. It might predict the outcome of an experiment in a laboratory setting or the observation of a phenomenon in nature. The prediction can also be statistical and deal only with probabilities.

It is essential that the outcome of testing such a prediction be currently unknown. Only in this case does a successful outcome increase the probability that the hypothesis is true. If the outcome is already known, it is called a consequence and should have already been considered while formulating the hypothesis.

If the predictions are not accessible by observation or experience, the hypothesis is not yet testable and so will remain to that extent unscientific in a strict sense. A new technology or theory might make the necessary experiments feasible. For example, while a hypothesis on the existence of other intelligent species may be convincing with scientifically based speculation, there is no known experiment that can test this hypothesis. Therefore, science itself can have little to say about the possibility. In future, a new technique may allow for an experimental test and the speculation would then become part of accepted science.

DNA-predictions

James D. Watson, Francis Crick, and others hypothesized that DNA had a helical structure. This implied that DNA's X-ray diffraction pattern would be X-shaped.[46][83] This prediction followed from the work of Cochran, Crick and Vand[47] (and independently by Stokes). The Cochran-Crick-Vand-Stokes theorem provided a mathematical explanation for the empirical observation that diffraction from helical structures produces X-shaped patterns.

In their first paper, Watson and Crick also noted that the double helix structure they proposed provided a simple mechanism for DNA replication, writing, "It has not escaped our notice that the specific pairing we have postulated immediately suggests a possible copying mechanism for the genetic material".[84]

Another example: general relativity

Einstein's theory of General Relativity makes several specific predictions about the observable structure of space-time, such as that light bends in a gravitational field, and that the amount of bending depends in a precise way on the strength of that gravitational field. Arthur Eddington's observations made during a 1919 solar eclipse supported General Relativity rather than Newtonian gravitation.[85]
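The size of the predicted bending can be estimated for light grazing the edge of the Sun. The sketch below uses the standard first-order results: a deflection of 4GM / (c²R) in general relativity, and half that value in a Newtonian corpuscular treatment. These formulas and the constants are not from this article; they are approximate reference values supplied here as assumptions.

```python
from math import pi

# Approximate reference values (assumptions, not from the article).
GM_SUN = 1.32712440018e20      # Sun's gravitational parameter, m^3/s^2
SPEED_OF_LIGHT = 299_792_458.0  # m/s
SOLAR_RADIUS = 6.957e8          # m, closest approach of a grazing ray
ARCSEC_PER_RADIAN = 180.0 * 3600.0 / pi

# First-order deflection angles for a light ray grazing the Sun.
general_relativity_arcsec = (
    4.0 * GM_SUN / (SPEED_OF_LIGHT**2 * SOLAR_RADIUS) * ARCSEC_PER_RADIAN
)
newtonian_arcsec = general_relativity_arcsec / 2.0

print(f"general relativity: {general_relativity_arcsec:.2f} arc-seconds")
print(f"Newtonian estimate: {newtonian_arcsec:.2f} arc-seconds")
```

Because the two theories predict values that differ by a factor of two, a sufficiently precise measurement can distinguish between them.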

Experiments

Once predictions are made, they can be sought by experiments. If the test results contradict the predictions, the hypotheses which entailed them are called into question and become less tenable. Sometimes the experiments are conducted incorrectly or are not very well designed, when compared to a crucial experiment. If the experimental results confirm the predictions, then the hypotheses are considered more likely to be correct, but might still be wrong and continue to be subject to further testing. The experimental control is a technique for dealing with observational error. This technique uses the contrast between multiple samples (or observations) under differing conditions to see what varies or what remains the same. We vary the conditions for each measurement, to help isolate what has changed. Mill's canons can then help us figure out what the important factor is.[86] Factor analysis is one technique for discovering the important factor in an effect.
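The idea of varying conditions against a control can be sketched as a one-factor-at-a-time comparison. In the example below, the `response` function is a hypothetical stand-in for a real measurement, and the factor names are invented for illustration. Real measurements are noisy, so in practice a difference would be judged against the measurement uncertainty rather than against exactly zero.

```python
def response(light: bool, water: bool, music: bool) -> float:
    """Hypothetical stand-in for a real measurement (plant growth in cm)."""
    return 2.0 + (3.0 if light else 0.0) + (1.5 if water else 0.0)


# Control sample: every condition held at its baseline setting.
control = {"light": False, "water": False, "music": False}
baseline = response(**control)

# Change exactly one condition at a time and contrast with the control.
effects = {}
for factor in control:
    trial = dict(control, **{factor: True})
    effects[factor] = response(**trial) - baseline

# Factors whose change alters the outcome are candidates for the cause.
relevant_factors = [name for name, delta in effects.items() if delta != 0]
print(effects)
print("factors that changed the outcome:", relevant_factors)
```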

Depending on the predictions, the experiments can have different shapes. It could be a classical experiment in a laboratory setting, a double-blind study or an archaeological excavation. Even taking a plane from New York to Paris is an experiment which tests the aerodynamical hypotheses used for constructing the plane.

Scientists assume an attitude of openness and accountability on the part of those conducting an experiment. Detailed record keeping is essential: it aids in recording and reporting the experimental results, and supports the effectiveness and integrity of the procedure. The records also assist in reproducing the experimental results, likely by others. Traces of this approach can be seen in the work of Hipparchus (190–120 BCE), when determining a value for the precession of the Earth, while controlled experiments can be seen in the works of Jābir ibn Hayyān (721–815 CE), al-Battani (853–929) and Alhazen (965–1039).[87]

DNA-experiments

Watson and Crick showed an initial (and incorrect) proposal for the structure of DNA to a team from King's College: Rosalind Franklin, Maurice Wilkins, and Raymond Gosling. Franklin immediately spotted the flaws, which concerned the water content. Later, Watson saw Franklin's detailed X-ray diffraction images, which showed an X-shape, and was able to confirm the structure was helical.[48][49] This rekindled Watson and Crick's model building and led to the correct structure.

Evaluation and improvement

The scientific method is iterative. At any stage it is possible to refine its accuracy and precision, so that some consideration will lead the scientist to repeat an earlier part of the process. Failure to develop an interesting hypothesis may lead a scientist to re-define the subject under consideration. Failure of a hypothesis to produce interesting and testable predictions may lead to reconsideration of the hypothesis or of the definition of the subject. Failure of an experiment to produce interesting results may lead a scientist to reconsider the experimental method, the hypothesis, or the definition of the subject.

Other scientists may start their own research and enter the process at any stage. They might adopt the characterization and formulate their own hypothesis, or they might adopt the hypothesis and deduce their own predictions. Often the experiment is not done by the person who made the prediction, and the characterization is based on experiments done by someone else. Published results of experiments can also serve as a hypothesis predicting their own reproducibility.

DNA-iterations

After considerable fruitless experimentation, being discouraged by their superior from continuing, and numerous false starts,[88][89][90] Watson and Crick were able to infer the essential structure of DNA by concrete modeling of the physical shapes of the nucleotides which comprise it.[50][91] They were guided by the bond lengths which had been deduced by Linus Pauling and by Rosalind Franklin's X-ray diffraction images.

Confirmation

Science is a social enterprise, and scientific work tends to be accepted by the scientific community when it has been confirmed. Crucially, experimental and theoretical results must be reproduced by others within the scientific community. Researchers have given their lives for this vision; Georg Wilhelm Richmann was killed by ball lightning (1753) when attempting to replicate the 1752 kite-flying experiment of Benjamin Franklin.[92]

To protect against bad science and fraudulent data, government research-granting agencies such as the National Science Foundation, and science journals, including Nature and Science, have a policy that researchers must archive their data and methods so that other researchers can test the data and methods and build on the research that has gone before. Scientific data archiving can be done at a number of national archives in the U.S. or in the World Data Center.

Models of scientific inquiry

Classical model

The classical model of scientific inquiry derives from Aristotle,[93] who distinguished the forms of approximate and exact reasoning, set out the threefold scheme of abductive, deductive, and inductive inference, and also treated the compound forms such as reasoning by analogy.

Hypothetico-deductive model

The hypothetico-deductive model or method is a proposed description of the scientific method. Here, predictions from the hypothesis are central: if you assume the hypothesis to be true, what consequences follow?

If subsequent empirical investigation does not demonstrate that these consequences or predictions correspond to the observable world, the hypothesis can be concluded to be false.
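The refutation step described here has the logical form of modus tollens. As a minimal sketch, write H for the hypothesis and P for a prediction deduced from it (both symbols are introduced here for illustration and do not appear in the text above):

```latex
\[
  (H \rightarrow P),\quad \neg P \;\vdash\; \neg H
\]
```

The converse inference is not valid: observing P does not by itself establish H, since inferring H from H → P and P would be the fallacy of affirming the consequent.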

Pragmatic model

In 1877,[25] Charles Sanders Peirce (1839–1914) characterized inquiry in general not as the pursuit of truth per se but as the struggle to move from irritating, inhibitory doubts born of surprises, disagreements, and the like, and to reach a secure belief, belief being that on which one is prepared to act. He framed scientific inquiry as part of a broader spectrum and as spurred, like inquiry generally, by actual doubt, not mere verbal or hyperbolic doubt, which he held to be fruitless.[94] He outlined four methods of settling opinion, ordered from least to most successful:

- The method of tenacity (policy of sticking to initial belief) – which brings comforts and decisiveness but leads to trying to ignore contrary information and others' views as if truth were intrinsically private, not public. It goes against the social impulse and easily falters since one may well notice when another's opinion is as good as one's own initial opinion. Its successes can shine but tend to be transitory.[95]

- The method of authority – which overcomes disagreements but sometimes brutally. Its successes can be majestic and long-lived, but it cannot operate thoroughly enough to suppress doubts indefinitely, especially when people learn of other societies present and past.

- The method of the a priori – which promotes conformity less brutally but fosters opinions as something like tastes, arising in conversation and comparisons of perspectives in terms of "what is agreeable to reason." Thereby it depends on fashion in paradigms and goes in circles over time. It is more intellectual and respectable but, like the first two methods, sustains accidental and capricious beliefs, destining some minds to doubt it.

- The scientific method – the method wherein inquiry regards itself as fallible and purposely tests itself and criticizes, corrects, and improves itself.

Peirce held that slow, stumbling ratiocination can be dangerously inferior to instinct and traditional sentiment in practical matters, and that the scientific method is best suited to theoretical research,[96] which in turn should not be trammeled by the other methods and practical ends; reason's "first rule" is that, in order to learn, one must desire to learn and, as a corollary, must not block the way of inquiry.[97] The scientific method excels the others by being deliberately designed to arrive – eventually – at the most secure beliefs, upon which the most successful practices can be based. Starting from the idea that people seek not truth per se but instead to subdue irritating, inhibitory doubt, Peirce showed how, through the struggle, some can come to submit to truth for the sake of belief's integrity, seek as truth the guidance of potential practice correctly to its given goal, and wed themselves to the scientific method.[25][28]

For Peirce, rational inquiry implies presuppositions about truth and the real; to reason is to presuppose (and at least to hope), as a principle of the reasoner's self-regulation, that the real is discoverable and independent of our vagaries of opinion. In that vein he defined truth as the correspondence of a sign (in particular, a proposition) to its object and, pragmatically, not as actual consensus of some definite, finite community (such that to inquire would be to poll the experts), but instead as that final opinion which all investigators would reach sooner or later but still inevitably, if they were to push investigation far enough, even when they start from different points.[98] In tandem he defined the real as a true sign's object (be that object a possibility or quality, or an actuality or brute fact, or a necessity or norm or law), which is what it is independently of any finite community's opinion and, pragmatically, depends only on the final opinion destined in a sufficient investigation. That is a destination as far, or near, as the truth itself to you or me or the given finite community. Thus, his theory of inquiry boils down to "Do the science." Those conceptions of truth and the real involve the idea of a community both without definite limits (and thus potentially self-correcting as far as needed) and capable of definite increase of knowledge.[99] As inference, "logic is rooted in the social principle" since it depends on a standpoint that is, in a sense, unlimited.[100]

Paying special attention to the generation of explanations, Peirce outlined the scientific method as a coordination of three kinds of inference in a purposeful cycle aimed at settling doubts, as follows (in §III–IV in "A Neglected Argument"[7] except as otherwise noted):

- Abduction (or retroduction). Guessing, inference to explanatory hypotheses for selection of those best worth trying. From abduction, Peirce distinguishes induction as inferring, on the basis of tests, the proportion of truth in the hypothesis. Every inquiry, whether into ideas, brute facts, or norms and laws, arises from surprising observations in one or more of those realms (and for example at any stage of an inquiry already underway). All explanatory content of theories comes from abduction, which guesses a new or outside idea so as to account in a simple, economical way for a surprising or complicative phenomenon. Oftenest, even a well-prepared mind guesses wrong. But the modicum of success of our guesses far exceeds that of sheer luck and seems born of attunement to nature by instincts developed or inherent, especially insofar as best guesses are optimally plausible and simple in the sense, said Peirce, of the "facile and natural", as by Galileo's natural light of reason and as distinct from "logical simplicity". Abduction is the most fertile but least secure mode of inference. Its general rationale is inductive: it succeeds often enough and, without it, there is no hope of sufficiently expediting inquiry (often multi-generational) toward new truths.[101] Coordinative method leads from abducing a plausible hypothesis to judging it for its testability[102] and for how its trial would economize inquiry itself.[103] Peirce calls his pragmatism "the logic of abduction".[104] His pragmatic maxim is: "Consider what effects that might conceivably have practical bearings you conceive the objects of your conception to have. Then, your conception of those effects is the whole of your conception of the object".[98] His pragmatism is a method of reducing conceptual confusions fruitfully by equating the meaning of any conception with the conceivable practical implications of its object's conceived effects – a method of experimentational mental reflection hospitable to forming hypotheses and conducive to testing them. It favors efficiency. The hypothesis, being insecure, needs to have practical implications leading at least to mental tests and, in science, lending themselves to scientific tests. A simple but unlikely guess, if uncostly to test for falsity, may belong first in line for testing. A guess is intrinsically worth testing if it has instinctive plausibility or reasoned objective probability, while subjective likelihood, though reasoned, can be misleadingly seductive. Guesses can be chosen for trial strategically, for their caution (for which Peirce gave as example the game of Twenty Questions), breadth, and incomplexity.[105] One can hope to discover only that which time would reveal through a learner's sufficient experience anyway, so the point is to expedite it; the economy of research is what demands the leap, so to speak, of abduction and governs its art.[103]

- Deduction. Two stages:

  - Explication. Unclearly premissed, but deductive, analysis of the hypothesis in order to render its parts as clear as possible.

  - Demonstration: Deductive Argumentation, Euclidean in procedure. Explicit deduction of hypothesis's consequences as predictions, for induction to test, about evidence to be found. Corollarial or, if needed, theorematic.

- Induction. The long-run validity of the rule of induction is deducible from the principle (presuppositional to reasoning in general[98]) that the real is only the object of the final opinion to which adequate investigation would lead;[106] anything to which no such process would ever lead would not be real. Induction involving ongoing tests or observations follows a method which, sufficiently persisted in, will diminish its error below any predesignate degree. Three stages:

  - Classification. Unclearly premissed, but inductive, classing of objects of experience under general ideas.

  - Probation: direct inductive argumentation. Crude (the enumeration of instances) or gradual (new estimate of proportion of truth in the hypothesis after each test). Gradual induction is qualitative or quantitative; if qualitative, then dependent on weightings of qualities or characters;[107] if quantitative, then dependent on measurements, or on statistics, or on countings.

  - Sentential Induction. "...which, by inductive reasonings, appraises the different probations singly, then their combinations, then makes self-appraisal of these very appraisals themselves, and passes final judgment on the whole result".
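The quantitative form of gradual induction mentioned under Probation (a new estimate of the proportion of truth after each test) can be illustrated with a running proportion. The following is only a minimal sketch under stated assumptions: the function name `gradual_induction` and the sample outcomes are hypothetical, and a plain success ratio is one simple reading of "proportion of truth", not Peirce's own formalism.

```python
from fractions import Fraction


def gradual_induction(outcomes):
    """Yield the running proportion of passed tests after each trial.

    `outcomes` is an iterable of booleans; True means the prediction
    deduced from the hypothesis held in that test (an assumption of
    this sketch, not something specified in the text).
    """
    successes = 0
    for n, passed in enumerate(outcomes, start=1):
        if passed:
            successes += 1
        # Revised estimate after the n-th test.
        yield Fraction(successes, n)


# Hypothetical sequence of test results.
outcomes = [True, True, False, True]
estimates = list(gradual_induction(outcomes))
```

Each element of `estimates` is the revised estimate after one more test, so the list shows how the appraisal of the hypothesis shifts as evidence accumulates.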

Science of complex systems

Science applied to complex systems can involve elements such as transdisciplinarity, systems theory and scientific modelling. The Santa Fe Institute studies such systems;[108] Murray Gell-Mann interconnects these topics with message passing.[109]

In general, the scientific method may be difficult to apply stringently to diverse, interconnected systems and large data sets. In particular, practices used within big data, such as predictive analytics, may be considered to be at odds with the scientific method.[110]

Communication and community

Frequently the scientific method is employed not only by a single person, but also by several people cooperating directly or indirectly. Such cooperation can be regarded as an important element of a scientific community. Various standards of scientific methodology are used within such an environment.

Peer review evaluation

Scientific journals use a process of peer review, in which journal editors submit scientists' manuscripts to fellow scientists familiar with the field (usually one to three, and usually anonymous) for evaluation. In certain journals, the journal itself selects the referees, while in others (especially journals that are extremely specialized), the manuscript author might recommend referees. The referees may or may not recommend publication, or they might recommend publication with suggested modifications, or sometimes, publication in another journal. This standard is practiced to various degrees by different journals, and can have the effect of keeping the literature free of obvious errors and of generally improving the quality of the material, especially in the journals that use the standard most rigorously. The peer review process can have limitations when considering research outside the conventional scientific paradigm: problems of "groupthink" can interfere with open and fair deliberation of some new research.[111]

Documentation and replication

Sometimes experimenters may make systematic errors during their experiments, veer from standard methods and practices (pathological science) for various reasons, or, in rare cases, deliberately report false results. For this reason, other scientists might occasionally attempt to repeat the experiments in order to duplicate the results.

Archiving

Researchers sometimes practice scientific data archiving, such as in compliance with the policies of government funding agencies and scientific journals. In these cases, detailed records of their experimental procedures, raw data, statistical analyses and source code can be preserved in order to provide evidence of the methodology and practice of the procedure and assist in any potential future attempts to reproduce the result. These procedural records may also assist in the conception of new experiments to test the hypothesis, and may prove useful to engineers who might examine the potential practical applications of a discovery.
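One way such records can support later reproduction attempts is to store a checksum for each archived file, so that anyone retrieving the archive can confirm the data and code are unchanged. The sketch below is illustrative only: the helper names `build_manifest` and `verify`, the file names, and the file contents are all hypothetical, and no funding agency or journal policy mentioned above is claimed to require this particular scheme.

```python
import hashlib


def build_manifest(files):
    """Map each file name to the SHA-256 hex digest of its contents.

    `files` is a dict of {name: bytes}; in practice the bytes would be
    read from disk, which is omitted here to keep the sketch small.
    """
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def verify(manifest, files):
    """Return True only if the file names and all digests match the manifest."""
    return build_manifest(files) == manifest


# Hypothetical archive contents: raw data plus the analysis source code.
archive = {
    "raw_data.csv": b"trial,result\n1,0.52\n",
    "analysis.py": b"print('mean')\n",
}
manifest = build_manifest(archive)
```

Calling `verify(manifest, archive)` on an unmodified copy returns `True`; any altered, missing, or extra file makes it return `False`.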

Data sharing

When additional information is needed before a study can be reproduced, the author of the study might be asked to provide it. The author might provide it; if the author refuses to share data, appeals can be made to the journal editors who published the study or to the institution that funded the research.

Limitations