Compressed sensing

Compressed sensing (also known as compressive sensing, compressive sampling, or sparse sampling) is a signal processing technique for efficiently acquiring and reconstructing a signal, by finding solutions to underdetermined linear systems. This is based on the principle that, through optimization, the sparsity of a signal can be exploited to recover it from far fewer samples than required by the Nyquist–Shannon sampling theorem. There are two conditions under which recovery is possible. The first one is sparsity, which requires the signal to be sparse in some domain. The second one is incoherence, which is applied through the isometric property, which is sufficient for sparse signals.[1][2] Compressed sensing has applications in, for example, MRI where the incoherence condition is typically satisfied.[3]

Overview

A common goal of the engineering field of signal processing is to reconstruct a signal from a series of sampling measurements. In general, this task is impossible because there is no way to reconstruct a signal during the times that the signal is not measured. Nevertheless, with prior knowledge or assumptions about the signal, it turns out to be possible to perfectly reconstruct a signal from a series of measurements (acquiring this series of measurements is called sampling). Over time, engineers have improved their understanding of which assumptions are practical and how they can be generalized.

An early breakthrough in signal processing was the Nyquist–Shannon sampling theorem. It states that if a real signal's highest frequency is less than half of the sampling rate, then the signal can be reconstructed perfectly by means of sinc interpolation. The main idea is that with prior knowledge about constraints on the signal's frequencies, fewer samples are needed to reconstruct the signal.

Around 2004, Emmanuel Candès, Justin Romberg, Terence Tao, and David Donoho proved that given knowledge about a signal's sparsity, the signal may be reconstructed with even fewer samples than the sampling theorem requires.[4][5] This idea is the basis of compressed sensing.

History

Compressed sensing relies on techniques, which several other scientific fields have used historically.[6] In statistics, the least squares method was complemented by the -norm, which was introduced by Laplace. Following the introduction of linear programming and Dantzig's simplex algorithm, the -norm was used in computational statistics. In statistical theory, the -norm was used by George W. Brown and later writers on median-unbiased estimators. It was used by Peter J. Huber and others working on robust statistics. The -norm was also used in signal processing, for example, in the 1970s, when seismologists constructed images of reflective layers within the earth based on data that did not seem to satisfy the Nyquist–Shannon criterion.[7] It was used in matching pursuit in 1993, the LASSO estimator by Robert Tibshirani in 1996[8] and basis pursuit in 1998.[9]

At first glance, compressed sensing might seem to violate the sampling theorem, because compressed sensing depends on the sparsity of the signal in question and not its highest frequency. This is a misconception, because the sampling theorem guarantees perfect reconstruction given sufficient, not necessary, conditions. A sampling method fundamentally different from classical fixed-rate sampling cannot "violate" the sampling theorem. Sparse signals with high frequency components can be highly under-sampled using compressed sensing compared to classical fixed-rate sampling.[10]

Method

Underdetermined linear system

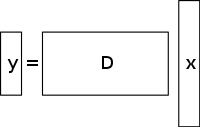

An underdetermined system of linear equations has more unknowns than equations and generally has an infinite number of solutions. The figure below shows such an equation system where we want to find a solution for .

In order to choose a solution to such a system, one must impose extra constraints or conditions (such as smoothness) as appropriate. In compressed sensing, one adds the constraint of sparsity, allowing only solutions which have a small number of nonzero coefficients. Not all underdetermined systems of linear equations have a sparse solution. However, if there is a unique sparse solution to the underdetermined system, then the compressed sensing framework allows the recovery of that solution.

Solution / reconstruction method

Compressed sensing takes advantage of the redundancy in many interesting signals—they are not pure noise. In particular, many signals are sparse, that is, they contain many coefficients close to or equal to zero, when represented in some domain.[11] This is the same insight used in many forms of lossy compression.

Compressed sensing typically starts with taking a weighted linear combination of samples also called compressive measurements in a basis different from the basis in which the signal is known to be sparse. The results found by Emmanuel Candès, Justin Romberg, Terence Tao, and David Donoho showed that the number of these compressive measurements can be small and still contain nearly all the useful information. Therefore, the task of converting the image back into the intended domain involves solving an underdetermined matrix equation since the number of compressive measurements taken is smaller than the number of pixels in the full image. However, adding the constraint that the initial signal is sparse enables one to solve this underdetermined system of linear equations.

The least-squares solution to such problems is to minimize the norm—that is, minimize the amount of energy in the system. This is usually simple mathematically (involving only a matrix multiplication by the pseudo-inverse of the basis sampled in). However, this leads to poor results for many practical applications, for which the unknown coefficients have nonzero energy.

To enforce the sparsity constraint when solving for the underdetermined system of linear equations, one can minimize the number of nonzero components of the solution. The function counting the number of non-zero components of a vector was called the "norm" by David Donoho.[note 1]

Candès et al. proved that for many problems it is probable that the norm is equivalent to the norm, in a technical sense: This equivalence result allows one to solve the problem, which is easier than the problem. Finding the candidate with the smallest norm can be expressed relatively easily as a linear program, for which efficient solution methods already exist.[13] When measurements may contain a finite amount of noise, basis pursuit denoising is preferred over linear programming, since it preserves sparsity in the face of noise and can be solved faster than an exact linear program.

Total variation-based CS reconstruction

Motivation and applications

Role of TV regularization

Total variation can be seen as a non-negative real-valued functional defined on the space of real-valued functions (for the case of functions of one variable) or on the space of integrable functions (for the case of functions of several variables). For signals, especially, total variation refers to the integral of the absolute gradient of the signal. In signal and image reconstruction, it is applied as total variation regularization where the underlying principle is that signals with excessive details have high total variation and that removing these details, while retaining important information such as edges, would reduce the total variation of the signal and make the signal subject closer to the original signal in the problem.

For the purpose of signal and image reconstruction, minimization models are used. Other approaches also include the least-squares as has been discussed before in this article. These methods are extremely slow and return a not-so-perfect reconstruction of the signal. The current CS Regularization models attempt to address this problem by incorporating sparsity priors of the original image, one of which is the total variation (TV). Conventional TV approaches are designed to give piece-wise constant solutions. Some of these include (as discussed ahead) – constrained -minimization which uses an iterative scheme. This method, though fast, subsequently leads to over-smoothing of edges resulting in blurred image edges.[14] TV methods with iterative re-weighting have been implemented to reduce the influence of large gradient value magnitudes in the images. This has been used in computed tomography (CT) reconstruction as a method known as edge-preserving total variation. However, as gradient magnitudes are used for estimation of relative penalty weights between the data fidelity and regularization terms, this method is not robust to noise and artifacts and accurate enough for CS image/signal reconstruction and, therefore, fails to preserve smaller structures.

Recent progress on this problem involves using an iteratively directional TV refinement for CS reconstruction.[15] This method would have 2 stages: the first stage would estimate and refine the initial orientation field – which is defined as a noisy point-wise initial estimate, through edge-detection, of the given image. In the second stage, the CS reconstruction model is presented by utilizing directional TV regularizer. More details about these TV-based approaches – iteratively reweighted l1 minimization, edge-preserving TV and iterative model using directional orientation field and TV- are provided below.

Existing approaches

Iteratively reweighted ℓ1 minimization

In the CS reconstruction models using constrained minimization,[16] larger coefficients are penalized heavily in the norm. It was proposed to have a weighted formulation of minimization designed to more democratically penalize nonzero coefficients. An iterative algorithm is used for constructing the appropriate weights.[17] Each iteration requires solving one minimization problem by finding the local minimum of a concave penalty function that more closely resembles the norm. An additional parameter, usually to avoid any sharp transitions in the penalty function curve, is introduced into the iterative equation to ensure stability and so that a zero estimate in one iteration does not necessarily lead to a zero estimate in the next iteration. The method essentially involves using the current solution for computing the weights to be used in the next iteration.

Advantages and disadvantages

Early iterations may find inaccurate sample estimates, however this method will down-sample these at a later stage to give more weight to the smaller non-zero signal estimates. One of the disadvantages is the need for defining a valid starting point as a global minimum might not be obtained every time due to the concavity of the function. Another disadvantage is that this method tends to uniformly penalize the image gradient irrespective of the underlying image structures. This causes over-smoothing of edges, especially those of low contrast regions, subsequently leading to loss of low contrast information. The advantages of this method include: reduction of the sampling rate for sparse signals; reconstruction of the image while being robust to the removal of noise and other artifacts; and use of very few iterations. This can also help in recovering images with sparse gradients.

In the figure shown below, P1 refers to the first-step of the iterative reconstruction process, of the projection matrix P of the fan-beam geometry, which is constrained by the data fidelity term. This may contain noise and artifacts as no regularization is performed. The minimization of P1 is solved through the conjugate gradient least squares method. P2 refers to the second step of the iterative reconstruction process wherein it utilizes the edge-preserving total variation regularization term to remove noise and artifacts, and thus improve the quality of the reconstructed image/signal. The minimization of P2 is done through a simple gradient descent method. Convergence is determined by testing, after each iteration, for image positivity, by checking if for the case when (Note that refers to the different x-ray linear attenuation coefficients at different voxels of the patient image).

Edge-preserving total variation (TV)-based compressed sensing

This is an iterative CT reconstruction algorithm with edge-preserving TV regularization to reconstruct CT images from highly undersampled data obtained at low dose CT through low current levels (milliampere). In order to reduce the imaging dose, one of the approaches used is to reduce the number of x-ray projections acquired by the scanner detectors. However, this insufficient projection data which is used to reconstruct the CT image can cause streaking artifacts. Furthermore, using these insufficient projections in standard TV algorithms end up making the problem under-determined and thus leading to infinitely many possible solutions. In this method, an additional penalty weighted function is assigned to the original TV norm. This allows for easier detection of sharp discontinuities in intensity in the images and thereby adapt the weight to store the recovered edge information during the process of signal/image reconstruction. The parameter controls the amount of smoothing applied to the pixels at the edges to differentiate them from the non-edge pixels. The value of is changed adaptively based on the values of the histogram of the gradient magnitude so that a certain percentage of pixels have gradient values larger than . The edge-preserving total variation term, thus, becomes sparser and this speeds up the implementation. A two-step iteration process known as forward–backward splitting algorithm is used.[18] The optimization problem is split into two sub-problems which are then solved with the conjugate gradient least squares method[19] and the simple gradient descent method respectively. The method is stopped when the desired convergence has been achieved or if the maximum number of iterations is reached.[14]

Advantages and disadvantages

Some of the disadvantages of this method are the absence of smaller structures in the reconstructed image and degradation of image resolution. This edge preserving TV algorithm, however, requires fewer iterations than the conventional TV algorithm.[14] Analyzing the horizontal and vertical intensity profiles of the reconstructed images, it can be seen that there are sharp jumps at edge points and negligible, minor fluctuation at non-edge points. Thus, this method leads to low relative error and higher correlation as compared to the TV method. It also effectively suppresses and removes any form of image noise and image artifacts such as streaking.

Iterative model using a directional orientation field and directional total variation

To prevent over-smoothing of edges and texture details and to obtain a reconstructed CS image which is accurate and robust to noise and artifacts, this method is used. First, an initial estimate of the noisy point-wise orientation field of the image , , is obtained. This noisy orientation field is defined so that it can be refined at a later stage to reduce the noise influences in orientation field estimation. A coarse orientation field estimation is then introduced based on structure tensor, which is formulated as:[20] . Here, refers to the structure tensor related with the image pixel point (i,j) having standard deviation . refers to the Gaussian kernel with standard deviation . refers to the manually defined parameter for the image below which the edge detection is insensitive to noise. refers to the gradient of the image and refers to the tensor product obtained by using this gradient.[15]

The structure tensor obtained is convolved with a Gaussian kernel to improve the accuracy of the orientation estimate with being set to high values to account for the unknown noise levels. For every pixel (i,j) in the image, the structure tensor J is a symmetric and positive semi-definite matrix. Convolving all the pixels in the image with , gives orthonormal eigen vectors ω and υ of the matrix. ω points in the direction of the dominant orientation having the largest contrast and υ points in the direction of the structure orientation having the smallest contrast. The orientation field coarse initial estimation is defined as = υ. This estimate is accurate at strong edges. However, at weak edges or on regions with noise, its reliability decreases.

To overcome this drawback, a refined orientation model is defined in which the data term reduces the effect of noise and improves accuracy while the second penalty term with the L2-norm is a fidelity term which ensures accuracy of initial coarse estimation.

This orientation field is introduced into the directional total variation optimization model for CS reconstruction through the equation: . is the objective signal which needs to be recovered. Y is the corresponding measurement vector, d is the iterative refined orientation field and is the CS measurement matrix. This method undergoes a few iterations ultimately leading to convergence. is the orientation field approximate estimation of the reconstructed image from the previous iteration (in order to check for convergence and the subsequent optical performance, the previous iteration is used). For the two vector fields represented by and , refers to the multiplication of respective horizontal and vertical vector elements of and followed by their subsequent addition. These equations are reduced to a series of convex minimization problems which are then solved with a combination of variable splitting and augmented Lagrangian (FFT-based fast solver with a closed form solution) methods.[15] It (Augmented Lagrangian) is considered equivalent to the split Bregman iteration which ensures convergence of this method. The orientation field, d is defined as being equal to , where define the horizontal and vertical estimates of .

The Augmented Lagrangian method for the orientation field, , involves initializing and then finding the approximate minimizer of with respect to these variables. The Lagrangian multipliers are then updated and the iterative process is stopped when convergence is achieved. For the iterative directional total variation refinement model, the augmented lagrangian method involves initializing .[21]

Here, are newly introduced variables where = , = , = , and = . are the Lagrangian multipliers for . For each iteration, the approximate minimizer of with respect to variables () is calculated. And as in the field refinement model, the lagrangian multipliers are updated and the iterative process is stopped when convergence is achieved.

For the orientation field refinement model, the Lagrangian multipliers are updated in the iterative process as follows:

For the iterative directional total variation refinement model, the Lagrangian multipliers are updated as follows:

Here, are positive constants.

Advantages and disadvantages

Based on peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM) metrics and known ground-truth images for testing performance, it is concluded that iterative directional total variation has a better reconstructed performance than the non-iterative methods in preserving edge and texture areas. The orientation field refinement model plays a major role in this improvement in performance as it increases the number of directionless pixels in the flat area while enhancing the orientation field consistency in the regions with edges.

Applications

The field of compressive sensing is related to several topics in signal processing and computational mathematics, such as underdetermined linear systems, group testing, heavy hitters, sparse coding, multiplexing, sparse sampling, and finite rate of innovation. Its broad scope and generality has enabled several innovative CS-enhanced approaches in signal processing and compression, solution of inverse problems, design of radiating systems, radar and through-the-wall imaging, and antenna characterization.[22] Imaging techniques having a strong affinity with compressive sensing include coded aperture and computational photography.

Conventional CS reconstruction uses sparse signals (usually sampled at a rate less than the Nyquist sampling rate) for reconstruction through constrained minimization. One of the earliest applications of such an approach was in reflection seismology which used sparse reflected signals from band-limited data for tracking changes between sub-surface layers.[23] When the LASSO model came into prominence in the 1990s as a statistical method for selection of sparse models,[24] this method was further used in computational harmonic analysis for sparse signal representation from over-complete dictionaries. Some of the other applications include incoherent sampling of radar pulses. The work by Boyd et al.[16] has applied the LASSO model- for selection of sparse models- towards analog to digital converters (the current ones use a sampling rate higher than the Nyquist rate along with the quantized Shannon representation). This would involve a parallel architecture in which the polarity of the analog signal changes at a high rate followed by digitizing the integral at the end of each time-interval to obtain the converted digital signal.

Photography

Compressed sensing has been used in an experimental mobile phone camera sensor. The approach allows a reduction in image acquisition energy per image by as much as a factor of 15 at the cost of complex decompression algorithms; the computation may require an off-device implementation.[25]

Compressed sensing is used in single-pixel cameras from Rice University.[26] Bell Labs employed the technique in a lensless single-pixel camera that takes stills using repeated snapshots of randomly chosen apertures from a grid. Image quality improves with the number of snapshots, and generally requires a small fraction of the data of conventional imaging, while eliminating lens/focus-related aberrations.[27][28]

Holography

Compressed sensing can be used to improve image reconstruction in holography by increasing the number of voxels one can infer from a single hologram.[29][30][31] It is also used for image retrieval from undersampled measurements in optical[32][33] and millimeter-wave[34] holography.

Facial recognition

Compressed sensing has been used in facial recognition applications.[35]

Magnetic resonance imaging

Compressed sensing has been used[36][37] to shorten magnetic resonance imaging scanning sessions on conventional hardware.[38] Reconstruction methods include

Compressed sensing addresses the issue of high scan time by enabling faster acquisition by measuring fewer Fourier coefficients. This produces a high-quality image with relatively lower scan time. Another application (also discussed ahead) is for CT reconstruction with fewer X-ray projections. Compressed sensing, in this case, removes the high spatial gradient parts – mainly, image noise and artifacts. This holds tremendous potential as one can obtain high-resolution CT images at low radiation doses (through lower current-mA settings).[42]

Network tomography

Compressed sensing has showed outstanding results in the application of network tomography to network management. Network delay estimation and network congestion detection can both be modeled as underdetermined systems of linear equations where the coefficient matrix is the network routing matrix. Moreover, in the Internet, network routing matrices usually satisfy the criterion for using compressed sensing.[43]

Shortwave-infrared cameras

In 2013 one company announced shortwave-infrared cameras which utilize compressed sensing.[44] These cameras have light sensitivity from 0.9 μm to 1.7 μm, wavelengths invisible to the human eye.

Aperture synthesis astronomy

In radio astronomy and optical astronomical interferometry, full coverage of the Fourier plane is usually absent and phase information is not obtained in most hardware configurations. In order to obtain aperture synthesis images, various compressed sensing algorithms are employed.[45] The Högbom CLEAN algorithm has been in use since 1974 for the reconstruction of images obtained from radio interferometers, which is similar to the matching pursuit algorithm mentioned above.

Transmission electron microscopy

Compressed sensing combined with a moving aperture has been used to increase the acquisition rate of images in a transmission electron microscope.[46] In scanning mode, compressive sensing combined with random scanning of the electron beam has enabled both faster acquisition and less electron dose, which allows for imaging of electron beam sensitive materials.[47]

See also

- Noiselet

- Sparse approximation

- Sparse coding

- Low-density parity-check code

- Compressed sensing in speech signals

Notes

- ^ The quotation marks served two warnings. First, the number-of-nonzeros -"norm" is not a proper F-norm, because it is not continuous in its scalar argument: nnzs(αx) is constant as α approaches zero. Unfortunately, authors now neglect the quotation marks and abused terminology—clashing with the established use of the norm for the space of measurable functions (equipped with an appropriate metric) or for the space of sequences with F–norm .[12]

References

- ^ Donoho, David L. (2006). "For most large underdetermined systems of linear equations the minimal 1-norm solution is also the sparsest solution". Communications on Pure and Applied Mathematics. 59 (6): 797–829. doi:10.1002/cpa.20132. S2CID 8510060.

- ^ M. Davenport, "The Fundamentals of Compressive Sensing", SigView, April 12, 2013.

- ^ Candès, E.J., & Plan, Y. (2010). A Probabilistic and RIPless Theory of Compressed Sensing. IEEE Transactions on Information Theory, 57, 7235–7254.

- ^ Candès, Emmanuel J.; Romberg, Justin K.; Tao, Terence (2006). "Stable signal recovery from incomplete and inaccurate measurements" (PDF). Communications on Pure and Applied Mathematics. 59 (8): 1207–1223. arXiv:math/0503066. Bibcode:2005math......3066C. doi:10.1002/cpa.20124. S2CID 119159284. Archived from the original (PDF) on 2012-03-11. Retrieved 2011-02-10.

- ^ Donoho, D.L. (2006). "Compressed sensing". IEEE Transactions on Information Theory. 52 (4): 1289–1306. doi:10.1109/TIT.2006.871582. S2CID 206737254.

- ^ List of L1 regularization ideas from Vivek Goyal, Alyson Fletcher, Sundeep Rangan, The Optimistic Bayesian: Replica Method Analysis of Compressed Sensing

- ^ Hayes, Brian (2009). "The Best Bits". American Scientist. 97 (4): 276. doi:10.1511/2009.79.276. S2CID 349102.

- ^ Tibshirani, Robert (1996). "Regression shrinkage and selection via the lasso". Journal of the Royal Statistical Society, Series B. 58 (1): 267–288. doi:10.1111/j.2517-6161.1996.tb02080.x.

- ^ "Atomic decomposition by basis pursuit", by Scott Shaobing Chen, David L. Donoho, Michael, A. Saunders. SIAM Journal on Scientific Computing

- ^ Candès, Emmanuel J.; Romberg, Justin K.; Tao, Terence (2006). "Robust Uncertainty Principles: Exact Signal Reconstruction from Highly Incomplete Fourier Information" (PDF). IEEE Trans. Inf. Theory. 52 (8): 489–509. arXiv:math/0409186. CiteSeerX 10.1.1.122.4429. doi:10.1109/tit.2005.862083. S2CID 7033413.

- ^ Candès, E.J., & Wakin, M.B., An Introduction To Compressive Sampling, IEEE Signal Processing Magazine, V.21, March 2008 [1]

- ^ Stefan Rolewicz. Metric Linear Spaces.

- ^ L1-MAGIC is a collection of MATLAB routines

- ^ a b c Tian, Z.; Jia, X.; Yuan, K.; Pan, T.; Jiang, S. B. (2011). "Low-dose CT reconstruction via edge preserving total variation regularization". Phys Med Biol. 56 (18): 5949–5967. arXiv:1009.2288. Bibcode:2011PMB....56.5949T. doi:10.1088/0031-9155/56/18/011. PMC 4026331. PMID 21860076.

- ^ a b c Xuan Fei; Zhihui Wei; Liang Xiao (2013). "Iterative Directional Total Variation Refinement for Compressive Sensing Image Reconstruction". IEEE Signal Processing Letters. 20 (11): 1070–1073. Bibcode:2013ISPL...20.1070F. doi:10.1109/LSP.2013.2280571. S2CID 8156085.

- ^ a b Candes, E. J.; Wakin, M. B.; Boyd, S. P. (2008). "Enhancing sparsity by reweighted l1 minimization". J. Fourier Anal. Appl. 14 (5–6): 877–905. arXiv:0711.1612. doi:10.1007/s00041-008-9045-x. S2CID 5879257.

- ^ Lange, K.: Optimization, Springer Texts in Statistics. Springer, New York (2004)

- ^ Combettes, P; Wajs, V (2005). "Signal recovery by proximal forward-backward splitting". Multiscale Model Simul. 4 (4): 1168–200. doi:10.1137/050626090. S2CID 15064954.

- ^ Hestenes, M; Stiefel, E (1952). "Methods of conjugate gradients for solving linear systems". Journal of Research of the National Bureau of Standards. 49 (6): 409–36. doi:10.6028/jres.049.044.

- ^ Brox, T.; Weickert, J.; Burgeth, B.; Mrázek, P. (2006). "Nonlinear structure tensors". Image Vis. Comput. 24 (1): 41–55. CiteSeerX 10.1.1.170.6085. doi:10.1016/j.imavis.2005.09.010.

- ^ Goldluecke, B.; Strekalovskiy, E.; Cremers, D.; Siims, P.-T. A. I. (2012). "The natural vectorial total variation which arises from geometric measure theory". SIAM J. Imaging Sci. 5 (2): 537–563. CiteSeerX 10.1.1.364.3997. doi:10.1137/110823766.

- ^ Andrea Massa; Paolo Rocca; Giacomo Oliveri (2015). "Compressive Sensing in Electromagnetics – A Review". IEEE Antennas and Propagation Magazine. 57 (1): 224–238. Bibcode:2015IAPM...57..224M. doi:10.1109/MAP.2015.2397092. S2CID 30196057.

- ^ Taylor, H.L.; Banks, S.C.; McCoy, J.F. (1979). "Deconvolution with the 1 norm". Geophysics. 44 (1): 39–52. doi:10.1190/1.1440921.

- ^ Tibshirani, R (1996). "Regression shrinkage and selection via the lasso" (PDF). J. R. Stat. Soc. B. 58 (1): 267–288. doi:10.1111/j.2517-6161.1996.tb02080.x. S2CID 16162039.

- ^ David Schneider (March 2013). "New Camera Chip Captures Only What It Needs". IEEE Spectrum. Retrieved 2013-03-20.

- ^ "Compressive Imaging: A New Single-Pixel Camera". Rice DSP. Archived from the original on 2010-06-05. Retrieved 2013-06-04.

- ^ "Bell Labs Invents Lensless Camera". MIT Technology Review. 2013-05-25. Archived from the original on 2016-01-20. Retrieved 2013-06-04.

- ^ Gang Huang; Hong Jiang; Kim Matthews; Paul Wilford (2013). Lensless Imaging by Compressive Sensing. 2013 IEEE International Conference on Image Processing. Vol. 2393. pp. 2101–2105. arXiv:1305.7181. Bibcode:2013arXiv1305.7181H. doi:10.1109/ICIP.2013.6738433. ISBN 978-1-4799-2341-0.

- ^ Brady, David; Choi, Kerkil; Marks, Daniel; Horisaki, Ryoichi; Lim, Sehoon (2009). "Compressive holography". Optics Express. 17 (15): 13040–13049. Bibcode:2009OExpr..1713040B. doi:10.1364/oe.17.013040. PMID 19654708.

- ^ Rivenson, Y.; Stern, A.; Javidi, B. (2010). "Compressive fresnel holography". Journal of Display Technology. 6 (10): 506–509. Bibcode:2010JDisT...6..506R. CiteSeerX 10.1.1.391.2020. doi:10.1109/jdt.2010.2042276. S2CID 7460759.

- ^ Denis, Loic; Lorenz, Dirk; Thibaut, Eric; Fournier, Corinne; Trede, Dennis (2009). "Inline hologram reconstruction with sparsity constraints" (PDF). Opt. Lett. 34 (22): 3475–3477. Bibcode:2009OptL...34.3475D. doi:10.1364/ol.34.003475. PMID 19927182. S2CID 14377881.

- ^ Marim, M.; Angelini, E.; Olivo-Marin, J. C.; Atlan, M. (2011). "Off-axis compressed holographic microscopy in low-light conditions". Optics Letters. 36 (1): 79–81. arXiv:1101.1735. Bibcode:2011OptL...36...79M. doi:10.1364/ol.36.000079. PMID 21209693. S2CID 24074045.

- ^ Marim, M. M.; Atlan, M.; Angelini, E.; Olivo-Marin, J. C. (2010). "Compressed sensing with off-axis frequency-shifting holography". Optics Letters. 35 (6): 871–873. arXiv:1004.5305. Bibcode:2010OptL...35..871M. doi:10.1364/ol.35.000871. PMID 20237627. S2CID 9738556.

- ^ Fernandez Cull, Christy; Wikner, David A.; Mait, Joseph N.; Mattheiss, Michael; Brady, David J. (2010). "Millimeter-wave compressive holography". Appl. Opt. 49 (19): E67–E82. Bibcode:2010ApOpt..49E..67C. CiteSeerX 10.1.1.1018.5231. doi:10.1364/ao.49.000e67. PMID 20648123.

- ^ "Engineers Test Highly Accurate Face Recognition". Wired. 2008-03-24. Archived from the original on 2014-01-10.

- ^ Lustig, Michael (2007). "Sparse MRI: The application of compressed sensing for rapid MR imaging". Magnetic Resonance in Medicine. 58 (6): 1182–1195. doi:10.1002/mrm.21391. PMID 17969013. S2CID 15370510.

- ^ Lustig, M.; Donoho, D.L.; Santos, J.M.; Pauly, J.M. (2008). "Compressed Sensing MRI;". IEEE Signal Processing Magazine. 25 (2): 72–82. Bibcode:2008ISPM...25...72L. doi:10.1109/MSP.2007.914728. S2CID 945906.

- ^ Ellenberg, Jordan (2010-03-04). "Fill in the Blanks: Using Math to Turn Lo-Res Datasets Into Hi-Res Samples". Wired. Vol. 18, no. 3. Retrieved 2024-04-20.

- ^ Zhang, Y.; Peterson, B. (2014). "Energy Preserved Sampling for Compressed Sensing MRI". Computational and Mathematical Methods in Medicine. 2014: 546814. arXiv:1501.03915. Bibcode:2015CMMM.201514104T. doi:10.1155/2014/546814. PMC 4058219. PMID 24971155.

- ^ Zhang, Y. (2015). "Exponential Wavelet Iterative Shrinkage Thresholding Algorithm for Compressed Sensing Magnetic Resonance Imaging". Information Sciences. 322: 115–132. doi:10.1016/j.ins.2015.06.017.

- ^ Zhang, Y.; Wang, S. (2015). "Exponential Wavelet Iterative Shrinkage Thresholding Algorithm with Random Shift for Compressed Sensing Magnetic Resonance Imaging". IEEJ Transactions on Electrical and Electronic Engineering. 10 (1): 116–117. doi:10.1002/tee.22059. S2CID 109854375.

- ^ Figueiredo, M.; Bioucas-Dias, J.M.; Nowak, R.D. (2007). "Majorization–minimization algorithms for wavelet-based image restoration". IEEE Trans. Image Process. 16 (12): 2980–2991. Bibcode:2007ITIP...16.2980F. doi:10.1109/tip.2007.909318. PMID 18092597. S2CID 8160052.

- ^ [Network tomography via compressed sensing|http://www.ee.washington.edu/research/funlab/Publications/2010/CS-Tomo.pdf]

- ^ "InView web site". inviewcorp.com. Archived from the original on 2013-03-31.

- ^ Compressed sensing imaging techniques for radio interferometry

- ^ Stevens, Andrew; Kovarik, Libor; Abellan, Patricia; Yuan, Xin; Carin, Lawrence; Browning, Nigel D. (13 August 2015). "Applying compressive sensing to TEM video: a substantial frame rate increase on any camera". Advanced Structural and Chemical Imaging. 1 (1). doi:10.1186/s40679-015-0009-3.

- ^ Kovarik, L.; Stevens, A.; Liyu, A.; Browning, N. D. (17 October 2016). "Implementing an accurate and rapid sparse sampling approach for low-dose atomic resolution STEM imaging". Applied Physics Letters. 109 (16): 164102. Bibcode:2016ApPhL.109p4102K. doi:10.1063/1.4965720.

Further reading

- "The Fundamentals of Compressive Sensing" Part 1, Part 2 and Part 3: video tutorial by Mark Davenport, Georgia Tech. at SigView, the IEEE Signal Processing Society Tutorial Library.

- Using Math to Turn Lo-Res Datasets Into Hi-Res Samples Wired Magazine article

- Compressive Sensing Resources at Rice University.

- Compressed Sensing Makes Every Pixel Count – article in the AMS What's Happening in the Mathematical Sciences series

- Wiki on sparse reconstruction