Nyquist–Shannon sampling theorem

The Nyquist–Shannon sampling theorem is an essential principle for digital signal processing linking the frequency range of a signal and the sample rate required to avoid a type of distortion called aliasing. The theorem states that the sample rate must be at least twice the bandwidth of the signal to avoid aliasing. In practice, it is used to select band-limiting filters to keep aliasing below an acceptable amount when an analog signal is sampled or when sample rates are changed within a digital signal processing function.

The Nyquist–Shannon sampling theorem is a theorem in the field of signal processing which serves as a fundamental bridge between continuous-time signals and discrete-time signals. It establishes a sufficient condition for a sample rate that permits a discrete sequence of samples to capture all the information from a continuous-time signal of finite bandwidth.

Strictly speaking, the theorem only applies to a class of mathematical functions having a Fourier transform that is zero outside of a finite region of frequencies. Intuitively we expect that when one reduces a continuous function to a discrete sequence and interpolates back to a continuous function, the fidelity of the result depends on the density (or sample rate) of the original samples. The sampling theorem introduces the concept of a sample rate that is sufficient for perfect fidelity for the class of functions that are band-limited to a given bandwidth, such that no actual information is lost in the sampling process. It expresses the sufficient sample rate in terms of the bandwidth for the class of functions. The theorem also leads to a formula for perfectly reconstructing the original continuous-time function from the samples.

Perfect reconstruction may still be possible when the sample-rate criterion is not satisfied, provided other constraints on the signal are known (see § Sampling of non-baseband signals below and compressed sensing). In some cases (when the sample-rate criterion is not satisfied), utilizing additional constraints allows for approximate reconstructions. The fidelity of these reconstructions can be verified and quantified utilizing Bochner's theorem.[1]

The name Nyquist–Shannon sampling theorem honours Harry Nyquist and Claude Shannon, but the theorem was also previously discovered by E. T. Whittaker (published in 1915), and Shannon cited Whittaker's paper in his work. The theorem is thus also known by the names Whittaker–Shannon sampling theorem, Whittaker–Shannon, and Whittaker–Nyquist–Shannon, and may also be referred to as the cardinal theorem of interpolation.

Introduction

Sampling is a process of converting a signal (for example, a function of continuous time or space) into a sequence of values (a function of discrete time or space). Shannon's version of the theorem states:[2]

Theorem — If a function $x(t)$ contains no frequencies higher than $B$ hertz, then it can be completely determined from its ordinates at a sequence of points spaced less than $1/(2B)$ seconds apart.

A sufficient sample rate is therefore anything larger than $2B$ samples per second. Equivalently, for a given sample rate $f_s$, perfect reconstruction is guaranteed possible for a bandlimit $B < f_s/2$.

When the bandlimit is too high (or there is no bandlimit), the reconstruction exhibits imperfections known as aliasing. Modern statements of the theorem are sometimes careful to explicitly state that $x(t)$ must contain no sinusoidal component at exactly frequency $B$, or that $B$ must be strictly less than ½ the sample rate. The threshold $2B$ is called the Nyquist rate and is an attribute of the continuous-time input $x(t)$ to be sampled. The sample rate must exceed the Nyquist rate for the samples to suffice to represent $x(t)$. The threshold $f_s/2$ is called the Nyquist frequency and is an attribute of the sampling equipment. All meaningful frequency components of the properly sampled $x(t)$ exist below the Nyquist frequency. The condition described by these inequalities is called the Nyquist criterion, or sometimes the Raabe condition. The theorem is also applicable to functions of other domains, such as space, in the case of a digitized image. The only change, in the case of other domains, is the units of measure attributed to $t$, $f_s$, and $B$.
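The two thresholds are easy to confuse, so a minimal sketch may help. Here the bandlimit is written B and the sample rate fs; the function names and the 20 kHz / 44.1 kHz figures are illustrative assumptions, not part of the theorem.

```python
def nyquist_rate(bandlimit_hz: float) -> float:
    """Nyquist rate 2B: an attribute of the signal to be sampled."""
    return 2.0 * bandlimit_hz


def nyquist_frequency(sample_rate_hz: float) -> float:
    """Nyquist frequency fs/2: an attribute of the sampling equipment."""
    return sample_rate_hz / 2.0


def satisfies_nyquist_criterion(sample_rate_hz: float, bandlimit_hz: float) -> bool:
    """True when fs > 2B (strict inequality, as in the careful statements)."""
    return sample_rate_hz > nyquist_rate(bandlimit_hz)


if __name__ == "__main__":
    # Illustrative numbers only: a 20 kHz bandlimit sampled at 44.1 kHz.
    print(nyquist_rate(20_000.0))
    print(nyquist_frequency(44_100.0))
    print(satisfies_nyquist_criterion(44_100.0, 20_000.0))
```

Sampling at exactly twice the bandlimit is reported as not satisfying the criterion, which matches the strict inequality.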

The symbol $T \triangleq 1/f_s$ is customarily used to represent the interval between samples and is called the sample period or sampling interval. The samples of function $x(t)$ are commonly denoted by $x[n] \triangleq T\cdot x(nT)$[3] (alternatively $x_n$ in older signal processing literature), for all integer values of $n$. The multiplier $T$ is a result of the transition from continuous time to discrete time (see Discrete-time Fourier transform § Relation to Fourier Transform), and it preserves the energy of the signal as $T$ varies.

A mathematically ideal way to interpolate the sequence involves the use of sinc functions. Each sample in the sequence is replaced by a sinc function, centered on the time axis at the original location of the sample $nT$, with the amplitude of the sinc function scaled to the sample value, $x(nT)$. Subsequently, the sinc functions are summed into a continuous function. A mathematically equivalent method uses the Dirac comb and proceeds by convolving one sinc function with a series of Dirac delta pulses, weighted by the sample values. Neither method is numerically practical. Instead, some type of approximation of the sinc functions, finite in length, is used. The imperfections attributable to the approximation are known as interpolation error.

Practical digital-to-analog converters produce neither scaled and delayed sinc functions, nor ideal Dirac pulses. Instead they produce a piecewise-constant sequence of scaled and delayed rectangular pulses (the zero-order hold), usually followed by a lowpass filter (called an "anti-imaging filter") to remove spurious high-frequency replicas (images) of the original baseband signal.

Aliasing

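When $x(t)$ is a function with a Fourier transform $X(f)$:

$$X(f) \triangleq \int_{-\infty}^{\infty} x(t)\, e^{-i2\pi ft}\, dt,$$

then the samples, $x[n]$, of $x(t)$ are sufficient to create a periodic summation of $X(f)$ (see Discrete-time Fourier transform § Relation to Fourier Transform):

$$X_{1/T}(f) \triangleq \sum_{k=-\infty}^{\infty} X\left(f - k/T\right) = \sum_{n=-\infty}^{\infty} x[n]\, e^{-i2\pi fnT}, \qquad \text{(Eq.1)}$$

which is a periodic function and its equivalent representation as a Fourier series, whose coefficients are $x[n]$. This function is also known as the discrete-time Fourier transform (DTFT) of the sample sequence.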
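Eq.1 can be checked numerically. The sketch below assumes the Gaussian pair x(t) = exp(-πt²) and X(f) = exp(-πf²), chosen only because both sums converge quickly, and takes the samples as T·x(nT). It compares the periodic summation of X(f) shifted by multiples of 1/T with the Fourier series built from the samples. The function names and the truncation length `terms` are assumptions made for illustration.

```python
import cmath
import math


def periodic_summation(f: float, T: float, terms: int = 50) -> float:
    """Sum over k of X(f - k/T), with X(f) = exp(-pi f^2)."""
    return sum(
        math.exp(-math.pi * (f - k / T) ** 2)
        for k in range(-terms, terms + 1)
    )


def dtft_of_samples(f: float, T: float, terms: int = 50) -> complex:
    """Sum over n of x[n] exp(-i 2 pi f n T), with x[n] = T * x(nT)."""
    total = 0j
    for n in range(-terms, terms + 1):
        x_n = T * math.exp(-math.pi * (n * T) ** 2)  # x(t) = exp(-pi t^2)
        total += x_n * cmath.exp(-2j * math.pi * f * n * T)
    return total


if __name__ == "__main__":
    for T in (0.5, 1.0):
        for f in (0.0, 0.3, 1.7):
            lhs = periodic_summation(f, T)
            rhs = dtft_of_samples(f, T)
            print(T, f, lhs, abs(lhs - rhs))
```

The two sides agree to rounding error for every T tried, including values of T for which the shifted copies overlap heavily. The identity itself does not depend on the Nyquist criterion; only the ability to separate the copies does.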

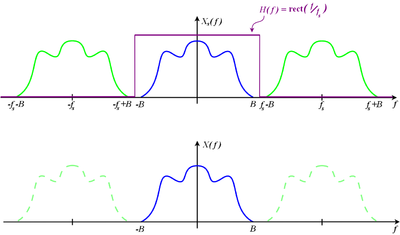

As depicted, copies of $X(f)$ are shifted by multiples of the sampling rate $f_s = 1/T$ and combined by addition. For a band-limited function ($X(f) = 0$ for all $|f| \ge B$) and sufficiently large $f_s$, it is possible for the copies to remain distinct from each other. But if the Nyquist criterion is not satisfied, adjacent copies overlap, and it is not possible in general to discern an unambiguous $X(f)$. Any frequency component above $f_s/2$ is indistinguishable from a lower-frequency component, called an alias, associated with one of the copies. In such cases, the customary interpolation techniques produce the alias, rather than the original component. When the sample rate is pre-determined by other considerations (such as an industry standard), $x(t)$ is usually filtered to reduce its high frequencies to acceptable levels before it is sampled. The type of filter required is a lowpass filter, and in this application it is called an anti-aliasing filter.
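A small sketch makes the alias concrete for a real cosine: a component above half the sample rate produces exactly the same samples as a lower-frequency one. The helper names and the 7 Hz / 10 Hz figures are illustrative assumptions.

```python
import math


def alias_frequency(f_hz: float, fs_hz: float) -> float:
    """Fold f into [0, fs/2]: where a real sinusoid appears after sampling."""
    f_mod = f_hz % fs_hz              # spectral copies repeat every fs
    return min(f_mod, fs_hz - f_mod)  # reflect the upper half onto the lower


def sample_cosine(f_hz: float, fs_hz: float, count: int) -> list:
    """Samples cos(2 pi f n / fs) for n = 0 .. count - 1."""
    return [math.cos(2.0 * math.pi * f_hz * n / fs_hz) for n in range(count)]


if __name__ == "__main__":
    fs = 10.0
    original = sample_cosine(7.0, fs, 8)                  # above fs/2 = 5 Hz
    alias = sample_cosine(alias_frequency(7.0, fs), fs, 8)
    print(alias_frequency(7.0, fs))
    print(max(abs(a - b) for a, b in zip(original, alias)))
```

Because the two sample sequences are identical up to rounding, no processing applied after sampling can tell the 7 Hz tone from its alias; the anti-aliasing filter has to act before the sampler.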

Derivation as a special case of Poisson summation

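When there is no overlap of the copies (also known as "images") of $X(f)$, the $k = 0$ term of Eq.1 can be recovered by the product:

$$X(f) = H(f)\cdot X_{1/T}(f),$$

where:

$$H(f) \triangleq \begin{cases} 1 & |f| < B \\ 0 & |f| > f_s - B. \end{cases}$$

The sampling theorem is proved since $X(f)$ uniquely determines $x(t)$.

All that remains is to derive the formula for reconstruction. $H(f)$ need not be precisely defined in the region $[B,\ f_s - B]$ because $X_{1/T}(f)$ is zero in that region. However, the worst case is when $B = f_s/2$, the Nyquist frequency. A function that is sufficient for that and all less severe cases is:

$$H(f) = \operatorname{rect}\left(\frac{f}{f_s}\right) = \begin{cases} 1 & |f| < \frac{f_s}{2} \\ 0 & |f| > \frac{f_s}{2}, \end{cases}$$

where $\operatorname{rect}$ is the rectangular function. Therefore:

$$X(f) = \operatorname{rect}\left(\frac{f}{f_s}\right)\cdot X_{1/T}(f) = \operatorname{rect}(Tf)\cdot \sum_{n=-\infty}^{\infty} T\cdot x(nT)\, e^{-i2\pi nTf} = \sum_{n=-\infty}^{\infty} x(nT)\cdot \underbrace{T\cdot \operatorname{rect}(Tf)\cdot e^{-i2\pi nTf}}_{\mathcal{F}\left\{\operatorname{sinc}\left(\frac{t-nT}{T}\right)\right\}}.$$

The inverse transform of both sides produces the Whittaker–Shannon interpolation formula:

$$x(t) = \sum_{n=-\infty}^{\infty} x(nT)\cdot \operatorname{sinc}\left(\frac{t-nT}{T}\right),$$

which shows how the samples, $x(nT)$, can be combined to reconstruct $x(t)$.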

- Larger-than-necessary values of $f_s$ (smaller values of $T$), called oversampling, have no effect on the outcome of the reconstruction and have the benefit of leaving room for a transition band in which $H(f)$ is free to take intermediate values. Undersampling, which causes aliasing, is not in general a reversible operation.

- Theoretically, the interpolation formula can be implemented as a low-pass filter, whose impulse response is $\operatorname{sinc}(t/T)$ and whose input is $\sum_{n=-\infty}^{\infty} x(nT)\cdot \delta(t - nT)$, which is a Dirac comb function modulated by the signal samples. Practical digital-to-analog converters (DAC) implement an approximation like the zero-order hold. In that case, oversampling can reduce the approximation error.
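The Whittaker–Shannon formula sums infinitely many sinc functions, one per sample. The sketch below truncates that sum to a finite window, so it only approximates the ideal reconstruction. The 1 Hz test tone, the sample period T = 1/8 s and the window of 500 samples on each side are assumptions chosen for illustration.

```python
import math


def sinc(x: float) -> float:
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    if x == 0.0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)


def reconstruct(t: float, signal, T: float, half_width: int) -> float:
    """Truncated interpolation sum over n = -half_width .. half_width."""
    return sum(
        signal(n * T) * sinc((t - n * T) / T)
        for n in range(-half_width, half_width + 1)
    )


def tone(t: float) -> float:
    """Hypothetical test signal: a 1 Hz sinusoid (bandlimit B = 1 Hz)."""
    return math.sin(2.0 * math.pi * t)


if __name__ == "__main__":
    T = 0.125  # fs = 8 Hz, comfortably above 2B = 2 Hz
    for t in (0.25, 0.3):
        approx = reconstruct(t, tone, T, half_width=500)
        print(t, approx, abs(approx - tone(t)))
```

At a sample instant such as t = 0.25 the sum returns the sample itself, because every other sinc is zero there. Between samples the truncation leaves a small residual that shrinks only slowly as the window grows, which is one reason the ideal sinc sum is described as numerically impractical.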

Shannon's original proof

Poisson shows that the Fourier series in Eq.1 produces the periodic summation of $X(f)$, regardless of $f_s$ and $B$. Shannon, however, only derives the series coefficients for the case $f_s = 2B$. Virtually quoting Shannon's original paper:

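- Let $X(\omega)$ be the spectrum of $x(t)$. Then

$$x(t) = \frac{1}{2\pi}\int_{-\infty}^{\infty} X(\omega)\, e^{i\omega t}\, d\omega = \frac{1}{2\pi}\int_{-2\pi B}^{2\pi B} X(\omega)\, e^{i\omega t}\, d\omega,$$

- because $X(\omega)$ is assumed to be zero outside the band $\left|\tfrac{\omega}{2\pi}\right| < B$. If we let $t = \tfrac{n}{2B}$, where $n$ is any positive or negative integer, we obtain:

$$x\left(\tfrac{n}{2B}\right) = \frac{1}{2\pi}\int_{-2\pi B}^{2\pi B} X(\omega)\, e^{i\omega \frac{n}{2B}}\, d\omega. \qquad \text{(Eq.2)}$$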

- On the left are values of $x(t)$ at the sampling points. The integral on the right will be recognized as essentially[a] the $n$th coefficient in a Fourier-series expansion of the function $X(\omega)$, taking the interval $-B$ to $B$ as a fundamental period. This means that the values of the samples $x(n/2B)$ determine the Fourier coefficients in the series expansion of $X(\omega)$. Thus they determine $X(\omega)$, since $X(\omega)$ is zero for frequencies greater than $B$, and for lower frequencies $X(\omega)$ is determined if its Fourier coefficients are determined. But $X(\omega)$ determines the original function $x(t)$ completely, since a function is determined if its spectrum is known. Therefore the original samples determine the function $x(t)$ completely.

Shannon's proof of the theorem is complete at that point, but he goes on to discuss reconstruction via sinc functions, what we now call the Whittaker–Shannon interpolation formula as discussed above. He does not derive or prove the properties of the sinc function, as the Fourier pair relationship between the rect (the rectangular function) and sinc functions was well known by that time.[4]

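Let $x_n$ be the $n$th sample. Then the function $x(t)$ is represented by:

$$x(t) = \sum_{n=-\infty}^{\infty} x_n\, \frac{\sin\left(\pi(2Bt - n)\right)}{\pi(2Bt - n)}.$$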

As in the other proof, the existence of the Fourier transform of the original signal is assumed, so the proof does not say whether the sampling theorem extends to bandlimited stationary random processes.

Application to multivariable signals and images

The sampling theorem is usually formulated for functions of a single variable. Consequently, the theorem is directly applicable to time-dependent signals and is normally formulated in that context. However, the sampling theorem can be extended in a straightforward way to functions of arbitrarily many variables. Grayscale images, for example, are often represented as two-dimensional arrays (or matrices) of real numbers representing the relative intensities of pixels (picture elements) located at the intersections of row and column sample locations. As a result, images require two independent variables, or indices, to specify each pixel uniquely—one for the row, and one for the column.

Color images typically consist of a composite of three separate grayscale images, one to represent each of the three primary colors—red, green, and blue, or RGB for short. Other colorspaces using 3-vectors for colors include HSV, CIELAB, XYZ, etc. Some colorspaces such as cyan, magenta, yellow, and black (CMYK) may represent color by four dimensions. All of these are treated as vector-valued functions over a two-dimensional sampled domain.

Similar to one-dimensional discrete-time signals, images can also suffer from aliasing if the sampling resolution, or pixel density, is inadequate. For example, a digital photograph of a striped shirt with high frequencies (in other words, the distance between the stripes is small), can cause aliasing of the shirt when it is sampled by the camera's image sensor. The aliasing appears as a moiré pattern. The "solution" to higher sampling in the spatial domain for this case would be to move closer to the shirt, use a higher resolution sensor, or to optically blur the image before acquiring it with the sensor using an optical low-pass filter.

Another example is shown here in the brick patterns. The top image shows the effects when the sampling theorem's condition is not satisfied. When software rescales an image (the same process that creates the thumbnail shown in the lower image) it, in effect, runs the image through a low-pass filter first and then downsamples the image to result in a smaller image that does not exhibit the moiré pattern. The top image is what happens when the image is downsampled without low-pass filtering: aliasing results.
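The difference between the two rescaling paths can be sketched in one dimension. The example assumes a row of pixels alternating between 0 and 1 (the finest possible stripes) and uses block averaging as the low-pass step. Block averaging is a deliberately crude filter picked for brevity, and nothing here claims that real image software uses it.

```python
def decimate_naive(samples, factor):
    """Keep every `factor`-th sample with no filtering (prone to aliasing)."""
    return samples[::factor]


def decimate_box(samples, factor):
    """Average each block of `factor` samples, then keep one value per block."""
    usable = len(samples) - len(samples) % factor  # drop an incomplete tail block
    return [
        sum(samples[i:i + factor]) / factor
        for i in range(0, usable, factor)
    ]


if __name__ == "__main__":
    stripes = [0.0, 1.0] * 8              # one-pixel-wide stripes
    print(decimate_naive(stripes, 2))     # every kept pixel is a dark stripe
    print(decimate_box(stripes, 2))       # uniform mid-gray
```

Without filtering, the stripes alias to a constant that misrepresents the scene (all dark). With the averaging step, the result is the mid-gray that the stripes blur to, which is the behavior expected of a properly filtered thumbnail.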

The sampling theorem applies to camera systems, where the scene and lens constitute an analog spatial signal source, and the image sensor is a spatial sampling device. Each of these components is characterized by a modulation transfer function (MTF), representing the precise resolution (spatial bandwidth) available in that component. Effects of aliasing or blurring can occur when the lens MTF and sensor MTF are mismatched. When the optical image which is sampled by the sensor device contains higher spatial frequencies than the sensor can resolve, the undersampling acts as a low-pass filter to reduce or eliminate aliasing. When the area of the sampling spot (the size of the pixel sensor) is not large enough to provide sufficient spatial anti-aliasing, a separate anti-aliasing filter (optical low-pass filter) may be included in a camera system to reduce the MTF of the optical image. Instead of requiring an optical filter, the graphics processing unit of smartphone cameras performs digital signal processing to remove aliasing with a digital filter. Digital filters also apply sharpening to amplify the contrast from the lens at high spatial frequencies, which otherwise falls off rapidly at diffraction limits.

The sampling theorem also applies to post-processing of digital images, such as upsampling or downsampling. Effects of aliasing, blurring, and sharpening may be adjusted with digital filtering implemented in software, which necessarily follows the theoretical principles.

Critical frequency

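To illustrate the necessity of $f_s > 2B$, consider the family of sinusoids generated by different values of $\theta$ in this formula:

$$x(t) = \frac{\cos(2\pi Bt + \theta)}{\cos(\theta)} = \cos(2\pi Bt) - \sin(2\pi Bt)\tan(\theta), \quad -\pi/2 < \theta < \pi/2.$$

With $f_s = 2B$ or equivalently $T = 1/(2B)$, the samples are given by:

$$x(nT) = \cos(\pi n) - \underbrace{\sin(\pi n)}_{0}\tan(\theta) = (-1)^n$$

regardless of the value of $\theta$. That sort of ambiguity is the reason for the strict inequality of the sampling theorem's condition.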
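The ambiguity is easy to confirm numerically. The sketch below samples cos(2πBt + θ)/cos(θ) at t = n/(2B), where the phase 2πBt reduces to πn, for several arbitrary values of θ between -π/2 and π/2. The function name and the chosen angles are assumptions made for illustration.

```python
import math


def critical_samples(theta: float, count: int) -> list:
    """Samples of cos(2 pi B t + theta) / cos(theta) taken at t = n / (2B)."""
    # At t = n/(2B) the phase 2*pi*B*t equals pi*n, so B cancels out.
    return [math.cos(math.pi * n + theta) / math.cos(theta) for n in range(count)]


if __name__ == "__main__":
    for theta in (-1.2, 0.0, 0.7, 1.4):
        print(theta, [round(s, 12) for s in critical_samples(theta, 6)])
```

Every choice of θ yields the same alternating sequence of +1 and -1, although the underlying sinusoids differ in amplitude and phase, so samples taken at exactly twice the bandlimit cannot distinguish them.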

Sampling of non-baseband signals

As discussed by Shannon:[2]

A similar result is true if the band does not start at zero frequency but at some higher value, and can be proved by a linear translation (corresponding physically to single-sideband modulation) of the zero-frequency case. In this case the elementary pulse is obtained from $\sin(x)/x$ by single-side-band modulation.

That is, a sufficient no-loss condition for sampling signals that do not have baseband components exists that involves the width of the non-zero frequency interval as opposed to its highest frequency component. See sampling for more details and examples.

For example, in order to sample FM radio signals in the frequency range of 100–102 MHz, it is not necessary to sample at 204 MHz (twice the upper frequency), but rather it is sufficient to sample at 4 MHz (twice the width of the frequency interval).

A bandpass condition is that $X(f) = 0$ for all nonnegative $f$ outside the open band of frequencies:

$$\left(\frac{N}{2} f_s,\ \frac{N+1}{2} f_s\right),$$

for some nonnegative integer $N$. This formulation includes the normal baseband condition as the case $N = 0$.

The corresponding interpolation function is the impulse response of an ideal brick-wall bandpass filter (as opposed to the ideal brick-wall lowpass filter used above) with cutoffs at the upper and lower edges of the specified band, which is the difference between a pair of lowpass impulse responses:

$$(N+1)\,\operatorname{sinc}\left(\frac{(N+1)t}{T}\right) - N\,\operatorname{sinc}\left(\frac{Nt}{T}\right).$$
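The bandpass condition amounts to asking whether the occupied band fits between two consecutive multiples of half the sample rate. A minimal sketch of that check follows, using integer hertz to avoid rounding. The function name is hypothetical, and a band that only touches a zone edge is accepted on the assumption that the signal has no energy exactly at the edge.

```python
def bandpass_zone(f_low_hz: int, f_high_hz: int, fs_hz: int):
    """Return N if the band lies between N*fs/2 and (N+1)*fs/2, else None."""
    if not (0 <= f_low_hz < f_high_hz) or fs_hz <= 0:
        return None
    n = (2 * f_low_hz) // fs_hz            # largest N with N*fs/2 <= f_low
    if 2 * f_high_hz <= (n + 1) * fs_hz:   # upper edge stays inside the zone
        return n
    return None


if __name__ == "__main__":
    # The 100-102 MHz example sampled at 4 MHz.
    print(bandpass_zone(100_000_000, 102_000_000, 4_000_000))
    # The same bandwidth shifted by 1 MHz straddles a zone boundary.
    print(bandpass_zone(101_000_000, 103_000_000, 4_000_000))
```

The second call shows that bandwidth alone is not enough for uniform sampling at twice the bandwidth: the band must also be aligned with a zone, otherwise a higher rate or a frequency translation is needed.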

Other generalizations, for example to signals occupying multiple non-contiguous bands, are possible as well. Even the most generalized form of the sampling theorem does not have a provably true converse. That is, one cannot conclude that information is necessarily lost just because the conditions of the sampling theorem are not satisfied; from an engineering perspective, however, it is generally safe to assume that if the sampling theorem is not satisfied then information will most likely be lost.

Nonuniform sampling

The sampling theory of Shannon can be generalized for the case of nonuniform sampling, that is, samples not taken equally spaced in time. The Shannon sampling theory for non-uniform sampling states that a band-limited signal can be perfectly reconstructed from its samples if the average sampling rate satisfies the Nyquist condition.[5] Therefore, although uniformly spaced samples may result in easier reconstruction algorithms, it is not a necessary condition for perfect reconstruction.

The general theory for non-baseband and nonuniform samples was developed in 1967 by Henry Landau.[6] He proved that the average sampling rate (uniform or otherwise) must be at least twice the occupied bandwidth of the signal, assuming it is a priori known what portion of the spectrum was occupied.

In the late 1990s, this work was partially extended to cover signals for which the amount of occupied bandwidth is known but the actual occupied portion of the spectrum is unknown.[7] In the 2000s, a complete theory was developed (see the section Sampling below the Nyquist rate under additional restrictions below) using compressed sensing. In particular, the theory, using signal processing language, is described in a 2009 paper by Mishali and Eldar.[8] They show, among other things, that if the frequency locations are unknown, then it is necessary to sample at a rate at least twice that required by the Nyquist criterion; in other words, you must pay at least a factor of 2 for not knowing the location of the spectrum. Note that minimum sampling requirements do not necessarily guarantee stability.

Sampling below the Nyquist rate under additional restrictions

The Nyquist–Shannon sampling theorem provides a sufficient condition for the sampling and reconstruction of a band-limited signal. When reconstruction is done via the Whittaker–Shannon interpolation formula, the Nyquist criterion is also a necessary condition to avoid aliasing, in the sense that if samples are taken at a slower rate than twice the band limit, then there are some signals that will not be correctly reconstructed. However, if further restrictions are imposed on the signal, then the Nyquist criterion may no longer be a necessary condition.

A non-trivial example of exploiting extra assumptions about the signal is given by the recent field of compressed sensing, which allows for full reconstruction with a sub-Nyquist sampling rate. Specifically, this applies to signals that are sparse (or compressible) in some domain. As an example, compressed sensing deals with signals that may have a low overall bandwidth (say, the effective bandwidth $EB$) but the frequency locations are unknown, rather than all together in a single band, so that the passband technique does not apply. In other words, the frequency spectrum is sparse. Traditionally, the necessary sampling rate is thus $2B$. Using compressed sensing techniques, the signal could be perfectly reconstructed if it is sampled at a rate slightly lower than $2EB$. With this approach, reconstruction is no longer given by a formula, but instead by the solution to a linear optimization program.

Another example where sub-Nyquist sampling is optimal arises under the additional constraint that the samples are quantized in an optimal manner, as in a combined system of sampling and optimal lossy compression.[9] This setting is relevant in cases where the joint effect of sampling and quantization is to be considered, and can provide a lower bound for the minimal reconstruction error that can be attained in sampling and quantizing a random signal. For stationary Gaussian random signals, this lower bound is usually attained at a sub-Nyquist sampling rate, indicating that sub-Nyquist sampling is optimal for this signal model under optimal quantization.[10]

Historical background

The sampling theorem was implied by the work of Harry Nyquist in 1928,[11] in which he showed that up to $2B$ independent pulse samples could be sent through a system of bandwidth $B$; but he did not explicitly consider the problem of sampling and reconstruction of continuous signals. About the same time, Karl Küpfmüller showed a similar result[12] and discussed the sinc-function impulse response of a band-limiting filter, via its integral, the step-response sine integral; this bandlimiting and reconstruction filter that is so central to the sampling theorem is sometimes referred to as a Küpfmüller filter (but seldom so in English).

The sampling theorem, essentially a dual of Nyquist's result, was proved by Claude E. Shannon.[2] The mathematician E. T. Whittaker published similar results in 1915,[13] J. M. Whittaker in 1935,[14] and Gabor in 1946 ("Theory of communication").

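In 1948 and 1949, Claude E. Shannon published the two revolutionary articles in which he founded information theory.[15][16][2] In Shannon 1948 the sampling theorem is formulated as "Theorem 13": Let $f(t)$ contain no frequencies over W. Then

$$f(t) = \sum_{n=-\infty}^{\infty} X_n\, \frac{\sin \pi(2Wt - n)}{\pi(2Wt - n)},$$

where $X_n = f\left(\frac{n}{2W}\right)$.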

It was not until these articles were published that the theorem known as "Shannon's sampling theorem" became common property among communication engineers, although Shannon himself writes that this is a fact which is common knowledge in the communication art.[B] A few lines further on, however, he adds: "but in spite of its evident importance, [it] seems not to have appeared explicitly in the literature of communication theory".

Other discoverers

Others who have independently discovered or played roles in the development of the sampling theorem have been discussed in several historical articles, for example, by Jerri[17] and by Lüke.[18] For example, Lüke points out that H. Raabe, an assistant to Küpfmüller, proved the theorem in his 1939 Ph.D. dissertation; the term Raabe condition came to be associated with the criterion for unambiguous representation (sampling rate greater than twice the bandwidth). Meijering[19] mentions several other discoverers and names in a paragraph and pair of footnotes:

As pointed out by Higgins, the sampling theorem should really be considered in two parts, as done above: the first stating the fact that a bandlimited function is completely determined by its samples, the second describing how to reconstruct the function using its samples. Both parts of the sampling theorem were given in a somewhat different form by J. M. Whittaker and before him also by Ogura. They were probably not aware of the fact that the first part of the theorem had been stated as early as 1897 by Borel.[Meijering 1] As we have seen, Borel also used around that time what became known as the cardinal series. However, he appears not to have made the link. In later years it became known that the sampling theorem had been presented before Shannon to the Russian communication community by Kotel'nikov. In more implicit, verbal form, it had also been described in the German literature by Raabe. Several authors have mentioned that Someya introduced the theorem in the Japanese literature parallel to Shannon. In the English literature, Weston introduced it independently of Shannon around the same time.[Meijering 2]

- ^ Several authors, following Black, have claimed that this first part of the sampling theorem was stated even earlier by Cauchy, in a paper published in 1841. However, the paper of Cauchy does not contain such a statement, as has been pointed out by Higgins.

- ^ As a consequence of the discovery of the several independent introductions of the sampling theorem, people started to refer to the theorem by including the names of the aforementioned authors, resulting in such catchphrases as "the Whittaker–Kotel'nikov–Shannon (WKS) sampling theorem" or even "the Whittaker–Kotel'nikov–Raabe–Shannon–Someya sampling theorem". To avoid confusion, perhaps the best thing to do is to refer to it as the sampling theorem, "rather than trying to find a title that does justice to all claimants".

— Eric Meijering, "A Chronology of Interpolation From Ancient Astronomy to Modern Signal and Image Processing" (citations omitted)

In Russian literature it is known as the Kotelnikov's theorem, named after Vladimir Kotelnikov, who discovered it in 1933.[20]

Why Nyquist?

Exactly how, when, or why Harry Nyquist had his name attached to the sampling theorem remains obscure. The term Nyquist Sampling Theorem (capitalized thus) appeared as early as 1959 in a book from his former employer, Bell Labs,[21] and appeared again in 1963,[22] and not capitalized in 1965.[23] It had been called the Shannon Sampling Theorem as early as 1954,[24] but also just the sampling theorem by several other books in the early 1950s.

In 1958, Blackman and Tukey cited Nyquist's 1928 article as a reference for the sampling theorem of information theory,[25] even though that article does not treat sampling and reconstruction of continuous signals as others did. Their glossary of terms includes these entries:

- Sampling theorem (of information theory)

- Nyquist's result that equi-spaced data, with two or more points per cycle of highest frequency, allows reconstruction of band-limited functions. (See Cardinal theorem.)

- Cardinal theorem (of interpolation theory)

- A precise statement of the conditions under which values given at a doubly infinite set of equally spaced points can be interpolated to yield a continuous band-limited function with the aid of the function

Exactly what "Nyquist's result" they are referring to remains mysterious.

When Shannon stated and proved the sampling theorem in his 1949 article, according to Meijering,[19] "he referred to the critical sampling interval $T = \frac{1}{2W}$ as the Nyquist interval corresponding to the band $W$, in recognition of Nyquist's discovery of the fundamental importance of this interval in connection with telegraphy". This explains Nyquist's name on the critical interval, but not on the theorem.

Similarly, Nyquist's name was attached to Nyquist rate in 1953 by Harold S. Black:

If the essential frequency range is limited to $B$ cycles per second, $2B$ was given by Nyquist as the maximum number of code elements per second that could be unambiguously resolved, assuming the peak interference is less than half a quantum step. This rate is generally referred to as signaling at the Nyquist rate and $\frac{1}{2B}$ has been termed a Nyquist interval.

— Harold Black, Modulation Theory[26] (bold added for emphasis; italics as in the original)

According to the Oxford English Dictionary, this may be the origin of the term Nyquist rate. In Black's usage, it is not a sampling rate, but a signaling rate.

See also

- 44,100 Hz, a customary rate used to sample audible frequencies is based on the limits of human hearing and the sampling theorem

- Balian–Low theorem, a similar theoretical lower bound on sampling rates, but which applies to time–frequency transforms

- Cheung–Marks theorem, which specifies conditions where restoration of a signal by the sampling theorem can become ill-posed

- Shannon–Hartley theorem

- Nyquist ISI criterion

- Reconstruction from zero crossings

- Zero-order hold

- Dirac comb

Notes

- ^ The sinc function follows from rows 202 and 102 of the transform tables

- ^ Shannon 1949, p. 448.

References

- ^ Nemirovsky, Jonathan; Shimron, Efrat (2015). "Utilizing Bochners Theorem for Constrained Evaluation of Missing Fourier Data". arXiv:1506.03300 [physics.med-ph].

- ^ a b c d Shannon, Claude E. (January 1949). "Communication in the presence of noise". Proceedings of the Institute of Radio Engineers. 37 (1): 10–21. doi:10.1109/jrproc.1949.232969. S2CID 52873253. Reprint as classic paper in: Proc. IEEE, Vol. 86, No. 2, (Feb 1998) Archived 2010-02-08 at the Wayback Machine

- ^ Ahmed, N.; Rao, K.R. (July 10, 1975). Orthogonal Transforms for Digital Signal Processing (1 ed.). Berlin Heidelberg New York: Springer-Verlag. doi:10.1007/978-3-642-45450-9. ISBN 9783540065562.

- ^ Campbell, George; Foster, Ronald (1942). Fourier Integrals for Practical Applications. New York: Bell Telephone System Laboratories.

- ^ Marvasti, F., ed. (2000). Nonuniform Sampling, Theory and Practice. New York: Kluwer Academic/Plenum Publishers.

- ^ Landau, H. J. (1967). "Necessary density conditions for sampling and interpolation of certain entire functions". Acta Mathematica. 117 (1): 37–52. doi:10.1007/BF02395039.

- ^ For example, Feng, P. (1997). Universal minimum-rate sampling and spectrum-blind reconstruction for multiband signals (Ph.D. thesis). University of Illinois at Urbana-Champaign.

- ^ Mishali, Moshe; Eldar, Yonina C. (March 2009). "Blind Multiband Signal Reconstruction: Compressed Sensing for Analog Signals". IEEE Trans. Signal Process. 57 (3): 993–1009. Bibcode:2009ITSP...57..993M. CiteSeerX 10.1.1.154.4255. doi:10.1109/TSP.2009.2012791. S2CID 2529543.

- ^ Kipnis, Alon; Goldsmith, Andrea J.; Eldar, Yonina C.; Weissman, Tsachy (January 2016). "Distortion rate function of sub-Nyquist sampled Gaussian sources". IEEE Transactions on Information Theory. 62: 401–429. arXiv:1405.5329. doi:10.1109/tit.2015.2485271. S2CID 47085927.

- ^ Kipnis, Alon; Eldar, Yonina; Goldsmith, Andrea (26 April 2018). "Analog-to-Digital Compression: A New Paradigm for Converting Signals to Bits". IEEE Signal Processing Magazine. 35 (3): 16–39. arXiv:1801.06718. Bibcode:2018ISPM...35c..16K. doi:10.1109/MSP.2017.2774249. S2CID 13693437.

- ^ Nyquist, Harry (April 1928). "Certain topics in telegraph transmission theory". Transactions of the AIEE. 47 (2): 617–644. Bibcode:1928TAIEE..47..617N. doi:10.1109/t-aiee.1928.5055024. Reprint as classic paper in: Proceedings of the IEEE, Vol. 90, No. 2, February 2002. Archived 2013-09-26 at the Wayback Machine

- ^ Küpfmüller, Karl (1928). "Über die Dynamik der selbsttätigen Verstärkungsregler". Elektrische Nachrichtentechnik (in German). 5 (11): 459–467. (English translation 2005).

- ^ Whittaker, E. T. (1915). "On the Functions Which are Represented by the Expansions of the Interpolation Theory". Proceedings of the Royal Society of Edinburgh. 35: 181–194. doi:10.1017/s0370164600017806. ("Theorie der Kardinalfunktionen").

- ^ Whittaker, J. M. (1935). Interpolatory Function Theory. Cambridge, England: Cambridge University Press.

- ^ Shannon, Claude E. (July 1948). "A Mathematical Theory of Communication". Bell System Technical Journal. 27 (3): 379–423. doi:10.1002/j.1538-7305.1948.tb01338.x. hdl:11858/00-001M-0000-002C-4317-B.

- ^ Shannon, Claude E. (October 1948). "A Mathematical Theory of Communication". Bell System Technical Journal. 27 (4): 623–666. doi:10.1002/j.1538-7305.1948.tb00917.x. hdl:11858/00-001M-0000-002C-4314-2.

- ^ Jerri, Abdul (November 1977). "The Shannon Sampling Theorem—Its Various Extensions and Applications: A Tutorial Review". Proceedings of the IEEE. 65 (11): 1565–1596. Bibcode:1977IEEEP..65.1565J. doi:10.1109/proc.1977.10771. S2CID 37036141. See also Jerri, Abdul (April 1979). "Correction to 'The Shannon sampling theorem—Its various extensions and applications: A tutorial review'". Proceedings of the IEEE. 67 (4): 695. doi:10.1109/proc.1979.11307.

- ^ Lüke, Hans Dieter (April 1999). "The Origins of the Sampling Theorem" (PDF). IEEE Communications Magazine. 37 (4): 106–108. CiteSeerX 10.1.1.163.2887. doi:10.1109/35.755459.

- ^ a b Meijering, Erik (March 2002). "A Chronology of Interpolation From Ancient Astronomy to Modern Signal and Image Processing" (PDF). Proceedings of the IEEE. 90 (3): 319–342. doi:10.1109/5.993400.

- ^ Kotelnikov VA, On the transmission capacity of "ether" and wire in electrocommunications, (English translation, PDF), Izd. Red. Upr. Svyazzi RKKA (1933), Reprint in Modern Sampling Theory: Mathematics and Applications, Editors: J. J. Benedetto und PJSG Ferreira, Birkhauser (Boston) 2000, ISBN 0-8176-4023-1.

- ^ Members of the Technical Staff of Bell Telephone Laboratories (1959). Transmission Systems for Communications. Vol. 2. AT&T. pp. 26–4.

- ^ Guillemin, Ernst Adolph (1963). Theory of Linear Physical Systems. Wiley. ISBN 9780471330707.

- ^ Roberts, Richard A.; Barton, Ben F. (1965). Theory of Signal Detectability: Composite Deferred Decision Theory.

- ^ Gray, Truman S. (1954). "Applied Electronics: A First Course in Electronics, Electron Tubes, and Associated Circuits". Physics Today. 7 (11): 17. Bibcode:1954PhT.....7k..17G. doi:10.1063/1.3061438. hdl:2027/mdp.39015002049487.

- ^ Blackman, R. B.; Tukey, J. W. (1958). The Measurement of Power Spectra: From the Point of View of Communications Engineering (PDF). New York: Dover. Archived (PDF) from the original on 2022-10-09.

- ^ Black, Harold S. (1953). Modulation Theory.

Further reading

- Higgins, J.R. (1985). "Five short stories about the cardinal series". Bulletin of the AMS. 12 (1): 45–89. doi:10.1090/S0273-0979-1985-15293-0.

- Küpfmüller, Karl (1931). "Utjämningsförlopp inom Telegraf- och Telefontekniken" [Transients in telegraph and telephone engineering]. Teknisk Tidskrift (9): 153–160. and (10): pp. 178–182

- Marks, II, R.J. (1991). Introduction to Shannon Sampling and Interpolation Theory (PDF). Springer Texts in Electrical Engineering. Springer. doi:10.1007/978-1-4613-9708-3. ISBN 978-1-4613-9708-3. Archived (PDF) from the original on 2011-07-14.

- Marks, II, R.J., ed. (1993). Advanced Topics in Shannon Sampling and Interpolation Theory (PDF). Springer Texts in Electrical Engineering. Springer. doi:10.1007/978-1-4613-9757-1. ISBN 978-1-4613-9757-1. Archived (PDF) from the original on 2011-10-06.

- Marks, II, R.J. (2009). "Chapters 5–8". Handbook of Fourier Analysis and Its Applications. Oxford University Press. ISBN 978-0-19-804430-7.

- Press, WH; Teukolsky, SA; Vetterling, WT; Flannery, BP (2007), "§13.11. Numerical Use of the Sampling Theorem", Numerical Recipes: The Art of Scientific Computing (3rd ed.), Cambridge University Press, ISBN 978-0-521-88068-8, archived from the original on 2011-08-11, retrieved 2011-08-13

- Unser, Michael (April 2000). "Sampling-50 Years after Shannon" (PDF). Proc. IEEE. 88 (4): 569–587. doi:10.1109/5.843002. S2CID 11657280. Archived (PDF) from the original on 2022-11-03.

External links

- Learning by Simulations Interactive simulation of the effects of inadequate sampling

- Interactive presentation of the sampling and reconstruction in a web-demo Institute of Telecommunications, University of Stuttgart

- Undersampling and an application of it

- Sampling Theory For Digital Audio

- Journal devoted to Sampling Theory

- Huang, J.; Padmanabhan, K.; Collins, O.M. (June 2011). "The Sampling Theorem With Constant Amplitude Variable Width Pulses". IEEE Transactions on Circuits and Systems I: Regular Papers. 58 (6): 1178–90. doi:10.1109/TCSI.2010.2094350. S2CID 13590085.

- Lüke, Hans Dieter (April 1999). "The Origins of the Sampling Theorem" (PDF). IEEE Communications Magazine. 37 (4): 106–108. CiteSeerX 10.1.1.163.2887. doi:10.1109/35.755459.
