Film editing


Film editing is both a creative and a technical part of the post-production process of filmmaking. The term is derived from the traditional process of working with physical film, although editing now increasingly relies on digital technology. A video composition typically requires a collection of shots and footage that differ from one another. The act of adjusting shots that have already been taken and turning them into something new is known as film editing.

The film editor works with raw footage, selecting shots and combining them into sequences which create a finished motion picture. Film editing is described as an art or skill, the only art that is unique to cinema, separating filmmaking from other art forms that preceded it, although there are close parallels to the editing process in other art forms such as poetry and novel writing. Film editing is an extremely important tool when attempting to intrigue a viewer. When done properly, a film's editing can captivate a viewer and fly completely under the radar. Because of this, film editing has been given the name “the invisible art.”

On its most fundamental level, film editing is the art, technique and practice of assembling shots into a coherent sequence. The job of an editor is not simply to mechanically put pieces of a film together, cut off film slates or edit dialogue scenes. A film editor must creatively work with the layers of images, story, dialogue, music, and pacing, as well as the actors' performances, to effectively "re-imagine" and even rewrite the film to craft a cohesive whole. Editors usually play a dynamic role in the making of a film. An editor must select only the highest-quality shots and remove all unnecessary frames to ensure each shot is clean. Sometimes, auteurist film directors edit their own films, for example, Akira Kurosawa, Bahram Beyzai, Steven Soderbergh, and the Coen brothers.

According to Film Art: An Introduction, by Bordwell and Thompson, there are four basic areas of film editing that the editor controls. The first dimension is the graphic relation between shot A and shot B. The shots are analyzed in terms of their graphic configurations, including light and dark, lines and shapes, volumes and depths, movement and stasis. The director makes deliberate choices regarding the composition, lighting, color, and movement within each shot, as well as the transitions between them. Editors use several techniques to establish graphic relations between shots. These include maintaining consistent overall brightness, keeping important elements in the center of the frame, playing with color differences, and creating visual matches or continuities between shots.

The second dimension is the rhythmic relationship between shot A and shot B. The duration of each shot, determined by the number of frames or length of film, contributes to the overall rhythm of the film. The filmmaker has control over the editing rhythm by adjusting the length of shots in relation to each other. Shot duration can be used to create specific effects and emphasize moments in the film. For example, a brief flash of white frames can convey a sudden impact or a violent moment. On the other hand, lengthening or adding seconds to a shot can allow for audience reaction or to accentuate an action. The length of shots can also be used to establish a rhythmic pattern, such as creating a steady beat or gradually slowing down or accelerating the tempo.
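The link between frame counts and screen time described above can be illustrated with a little arithmetic. The following is a minimal sketch rather than a method taken from Bordwell and Thompson: the frame rate of 24 frames per second and the shot lengths are assumed values chosen for the example, and `shot_durations` and `average_shot_length` are hypothetical helper names.

```python
from typing import List

FPS = 24  # assumed frame rate for this example


def shot_durations(frame_counts: List[int], fps: int = FPS) -> List[float]:
    """Convert each shot's length in frames to its duration in seconds."""
    return [frames / fps for frames in frame_counts]


def average_shot_length(frame_counts: List[int], fps: int = FPS) -> float:
    """Mean shot duration in seconds, a rough indicator of cutting pace."""
    if not frame_counts:
        raise ValueError("need at least one shot")
    return sum(frame_counts) / (len(frame_counts) * fps)


# Hypothetical accelerating sequence: each shot is half as long as the last.
accelerating = [96, 48, 24, 12]
print(shot_durations(accelerating))       # [4.0, 2.0, 1.0, 0.5]
print(average_shot_length(accelerating))  # 1.875
```

Halving each successive shot is only one way to model an accelerating tempo; a steady beat would correspond to a list of equal frame counts.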

The third dimension is the spatial relationship between shot A and shot B. Editing allows the filmmaker to construct film space and imply a relationship between different points in space. The filmmaker can juxtapose shots to establish a spatial whole or construct a whole space out of component parts. For example, the filmmaker can start with a shot that establishes a spatial whole and then follow it with a shot of a part of that space, creating an analytical breakdown.

The final dimension that an editor has control over is the temporal relation between shot A and shot B. Editing plays a crucial role in manipulating the time of action in a film. It allows filmmakers to control the order, duration, and frequency of events, thus shaping the narrative and influencing the audience's perception of time. Through editing, shots can be rearranged, flashbacks and flash-forwards can be employed, and the duration of actions can be compressed or expanded. The main point is that editing gives filmmakers the power to control and manipulate the temporal aspects of storytelling in film.

Between graphic, rhythmic, spatial, and temporal relationships between two shots, an editor has various ways to add a creative element to the film, and enhance the overall viewing experience.

With the advent of digital editing in non-linear editing systems, film editors and their assistants have become responsible for many areas of filmmaking that used to be the responsibility of others. For instance, in past years, picture editors dealt only with just that—picture. Sound, music, and (more recently) visual effects editors dealt with the practicalities of other aspects of the editing process, usually under the direction of the picture editor and director. However, digital systems have increasingly put these responsibilities on the picture editor. It is common, especially on lower budget films, for the editor to sometimes cut in temporary music, mock up visual effects and add temporary sound effects or other sound replacements. These temporary elements are usually replaced with more refined final elements produced by the sound, music and visual effects teams hired to complete the picture.[citation needed] The importance of an editor has become increasingly pivotal to the quality and success of a film due to the multiple roles that have been added to their job.

History

Early films were short films that were one long, static, and locked-down shot. Motion in the shot was all that was necessary to amuse an audience, so the first films simply showed activity such as traffic moving along a city street. There was no story and no editing. Each film ran as long as there was film in the camera.

The use of film editing to establish continuity, involving action moving from one sequence into another, is attributed to British film pioneer Robert W. Paul's Come Along, Do!, made in 1898 and one of the first films to feature more than one shot.[1] In the first shot, an elderly couple is outside an art exhibition having lunch and then follows other people inside through the door. The second shot shows what they do inside. Paul's 'Cinematograph Camera No. 1' of 1896 was the first camera to feature reverse-cranking, which allowed the same film footage to be exposed several times and thereby to create super-positions and multiple exposures. One of the first films to use this technique, Georges Méliès's The Four Troublesome Heads from 1898, was produced with Paul's camera.

The further development of action continuity in multi-shot films continued in 1899–1900 at the Brighton School in England, where it was definitively established by George Albert Smith and James Williamson. In 1900, Smith made As Seen Through a Telescope, in which the main shot shows a street scene with a young man tying the shoelace and then caressing the foot of his girlfriend, while an old man observes this through a telescope. There is then a cut to a close shot of the hands on the girl's foot shown inside a black circular mask, and then a cut back to the continuation of the original scene.

Even more remarkable was James Williamson's Attack on a China Mission Station, made around the same time in 1900. The first shot shows the gate to the mission station from the outside being attacked and broken open by Chinese Boxer rebels, then there is a cut to the garden of the mission station where a pitched battle ensues. An armed party of British sailors arrives to defeat the Boxers and rescue the missionary's family. The film used the first "reverse angle" cut in film history.

James Williamson concentrated on making films taking action from one place shown in one shot to the next shown in another shot in films like Stop Thief! and Fire!, made in 1901, and many others. He also experimented with the close-up, and made perhaps the most extreme one of all in The Big Swallow, when his character approaches the camera and appears to swallow it. These two filmmakers of the Brighton School also pioneered the editing of the film; they tinted their work with color and used trick photography to enhance the narrative. By 1900, their films were extended scenes of up to five minutes long.[2]

Other filmmakers then took up all these ideas, including the American Edwin S. Porter, who started making films for the Edison Company in 1901. Porter worked on a number of minor films before making Life of an American Fireman in 1903. The film was the first American film with a plot, featuring action, and even a closeup of a hand pulling a fire alarm. The film comprised a continuous narrative over seven scenes, rendered in a total of nine shots.[3] He put a dissolve between every shot, just as Georges Méliès was already doing, and he frequently had the same action repeated across the dissolves. His film The Great Train Robbery (1903) had a running time of twelve minutes, with twenty separate shots and ten different indoor and outdoor locations. He used the cross-cutting editing method to show simultaneous action in different places.

These early film directors discovered important aspects of motion picture language: that the screen image does not need to show a complete person from head to toe and that splicing together two shots creates in the viewer's mind a contextual relationship. These were the key discoveries that made all non-live or non live-on-videotape narrative motion pictures and television possible—that shots (in this case, whole scenes since each shot is a complete scene) can be photographed at widely different locations over a period of time (hours, days or even months) and combined into a narrative whole.[4] That is, The Great Train Robbery contains scenes shot on sets of a telegraph station, a railroad car interior, and a dance hall, with outdoor scenes at a railroad water tower, on the train itself, at a point along the track, and in the woods. But when the robbers leave the telegraph station interior (set) and emerge at the water tower, the audience believes they went immediately from one to the other. Or that when they climb on the train in one shot and enter the baggage car (a set) in the next, the audience believes they are on the same train.

Sometime around 1918, Russian director Lev Kuleshov did an experiment that demonstrated this point (see Kuleshov Experiment). He took an old film clip of a headshot of a noted Russian actor and intercut the shot with a shot of a bowl of soup, then with a child playing with a teddy bear, then with a shot of an elderly woman in a casket. When he showed the film to people they praised the actor's acting: the hunger in his face when he saw the soup, the delight in the child, and the grief when looking at the dead woman.[5] Of course, the shot of the actor was made years before the other shots and he never "saw" any of the items. The simple act of juxtaposing the shots in a sequence made the relationship.

Film editing technology

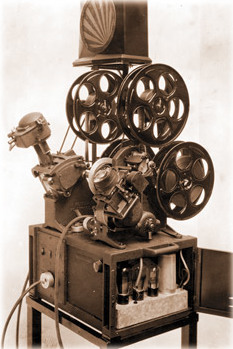

Before the widespread use of digital non-linear editing systems, the initial editing of all films was done with a positive copy of the film negative called a film workprint (cutting copy in the UK) by physically cutting and splicing together pieces of film.[6] Strips of footage would be cut by hand and attached together with tape and, later, glue. Editors had to be very precise; if they made a wrong cut or needed a fresh positive print, it cost the production money and time for the lab to reprint the footage. Additionally, each reprint put the negative at risk of damage. With the invention of the splicer and of machines that threaded the film through a viewer, such as the Moviola, or "flatbed" machines such as a K.-E.-M. or Steenbeck, the editing process sped up somewhat and cuts came out cleaner and more precise. The Moviola editing practice is non-linear, allowing the editor to make choices faster, a great advantage when editing episodic films for television, which have very short timelines to complete the work. All film studios and production companies that produced films for television provided this tool for their editors. Flatbed editing machines were used for playback and refinement of cuts, particularly in feature films and films made for television, because they were less noisy and cleaner to work with. They were used extensively for documentary and drama production within the BBC's Film Department. Operated by a team of two, an editor and an assistant editor, this tactile process required significant skill but allowed editors to work extremely efficiently.[7]

Modern film editing has evolved significantly since it was first introduced to the film and entertainment industry. New aspects of editing have been introduced, such as color grading and digital workflows. Over time, new technology has greatly enhanced the quality of pictures in films. One of the most important steps in this process was the transition from analog to digital filmmaking, which gives editors the ability to play back scenes immediately, duplicate footage, and much more. Additionally, digital technology has simplified filmmaking and reduced its cost. Digital film is not only cheaper, but lasts longer, is safer, and is overall more efficient. Color grading is a post-production process in which the editor manipulates or enhances the color of images or environments in order to create a color tone. Doing this can alter the setting, tone, and mood of entire scenes, and can enhance reactions that might otherwise seem dull or out of place. Color grading is vital to the film editing process, and is a technology that allows editors to enhance a story.

Today, most films are edited digitally (on systems such as Media Composer, Final Cut Pro X or Premiere Pro) and bypass the film positive workprint altogether. In the past, the use of a film positive (not the original negative) allowed the editor to do as much experimenting as he or she wished, without the risk of damaging the original. With digital editing, editors can experiment just as much as before except with the footage completely transferred to a computer hard drive.

When the film workprint had been cut to a satisfactory state, it was then used to make an edit decision list (EDL). The negative cutter referred to this list while processing the negative, splitting the shots into rolls, which were then contact printed to produce the final film print or answer print. Today, production companies have the option of bypassing negative cutting altogether. With the advent of digital intermediate ("DI"), the physical negative does not necessarily need to be physically cut and hot spliced together; rather the negative is optically scanned into the computer(s) and a cut list is confirmed by a DI editor.

Women in film editing

In the early years of film, editing was considered a technical job; editors were expected to "cut out the bad bits" and string the film together. Indeed, when the Motion Picture Editors Guild was formed, they chose to be "below the line", that is, not a creative guild, but a technical one. Women were not usually able to break into the "creative" positions; directors, cinematographers, producers, and executives were almost always men. Editing afforded creative women a place to assert their mark on the filmmaking process. The history of film has included many women editors such as Dede Allen, Anne Bauchens, Margaret Booth, Barbara McLean, Anne V. Coates, Adrienne Fazan, Verna Fields, Blanche Sewell and Eda Warren.[8]

Post-production

Post-production editing may be summarized by three distinct phases commonly referred to as the editor's cut, the director's cut, and the final cut.

There are several editing stages and the editor's cut is the first. An editor's cut (sometimes referred to as the "Assembly edit" or "Rough cut") is normally the first pass of what the final film will be when it reaches picture lock. The film editor usually starts working when principal photography starts. Sometimes, prior to cutting, the editor and director will have seen and discussed "dailies" (raw footage shot each day) as shooting progresses. As production schedules have shortened over the years, this co-viewing happens less often. Screening dailies gives the editor a general idea of the director's intentions. Because it is the first pass, the editor's cut might be longer than the final film. The editor continues to refine the cut while shooting continues, and often the entire editing process goes on for many months and sometimes more than a year, depending on the film. The editor's cut is an opportunity for the editor to shape the story and present their vision of how the film should unfold. It provides a solid foundation for further collaboration with the director, allowing them to assess the initial assembly and provide feedback or guidance on the creative direction.

When shooting is finished, the director can then turn his or her full attention to collaborating with the editor and further refining the cut of the film. This is the time that is set aside where the film editor's first cut is molded to fit the director's vision. In the United States, under the rules of the Directors Guild of America, directors receive a minimum of ten weeks after completion of principal photography to prepare their first cut. While collaborating on what is referred to as the "director's cut", the director and the editor go over the entire movie in great detail; scenes and shots are re-ordered, removed, shortened and otherwise tweaked. Often it is discovered that there are plot holes, missing shots or even missing segments which might require that new scenes be filmed. Because of this time working closely and collaborating – a period that is normally far longer and more intricately detailed than the entire preceding film production – many directors and editors form a unique artistic bond. The goal is to align the film with the director's artistic vision and narrative objectives. The director's cut typically involves multiple iterations and discussions until both the director and editor are satisfied with the overall direction of the film.

Often after the director has had their chance to oversee a cut, the subsequent cuts are supervised by one or more producers, who represent the production company or movie studio. There have been several conflicts in the past between the director and the studio, sometimes leading to the use of the "Alan Smithee" credit signifying when a director no longer wants to be associated with the final release. The final cut is the last stage of post-production editing and represents the definitive version of the film. It is the result of the collaborative efforts between the director, editor, and other key stakeholders. The final cut reflects the agreed-upon creative decisions and serves as the basis for distribution and exhibition.

Mise en Scene vs Editing

Mise en scene is the term used to describe all of the lighting, music, placement, costume design, and other elements of a shot. Film editing and mise en scene go hand in hand with one another. A major part of film editing is the use of filters and the adjustment of lighting in a shot. Film editing therefore contributes to the mise en scene of a given shot. When a film is shot, footage is typically captured from multiple angles. The angles from which the footage is shot are all part of the film's mise en scene.

Methods of montage

In motion picture terminology, a montage (from the French for "putting together" or "assembly") is a film editing technique.

There are at least three senses of the term:

- In French film practice, "montage" has its literal French meaning (assembly, installation) and simply identifies editing.

- In Soviet filmmaking of the 1920s, "montage" was a method of juxtaposing shots to derive new meaning that did not exist in either shot alone.

- In classical Hollywood cinema, a "montage sequence" is a short segment in a film in which narrative information is presented in a condensed fashion.

Although film director D. W. Griffith was not part of the montage school, he was one of the early proponents of the power of editing — mastering cross-cutting to show parallel action in different locations, and codifying film grammar in other ways as well. Griffith's work in the teens was highly regarded by Lev Kuleshov and other Soviet filmmakers and greatly influenced their understanding of editing.

Kuleshov was among the first to theorize about the relatively young medium of the cinema in the 1920s. For him, the unique essence of the cinema — that which could be duplicated in no other medium — is editing. He argues that editing a film is like constructing a building. Brick-by-brick (shot-by-shot) the building (film) is erected. His often-cited Kuleshov Experiment established that montage can lead the viewer to reach certain conclusions about the action in a film. Montage works because viewers infer meaning based on context. Sergei Eisenstein was briefly a student of Kuleshov's, but the two parted ways because they had different ideas of montage. Eisenstein regarded montage as a dialectical means of creating meaning. By contrasting unrelated shots he tried to provoke associations in the viewer, which were induced by shocks. But Eisenstein did not always do his own editing, and some of his most important films were edited by Esfir Tobak.[9]

A montage sequence consists of a series of short shots that are edited into a sequence to condense narrative. It is usually used to advance the story as a whole (often to suggest the passage of time), rather than to create symbolic meaning. In many cases, a song plays in the background to enhance the mood or reinforce the message being conveyed. One famous example of montage was seen in the 1968 film 2001: A Space Odyssey, depicting the beginning of man's development from apes to humans. Another example that is employed in many films is the sports montage. The sports montage shows the star athlete training over a period of time, each shot showing more improvement than the last. Classic examples include Rocky and The Karate Kid.

The word's association with Sergei Eisenstein is often condensed—too simply—into the idea of "juxtaposition" or into two words: "collision montage," whereby two adjacent shots that oppose each other on formal parameters or on the content of their images are cut against each other to create a new meaning not contained in the respective shots: Shot a + Shot b = New Meaning c.

The association of collision montage with Eisenstein is not surprising. He consistently maintained that the mind functions dialectically, in the Hegelian sense, that the contradiction between opposing ideas (thesis versus antithesis) is resolved by a higher truth, synthesis. He argued that conflict was the basis of all art, and never failed to see montage in other cultures. For example, he saw montage as a guiding principle in the construction of "Japanese hieroglyphics in which two independent ideographic characters ('shots') are juxtaposed and explode into a concept. Thus:

- Eye + Water = Crying

- Door + Ear = Eavesdropping

- Child + Mouth = Screaming

- Mouth + Dog = Barking

- Mouth + Bird = Singing."[10]

He also found montage in Japanese haiku, where short sense perceptions are juxtaposed and synthesized into a new meaning, as in this example:

- A lonely crow

- On a leafless bough

- One autumn eve.

(枯朶に烏のとまりけり秋の暮)

As Dudley Andrew notes, "The collision of attractions from line to line produces the unified psychological effect which is the hallmark of haiku and montage."[11]

Continuity editing and alternatives

Continuity editing, developed in the early 1900s, aimed to create a coherent and smooth storytelling experience in films. It relied on consistent graphic qualities, balanced composition, and controlled editing rhythms to ensure narrative continuity and engage the audience. Continuity also covers details such as whether an actor's costume remains the same from one scene to the next, or whether a glass of milk held by a character is full or empty throughout the scene. Because films are typically shot out of sequence, the script supervisor will keep a record of continuity and provide that to the film editor for reference. The editor may try to maintain continuity of elements, or may intentionally create a discontinuous sequence for stylistic or narrative effect.

The technique of continuity editing, part of the classical Hollywood style, was developed by early European and American directors, in particular D. W. Griffith in films such as The Birth of a Nation and Intolerance. The classical style embraces temporal and spatial continuity as a way of advancing the narrative, using such techniques as the 180-degree rule, the establishing shot, and shot reverse shot. The 180-degree system in film editing ensures consistency in shot composition by keeping the relative positions of characters or objects in the frame consistent. It also maintains consistent eye-lines and screen direction to avoid disorientation and confusion for the audience, allowing for clear spatial delineation and a smooth narrative experience. Often, continuity editing means finding a balance between literal continuity and perceived continuity. For instance, editors may condense action across cuts in a non-distracting way. A character walking from one place to another may "skip" a section of floor from one side of a cut to the other, but the cut is constructed to appear continuous so as not to distract the viewer.

Early Russian filmmakers such as Lev Kuleshov (already mentioned) further explored and theorized about editing and its ideological nature. Sergei Eisenstein developed a system of editing that was unconcerned with the rules of the continuity system of classical Hollywood that he called Intellectual montage.

Alternatives to traditional editing were also explored by early surrealist and Dada filmmakers such as Luis Buñuel (director of the 1929 Un Chien Andalou) and René Clair (director of 1924's Entr'acte which starred famous Dada artists Marcel Duchamp and Man Ray).

Filmmakers have explored alternatives to continuity editing, focusing on graphic and rhythmic possibilities in their films. Experimental filmmakers like Stan Brakhage and Bruce Conner have used purely graphic elements to join shots, emphasizing light, texture, and shape rather than narrative coherence. Non-narrative films have prioritized rhythmic relations among shots, even employing single-frame shots for extreme rhythmic effects. Narrative filmmakers, such as Busby Berkeley and Yasujirō Ozu, have occasionally subordinated narrative concerns to graphic or rhythmic patterns, while films influenced by music videos often feature pulsating rhythmic editing that de-emphasizes spatial and temporal dimensions.

The French New Wave filmmakers such as Jean-Luc Godard and François Truffaut and their American counterparts such as Andy Warhol and John Cassavetes also pushed the limits of editing technique during the late 1950s and throughout the 1960s. French New Wave films and the non-narrative films of the 1960s used a carefree editing style and did not conform to the traditional editing etiquette of Hollywood films. Like its Dada and surrealist predecessors, French New Wave editing often drew attention to itself by its lack of continuity, its demystifying self-reflexive nature (reminding the audience that they were watching a film), and by the overt use of jump cuts or the insertion of material not often related to any narrative. Three of the most influential editors of French New Wave films were the women who (in combination) edited 15 of Godard's films: Françoise Collin, Agnès Guillemot, and Cécile Decugis. Another notable editor is Marie-Josèphe Yoyotte, the first black woman editor in French cinema and editor of The 400 Blows.[9]

Since the late 20th century, post-classical editing has seen faster editing styles with nonlinear, discontinuous action.

Significance

Vsevolod Pudovkin noted that the editing process is the one phase of production that is truly unique to motion pictures. Every other aspect of filmmaking originated in a different medium than film (photography, art direction, writing, sound recording), but editing is the one process that is unique to film.[12] Filmmaker Stanley Kubrick was quoted as saying: "I love editing. I think I like it more than any other phase of filmmaking. If I wanted to be frivolous, I might say that everything that precedes editing is merely a way of producing a film to edit."[13] Film editing is significant because it shapes the narrative structure, visual and aesthetic impact, rhythm and pacing, emotional resonance, and overall storytelling of a film. Editors possess a unique creative power to manipulate and arrange shots, allowing them to craft a cinematic experience that engages, entertains, and emotionally connects with the audience. Film editing is a distinct art form within the filmmaking process, enabling filmmakers to realize their vision and bring stories to life on the screen.

According to writer-director Preston Sturges:

[T]here is a law of natural cutting and that this replicates what an audience in a legitimate theater does for itself. The more nearly the film cutter approaches this law of natural interest, the more invisible will be his cutting. If the camera moves from one person to another at the exact moment that one in the legitimate theatre would have turned his head, one will not be conscious of a cut. If the camera misses by a quarter of a second, one will get a jolt. There is one other requirement: the two shots must be approximate of the same tone value. If one cuts from black to white, it is jarring. At any given moment, the camera must point at the exact spot the audience wishes to look at. To find that spot is absurdly easy: one has only to remember where one was looking at the time the scene was made.[14]

Assistant editors

Assistant editors aid the editor and director in collecting and organizing all the elements needed to edit the film. The Motion Picture Editors Guild defines an assistant editor as "a person who is assigned to assist an Editor. His or her duties shall be such as are assigned and performed under the immediate direction, supervision, and responsibility of the editor."[15] When editing is finished, they oversee the various lists and instructions necessary to put the film into its final form. Editors of large budget features will usually have a team of assistants working for them. The first assistant editor is in charge of this team and may do a small bit of picture editing as well, if necessary. Assistant editors are responsible for collecting, organizing, and managing all the elements needed for the editing process. This includes footage, sound files, music tracks, visual effects assets, and other media assets. They ensure that everything is properly labeled, logged, and stored in an organized manner, making it easier for the editor to access and work with the materials efficiently. Assistant editors serve as a bridge between the editing team and other departments, facilitating communication and collaboration. They often work closely with the director, editor, visual effects artists, sound designers, and other post-production professionals, relaying information, managing deliverables, and coordinating schedules. Often assistant editors will perform temporary sound, music, and visual effects work. The other assistants will have set tasks, usually helping each other when necessary to complete the many time-sensitive tasks at hand. In addition, an apprentice editor may be on hand to help the assistants. An apprentice is usually someone who is learning the ropes of assisting.[16]

Television shows typically have one assistant per editor. This assistant is responsible for every task required to bring the show to its final form. Lower-budget features and documentaries also commonly have only one assistant. Higher-budget films and shows tend to have more than one assistant editor, and in some cases a full team of assistants.

The organizational aspects of the job can best be compared to database management. When a film is shot, every piece of picture or sound is coded with numbers and timecode. It is the assistant's job to keep track of these numbers in a database, which, in non-linear editing, is linked to the computer program.[citation needed] The editor and director cut the film using digital copies of the original film and sound, a process commonly referred to as an "offline" edit. When the cut is finished, it is the assistant's job to bring the film or television show "online". They create lists and instructions that tell the picture and sound finishers how to put the edit back together with the high-quality original elements. Assistant editing can be seen as a career path to eventually becoming an editor. Many assistants, however, choose not to pursue advancement to editor and are happy at the assistant level, working long and rewarding careers on many films and television shows.[17]
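The bookkeeping described above can be illustrated with a minimal Python sketch. It assumes a fixed 24 frames-per-second, non-drop-frame timecode, and the record layout, the clip and reel names, and the `build_conform_list` helper are all hypothetical, invented here for illustration. It is not the format of any real edit decision list or editing program. The sketch logs each clip with its reel and starting timecode, then maps the shots of an offline cut back to the timecode ranges a finisher would need on the original elements.

```python
from dataclasses import dataclass

FPS = 24  # assumed frame rate (non-drop-frame); real projects vary


def timecode_to_frames(tc: str, fps: int = FPS) -> int:
    """Convert 'HH:MM:SS:FF' to an absolute frame count."""
    hours, minutes, seconds, frames = (int(part) for part in tc.split(":"))
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def frames_to_timecode(total: int, fps: int = FPS) -> str:
    """Convert an absolute frame count back to 'HH:MM:SS:FF'."""
    seconds, frames = divmod(total, fps)
    minutes, seconds = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


@dataclass(frozen=True)
class Clip:
    """One logged piece of picture or sound (hypothetical schema)."""
    clip_id: str
    reel: str
    start_tc: str  # timecode of the clip's first frame on the original element


@dataclass(frozen=True)
class CutEvent:
    """One shot in the offline cut: which clip was used, and which part of it."""
    clip_id: str
    offset_frames: int    # frames into the clip where the shot begins
    duration_frames: int  # length of the shot in frames


def build_conform_list(clips, cut):
    """Map each event of the offline cut back to its reel and source timecode."""
    by_id = {clip.clip_id: clip for clip in clips}
    rows = []
    record_in = 0  # running position in the finished programme, in frames
    for number, event in enumerate(cut, start=1):
        clip = by_id[event.clip_id]
        src_in = timecode_to_frames(clip.start_tc) + event.offset_frames
        src_out = src_in + event.duration_frames
        record_out = record_in + event.duration_frames
        rows.append((
            number, clip.reel,
            frames_to_timecode(src_in), frames_to_timecode(src_out),
            frames_to_timecode(record_in), frames_to_timecode(record_out),
        ))
        record_in = record_out
    return rows


# Illustrative data only: two logged clips and a two-shot offline cut.
clips = [
    Clip("12A-1", "A001", "01:00:00:00"),
    Clip("12B-3", "A002", "02:10:05:12"),
]
cut = [
    CutEvent("12A-1", offset_frames=48, duration_frames=72),
    CutEvent("12B-3", offset_frames=0, duration_frames=36),
]
conform_list = build_conform_list(clips, cut)
for row in conform_list:
    print(*row)
```

Each printed row gives the event number, the reel, the source in and out timecodes, and the position of the shot in the finished programme. Keeping frame counts as integers and converting to timecode only for display avoids rounding errors when shots are added up.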

See also

- 180-degree rule

- 30-degree rule

- Footage (A Roll)

- B-roll

- Cinematic techniques

- Clapperboard

- Compositing (keying)

- Cut (transition), for the director's call Cut! or stop

- Cutaway

- The Cutting Edge: The Magic of Movie Editing

- Edit decision list (EDL)

- Film transition

- Filmmaking

- Index of articles related to motion pictures

- Kuleshov effect

- Motion Picture Editors Guild (MPEG)

- Moviola

- Negative cutting

- Outline of film

- Re-edited film

- Scene

- Sequence

- Shot

- Video editing

References

Notes

- Brooke, Michael. "Come Along, Do!". BFI Screenonline Database. Retrieved 24 April 2011.

- "The Brighton School". Archived from the original on 24 December 2013. Retrieved 17 December 2012.

- Originally in Edison Films catalog, February 1903, 2–3; reproduced in Charles Musser, Before the Nickelodeon: Edwin S. Porter and the Edison Manufacturing Company (Berkeley: University of California Press, 1991), 216–18.

- Arthur Knight (1957). p. 25.

- Arthur Knight (1957). pp. 72–73.

- "Cutting Room Practice and Procedure (BBC Film Training Text no. 58) – How television used to be made". Retrieved 8 February 2019.

- Ellis, John; Hall, Nick (2017): ADAPT. figshare. Collection. https://doi.org/10.17637/rh.c.3925603.v1

- Galvão, Sara (15 March 2015). "'A Tedious Job' – Women and Film Editing". Critics Associated. Retrieved 15 January 2018.

- "Esfir Tobak". Edited by.

- S. M. Eisenstein and Richard Taylor, Selected works, Volume 1 (Bloomington: BFI/Indiana University Press, 1988), 164.

- Dudley Andrew, The major film theories: an introduction (London: Oxford University Press, 1976), 52.

- Jacobs, Lewis (1954). Introduction. Film technique; and Film acting: the cinema writings of V.I. Pudovkin. By Pudovkin, Vsevolod Illarionovich. Vision. p. ix. OCLC 982196683. Retrieved 30 March 2019 – via Internet Archive.

- Walker, Alexander (1972). Stanley Kubrick Directs. New York: Harcourt Brace Jovanovich. p. 46. ISBN 0156848929. Retrieved 30 March 2019 – via Google Books.

- Sturges, Preston; Sturges, Sandy (adapt. & ed.) (1991), Preston Sturges on Preston Sturges, Boston: Faber & Faber, ISBN 0-571-16425-0, p. 275

- Hollyn, Norman (2009). The Film Editing Room Handbook: How to Tame the Chaos of the Editing Room. Peachpit Press. p. xv. ISBN 978-0321702937. Retrieved 29 March 2019 – via Google Books.

- Wales, Lorene (2015). Complete Guide to Film and Digital Production: The People and The Process. CRC Press. p. 209. ISBN 978-1317349310. Retrieved 29 March 2019 – via Google Books.

- Jones, Chris; Jolliffe, Genevieve (2006). The Guerilla Film Makers Handbook. A&C Black. p. 363. ISBN 082647988X. Retrieved 29 March 2019 – via Google Books.

Bibliography

- Dmytryk, Edward (1984). On Film Editing: An Introduction to the Art of Film Construction. Focal Press, Boston. ISBN 0-240-51738-5

- Eisenstein, Sergei (2010). Glenny, Michael; Taylor, Richard (eds.). Towards a Theory of Montage. Michael Glenny (translation). London: Tauris. ISBN 978-1-84885-356-0. Translation of Russian language works by Eisenstein, who died in 1948.

- Knight, Arthur (1957). The Liveliest Art. Mentor Books. New American Library. ISBN 0-02-564210-3

Further reading

- Morales Morante, Luis Fernando (2017). Editing and Montage in International Film and Video: Theory and Technique. Focal Press, Taylor & Francis. ISBN 1-138-24408-2

- Murch, Walter (2001). In the Blink of an Eye: A Perspective on Film Editing. 2nd rev. ed. Silman-James Press. ISBN 1-879505-62-2

External links

- Demonstration of Picsync machine by former BBC film editors

- Demonstration of editing 16mm film using a Steenbeck editing table

- Discussion and demonstration of a 16mm edit suite and the working environment within it