Hilbert space

In mathematics, Hilbert spaces (named after David Hilbert) allow the methods of linear algebra and calculus to be generalized from (finite-dimensional) Euclidean vector spaces to spaces that may be infinite-dimensional. Hilbert spaces arise naturally and frequently in mathematics and physics, typically as function spaces. Formally, a Hilbert space is a vector space equipped with an inner product that induces a distance function for which the space is a complete metric space.

The earliest Hilbert spaces were studied from this point of view in the first decade of the 20th century by David Hilbert, Erhard Schmidt, and Frigyes Riesz. They are indispensable tools in the theories of partial differential equations, quantum mechanics, Fourier analysis (which includes applications to signal processing and heat transfer), and ergodic theory (which forms the mathematical underpinning of thermodynamics). John von Neumann coined the term Hilbert space for the abstract concept that underlies many of these diverse applications. The success of Hilbert space methods ushered in a very fruitful era for functional analysis. Apart from the classical Euclidean vector spaces, examples of Hilbert spaces include spaces of square-integrable functions, spaces of sequences, Sobolev spaces consisting of generalized functions, and Hardy spaces of holomorphic functions.

Geometric intuition plays an important role in many aspects of Hilbert space theory. Exact analogs of the Pythagorean theorem and parallelogram law hold in a Hilbert space. At a deeper level, perpendicular projection onto a linear subspace plays a significant role in optimization problems and other aspects of the theory. An element of a Hilbert space can be uniquely specified by its coordinates with respect to an orthonormal basis, in analogy with Cartesian coordinates in classical geometry. When this basis is countably infinite, it allows identifying the Hilbert space with the space of the infinite sequences that are square-summable. The latter space is often in the older literature referred to as the Hilbert space.

Definition and illustration

Motivating example: Euclidean vector space

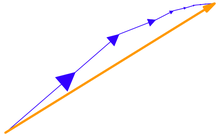

One of the most familiar examples of a Hilbert space is the Euclidean vector space consisting of three-dimensional vectors, denoted by R3, and equipped with the dot product. The dot product takes two vectors x and y, and produces a real number x ⋅ y. If x and y are represented in Cartesian coordinates, then the dot product is defined by

$$(x_1, x_2, x_3) \cdot (y_1, y_2, y_3) = x_1 y_1 + x_2 y_2 + x_3 y_3.$$

The dot product satisfies the properties[1]

- It is symmetric in x and y: x ⋅ y = y ⋅ x.

- It is linear in its first argument: (ax1 + bx2) ⋅ y = a(x1 ⋅ y) + b(x2 ⋅ y) for any scalars a, b, and vectors x1, x2, and y.

- It is positive definite: for all vectors x, x ⋅ x ≥ 0, with equality if and only if x = 0.
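
The three properties can be checked numerically on sample vectors. The following is a minimal sketch, not a proof: the vectors and scalars are arbitrary illustrative values, and the helper names `dot` and `combo` are hypothetical.

```python
import math

def dot(x, y):
    """Euclidean dot product of two vectors given as sequences of floats."""
    return sum(a * b for a, b in zip(x, y))

def combo(a, x1, b, x2):
    """Linear combination a*x1 + b*x2, computed componentwise."""
    return [a * p + b * q for p, q in zip(x1, x2)]

# Arbitrary sample vectors and scalars (illustrative values only).
x1, x2, y = [1.0, -2.0, 0.5], [3.0, 0.0, 4.0], [-1.0, 2.0, 2.0]
a, b = 2.0, -3.0

symmetric = math.isclose(dot(x1, y), dot(y, x1))
linear = math.isclose(dot(combo(a, x1, b, x2), y),
                      a * dot(x1, y) + b * dot(x2, y))
zero = [0.0, 0.0, 0.0]
positive = dot(x1, x1) > 0 and dot(zero, zero) == 0.0

print(symmetric, linear, positive)
```

A check on finitely many vectors only illustrates the properties; that they hold for all vectors follows from the defining formula.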

An operation on pairs of vectors that, like the dot product, satisfies these three properties is known as a (real) inner product. A vector space equipped with such an inner product is known as a (real) inner product space. Every finite-dimensional inner product space is also a Hilbert space.[2] The basic feature of the dot product that connects it with Euclidean geometry is that it is related to both the length (or norm) of a vector, denoted ‖x‖, and to the angle θ between two vectors x and y by means of the formula

$$x \cdot y = \|x\| \, \|y\| \cos\theta.$$

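Multivariable calculus in Euclidean space relies on the ability to compute limits, and to have useful criteria for concluding that limits exist. A mathematical series $\sum_{k=0}^\infty x_k$ consisting of vectors in R3 is absolutely convergent provided that the sum of the lengths converges as an ordinary series of real numbers:

$$\sum_{k=0}^\infty \|x_k\| < \infty.$$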

Just as with a series of scalars, a series of vectors that converges absolutely also converges to some limit vector L in the Euclidean space, in the sense that

$$\Bigl\| L - \sum_{k=0}^{N} x_k \Bigr\| \to 0 \quad \text{as } N \to \infty.$$

This property expresses the completeness of Euclidean space: that a series that converges absolutely also converges in the ordinary sense.

Hilbert spaces are often taken over the complex numbers. The complex plane denoted by C is equipped with a notion of magnitude, the complex modulus |z|, which is defined as the square root of the product of z with its complex conjugate:

$$|z|^2 = z \overline{z}.$$

If z = x + iy is a decomposition of z into its real and imaginary parts, then the modulus is the usual Euclidean two-dimensional length:

$$|z| = \sqrt{x^2 + y^2}.$$

The inner product of a pair of complex numbers z and w is the product of z with the complex conjugate of w:

$$\langle z, w \rangle = z \overline{w}.$$

This is complex-valued. The real part of ⟨z, w⟩ gives the usual two-dimensional Euclidean dot product.

A second example is the space C2 whose elements are pairs of complex numbers z = (z1, z2). Then the inner product of z with another such vector w = (w1, w2) is given by

$$\langle z, w \rangle = z_1 \overline{w_1} + z_2 \overline{w_2}.$$

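The real part of ⟨z, w⟩ is then the four-dimensional Euclidean dot product of the real and imaginary parts of z and w. This inner product is Hermitian symmetric, which means that the result of interchanging z and w is the complex conjugate:

$$\langle w, z \rangle = \overline{\langle z, w \rangle}.$$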

Definition

A Hilbert space is a real or complex inner product space that is also a complete metric space with respect to the distance function induced by the inner product.[4]

To say that a complex vector space H is a complex inner product space means that there is an inner product associating a complex number to each pair of elements of H that satisfies the following properties:

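- The inner product is conjugate symmetric; that is, the inner product of a pair of elements is equal to the complex conjugate of the inner product of the swapped elements: $\langle y, x \rangle = \overline{\langle x, y \rangle}$. Importantly, this implies that ⟨x, x⟩ is a real number.

- The inner product is linear in its first[nb 1] argument. For all complex numbers a and b, $\langle a x_1 + b x_2, y \rangle = a \langle x_1, y \rangle + b \langle x_2, y \rangle$.

- The inner product of an element with itself is positive definite: ⟨x, x⟩ > 0 if x ≠ 0, and ⟨x, x⟩ = 0 if x = 0.

It follows from the first two properties that a complex inner product is antilinear, also called conjugate linear, in its second argument, meaning that

$$\langle x, a y_1 + b y_2 \rangle = \overline{a} \langle x, y_1 \rangle + \overline{b} \langle x, y_2 \rangle.$$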

A real inner product space is defined in the same way, except that H is a real vector space and the inner product takes real values. Such an inner product will be a bilinear map and will form a dual system.[5]

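The norm is the real-valued function

$$\|x\| = \sqrt{\langle x, x \rangle},$$

and the distance d between two points x, y in H is defined in terms of the norm by

$$d(x, y) = \|x - y\| = \sqrt{\langle x - y, x - y \rangle}.$$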

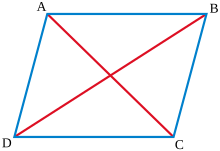

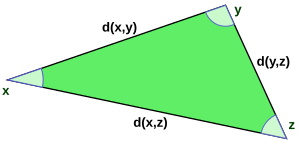

That this function is a distance function means firstly that it is symmetric in x and y, secondly that the distance between x and itself is zero, and otherwise the distance between x and y must be positive, and lastly that the triangle inequality holds, meaning that the length of one leg of a triangle xyz cannot exceed the sum of the lengths of the other two legs:

$$d(x, z) \le d(x, y) + d(y, z).$$

This last property is ultimately a consequence of the more fundamental Cauchy–Schwarz inequality, which asserts

$$|\langle x, y \rangle| \le \|x\| \, \|y\|,$$

with equality if and only if x and y are linearly dependent.

With a distance function defined in this way, any inner product space is a metric space, and sometimes is known as a Hausdorff pre-Hilbert space.[6] Any pre-Hilbert space that is additionally also a complete space is a Hilbert space.[7]
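
The relationship between the inner product, the induced norm, and the induced distance can be illustrated on C2. The sketch below assumes the standard inner product that is linear in its first argument; the function names and the sample vectors are hypothetical choices made only for illustration.

```python
import math

def inner(x, y):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def norm(x):
    """Norm induced by the inner product; <x, x> is real and non-negative."""
    return math.sqrt(inner(x, x).real)

def dist(x, y):
    """Distance induced by the norm."""
    return norm([a - b for a, b in zip(x, y)])

# Arbitrary sample vectors in C^2.
x = [1 + 2j, 3 - 1j]
y = [2 - 1j, 1j]
z = [0.5j, -1 + 0j]

hermitian = inner(y, x) == inner(x, y).conjugate()
cauchy_schwarz = abs(inner(x, y)) <= norm(x) * norm(y)
triangle = dist(x, z) <= dist(x, y) + dist(y, z)

print(inner(x, y), hermitian, cauchy_schwarz, triangle)
```

Both inequalities hold for every choice of vectors; the code only confirms them for one instance.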

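The completeness of H is expressed using a form of the Cauchy criterion for sequences in H: a pre-Hilbert space H is complete if every Cauchy sequence converges with respect to this norm to an element in the space. Completeness can be characterized by the following equivalent condition: if a series of vectors $\sum_{k=0}^\infty u_k$ converges absolutely in the sense that

$$\sum_{k=0}^\infty \|u_k\| < \infty,$$

then the series converges in H, in the sense that the partial sums converge to an element of H.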

As complete normed spaces, Hilbert spaces are by definition also Banach spaces. As such they are topological vector spaces, in which topological notions like the openness and closedness of subsets are well defined. Of special importance is the notion of a closed linear subspace of a Hilbert space that, with the inner product induced by restriction, is also complete (being a closed set in a complete metric space) and therefore a Hilbert space in its own right.

Second example: sequence spaces

The sequence space l2 consists of all infinite sequences z = (z1, z2, …) of complex numbers such that the following series converges:[9]

$$\sum_{n=1}^\infty |z_n|^2.$$

The inner product on l2 is defined by:

$$\langle z, w \rangle = \sum_{n=1}^\infty z_n \overline{w_n}.$$

This second series converges as a consequence of the Cauchy–Schwarz inequality and the convergence of the previous series.

Completeness of the space holds provided that whenever a series of elements from l2 converges absolutely (in norm), then it converges to an element of l2. The proof is basic in mathematical analysis, and permits mathematical series of elements of the space to be manipulated with the same ease as series of complex numbers (or vectors in a finite-dimensional Euclidean space).[10]
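
As an illustration, the square-summability condition and the inner product can be approximated by partial sums. This is a sketch under stated assumptions: the two sequences, the cutoff `N`, and the helper names are hypothetical, and a finite partial sum only approximates the infinite series.

```python
import math

def partial_inner(z, w, terms):
    """Partial sum of <z, w> = sum over n of z(n) * conj(w(n)), n = 1..terms."""
    return sum(z(n) * complex(w(n)).conjugate() for n in range(1, terms + 1))

def harmonic(n):
    return 1.0 / n        # sum of 1/n^2 converges (to pi^2 / 6)

def geometric(n):
    return 2.0 ** -n      # sum of 4^-n converges (to 1/3)

N = 1000                  # number of terms kept; an arbitrary cutoff
norm_z_sq = partial_inner(harmonic, harmonic, N).real
norm_w_sq = partial_inner(geometric, geometric, N).real
ip = partial_inner(harmonic, geometric, N)

print(norm_z_sq, norm_w_sq, ip)
print(abs(ip) <= math.sqrt(norm_z_sq * norm_w_sq))   # Cauchy-Schwarz bound
```

For these two sequences the inner product series has the closed form ln 2, which the partial sum approaches quickly because the geometric factor decays fast.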

History

Prior to the development of Hilbert spaces, other generalizations of Euclidean spaces were known to mathematicians and physicists. In particular, the idea of an abstract linear space (vector space) had gained some traction towards the end of the 19th century:[11] this is a space whose elements can be added together and multiplied by scalars (such as real or complex numbers) without necessarily identifying these elements with "geometric" vectors, such as position and momentum vectors in physical systems. Other objects studied by mathematicians at the turn of the 20th century, in particular spaces of sequences (including series) and spaces of functions,[12] can naturally be thought of as linear spaces. Functions, for instance, can be added together or multiplied by constant scalars, and these operations obey the algebraic laws satisfied by addition and scalar multiplication of spatial vectors.

In the first decade of the 20th century, parallel developments led to the introduction of Hilbert spaces. The first of these was the observation, which arose during David Hilbert and Erhard Schmidt's study of integral equations,[13] that two square-integrable real-valued functions f and g on an interval [a, b] have an inner product

$$\langle f, g \rangle = \int_a^b f(x) g(x)\,dx,$$

which has many of the familiar properties of the Euclidean dot product. In particular, the idea of an orthogonal family of functions has meaning. Schmidt exploited the similarity of this inner product with the usual dot product to prove an analog of the spectral decomposition for an operator of the form

$$f(x) \mapsto \int_a^b K(x, y) f(y)\,dy,$$

where K is a continuous function symmetric in x and y. The resulting eigenfunction expansion expresses the function K as a series of the form

$$K(x, y) = \sum_n \lambda_n \varphi_n(x) \varphi_n(y),$$

where the functions φn are orthogonal in the sense that ⟨φn, φm⟩ = 0 for all n ≠ m. The individual terms in this series are sometimes referred to as elementary product solutions. However, there are eigenfunction expansions that fail to converge in a suitable sense to a square-integrable function: the missing ingredient, which ensures convergence, is completeness.[14]

The second development was the Lebesgue integral, an alternative to the Riemann integral introduced by Henri Lebesgue in 1904.[15] The Lebesgue integral made it possible to integrate a much broader class of functions. In 1907, Frigyes Riesz and Ernst Sigismund Fischer independently proved that the space L2 of square Lebesgue-integrable functions is a complete metric space.[16] As a consequence of the interplay between geometry and completeness, the 19th century results of Joseph Fourier, Friedrich Bessel and Marc-Antoine Parseval on trigonometric series easily carried over to these more general spaces, resulting in a geometrical and analytical apparatus now usually known as the Riesz–Fischer theorem.[17]

Further basic results were proved in the early 20th century. For example, the Riesz representation theorem was independently established by Maurice Fréchet and Frigyes Riesz in 1907.[18] John von Neumann coined the term abstract Hilbert space in his work on unbounded Hermitian operators.[19] Although other mathematicians such as Hermann Weyl and Norbert Wiener had already studied particular Hilbert spaces in great detail, often from a physically motivated point of view, von Neumann gave the first complete and axiomatic treatment of them.[20] Von Neumann later used them in his seminal work on the foundations of quantum mechanics,[21] and in his continued work with Eugene Wigner. The name "Hilbert space" was soon adopted by others, for example by Hermann Weyl in his book on quantum mechanics and the theory of groups.[22]

The significance of the concept of a Hilbert space was underlined with the realization that it offers one of the best mathematical formulations of quantum mechanics.[23] In short, the states of a quantum mechanical system are vectors in a certain Hilbert space, the observables are Hermitian operators on that space, the symmetries of the system are unitary operators, and measurements are orthogonal projections. The relation between quantum mechanical symmetries and unitary operators provided an impetus for the development of the unitary representation theory of groups, initiated in the 1928 work of Hermann Weyl.[22] On the other hand, in the early 1930s it became clear that classical mechanics can be described in terms of Hilbert space (Koopman–von Neumann classical mechanics) and that certain properties of classical dynamical systems can be analyzed using Hilbert space techniques in the framework of ergodic theory.[24]

The algebra of observables in quantum mechanics is naturally an algebra of operators defined on a Hilbert space, according to Werner Heisenberg's matrix mechanics formulation of quantum theory.[25] Von Neumann began investigating operator algebras in the 1930s, as rings of operators on a Hilbert space. The kind of algebras studied by von Neumann and his contemporaries are now known as von Neumann algebras.[26] In the 1940s, Israel Gelfand, Mark Naimark and Irving Segal gave a definition of a kind of operator algebras called C*-algebras that on the one hand made no reference to an underlying Hilbert space, and on the other extrapolated many of the useful features of the operator algebras that had previously been studied. The spectral theorem for self-adjoint operators in particular that underlies much of the existing Hilbert space theory was generalized to C*-algebras.[27] These techniques are now basic in abstract harmonic analysis and representation theory.

Examples

Lebesgue spaces

Lebesgue spaces are function spaces associated to measure spaces (X, M, μ), where X is a set, M is a σ-algebra of subsets of X, and μ is a countably additive measure on M. Let L2(X, μ) be the space of those complex-valued measurable functions on X for which the Lebesgue integral of the square of the absolute value of the function is finite, i.e., for a function f in L2(X, μ),

$$\int_X |f|^2 \, d\mu < \infty.$$

The inner product of functions f and g in L2(X, μ) is then defined as

$$\langle f, g \rangle = \int_X f(t) \overline{g(t)} \, d\mu(t) \quad \text{or} \quad \langle f, g \rangle = \int_X \overline{f(t)} g(t) \, d\mu(t),$$

where the second form (conjugation of the first element) is commonly found in the theoretical physics literature. For f and g in L2, the integral exists because of the Cauchy–Schwarz inequality, and defines an inner product on the space. Equipped with this inner product, L2 is in fact complete.[28] The Lebesgue integral is essential to ensure completeness: on domains of real numbers, for instance, not enough functions are Riemann integrable.[29]
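
For continuous functions on [0, 1] with Lebesgue measure, the inner product can be approximated numerically. The sketch below uses a midpoint Riemann sum as a stand-in for the Lebesgue integral, which is an assumption that is only reasonable for well-behaved integrands; the function name `l2_inner` and its parameters are hypothetical.

```python
import math

def l2_inner(f, g, a=0.0, b=1.0, n=10_000):
    """Midpoint-rule approximation of the integral of f(t) * conj(g(t)) over [a, b]."""
    h = (b - a) / n
    total = 0j
    for k in range(n):
        t = a + (k + 0.5) * h
        total += f(t) * complex(g(t)).conjugate()
    return total * h

def f(t):
    return math.sin(2 * math.pi * t)

def g(t):
    return math.cos(2 * math.pi * t)

print(abs(l2_inner(f, g)))      # close to 0: f and g are orthogonal in L2([0, 1])
print(l2_inner(f, f).real)      # close to 1/2: the squared norm of f
```

The first form of the inner product (conjugation on the second argument) is used here; swapping the conjugation gives the physics convention.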

The Lebesgue spaces appear in many natural settings. The spaces L2(R) and L2([0,1]) of square-integrable functions with respect to the Lebesgue measure on the real line and unit interval, respectively, are natural domains on which to define the Fourier transform and Fourier series. In other situations, the measure may be something other than the ordinary Lebesgue measure on the real line. For instance, if w is any positive measurable function, the space of all measurable functions f on the interval [0, 1] satisfying

$$\int_0^1 |f(t)|^2 w(t)\,dt < \infty$$

is called the weighted L2 space $L_w^2([0, 1])$, and w is called the weight function. The inner product is defined by

$$\langle f, g \rangle = \int_0^1 f(t) \overline{g(t)} w(t)\,dt.$$

The weighted space $L_w^2([0, 1])$ is identical with the Hilbert space L2([0, 1], μ) where the measure μ of a Lebesgue-measurable set A is defined by

$$\mu(A) = \int_A w(t)\,dt.$$

Weighted L2 spaces like this are frequently used to study orthogonal polynomials, because different families of orthogonal polynomials are orthogonal with respect to different weighting functions.[30]
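
To see how a weight function determines a family of orthogonal polynomials, one can run the Gram–Schmidt process on the monomials 1, t, t², … with respect to a weighted inner product. The sketch below assumes the hypothetical weight w(t) = t on [0, 1], chosen only because the integrals are then exact rational numbers; the function names are illustrative.

```python
from fractions import Fraction

def weighted_inner(p, q):
    """<p, q>_w = integral over [0, 1] of p(t) q(t) t dt for real polynomials.

    Polynomials are coefficient lists [c0, c1, ...]; the integral of
    t**(i + j) * t over [0, 1] is exactly 1 / (i + j + 2).
    """
    return sum(Fraction(pi) * Fraction(qj) / (i + j + 2)
               for i, pi in enumerate(p) for j, qj in enumerate(q))

def gram_schmidt(degree):
    """Orthogonalize 1, t, ..., t**degree with respect to <., .>_w."""
    basis = []
    for d in range(degree + 1):
        p = [Fraction(0)] * d + [Fraction(1)]          # monomial t**d
        for q in basis:
            coeff = weighted_inner(p, q) / weighted_inner(q, q)
            padded = q + [Fraction(0)] * (len(p) - len(q))
            p = [pc - coeff * qc for pc, qc in zip(p, padded)]
        basis.append(p)
    return basis

polys = gram_schmidt(3)
for p in polys:
    print([str(c) for c in p])
```

Changing the weight changes the inner product and therefore the resulting polynomials, which is the sense in which each classical family belongs to its own weighted space.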

Sobolev spaces

Sobolev spaces, denoted by $H^s$ or $W^{s,2}$, are Hilbert spaces. These are a special kind of function space in which differentiation may be performed, but that (unlike other Banach spaces such as the Hölder spaces) support the structure of an inner product. Because differentiation is permitted, Sobolev spaces are a convenient setting for the theory of partial differential equations.[31] They also form the basis of the theory of direct methods in the calculus of variations.[32]

For s a non-negative integer and Ω ⊂ Rn, the Sobolev space Hs(Ω) contains L2 functions whose weak derivatives of order up to s are also L2. The inner product in Hs(Ω) is

$$\langle f, g \rangle = \int_\Omega f(x) \overline{g(x)}\,dx + \int_\Omega Df(x) \cdot D\overline{g(x)}\,dx + \cdots + \int_\Omega D^s f(x) \cdot D^s \overline{g(x)}\,dx,$$

where the dot indicates the dot product in the Euclidean space of partial derivatives of each order.

Sobolev spaces are also studied from the point of view of spectral theory, relying more specifically on the Hilbert space structure. If Ω is a suitable domain, then one can define the Sobolev space Hs(Ω) as the space of Bessel potentials;[33] roughly,

$$H^s(\Omega) = \left\{ (1 - \Delta)^{-s/2} f \;\middle|\; f \in L^2(\Omega) \right\}.$$

Here Δ is the Laplacian and $(1 - \Delta)^{-s/2}$ is understood in terms of the spectral mapping theorem. Apart from providing a workable definition of Sobolev spaces for non-integer s, this definition also has particularly desirable properties under the Fourier transform that make it ideal for the study of pseudodifferential operators. Using these methods on a compact Riemannian manifold, one can obtain for instance the Hodge decomposition, which is the basis of Hodge theory.[34]

Spaces of holomorphic functions

Hardy spaces

The Hardy spaces are function spaces, arising in complex analysis and harmonic analysis, whose elements are certain holomorphic functions in a complex domain.[35] Let U denote the unit disc in the complex plane. Then the Hardy space H2(U) is defined as the space of holomorphic functions f on U such that the means

$$M_r(f) = \frac{1}{2\pi} \int_0^{2\pi} \left| f(re^{i\theta}) \right|^2 d\theta$$

remain bounded for r < 1. The norm on this Hardy space is defined by

$$\|f\|_2 = \lim_{r \to 1} \sqrt{M_r(f)}.$$

Hardy spaces in the disc are related to Fourier series. A function f is in H2(U) if and only if

$$f(z) = \sum_{n=0}^\infty a_n z^n \quad \text{where} \quad \sum_{n=0}^\infty |a_n|^2 < \infty.$$

Thus H2(U) consists of those functions that are L2 on the circle, and whose negative frequency Fourier coefficients vanish.
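
The link between the circle means and the Taylor coefficients can be checked numerically. By Parseval's identity the mean of |f|² over the circle of radius r equals the sum of |aₙ|² r²ⁿ. The sketch below assumes the hypothetical test function f(z) = 1/(1 − z/2), whose coefficients are aₙ = 2⁻ⁿ, and approximates the mean with equally spaced samples.

```python
import cmath
import math

def circle_mean(f, r, samples=4096):
    """Approximate (1 / 2pi) * integral over [0, 2pi] of |f(r e^{i theta})|^2."""
    total = 0.0
    for k in range(samples):
        theta = 2 * math.pi * k / samples
        total += abs(f(r * cmath.exp(1j * theta))) ** 2
    return total / samples

def f(z):
    # Holomorphic on the unit disc, with Taylor coefficients a_n = 2**-n.
    return 1 / (1 - z / 2)

for r in (0.5, 0.9, 0.999):
    series = 1 / (1 - r * r / 4)      # closed form of sum |a_n|^2 r^(2n)
    print(r, circle_mean(f, r), series)
```

As r increases to 1 the means stay bounded and approach 4/3, the sum of |aₙ|², so this f belongs to the Hardy space with squared norm 4/3.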

Bergman spaces

The Bergman spaces are another family of Hilbert spaces of holomorphic functions.[36] Let D be a bounded open set in the complex plane (or a higher-dimensional complex space) and let L2, h(D) be the space of holomorphic functions f in D that are also in L2(D) in the sense that

$$\|f\|^2 = \int_D |f(z)|^2 \, d\mu(z) < \infty,$$

where the integral is taken with respect to the Lebesgue measure in D. Clearly L2, h(D) is a subspace of L2(D); in fact, it is a closed subspace, and so a Hilbert space in its own right. This is a consequence of the estimate, valid on compact subsets K of D, that

$$\sup_{z \in K} |f(z)| \le C_K \|f\|_2,$$

which in turn follows from Cauchy's integral formula.

A Bergman space is an example of a reproducing kernel Hilbert space, which is a Hilbert space of functions along with a kernel K(ζ, z) that satisfies a reproducing property. For the Bergman space, this property reads $f(z) = \int_D f(\zeta) K(\zeta, z)\,d\mu(\zeta)$ for every f in the space and every z in D, where K is the Bergman kernel of D. The Hardy space H2(D) also admits a reproducing kernel, known as the Szegő kernel.[37] Reproducing kernels are common in other areas of mathematics as well. For instance, in harmonic analysis the Poisson kernel is a reproducing kernel for the Hilbert space of square-integrable harmonic functions in the unit ball. That the latter is a Hilbert space at all is a consequence of the mean value theorem for harmonic functions.

Applications

Many of the applications of Hilbert spaces exploit the fact that Hilbert spaces support generalizations of simple geometric concepts like projection and change of basis from their usual finite dimensional setting. In particular, the spectral theory of continuous self-adjoint linear operators on a Hilbert space generalizes the usual spectral decomposition of a matrix, and this often plays a major role in applications of the theory to other areas of mathematics and physics.

Sturm–Liouville theory

In the theory of ordinary differential equations, spectral methods on a suitable Hilbert space are used to study the behavior of eigenvalues and eigenfunctions of differential equations. For example, the Sturm–Liouville problem arises in the study of the harmonics of waves in a violin string or a drum, and is a central problem in ordinary differential equations.[38] The problem is a differential equation of the form

$$-\frac{d}{dx}\left[ p(x) \frac{dy}{dx} \right] + q(x) y = \lambda w(x) y$$

for an unknown function y on an interval [a, b], where λ is the eigenvalue.

Partial differential equations

Hilbert spaces form a basic tool in the study of partial differential equations.[31] For many classes of partial differential equations, such as linear elliptic equations, it is possible to consider a generalized solution (known as a weak solution) by enlarging the class of functions. Many weak formulations involve the class of Sobolev functions, which is a Hilbert space. A suitable weak formulation reduces the analytic problem of finding a solution (or, often more importantly, of showing that a solution exists and is unique for given boundary data) to a geometrical problem. For linear elliptic equations, one geometrical result that ensures unique solvability for a large class of problems is the Lax–Milgram theorem. This strategy forms the rudiment of the Galerkin method (a finite element method) for numerical solution of partial differential equations.[40]

A typical example is the Poisson equation −Δu = g with Dirichlet boundary conditions in a bounded domain Ω in R2. The weak formulation consists of finding a function u such that, for all continuously differentiable functions v in Ω vanishing on the boundary:

$$\int_\Omega \nabla u \cdot \nabla v = \int_\Omega g v.$$

This can be recast in terms of the Hilbert space $H_0^1(\Omega)$ consisting of functions u such that u, along with its weak partial derivatives, are square integrable on Ω, and vanish on the boundary. The question then reduces to finding u in this space such that for all v in this space

$$a(u, v) = b(v),$$

where a is a continuous bilinear form, and b is a continuous linear functional, given respectively by

$$a(u, v) = \int_\Omega \nabla u \cdot \nabla v, \quad b(v) = \int_\Omega g v.$$

Since the Poisson equation is elliptic, it follows from Poincaré's inequality that the bilinear form a is coercive. The Lax–Milgram theorem then ensures the existence and uniqueness of solutions of this equation.[41]

Hilbert spaces allow for many elliptic partial differential equations to be formulated in a similar way, and the Lax–Milgram theorem is then a basic tool in their analysis. With suitable modifications, similar techniques can be applied to parabolic partial differential equations and certain hyperbolic partial differential equations.[42]
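
The Galerkin idea can be sketched in the simplest setting. The example below is a one-dimensional simplification of the Poisson problem, −u″ = g on (0, 1) with u(0) = u(1) = 0, rather than the planar domain discussed in the text. It assumes the hypothetical sine basis φₖ(x) = sin(kπx), for which the bilinear form is diagonal, and uses a midpoint rule for the right-hand side; the function name and parameters are illustrative.

```python
import math

def galerkin_poisson_1d(g, modes=50, quad_points=2000):
    """Galerkin solution of -u'' = g on (0, 1) with u(0) = u(1) = 0.

    Trial and test functions are phi_k(x) = sin(k*pi*x), k = 1..modes.
    For this basis a(phi_j, phi_k) = integral of phi_j' phi_k' is diagonal,
    equal to (k*pi)**2 / 2 when j == k, so the linear system decouples.
    """
    h = 1.0 / quad_points
    nodes = [(i + 0.5) * h for i in range(quad_points)]
    coeffs = []
    for k in range(1, modes + 1):
        # b(phi_k) = integral of g * phi_k, approximated by the midpoint rule
        b_k = h * sum(g(x) * math.sin(k * math.pi * x) for x in nodes)
        coeffs.append(b_k / ((k * math.pi) ** 2 / 2))

    def u(x):
        return sum(c * math.sin((k + 1) * math.pi * x)
                   for k, c in enumerate(coeffs))
    return u

u = galerkin_poisson_1d(lambda x: 1.0)
# For g = 1 the exact solution is x * (1 - x) / 2, which equals 0.125 at x = 0.5.
print(u(0.5))
```

With a general basis, such as finite element hat functions, the matrix of a is no longer diagonal and a linear system must be solved; the diagonal case is used here only to keep the sketch short.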

Ergodic theory

The field of ergodic theory is the study of the long-term behavior of chaotic dynamical systems. The prototypical case of a field that ergodic theory applies to is thermodynamics, in which, although the microscopic state of a system is extremely complicated (it is impossible to understand the ensemble of individual collisions between particles of matter), the average behavior over sufficiently long time intervals is tractable. The laws of thermodynamics are assertions about such average behavior. In particular, one formulation of the zeroth law of thermodynamics asserts that over sufficiently long timescales, the only functionally independent measurement that one can make of a thermodynamic system in equilibrium is its total energy, in the form of temperature.[43]

An ergodic dynamical system is one for which, apart from the energy (measured by the Hamiltonian), there are no other functionally independent conserved quantities on the phase space. More explicitly, suppose that the energy E is fixed, and let ΩE be the subset of the phase space consisting of all states of energy E (an energy surface), and let Tt denote the evolution operator on the phase space. The dynamical system is ergodic if every invariant measurable function on ΩE is constant almost everywhere.[44] An invariant function f is one for which

$$f(T_t w) = f(w)$$

for all w on ΩE and all time t.

The von Neumann mean ergodic theorem[24] states the following:

- If Ut is a (strongly continuous) one-parameter semigroup of unitary operators on a Hilbert space H, and P is the orthogonal projection onto the space of common fixed points of Ut, {x ∈H | Utx = x, ∀t > 0}, then

  $$Px = \lim_{T \to \infty} \frac{1}{T} \int_0^T U_t x \, dt.$$

For an ergodic system, the fixed set of the time evolution consists only of the constant functions, so the ergodic theorem implies the following:[45] for any function f ∈ L2(ΩE, μ),

$$\lim_{T \to \infty} \frac{1}{T} \int_0^T f(T_t w)\,dt = \int_{\Omega_E} f(y)\,d\mu(y),$$

where the limit is taken in the L2 sense and μ is normalized to have total mass 1.

That is, the long time average of an observable f is equal to its expectation value over an energy surface.
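
The equality of time and space averages can be illustrated with a discrete-time analogue, which is a substitution for the continuous-time setting of the theorem. The sketch assumes the rotation x ↦ x + α (mod 1) of the unit interval by an irrational α, a standard example of an ergodic system for Lebesgue measure; the observable, the starting point, and the function names are hypothetical choices.

```python
import math

def time_average(f, x0, alpha, steps):
    """Average of f along the orbit x0, x0 + alpha, x0 + 2*alpha, ... (mod 1)."""
    total = 0.0
    x = x0
    for _ in range(steps):
        total += f(x)
        x = (x + alpha) % 1.0
    return total / steps

def observable(x):
    return math.cos(2 * math.pi * x) ** 2   # space average over [0, 1) is 1/2

alpha = math.sqrt(2) - 1                     # irrational rotation angle
avg = time_average(observable, x0=0.1, alpha=alpha, steps=100_000)
print(avg)
```

The mean ergodic theorem asserts convergence in the L2 sense; for a continuous observable and an irrational rotation the averages also converge for every starting point, which is why a single orbit suffices in this sketch.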

Fourier analysis

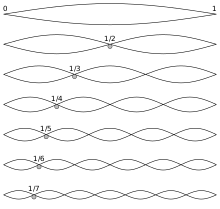

One of the basic goals of Fourier analysis is to decompose a function into a (possibly infinite) linear combination of given basis functions: the associated Fourier series. The classical Fourier series associated to a function f defined on the interval [0, 1] is a series of the form

$$\sum_{n=-\infty}^{\infty} a_n e^{2\pi i n \theta} \quad \text{where} \quad a_n = \int_0^1 f(\theta) e^{-2\pi i n \theta}\,d\theta.$$

The example of adding up the first few terms in a Fourier series for a sawtooth function is shown in the figure. The basis functions are sine waves with wavelengths λ/n (for integer n) shorter than the wavelength λ of the sawtooth itself (except for n = 1, the fundamental wave).

A significant problem in classical Fourier series asks in what sense the Fourier series converges, if at all, to the function f. Hilbert space methods provide one possible answer to this question.[46] The functions $e_n(\theta) = e^{2\pi i n \theta}$ form an orthonormal basis of the Hilbert space L2([0, 1]). Consequently, any square-integrable function can be expressed as a series

$$f(\theta) = \sum_n a_n e_n(\theta), \quad a_n = \langle f, e_n \rangle,$$

and, moreover, this series converges in the Hilbert space sense (that is, in the L2 mean).

The problem can also be studied from the abstract point of view: every Hilbert space has an orthonormal basis, and every element of the Hilbert space can be written in a unique way as a sum of multiples of these basis elements. The coefficients appearing on these basis elements are sometimes known abstractly as the Fourier coefficients of the element of the space.[47] The abstraction is especially useful when it is more natural to use different basis functions for a space such as L2([0, 1]). In many circumstances, it is desirable not to decompose a function into trigonometric functions, but rather into orthogonal polynomials or wavelets for instance,[48] and in higher dimensions into spherical harmonics.[49]

For instance, if en are any orthonormal basis functions of L2[0, 1], then a given function in L2[0, 1] can be approximated as a finite linear combination[50]

$$f(x) \approx f_n(x) = a_1 e_1(x) + a_2 e_2(x) + \cdots + a_n e_n(x).$$

The coefficients {aj} are selected to make the magnitude of the difference ‖f − fn‖2 as small as possible. Geometrically, the best approximation is the orthogonal projection of f onto the subspace consisting of all linear combinations of the {ej}, and can be calculated by[51]

$$a_j = \int_0^1 \overline{e_j(x)} f(x)\,dx.$$

That this formula minimizes the difference ‖f − fn‖2 is a consequence of Bessel's inequality and Parseval's formula.
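
The projection picture can be made concrete for the trigonometric basis. Because the best approximation is an orthogonal projection, the squared error equals the squared norm of f minus the sum of the squared moduli of the retained coefficients. The sketch below assumes the hypothetical test function f(t) = t on [0, 1) (one period of a sawtooth) and approximates the coefficient integrals with a midpoint rule; the function names are illustrative.

```python
import cmath
import math

def fourier_coefficient(f, n, samples=4096):
    """Midpoint-rule approximation of the integral of f(t) e^{-2 pi i n t} over [0, 1]."""
    h = 1.0 / samples
    return h * sum(f((k + 0.5) * h) * cmath.exp(-2j * math.pi * n * (k + 0.5) * h)
                   for k in range(samples))

def squared_error(f, norm_sq, N):
    """||f - f_N||^2 = ||f||^2 - sum over |n| <= N of |a_n|^2 for the projection f_N."""
    return norm_sq - sum(abs(fourier_coefficient(f, n)) ** 2
                         for n in range(-N, N + 1))

def sawtooth(t):
    return t                      # one period of a sawtooth on [0, 1)

norm_sq = 1.0 / 3.0               # integral of t^2 over [0, 1]
errors = [squared_error(sawtooth, norm_sq, N) for N in (1, 4, 16)]
print(errors)
```

The errors decrease toward zero as more basis functions are retained, which is the L2 convergence of the Fourier series; Bessel's inequality guarantees that they never become negative.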

In various applications to physical problems, a function can be decomposed into physically meaningful eigenfunctions of a differential operator (typically the Laplace operator): this forms the foundation for the spectral study of functions, in reference to the spectrum of the differential operator.[52] A concrete physical application involves the problem of hearing the shape of a drum: given the fundamental modes of vibration that a drumhead is capable of producing, can one infer the shape of the drum itself?[53] The mathematical formulation of this question involves the Dirichlet eigenvalues of the Laplace equation in the plane, that represent the fundamental modes of vibration in direct analogy with the integers that represent the fundamental modes of vibration of the violin string.

Spectral theory also underlies certain aspects of the Fourier transform of a function. Whereas Fourier analysis decomposes a function defined on a compact set into the discrete spectrum of the Laplacian (which corresponds to the vibrations of a violin string or drum), the Fourier transform of a function is the decomposition of a function defined on all of Euclidean space into its components in the continuous spectrum of the Laplacian. The Fourier transformation is also geometrical, in a sense made precise by the Plancherel theorem, that asserts that it is an isometry of one Hilbert space (the "time domain") with another (the "frequency domain"). This isometry property of the Fourier transformation is a recurring theme in abstract harmonic analysis (since it reflects the conservation of energy for the continuous Fourier Transform), as evidenced for instance by the Plancherel theorem for spherical functions occurring in noncommutative harmonic analysis.

Quantum mechanics

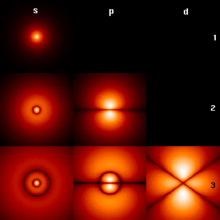

In the mathematically rigorous formulation of quantum mechanics, developed by John von Neumann,[54] the possible states (more precisely, the pure states) of a quantum mechanical system are represented by unit vectors (called state vectors) residing in a complex separable Hilbert space, known as the state space, well defined up to a complex number of norm 1 (the phase factor). In other words, the possible states are points in the projectivization of a Hilbert space, usually called the complex projective space. The exact nature of this Hilbert space is dependent on the system; for example, the space of position and momentum states for a single non-relativistic spin zero particle is the space of all square-integrable functions, while the states for the spin of a single proton are unit elements of the two-dimensional complex Hilbert space of spinors. Each observable is represented by a self-adjoint linear operator acting on the state space. Each eigenstate of an observable corresponds to an eigenvector of the operator, and the associated eigenvalue corresponds to the value of the observable in that eigenstate.[55]

The inner product between two state vectors is a complex number known as a probability amplitude. During an ideal measurement of a quantum mechanical system, the probability that a system collapses from a given initial state to a particular eigenstate is given by the square of the absolute value of the probability amplitudes between the initial and final states.[56] The possible results of a measurement are the eigenvalues of the operator—which explains the choice of self-adjoint operators, for all the eigenvalues must be real. The probability distribution of an observable in a given state can be found by computing the spectral decomposition of the corresponding operator.[57]
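
The rule for measurement probabilities can be sketched for a two-dimensional state space. The example assumes a hypothetical spin-like system whose observable is the Pauli matrix σₓ = [[0, 1], [1, 0]], with eigenvalues +1 and −1 and eigenvectors (1, 1)/√2 and (1, −1)/√2; the state vector and the function names are illustrative choices.

```python
import math

def inner(x, y):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def born_probability(state, eigenstate):
    """|<state, eigenstate>|^2 for unit vectors: the measurement probability."""
    return abs(inner(state, eigenstate)) ** 2

theta = math.pi / 3
# A unit state vector in C^2 (hypothetical choice).
psi = [complex(math.cos(theta / 2)), complex(math.sin(theta / 2))]

s = 1 / math.sqrt(2)
plus = [s + 0j, s + 0j]      # eigenvector of sigma_x with eigenvalue +1
minus = [s + 0j, -s + 0j]    # eigenvector of sigma_x with eigenvalue -1

p_plus = born_probability(psi, plus)
p_minus = born_probability(psi, minus)
expectation = (+1) * p_plus + (-1) * p_minus

print(p_plus, p_minus, expectation)
```

The two probabilities sum to one because the eigenvectors form an orthonormal basis, and for this state the expectation value works out to sin θ.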

For a general system, states are typically not pure, but instead are represented as statistical mixtures of pure states, or mixed states, given by density matrices: positive semidefinite self-adjoint operators of trace one on a Hilbert space.[58] Moreover, for general quantum mechanical systems, the effects of a single measurement can influence other parts of a system in a manner that is described instead by a positive operator valued measure. Thus the structure both of the states and observables in the general theory is considerably more complicated than the idealization for pure states.[59]

Probability theory

In probability theory, Hilbert spaces also have diverse applications. Here a fundamental Hilbert space is the space of random variables on a given probability space, having class L2 (finite first and second moments). A common operation in statistics is that of centering a random variable by subtracting its expectation. Thus if X is a random variable, then X − E(X) is its centering. In the Hilbert space view, this is the orthogonal projection of X onto the kernel of the expectation operator, which is a continuous linear functional on the Hilbert space (in fact, the inner product with the constant random variable 1), and so this kernel is a closed subspace.

The conditional expectation has a natural interpretation in the Hilbert space.[60] Suppose that a probability space $(\Omega, P, \mathcal{B})$ is given, where $\mathcal{B}$ is a sigma algebra on the set Ω, and P is a probability measure on the measurable space $(\Omega, \mathcal{B})$. If $\mathcal{F} \le \mathcal{B}$ is a sigma subalgebra of $\mathcal{B}$, then the conditional expectation $E(X \mid \mathcal{F})$ is the orthogonal projection of X onto the subspace of L2(Ω, P) consisting of the $\mathcal{F}$-measurable functions. If the random variable X in L2(Ω, P) is independent of the sigma algebra $\mathcal{F}$, then the conditional expectation satisfies $E(X \mid \mathcal{F}) = E(X)$, i.e., its projection onto the $\mathcal{F}$-measurable functions is constant. Equivalently, the projection of its centering is zero.
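
On a finite probability space the projection interpretation can be verified exactly. The sketch below assumes a hypothetical sample space of two fair dice and conditions on the first die; exact rational arithmetic avoids rounding, and all names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Finite probability space: two fair dice, each outcome has probability 1/36.
omega = list(product(range(1, 7), repeat=2))
prob = Fraction(1, 36)

def expect(U):
    return sum(U(w) * prob for w in omega)

def inner(U, V):
    """L2 inner product <U, V> = E[U V] for real random variables."""
    return expect(lambda w: U(w) * V(w))

def cond_exp_given_first(U):
    """E[U | first die]: average U over the outcomes sharing the same first die."""
    def projected(w):
        block = [v for v in omega if v[0] == w[0]]
        return sum(Fraction(U(v)) for v in block) / len(block)
    return projected

S = lambda w: w[0] + w[1]              # sum of the two dice
Y = lambda w: w[1]                     # second die, independent of the first
PS = cond_exp_given_first(S)
residual = lambda w: S(w) - PS(w)
# Indicators of {first die = k} span the functions measurable w.r.t. the first die.
indicators = [lambda w, k=k: int(w[0] == k) for k in range(1, 7)]

print([inner(residual, ind) for ind in indicators])   # all zero: residual is orthogonal
print(cond_exp_given_first(Y)((3, 6)))                # constant, equal to E[Y]
```

The residual S − E(S | first die) is orthogonal to every function of the first die, which characterizes the conditional expectation as an orthogonal projection; the independent variable Y projects to a constant.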

In particular, if two random variables X and Y (in L2) are independent, then the centered random variables X − E(X) and Y − E(Y) are orthogonal. (This means that the two variables have zero covariance: they are uncorrelated.) In that case, the Pythagorean theorem in the kernel of the expectation operator implies that the variances of X and Y satisfy the identity:

$$\operatorname{Var}(X + Y) = \operatorname{Var}(X) + \operatorname{Var}(Y).$$

The theory of martingales can be formulated in Hilbert spaces. A martingale in a Hilbert space is a sequence $x_1, x_2, \ldots$ of elements of a Hilbert space such that, for each n, $x_n$ is the orthogonal projection of $x_{n+1}$ onto the linear hull of $\{x_1, x_2, \ldots, x_n\}$.[62] If the $x_n$ are random variables, this reproduces the usual definition of a (discrete) martingale: the expectation of $x_{n+1}$, conditioned on $x_1, \ldots, x_n$, is equal to $x_n$.

Hilbert spaces are also used throughout the foundations of the Itô calculus.[63] To any square-integrable martingale, it is possible to associate a Hilbert norm on the space of equivalence classes of progressively measurable processes with respect to the martingale (using the quadratic variation of the martingale as the measure). The Itô integral can be constructed by first defining it for simple processes, and then exploiting their density in the Hilbert space. A noteworthy result is then the Itô isometry, which states that for any martingale M having quadratic variation measure $d\langle M\rangle_t$, and any progressively measurable process H:

$$\operatorname{E}\left[\left(\int_0^t H_s\,dM_s\right)^2\right] = \operatorname{E}\left[\int_0^t H_s^2\,d\langle M\rangle_s\right]$$

whenever the expectation on the right-hand side is finite.
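A discrete-time analogue of the Itô isometry can be verified by exhaustive enumeration. The sketch below is an illustration under stated assumptions, not the continuous-time construction: M is taken to be a simple symmetric random walk (so its quadratic variation increases by 1 per step), and the integrand H_k = M_{k−1} + 1 is a hypothetical predictable process chosen only for the example.

```python
from fractions import Fraction
from itertools import product

def discrete_ito_isometry(n_steps):
    """Return (E[(sum_k H_k dM_k)^2], E[sum_k H_k^2]) for a simple random walk M.

    All 2**n_steps equally likely paths are enumerated, so both
    expectations are exact rational numbers.
    """
    lhs = Fraction(0)
    rhs = Fraction(0)
    weight = Fraction(1, 2 ** n_steps)
    for steps in product((-1, 1), repeat=n_steps):
        m = 0          # value M_{k-1} before the current step
        integral = 0   # discrete stochastic integral sum_k H_k (M_k - M_{k-1})
        energy = 0     # sum_k H_k^2 times the quadratic variation increment 1
        for dm in steps:
            h = m + 1  # hypothetical predictable integrand H_k = M_{k-1} + 1
            integral += h * dm
            energy += h * h
            m += dm
        lhs += weight * integral ** 2
        rhs += weight * energy
    return lhs, rhs

lhs, rhs = discrete_ito_isometry(6)
```

The two returned values agree because the cross terms E[H_j H_k dM_j dM_k] with j < k vanish: the last increment has mean zero given the past.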

A deeper application of Hilbert spaces that is especially important in the theory of Gaussian processes is an attempt, due to Leonard Gross and others, to make sense of certain formal integrals over infinite-dimensional spaces like the Feynman path integral from quantum field theory. The problem with integrals like this is that there is no infinite-dimensional Lebesgue measure. The notion of an abstract Wiener space allows one to construct a measure on a Banach space B that contains a Hilbert space H, called the Cameron–Martin space, as a dense subset, out of a finitely additive cylinder set measure on H. The resulting measure on B is countably additive and quasi-invariant under translation by elements of H, and this provides a mathematically rigorous way of thinking of the Wiener measure as a Gaussian measure on the Sobolev space $H^1([0, \infty))$.[64]

Color perception

Any true physical color can be represented by a combination of pure spectral colors. As physical colors can be composed of any number of spectral colors, the space of physical colors may aptly be represented by a Hilbert space over spectral colors. Humans have three types of cone cells for color perception, so the perceivable colors can be represented by 3-dimensional Euclidean space. The many-to-one linear mapping from the Hilbert space of physical colors to the Euclidean space of human perceivable colors explains why many distinct physical colors may be perceived by humans to be identical (e.g., pure yellow light versus a mix of red and green light, see metamerism).[65][66]

Properties

Pythagorean identity

Two vectors u and v in a Hilbert space H are orthogonal when ⟨u, v⟩ = 0. The notation for this is u ⊥ v. More generally, when S is a subset in H, the notation u ⊥ S means that u is orthogonal to every element from S.

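When u and v are orthogonal, one has

$$\|u + v\|^2 = \langle u + v, u + v \rangle = \langle u, u \rangle + 2\operatorname{Re}\langle u, v \rangle + \langle v, v \rangle = \|u\|^2 + \|v\|^2.$$

By induction on n, this is extended to any family u1, ..., un of n orthogonal vectors,

$$\|u_1 + \cdots + u_n\|^2 = \|u_1\|^2 + \cdots + \|u_n\|^2.$$

Whereas the Pythagorean identity as stated is valid in any inner product space, completeness is required for the extension of the Pythagorean identity to series.[67] A series Σuk of orthogonal vectors converges in H if and only if the series of squares of norms converges, and

$$\Bigl\|\sum_{k} u_k\Bigr\|^2 = \sum_{k} \|u_k\|^2.$$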

Parallelogram identity and polarization

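By definition, every Hilbert space is also a Banach space. Furthermore, in every Hilbert space the following parallelogram identity holds:[68]

$$\|u + v\|^2 + \|u - v\|^2 = 2\bigl(\|u\|^2 + \|v\|^2\bigr).$$

Conversely, every Banach space in which the parallelogram identity holds is a Hilbert space, and the inner product is uniquely determined by the norm by the polarization identity.[69] For real Hilbert spaces, the polarization identity is

$$\langle u, v\rangle = \tfrac{1}{4}\bigl(\|u + v\|^2 - \|u - v\|^2\bigr).$$

For complex Hilbert spaces, it is

$$\langle u, v\rangle = \tfrac{1}{4}\bigl(\|u + v\|^2 - \|u - v\|^2 + i\|u + iv\|^2 - i\|u - iv\|^2\bigr).$$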

The parallelogram law implies that any Hilbert space is a uniformly convex Banach space.[70]
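Both identities are easy to test numerically in the finite-dimensional Hilbert space C^3. The following is a minimal sketch; it assumes the inner product is linear in its first argument, and the helper names and sample vectors are hypothetical.

```python
def inner(u, v):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(u, v))

def norm_sq(u):
    """Squared norm ||u||^2 = <u, u>, which is real."""
    return inner(u, u).real

def add(u, v, c=1):
    """Return the vector u + c*v."""
    return [a + c * b for a, b in zip(u, v)]

def polarization(u, v):
    """Recover <u, v> from norms alone via the complex polarization identity."""
    return (norm_sq(add(u, v)) - norm_sq(add(u, v, -1))
            + 1j * norm_sq(add(u, v, 1j))
            - 1j * norm_sq(add(u, v, -1j))) / 4

u = [1 + 2j, -3j, 2 + 0j]
v = [0.5 - 1j, 4 + 0j, 1 + 1j]

parallelogram_gap = (norm_sq(add(u, v)) + norm_sq(add(u, v, -1))
                     - 2 * (norm_sq(u) + norm_sq(v)))
polarization_gap = abs(polarization(u, v) - inner(u, v))
```

Both `parallelogram_gap` and `polarization_gap` should be zero up to floating-point rounding.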

Best approximation

This subsection employs the Hilbert projection theorem. If C is a non-empty closed convex subset of a Hilbert space H and x a point in H, there exists a unique point y ∈ C that minimizes the distance between x and points in C,[71]

$$y \in C, \quad \|x - y\| = \operatorname{dist}(x, C) = \min\{\|x - z\| : z \in C\}.$$

This is equivalent to saying that there is a point with minimal norm in the translated convex set D = C − x. The proof consists in showing that every minimizing sequence (dn) ⊂ D is Cauchy (using the parallelogram identity) hence converges (using completeness) to a point in D that has minimal norm. More generally, this holds in any uniformly convex Banach space.[72]

When this result is applied to a closed subspace F of H, it can be shown that the point y ∈ F closest to x is characterized by[73]

$$y \in F, \quad x - y \perp F.$$

This point y is the orthogonal projection of x onto F, and the mapping PF : x ↦ y is linear (see Orthogonal complements and projections). This result is particularly significant in applied mathematics, especially numerical analysis, where it forms the basis of least squares methods.[74]

In particular, when F is not equal to H, one can find a nonzero vector v orthogonal to F (select x ∉ F and v = x − y). A very useful criterion is obtained by applying this observation to the closed subspace F generated by a subset S of H.

- A subset S of H spans a dense vector subspace if (and only if) the vector 0 is the sole vector v ∈ H orthogonal to S.
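The link between orthogonal projection and least squares can be illustrated in R^4. The sketch below assumes a hypothetical data vector x and projects it onto the subspace F spanned by a constant vector and a linear trend, using Gram–Schmidt orthogonalization with exact fractions; the projection is then the least-squares line fit, and the residual x − y is orthogonal to F.

```python
from fractions import Fraction

def dot(u, v):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def project(x, spanning):
    """Orthogonal projection of x onto span(spanning) via Gram-Schmidt."""
    x = [Fraction(a) for a in x]
    basis = []
    for f in spanning:
        f = [Fraction(a) for a in f]
        # Remove the components of f along the orthogonal vectors found so far.
        for e in basis:
            c = dot(f, e) / dot(e, e)
            f = [a - c * b for a, b in zip(f, e)]
        if any(f):  # skip vectors that are linearly dependent on earlier ones
            basis.append(f)
    y = [Fraction(0)] * len(x)
    for e in basis:
        c = dot(x, e) / dot(e, e)
        y = [a + c * b for a, b in zip(y, e)]
    return y

ones = [1, 1, 1, 1]
t = [0, 1, 2, 3]
x = [1, 3, 2, 5]               # hypothetical observations at times t
y = project(x, [ones, t])      # fitted values of the least-squares line
residual = [a - b for a, b in zip(x, y)]
```

Here the fitted values are 11/10 + (11/10)·t, and the residual has zero inner product with both spanning vectors, which is exactly the characterization x − y ⊥ F.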

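Duality

The dual space H* is the space of all continuous linear functions from the space H into the base field. It carries a natural norm, defined by

$$\|\varphi\| = \sup_{\|x\| = 1,\, x \in H} |\varphi(x)|.$$

The Riesz representation theorem affords a convenient description of the dual space. To every element u of H, there is a unique element φu of H*, defined by

$$\varphi_u(x) = \langle x, u\rangle,$$

where moreover ‖φu‖ = ‖u‖.

The Riesz representation theorem states that the map from H to H* defined by u ↦ φu is surjective, which makes this map an isometric antilinear isomorphism.[75] So to every element φ of the dual H* there exists one and only one uφ in H such that

$$\langle x, u_\varphi\rangle = \varphi(x)$$

for all x ∈ H. The inner product on the dual space H* satisfies

$$\langle \varphi, \psi\rangle = \langle u_\psi, u_\varphi\rangle.$$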

The reversal of order on the right-hand side restores linearity in φ from the antilinearity of uφ. In the real case, the antilinear isomorphism from H to its dual is actually an isomorphism, and so real Hilbert spaces are naturally isomorphic to their own duals.

The representing vector uφ is obtained in the following way. When φ ≠ 0, the kernel F = Ker(φ) is a closed vector subspace of H, not equal to H, hence there exists a nonzero vector v orthogonal to F. The vector u is a suitable scalar multiple λv of v. The requirement that φ(v) = ⟨v, u⟩ yields

$$u = \langle v, v\rangle^{-1}\,\overline{\varphi(v)}\,v.$$

This correspondence φ ↔ u is exploited by the bra–ket notation popular in physics.[76] It is common in physics to assume that the inner product, denoted by ⟨x|y⟩, is linear on the right,

$$\langle x | y\rangle = \langle y, x\rangle.$$

The result ⟨x|y⟩ can be seen as the action of the linear functional ⟨x| (the bra) on the vector |y⟩ (the ket).

The Riesz representation theorem relies fundamentally not just on the presence of an inner product, but also on the completeness of the space. In fact, the theorem implies that the topological dual of any inner product space can be identified with its completion.[77] An immediate consequence of the Riesz representation theorem is also that a Hilbert space H is reflexive, meaning that the natural map from H into its double dual space is an isomorphism.
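In finite dimensions the construction of the representing vector can be carried out explicitly. The following is a minimal sketch in C^3, assuming a hypothetical functional `phi` given by fixed coefficients and an inner product that is linear in the first argument; `riesz_vector` is an illustrative name for the rescaling of a vector v orthogonal to Ker(φ).

```python
def inner(x, y):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

# Hypothetical continuous linear functional phi(x) = sum_k c_k x_k on C^3.
coeffs = [2 - 1j, 3j, -1 + 0j]

def phi(x):
    return sum(c * a for c, a in zip(coeffs, x))

def riesz_vector(phi, v):
    """Given a nonzero v orthogonal to Ker(phi), return
    u = conj(phi(v)) / <v, v> * v, so that phi(x) = <x, u>."""
    scale = phi(v).conjugate() / inner(v, v)
    return [scale * a for a in v]

# Any nonzero multiple of the conjugated coefficients is orthogonal to Ker(phi).
v = [2j * c.conjugate() for c in coeffs]
u = riesz_vector(phi, v)

x = [1 + 1j, -2 + 0j, 0.5j]          # arbitrary test vector
representation_gap = abs(inner(x, u) - phi(x))
```

The resulting u is the entrywise conjugate of `coeffs`, whatever nonzero multiple is chosen for v.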

Weakly convergent sequences

In a Hilbert space H, a sequence {xn} is weakly convergent to a vector x ∈ H when

$$\lim_{n\to\infty} \langle x_n, v\rangle = \langle x, v\rangle$$

for every v ∈ H.

For example, any orthonormal sequence {fn} converges weakly to 0, as a consequence of Bessel's inequality. Every weakly convergent sequence {xn} is bounded, by the uniform boundedness principle.
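The orthonormal-sequence example can be spelled out in one line. The worked step below is a sketch of the standard argument: Bessel's inequality forces the coefficients to tend to zero, while the norms stay equal to 1, so the convergence is weak but not in norm.

```latex
\sum_{n=1}^{\infty} |\langle v, f_n\rangle|^2 \le \|v\|^2 < \infty
\;\Longrightarrow\;
\langle f_n, v\rangle \to 0 \quad (n \to \infty) \text{ for every } v \in H,
\qquad \text{while} \qquad \|f_n - 0\| = 1 \text{ for all } n.
```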

Conversely, every bounded sequence in a Hilbert space admits weakly convergent subsequences (Alaoglu's theorem).[78] This fact may be used to prove minimization results for continuous convex functionals, in the same way that the Bolzano–Weierstrass theorem is used for continuous functions on Rd. Among several variants, one simple statement is as follows:[79]

- If f : H → R is a convex continuous function such that f(x) tends to +∞ when ‖x‖ tends to ∞, then f admits a minimum at some point x0 ∈ H.

This fact (and its various generalizations) is fundamental for direct methods in the calculus of variations. Minimization results for convex functionals are also a direct consequence of the slightly more abstract fact that closed bounded convex subsets in a Hilbert space H are weakly compact, since H is reflexive. The existence of weakly convergent subsequences is a special case of the Eberlein–Šmulian theorem.

Banach space properties

Any general property of Banach spaces continues to hold for Hilbert spaces. The open mapping theorem states that a continuous surjective linear transformation from one Banach space to another is an open mapping meaning that it sends open sets to open sets. A corollary is the bounded inverse theorem, that a continuous and bijective linear function from one Banach space to another is an isomorphism (that is, a continuous linear map whose inverse is also continuous). This theorem is considerably simpler to prove in the case of Hilbert spaces than in general Banach spaces.[80] The open mapping theorem is equivalent to the closed graph theorem, which asserts that a linear function from one Banach space to another is continuous if and only if its graph is a closed set.[81] In the case of Hilbert spaces, this is basic in the study of unbounded operators (see closed operator).

The (geometrical) Hahn–Banach theorem asserts that a closed convex set can be separated from any point outside it by means of a hyperplane of the Hilbert space. This is an immediate consequence of the best approximation property: if y is the element of a closed convex set F closest to x, then the separating hyperplane is the plane perpendicular to the segment xy passing through its midpoint.[82]

Operators on Hilbert spaces

Bounded operators

The continuous linear operators A : H1 → H2 from a Hilbert space H1 to a second Hilbert space H2 are bounded in the sense that they map bounded sets to bounded sets.[83] Conversely, if an operator is bounded, then it is continuous. The space of such bounded linear operators has a norm, the operator norm given by

$$\|A\| = \sup\{\|Ax\| : \|x\| \le 1\}.$$

The sum and the composite of two bounded linear operators are again bounded and linear. For y in H2, the map that sends x ∈ H1 to ⟨Ax, y⟩ is linear and continuous, and according to the Riesz representation theorem can therefore be represented in the form

$$\langle x, A^* y\rangle = \langle Ax, y\rangle$$

for some vector A*y in H1. This defines another bounded linear operator A* : H2 → H1, the adjoint of A. The adjoint satisfies A** = A.

The set B(H) of all bounded linear operators on H (meaning operators H → H), together with the addition and composition operations, the norm and the adjoint operation, is a C*-algebra, which is a type of operator algebra.
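For operators between finite-dimensional spaces, the adjoint is the conjugate transpose of the matrix. The following is a minimal sketch that checks the defining relation ⟨Ax, y⟩ = ⟨x, A*y⟩ for a hypothetical 2×3 complex matrix; the helper names are illustrative.

```python
def inner(x, y):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def apply(A, x):
    """Matrix-vector product A x, with A stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def adjoint(A):
    """Conjugate transpose of A."""
    return [[A[i][j].conjugate() for i in range(len(A))]
            for j in range(len(A[0]))]

# Hypothetical operator A : C^3 -> C^2.
A = [[1 + 1j, 2 + 0j, 0j],
     [-1j, 3 + 0j, 1 - 2j]]
x = [1 - 1j, 2j, 0.5 + 0j]     # vector in C^3
y = [2 + 1j, -1 + 3j]          # vector in C^2

adjoint_gap = abs(inner(apply(A, x), y) - inner(x, apply(adjoint(A), y)))
# <A*A x, x> equals ||Ax||^2, so A*A is nonnegative.
quadratic_form = inner(apply(adjoint(A), apply(A, x)), x)
```

The last line illustrates why operators of the form B*B are nonnegative: the quadratic form is a squared norm.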

An element A of B(H) is called 'self-adjoint' or 'Hermitian' if A* = A. If A is Hermitian and ⟨Ax, x⟩ ≥ 0 for every x, then A is called 'nonnegative', written A ≥ 0; if equality holds only when x = 0, then A is called 'positive'. The set of self-adjoint operators admits a partial order, in which A ≥ B if A − B ≥ 0. If A has the form B*B for some B, then A is nonnegative; if B is invertible, then A is positive. A converse is also true in the sense that, for a nonnegative operator A, there exists a unique nonnegative square root B such that

$$A = B^2 = B^* B.$$

In a sense made precise by the spectral theorem, self-adjoint operators can usefully be thought of as operators that are "real". An element A of B(H) is called normal if A*A = AA*. Normal operators decompose into the sum of a self-adjoint operator and an imaginary multiple of a self-adjoint operator

$$A = \frac{A + A^*}{2} + i\,\frac{A - A^*}{2i}$$

that commute with each other.

An element U of B(H) is called unitary if U is invertible and its inverse is given by U*. This can also be expressed by requiring that U be onto and ⟨Ux, Uy⟩ = ⟨x, y⟩ for all x, y ∈ H. The unitary operators form a group under composition, which is the isometry group of H.

An element of B(H) is compact if it sends bounded sets to relatively compact sets. Equivalently, a bounded operator T is compact if, for any bounded sequence {xk}, the sequence {Txk} has a convergent subsequence. Many integral operators are compact, and in fact define a special class of operators known as Hilbert–Schmidt operators that are especially important in the study of integral equations. Fredholm operators differ from a compact operator by a multiple of the identity, and are equivalently characterized as operators with a finite-dimensional kernel and cokernel. The index of a Fredholm operator T is defined by

$$\operatorname{index} T = \dim \ker T - \dim \operatorname{coker} T.$$

The index is homotopy invariant, and plays a deep role in differential geometry via the Atiyah–Singer index theorem.

Unbounded operators

Unbounded operators are also tractable in Hilbert spaces, and have important applications to quantum mechanics.[84] An unbounded operator T on a Hilbert space H is defined as a linear operator whose domain D(T) is a linear subspace of H. Often the domain D(T) is a dense subspace of H, in which case T is known as a densely defined operator.

The adjoint of a densely defined unbounded operator is defined in essentially the same manner as for bounded operators. Self-adjoint unbounded operators play the role of the observables in the mathematical formulation of quantum mechanics. Examples of self-adjoint unbounded operators on the Hilbert space L2(R) are:[85]

- A suitable extension of the differential operator $(Af)(x) = -i\,\frac{d}{dx} f(x)$, where i is the imaginary unit and f is a differentiable function of compact support.

- The multiplication-by-x operator: $(Bf)(x) = x f(x)$.

These correspond to the momentum and position observables, respectively. Neither A nor B is defined on all of H, since in the case of A the derivative need not exist, and in the case of B the product function need not be square integrable. In both cases, the set of possible arguments forms a dense subspace of L2(R).
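A concrete function outside the domain of the multiplication operator makes the restriction visible. The worked example below uses a function chosen here for illustration (it is not taken from the cited sources): f lies in L2(R), but x f(x) does not.

```latex
f(x) = \frac{1}{\sqrt{1 + x^2}}: \qquad
\|f\|^2 = \int_{-\infty}^{\infty} \frac{dx}{1 + x^2} = \pi < \infty,
\qquad
\|x f\|^2 = \int_{-\infty}^{\infty} \frac{x^2}{1 + x^2}\,dx
= \int_{-\infty}^{\infty} \Bigl(1 - \frac{1}{1 + x^2}\Bigr)\,dx = \infty .
```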

Constructions

Direct sums

Two Hilbert spaces H1 and H2 can be combined into another Hilbert space, called the (orthogonal) direct sum,[86] and denoted

$$H_1 \oplus H_2,$$

consisting of the set of all ordered pairs (x1, x2) where xi ∈ Hi, i = 1, 2, and inner product defined by

$$\bigl\langle (x_1, x_2), (y_1, y_2) \bigr\rangle_{H_1 \oplus H_2} = \langle x_1, y_1\rangle_{H_1} + \langle x_2, y_2\rangle_{H_2}.$$

More generally, if Hi is a family of Hilbert spaces indexed by i ∈ I, then the direct sum of the Hi, denoted

$$\bigoplus_{i \in I} H_i,$$

consists of the set of all indexed families x = (xi ∈ Hi : i ∈ I) in the Cartesian product of the Hi such that

$$\sum_{i \in I} \|x_i\|^2 < \infty.$$

The inner product is defined by

$$\langle x, y\rangle = \sum_{i \in I} \langle x_i, y_i\rangle_{H_i}.$$

Each of the Hi is included as a closed subspace in the direct sum of all of the Hi. Moreover, the Hi are pairwise orthogonal. Conversely, if there is a system of closed subspaces, Vi, i ∈ I, in a Hilbert space H, that are pairwise orthogonal and whose linear span is dense in H, then H is canonically isomorphic to the direct sum of Vi. In this case, H is called the internal direct sum of the Vi. A direct sum (internal or external) is also equipped with a family of orthogonal projections Ei onto the ith direct summand Hi. These projections are bounded, self-adjoint, idempotent operators that satisfy the orthogonality condition

$$E_i E_j = 0, \quad i \ne j.$$

The spectral theorem for compact self-adjoint operators on a Hilbert space H states that H splits into an orthogonal direct sum of the eigenspaces of an operator, and also gives an explicit decomposition of the operator as a sum of projections onto the eigenspaces. The direct sum of Hilbert spaces also appears in quantum mechanics as the Fock space of a system containing a variable number of particles, where each Hilbert space in the direct sum corresponds to an additional degree of freedom for the quantum mechanical system. In representation theory, the Peter–Weyl theorem guarantees that any unitary representation of a compact group on a Hilbert space splits as the direct sum of finite-dimensional representations.

Tensor products

If x1, y1 ∊ H1 and x2, y2 ∊ H2, then one defines an inner product on the (ordinary) tensor product as follows. On simple tensors, let

$$\langle x_1 \otimes x_2,\, y_1 \otimes y_2\rangle = \langle x_1, y_1\rangle\,\langle x_2, y_2\rangle.$$

This formula then extends by sesquilinearity to an inner product on H1 ⊗ H2. The Hilbertian tensor product of H1 and H2, sometimes denoted by $H_1 \widehat{\otimes} H_2$, is the Hilbert space obtained by completing H1 ⊗ H2 for the metric associated to this inner product.[87]
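In finite dimensions a simple tensor can be represented by the Kronecker product of coordinate vectors, and the defining formula becomes a statement about ordinary dot products. The following is a minimal sketch with hypothetical vectors in C^2 and C^3; `simple_tensor` is an illustrative helper name.

```python
def inner(x, y):
    """Inner product on C^n, linear in the first argument."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def simple_tensor(x1, x2):
    """Coordinates of the simple tensor x1 (x) x2 in the product basis
    (the Kronecker product of the two coordinate vectors)."""
    return [a * b for a in x1 for b in x2]

x1, y1 = [1 + 1j, 2j], [3 + 0j, 1 - 1j]                             # vectors in C^2
x2, y2 = [1j, -1 + 0j, 2 + 0j], [0.5 + 0j, 1j, 1 + 1j]              # vectors in C^3

lhs = inner(simple_tensor(x1, x2), simple_tensor(y1, y2))
rhs = inner(x1, y1) * inner(x2, y2)
```

The values `lhs` and `rhs` agree up to rounding, since the double sum over the product basis factors into the two single sums.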

An example is provided by the Hilbert space L2([0, 1]). The Hilbertian tensor product of two copies of L2([0, 1]) is isometrically and linearly isomorphic to the space L2([0, 1]²) of square-integrable functions on the square [0, 1]². This isomorphism sends a simple tensor f1 ⊗ f2 to the function

$$(s, t) \mapsto f_1(s)\,f_2(t)$$

on the square.

This example is typical in the following sense.[88] Associated to every simple tensor product x1 ⊗ x2 is the rank one operator from H1* to H2 that maps a given x* ∈ H1* as

$$x^* \mapsto x^*(x_1)\,x_2.$$

This mapping defined on simple tensors extends to a linear identification between H1 ⊗ H2 and the space of finite rank operators from H1* to H2. This extends to a linear isometry of the Hilbertian tensor product $H_1 \widehat{\otimes} H_2$ with the Hilbert space HS(H1*, H2) of Hilbert–Schmidt operators from H1* to H2.

Orthonormal bases

The notion of an orthonormal basis from linear algebra generalizes to the case of Hilbert spaces.[89] In a Hilbert space H, an orthonormal basis is a family {ek}, k ∈ B, of elements of H satisfying the conditions:

- Orthogonality: Every two different elements of B are orthogonal: ⟨ek, ej⟩ = 0 for all k, j ∈ B with k ≠ j.

- Normalization: Every element of the family has norm 1: ‖ek‖ = 1 for all k ∈ B.

- Completeness: The linear span of the family ek, k ∈ B, is dense in H.

A system of vectors satisfying the first two conditions is called an orthonormal system or an orthonormal set (or an orthonormal sequence if B is countable). Such a system is always linearly independent.

Despite the name, an orthonormal basis is not, in general, a basis in the sense of linear algebra (Hamel basis). More precisely, an orthonormal basis is a Hamel basis if and only if the Hilbert space is a finite-dimensional vector space.[90]

Completeness of an orthonormal system of vectors of a Hilbert space can be equivalently restated as:

- for every v ∈ H, if ⟨v, ek⟩ = 0 for all k ∈ B, then v = 0.

This is related to the fact that the only vector orthogonal to a dense linear subspace is the zero vector, for if S is any orthonormal set and v is orthogonal to S, then v is orthogonal to the closure of the linear span of S, which is the whole space.

Examples of orthonormal bases include:

- the set {(1, 0, 0), (0, 1, 0), (0, 0, 1)} forms an orthonormal basis of R3 with the dot product;

- the sequence { fn | n ∈ Z} with fn(x) = exp(2πinx) forms an orthonormal basis of the complex space L2([0, 1]).

In the infinite-dimensional case, an orthonormal basis will not be a basis in the sense of linear algebra; to distinguish the two, the latter basis is also called a Hamel basis. That the span of the basis vectors is dense implies that every vector in the space can be written as the sum of an infinite series, and the orthogonality implies that this decomposition is unique.

Sequence spaces

The space l2 of square-summable sequences of complex numbers is the set of infinite sequences[9]

$$(c_1, c_2, c_3, \ldots)$$

of complex numbers such that

$$|c_1|^2 + |c_2|^2 + |c_3|^2 + \cdots < \infty.$$

This space has an orthonormal basis:

$$e_1 = (1, 0, 0, \ldots), \quad e_2 = (0, 1, 0, \ldots), \quad e_3 = (0, 0, 1, \ldots), \quad \ldots$$

This space is the infinite-dimensional generalization of the space $\ell_2^n$ of finite-dimensional vectors. It is usually the first example used to show that in infinite-dimensional spaces, a set that is closed and bounded is not necessarily (sequentially) compact (as is the case in all finite-dimensional spaces). Indeed, the set of orthonormal vectors above shows this: it is an infinite sequence of vectors in the unit ball (i.e., the ball of points with norm less than or equal to one). This set is clearly bounded and closed; yet no subsequence of these vectors converges to anything, and consequently the unit ball in l2 is not compact. Intuitively, this is because "there is always another coordinate direction" into which the next elements of the sequence can evade.
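The failure of compactness follows from a one-line distance computation. The step below, a sketch of the standard argument, uses the Pythagorean identity for two distinct basis vectors: all of them are at the same positive distance from each other, so no subsequence can be Cauchy.

```latex
\|e_m - e_n\|^2 = \|e_m\|^2 + \|e_n\|^2 = 2
\quad\Longrightarrow\quad
\|e_m - e_n\| = \sqrt{2} \qquad (m \ne n).
```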

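One can generalize the space l2 in many ways. For example, if B is any set, then one can form a Hilbert space of sequences with index set B, defined by[91]

$$\ell^2(B) = \Bigl\{ x : B \to \mathbb{C} \;\Big|\; \sum_{b \in B} |x(b)|^2 < \infty \Bigr\}.$$

The summation over B is here defined by

$$\sum_{b \in B} |x(b)|^2 = \sup \sum_{n=1}^{N} |x(b_n)|^2,$$

the supremum being taken over all finite subsets {b1, ..., bN} of B. It follows that, for this sum to be finite, every element of l2(B) has only countably many nonzero terms. This space becomes a Hilbert space with the inner product

$$\langle x, y\rangle = \sum_{b \in B} x(b)\,\overline{y(b)}$$

for all x, y ∈ l2(B). Here the sum also has only countably many nonzero terms, and is unconditionally convergent by the Cauchy–Schwarz inequality.

An orthonormal basis of l2(B) is indexed by the set B, given by

$$e_b(b') = \begin{cases} 1 & \text{if } b = b', \\ 0 & \text{otherwise.} \end{cases}$$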

Bessel's inequality and Parseval's formula

Let f1, …, fn be a finite orthonormal system in H. For an arbitrary vector x ∈ H, let

$$y = \sum_{k=1}^{n} \langle x, f_k\rangle\, f_k.$$

Then ⟨x, fk⟩ = ⟨y, fk⟩ for every k = 1, …, n. It follows that x − y is orthogonal to each fk, hence x − y is orthogonal to y. Using the Pythagorean identity twice, it follows that

$$\|x\|^2 = \|x - y\|^2 + \|y\|^2 \ge \|y\|^2 = \sum_{k=1}^{n} |\langle x, f_k\rangle|^2.$$

Let {fi}, i ∈ I, be an arbitrary orthonormal system in H. Applying the preceding inequality to every finite subset J of I gives Bessel's inequality:[92]

$$\sum_{i \in I} |\langle x, f_i\rangle|^2 \le \|x\|^2, \quad x \in H.$$

Geometrically, Bessel's inequality implies that the orthogonal projection of x onto the linear subspace spanned by the fi has norm that does not exceed that of x. In two dimensions, this is the assertion that the length of the leg of a right triangle may not exceed the length of the hypotenuse.

Bessel's inequality is a stepping stone to the stronger result called Parseval's identity, which governs the case when Bessel's inequality is actually an equality. By definition, if {ek}, k ∈ B, is an orthonormal basis of H, then every element x of H may be written as

$$x = \sum_{k \in B} \langle x, e_k\rangle\, e_k.$$

Even if B is uncountable, Bessel's inequality guarantees that the expression is well-defined and consists only of countably many nonzero terms. This sum is called the Fourier expansion of x, and the individual coefficients ⟨x, ek⟩ are the Fourier coefficients of x. Parseval's identity then asserts that[93]

$$\|x\|^2 = \sum_{k \in B} |\langle x, e_k\rangle|^2.$$

Conversely,[93] if {ek} is an orthonormal set such that Parseval's identity holds for every x, then {ek} is an orthonormal basis.
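Parseval's identity can be watched converging on a classical example. The sketch below assumes the function f(x) = x in L2([0, 1]) and the orthonormal basis fn(x) = exp(2πinx); integrating by parts gives ⟨f, f0⟩ = 1/2 and ⟨f, fn⟩ = i/(2πn) for n ≠ 0, while ‖f‖² = 1/3. The function names are illustrative.

```python
import math

def fourier_coefficient_abs_sq(n):
    """|<f, f_n>|^2 for f(x) = x on [0, 1] and f_n(x) = exp(2*pi*i*n*x)."""
    if n == 0:
        return 0.25
    return 1.0 / (4.0 * math.pi ** 2 * n ** 2)

def parseval_partial_sum(N):
    """Sum of |<f, f_n>|^2 over -N <= n <= N (a Bessel-type partial sum)."""
    return sum(fourier_coefficient_abs_sq(n) for n in range(-N, N + 1))

norm_sq = 1.0 / 3.0   # integral of x^2 over [0, 1]
small = parseval_partial_sum(10)
large = parseval_partial_sum(20000)
```

Every partial sum stays below `norm_sq`, as Bessel's inequality requires, and the gap shrinks roughly like 1/(2π²N) as N grows.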

Hilbert dimension

As a consequence of Zorn's lemma, every Hilbert space admits an orthonormal basis; furthermore, any two orthonormal bases of the same space have the same cardinality, called the Hilbert dimension of the space.[94] For instance, since l2(B) has an orthonormal basis indexed by B, its Hilbert dimension is the cardinality of B (which may be a finite integer, or a countable or uncountable cardinal number).

The Hilbert dimension is not greater than the Hamel dimension (the usual dimension of a vector space). The two dimensions are equal if and only if one of them is finite.

As a consequence of Parseval's identity,[95] if {ek}, k ∈ B, is an orthonormal basis of H, then the map Φ : H → l2(B) defined by $\Phi(x) = (\langle x, e_k\rangle)_{k \in B}$ is an isometric isomorphism of Hilbert spaces: it is a bijective linear mapping such that

$$\langle \Phi(x), \Phi(y)\rangle_{\ell^2(B)} = \langle x, y\rangle_H$$

for all x, y ∈ H.

Separable spaces

By definition, a Hilbert space is separable provided it contains a dense countable subset. Along with Zorn's lemma, this means a Hilbert space is separable if and only if it admits a countable orthonormal basis. All infinite-dimensional separable Hilbert spaces are therefore isometrically isomorphic to the square-summable sequence space l2.

In the past, Hilbert spaces were often required to be separable as part of the definition.[96]

In quantum field theory

Most spaces used in physics are separable, and since these are all isomorphic to each other, one often refers to any infinite-dimensional separable Hilbert space as "the Hilbert space" or just "Hilbert space".[97] Even in quantum field theory, most of the Hilbert spaces are in fact separable, as stipulated by the Wightman axioms. However, it is sometimes argued that non-separable Hilbert spaces are also important in quantum field theory, roughly because the systems in the theory possess an infinite number of degrees of freedom and any infinite Hilbert tensor product (of spaces of dimension greater than one) is non-separable.[98] For instance, a bosonic field can be naturally thought of as an element of a tensor product whose factors represent harmonic oscillators at each point of space. From this perspective, the natural state space of a boson might seem to be a non-separable space.[98] However, it is only a small separable subspace of the full tensor product that can contain physically meaningful fields (on which the observables can be defined). Another non-separable Hilbert space models the state of an infinite collection of particles in an unbounded region of space. An orthonormal basis of the space is indexed by the density of the particles, a continuous parameter, and since the set of possible densities is uncountable, the basis is not countable.[98]

Orthogonal complements and projections

If S is a subset of a Hilbert space H, the set of vectors orthogonal to S is defined by

$$S^\perp = \{x \in H : \langle x, s\rangle = 0 \text{ for all } s \in S\}.$$

The set S⊥ is a closed subspace of H (can be proved easily using the linearity and continuity of the inner product) and so forms itself a Hilbert space. If V is a closed subspace of H, then V⊥ is called the orthogonal complement of V. In fact, every x ∈ H can then be written uniquely as x = v + w, with v ∈ V and w ∈ V⊥. Therefore, H is the internal Hilbert direct sum of V and V⊥.

The linear operator PV : H → H that maps x to v is called the orthogonal projection onto V. There is a natural one-to-one correspondence between the set of all closed subspaces of H and the set of all bounded self-adjoint operators P such that P² = P. Specifically,

Theorem — The orthogonal projection PV is a self-adjoint linear operator on H of norm ≤ 1 with the property PV² = PV. Moreover, any self-adjoint linear operator E such that E² = E is of the form PV, where V is the range of E. For every x in H, PV(x) is the unique element v of V that minimizes the distance ‖x − v‖.

This provides the geometrical interpretation of PV(x): it is the best approximation to x by elements of V.[99]

Projections PU and PV are called mutually orthogonal if PUPV = 0. This is equivalent to U and V being orthogonal as subspaces of H. The sum of the two projections PU and PV is a projection only if U and V are orthogonal to each other, and in that case PU + PV = PU+V.[100] The composite PUPV is generally not a projection; in fact, the composite is a projection if and only if the two projections commute, and in that case PUPV = PU∩V.[101]
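These statements about sums and products of projections can be checked on rank-one projections in R^3. The following is a minimal sketch using exact fractions; the vectors u, v, w are hypothetical, with u ⊥ v but u not orthogonal to w.

```python
from fractions import Fraction

def rank_one_projection(v):
    """Matrix of the orthogonal projection onto span{v}: v v^T / <v, v>."""
    s = sum(a * a for a in v)
    return [[Fraction(a * b, s) for b in v] for a in v]

def matmul(A, B):
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def matadd(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def is_projection(P):
    """True if P is self-adjoint (symmetric) and idempotent."""
    n = len(P)
    symmetric = all(P[i][j] == P[j][i] for i in range(n) for j in range(n))
    return symmetric and matmul(P, P) == P

u, v, w = [1, 1, 0], [1, -1, 0], [1, 0, 0]
P_u, P_v, P_w = (rank_one_projection(x) for x in (u, v, w))
zero = [[Fraction(0)] * 3 for _ in range(3)]

orthogonal_product = matmul(P_u, P_v)                 # zero, since u is orthogonal to v
sum_orthogonal = is_projection(matadd(P_u, P_v))      # projection onto span{u, v}
sum_oblique = is_projection(matadd(P_u, P_w))         # fails: u, w are not orthogonal
```

In this example `P_u + P_v` is the projection onto the plane spanned by u and v, whereas `P_u + P_w` is symmetric but not idempotent.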

By restricting the codomain to the Hilbert space V, the orthogonal projection PV gives rise to a projection mapping π : H → V; it is the adjoint of the inclusion mapping

$$i : V \to H,$$

meaning that

$$\langle i x, y\rangle_H = \langle x, \pi y\rangle_V$$

for all x ∈ V and y ∈ H.

The operator norm of the orthogonal projection PV onto a nonzero closed subspace V is equal to 1:

$$\|P_V\| = \sup_{x \in H,\, x \ne 0} \frac{\|P_V x\|}{\|x\|} = 1.$$

Every closed subspace V of a Hilbert space is therefore the image of an operator P of norm one such that P² = P. The property of possessing appropriate projection operators characterizes Hilbert spaces:[102]

- A Banach space of dimension higher than 2 is (isometrically) a Hilbert space if and only if, for every closed subspace V, there is an operator PV of norm one whose image is V such that PV² = PV.

While this result characterizes the metric structure of a Hilbert space, the structure of a Hilbert space as a topological vector space can itself be characterized in terms of the presence of complementary subspaces:[103]

- A Banach space X is topologically and linearly isomorphic to a Hilbert space if and only if, to every closed subspace V, there is a closed subspace W such that X is equal to the internal direct sum V ⊕ W.

The orthogonal complement satisfies some more elementary results. It is a monotone function in the sense that if U ⊂ V, then V⊥ ⊆ U⊥ with equality holding if and only if V is contained in the closure of U. This result is a special case of the Hahn–Banach theorem. The closure of a subspace can be completely characterized in terms of the orthogonal complement: if V is a subspace of H, then the closure of V is equal to V⊥⊥. The orthogonal complement is thus a Galois connection on the partial order of subspaces of a Hilbert space. In general, the orthogonal complement of a sum of subspaces is the intersection of the orthogonal complements:[104]

$$\Bigl(\sum_i V_i\Bigr)^\perp = \bigcap_i V_i^\perp.$$

If the Vi are in addition closed, then

$$\overline{\sum_i V_i^\perp} = \Bigl(\bigcap_i V_i\Bigr)^\perp.$$

Spectral theory

There is a well-developed spectral theory for self-adjoint operators in a Hilbert space, that is roughly analogous to the study of symmetric matrices over the reals or self-adjoint matrices over the complex numbers.[105] In the same sense, one can obtain a "diagonalization" of a self-adjoint operator as a suitable sum (actually an integral) of orthogonal projection operators.

The spectrum of an operator T, denoted σ(T), is the set of complex numbers λ such that T − λ lacks a continuous inverse. If T is bounded, then the spectrum is always a compact set in the complex plane, and lies inside the disc |z| ≤ ‖T‖. If T is self-adjoint, then the spectrum is real. In fact, it is contained in the interval [m, M] where

$$m = \inf_{\|x\| = 1} \langle Tx, x\rangle, \qquad M = \sup_{\|x\| = 1} \langle Tx, x\rangle.$$

Moreover, m and M are both actually contained within the spectrum.

The eigenspaces of an operator T are given by

$$H_\lambda = \ker(T - \lambda).$$

Unlike with finite matrices, not every element of the spectrum of T must be an eigenvalue: the linear operator T − λ may only lack an inverse because it is not surjective. Elements of the spectrum of an operator in the general sense are known as spectral values. Since spectral values need not be eigenvalues, the spectral decomposition is often more subtle than in finite dimensions.

However, the spectral theorem of a self-adjoint operator T takes a particularly simple form if, in addition, T is assumed to be a compact operator. The spectral theorem for compact self-adjoint operators states:[106]

- A compact self-adjoint operator T has only countably (or finitely) many spectral values. The spectrum of T has no limit point in the complex plane except possibly zero. The eigenspaces of T decompose H into an orthogonal direct sum: $H = \bigoplus_{\lambda \in \sigma(T)} H_\lambda$. Moreover, if Eλ denotes the orthogonal projection onto the eigenspace Hλ, then $T = \sum_{\lambda \in \sigma(T)} \lambda E_\lambda$, where the sum converges with respect to the norm on B(H).
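In finite dimensions every self-adjoint operator is compact, so the theorem reduces to the diagonalization of a symmetric matrix. The following is a minimal sketch for a real symmetric 2×2 matrix with two distinct eigenvalues; it uses the closed-form eigenvalues and the formula E_λ = (T − μI)/(λ − μ), where μ is the other eigenvalue. The function name and the sample matrix are illustrative assumptions.

```python
import math

def spectral_decomposition_2x2(T):
    """Eigenvalues and spectral projections of a real symmetric 2x2 matrix
    with two distinct eigenvalues, as a list of (eigenvalue, projection)."""
    (a, b), (_, d) = T
    mean = (a + d) / 2
    radius = math.sqrt(((a - d) / 2) ** 2 + b * b)
    lam_lo, lam_hi = mean - radius, mean + radius
    identity = [[1.0, 0.0], [0.0, 1.0]]

    def proj(lam, other):
        # E_lam = (T - other * I) / (lam - other) annihilates the other eigenspace.
        return [[(T[i][j] - other * identity[i][j]) / (lam - other)
                 for j in range(2)] for i in range(2)]

    return [(lam_lo, proj(lam_lo, lam_hi)), (lam_hi, proj(lam_hi, lam_lo))]

T = [[2.0, 1.0], [1.0, 2.0]]
parts = spectral_decomposition_2x2(T)

# Reassemble T as the sum of eigenvalue times projection.
reconstructed = [[sum(lam * E[i][j] for lam, E in parts) for j in range(2)]
                 for i in range(2)]
# The projections should add up to the identity.
resolution = [[sum(E[i][j] for _, E in parts) for j in range(2)]
              for i in range(2)]
```

For this matrix the eigenvalues are 1 and 3, with eigenspaces spanned by (1, −1) and (1, 1) respectively.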

This theorem plays a fundamental role in the theory of integral equations, as many integral operators are compact, in particular those that arise from Hilbert–Schmidt operators.

The general spectral theorem for self-adjoint operators involves a kind of operator-valued Riemann–Stieltjes integral, rather than an infinite summation.[107] The spectral family associated to T associates to each real number λ an operator Eλ, which is the projection onto the nullspace of the operator (T − λ)⁺, where the positive part of a self-adjoint operator is defined by

$$A^+ = \tfrac{1}{2}\bigl(\sqrt{A^2} + A\bigr).$$

The operators Eλ are monotone increasing relative to the partial order defined on self-adjoint operators; the eigenvalues correspond precisely to the jump discontinuities. One has the spectral theorem, which asserts

$$T = \int_{\mathbb{R}} \lambda\, dE_\lambda.$$

The integral is understood as a Riemann–Stieltjes integral, convergent with respect to the norm on B(H). In particular, one has the ordinary scalar-valued integral representation

$$\langle Tx, y\rangle = \int_{\mathbb{R}} \lambda\, d\langle E_\lambda x, y\rangle.$$

A somewhat similar spectral decomposition holds for normal operators, although because the spectrum may now contain non-real complex numbers, the operator-valued Stieltjes measure dEλ must instead be replaced by a resolution of the identity.

A major application of spectral methods is the spectral mapping theorem, which allows one to apply to a self-adjoint operator T any continuous complex function f defined on the spectrum of T by forming the integral

$$f(T) = \int_{\sigma(T)} f(\lambda)\, dE_\lambda.$$

The resulting continuous functional calculus has applications in particular to pseudodifferential operators.[108]
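When the spectrum is finite, the integral defining f(T) becomes a finite sum of f(λ) times the spectral projections. The sketch below assumes the spectral data of the matrix T = [[2, 1], [1, 2]] (eigenvalues 1 and 3) as known from a hand calculation, and uses it to form the nonnegative square root of T; the names are hypothetical.

```python
import math

# Assumed spectral data of T = [[2, 1], [1, 2]]: pairs (eigenvalue, projection).
spectral_data = [
    (1.0, [[0.5, -0.5], [-0.5, 0.5]]),
    (3.0, [[0.5, 0.5], [0.5, 0.5]]),
]

def apply_function(f, spectral_data):
    """Finite-spectrum functional calculus: f(T) = sum of f(lambda) * E_lambda."""
    n = len(spectral_data[0][1])
    result = [[0.0] * n for _ in range(n)]
    for lam, E in spectral_data:
        for i in range(n):
            for j in range(n):
                result[i][j] += f(lam) * E[i][j]
    return result

def matmul(A, B):
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

T = apply_function(lambda t: t, spectral_data)       # recovers T itself
root = apply_function(math.sqrt, spectral_data)      # f(t) = sqrt(t)
square = matmul(root, root)                          # should equal T
```

Because the projections are mutually orthogonal and idempotent, squaring `root` multiplies the eigenvalues back to 1 and 3, so `square` agrees with T up to rounding.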

The spectral theory of unbounded self-adjoint operators is only marginally more difficult than for bounded operators. The spectrum of an unbounded operator is defined in precisely the same way as for bounded operators: λ is a spectral value if the resolvent operator

$$R_\lambda = (T - \lambda)^{-1}$$

fails to be a well-defined continuous operator. The self-adjointness of T still guarantees that the spectrum is real. Thus the essential idea of working with unbounded operators is to look instead at the resolvent Rλ where λ is nonreal. This is a bounded normal operator, which admits a spectral representation that can then be transferred to a spectral representation of T itself. A similar strategy is used, for instance, to study the spectrum of the Laplace operator: rather than address the operator directly, one instead looks at an associated resolvent such as a Riesz potential or Bessel potential.

A precise version of the spectral theorem in this case is:[109]

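Theorem — Given a densely defined self-adjoint operator T on a Hilbert space H, there corresponds a unique resolution of the identity E on the Borel sets of R, such that

$$\langle Tx, y\rangle = \int_{\mathbb{R}} \lambda\, dE_{x,y}(\lambda)$$

for all x ∈ D(T) and y ∈ H. The spectral measure E is concentrated on the spectrum of T.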

There is also a version of the spectral theorem that applies to unbounded normal operators.

In popular culture

In Gravity's Rainbow (1973), a novel by Thomas Pynchon, one of the characters is called "Sammy Hilbert-Spaess", a pun on "Hilbert Space". The novel refers also to Gödel's incompleteness theorems.[110]

See also

- Banach space – Normed vector space that is complete

- Fock space – Multi particle state space

- Fundamental theorem of Hilbert spaces

- Hadamard space – geodesically complete metric space of non-positive curvature

- Hausdorff space – Type of topological space

- Hilbert algebra

- Hilbert C*-module – Mathematical objects that generalise the notion of Hilbert spaces

- Hilbert manifold – Manifold modelled on Hilbert spaces

- L-semi-inner product – Generalization of inner products that applies to all normed spaces

- Locally convex topological vector space – A vector space with a topology defined by convex open sets

- Operator theory – Mathematical field of study

- Operator topologies – Topologies on the set of operators on a Hilbert space

- Quantum state space – Mathematical space representing physical quantum systems

- Rigged Hilbert space – Construction linking the study of "bound" and continuous eigenvalues in functional analysis

- Topological vector space – Vector space with a notion of nearness

Remarks

Notes

- ^ Axler 2014, p. 164 §6.2

- ^ However, some sources call finite-dimensional spaces with these properties pre-Hilbert spaces, reserving the term "Hilbert space" for infinite-dimensional spaces; see, e.g., Levitan 2001.

- ^ Marsden 1974, §2.8

- ^ The mathematical material in this section can be found in any good textbook on functional analysis, such as Dieudonné (1960), Hewitt & Stromberg (1965), Reed & Simon (1980) or Rudin (1987).

- ^ Schaefer & Wolff 1999, pp. 122–202.

- ^ Dieudonné 1960, §6.2

- ^ Roman 2008, p. 327

- ^ Roman 2008, p. 330 Theorem 13.8

- ^ a b Stein & Shakarchi 2005, p. 163

- ^ Dieudonné 1960

- ^ Largely from the work of Hermann Grassmann, at the urging of August Ferdinand Möbius (Boyer & Merzbach 1991, pp. 584–586). The first modern axiomatic account of abstract vector spaces ultimately appeared in Giuseppe Peano's 1888 account (Grattan-Guinness 2000, §5.2.2; O'Connor & Robertson 1996).

- ^ A detailed account of the history of Hilbert spaces can be found in Bourbaki 1987.

- ^ Schmidt 1908

- ^ Titchmarsh 1946, §IX.1

- ^ Lebesgue 1904. Further details on the history of integration theory can be found in Bourbaki (1987) and Saks (2005).

- ^ Bourbaki 1987.

- ^ Dunford & Schwartz 1958, §IV.16

- ^ In Dunford & Schwartz (1958, §IV.16), the result that every linear functional on L2[0,1] is represented by integration is jointly attributed to Fréchet (1907) and Riesz (1907). The general result, that the dual of a Hilbert space is identified with the Hilbert space itself, can be found in Riesz (1934).

- ^ von Neumann 1929.

- ^ Kline 1972, p. 1092

- ^ Hilbert, Nordheim & von Neumann 1927

- ^ a b Weyl 1931.

- ^ Prugovečki 1981, pp. 1–10.

- ^ a b von Neumann 1932

- ^ Peres 1993, pp. 79–99.

- ^ Murphy 1990, p. 112

- ^ Murphy 1990, p. 72

- ^ Halmos 1957, Section 42.

- ^ Hewitt & Stromberg 1965.

- ^ Abramowitz, Milton; Stegun, Irene Ann, eds. (1983) [June 1964]. "Chapter 22". Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. Applied Mathematics Series. Vol. 55 (Ninth reprint with additional corrections of tenth original printing with corrections (December 1972); first ed.). Washington D.C.; New York: United States Department of Commerce, National Bureau of Standards; Dover Publications. p. 773. ISBN 978-0-486-61272-0. LCCN 64-60036. MR 0167642. LCCN 65-12253.

- ^ a b Bers, John & Schechter 1981.

- ^ Giusti 2003.

- ^ Stein 1970

- ^ Details can be found in Warner (1983).

- ^ A general reference on Hardy spaces is the book Duren (1970).

- ^ Krantz 2002, §1.4

- ^ Krantz 2002, §1.5

- ^ Young 1988, Chapter 9.

- ^ Pedersen 1995, §4.4

- ^ More detail on finite element methods from this point of view can be found in Brenner & Scott (2005).

- ^ Brezis 2010, section 9.5

- ^ Evans 1998

- ^ Pathria (1996), Chapters 2 and 3

- ^ Einsiedler & Ward (2011), Proposition 2.14.

- ^ Reed & Simon 1980

- ^ A treatment of Fourier series from this point of view is available, for instance, in Rudin (1987) or Folland (2009).

- ^ Halmos 1957, §5

- ^ Bachman, Narici & Beckenstein 2000

- ^ Stein & Weiss 1971, §IV.2.

- ^ Lanczos 1988, pp. 212–213

- ^ Lanczos 1988, Equation 4-3.10

- ^ The classic reference for spectral methods is Courant & Hilbert 1953. A more up-to-date account is Reed & Simon 1975.

- ^ Kac 1966

- ^ von Neumann 1955

- ^ Holevo 2001, p. 17

- ^ Rieffel & Polak 2011, p. 55

- ^ Peres 1993, p. 101

- ^ Peres 1993, p. 73

- ^ Nielsen & Chuang 2000, p. 90

- ^ Billingsley (1986), p. 477, ex. 34.13

- ^ Stapleton 1995

- ^ Hewitt & Stromberg (1965), Exercise 16.45.

- ^ Karatzas & Shreve 2019, Chapter 3

- ^ Stroock (2011), Chapter 8.

- ^ Hermann Weyl (2009), "Mind and nature", Mind and nature: selected writings on philosophy, mathematics, and physics, Princeton University Press.

- ^ Berthier, M. (2020), "Geometry of color perception. Part 2: perceived colors from real quantum states and Hering's rebit", The Journal of Mathematical Neuroscience, 10 (1): 14, doi:10.1186/s13408-020-00092-x, PMC 7481323, PMID 32902776.

- ^ Reed & Simon 1980, Theorem 12.6

- ^ Reed & Simon 1980, p. 38

- ^ Young 1988, p. 23.

- ^ Clarkson 1936.

- ^ Rudin 1987, Theorem 4.10

- ^ Dunford & Schwartz 1958, II.4.29

- ^ Rudin 1987, Theorem 4.11

- ^ Blanchet, Gérard; Charbit, Maurice (2014). Digital Signal and Image Processing Using MATLAB. Vol. 1 (Second ed.). New Jersey: Wiley. pp. 349–360. ISBN 978-1848216402.

- ^ Weidmann 1980, Theorem 4.8

- ^ Peres 1993, pp. 77–78.

- ^ Weidmann (1980), Exercise 4.11.

- ^ Weidmann 1980, §4.5

- ^ Buttazzo, Giaquinta & Hildebrandt 1998, Theorem 5.17

- ^ Halmos 1982, Problems 52, 58

- ^ Rudin 1973

- ^ Trèves 1967, Chapter 18

- ^ A general reference for this section is Rudin (1973), chapter 12.

- ^ See Prugovečki (1981), Reed & Simon (1980, Chapter VIII) and Folland (1989).

- ^ Prugovečki 1981, III, §1.4

- ^ Dunford & Schwartz 1958, IV.4.17-18

- ^ Weidmann 1980, §3.4

- ^ Kadison & Ringrose 1983, Theorem 2.6.4

- ^ Dunford & Schwartz 1958, §IV.4.

- ^ Roman 2008, p. 218

- ^ Rudin 1987, Definition 3.7

- ^ For the case of finite index sets, see, for instance, Halmos 1957, §5. For infinite index sets, see Weidmann 1980, Theorem 3.6.

- ^ a b Hewitt & Stromberg (1965), Theorem 16.26.

- ^ Levitan 2001. Many authors, such as Dunford & Schwartz (1958, §IV.4), refer to this just as the dimension. Unless the Hilbert space is finite dimensional, this is not the same thing as its dimension as a linear space (the cardinality of a Hamel basis).

- ^ Hewitt & Stromberg (1965), Theorem 16.29.

- ^ Prugovečki 1981, I, §4.2

- ^ von Neumann (1955) defines a Hilbert space via a countable Hilbert basis, which amounts to an isometric isomorphism with l2. The convention still persists in most rigorous treatments of quantum mechanics; see for instance Sobrino 1996, Appendix B.

- ^ a b c Streater & Wightman 1964, pp. 86–87

- ^ Young 1988, Theorem 15.3

- ^ von Neumann 1955, Theorem 16

- ^ von Neumann 1955, Theorem 14

- ^ Kakutani 1939

- ^ Lindenstrauss & Tzafriri 1971

- ^ Halmos 1957, §12

- ^ A general account of spectral theory in Hilbert spaces can be found in Riesz & Sz.-Nagy (1990). A more sophisticated account in the language of C*-algebras is in Rudin (1973) or Kadison & Ringrose (1997).

- ^ See, for instance, Riesz & Sz.-Nagy (1990, Chapter VI) or Weidmann 1980, Chapter 7. This result was already known to Schmidt (1908) in the case of operators arising from integral kernels.

- ^ Riesz & Sz.-Nagy 1990, §§107–108

- ^ Shubin 1987

- ^ Rudin 1973, Theorem 13.30.

- ^ Pynchon, Thomas (1973). Gravity's Rainbow. Viking Press. pp. 217, 275. ISBN 978-0143039945.

References

- Axler, Sheldon (18 December 2014), Linear Algebra Done Right, Undergraduate Texts in Mathematics (3rd ed.), Springer Publishing (published 2015), p. 296, ISBN 978-3-319-11079-0

- Bachman, George; Narici, Lawrence; Beckenstein, Edward (2000), Fourier and wavelet analysis, Universitext, Berlin, New York: Springer-Verlag, ISBN 978-0-387-98899-3, MR 1729490.

- Bers, Lipman; John, Fritz; Schechter, Martin (1981), Partial differential equations, American Mathematical Society, ISBN 978-0-8218-0049-2.

- Billingsley, Patrick (1986), Probability and measure, Wiley.

- Bourbaki, Nicolas (1986), Spectral theories, Elements of mathematics, Berlin: Springer-Verlag, ISBN 978-0-201-00767-1.

- Bourbaki, Nicolas (1987), Topological vector spaces, Elements of mathematics, Berlin: Springer-Verlag, ISBN 978-3-540-13627-9.

- Boyer, Carl Benjamin; Merzbach, Uta C (1991), A History of Mathematics (2nd ed.), John Wiley & Sons, Inc., ISBN 978-0-471-54397-8.

- Brenner, S.; Scott, R. L. (2005), The Mathematical Theory of Finite Element Methods (2nd ed.), Springer, ISBN 978-0-387-95451-6.

- Brezis, Haim (2010), Functional analysis, Sobolev spaces, and partial differential equations, Springer.

- Buttazzo, Giuseppe; Giaquinta, Mariano; Hildebrandt, Stefan (1998), One-dimensional variational problems, Oxford Lecture Series in Mathematics and its Applications, vol. 15, The Clarendon Press Oxford University Press, ISBN 978-0-19-850465-8, MR 1694383.

- Clarkson, J. A. (1936), "Uniformly convex spaces", Trans. Amer. Math. Soc., 40 (3): 396–414, doi:10.2307/1989630, JSTOR 1989630.

- Courant, Richard; Hilbert, David (1953), Methods of Mathematical Physics, Vol. I, Interscience.

- Dieudonné, Jean (1960), Foundations of Modern Analysis, Academic Press.

- Dirac, P.A.M. (1930), The Principles of Quantum Mechanics, Oxford: Clarendon Press.

- Dunford, N.; Schwartz, J.T. (1958), Linear operators, Parts I and II, Wiley-Interscience.

- Duren, P. (1970), Theory of Hp-Spaces, New York: Academic Press.

- Einsiedler, Manfred; Ward, Thomas (2011), Ergodic theory with a view towards number theory, Springer.

- Evans, L. C. (1998), Partial Differential Equations, Providence: American Mathematical Society, ISBN 0-8218-0772-2.

- Folland, Gerald B. (2009), Fourier analysis and its application (Reprint of Wadsworth and Brooks/Cole 1992 ed.), American Mathematical Society Bookstore, ISBN 978-0-8218-4790-9.

- Folland, Gerald B. (1989), Harmonic analysis in phase space, Annals of Mathematics Studies, vol. 122, Princeton University Press, ISBN 978-0-691-08527-2.

- Fréchet, Maurice (1907), "Sur les ensembles de fonctions et les opérations linéaires", C. R. Acad. Sci. Paris, 144: 1414–1416.

- Fréchet, Maurice (1904), "Sur les opérations linéaires", Transactions of the American Mathematical Society, 5 (4): 493–499, doi:10.2307/1986278, JSTOR 1986278.

- Giusti, Enrico (2003), Direct Methods in the Calculus of Variations, World Scientific, ISBN 978-981-238-043-2.

- Grattan-Guinness, Ivor (2000), The search for mathematical roots, 1870–1940, Princeton Paperbacks, Princeton University Press, ISBN 978-0-691-05858-0, MR 1807717.

- Halmos, Paul (1957), Introduction to Hilbert Space and the Theory of Spectral Multiplicity, Chelsea Pub. Co.

- Halmos, Paul (1982), A Hilbert Space Problem Book, Springer-Verlag, ISBN 978-0-387-90685-0.

- Hewitt, Edwin; Stromberg, Karl (1965), Real and Abstract Analysis, New York: Springer-Verlag.

- Hilbert, David; Nordheim, Lothar Wolfgang; von Neumann, John (1927), "Über die Grundlagen der Quantenmechanik", Mathematische Annalen, 98: 1–30, doi:10.1007/BF01451579, S2CID 120986758.

- Holevo, Alexander S. (2001), Statistical Structure of Quantum Theory, Lecture Notes in Physics, Springer, ISBN 3-540-42082-7, OCLC 318268606.

- Kac, Mark (1966), "Can one hear the shape of a drum?", American Mathematical Monthly, 73 (4, part 2): 1–23, doi:10.2307/2313748, JSTOR 2313748.

- Kadison, Richard V.; Ringrose, John R. (1997), Fundamentals of the theory of operator algebras. Vol. I, Graduate Studies in Mathematics, vol. 15, Providence, R.I.: American Mathematical Society, ISBN 978-0-8218-0819-1, MR 1468229.

- Kadison, Richard V.; Ringrose, John R. (1983), Fundamentals of the Theory of Operator Algebras, Vol. I: Elementary Theory, New York: Academic Press, Inc.

- Karatzas, Ioannis; Shreve, Steven (2019), Brownian Motion and Stochastic Calculus (2nd ed.), Springer, ISBN 978-0-387-97655-6.

- Kakutani, Shizuo (1939), "Some characterizations of Euclidean space", Japanese Journal of Mathematics, 16: 93–97, doi:10.4099/jjm1924.16.0_93, MR 0000895.

- Kline, Morris (1972), Mathematical thought from ancient to modern times, Volume 3 (3rd ed.), Oxford University Press (published 1990), ISBN 978-0-19-506137-6.

- Kolmogorov, Andrey; Fomin, Sergei V. (1970), Introductory Real Analysis (Revised English edition, trans. by Richard A. Silverman (1975) ed.), Dover Press, ISBN 978-0-486-61226-3.

- Krantz, Steven G. (2002), Function Theory of Several Complex Variables, Providence, R.I.: American Mathematical Society, ISBN 978-0-8218-2724-6.

- Lanczos, Cornelius (1988), Applied analysis (Reprint of 1956 Prentice-Hall ed.), Dover Publications, ISBN 978-0-486-65656-4.

- Lebesgue, Henri (1904), Leçons sur l'intégration et la recherche des fonctions primitives, Gauthier-Villars.

- Levitan, B.M. (2001) [1994], "Hilbert space", Encyclopedia of Mathematics, EMS Press.

- Lindenstrauss, J.; Tzafriri, L. (1971), "On the complemented subspaces problem", Israel Journal of Mathematics, 9 (2): 263–269, doi:10.1007/BF02771592, ISSN 0021-2172, MR 0276734, S2CID 119575718.

- Marsden, Jerrold E. (1974), Elementary classical analysis, W. H. Freeman and Co., MR 0357693.

- Murphy, Gerald J. (1990), C*-algebras and Operator Theory, Academic Press, ISBN 0-12-511360-9.

- von Neumann, John (1929), "Allgemeine Eigenwerttheorie Hermitescher Funktionaloperatoren", Mathematische Annalen, 102: 49–131, doi:10.1007/BF01782338, S2CID 121249803.