Mathematical formulation of quantum mechanics

The mathematical formulations of quantum mechanics are those mathematical formalisms that permit a rigorous description of quantum mechanics. They draw mainly on functional analysis, especially Hilbert spaces, which are a kind of linear space. They are distinguished from the mathematical formalisms of physical theories developed before the early 1900s by their use of abstract mathematical structures, such as infinite-dimensional Hilbert spaces (mainly L² spaces) and operators on these spaces. In brief, values of physical observables such as energy and momentum were no longer considered as values of functions on phase space, but as eigenvalues or, more precisely, as spectral values of linear operators in Hilbert space.[1]

These formulations of quantum mechanics continue to be used today. At the heart of the description are ideas of quantum state and quantum observables, which are radically different from those used in previous models of physical reality. While the mathematics permits calculation of many quantities that can be measured experimentally, there is a definite theoretical limit to values that can be simultaneously measured. This limitation was first elucidated by Heisenberg through a thought experiment, and is represented mathematically in the new formalism by the non-commutativity of operators representing quantum observables.

Prior to the development of quantum mechanics as a separate theory, the mathematics used in physics consisted mainly of formal mathematical analysis, beginning with calculus, and increasing in complexity up to differential geometry and partial differential equations. Probability theory was used in statistical mechanics. Geometric intuition played a strong role in the first two and, accordingly, theories of relativity were formulated entirely in terms of differential geometric concepts. The phenomenology of quantum physics arose roughly between 1895 and 1915, and for the 10 to 15 years before the development of quantum mechanics (around 1925) physicists continued to think of quantum theory within the confines of what is now called classical physics, and in particular within the same mathematical structures. The most sophisticated example of this is the Sommerfeld–Wilson–Ishiwara quantization rule, which was formulated entirely on the classical phase space.

History of the formalism

The "old quantum theory" and the need for new mathematics

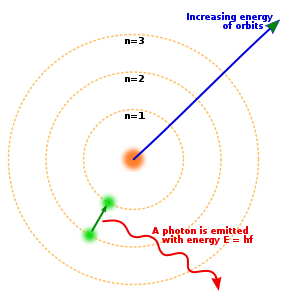

In 1900, Planck derived the blackbody spectrum, which was later used to avoid the classical ultraviolet catastrophe, by making the unorthodox assumption that, in the interaction of electromagnetic radiation with matter, energy could only be exchanged in discrete units which he called quanta. Planck postulated a direct proportionality between the frequency of radiation and the quantum of energy at that frequency. The proportionality constant, h, is now called Planck's constant in his honor.

In 1905, Einstein explained certain features of the photoelectric effect by assuming that Planck's energy quanta were actual particles, which were later dubbed photons.

All of these developments were phenomenological and challenged the theoretical physics of the time. Bohr and Sommerfeld went on to modify classical mechanics in an attempt to deduce the Bohr model from first principles. They proposed that, of all closed classical orbits traced by a mechanical system in its phase space, only the ones that enclosed an area which was an integer multiple of Planck's constant were actually allowed. The most sophisticated version of this formalism was the so-called Sommerfeld–Wilson–Ishiwara quantization. Although the Bohr model of the hydrogen atom could be explained in this way, the spectrum of the helium atom (classically an unsolvable 3-body problem) could not be predicted. The mathematical status of quantum theory remained uncertain for some time.
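As an illustration of the quantization rule described above, the condition can be written as a phase-space integral and applied to the one-dimensional harmonic oscillator. The notation here (coordinate q, momentum p, integer n, mass m, angular frequency ω) is assumed for this sketch rather than taken from the text:

```latex
\oint p \, dq = n h, \qquad n = 0, 1, 2, \ldots
% Harmonic oscillator: the orbit of energy E is the ellipse
%   p^2/(2m) + m\omega^2 q^2/2 = E,
% with semi-axes \sqrt{2mE} and \sqrt{2E/(m\omega^2)}, so the enclosed area is
\oint p \, dq = \pi \sqrt{2mE}\,\sqrt{\frac{2E}{m\omega^2}} = \frac{2\pi E}{\omega}
\quad\Longrightarrow\quad
E_n = n \, \frac{h\omega}{2\pi} = n \hbar \omega .
```

The rule gives evenly spaced energy levels, though without the zero-point term ħω/2 obtained in the later theory.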

In 1923, de Broglie proposed that wave–particle duality applied not only to photons but to electrons and every other physical system.

The situation changed rapidly in the years 1925–1930, when working mathematical foundations were found through the groundbreaking work of Erwin Schrödinger, Werner Heisenberg, Max Born, Pascual Jordan, and the foundational work of John von Neumann, Hermann Weyl and Paul Dirac, and it became possible to unify several different approaches in terms of a fresh set of ideas. The physical interpretation of the theory was also clarified in these years after Werner Heisenberg discovered the uncertainty relations and Niels Bohr introduced the idea of complementarity.

The "new quantum theory"

Werner Heisenberg's matrix mechanics, formulated in 1925, was the first successful attempt at replicating the observed quantization of atomic spectra. Shortly afterwards, Schrödinger created his wave mechanics. Schrödinger's formalism was considered easier to understand, visualize and calculate with, as it led to differential equations, which physicists were already familiar with solving. Within a year, it was shown that the two theories were equivalent.

Schrödinger himself initially did not understand the fundamental probabilistic nature of quantum mechanics, as he thought that the absolute square of the wave function of an electron should be interpreted as the charge density of an object smeared out over an extended, possibly infinite, volume of space. It was Max Born who introduced the interpretation of the absolute square of the wave function as the probability distribution of the position of a pointlike object. Born's idea was soon taken over by Niels Bohr in Copenhagen who then became the "father" of the Copenhagen interpretation of quantum mechanics. Schrödinger's wave function can be seen to be closely related to the classical Hamilton–Jacobi equation. The correspondence to classical mechanics was even more explicit, although somewhat more formal, in Heisenberg's matrix mechanics. In his PhD thesis project, Paul Dirac[2] discovered that the equation for the operators in the Heisenberg representation, as it is now called, closely translates to classical equations for the dynamics of certain quantities in the Hamiltonian formalism of classical mechanics, when one expresses them through Poisson brackets, a procedure now known as canonical quantization.

To be more precise, already before Schrödinger, the young postdoctoral fellow Werner Heisenberg invented his matrix mechanics, which was the first correct quantum mechanics and the essential breakthrough. Heisenberg's matrix mechanics formulation was based on algebras of infinite matrices, a very radical formulation in light of the mathematics of classical physics, although he started from the index terminology of the experimentalists of that time and was not even aware that his "index schemes" were matrices, as Born soon pointed out to him. In fact, in these early years, linear algebra was not generally popular with physicists in its present form.

Although Schrödinger himself proved the equivalence of his wave mechanics and Heisenberg's matrix mechanics after a year, the reconciliation of the two approaches and their modern abstraction as motions in Hilbert space is generally attributed to Paul Dirac, who wrote a lucid account in his 1930 classic The Principles of Quantum Mechanics. He is the third, and possibly most important, pillar of that field (he was soon the only one to have discovered a relativistic generalization of the theory). In that account, he introduced the bra–ket notation, together with an abstract formulation in terms of the Hilbert space used in functional analysis; he showed that Schrödinger's and Heisenberg's approaches were two different representations of the same theory, and found a third, most general one, which represented the dynamics of the system. His work was particularly fruitful in many types of generalizations of the field.

The first complete mathematical formulation of this approach, known as the Dirac–von Neumann axioms, is generally credited to John von Neumann's 1932 book Mathematical Foundations of Quantum Mechanics, although Hermann Weyl had already referred to Hilbert spaces (which he called unitary spaces) in his 1927 classic paper and book. It was developed in parallel with a new approach to the mathematical spectral theory based on linear operators rather than the quadratic forms that were David Hilbert's approach a generation earlier. Though theories of quantum mechanics continue to evolve to this day, there is a basic framework for the mathematical formulation of quantum mechanics which underlies most approaches and can be traced back to the mathematical work of John von Neumann. In other words, discussions about interpretation of the theory, and extensions to it, are now mostly conducted on the basis of shared assumptions about the mathematical foundations.

Later developments

The application of the new quantum theory to electromagnetism resulted in quantum field theory, which was developed starting around 1930. Quantum field theory has driven the development of more sophisticated formulations of quantum mechanics, of which the ones presented here are simple special cases.

- Path integral formulation

- Phase-space formulation of quantum mechanics & geometric quantization

- Quantum field theory in curved spacetime

- Axiomatic, algebraic and constructive quantum field theory

- C*-algebra formalism

- Generalized statistical model of quantum mechanics

A related topic is the relationship to classical mechanics. Any new physical theory is supposed to reduce to successful old theories in some approximation. For quantum mechanics, this translates into the need to study the so-called classical limit of quantum mechanics. Also, as Bohr emphasized, human cognitive abilities and language are inextricably linked to the classical realm, and so classical descriptions are intuitively more accessible than quantum ones. In particular, quantization, namely the construction of a quantum theory whose classical limit is a given and known classical theory, becomes an important area of quantum physics in itself.

Finally, some of the originators of quantum theory (notably Einstein and Schrödinger) were unhappy with what they thought were the philosophical implications of quantum mechanics. In particular, Einstein took the position that quantum mechanics must be incomplete, which motivated research into so-called hidden-variable theories. The issue of hidden variables has become in part an experimental issue with the help of quantum optics.

Postulates of quantum mechanics

A physical system is generally described by three basic ingredients: states; observables; and dynamics (or law of time evolution) or, more generally, a group of physical symmetries. A classical description can be given in a fairly direct way by a phase space model of mechanics: states are points in a phase space modeled as a symplectic manifold, observables are real-valued functions on it, time evolution is given by a one-parameter group of symplectic transformations of the phase space, and physical symmetries are realized by symplectic transformations. A quantum description normally consists of a Hilbert space of states; observables are self-adjoint operators on the space of states, time evolution is given by a one-parameter group of unitary transformations on the Hilbert space of states, and physical symmetries are realized by unitary transformations. (It is possible to map this Hilbert-space picture invertibly to a phase space formulation. See below.)

The following summary of the mathematical framework of quantum mechanics can be partly traced back to the Dirac–von Neumann axioms.[3]

Description of the state of a system

Each isolated physical system is associated with a (topologically) separable complex Hilbert space H with inner product ⟨φ|ψ⟩.

Postulate I: The state of an isolated physical system is represented, at a fixed time $t$, by a state vector $|\psi\rangle$ belonging to a Hilbert space $\mathcal{H}$ called the state space.

Separability is a mathematically convenient hypothesis, with the physical interpretation that the state is uniquely determined by countably many observations. Quantum states can be identified with equivalence classes in H, where two vectors (of length 1) represent the same state if they differ only by a phase factor.[4] As such, quantum states form a ray in projective Hilbert space, not a vector. Many textbooks fail to make this distinction, which could be partly a result of the fact that the Schrödinger equation itself involves Hilbert-space "vectors", with the result that the imprecise use of "state vector" rather than ray is very difficult to avoid.[5]

Accompanying Postulate I is the composite system postulate:[6]

The Hilbert space of a composite system is the Hilbert space tensor product of the state spaces associated with the component systems. For a non-relativistic system consisting of a finite number of distinguishable particles, the component systems are the individual particles.

In the presence of quantum entanglement, the quantum state of the composite system cannot be factored as a tensor product of states of its local constituents; instead, it is expressed as a sum, or superposition, of tensor products of states of component subsystems. A subsystem in an entangled composite system generally cannot be described by a state vector (or a ray), but instead is described by a density operator; such a quantum state is known as a mixed state. The density operator of a mixed state is a trace-class, nonnegative (positive semi-definite) self-adjoint operator ρ normalized to be of trace 1. In turn, any density operator of a mixed state can be represented as the state of a subsystem of a larger composite system in a pure state (see purification theorem).

In the absence of quantum entanglement, the quantum state of the composite system is called a separable state. The density matrix of a bipartite system in a separable state can be expressed as $\rho = \sum_k p_k \, \rho_1^k \otimes \rho_2^k$, where $p_k \ge 0$ and $\sum_k p_k = 1$. If there is only a single non-zero $p_k$, then the state can be expressed just as $\rho = \rho_1 \otimes \rho_2$, and is called simply separable or a product state.
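The distinction between entangled and product states can be checked numerically by tracing out one subsystem. The following is a minimal sketch in plain Python for two two-level systems; the helper names (`outer`, `partial_trace_second`, `purity`) are hypothetical and introduced only for this illustration.

```python
import math

def outer(u, v):
    """Return |u><v| as a nested list (the components of v are conjugated)."""
    return [[a * b.conjugate() for b in v] for a in u]

def partial_trace_second(rho, d1, d2):
    """Trace out the second subsystem of a (d1*d2) x (d1*d2) density matrix."""
    return [[sum(rho[i * d2 + k][j * d2 + k] for k in range(d2))
             for j in range(d1)] for i in range(d1)]

def trace(m):
    return sum(m[i][i] for i in range(len(m)))

def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def purity(rho):
    """tr(rho^2): equals 1 for a pure state and is below 1 for a mixed state."""
    return trace(matmul(rho, rho)).real

s = 1 / math.sqrt(2)
# Basis order: |00>, |01>, |10>, |11>
bell = [complex(s), 0j, 0j, complex(s)]       # (|00> + |11>) / sqrt(2), entangled
product = [complex(s), complex(s), 0j, 0j]    # |0> tensor (|0> + |1>) / sqrt(2)

rho_bell_A = partial_trace_second(outer(bell, bell), 2, 2)
rho_prod_A = partial_trace_second(outer(product, product), 2, 2)

print(purity(rho_bell_A))   # reduced state of the entangled pair is mixed
print(purity(rho_prod_A))   # reduced state of the product state stays pure
```

The reduced state of the entangled pair has purity below 1, so it cannot be written as a state vector, whereas the product state leaves each subsystem in a pure state.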

Measurement on a system

Description of physical quantities

Physical observables are represented by Hermitian matrices on H. Since these operators are Hermitian, their eigenvalues are always real, and represent the possible outcomes/results from measuring the corresponding observable. If the spectrum of the observable is discrete, then the possible results are quantized.

Postulate II.a: Every measurable physical quantity $\mathcal{A}$ is described by a Hermitian operator $A$ acting in the state space $\mathcal{H}$. This operator is an observable, meaning that its eigenvectors form a basis for $\mathcal{H}$. The result of measuring a physical quantity $\mathcal{A}$ must be one of the eigenvalues of the corresponding observable $A$.

Results of measurement

By spectral theory, we can associate a probability measure to the values of A in any state ψ. We can also show that the possible values of the observable A in any state must belong to the spectrum of A. The expectation value (in the sense of probability theory) of the observable A for the system in the state represented by the unit vector ψ ∈ H is $\langle\psi|A|\psi\rangle$. If we represent the state ψ in the basis formed by the eigenvectors of A, then the square of the modulus of the component attached to a given eigenvector is the probability of observing its corresponding eigenvalue.

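Postulate II.b: When the physical quantity $\mathcal{A}$ is measured on a system in a normalized state $|\psi\rangle$, the probability of obtaining an eigenvalue (denoted $a_n$ for discrete spectra and $\alpha$ for continuous spectra) of the corresponding observable $A$ is given by the amplitude squared of the appropriate wave function (projection onto the corresponding eigenvector):

$$\mathbb{P}(a_n) = |\langle a_n|\psi\rangle|^2 \quad \text{(discrete, nondegenerate spectrum)},$$

$$d\mathbb{P}(\alpha) = |\langle\alpha|\psi\rangle|^2 \, d\alpha \quad \text{(continuous, nondegenerate spectrum)}.$$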

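For a mixed state ρ, the expected value of A in the state ρ is $\operatorname{tr}(A\rho)$, and the probability of obtaining an eigenvalue $a_n$ in a discrete, nondegenerate spectrum of the corresponding observable $A$ is given by $\mathbb{P}(a_n) = \operatorname{tr}(|a_n\rangle\langle a_n|\rho) = \langle a_n|\rho|a_n\rangle$.

If the eigenvalue $a_n$ has degenerate, orthonormal eigenvectors $\{|a_{n1}\rangle, |a_{n2}\rangle, \dots, |a_{nm}\rangle\}$, then the projection operator onto the eigensubspace can be defined as the identity operator in the eigensubspace:

$$P_n = |a_{n1}\rangle\langle a_{n1}| + |a_{n2}\rangle\langle a_{n2}| + \dots + |a_{nm}\rangle\langle a_{nm}|,$$

and then $\mathbb{P}(a_n) = \operatorname{tr}(P_n\rho)$.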

Postulates II.a and II.b are collectively known as the Born rule of quantum mechanics.
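The Born rule for a discrete, nondegenerate spectrum can be illustrated with a two-level system. The sketch below, in plain Python, measures an observable with eigenvalues +1 and −1 (the Pauli matrix σ_x is assumed as the example) on a chosen state; the function names and the particular state are hypothetical choices made for this illustration.

```python
import math

def inner(u, v):
    """Inner product <u|v>, antilinear in the first argument."""
    return sum(a.conjugate() * b for a, b in zip(u, v))

def born_probabilities(eigenpairs, psi):
    """Map each nondegenerate eigenvalue a_n to P(a_n) = |<a_n|psi>|^2."""
    return {a: abs(inner(vec, psi)) ** 2 for a, vec in eigenpairs}

s = 1 / math.sqrt(2)
# Eigenvalues and normalized eigenvectors of sigma_x (assumed example observable)
sigma_x_eigenpairs = [
    (+1, [complex(s), complex(s)]),
    (-1, [complex(s), complex(-s)]),
]

# A normalized state, chosen only for illustration
psi = [complex(math.sqrt(3) / 2), complex(0.5)]

probs = born_probabilities(sigma_x_eigenpairs, psi)

# Expectation value as the probability-weighted sum of eigenvalues
expectation = sum(a * p for a, p in probs.items())
print(probs, expectation)
```

The probabilities sum to 1 because the eigenvectors form an orthonormal basis, and the weighted sum of eigenvalues agrees with ⟨ψ|A|ψ⟩ computed directly.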

Effect of measurement on the state

When a measurement is performed, only one result is obtained (according to some interpretations of quantum mechanics). This is modeled mathematically as the processing of additional information from the measurement, confining the probabilities of an immediate second measurement of the same observable. In the case of a discrete, non-degenerate spectrum, two sequential measurements of the same observable will always give the same value assuming the second immediately follows the first. Therefore, the state vector must change as a result of measurement, and collapse onto the eigensubspace associated with the eigenvalue measured.

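Postulate II.c: If the measurement of the physical quantity $\mathcal{A}$ on the system in the state $|\psi\rangle$ gives the result $a_n$, then the state of the system immediately after the measurement is the normalized projection of $|\psi\rangle$ onto the eigensubspace associated with $a_n$:

$$|\psi\rangle \;\longrightarrow\; \frac{P_n|\psi\rangle}{\sqrt{\langle\psi|P_n|\psi\rangle}},$$

where $P_n$ is the projection operator onto that eigensubspace.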

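For a mixed state ρ, after obtaining an eigenvalue $a_n$ in a discrete, nondegenerate spectrum of the corresponding observable $A$, the updated state is given by $\rho' = \dfrac{P_n \rho P_n^\dagger}{\operatorname{tr}(P_n \rho P_n^\dagger)}$, with $P_n = |a_n\rangle\langle a_n|$. If the eigenvalue $a_n$ has degenerate, orthonormal eigenvectors $\{|a_{n1}\rangle, |a_{n2}\rangle, \dots, |a_{nm}\rangle\}$, then the projection operator onto the eigensubspace is $P_n = |a_{n1}\rangle\langle a_{n1}| + |a_{n2}\rangle\langle a_{n2}| + \dots + |a_{nm}\rangle\langle a_{nm}|$.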

Postulate II.c is sometimes called the "state update rule" or "collapse rule". Together with the Born rule (Postulates II.a and II.b), it forms a complete representation of measurements, and the three are sometimes collectively called the measurement postulate(s).
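The state update rule can be sketched for a degenerate eigenvalue as follows. This plain-Python example assumes a three-level system in which one eigenvalue has a two-dimensional eigensubspace; the helper names (`project`, `collapse`) and the chosen state are hypothetical and serve only as an illustration.

```python
import math

def project(basis_vectors, psi):
    """Apply P = sum_i |e_i><e_i| to psi, for orthonormal vectors e_i."""
    out = [0j] * len(psi)
    for e in basis_vectors:
        amp = sum(x.conjugate() * y for x, y in zip(e, psi))  # <e|psi>
        out = [o + amp * x for o, x in zip(out, e)]
    return out

def norm_sq(v):
    return sum(abs(x) ** 2 for x in v)

def collapse(basis_vectors, psi):
    """Return (outcome probability, normalized post-measurement state)."""
    projected = project(basis_vectors, psi)
    p = norm_sq(projected)                  # equals <psi|P|psi>
    if p == 0:
        raise ValueError("outcome has zero probability")
    return p, [x / math.sqrt(p) for x in projected]

# Orthonormal basis; e0 and e1 are assumed to share one degenerate eigenvalue
e0, e1, e2 = [1 + 0j, 0j, 0j], [0j, 1 + 0j, 0j], [0j, 0j, 1 + 0j]
psi = [complex(1 / math.sqrt(3))] * 3       # equal superposition

p_first, post = collapse([e0, e1], psi)     # first measurement
p_repeat, _ = collapse([e0, e1], post)      # immediate repetition
print(p_first, p_repeat)
```

An immediate repetition of the measurement returns the same outcome with probability 1 (up to rounding), which is the behaviour the collapse rule is designed to reproduce.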

Note that the projection-valued measures (PVM) described in the measurement postulate(s) can be generalized to positive operator-valued measures (POVM), which describe the most general kind of measurement in quantum mechanics. A POVM can be understood as the effect on a component subsystem when a PVM is performed on a larger, composite system (see Naimark's dilation theorem).

Time evolution of a system

Though it is possible to derive the Schrödinger equation, which describes how a state vector evolves in time, most texts assert the equation as a postulate. Common derivations include using the de Broglie hypothesis or path integrals.

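Postulate III: The time evolution of the state vector $|\psi(t)\rangle$ is governed by the Schrödinger equation, where $H(t)$ is the observable associated with the total energy of the system (called the Hamiltonian):

$$i\hbar \frac{d}{dt}|\psi(t)\rangle = H(t)|\psi(t)\rangle$$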

Equivalently, the time evolution postulate can be stated as:

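The time evolution of a closed system is described by a unitary transformation $U(t; t_0)$ on the initial state:

$$|\psi(t)\rangle = U(t; t_0)|\psi(t_0)\rangle$$

For a closed system in a mixed state ρ, the time evolution is $\rho(t) = U(t; t_0)\,\rho(t_0)\,U^\dagger(t; t_0)$.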
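Unitary evolution can be made concrete with a two-level system. The sketch below assumes the time-independent Hamiltonian H = (ħω/2)σ_x, for which the propagator has a closed form; the function name `evolve` and the numerical values are hypothetical and chosen only for illustration.

```python
import math

def evolve(psi0, omega, t):
    """Apply U(t) = exp(-i H t / hbar) for H = (hbar * omega / 2) * sigma_x.

    Because sigma_x squares to the identity,
    U(t) = cos(omega t / 2) I - i sin(omega t / 2) sigma_x.
    """
    c, s = math.cos(omega * t / 2), math.sin(omega * t / 2)
    a, b = psi0
    return [c * a - 1j * s * b, -1j * s * a + c * b]

def norm_sq(v):
    return sum(abs(x) ** 2 for x in v)

omega = 2.0                       # illustrative angular frequency
psi0 = [1 + 0j, 0j]               # start in the first basis state

# After t = pi / omega the population has moved entirely to the second state
half_period = evolve(psi0, omega, math.pi / omega)

# Group property: evolving for 0.2 and then 0.5 equals evolving for 0.7
two_steps = evolve(evolve(psi0, omega, 0.2), omega, 0.5)
one_step = evolve(psi0, omega, 0.7)
print(norm_sq(half_period), two_steps, one_step)
```

The norm of the state is preserved at all times, and successive evolutions compose as U(t)U(s) = U(t + s), as expected of a one-parameter unitary group.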

The evolution of an open quantum system can be described by quantum operations (in an operator sum formalism) and quantum instruments, and generally does not have to be unitary.

Other implications of the postulates

- Physical symmetries act on the Hilbert space of quantum states unitarily or antiunitarily due to Wigner's theorem (supersymmetry is another matter entirely).

- Density operators are those that are in the closure of the convex hull of the one-dimensional orthogonal projectors. Conversely, one-dimensional orthogonal projectors are extreme points of the set of density operators. Physicists also call one-dimensional orthogonal projectors pure states and other density operators mixed states.

- One can in this formalism state Heisenberg's uncertainty principle and prove it as a theorem, although the exact historical sequence of events, concerning who derived what and under which framework, is the subject of historical investigations outside the scope of this article.

- Recent research has shown[7] that the composite system postulate (tensor product postulate) can be derived from the state postulate (Postulate I) and the measurement postulates (Postulates II). Moreover, it has also been shown[8] that the measurement postulates (Postulates II) can be derived from "unitary quantum mechanics", which includes only the state postulate (Postulate I), the composite system postulate (tensor product postulate) and the unitary evolution postulate (Postulate III).
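For reference, the form in which the uncertainty principle is usually stated as a theorem within this formalism is the Robertson inequality. The symbols below (observables A and B, and their standard deviations σ_A and σ_B in a state ψ) are notation assumed for this sketch:

```latex
\sigma_A \, \sigma_B \;\ge\; \frac{1}{2}\,\bigl|\langle\psi|[A,B]|\psi\rangle\bigr|,
\qquad
\sigma_A^2 = \langle\psi|A^2|\psi\rangle - \langle\psi|A|\psi\rangle^2 .
% Position and momentum, with [x, p] = i\hbar:
\sigma_x \, \sigma_p \;\ge\; \frac{\hbar}{2} .
```

The bound is nontrivial only when the two observables fail to commute, which is the mathematical expression of the limit on simultaneous measurement.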

Furthermore, to the postulates of quantum mechanics one should also add basic statements on the properties of spin and Pauli's exclusion principle, see below.

Spin

In addition to their other properties, all particles possess a quantity called spin, an intrinsic angular momentum. Despite the name, particles do not literally spin around an axis, and quantum mechanical spin has no correspondence in classical physics. In the position representation, a spinless wavefunction has position r and time t as continuous variables, ψ = ψ(r, t). For spin wavefunctions the spin is an additional discrete variable: ψ = ψ(r, t, σ), where σ takes the values

$$\sigma = -S\hbar,\; -(S-1)\hbar,\; \dots,\; +(S-1)\hbar,\; +S\hbar .$$

That is, the state of a single particle with spin S is represented by a (2S + 1)-component spinor of complex-valued wave functions.

Two classes of particles with very different behaviour are bosons which have integer spin (S = 0, 1, 2, ...), and fermions possessing half-integer spin (S = 1⁄2, 3⁄2, 5⁄2, ...).
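The (2S + 1) allowed values of the spin variable can be enumerated directly. The following is a minimal sketch in plain Python, working in units of ħ; the function name `spin_projections` is hypothetical and chosen only for illustration.

```python
from fractions import Fraction

def spin_projections(S):
    """Return the 2S + 1 allowed spin projections, in units of hbar.

    S must be a non-negative integer or half-integer,
    for example 0, Fraction(1, 2) or 1.
    """
    S = Fraction(S)
    if S < 0 or (2 * S).denominator != 1:
        raise ValueError("S must be a non-negative integer or half-integer")
    return [-S + k for k in range(int(2 * S) + 1)]

spin_half = spin_projections(Fraction(1, 2))   # fermion: two components
spin_one = spin_projections(1)                 # boson: three components
print(spin_half, spin_one)
```

Exact fractions are used so that half-integer values are represented without rounding.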

Symmetrization postulate

In quantum mechanics, two particles can be distinguished from one another using two methods. By performing a measurement of intrinsic properties of each particle, particles of different types can be distinguished. Otherwise, if the particles are identical, their trajectories can be tracked, which distinguishes the particles based on the locality of each particle. While the second method is permitted in classical mechanics (i.e. all classical particles are treated as distinguishable), the same cannot be said for quantum mechanical particles, since the process is infeasible due to the fundamental uncertainty principles that govern small scales. Hence the requirement of indistinguishability of quantum particles is expressed by the symmetrization postulate. The postulate is applicable to a system of bosons or fermions, for example, in predicting the spectrum of the helium atom. The postulate, explained in the following sections, can be stated as follows:

The wavefunction of a system of N identical particles (in 3D) is either totally symmetric (bosons) or totally antisymmetric (fermions) under interchange of any pair of particles.

Exceptions can occur when the particles are constrained to two spatial dimensions, where particles known as anyons can exist; these are said to have a continuum of statistical properties spanning the range between fermions and bosons.[9] The connection between the behaviour of identical particles and their spin is given by the spin–statistics theorem.

It can be shown that two particles localized in different regions of space can still be represented using a symmetrized/antisymmetrized wavefunction and that independent treatment of these wavefunctions gives the same result.[10] Hence the symmetrization postulate is applicable in the general case of a system of identical particles.

Exchange degeneracy

In a system of identical particles, let P be the exchange operator, which acts on the wavefunction as:

$$P\bigl(\cdots|\psi\rangle|\phi\rangle\cdots\bigr) \equiv \cdots|\phi\rangle|\psi\rangle\cdots$$

If a physical system of identical particles is given, the wavefunctions of all the particles can be known from observation, but they cannot be assigned to individual particles. Thus, the exchanged wavefunction above represents the same physical state as the original one, which implies that the wavefunction is not unique. This is known as exchange degeneracy.[11]

More generally, consider a linear combination of such states, $|\Psi\rangle$. For the best representation of the physical system, we expect this to be an eigenvector of P, since the exchange operator is not expected to give completely different vectors in projective Hilbert space. Since $P^2 = 1$, the possible eigenvalues of P are +1 and −1. The states of an identical-particle system are represented as symmetric for the +1 eigenvalue or antisymmetric for the −1 eigenvalue as follows:

$$P|\cdots n_i, n_j \cdots; S\rangle = +|\cdots n_i, n_j \cdots; S\rangle$$

$$P|\cdots n_i, n_j \cdots; A\rangle = -|\cdots n_i, n_j \cdots; A\rangle$$

The explicit symmetric/antisymmetric form of $|\Psi\rangle$ is constructed using a symmetrizer or antisymmetrizer operator. Particles that form symmetric states are called bosons and those that form antisymmetric states are called fermions. The relation of spin to this classification is given by the spin–statistics theorem, which shows that integer-spin particles are bosons and half-integer-spin particles are fermions.

Pauli exclusion principle

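The property of spin relates to another basic property concerning systems of N identical particles: the Pauli exclusion principle, which is a consequence of the following permutation behaviour of an N-particle wave function; again in the position representation one must postulate that for the transposition of any two of the N particles one always should have

$$\psi(\dots, \mathbf{r}_i, \sigma_i, \dots, \mathbf{r}_j, \sigma_j, \dots) = (-1)^{2S}\, \psi(\dots, \mathbf{r}_j, \sigma_j, \dots, \mathbf{r}_i, \sigma_i, \dots)$$

i.e., on transposition of the arguments of any two particles the wavefunction should reproduce itself, apart from a prefactor $(-1)^{2S}$ which is +1 for bosons, but −1 for fermions. Electrons are fermions with S = 1/2; quanta of light are bosons with S = 1.

Due to the form of the anti-symmetrized wavefunction:

$$\Psi^{(A)}_{n_1 \cdots n_N}(x_1, \ldots, x_N) = \frac{1}{\sqrt{N!}} \begin{vmatrix} \psi_{n_1}(x_1) & \psi_{n_1}(x_2) & \cdots & \psi_{n_1}(x_N) \\ \psi_{n_2}(x_1) & \psi_{n_2}(x_2) & \cdots & \psi_{n_2}(x_N) \\ \vdots & \vdots & \ddots & \vdots \\ \psi_{n_N}(x_1) & \psi_{n_N}(x_2) & \cdots & \psi_{n_N}(x_N) \end{vmatrix}$$

if the wavefunction of each particle is completely determined by a set of quantum numbers, then two fermions cannot share the same set of quantum numbers, since the resulting function cannot be anti-symmetrized (i.e. the above formula gives zero). The same cannot be said of bosons, since their wavefunction is:

$$\Psi^{(S)}_{n_1 \cdots n_N}(x_1, \ldots, x_N) = \frac{1}{\sqrt{N! \, \prod_j m_j!}} \sum_{p} \psi_{n_{p(1)}}(x_1) \cdots \psi_{n_{p(N)}}(x_N)$$

where the sum runs over all permutations p of the N labels and $m_j$ is the number of particles with the same (j-th distinct) wavefunction.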
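The vanishing of the antisymmetrized wavefunction for two fermions in the same single-particle state can be checked for N = 2. The sketch below is in plain Python and represents each single-particle state as a vector of amplitudes; the function names and the two basis states `up` and `down` are hypothetical choices for this illustration, and the combinations are left unnormalized.

```python
def two_particle_state(a, b, sign):
    """Return Psi[i][j] = a_i b_j + sign * a_j b_i (unnormalized).

    sign = +1 gives the symmetric (bosonic) combination,
    sign = -1 the antisymmetric (fermionic) one.
    """
    d = len(a)
    return [[a[i] * b[j] + sign * a[j] * b[i] for j in range(d)]
            for i in range(d)]

def norm_sq(psi):
    return sum(abs(x) ** 2 for row in psi for x in row)

def exchange(psi):
    """Swap the two particle labels: (P Psi)[i][j] = Psi[j][i]."""
    d = len(psi)
    return [[psi[j][i] for j in range(d)] for i in range(d)]

up, down = [1 + 0j, 0j], [0j, 1 + 0j]

fermions_distinct = two_particle_state(up, down, -1)
fermions_same = two_particle_state(up, up, -1)    # same quantum numbers
bosons_same = two_particle_state(up, up, +1)

print(norm_sq(fermions_same))    # zero: no such fermionic state exists
print(norm_sq(bosons_same))      # nonzero: bosons may share a state
```

Applying `exchange` to `fermions_distinct` flips its overall sign, which is the −1 eigenvalue of the exchange operator.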

Exceptions for symmetrization postulate

In nonrelativistic quantum mechanics all particles are either bosons or fermions; in relativistic quantum theories "supersymmetric" theories also exist, where a particle is a linear combination of a bosonic and a fermionic part. Only in dimension d = 2 can one construct entities where $(-1)^{2S}$ is replaced by an arbitrary complex number with magnitude 1, called anyons. In relativistic quantum mechanics, the spin–statistics theorem proves that, under a certain set of assumptions, integer-spin particles are bosons and half-integer-spin particles are fermions. Anyons, which form neither symmetric nor antisymmetric states, are said to have fractional spin.

Although spin and the Pauli principle can only be derived from relativistic generalizations of quantum mechanics, the properties mentioned in the last two paragraphs belong to the basic postulates already in the non-relativistic limit. In particular, many important properties in natural science, e.g. the periodic system of chemistry, are consequences of the two properties.

Mathematical structure of quantum mechanics

Pictures of dynamics

- In the so-called Schrödinger picture of quantum mechanics, the dynamics is given as follows:

The time evolution of the state is given by a differentiable function from the real numbers R, representing instants of time, to the Hilbert space of system states. This map is characterized by a differential equation as follows: If |ψ(t)⟩ denotes the state of the system at any one time t, the following general Schrödinger equation holds:

$$i\hbar \frac{d}{dt}|\psi(t)\rangle = H|\psi(t)\rangle,$$

where H is a densely defined self-adjoint operator, called the system Hamiltonian, i is the imaginary unit and ħ is the reduced Planck constant. As an observable, H corresponds to the total energy of the system.

Alternatively, by Stone's theorem one can state that there is a strongly continuous one-parameter unitary map U(t): H → H such that

$$|\psi(t+s)\rangle = U(t)|\psi(s)\rangle$$

for all times s, t. The existence of a self-adjoint Hamiltonian H such that

$$U(t) = e^{-(i/\hbar) t H}$$

is a consequence of Stone's theorem on one-parameter unitary groups. It is assumed that H does not depend on time and that the perturbation starts at t0 = 0; otherwise one must use the Dyson series, formally written as

$$U(t) = \mathcal{T}\left[\exp\left(-\frac{i}{\hbar}\int_{t_0}^{t} dt'\, H(t')\right)\right],$$

where $\mathcal{T}$ is Dyson's time-ordering symbol. (This symbol permutes a product of noncommuting operators of the form

$$B_1(t_1) \cdot B_2(t_2) \cdots B_n(t_n)$$

into the uniquely determined re-ordered expression

$$B_{i_1}(t_{i_1}) \cdot B_{i_2}(t_{i_2}) \cdots B_{i_n}(t_{i_n}) \quad \text{with} \quad t_{i_1} \ge t_{i_2} \ge \dots \ge t_{i_n}.$$

The result is a causal chain, with the primary cause in the past on the far right-hand side and the present effect on the far left-hand side.)

- The Heisenberg picture of quantum mechanics focuses on observables and instead of considering states as varying in time, it regards the states as fixed and the observables as changing. To go from the Schrödinger to the Heisenberg picture one needs to define time-independent states and time-dependent operators thus:

$$|\psi\rangle = |\psi(0)\rangle, \qquad A(t) = U(-t)\, A\, U(t).$$

It is then easily checked that the expected values of all observables are the same in both pictures,

$$\langle\psi|A(t)|\psi\rangle = \langle\psi(t)|A|\psi(t)\rangle,$$

and that the time-dependent Heisenberg operators satisfy the general Heisenberg-picture equation

$$\frac{d}{dt}A(t) = \frac{i}{\hbar}[H, A(t)] + \frac{\partial A(t)}{\partial t},$$

which is true for time-dependent A = A(t). Notice that the commutator expression is purely formal when one of the operators is unbounded. One would specify a representation for the expression to make sense of it.

- The so-called Dirac picture or interaction picture has time-dependent states and observables, evolving with respect to different Hamiltonians. This picture is most useful when the evolution of the observables can be solved exactly, confining any complications to the evolution of the states. For this reason, the Hamiltonian for the observables is called "free Hamiltonian" and the Hamiltonian for the states is called "interaction Hamiltonian". In symbols (Dirac picture):

$$i\hbar \frac{d}{dt}|\psi(t)\rangle = H_{\rm int}(t)|\psi(t)\rangle$$

$$i\hbar \frac{d}{dt}A(t) = [A(t), H_0]$$

The interaction picture does not always exist, though. In interacting quantum field theories, Haag's theorem states that the interaction picture does not exist. This is because the Hamiltonian cannot be split into a free and an interacting part within a superselection sector. Moreover, even if in the Schrödinger picture the Hamiltonian does not depend on time, e.g. H = H0 + V, in the interaction picture it does, at least if V does not commute with H0, since

$$H_{\rm int}(t) \equiv e^{(i/\hbar) t H_0}\, V\, e^{-(i/\hbar) t H_0}.$$

So the above-mentioned Dyson series has to be used anyhow.

The Heisenberg picture is the closest to classical Hamiltonian mechanics (for example, the commutators appearing in the above equations directly translate into the classical Poisson brackets); but this is already rather "high-browed", and the Schrödinger picture is considered easiest to visualize and understand by most people, to judge from pedagogical accounts of quantum mechanics. The Dirac picture is the one used in perturbation theory, and is especially associated with quantum field theory and many-body physics.
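The agreement of expectation values between the Schrödinger and Heisenberg pictures can be verified numerically for a small system. The sketch below, in plain Python, assumes a two-level system with Hamiltonian H = (ħω/2)σ_x and observable σ_z; all helper names and numerical values are hypothetical and chosen only for illustration.

```python
import math

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    return [[a[j][i].conjugate() for j in range(2)] for i in range(2)]

def apply(a, v):
    return [sum(a[i][k] * v[k] for k in range(2)) for i in range(2)]

def expval(v, a):
    """<v|a|v> for a normalized two-component vector v."""
    av = apply(a, v)
    return sum(x.conjugate() * y for x, y in zip(v, av)).real

def propagator(omega, t):
    """U(t) = exp(-i H t / hbar) for H = (hbar * omega / 2) * sigma_x."""
    c, s = math.cos(omega * t / 2), math.sin(omega * t / 2)
    return [[complex(c), -1j * s], [-1j * s, complex(c)]]

sigma_z = [[1 + 0j, 0j], [0j, -1 + 0j]]
psi0 = [1 + 0j, 0j]
omega, t = 1.3, 0.7                   # illustrative values

U = propagator(omega, t)

# Schrödinger picture: evolve the state, keep the operator fixed
schrodinger = expval(apply(U, psi0), sigma_z)

# Heisenberg picture: keep the state fixed, evolve the operator as U(-t) A U(t)
heisenberg = expval(psi0, matmul(dagger(U), matmul(sigma_z, U)))

print(schrodinger, heisenberg)
```

Both numbers equal cos(ωt) for this choice of Hamiltonian, observable and initial state, up to rounding.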

Similar equations can be written for any one-parameter unitary group of symmetries of the physical system. Time would be replaced by a suitable coordinate parameterizing the unitary group (for instance, a rotation angle, or a translation distance) and the Hamiltonian would be replaced by the conserved quantity associated with the symmetry (for instance, angular or linear momentum).

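Summary (for a time-independent Hamiltonian; the subscript S marks Schrödinger-picture quantities and $H_{0,S}$ is the free Hamiltonian):

| Evolution of: | Schrödinger (S) | Heisenberg (H) | Interaction (I) |
|---|---|---|---|
| Ket state | $\lvert\psi_S(t)\rangle = e^{-iH_S t/\hbar}\lvert\psi_S(0)\rangle$ | constant | $\lvert\psi_I(t)\rangle = e^{iH_{0,S} t/\hbar}\lvert\psi_S(t)\rangle$ |
| Observable | constant | $A_H(t) = e^{iH_S t/\hbar} A_S e^{-iH_S t/\hbar}$ | $A_I(t) = e^{iH_{0,S} t/\hbar} A_S e^{-iH_{0,S} t/\hbar}$ |
| Density matrix | $\rho_S(t) = e^{-iH_S t/\hbar} \rho_S(0) e^{iH_S t/\hbar}$ | constant | $\rho_I(t) = e^{iH_{0,S} t/\hbar} \rho_S(t) e^{-iH_{0,S} t/\hbar}$ |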

Representations

The original form of the Schrödinger equation depends on choosing a particular representation of Heisenberg's canonical commutation relations. The Stone–von Neumann theorem dictates that all irreducible representations of the finite-dimensional Heisenberg commutation relations are unitarily equivalent. A systematic understanding of its consequences has led to the phase space formulation of quantum mechanics, which works in full phase space instead of Hilbert space, and so has a more intuitive link to the classical limit. This picture also simplifies considerations of quantization, the deformation extension from classical to quantum mechanics.
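As a concrete instance of choosing a representation, the canonical commutation relation for one degree of freedom can be checked in the position representation. The operators and test function below are notation assumed for this sketch (x acting by multiplication, p acting as −iħ d/dx on a differentiable function ψ):

```latex
(\hat{x}\psi)(x) = x\,\psi(x), \qquad (\hat{p}\psi)(x) = -i\hbar\,\frac{d\psi}{dx}
% Apply the commutator to an arbitrary differentiable \psi:
[\hat{x},\hat{p}]\,\psi
  = x\left(-i\hbar\,\frac{d\psi}{dx}\right) + i\hbar\,\frac{d}{dx}\bigl(x\,\psi\bigr)
  = -i\hbar\,x\,\frac{d\psi}{dx} + i\hbar\,\psi + i\hbar\,x\,\frac{d\psi}{dx}
  = i\hbar\,\psi
\quad\Longrightarrow\quad
[\hat{x},\hat{p}] = i\hbar .
```

In the momentum representation the roles are exchanged, with p acting by multiplication and x as a derivative, and the same relation holds.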

The quantum harmonic oscillator is an exactly solvable system where the different representations are easily compared. There, apart from the Heisenberg, or Schrödinger (position or momentum), or phase-space representations, one also encounters the Fock (number) representation and the Segal–Bargmann (Fock-space or coherent state) representation (named after Irving Segal and Valentine Bargmann). All four are unitarily equivalent.

Time as an operator

The framework presented so far singles out time as the parameter that everything depends on. It is possible to formulate mechanics in such a way that time itself becomes an observable associated with a self-adjoint operator. At the classical level, it is possible to arbitrarily parameterize the trajectories of particles in terms of an unphysical parameter s, and in that case the time t becomes an additional generalized coordinate of the physical system. At the quantum level, translations in s would be generated by a "Hamiltonian" H − E, where E is the energy operator and H is the "ordinary" Hamiltonian. However, since s is an unphysical parameter, physical states must be left invariant by "s-evolution", and so the physical state space is the kernel of H − E (this requires the use of a rigged Hilbert space and a renormalization of the norm).

This is related to the quantization of constrained systems and quantization of gauge theories. It is also possible to formulate a quantum theory of "events" where time becomes an observable.[12]

The problem of measurement

The picture given in the preceding paragraphs is sufficient for the description of a completely isolated system. However, it fails to account for one of the main differences between quantum mechanics and classical mechanics, namely the effects of measurement.[13] The von Neumann description of quantum measurement of an observable A, when the system is prepared in a pure state ψ, is the following (note, however, that von Neumann's description dates back to the 1930s and is based on experiments as performed during that time, more specifically the Compton–Simon experiment; it is not applicable to most present-day measurements within the quantum domain):

- Let A have spectral resolution $A = \int \lambda \, d\operatorname{E}_A(\lambda)$, where $\operatorname{E}_A$ is the resolution of the identity (also called projection-valued measure) associated with A. Then the probability of the measurement outcome lying in an interval B of $\mathbb{R}$ is $\|\operatorname{E}_A(B)\psi\|^2$. In other words, the probability is obtained by integrating the characteristic function of B against the countably additive measure $\langle \psi \mid \operatorname{E}_A \psi \rangle$.

- If the measured value is contained in B, then immediately after the measurement, the system will be in the (generally non-normalized) state $\operatorname{E}_A(B)\psi$. If the measured value does not lie in B, replace B by its complement for the above state.

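For example, suppose the state space is the n-dimensional complex Hilbert space $\mathbb{C}^n$ and A is a Hermitian matrix with eigenvalues $\lambda_i$, with corresponding eigenvectors $\psi_i$. The projection-valued measure associated with A, $\operatorname{E}_A$, is then

$$\operatorname{E}_A(B) = |\psi_i\rangle\langle\psi_i|,$$

where B is a Borel set containing only the single eigenvalue $\lambda_i$. If the system is prepared in state

$$|\psi\rangle,$$

then the probability of a measurement returning the value $\lambda_i$ can be calculated by integrating the spectral measure

$$\langle \psi \mid \operatorname{E}_A \psi \rangle$$

over $B_i$. This gives trivially

$$\langle\psi|\psi_i\rangle\langle\psi_i|\psi\rangle = |\langle\psi|\psi_i\rangle|^2.$$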

The characteristic property of the von Neumann measurement scheme is that repeating the same measurement will give the same results. This is also called the projection postulate.
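The finite-dimensional example and the repeatability property can be sketched in a few lines. The observable (the Pauli matrix σx on C², with eigenvalues +1 and −1) and the prepared state are arbitrary choices made for illustration, and the helper names are hypothetical.

```python
import math

# Observable A = sigma_x on C^2, with eigenvalues +1 and -1 (illustrative choice).
s = 1 / math.sqrt(2)
eigen = {+1: [s, s], -1: [s, -s]}   # eigenvalue -> normalized eigenvector
psi = [1.0, 0.0]                    # prepared state (assumption)


def inner(u, v):
    """Inner product <u|v>, conjugate-linear in the first argument."""
    return sum(complex(a).conjugate() * b for a, b in zip(u, v))


def project(vec, onto):
    """Apply the rank-1 projector |onto><onto| to vec."""
    c = inner(onto, vec)
    return [c * x for x in onto]


def probability(state, value):
    """Born rule |<psi_i|state>|^2 for a normalized state."""
    return abs(inner(eigen[value], state)) ** 2


def collapse(state, value):
    """Projected and renormalized post-measurement state."""
    out = project(state, eigen[value])
    norm = math.sqrt(inner(out, out).real)
    return [x / norm for x in out]


probs = {v: probability(psi, v) for v in eigen}       # first measurement
after = collapse(psi, +1)                             # suppose +1 was observed
repeat = {v: probability(after, v) for v in eigen}    # same measurement again
print(probs, repeat)
```

With this state both outcomes are equally likely on the first measurement, while the repeated measurement returns +1 with certainty, which is the content of the projection postulate.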

A more general formulation replaces the projection-valued measure with a positive-operator valued measure (POVM). To illustrate, take again the finite-dimensional case. Here we would replace the rank-1 projections $|\psi_i\rangle\langle\psi_i|$ by a finite set of positive operators $F_i F_i^*$ whose sum is still the identity operator, as before (the resolution of identity).

Since the $F_i F_i^*$ operators need not be mutually orthogonal projections, the projection postulate of von Neumann no longer holds.

The same formulation applies to general mixed states.
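A small concrete POVM makes the contrast with projective measurement visible. The sketch below uses the three-outcome "trine" measurement on a qubit, which is a standard textbook example but is not taken from this article; the operators F_k and the prepared state are assumptions chosen for illustration.

```python
import math

# Trine POVM on a qubit (illustrative choice): E_k = F_k F_k^* with
# F_k = sqrt(2/3) |phi_k><phi_k|, so that E_k = (2/3) |phi_k><phi_k|.
phis = [[math.cos(k * math.pi / 3), math.sin(k * math.pi / 3)] for k in range(3)]
effects = [[[(2 / 3) * u[i] * u[j] for j in range(2)] for i in range(2)]
           for u in phis]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def expectation(E, v):
    """<v|E|v> for a real vector v and a real symmetric matrix E."""
    return sum(v[i] * E[i][j] * v[j] for i in range(2) for j in range(2))


# Completeness: the three effects sum to the identity operator.
total = [[sum(E[i][j] for E in effects) for j in range(2)] for i in range(2)]

psi = [1.0, 0.0]                                  # prepared state (assumption)
probs = [expectation(E, psi) for E in effects]    # outcome probabilities

# Unlike projectors onto distinct eigenvectors, the effects are not orthogonal.
overlap = matmul(effects[0], effects[1])
print(total, probs, overlap)
```

The probabilities still sum to one, but there are three outcomes in a two-dimensional space and the product of two different effects is non-zero, so the outcomes cannot correspond to mutually orthogonal projections.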

In von Neumann's approach, the state transformation due to measurement is distinct from that due to time evolution in several ways. For example, time evolution is deterministic and unitary whereas measurement is non-deterministic and non-unitary. However, since both types of state transformation take one quantum state to another, this difference was viewed by many as unsatisfactory. The POVM formalism views measurement as one among many other quantum operations, which are described by completely positive maps which do not increase the trace.

In any case it seems that the above-mentioned problems can only be resolved if the time evolution included not only the quantum system, but also, and essentially, the classical measurement apparatus (see above).

List of mathematical tools

Part of the folklore of the subject concerns the mathematical physics textbook Methods of Mathematical Physics put together by Richard Courant from David Hilbert's Göttingen University courses. The story is told (by mathematicians) that physicists had dismissed the material as not interesting in the current research areas, until the advent of Schrödinger's equation. At that point it was realised that the mathematics of the new quantum mechanics was already laid out in it. It is also said that Heisenberg had consulted Hilbert about his matrix mechanics, and Hilbert observed that his own experience with infinite-dimensional matrices had derived from differential equations, advice which Heisenberg ignored, missing the opportunity to unify the theory as Weyl and Dirac did a few years later. Whatever the basis of the anecdotes, the mathematics of the theory was conventional at the time, whereas the physics was radically new.

The main tools include:

- linear algebra: complex numbers, eigenvectors, eigenvalues

- functional analysis: Hilbert spaces, linear operators, spectral theory

- differential equations: partial differential equations, separation of variables, ordinary differential equations, Sturm–Liouville theory, eigenfunctions

- harmonic analysis: Fourier transforms

Notes

1. Byron & Fuller 1992, p. 277.

2. Dirac 1925.

3. Cohen-Tannoudji, Diu & Laloë 2020.

4. Bäuerle & de Kerf 1990, p. 330.

5. Solem & Biedenharn 1993.

6. Jauch, Wigner & Yanase 1997.

7. Carcassi, Maccone & Aidala 2021.

8. Masanes, Galley & Müller 2019.

9. Sakurai & Napolitano 2021, p. 443.

10. Sakurai & Napolitano 2021, pp. 434–437.

11. Cohen-Tannoudji, Diu & Laloë 2020, pp. 1375–1377.

12. Edwards 1979.

13. Greenstein & Zajonc 2006, p. 215.

References

- Bäuerle, Gerard G. A.; de Kerf, Eddy A. (1990). Lie Algebras, Part 1: Finite and Infinite Dimensional Lie Algebras and Applications in Physics. Studies in Mathematical Physics. Amsterdam: North Holland. ISBN 0-444-88776-8.

- Byron, Frederick W.; Fuller, Robert W. (1992). Mathematics of Classical and Quantum Physics. New York: Courier Corporation. ISBN 978-0-486-67164-2.

- Carcassi, Gabriele; Maccone, Lorenzo; Aidala, Christine A. (2021-03-16). "Four Postulates of Quantum Mechanics Are Three". Physical Review Letters. 126 (11). American Physical Society (APS): 110402. arXiv:2003.11007. Bibcode:2021PhRvL.126k0402C. doi:10.1103/physrevlett.126.110402. ISSN 0031-9007. PMID 33798366. S2CID 214623241.

- Cohen-Tannoudji, Claude; Diu, Bernard; Laloë, Franck (2020). Quantum mechanics. Volume 2: Angular momentum, spin, and approximation methods. Weinheim: Wiley-VCH Verlag GmbH & Co. KGaA. ISBN 978-3-527-82272-0.

- Dirac, P. A. M. (1925). "The Fundamental Equations of Quantum Mechanics". Proceedings of the Royal Society A: Mathematical, Physical and Engineering Sciences. 109 (752): 642–653. Bibcode:1925RSPSA.109..642D. doi:10.1098/rspa.1925.0150.

- Edwards, David A. (1979). "The mathematical foundations of quantum mechanics". Synthese. 42 (1). Springer Science and Business Media LLC: 1–70. doi:10.1007/bf00413704. ISSN 0039-7857. S2CID 46969028.

- Greenstein, George; Zajonc, Arthur (2006). The Quantum Challenge. Sudbury, Mass.: Jones & Bartlett Learning. ISBN 978-0-7637-2470-2.

- Jauch, J. M.; Wigner, E. P.; Yanase, M. M. (1997). "Some Comments Concerning Measurements in Quantum Mechanics". Part I: Particles and Fields. Part II: Foundations of Quantum Mechanics. Berlin, Heidelberg: Springer Berlin Heidelberg. pp. 475–482. doi:10.1007/978-3-662-09203-3_52. ISBN 978-3-642-08179-8.

- Masanes, Lluís; Galley, Thomas D.; Müller, Markus P. (2019). "The measurement postulates of quantum mechanics are operationally redundant". Nature Communications. 10 (1). Springer Science and Business Media LLC: 1361. arXiv:1811.11060. Bibcode:2019NatCo..10.1361M. doi:10.1038/s41467-019-09348-x. ISSN 2041-1723. PMC 6434053. PMID 30911009.

- Solem, J. C.; Biedenharn, L. C. (1993). "Understanding geometrical phases in quantum mechanics: An elementary example". Foundations of Physics. 23 (2): 185–195. Bibcode:1993FoPh...23..185S. doi:10.1007/BF01883623. S2CID 121930907.

- Streater, Raymond Frederick; Wightman, Arthur Strong (2000). PCT, Spin and Statistics, and All that. Princeton, NJ: Princeton University Press. ISBN 978-0-691-07062-9.

- Sakurai, Jun John; Napolitano, Jim (2021). Modern quantum mechanics (3rd ed.). Cambridge: Cambridge University Press. ISBN 978-1-108-47322-4.

Further reading

- Auyang, Sunny Y. (1995). How is Quantum Field Theory Possible?. New York, NY: Oxford University Press on Demand. ISBN 978-0-19-509344-5.

- Emch, Gérard G. (1972). Algebraic Methods in Statistical Mechanics and Quantum Field Theory. New York: John Wiley & Sons. ISBN 0-471-23900-3.

- Giachetta, Giovanni; Mangiarotti, Luigi; Sardanashvily, Gennadi (2005). Geometric and Algebraic Topological Methods in Quantum Mechanics. World Scientific. arXiv:math-ph/0410040. doi:10.1142/5731. ISBN 978-981-256-129-9.

- Gleason, Andrew M. (1957). "Measures on the Closed Subspaces of a Hilbert Space". Journal of Mathematics and Mechanics. 6 (6). Indiana University Mathematics Department: 885–893. JSTOR 24900629.

- Hall, Brian C. (2013). Quantum Theory for Mathematicians. Graduate Texts in Mathematics. Vol. 267. New York, NY: Springer New York. Bibcode:2013qtm..book.....H. doi:10.1007/978-1-4614-7116-5. ISBN 978-1-4614-7115-8. ISSN 0072-5285. S2CID 117837329.

- Jauch, Josef Maria (1968). Foundations of Quantum Mechanics. Reading, Mass.: Addison-Wesley. ISBN 0-201-03298-8.

- Jost, R. (1965). The General Theory of Quantized Fields. Lectures in applied mathematics. American Mathematical Society.

- Kuhn, Thomas S. (1987). Black-Body Theory and the Quantum Discontinuity, 1894-1912. Chicago: University of Chicago Press. ISBN 978-0-226-45800-7.

- Landsman, Klaas (2017). Foundations of Quantum Theory. Fundamental Theories of Physics. Vol. 188. Cham: Springer International Publishing. doi:10.1007/978-3-319-51777-3. ISBN 978-3-319-51776-6. ISSN 0168-1222.

- Mackey, George W. (2004). Mathematical Foundations of Quantum Mechanics. Mineola, N.Y: Courier Corporation. ISBN 978-0-486-43517-6.

- McMahon, David (2013). Quantum Mechanics Demystified (2nd ed.). New York, NY: McGraw-Hill Prof Med/Tech. ISBN 978-0-07-176563-3.

- Moretti, Valter (2017). Spectral Theory and Quantum Mechanics. Unitext. Vol. 110. Cham: Springer International Publishing. doi:10.1007/978-3-319-70706-8. ISBN 978-3-319-70705-1. ISSN 2038-5714. S2CID 125121522.

- Moretti, Valter (2019). Fundamental Mathematical Structures of Quantum Theory. Cham: Springer International Publishing. doi:10.1007/978-3-030-18346-2. ISBN 978-3-030-18345-5. S2CID 197485828.

- Prugovecki, Eduard (2006). Quantum Mechanics in Hilbert Space. Mineola, NY: Courier Dover Publications. ISBN 978-0-486-45327-9.

- Reed, Michael; Simon, Barry (1972). Methods of Modern Mathematical Physics. New York: Academic Press. ISBN 978-0-12-585001-8.

- Shankar, R. (2013). Principles of Quantum Mechanics. Springer. ISBN 978-1-4615-7675-4.

- Teschl, Gerald (2009). Mathematical Methods in Quantum Mechanics. Providence, R.I: American Mathematical Soc. ISBN 978-0-8218-4660-5.

- von Neumann, John (2018). Mathematical Foundations of Quantum Mechanics. Princeton Oxford: Princeton University Press. ISBN 978-0-691-17856-1.

- Weaver, Nik (2001). Mathematical Quantization. Chapman and Hall/CRC. doi:10.1201/9781420036237. ISBN 978-0-429-07514-8.

- Weyl, Hermann (1950). The Theory of Groups and Quantum Mechanics. Mineola, NY: Courier Corporation. ISBN 978-0-486-60269-1.
