Probabilistic design

Probabilistic design is a discipline within engineering design. It deals primarily with considering and minimizing the effects of random variability on the performance of an engineering system during the design phase. Typically, the effects studied and optimized relate to quality and reliability. It differs from the classical approach to design by assuming a small probability of failure instead of using a safety factor.[2][3] Probabilistic design is used in a variety of applications to assess the likelihood of failure. Disciplines which make extensive use of probabilistic design principles include product design, quality control, systems engineering, machine design, civil engineering (where it is particularly useful in limit state design) and manufacturing.

Objective and motivations

When using a probabilistic approach to design, the designer no longer thinks of each variable as a single value or number. Instead, each variable is viewed as a continuous random variable with a probability distribution. From this perspective, probabilistic design predicts the flow of variability (or distributions) through a system.[4]

Because there are so many sources of random and systemic variability when designing materials and structures, it is greatly beneficial for the designer to model the factors studied as random variables. By considering this model, a designer can make adjustments to reduce the flow of random variability, thereby improving engineering quality. Proponents of the probabilistic design approach contend that many quality problems can be predicted and rectified during the early design stages and at a much reduced cost.[4][5]

Typically, the goal of probabilistic design is to identify the design that will exhibit the smallest effects of random variability. This could be the one design option out of several that is found to be most robust. Alternatively, it could be the only design option available, but with the optimum combination of input variables and parameters. This second approach is sometimes referred to as robustification, parameter design or design for six sigma.[4] Minimizing random variability is essential to probabilistic design because it limits the influence of uncontrollable factors and allows the failure probability to be determined more precisely.
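The idea of choosing the setting that transmits the least variability can be sketched numerically. The example below is only an illustration under stated assumptions: the `response` function, the candidate nominal values and the input standard deviation of 0.1 are all hypothetical and do not come from the cited sources. It estimates the output spread at each candidate setting by random sampling and picks the setting with the smallest spread.

```python
import random
import statistics


def response(x: float) -> float:
    """Hypothetical performance function: flat near x = 2, steeper elsewhere."""
    return (x - 2.0) ** 3 + 10.0


def output_std(nominal: float, input_std: float, n: int, rng: random.Random) -> float:
    """Estimate the standard deviation of the response around one nominal setting."""
    samples = [response(rng.gauss(nominal, input_std)) for _ in range(n)]
    return statistics.stdev(samples)


rng = random.Random(0)  # fixed seed so the sketch is repeatable
candidates = [1.0, 2.0, 3.0]  # assumed nominal settings of the design parameter
spread = {x: output_std(x, input_std=0.1, n=20_000, rng=rng) for x in candidates}

# The most robust setting is the one whose output varies least.
most_robust = min(spread, key=spread.get)
print(spread, most_robust)
```

In this sketch the same input scatter produces very different output scatter depending on where the design sits on the response curve, which is the effect parameter design tries to exploit.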

Sources of variability

Though the laws of physics dictate the relationships between variables and measurable quantities such as force, stress, strain, and deflection, there are still three primary sources of variability when considering these relationships.[6]

The first source of variability is statistical: parameters such as yield stress, Young's modulus, and true strain must be estimated from a finite sample.[7] This measurement uncertainty is the most easily minimized of the three sources, because the variance of the estimated mean is inversely proportional to the sample size.

We can represent the variance due to measurement uncertainty with a corrective factor $B$, which is multiplied by the true mean $X$ to yield the measured mean $\bar{X}$. Equivalently, $\bar{X} = BX$.

This yields the result $B = \bar{X}/X$, and the variance of the corrective factor is given as:

$$\operatorname{Var}[B] = \frac{\operatorname{Var}[\bar{X}]}{X} = \frac{\operatorname{Var}[X]}{nX}$$

where $B$ is the correction factor, $X$ is the true mean, $\bar{X}$ is the measured mean, and $n$ is the number of measurements made.[6]
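The inverse relationship between the variance of a measured mean and the number of measurements can be checked with a small simulation. This is a minimal sketch, assuming normally distributed measurements with a hypothetical true mean of 250 MPa and standard deviation of 10 MPa; neither value comes from the cited sources.

```python
import random
import statistics

# Hypothetical "true" yield stress and scatter, in MPa (illustrative assumptions).
TRUE_MEAN, TRUE_STD = 250.0, 10.0


def variance_of_measured_mean(n: int, trials: int, rng: random.Random) -> float:
    """Empirical variance of the mean of n measurements over many repeated experiments."""
    means = []
    for _ in range(trials):
        measurements = [rng.gauss(TRUE_MEAN, TRUE_STD) for _ in range(n)]
        means.append(statistics.fmean(measurements))
    return statistics.variance(means)


rng = random.Random(1)  # fixed seed so the sketch is repeatable
var_n5 = variance_of_measured_mean(5, trials=4000, rng=rng)
var_n50 = variance_of_measured_mean(50, trials=4000, rng=rng)

# Theory predicts Var[X] / n, i.e. about 100 / 5 and 100 / 50 here.
print(var_n5, var_n50, var_n5 / var_n50)
```

Taking ten times as many measurements should reduce the variance of the measured mean by roughly a factor of ten, up to sampling noise.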

The second source of variability stems from the inaccuracies and uncertainties of the model used to calculate such parameters. These include the physical models we use to understand loading and their associated effects in materials. The uncertainty from the model of a physical measurable can be determined if both theoretical values according to the model and experimental results are available.

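The measured value $\hat{H}(\omega)$ is equivalent to the theoretical model prediction $H(\omega)$ multiplied by a model error $\phi(\omega)$, plus the experimental error $\varepsilon(\omega)$.[8] Equivalently,

$$\hat{H}(\omega) = H(\omega)\,\phi(\omega) + \varepsilon(\omega)$$

and the model error takes the general form:

$$\phi(\omega) = \sum_{i=0}^{n} a_i\,\omega^{i}$$

where $a_i$ are coefficients of regression determined from experimental data.[8]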

The third source of variability is the intrinsic variability of any physical measurable. There is a fundamental random uncertainty associated with all physical phenomena, and this variability is the most difficult of the three to minimize. Thus, each physical variable and measurable quantity can be represented as a random variable with a mean and a variability.

Comparison to classical design principles

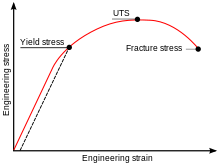

Consider the classical approach to tensile testing of materials. The stress experienced by a material is given as a single value (i.e., the force applied divided by the cross-sectional area perpendicular to the loading axis). The yield stress, which is the maximum stress a material can support before plastic deformation, is also given as a single value. Under this approach, there is a 0% chance of material failure below the yield stress, and a 100% chance of failure above it. However, these assumptions break down in the real world.

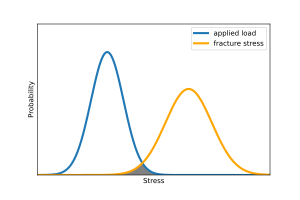

The yield stress of a material is often only known to a certain precision, meaning that there is an uncertainty and therefore a probability distribution associated with the known value.[6][8] Let the probability density function of the yield strength $R$ be given as $f_R(r)$.

Similarly, the applied or predicted load can only be known to a certain precision, so the stress which the material will undergo is uncertain as well. Let the probability density function of the applied stress $S$ be given as $f_S(s)$.

The probability of failure is the probability that the applied stress exceeds the yield strength, which corresponds to the region where the two distributions overlap. Mathematically, assuming $R$ and $S$ are independent:

$$P_f = P(R < S) = \int_{-\infty}^{\infty} \int_{-\infty}^{s} f_R(r)\, f_S(s)\, dr\, ds$$

or equivalently, if we let the difference between yield strength and applied stress be a third random variable $Q = R - S$ with density $f_Q(q)$, then:

$$P_f = P(Q < 0) = \int_{-\infty}^{0} f_Q(q)\, dq$$

where the variance of the difference is given by $\sigma_Q^2 = \sigma_R^2 + \sigma_S^2$.
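When the yield strength and the applied stress are both assumed to be normally distributed and independent, their difference is also normal and the failure probability has a closed form. The sketch below relies on that assumption, which the text above does not require, and uses hypothetical means and standard deviations in MPa. The function names are illustrative only.

```python
import math


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def failure_probability(mu_r: float, sigma_r: float, mu_s: float, sigma_s: float) -> float:
    """P(strength - stress < 0) for independent, normally distributed strength and stress."""
    mu_q = mu_r - mu_s                             # mean of the difference
    sigma_q = math.sqrt(sigma_r**2 + sigma_s**2)   # standard deviation of the difference
    return normal_cdf(-mu_q / sigma_q)


# Hypothetical values: yield strength 250 +/- 10 MPa, applied stress 200 +/- 15 MPa.
p_f = failure_probability(mu_r=250.0, sigma_r=10.0, mu_s=200.0, sigma_s=15.0)
print(f"Estimated failure probability: {p_f:.5f}")
```

With these inputs the classical comparison would report no failure at all, since the mean stress is below the mean yield strength, while the probabilistic estimate is small but nonzero.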

Probabilistic design principles allow the failure probability to be quantified, whereas the classical model assumes no failure at any stress below the yield strength.[9] Because the classical comparison of applied load against yield stress ignores the uncertainty in both quantities, modeling them as probability distributions gives a more realistic estimate of failure. The probabilistic approach assigns a quantitative failure probability to every loading condition in place of a definitive yes or no.
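The contrast with a safety factor can be made concrete by comparing two designs that share the same ratio of mean strength to mean stress but differ in scatter. This is an illustrative sketch only: it assumes independent normal distributions, and all numbers are hypothetical.

```python
from statistics import NormalDist


def failure_probability(mu_r: float, sigma_r: float, mu_s: float, sigma_s: float) -> float:
    """P(strength < stress), assuming independent normal strength and stress."""
    difference = NormalDist(mu_r - mu_s, (sigma_r**2 + sigma_s**2) ** 0.5)
    return difference.cdf(0.0)


# Both hypothetical designs have mean strength 300 MPa and mean stress 200 MPa.
safety_factor_a = 300.0 / 200.0
safety_factor_b = 300.0 / 200.0

# Design A is tightly controlled; design B has three times the scatter.
p_a = failure_probability(300.0, 10.0, 200.0, 10.0)
p_b = failure_probability(300.0, 30.0, 200.0, 30.0)

print(safety_factor_a, safety_factor_b)
print(p_a, p_b)
```

The two designs are indistinguishable by safety factor, yet their estimated failure probabilities differ by many orders of magnitude, which is the information the classical approach discards.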

Methods used to determine variability

In essence, probabilistic design focuses on predicting the effects of variability. To predict and calculate the variability associated with model uncertainty, many methods have been devised and used across different disciplines to determine theoretical values for parameters such as stress and strain. Examples of theoretical models used alongside probabilistic design include:

- Finite element analysis[10]

- Stochastic finite element method[11]

- Boundary element method

- Meshfree methods

- Analytical methods (refer to classical design principles)

Additionally, there are many statistical methods used to quantify and predict the random variability in the desired measurable. Some methods that are used to predict the random variability of an output include:

- the Monte Carlo method (including Latin hypercube sampling)[12]

- propagation of error

- design of experiments (DOE)

- the method of moments

- statistical interference

- quality function deployment

- failure mode and effects analysis
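Two of the listed methods, the Monte Carlo method and propagation of error, can be compared on a simple case: the axial stress in a bar, computed as force divided by cross-sectional area. The sketch below assumes independent, normally distributed inputs with hypothetical means and standard deviations, and uses plain random sampling rather than Latin hypercube sampling.

```python
import math
import random
import statistics

# Hypothetical inputs (assumptions for illustration only).
F_MEAN, F_STD = 10_000.0, 500.0   # axial force, N
A_MEAN, A_STD = 50.0, 1.0         # cross-sectional area, mm^2


def stress(force: float, area: float) -> float:
    """Nominal axial stress in MPa (N / mm^2)."""
    return force / area


def monte_carlo(n: int, seed: int = 0) -> tuple[float, float]:
    """Sample the inputs, evaluate the model, and summarize the output."""
    rng = random.Random(seed)
    samples = [
        stress(rng.gauss(F_MEAN, F_STD), rng.gauss(A_MEAN, A_STD))
        for _ in range(n)
    ]
    return statistics.fmean(samples), statistics.stdev(samples)


def first_order() -> tuple[float, float]:
    """First-order propagation of error around the mean input values."""
    d_force = 1.0 / A_MEAN            # partial derivative of stress w.r.t. force
    d_area = -F_MEAN / A_MEAN**2      # partial derivative of stress w.r.t. area
    variance = (d_force * F_STD) ** 2 + (d_area * A_STD) ** 2
    return stress(F_MEAN, A_MEAN), math.sqrt(variance)


mc_mean, mc_std = monte_carlo(50_000)
lin_mean, lin_std = first_order()
print(f"Monte Carlo:  mean={mc_mean:.2f} MPa, std={mc_std:.2f} MPa")
print(f"First order:  mean={lin_mean:.2f} MPa, std={lin_std:.2f} MPa")
```

For a mildly nonlinear model with small input scatter, as here, the two estimates should agree closely. Sampling becomes more valuable when the model is strongly nonlinear or the inputs are far from normal.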

Footnotes

1. Sundarth, S; Woeste, Frank E.; Galligan, William (1978), Differential reliability: probabilistic engineering applied to wood members in bending-tension (PDF), vol. Res. Pap. FPL-RP-302, US Forest Products Laboratory, retrieved 21 January 2015

2. Sundararajan, C. (1995). Probabilistic Structural Mechanics Handbook. Springer. ISBN 978-0412054815.

3. Long, M W; Narcico, J D (June 1999), Design Methodology for Composite Aircraft Structures, DOT/FAA/AR-99/2, FAA, archived from the original on 3 March 2016, retrieved 24 January 2015

4. Ang, Alfredo H-S; Tang, Wilson H (2006). Probability Concepts in Engineering: Emphasis on Applications to Civil and Environmental Engineering (2nd ed.). John Wiley & Sons. ISBN 978-0471720645.

5. Doorn, Neelke; Hansson, Sven Ove (2011-06-01). "Should Probabilistic Design Replace Safety Factors?". Philosophy & Technology. 24 (2): 151–168. doi:10.1007/s13347-010-0003-6. ISSN 2210-5441.

6. Soares, C. Guedes (1997), Soares, C. Guedes (ed.), "Quantification of Model Uncertainty in Structural Reliability", Probabilistic Methods for Structural Design, Solid Mechanics and Its Applications, Dordrecht: Springer Netherlands, pp. 17–37, doi:10.1007/978-94-011-5614-1_2, ISBN 978-94-011-5614-1, retrieved 2023-12-11

7. Soares, C. Guedes, ed. (1997). "Probabilistic Methods for Structural Design". Solid Mechanics and Its Applications. doi:10.1007/978-94-011-5614-1. ISSN 0925-0042.

8. Ditlevsen, Ove (1982-01-01). "Model uncertainty in structural reliability". Structural Safety. 1 (1): 73–86. doi:10.1016/0167-4730(82)90016-9. ISSN 0167-4730.

9. Haugen, Edward B. (1980). Probabilistic mechanical design. New York: Wiley. ISBN 978-0-471-05847-2.

10. Benaroya, H.; Rehak, M. (May 1, 1988). "Finite Element Methods in Probabilistic Structural Analysis: A Selective Review". Applied Mechanics Reviews. 41 (5): 201–213 – via ASME Digital Collection.

11. Liu, W. K.; Belytschko, T.; Lua, Y. J. (1995), Sundararajan, C. (ed.), "Probabilistic Finite Element Method", Probabilistic Structural Mechanics Handbook: Theory and Industrial Applications, Boston, MA: Springer US, pp. 70–105, doi:10.1007/978-1-4615-1771-9_5, ISBN 978-1-4615-1771-9, retrieved 2023-12-11

12. Kong, Depeng; Lu, Shouxiang; Frantzich, Hakan; Lo, S. M. (2013-12-01). "A method for linking safety factor to the target probability of failure in fire safety engineering". Journal of Civil Engineering and Management. 19 (S1): S212–S212. doi:10.3846/13923730.2013.802718.

References

- Ang and Tang (2006) Probability Concepts in Engineering: Emphasis on Applications to Civil and Environmental Engineering. John Wiley & Sons. ISBN 0-471-72064-X

- Ash (1993) The Probability Tutoring Book: An Intuitive Course for Engineers and Scientists (and Everyone Else). Wiley-IEEE Press. ISBN 0-7803-1051-9

- Clausing (1994) Total Quality Development: A Step-By-Step Guide to World-Class Concurrent Engineering. American Society of Mechanical Engineers. ISBN 0-7918-0035-0

- Haugen (1980) Probabilistic mechanical design. Wiley. ISBN 0-471-05847-5

- Papoulis (2002) Probability, Random Variables and Stochastic Process. McGraw-Hill Publishing Co. ISBN 0-07-119981-0

- Siddall (1982) Optimal Engineering Design. CRC. ISBN 0-8247-1633-7

- Dodson, B., Hammett, P., and Klerx, R. (2014) Probabilistic Design for Optimization and Robustness for Engineers John Wiley & Sons, Inc. ISBN 978-1-118-79619-1

- Cederbaum G., Elishakoff I., Aboudi J. and Librescu L., Random Vibration and Reliability of Composite Structures, Technomic, Lancaster, 1992, XIII + pp. 191; ISBN 0 87762 865 3

- Elishakoff I., Lin Y.K. and Zhu L.P., Probabilistic and Convex Modeling of Acoustically Excited Structures, Elsevier Science Publishers, Amsterdam, 1994, VIII + pp. 296; ISBN 0 444 81624 0

- Elishakoff I., Probabilistic Methods in the Theory of Structures: Random Strength of Materials, Random Vibration, and Buckling, World Scientific, Singapore, ISBN 978-981-3149-84-7, 2017
