Rectifier (neural networks)


In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function[1][2] is an activation function defined as the positive part of its argument:

$$f(x) = x^{+} = \max(0, x),$$

where $x$ is the input to a neuron. This is also known as a ramp function and is analogous to half-wave rectification in electrical engineering. This activation function was introduced by Kunihiko Fukushima in 1969 in the context of visual feature extraction in hierarchical neural networks.[3][4][5] It was later argued that it has strong biological motivations and mathematical justifications.[6][7] In 2011 it was found to enable better training of deeper networks[8] than the activation functions widely used before then, e.g., the logistic sigmoid (which is inspired by probability theory; see logistic regression) and its more practical[9] counterpart, the hyperbolic tangent. The rectifier was, as of 2017, the most popular activation function for deep neural networks.[10]
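As a minimal sketch of the definition above, the rectifier can be written in plain Python. The names `relu` and `relu_abs_form` are illustrative and not taken from any particular library; the second function uses the equivalent closed form $(x + |x|)/2$.

```python
def relu(x: float) -> float:
    """Rectifier: the positive part of x, i.e. max(0, x)."""
    return max(0.0, x)


def relu_abs_form(x: float) -> float:
    """Equivalent closed form (x + |x|) / 2."""
    return (x + abs(x)) / 2.0


# Negative inputs are clipped to zero; positive inputs pass through unchanged.
samples = [-2.0, -0.5, 0.0, 0.5, 2.0]
outputs = [relu(x) for x in samples]
```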

Rectified linear units are used in deep neural networks for computer vision[8] and speech recognition,[11][12] and in computational neuroscience.[13][14][15]

Advantages

- Sparse activation: For example, in a randomly initialized network, only about 50% of hidden units are activated (have a non-zero output).

- Better gradient propagation: Fewer vanishing gradient problems compared to sigmoidal activation functions that saturate in both directions.[8]

- Efficient computation: Only comparison, addition and multiplication.

- Scale-invariant: $\max(0, ax) = a \max(0, x)$ for $a \geq 0$.
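The sparsity and scale-invariance properties listed above can be checked numerically. The sketch below assumes, purely for illustration, that the pre-activations of a randomly initialized unit are symmetric around zero (here, standard normal samples); `relu` and all variable names are hypothetical.

```python
import random


def relu(x: float) -> float:
    return max(0.0, x)


# Assumption: pre-activations are zero-mean Gaussian, a stand-in for a
# randomly initialized network.
rng = random.Random(0)
pre_activations = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

# Sparse activation: fraction of units with a non-zero output.
active_fraction = sum(1 for z in pre_activations if relu(z) > 0.0) / len(pre_activations)

# Scale invariance: relu(a * z) == a * relu(z) for any a >= 0.
a = 3.0
scale_invariant = all(relu(a * z) == a * relu(z) for z in pre_activations)
```

Under this assumption roughly half of the units are active. The identity does not hold for negative `a`, since the sign flip changes which side of zero the input falls on.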

Rectifying activation functions were used to separate specific excitation and unspecific inhibition in the neural abstraction pyramid, which was trained in a supervised way to learn several computer vision tasks.[16] In 2011,[8] the use of the rectifier as a non-linearity was shown to enable training deep supervised neural networks without requiring unsupervised pre-training. Compared to the sigmoid function or similar activation functions, rectified linear units allow faster and more effective training of deep neural architectures on large and complex datasets.

Potential problems

- Non-differentiable at zero; however, it is differentiable everywhere else, and the value of the derivative at zero can be arbitrarily chosen to be 0 or 1.

- Not zero-centered: ReLU outputs are always non-negative. This can make it harder for the network to learn during backpropagation because gradient updates tend to push weights in one direction (positive or negative). Batch normalization can help address this.[citation needed]

- Unbounded.

- Dying ReLU problem: ReLU neurons can sometimes be pushed into states in which they become inactive for essentially all inputs. In this state, no gradients flow backward through the neuron, so the neuron becomes stuck in a perpetually inactive state and "dies". This is a form of the vanishing gradient problem. In some cases, large numbers of neurons in a network can become stuck in dead states, effectively decreasing the model capacity. This problem typically arises when the learning rate is set too high. It may be mitigated by using leaky ReLUs instead, which assign a small positive slope for x < 0; however, this can reduce performance.
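The derivative convention at zero and the dying ReLU problem can be illustrated with a single hypothetical neuron $y = f(wx + b)$. In the sketch below, the weight `w`, the bias `b`, and the input range are made-up values chosen so that the pre-activation is negative for every input; the leaky slope of 0.01 is likewise an assumption.

```python
def relu_grad(z: float, at_zero: float = 0.0) -> float:
    """Derivative of ReLU; the value at z == 0 is a convention (0 or 1)."""
    if z > 0.0:
        return 1.0
    if z < 0.0:
        return 0.0
    return at_zero


def leaky_relu_grad(z: float, slope: float = 0.01) -> float:
    """Derivative of a leaky ReLU with a small positive slope for z <= 0."""
    return 1.0 if z > 0.0 else slope


# Hypothetical state after an overly large update: the bias is so negative
# that w * x + b < 0 for every input in [-1, 1].
w, b = 0.5, -5.0
inputs = [0.1 * i for i in range(-10, 11)]

# Gradient of the output with respect to w is f'(w * x + b) * x.
relu_grads = [relu_grad(w * x + b) * x for x in inputs]
leaky_grads = [leaky_relu_grad(w * x + b) * x for x in inputs]

is_dead = all(g == 0.0 for g in relu_grads)          # no learning signal at all
leaky_still_learns = any(g != 0.0 for g in leaky_grads)
```

Because every ReLU gradient is zero, gradient descent cannot move `w` or `b` back into the active region, whereas the leaky variant still receives a small signal.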

Variants

Piecewise-linear variants

Leaky ReLU

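Leaky ReLUs allow a small, positive gradient when the unit is not active,[12] helping to mitigate the vanishing gradient problem. A commonly used slope is 0.01:

$$f(x) = \begin{cases} x & \text{if } x > 0, \\ 0.01x & \text{otherwise.} \end{cases}$$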
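A leaky ReLU can be sketched in a few lines. The function name and the default `negative_slope=0.01` are illustrative choices, not a reference implementation.

```python
def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """Identity for positive inputs, a small linear leak otherwise."""
    return x if x > 0.0 else negative_slope * x


def leaky_relu_grad(x: float, negative_slope: float = 0.01) -> float:
    """Gradient is 1 when the unit is active and negative_slope otherwise."""
    return 1.0 if x > 0.0 else negative_slope
```

Unlike the plain rectifier, the gradient is never exactly zero, so an inactive unit can still be updated.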

Parametric ReLU

Parametric ReLUs (PReLUs) take this idea further by making the coefficient of leakage into a parameter $a$ that is learned along with the other neural-network parameters:[17]

$$f(x) = \begin{cases} x & \text{if } x > 0, \\ ax & \text{otherwise.} \end{cases}$$

Note that for $a \leq 1$, this is equivalent to

$$f(x) = \max(x, ax),$$

and thus has a relation to "maxout" networks.[17]
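The equivalence between the piecewise form and the max form for $a \leq 1$ can be checked directly. This is a small sketch with hypothetical function names; in a real network the coefficient `a` would be a trainable parameter rather than a fixed argument.

```python
def prelu(x: float, a: float) -> float:
    """Piecewise definition: x for x > 0, a * x otherwise."""
    return x if x > 0.0 else a * x


def prelu_max_form(x: float, a: float) -> float:
    """Max form, equal to the piecewise definition only when a <= 1."""
    return max(x, a * x)


xs = [-3.0, -1.0, 0.0, 1.0, 3.0]

# The two forms agree for a <= 1 ...
agree_for_small_a = all(prelu(x, 0.25) == prelu_max_form(x, 0.25) for x in xs)

# ... but not for a > 1, where a * x exceeds x on the positive side.
agree_for_large_a = all(prelu(x, 2.0) == prelu_max_form(x, 2.0) for x in xs)
```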

Other non-linear variants

Gaussian-error linear unit (GELU)

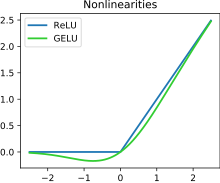

GELU is a smooth approximation to the rectifier:

$$f(x) = x \cdot \Phi(x),$$

where $\Phi(x)$ is the cumulative distribution function of the standard normal distribution.

This activation function is illustrated in the figure at the start of this article. It has a "bump" for x < 0, where it dips slightly below zero, and serves as the default activation for models such as BERT.[18]
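GELU can be evaluated exactly with the error function, since $\Phi(x) = \tfrac{1}{2}\left(1 + \operatorname{erf}(x/\sqrt{2})\right)$. The sketch below uses only Python's standard `math` module; the function names are illustrative.

```python
import math


def standard_normal_cdf(x: float) -> float:
    """Phi(x), expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu(x: float) -> float:
    """Gaussian-error linear unit: x * Phi(x)."""
    return x * standard_normal_cdf(x)


# The "bump": GELU is negative and non-monotonic on the negative axis,
# so gelu(-3.0) is closer to zero than gelu(-1.0).
bump_values = (gelu(-3.0), gelu(-1.0))
```

For large positive inputs $\Phi(x) \to 1$ and GELU approaches the identity; for large negative inputs it approaches zero from below.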

SiLU

The SiLU (sigmoid linear unit) or swish function[19] is another smooth approximation, first coined in the GELU paper:[18]

$$f(x) = x \cdot \operatorname{sigmoid}(x),$$

where $\operatorname{sigmoid}(x)$ is the sigmoid function.
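A possible implementation of SiLU is sketched below. The two-branch `sigmoid` is one common way to avoid overflow in `math.exp` for large-magnitude inputs; it is an implementation choice made here, not something prescribed by the definition.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written so that exp() never receives a large positive argument."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def silu(x: float) -> float:
    """Sigmoid linear unit (swish): x * sigmoid(x)."""
    return x * sigmoid(x)
```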

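Softplus

A smooth approximation to the rectifier is the analytic function

$$f(x) = \ln(1 + e^{x}),$$

which is called the softplus[20][8] or SmoothReLU function.[21] For large negative $x$ it is roughly $\ln 1$, so just above 0, while for large positive $x$ it is roughly $\ln(e^{x})$, so just above $x$.

This function can be approximated as:

$$\ln\left(1 + e^{x}\right) \approx \begin{cases} \ln 2, & x = 0, \\[6pt] \dfrac{x}{1 - e^{-x/\ln 2}}, & x \neq 0. \end{cases}$$

By making the change of variables $x = y \ln(2)$, this is equivalent to

$$\log_{2}(1 + 2^{y}) \approx \begin{cases} 1, & y = 0, \\[6pt] \dfrac{y}{1 - e^{-y}}, & y \neq 0. \end{cases}$$

A sharpness parameter $k$ may be included:

$$f(x) = \frac{\ln(1 + e^{kx})}{k}.$$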

The derivative of softplus is the logistic function.

The logistic sigmoid function is a smooth approximation of the derivative of the rectifier, the Heaviside step function.
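The relationship between softplus and the logistic function can be verified numerically. The sketch below assumes a sharpness parameter named `k` and evaluates $\ln(1 + e^{z})$ as $\max(z, 0) + \ln(1 + e^{-|z|})$, an algebraically equivalent rewriting chosen here to avoid overflow; all names are illustrative.

```python
import math


def softplus(x: float, k: float = 1.0) -> float:
    """ln(1 + exp(k * x)) / k, computed in an overflow-safe form."""
    z = k * x
    return (max(z, 0.0) + math.log1p(math.exp(-abs(z)))) / k


def logistic(x: float) -> float:
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def numerical_derivative(f, x: float, h: float = 1e-5) -> float:
    """Central finite difference, used only to check the analytic derivative."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


# The slope of softplus at 0.3 should match logistic(0.3).
slope_gap = abs(numerical_derivative(softplus, 0.3) - logistic(0.3))

# A larger sharpness k brings softplus closer to max(0, x).
gap_k1 = abs(softplus(0.5, k=1.0) - 0.5)
gap_k50 = abs(softplus(0.5, k=50.0) - 0.5)
```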

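The multivariable generalization of single-variable softplus is the LogSumExp with the first argument set to zero:

$$\operatorname{LSE}_{0}^{+}(x_1, \dots, x_n) := \operatorname{LSE}(0, x_1, \dots, x_n) = \ln\left(1 + e^{x_1} + \cdots + e^{x_n}\right).$$

The LogSumExp function is

$$\operatorname{LSE}(x_1, \dots, x_n) = \ln\left(e^{x_1} + \cdots + e^{x_n}\right),$$

and its gradient is the softmax; the softmax with the first argument set to zero is the multivariable generalization of the logistic function. Both LogSumExp and softmax are used in machine learning.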
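The single-variable case of these statements can be checked with a short sketch. Subtracting the maximum before exponentiating is a standard stabilization assumed here; the name `logsumexp_with_zero` is hypothetical.

```python
import math


def logsumexp(xs):
    """ln(sum(exp(x))) with the maximum factored out for stability."""
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))


def softmax(xs):
    """Gradient of logsumexp with respect to its arguments."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def logsumexp_with_zero(xs):
    """LogSumExp with an extra first argument fixed at zero."""
    return logsumexp([0.0] + list(xs))


x = 1.5
# With one free argument this reduces to softplus(x) = ln(1 + e^x) ...
softplus_gap = abs(logsumexp_with_zero([x]) - math.log1p(math.exp(x)))
# ... and the matching softmax component reduces to the logistic function.
logistic_gap = abs(softmax([0.0, x])[1] - 1.0 / (1.0 + math.exp(-x)))
```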

ELU

Exponential linear units try to make the mean activations closer to zero, which speeds up learning. It has been shown that ELUs can obtain higher classification accuracy than ReLUs.[22]

$$f(x) = \begin{cases} x & \text{if } x > 0, \\ \alpha\left(e^{x} - 1\right) & \text{otherwise.} \end{cases}$$

In this formula, $\alpha$ is a hyper-parameter to be tuned with the constraint $\alpha \geq 0$.

The ELU can be viewed as a smoothed version of a shifted ReLU (SReLU), which has the form $f(x) = \max(-\alpha, x)$, given the same interpretation of $\alpha$.
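The ELU and the shifted ReLU it smooths can be compared side by side. In this sketch `alpha=1.0` is an arbitrary default, and `math.expm1` is used as an accurate way to evaluate $e^{x} - 1$ near zero.

```python
import math


def elu(x: float, alpha: float = 1.0) -> float:
    """Exponential linear unit: x for x > 0, alpha * (exp(x) - 1) otherwise."""
    return x if x > 0.0 else alpha * math.expm1(x)


def shifted_relu(x: float, alpha: float = 1.0) -> float:
    """Shifted ReLU (SReLU): max(-alpha, x)."""
    return max(-alpha, x)
```

Both functions are the identity for positive inputs and saturate at $-\alpha$ for very negative inputs; they differ in how sharply they bend in between.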

Mish

The mish function can also be used as a smooth approximation of the rectifier.[19] It is defined as

$$f(x) = x \tanh\big(\operatorname{softplus}(x)\big),$$

where $\tanh(x)$ is the hyperbolic tangent, and $\operatorname{softplus}(x)$ is the softplus function.

Mish is non-monotonic and self-gated.[23] It was inspired by Swish, itself a variant of ReLU.[23]
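The non-monotonic behaviour of mish is easy to see numerically. The sketch below reuses an overflow-safe softplus; both function names are illustrative.

```python
import math


def softplus(x: float) -> float:
    """ln(1 + exp(x)) in an overflow-safe form."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    """Mish: the input gated by tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


# Non-monotonic: moving further left from -1 to -5 raises the output
# back toward zero.
left_values = (mish(-5.0), mish(-1.0))
```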

Squareplus

Squareplus[24] is the function

$$f(x) = \frac{x + \sqrt{x^{2} + b}}{2},$$

where $b \geq 0$ is a hyperparameter that determines the "size" of the curved region near $x = 0$. (For example, letting $b = 0$ yields ReLU, and letting $b = 4$ yields the metallic mean function.) Squareplus shares many properties with softplus: It is monotonic, strictly positive, approaches 0 as $x \to -\infty$, approaches the identity as $x \to +\infty$, and is smooth. However, squareplus can be computed using only algebraic functions, making it well-suited for settings where computational resources or instruction sets are limited. Additionally, squareplus requires no special consideration to ensure numerical stability when $x$ is large.
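Squareplus needs only a square root, as the following sketch shows. The default `b=4.0` is an arbitrary choice for illustration, and the function name is hypothetical.

```python
import math


def squareplus(x: float, b: float = 4.0) -> float:
    """Squareplus: (x + sqrt(x^2 + b)) / 2, with b >= 0."""
    return 0.5 * (x + math.sqrt(x * x + b))


# With b = 0 the square root becomes |x| and the function reduces to ReLU.
relu_like = [squareplus(x, b=0.0) for x in (-3.0, 0.0, 3.0)]
```

No exponentials or logarithms are involved, which is the property the text highlights for constrained hardware.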


References

1. Brownlee, Jason (8 January 2019). "A Gentle Introduction to the Rectified Linear Unit (ReLU)". Machine Learning Mastery. Retrieved 8 April 2021.

2. Liu, Danqing (30 November 2017). "A Practical Guide to ReLU". Medium. Retrieved 8 April 2021.

3. Fukushima, K. (1969). "Visual feature extraction by a multilayered network of analog threshold elements". IEEE Transactions on Systems Science and Cybernetics. 5 (4): 322–333. doi:10.1109/TSSC.1969.300225.

4. Fukushima, K.; Miyake, S. (1982). "Neocognitron: A Self-Organizing Neural Network Model for a Mechanism of Visual Pattern Recognition". Competition and Cooperation in Neural Nets. Lecture Notes in Biomathematics. Vol. 45. Springer. pp. 267–285. doi:10.1007/978-3-642-46466-9_18. ISBN 978-3-540-11574-8.

5. Schmidhuber, Juergen (2022). "Annotated History of Modern AI and Deep Learning". arXiv:2212.11279 [cs.NE].

6. Hahnloser, R.; Sarpeshkar, R.; Mahowald, M. A.; Douglas, R. J.; Seung, H. S. (2000). "Digital selection and analogue amplification coexist in a cortex-inspired silicon circuit". Nature. 405 (6789): 947–951. Bibcode:2000Natur.405..947H. doi:10.1038/35016072. PMID 10879535. S2CID 4399014.

7. Hahnloser, R.; Seung, H. S. (2001). Permitted and Forbidden Sets in Symmetric Threshold-Linear Networks. NIPS 2001.

8. Glorot, Xavier; Bordes, Antoine; Bengio, Yoshua (2011). Deep sparse rectifier neural networks (PDF). AISTATS. Quote: "Rectifier and softplus activation functions. The second one is a smooth version of the first."

9. LeCun, Yann; Bottou, Leon; Orr, Genevieve B.; Müller, Klaus-Robert (1998). "Efficient BackProp" (PDF). In G. Orr; K. Müller (eds.). Neural Networks: Tricks of the Trade. Springer.

10. Ramachandran, Prajit; Zoph, Barret; Le, Quoc V. (October 16, 2017). "Searching for Activation Functions". arXiv:1710.05941 [cs.NE].

11. Tóth, László (2013). Phone Recognition with Deep Sparse Rectifier Neural Networks (PDF). ICASSP.

12. Maas, Andrew L.; Hannun, Awni Y.; Ng, Andrew Y. (2014). Rectifier Nonlinearities Improve Neural Network Acoustic Models.

13. Hansel, D.; van Vreeswijk, C. (2002). "How noise contributes to contrast invariance of orientation tuning in cat visual cortex". J. Neurosci. 22 (12): 5118–5128. doi:10.1523/JNEUROSCI.22-12-05118.2002. PMC 6757721. PMID 12077207.

14. Kadmon, Jonathan; Sompolinsky, Haim (2015-11-19). "Transition to Chaos in Random Neuronal Networks". Physical Review X. 5 (4): 041030. arXiv:1508.06486. Bibcode:2015PhRvX...5d1030K. doi:10.1103/PhysRevX.5.041030. S2CID 7813832.

15. Engelken, Rainer; Wolf, Fred; Abbott, L. F. (2020-06-03). "Lyapunov spectra of chaotic recurrent neural networks". arXiv:2006.02427 [nlin.CD].

16. Behnke, Sven (2003). Hierarchical Neural Networks for Image Interpretation. Lecture Notes in Computer Science. Vol. 2766. Springer. doi:10.1007/b11963. ISBN 978-3-540-40722-5. S2CID 1304548.

17. He, Kaiming; Zhang, Xiangyu; Ren, Shaoqing; Sun, Jian (2015). "Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification". arXiv:1502.01852 [cs.CV].

18. Hendrycks, Dan; Gimpel, Kevin (2016). "Gaussian Error Linear Units (GELUs)". arXiv:1606.08415 [cs.LG].

19. Misra, Diganta (23 Aug 2019). Mish: A Self Regularized Non-Monotonic Activation Function (PDF). arXiv:1908.08681v1. Retrieved 26 March 2022.

20. Dugas, Charles; Bengio, Yoshua; Bélisle, François; Nadeau, Claude; Garcia, René (2000-01-01). "Incorporating second-order functional knowledge for better option pricing" (PDF). Proceedings of the 13th International Conference on Neural Information Processing Systems (NIPS'00). MIT Press: 451–457. Quote: "Since the sigmoid h has a positive first derivative, its primitive, which we call softplus, is convex."

21. "Smooth Rectifier Linear Unit (SmoothReLU) Forward Layer". Developer Guide for Intel Data Analytics Acceleration Library. 2017. Retrieved 2018-12-04.

22. Clevert, Djork-Arné; Unterthiner, Thomas; Hochreiter, Sepp (2015). "Fast and Accurate Deep Network Learning by Exponential Linear Units (ELUs)". arXiv:1511.07289 [cs.LG].

23. Shaw, Sweta (2020-05-10). "Activation Functions Compared with Experiments". W&B. Retrieved 2022-07-11.

24. Barron, Jonathan T. (22 December 2021). "Squareplus: A Softplus-Like Algebraic Rectifier". arXiv:2112.11687 [cs.NE].
