User:Rajkiran g/sandbox

Cybersecurity

Cybersecurity, computer security or IT security is the protection of computer systems from theft of or damage to their hardware, software or information, as well as from disruption or misdirection of the services they provide.

Cybersecurity includes controlling physical access to the hardware, as well as protecting against harm that may come via network access, data and code injection.[1] It also covers malpractice by operators, whether intentional or accidental, since operators can be tricked into deviating from secure procedures through various methods.[2]

The field is of growing importance due to the increasing reliance on computer systems and the Internet,[3] on wireless networks such as Bluetooth and Wi-Fi, and the growth of "smart" devices, including smartphones, televisions and the tiny devices that make up the Internet of Things.

Vulnerabilities and attacks

A vulnerability is a weakness in design, implementation, operation or internal control. Most of the vulnerabilities that have been discovered are documented in the Common Vulnerabilities and Exposures (CVE) database.

An exploitable vulnerability is one for which at least one working attack or "exploit" exists.[4] Vulnerabilities are often hunted or exploited with the aid of automated tools or manually using customized scripts.

To secure a computer system, it is important to understand the attacks that can be made against it. These threats can typically be classified into one of the categories below:

Backdoor

A backdoor in a computer system, a cryptosystem or an algorithm is any secret method of bypassing normal authentication or security controls. Backdoors may exist for a number of reasons, including original design or poor configuration. They may have been added by an authorized party to allow some legitimate access, or by an attacker for malicious reasons; regardless of the motives for their existence, they create a vulnerability.

Denial-of-service attacks

Denial-of-service (DoS) attacks are designed to make a machine or network resource unavailable to its intended users.[5] Attackers can deny service to individual victims, such as by deliberately entering a wrong password enough consecutive times to cause the victim's account to be locked, or they may overload the capabilities of a machine or network and block all users at once. While a network attack from a single IP address can be blocked by adding a new firewall rule, many forms of distributed denial-of-service (DDoS) attack are possible, where the attack comes from a large number of points and defending is much more difficult. Such attacks can originate from the zombie computers of a botnet, but a range of other techniques are possible, including reflection and amplification attacks, where innocent systems are fooled into sending traffic to the victim.

Direct-access attacks

An unauthorized user who gains physical access to a computer can most likely copy data directly from it. They may also compromise security by modifying the operating system, installing software worms, keyloggers or covert listening devices, or using wireless mice.[6] Even when the system is protected by standard security measures, these can often be bypassed by booting another operating system or tool from a CD-ROM or other bootable media. Disk encryption and Trusted Platform Module are designed to prevent these attacks.

Eavesdropping

Eavesdropping is the act of surreptitiously listening to a private conversation, typically between hosts on a network. For instance, programs such as Carnivore and NarusInSight have been used by the FBI and NSA to eavesdrop on the systems of internet service providers. Even machines that operate as a closed system (i.e., with no contact with the outside world) can be eavesdropped upon by monitoring the faint electromagnetic transmissions generated by the hardware; TEMPEST is a specification by the NSA referring to these attacks.

Spoofing

Spoofing is the act of masquerading as a valid entity through falsification of data (such as an IP address or username), in order to gain access to information or resources that one is otherwise unauthorized to obtain.[7][8] There are several types of spoofing, including:

- Email spoofing, where an attacker forges the sending (From, or source) address of an email.

- IP address spoofing, where an attacker alters the source IP address in a network packet to hide their identity or impersonate another computing system.

- MAC spoofing, where an attacker modifies the Media Access Control (MAC) address of their network interface to pose as a valid user on a network.

- Biometric spoofing, where an attacker produces a fake biometric sample to pose as another user.[9]

Tampering

Tampering describes a malicious modification of products. Examples include so-called "Evil Maid" attacks and the planting of surveillance capability into routers by security services.[10]

Privilege escalation

Privilege escalation describes a situation where an attacker with some level of restricted access is able to, without authorization, elevate their privileges or access level. For example, a standard computer user may be able to fool the system into giving them access to restricted data; or even to "become root" and have full unrestricted access to a system.

Phishing

Phishing is the attempt to acquire sensitive information such as usernames, passwords, and credit card details directly from users.[11] Phishing is typically carried out by email spoofing or instant messaging, and it often directs users to enter details at a fake website whose look and feel are almost identical to the legitimate one. Preying on a victim's trust, phishing can be classified as a form of social engineering.

Clickjacking

Clickjacking, also known as "UI redress attack" or "User Interface redress attack", is a malicious technique in which an attacker tricks a user into clicking on a button or link on another webpage while the user intended to click on the top-level page. This is done using multiple transparent or opaque layers. The attacker is essentially "hijacking" the clicks meant for the top-level page and routing them to some other, unrelated page, most likely owned by someone else. A similar technique can be used to hijack keystrokes. With a carefully crafted combination of stylesheets, iframes, buttons and text boxes, a user can be led into believing that they are typing a password or other information on an authentic webpage while it is being channeled into an invisible frame controlled by the attacker.
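Because clickjacking depends on the target page being loaded inside a frame, one widely used mitigation is for the server to tell browsers not to render its pages in frames at all. The following is a minimal sketch in Python, not taken from the sources cited here: the function name add_anti_framing_headers is hypothetical, and it assumes response headers are held in a plain dictionary. The X-Frame-Options header and the Content-Security-Policy frame-ancestors directive are the standard mechanisms browsers honor for this purpose.

```python
def add_anti_framing_headers(headers: dict[str, str]) -> dict[str, str]:
    """Return a copy of the response headers that forbids framing.

    Hypothetical helper for illustration; a real web framework would
    normally set these headers through its own middleware.
    """
    hardened = dict(headers)

    # Older mechanism, still widely supported: refuse all framing.
    hardened["X-Frame-Options"] = "DENY"

    # Newer mechanism: CSP frame-ancestors. Append it to any existing
    # policy instead of overwriting the site's other directives.
    directive = "frame-ancestors 'none'"
    existing = hardened.get("Content-Security-Policy", "")
    if "frame-ancestors" not in existing:
        if existing:
            hardened["Content-Security-Policy"] = (
                f"{existing.rstrip('; ')}; {directive}"
            )
        else:
            hardened["Content-Security-Policy"] = directive
    return hardened
```

A site that legitimately needs to be embedded by specific partners would list those origins in frame-ancestors rather than using 'none'.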

Social engineering

Social engineering aims to convince a user to disclose secrets such as passwords, card numbers, etc. by, for example, impersonating a bank, a contractor, or a customer.[12]

A common scam involves fake CEO emails sent to accounting and finance departments. In early 2016, the FBI reported that the scam had cost US businesses more than $2bn in about two years.[13]

In May 2016, the Milwaukee Bucks NBA team was the victim of this type of cyber scam with a perpetrator impersonating the team's president Peter Feigin, resulting in the handover of all the team's employees' 2015 W-2 tax forms.[14]

Information security culture

Employee behavior can have a big impact on information security in organizations. Cultural concepts can help different segments of the organization work effectively towards information security, or can work against that effectiveness. "Exploring the Relationship between Organizational Culture and Information Security Culture" provides the following definition of information security culture: "ISC is the totality of patterns of behavior in an organization that contribute to the protection of information of all kinds."[15]

Andersson and Reimers (2014) found that employees often do not see themselves as part of the organization's information security "effort" and often take actions that ignore the organization's information security best interests.[citation needed] Research shows that information security culture needs to be improved continuously. In "Information Security Culture from Analysis to Change", the authors commented, "It's a never ending process, a cycle of evaluation and change or maintenance." To manage the information security culture, five steps should be taken: pre-evaluation, strategic planning, operative planning, implementation, and post-evaluation.[16]

- Pre-Evaluation: to identify the awareness of information security among employees and to analyze the current security policy.

- Strategic Planning: to come up with a better awareness program, clear targets need to be set. Clustering people into groups helps to achieve this.

- Operative Planning: a good security culture can be established based on internal communication, management buy-in, security awareness and a training program.[16]

- Implementation: four stages should be used to implement the information security culture. They are commitment of the management, communication with organizational members, courses for all organizational members, and commitment of the employees.[16]

Systems at risk

The growth in the number of computer systems, and the increasing reliance upon them by individuals, businesses, industries and governments, means that an increasing number of systems are at risk.

Financial systems

The computer systems of financial regulators and financial institutions like the U.S. Securities and Exchange Commission, SWIFT, investment banks, and commercial banks are prominent hacking targets for cybercriminals interested in manipulating markets and making illicit gains.[17] Web sites and apps that accept or store credit card numbers, brokerage accounts, and bank account information are also prominent hacking targets, because of the potential for immediate financial gain from transferring money, making purchases, or selling the information on the black market.[18] In-store payment systems and ATMs have also been tampered with in order to gather customer account data and PINs.

Utilities and industrial equipment

Computers control functions at many utilities, including coordination of telecommunications, the power grid, nuclear power plants, and valve opening and closing in water and gas networks. The Internet is a potential attack vector for such machines if connected, but the Stuxnet worm demonstrated that even equipment controlled by computers not connected to the Internet can be vulnerable. In 2014, the Computer Emergency Readiness Team, a division of the Department of Homeland Security, investigated 79 hacking incidents at energy companies.[19] Vulnerabilities in smart meters (many of which use local radio or cellular communications) can lead to problems such as billing fraud.[20]

Aviation

The aviation industry is very reliant on a series of complex systems which could be attacked.[21] A simple power outage at one airport can cause repercussions worldwide,[22] much of the system relies on radio transmissions which could be disrupted,[23] and controlling aircraft over oceans is especially dangerous because radar surveillance only extends 175 to 225 miles offshore.[24] There is also potential for attack from within an aircraft.[25]

In Europe, with the Pan-European Network Service (PENS)[26] and NewPENS,[27] and in the US with the NextGen program,[28] air navigation service providers are moving to create their own dedicated networks.

The consequences of a successful attack range from loss of confidentiality to loss of system integrity, air traffic control outages, loss of aircraft, and even loss of life.

Consumer devices

Desktop computers and laptops are commonly targeted to gather passwords or financial account information, or to construct a botnet to attack another target. Smartphones, tablet computers, smart watches, and other mobile devices, including quantified-self devices such as activity trackers, have sensors such as cameras, microphones, GPS receivers, compasses, and accelerometers which could be exploited, and may collect personal information, including sensitive health information. Wi-Fi, Bluetooth, and cell phone networks on any of these devices could be used as attack vectors, and sensors might be remotely activated after a successful breach.[29]

The increasing number of home automation devices, such as the Nest thermostat, are also potential targets.[29]

Large corporations

Large corporations are common targets. In many cases the attack is aimed at financial gain through identity theft and involves data breaches, such as the loss of millions of clients' credit card details by Home Depot,[30] Staples,[31] and Target Corporation,[32] and the breach of Equifax.[33]

Some cyberattacks are ordered by foreign governments, which engage in cyberwarfare with the intent to spread propaganda, sabotage, or spy on their targets. It is widely alleged that the Russian government sought to influence the 2016 US presidential election through Twitter and Facebook, although the effect on the result remains disputed.[34]

Medical records have been targeted for use in general identity theft, health insurance fraud, and impersonating patients to obtain prescription drugs for recreational purposes or resale.[35] Although cyber threats continue to increase, 62% of all organizations did not increase their security training in 2015.[36][37]

Not all attacks are financially motivated, however. For example, security firm HBGary Federal suffered a serious series of attacks in 2011 from the hacktivist group Anonymous in retaliation for the firm's CEO claiming to have infiltrated their group,[38][39] and in the Sony Pictures attack of 2014 the motive appears to have been to embarrass the company with data leaks and to cripple it by wiping workstations and servers.[40][41]

Automobiles

Vehicles are increasingly computerized, with engine timing, cruise control, anti-lock brakes, seat belt tensioners, door locks, airbags and advanced driver-assistance systems on many models. Additionally, connected cars may use Wi-Fi and Bluetooth to communicate with onboard consumer devices and the cell phone network.[42] Self-driving cars are expected to be even more complex.

All of these systems carry some security risk, and such issues have gained wide attention.[43][44][45] Simple examples of risk include a malicious compact disc being used as an attack vector,[46] and the car's onboard microphones being used for eavesdropping. However, if access is gained to a car's internal controller area network, the danger is much greater[42] – and in a widely publicised 2015 test, hackers remotely carjacked a vehicle from 10 miles away and drove it into a ditch.[47][48]

Manufacturers are reacting in a number of ways, with Tesla in 2016 pushing out some security fixes "over the air" into its cars' computer systems.[49]

In the area of autonomous vehicles, in September 2016 the United States Department of Transportation announced some initial safety standards, and called for states to come up with uniform policies.[50][51]

Government

Government and military computer systems are commonly attacked by activists[52][53][54][55] and foreign powers.[56][57][58][59] Local and regional government infrastructure such as traffic light controls, police and intelligence agency communications, personnel records, student records,[60] and financial systems are also potential targets as they are now all largely computerized. Passports and government ID cards that use RFID to control access to facilities can be vulnerable to cloning.

Internet of things and physical vulnerabilities

The Internet of things (IoT) is the network of physical objects such as devices, vehicles, and buildings that are embedded with electronics, software, sensors, and network connectivity that enables them to collect and exchange data[61] – and concerns have been raised that this is being developed without appropriate consideration of the security challenges involved.[62][63]

While the IoT creates opportunities for more direct integration of the physical world into computer-based systems,[64][65] it also provides opportunities for misuse. In particular, as the Internet of Things spreads widely, cyber attacks are likely to become an increasingly physical (rather than simply virtual) threat.[66] If a front door's lock is connected to the Internet, and can be locked/unlocked from a phone, then a criminal could enter the home at the press of a button from a stolen or hacked phone. People could stand to lose much more than their credit card numbers in a world controlled by IoT-enabled devices. Thieves have also used electronic means to circumvent non-Internet-connected hotel door locks.[67]

Medical systems

Medical devices have either been successfully attacked or had potentially deadly vulnerabilities demonstrated, including both in-hospital diagnostic equipment[68] and implanted devices including pacemakers[69] and insulin pumps.[70] There are many reports of hospitals and hospital organizations getting hacked, including ransomware attacks,[71][72][73][74] Windows XP exploits,[75][76] viruses,[77][78][79] and data breaches of sensitive data stored on hospital servers.[80][72][81][82][83] On 28 December 2016 the US Food and Drug Administration released its recommendations for how medical device manufacturers should maintain the security of Internet-connected devices, but provided no structure for enforcement.[84][85]

Impact of security breaches

Serious financial damage has been caused by security breaches, but because there is no standard model for estimating the cost of an incident, the only data available are those made public by the organizations involved. "Several computer security consulting firms produce estimates of total worldwide losses attributable to virus and worm attacks and to hostile digital acts in general. The 2003 loss estimates by these firms range from $13 billion (worms and viruses only) to $226 billion (for all forms of covert attacks). The reliability of these estimates is often challenged; the underlying methodology is basically anecdotal."[86] Security breaches continue to cost businesses billions of dollars, but a survey revealed that 66% of security staff do not believe senior leadership treats cyber precautions as a strategic priority.[36][third-party source needed]

However, reasonable estimates of the financial cost of security breaches can actually help organizations make rational investment decisions. According to the classic Gordon-Loeb Model analyzing the optimal investment level in information security, one can conclude that the amount a firm spends to protect information should generally be only a small fraction of the expected loss (i.e., the expected value of the loss resulting from a cyber/information security breach).[87]
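The "small fraction" in the Gordon-Loeb model can be made concrete. The model's best-known result is that, for the classes of breach-probability functions its authors analyzed, the optimal security investment does not exceed 1/e (roughly 37%) of the expected loss. The sketch below only computes that upper bound; it is an illustration rather than a full implementation of the model, and the function name and the example figure are assumptions chosen for this example.

```python
import math


def gordon_loeb_investment_cap(expected_loss: float) -> float:
    """Upper bound on rational security spending under Gordon-Loeb.

    expected_loss is the expected value of the loss from a breach
    (vulnerability x threat probability x monetary loss). The model's
    1/e bound is a ceiling, not a recommended spend.
    """
    if expected_loss < 0:
        raise ValueError("expected_loss must be non-negative")
    return expected_loss / math.e


# Illustrative figure only: an expected loss of 1,000,000 currency units.
cap = gordon_loeb_investment_cap(1_000_000)
print(f"Spend no more than about {cap:,.0f}")
```

The optimal investment for a specific firm may be well below this ceiling, depending on how quickly additional spending reduces the probability of a breach.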

Attacker motivation

As with physical security, the motivations for breaches of computer security vary between attackers. Some are thrill-seekers or vandals, some are activists, others are criminals looking for financial gain. State-sponsored attackers are now common and well resourced, but the practice started with amateurs such as Markus Hess, who hacked for the KGB, as recounted by Clifford Stoll in The Cuckoo's Egg.

A standard part of threat modelling for any particular system is to identify what might motivate an attack on that system, and who might be motivated to breach it. The level and detail of precautions will vary depending on the system to be secured. A home personal computer, bank, and classified military network face very different threats, even when the underlying technologies in use are similar.

Computer protection (countermeasures)

In computer security a countermeasure is an action, device, procedure, or technique that reduces a threat, a vulnerability, or an attack by eliminating or preventing it, by minimizing the harm it can cause, or by discovering and reporting it so that corrective action can be taken.[88][89][90]

Some common countermeasures are listed in the following sections:

Security by design

Security by design, or alternatively secure by design, means that the software has been designed from the ground up to be secure. In this case, security is considered a main feature.

Some of the techniques in this approach include:

- The principle of least privilege, where each part of the system has only the privileges that are needed for its function. That way even if an attacker gains access to that part, they have only limited access to the whole system.

- Automated theorem proving to prove the correctness of crucial software subsystems.

- Code reviews and unit testing, approaches to make modules more secure where formal correctness proofs are not possible.

- Defense in depth, where the design is such that more than one subsystem needs to be violated to compromise the integrity of the system and the information it holds.

- Default secure settings, and design to "fail secure" rather than "fail insecure" (see fail-safe for the equivalent in safety engineering). Ideally, a secure system should require a deliberate, conscious, knowledgeable and free decision on the part of legitimate authorities in order to make it insecure.

- Audit trails tracking system activity, so that when a security breach occurs, the mechanism and extent of the breach can be determined. Storing audit trails remotely, where they can only be appended to, can keep intruders from covering their tracks.

- Full disclosure of all vulnerabilities, to ensure that the "window of vulnerability" is kept as short as possible when bugs are discovered.
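Several of the techniques above can be illustrated together in a few lines. The following is a minimal sketch, assuming a hypothetical in-memory policy table (POLICY) and a hypothetical authorize function; none of these names come from the cited sources. It shows least privilege (each component is granted only the actions it needs), a fail-secure default (anything not explicitly granted is denied), and an audit trail of every decision.

```python
# Hypothetical policy: each component gets only the actions it needs.
POLICY = {
    "report-generator": frozenset({"read:sales"}),
    "backup-job": frozenset({"read:sales", "read:hr"}),
}


def authorize(policy, component, action, audit_log):
    """Return True only if the action is explicitly granted.

    Unknown components and unlisted actions fall through to a denial,
    so a gap in the policy fails secure rather than insecure.
    """
    granted = policy.get(component, frozenset())  # unknown -> no rights
    allowed = action in granted
    # Record every decision, allowed or not, for later investigation.
    audit_log.append((component, action, "allow" if allowed else "deny"))
    return allowed


audit_log = []
authorize(POLICY, "report-generator", "read:sales", audit_log)  # granted
authorize(POLICY, "report-generator", "read:hr", audit_log)     # denied
authorize(POLICY, "unknown-service", "read:sales", audit_log)   # denied
```

In a real deployment the audit log would be shipped to a separate, append-only store, as the list above notes, so that an intruder on this host could not rewrite it.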

Security architecture

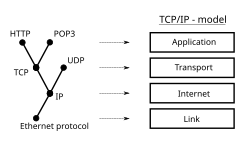

The Open Security Architecture organization defines IT security architecture as "the design artifacts that describe how the security controls (security countermeasures) are positioned, and how they relate to the overall information technology architecture. These controls serve the purpose to maintain the system's quality attributes: confidentiality, integrity, availability, accountability and assurance services".[91]

Techopedia defines security architecture as "a unified security design that addresses the necessities and potential risks involved in a certain scenario or environment. It also specifies when and where to apply security controls. The design process is generally reproducible." The key attributes of security architecture are:[92]

- the relationship of different components and how they depend on each other.

- the determination of controls based on risk assessment, good practice, finances, and legal matters.

- the standardization of controls.

Security measures

A state of computer "security" is the conceptual ideal, attained by the use of three processes: threat prevention, detection, and response. These processes are based on various policies and system components, which include the following:

- User account access controls and cryptography can protect system files and data, respectively.

- Firewalls are by far the most common prevention systems from a network security perspective, as they can (if properly configured) shield access to internal network services and block certain kinds of attacks through packet filtering. Firewalls can be either hardware- or software-based.

- Intrusion Detection System (IDS) products are designed to detect network attacks in progress and assist in post-attack forensics, while audit trails and logs serve a similar function for individual systems.

- "Response" is necessarily defined by the assessed security requirements of an individual system and may cover the range from simple upgrade of protections to notification of legal authorities, counter-attacks, and the like. In some special cases, a complete destruction of the compromised system is favored, as it may happen that not all the compromised resources are detected.

Today, computer security comprises mainly "preventive" measures, like firewalls or an exit procedure. A firewall can be defined as a way of filtering network data between a host or a network and another network, such as the Internet, and can be implemented as software running on the machine, hooking into the network stack (or, in the case of most UNIX-based operating systems such as Linux, built into the operating system kernel) to provide real time filtering and blocking. Another implementation is a so-called "physical firewall", which consists of a separate machine filtering network traffic. Firewalls are common amongst machines that are permanently connected to the Internet.
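The packet filtering described above can be sketched as a list of rules evaluated in order, with the first match deciding the outcome. This is a simplified illustration, assuming a hypothetical Rule type that matches only on source network and destination port; real firewalls also consider protocol, direction, connection state and more. The address ranges used are reserved documentation ranges.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    action: str                      # "allow" or "deny"
    source: str                      # source network in CIDR notation
    dest_port: Optional[int] = None  # None matches any destination port


def filter_packet(rules, src_ip, dest_port, default="deny"):
    """Return the action of the first matching rule, else the default.

    Defaulting to "deny" means traffic nobody planned for is blocked.
    """
    addr = ipaddress.ip_address(src_ip)
    for rule in rules:
        in_network = addr in ipaddress.ip_network(rule.source)
        port_matches = rule.dest_port in (None, dest_port)
        if in_network and port_matches:
            return rule.action
    return default


rules = [
    Rule("deny", "203.0.113.0/24"),    # block one network entirely
    Rule("allow", "0.0.0.0/0", 443),   # allow HTTPS from anywhere else
]
print(filter_packet(rules, "203.0.113.7", 443))   # deny
print(filter_packet(rules, "198.51.100.4", 443))  # allow
print(filter_packet(rules, "198.51.100.4", 22))   # deny (default)
```

Rule order matters in this design: placing the broad allow rule first would let the blocked network reach port 443.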

Some organizations are turning to big data platforms, such as Apache Hadoop, to extend data accessibility and machine learning to detect advanced persistent threats.[93][94]

However, relatively few organisations maintain computer systems with effective detection systems, and fewer still have organised response mechanisms in place. As a result, as Reuters points out: "Companies for the first time report they are losing more through electronic theft of data than physical stealing of assets".[95] The primary obstacle to effective eradication of cyber crime could be traced to excessive reliance on firewalls and other automated "detection" systems. Yet it is basic evidence gathering by using packet capture appliances that puts criminals behind bars.[citation needed]

Vulnerability management

Vulnerability management is the cycle of identifying, and remediating or mitigating, vulnerabilities,[96] especially in software and firmware. Vulnerability management is integral to computer security and network security.

Vulnerabilities can be discovered with a vulnerability scanner, which analyzes a computer system in search of known vulnerabilities,[97] such as open ports, insecure software configuration, and susceptibility to malware.
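One of the simplest checks a vulnerability scanner performs is testing which TCP ports accept connections. The sketch below shows only that step, using Python's standard socket module; the function name open_ports is hypothetical, and a real scanner goes much further by identifying services and comparing versions against known vulnerabilities. Such checks should only be run against systems one is authorized to test.

```python
import socket


def open_ports(host, ports, timeout=0.5):
    """Return the subset of TCP ports on host that accept a connection."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success and an error code otherwise,
            # instead of raising for refused or timed-out connections.
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found


if __name__ == "__main__":
    # Checks a few common ports on the local machine only.
    print(open_ports("127.0.0.1", [22, 80, 443, 8080]))
```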

Beyond vulnerability scanning, many organisations contract outside security auditors to run regular penetration tests against their systems to identify vulnerabilities. In some sectors this is a contractual requirement.[98]

Reducing vulnerabilities

While formal verification of the correctness of computer systems is possible,[99][100] it is not yet common. Operating systems formally verified include seL4,[101] and SYSGO's PikeOS[102][103] – but these make up a very small percentage of the market.

Cryptography, when properly implemented, is now virtually impossible to break directly. Breaking it requires some non-cryptographic input, such as a stolen key, stolen plaintext (at either end of the transmission), or some other extra cryptanalytic information.

Two-factor authentication is a method for mitigating unauthorized access to a system or sensitive information. It requires "something you know", such as a password or PIN, and "something you have", such as a card, dongle, cellphone, or other piece of hardware. This increases security because an unauthorized person needs both of these to gain access. In general, the stricter the security measures, the fewer successful unauthorized intrusions there will be.
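A common way to implement the "something you have" factor is a time-based one-time password (TOTP) generated by a phone app or hardware token that shares a secret with the server. The following is a minimal sketch of the HOTP and TOTP algorithms as standardized in RFC 4226 and RFC 6238, using only the Python standard library. The verify_login function and its parameters are hypothetical, and a production system would also need rate limiting, clock-drift tolerance and secure storage of the secret.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_login(password_ok: bool, submitted_code: str, secret: bytes, at=None) -> bool:
    """Hypothetical check requiring both factors to succeed."""
    # compare_digest avoids leaking information through timing differences.
    code_ok = hmac.compare_digest(submitted_code, totp(secret, at))
    return password_ok and code_ok
```

With this design, a stolen password alone is not enough: the attacker would also need the device holding the shared secret.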

Social engineering and direct computer access (physical) attacks can only be prevented by non-computer means, which can be difficult to enforce, relative to the sensitivity of the information. Training is often involved to help mitigate this risk, but even in highly disciplined environments (e.g. military organizations), social engineering attacks can still be difficult to foresee and prevent.

Inoculation, derived from inoculation theory, seeks to prevent social engineering and other fraudulent tricks or traps by instilling a resistance to persuasion attempts through exposure to similar or related attempts.[104]

It is possible to reduce an attacker's chances by keeping systems up to date with security patches and updates, using a security scanner, and hiring competent people responsible for security. The effects of data loss or damage can be reduced by careful backing up and insurance.

Hardware protection mechanisms

While hardware may be a source of insecurity, such as with microchip vulnerabilities maliciously introduced during the manufacturing process,[105][106] hardware-based or assisted computer security also offers an alternative to software-only computer security. Using devices and methods such as dongles, trusted platform modules, intrusion-aware cases, drive locks, disabling USB ports, and mobile-enabled access may be considered more secure due to the physical access (or sophisticated backdoor access) required in order to be compromised. Each of these is covered in more detail below.

- USB dongles are typically used in software licensing schemes to unlock software capabilities,[107] but they can also be seen as a way to prevent unauthorized access to a computer or other device's software. The dongle, or key, essentially creates a secure encrypted tunnel between the software application and the key. The principle is that an encryption scheme on the dongle, such as Advanced Encryption Standard (AES) provides a stronger measure of security, since it is harder to hack and replicate the dongle than to simply copy the native software to another machine and use it. Another security application for dongles is to use them for accessing web-based content such as cloud software or Virtual Private Networks (VPNs).[108] In addition, a USB dongle can be configured to lock or unlock a computer.[109]

- Trusted platform modules (TPMs) secure devices by integrating cryptographic capabilities onto access devices, through the use of microprocessors, or so-called computers-on-a-chip. TPMs used in conjunction with server-side software offer a way to detect and authenticate hardware devices, preventing unauthorized network and data access.[110]

- Computer case intrusion detection refers to a push-button switch which is triggered when a computer case is opened. The firmware or BIOS is programmed to show an alert to the operator when the computer is booted up the next time.

- Drive locks are essentially software tools to encrypt hard drives, making them inaccessible to thieves.[111] Tools exist specifically for encrypting external drives as well.[112]

- Disabling USB ports is a security option for preventing unauthorized and malicious access to an otherwise secure computer. Infected USB dongles connected to a network from a computer inside the firewall are considered by the magazine Network World to be the most common hardware threat facing computer networks.[113]

- Mobile-enabled access devices are growing in popularity due to the ubiquitous nature of cell phones. Built-in capabilities such as Bluetooth, the newer Bluetooth low energy (LE), near field communication (NFC) on non-iOS devices and biometric validation such as thumbprint readers, as well as QR code reader software designed for mobile devices, offer new, secure ways for mobile phones to connect to access control systems. These control systems provide computer security and can also be used for controlling access to secure buildings.[114]

Secure operating systems

One use of the term "computer security" refers to technology that is used to implement secure operating systems. In the 1980s the United States Department of Defense (DoD) used the "Orange Book"[115] standards, but the current international standard ISO/IEC 15408, "Common Criteria" defines a number of progressively more stringent Evaluation Assurance Levels. Many common operating systems meet the EAL4 standard of being "Methodically Designed, Tested and Reviewed", but the formal verification required for the highest levels means that they are uncommon. An example of an EAL6 ("Semiformally Verified Design and Tested") system is Integrity-178B, which is used in the Airbus A380[116] and several military jets.[117]

Secure coding

In software engineering, secure coding aims to guard against the accidental introduction of security vulnerabilities. It is also possible to create software designed from the ground up to be secure. Such systems are "secure by design". Beyond this, formal verification aims to prove the correctness of the algorithms underlying a system,[118] which is important for cryptographic protocols, for example.

Capabilities and access control lists[edit]

Within computer systems, two of many security models capable of enforcing privilege separation are access control lists (ACLs) and capability-based security. Using ACLs to confine programs has been proven to be insecure in many situations, such as if the host computer can be tricked into indirectly allowing restricted file access, an issue known as the confused deputy problem. It has also been shown that the promise of ACLs of giving access to an object to only one person can never be guaranteed in practice. Both of these problems are resolved by capabilities. This does not mean practical flaws exist in all ACL-based systems, but only that the designers of certain utilities must take responsibility to ensure that they do not introduce flaws.[citation needed]

Capabilities have been mostly restricted to research operating systems, while commercial OSs still use ACLs. Capabilities can, however, also be implemented at the language level, leading to a style of programming that is essentially a refinement of standard object-oriented design. An open source project in the area is the E language.
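The confused deputy problem and the capability-style remedy can be sketched with a toy in-memory file store. This is an illustrative model only: `ToyFS`, the principal names and the "compiler" service are hypothetical, and real operating systems implement both models very differently.

```python
class ToyFS:
    """Toy file store where each file has an ACL of principals allowed to write."""

    def __init__(self):
        self.files = {}
        self.acl = {}

    def create(self, path, writers, content=""):
        self.files[path] = content
        self.acl[path] = set(writers)

    def write(self, principal, path, data):
        # ACL check: is the *calling identity* on the file's list?
        if principal not in self.acl[path]:
            raise PermissionError(f"{principal} may not write {path}")
        self.files[path] = data

    def open_for_write(self, principal, path):
        # The check happens once, when the capability is handed out.
        if principal not in self.acl[path]:
            raise PermissionError(f"{principal} may not write {path}")

        def write_capability(data):
            self.files[path] = data

        return write_capability


def compile_acl_style(fs, output_path, source):
    # The deputy writes under its OWN identity, so a client can name any
    # file the deputy is allowed to write, including ones the client is not.
    fs.write("compiler", output_path, "compiled:" + source)


def compile_capability_style(write_output, source):
    # The deputy holds no ambient authority over files; it can only use
    # the write capability the client was able to obtain for itself.
    write_output("compiled:" + source)


if __name__ == "__main__":
    fs = ToyFS()
    fs.create("billing.log", {"compiler"}, "charges")
    fs.create("out.txt", {"user", "compiler"})

    compile_acl_style(fs, "billing.log", "src")  # deputy is confused
    print(fs.files["billing.log"])               # billing data overwritten

    cap = fs.open_for_write("user", "out.txt")   # user can only mint this one
    compile_capability_style(cap, "src")
    print(fs.files["out.txt"])
```

In the capability variant the user cannot obtain a write capability for `billing.log` in the first place, so there is nothing to pass to the deputy that would let it be misused.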

End user security training[edit]

Repeated education/training in security "best practices" can have a marked effect on compliance with good end user network security habits—which particularly protect against phishing, ransomware and other forms of malware which have a social engineering aspect.[119]

Response to breaches[edit]

Responding forcefully to attempted security breaches (in the manner that one would for attempted physical security breaches) is often very difficult for a variety of reasons:

- Identifying attackers is difficult, as they are often in a different jurisdiction to the systems they attempt to breach, and operate through proxies, temporary anonymous dial-up accounts, wireless connections, and other anonymising procedures which make backtracing difficult and are often located in yet another jurisdiction. If they successfully breach security, they are often able to delete logs to cover their tracks.

- The sheer number of attempted attacks is so large that organisations cannot spend time pursuing each attacker. A typical home user with a permanent connection (e.g., a cable modem) will be attacked at least several times per day, so more attractive targets can be presumed to see many more. Note, however, that the bulk of these attacks are made by automated vulnerability scanners and computer worms.

- Law enforcement officers are often unfamiliar with information technology, and so lack the skills and interest to pursue attackers. There are also budgetary constraints. It has been argued that the high cost of technology, such as DNA testing, and improved forensics mean less money for other kinds of law enforcement, so the overall proportion of criminals who are not dealt with goes up as the cost of the technology increases. In addition, identifying attackers across a network may require logs from various points in the network. In many countries, the release of these records to law enforcement (unless they are voluntarily surrendered by a network administrator or a system administrator) requires a search warrant, and, depending on the circumstances, the legal proceedings required can be drawn out to the point where the records have either been routinely destroyed or the information is no longer relevant.

- The United States government spends the largest amount of money every year on cyber security. The United States has a yearly budget of 28 billion dollars. Canada has the 2nd highest annual budget at 1 billion dollars. Australia has the third highest budget with only 70 million dollars.[120]

Types of security and privacy[edit]

- Access control

- Anti-keyloggers

- Anti-malware

- Anti-spyware

- Anti-subversion software

- Anti-tamper software

- Antivirus software

- Cryptographic software

- Computer-aided dispatch (CAD)

- Firewall

- Intrusion detection system (IDS)

- Intrusion prevention system (IPS)

- Log management software

- Records management

- Sandbox

- Security information management

- SIEM

- Anti-theft

- Parental control

- Software and operating system updating

Notable attacks and breaches[edit]

Some illustrative examples of different types of computer security breaches are given below.

Robert Morris and the first computer worm[edit]

In 1988, only 60,000 computers were connected to the Internet, and most were mainframes, minicomputers and professional workstations. On 2 November 1988, many started to slow down, because they were running malicious code that demanded processor time and that spread itself to other computers – the first internet "computer worm".[121] The software was traced back to 23-year-old Cornell University graduate student Robert Tappan Morris, Jr., who said "he wanted to count how many machines were connected to the Internet".[121]

Rome Laboratory[edit]

In 1994, over a hundred intrusions were made by unidentified crackers into the Rome Laboratory, the US Air Force's main command and research facility. Using trojan horses, hackers were able to obtain unrestricted access to Rome's networking systems and remove traces of their activities. The intruders were able to obtain classified files, such as air tasking order systems data, and were furthermore able to penetrate connected networks of the National Aeronautics and Space Administration's Goddard Space Flight Center, Wright-Patterson Air Force Base, some Defense contractors, and other private sector organizations, by posing as a trusted Rome center user.[122]

TJX customer credit card details[edit]

In early 2007, American apparel and home goods company TJX announced that it was the victim of an unauthorized computer systems intrusion[123] and that the hackers had accessed a system that stored data on credit card, debit card, check, and merchandise return transactions.[124]

Stuxnet attack[edit]

The computer worm known as Stuxnet reportedly ruined almost one-fifth of Iran's nuclear centrifuges[125] by disrupting industrial programmable logic controllers (PLCs) in a targeted attack generally believed to have been launched by Israel and the United States[126][127][128][129] – although neither has publicly admitted this.

Global surveillance disclosures[edit]

In early 2013, documents provided by Edward Snowden were published by The Washington Post and The Guardian[130][131] exposing the massive scale of NSA global surveillance. There were also indications that the NSA may have inserted a backdoor in a NIST standard for encryption.[132] This standard was later withdrawn due to widespread criticism.[133] The NSA was additionally revealed to have tapped the links between Google's data centres.[134]

Target and Home Depot breaches[edit]

A Russian/Ukrainian hacking ring known as "Rescator" broke into Target Corporation computers in 2013, stealing roughly 40 million credit cards,[135] and then into Home Depot computers in 2014, stealing between 53 and 56 million credit card numbers.[136] Warnings were delivered at both corporations, but ignored; physical security breaches using self checkout machines are believed to have played a large role. "The malware utilized is absolutely unsophisticated and uninteresting," says Jim Walter, director of threat intelligence operations at security technology company McAfee – meaning that the heists could have easily been stopped by existing antivirus software had administrators responded to the warnings. The size of the thefts has resulted in major attention from state and Federal United States authorities and the investigation is ongoing.

Office of Personnel Management data breach[edit]

In April 2015, the Office of Personnel Management discovered it had been hacked more than a year earlier in a data breach, resulting in the theft of approximately 21.5 million personnel records handled by the office.[137] The Office of Personnel Management hack has been described by federal officials as among the largest breaches of government data in the history of the United States.[138] Data targeted in the breach included personally identifiable information such as Social Security Numbers,[139] names, dates and places of birth, addresses, and fingerprints of current and former government employees as well as anyone who had undergone a government background check.[140] It is believed the hack was perpetrated by Chinese hackers but the motivation remains unclear.[141]

Ashley Madison breach[edit]

In July 2015, a hacker group known as "The Impact Team" successfully breached the extramarital relationship website Ashley Madison. The group claimed that they had taken not only company data but user data as well. After the breach, The Impact Team dumped emails from the company's CEO to prove their point, and threatened to dump customer data unless the website was taken down permanently. With this initial data release, the group stated "Avid Life Media has been instructed to take Ashley Madison and Established Men offline permanently in all forms, or we will release all customer records, including profiles with all the customers' secret sexual fantasies and matching credit card transactions, real names and addresses, and employee documents and emails. The other websites may stay online."[142] When Avid Life Media, the parent company that created the Ashley Madison website, did not take the site offline, The Impact Team released two more compressed files, one 9.7GB and the second 20GB. After the second data dump, Avid Life Media CEO Noel Biderman resigned, but the website remained functional.

Legal issues and global regulation[edit]

Conflict of laws in cyberspace has become a major cause of concern for the computer security community. Some of the main challenges and complaints about the antivirus industry are the lack of global web regulations and of a global base of common rules by which to judge, and eventually punish, cyber crimes and cyber criminals. There is no global cyber law or cyber security treaty that can be invoked to enforce global cyber security.

International legal issues of cyber attacks are complicated in nature. Even if an antivirus firm locates the cybercriminal behind the creation of a particular virus or piece of malware or form of cyber attack, often the local authorities cannot take action due to lack of laws under which to prosecute.[143][144] Authorship attribution for cyber crimes and cyber attacks is a major problem for all law enforcement agencies.

"[Computer viruses] switch from one country to another, from one jurisdiction to another – moving around the world, using the fact that we don't have the capability to globally police operations like this. So the Internet is as if someone [had] given free plane tickets to all the online criminals of the world."[143] The use of dynamic DNS, fast flux and bulletproof servers has added its own complexities to this situation.

Role of government[edit]

The role of the government is to make regulations to force companies and organizations to protect their systems, infrastructure and information from any cyberattacks, but also to protect its own national infrastructure such as the national power-grid.[145]

The question of whether the government should intervene in the regulation of cyberspace is a contentious one. For as long as it has existed, cyberspace has been regarded as a virtual space free of government intervention. While everyone agrees that improving cybersecurity is vital, it is debated whether the government is the best actor to solve this issue. Many government officials and experts think that the government should step in and that there is a crucial need for regulation, mainly because the private sector has failed to solve the cybersecurity problem efficiently. R. Clarke said during a panel discussion at the RSA Security Conference in San Francisco that he believes the "industry only responds when you threaten regulation. If the industry doesn't respond (to the threat), you have to follow through."[146] On the other hand, executives from the private sector agree that improvements are necessary, but think that government intervention would affect their ability to innovate efficiently.

International actions[edit]

Many different teams and organisations exist, including:

- The Forum of Incident Response and Security Teams (FIRST) is the global association of CSIRTs.[147] The US-CERT, AT&T, Apple, Cisco, McAfee, Microsoft are all members of this international team.[148]

- The Council of Europe helps protect societies worldwide from the threat of cybercrime through the Convention on Cybercrime.[149]

- The purpose of the Messaging Anti-Abuse Working Group (MAAWG) is to bring the messaging industry together to work collaboratively and to successfully address the various forms of messaging abuse, such as spam, viruses, denial-of-service attacks and other messaging exploitations.[150] France Telecom, Facebook, AT&T, Apple, Cisco, Sprint are some of the members of the MAAWG.[151]

- The European Network and Information Security Agency (ENISA) is an agency of the European Union with the objective of improving network and information security in the European Union.

Europe[edit]

CSIRTs in Europe collaborate in the TERENA task force TF-CSIRT. TERENA's Trusted Introducer service provides an accreditation and certification scheme for CSIRTs in Europe. A full list of known CSIRTs in Europe is available from the Trusted Introducer website.

National actions[edit]

Computer emergency response teams[edit]

Most countries have their own computer emergency response team to protect network security.

Canada[edit]

On 3 October 2010, Public Safety Canada unveiled Canada's Cyber Security Strategy, following a Speech from the Throne commitment to boost the security of Canadian cyberspace.[152][153] The aim of the strategy is to strengthen Canada's "cyber systems and critical infrastructure sectors, support economic growth and protect Canadians as they connect to each other and to the world."[153] Three main pillars define the strategy: securing government systems, partnering to secure vital cyber systems outside the federal government, and helping Canadians to be secure online.[153] The strategy involves multiple departments and agencies across the Government of Canada.[154] The Cyber Incident Management Framework for Canada outlines these responsibilities, and provides a plan for coordinated response between government and other partners in the event of a cyber incident.[155] The Action Plan 2010–2015 for Canada's Cyber Security Strategy outlines the ongoing implementation of the strategy.[156]

Public Safety Canada's Canadian Cyber Incident Response Centre (CCIRC) is responsible for mitigating and responding to threats to Canada's critical infrastructure and cyber systems. The CCIRC provides support to mitigate cyber threats, technical support to respond and recover from targeted cyber attacks, and provides online tools for members of Canada's critical infrastructure sectors.[157] The CCIRC posts regular cyber security bulletins on the Public Safety Canada website.[158] The CCIRC also operates an online reporting tool where individuals and organizations can report a cyber incident.[159] Canada's Cyber Security Strategy is part of a larger, integrated approach to critical infrastructure protection, and functions as a counterpart document to the National Strategy and Action Plan for Critical Infrastructure.[154]

On 27 September 2010, Public Safety Canada partnered with STOP.THINK.CONNECT, a coalition of non-profit, private sector, and government organizations dedicated to informing the general public on how to protect themselves online.[160] On 4 February 2014, the Government of Canada launched the Cyber Security Cooperation Program.[161] The program is a $1.5 million five-year initiative aimed at improving Canada's cyber systems through grants and contributions to projects in support of this objective.[162] Public Safety Canada aims to begin an evaluation of Canada's Cyber Security Strategy in early 2015.[154] Public Safety Canada administers and routinely updates the GetCyberSafe portal for Canadian citizens, and carries out Cyber Security Awareness Month during October.[163]

China[edit]

China's Central Leading Group for Internet Security and Informatization (Chinese: 中央网络安全和信息化领导小组) was established on 27 February 2014. This Leading Small Group (LSG) of the Communist Party of China is headed by General Secretary Xi Jinping himself and is staffed with relevant Party and state decision-makers. The LSG was created to overcome the incoherent policies and overlapping responsibilities that characterized China's former cyberspace decision-making mechanisms. The LSG oversees policy-making in the economic, political, cultural, social and military fields as they relate to network security and IT strategy. This LSG also coordinates major policy initiatives in the international arena that promote norms and standards favored by the Chinese government and that emphasize the principle of national sovereignty in cyberspace.[164]

Germany[edit]

Germany launched a National Cyber Defense Initiative: on 16 June 2011, the German Minister for Home Affairs officially opened the new German National Center for Cyber Defense (NCAZ, Nationales Cyber-Abwehrzentrum), located in Bonn. The NCAZ cooperates closely with the Federal Office for Information Security (BSI, Bundesamt für Sicherheit in der Informationstechnik), the Federal Police Organisation (BKA, Bundeskriminalamt), the Federal Intelligence Service (BND, Bundesnachrichtendienst), the Military Intelligence Service (MAD, Amt für den Militärischen Abschirmdienst) and other national organisations in Germany that deal with aspects of national security. According to the Minister, the primary task of the new organization, founded on 23 February 2011, is to detect and prevent attacks against the national infrastructure; the Minister mentioned incidents like Stuxnet.

India[edit]

Some provisions for cyber security have been incorporated into rules framed under the Information Technology Act 2000.[165]

The National Cyber Security Policy 2013 is a policy framework by the Ministry of Electronics and Information Technology (MeitY) which aims to protect public and private infrastructure from cyber attacks, and to safeguard "information, such as personal information (of web users), financial and banking information and sovereign data". CERT-In is the nodal agency which monitors cyber threats in the country. The post of National Cyber Security Coordinator has also been created in the Prime Minister's Office (PMO).

The Indian Companies Act 2013 has also introduced cyber law and cyber security obligations on the part of Indian directors. Some provisions for cyber security were also incorporated into rules framed under the 2013 update to the Information Technology Act 2000.[166]

Portugal[edit]

The CNCS in Portugal promotes the use of cyberspace in a free, reliable and secure manner, through the continuous improvement of national cybersecurity and international cooperation. Nano IT Security is a Portuguese company specialized in cyber security services, penetration testing and vulnerability analysis.

Pakistan[edit]

Cyber-crime has risen rapidly in Pakistan. There are about 34 million Internet users and 133.4 million mobile subscribers in Pakistan. According to the Cyber Crime Unit (CCU), a branch of the Federal Investigation Agency, only 62 cases were reported to the unit in 2007 and 287 cases in 2008; the number dropped in 2009, but in 2010 more than 312 cases were registered. However, there are many unreported incidents of cyber-crime.[167]

"Pakistan's Cyber Crime Bill 2007", the first pertinent law, focuses on electronic crimes, for example cyber-terrorism, criminal access, electronic system fraud, electronic forgery, and misuse of encryption.[167]

The National Response Centre for Cyber Crime (NR3C) – FIA is a law enforcement agency dedicated to fighting cyber crime. This hi-tech crime-fighting unit was set up in 2007 to identify and curb the phenomenon of technological abuse in society.[168] Certain private firms are also working in cooperation with the government to improve cyber security and curb cyber attacks.[169]

South Korea[edit]

Following cyber attacks in the first half of 2013, when the government, news media, television station, and bank websites were compromised, the national government committed to the training of 5,000 new cybersecurity experts by 2017. The South Korean government blamed its northern counterpart for these attacks, as well as incidents that occurred in 2009, 2011,[170] and 2012, but Pyongyang denies the accusations.[171]

United States[edit]

Legislation[edit]

The 1986 18 U.S.C. § 1030, more commonly known as the Computer Fraud and Abuse Act is the key legislation. It prohibits unauthorized access or damage of "protected computers" as defined in .

Although various other measures have been proposed, such as the "Cybersecurity Act of 2010 – S. 773" in 2009, the "International Cybercrime Reporting and Cooperation Act – H.R.4962"[172] and "Protecting Cyberspace as a National Asset Act of 2010 – S.3480"[173] in 2010 – none of these has succeeded.

Executive Order 13636, "Improving Critical Infrastructure Cybersecurity", was signed on 12 February 2013.

Agencies[edit]

The Department of Homeland Security has a dedicated division responsible for the response system, risk management program and requirements for cybersecurity in the United States called the National Cyber Security Division.[174][175] The division is home to US-CERT operations and the National Cyber Alert System.[175] The National Cybersecurity and Communications Integration Center brings together government organizations responsible for protecting computer networks and networked infrastructure.[176]

The third priority of the Federal Bureau of Investigation (FBI) is to: "Protect the United States against cyber-based attacks and high-technology crimes",[177] and they, along with the National White Collar Crime Center (NW3C), and the Bureau of Justice Assistance (BJA) are part of the multi-agency task force, The Internet Crime Complaint Center, also known as IC3.[178]

In addition to its own specific duties, the FBI participates alongside non-profit organizations such as InfraGard.[179][180]

The criminal division of the United States Department of Justice operates a section called the Computer Crime and Intellectual Property Section (CCIPS). The CCIPS is in charge of investigating computer crime and intellectual property crime and specializes in the search and seizure of digital evidence in computers and networks.[181]

The United States Cyber Command, also known as USCYBERCOM, is tasked with the defense of specified Department of Defense information networks and ensures "the security, integrity, and governance of government and military IT infrastructure and assets".[182] It has no role in the protection of civilian networks.[183][184]

The U.S. Federal Communications Commission's role in cybersecurity is to strengthen the protection of critical communications infrastructure, to assist in maintaining the reliability of networks during disasters, to aid in swift recovery after, and to ensure that first responders have access to effective communications services.[185]

The Food and Drug Administration has issued guidance for medical devices,[186] and the National Highway Traffic Safety Administration[187] is concerned with automotive cybersecurity. After being criticized by the Government Accountability Office,[188] and following successful attacks on airports and claimed attacks on airplanes, the Federal Aviation Administration has devoted funding to securing systems on board the planes of private manufacturers, and the Aircraft Communications Addressing and Reporting System.[189] Concerns have also been raised about the future Next Generation Air Transportation System.[190]

Computer emergency readiness team[edit]

"Computer emergency response team" is a name given to expert groups that handle computer security incidents. In the US, two distinct organizations exist, although they do work closely together.

- US-CERT: part of the National Cyber Security Division of the United States Department of Homeland Security.[191]

- CERT/CC: created by the Defense Advanced Research Projects Agency (DARPA) and run by the Software Engineering Institute (SEI).

Modern warfare[edit]

There is growing concern that cyberspace will become the next theater of warfare. As Mark Clayton from the Christian Science Monitor described in an article titled "The New Cyber Arms Race":

In the future, wars will not just be fought by soldiers with guns or with planes that drop bombs. They will also be fought with the click of a mouse a half a world away that unleashes carefully weaponized computer programs that disrupt or destroy critical industries like utilities, transportation, communications, and energy. Such attacks could also disable military networks that control the movement of troops, the path of jet fighters, the command and control of warships.[192]

This has led to new terms such as cyberwarfare and cyberterrorism. The United States Cyber Command was created in 2009[193] and many other countries have similar forces.

Job market[edit]

Cybersecurity is a fast-growing field of IT concerned with reducing organizations' risk of hack or data breach.[194] According to research from the Enterprise Strategy Group, 46% of organizations say that they have a "problematic shortage" of cybersecurity skills in 2016, up from 28% in 2015.[195] Commercial, government and non-governmental organizations all employ cybersecurity professionals. The fastest increases in demand for cybersecurity workers are in industries managing increasing volumes of consumer data such as finance, health care, and retail.[196] However, the use of the term "cybersecurity" is more prevalent in government job descriptions.[197]

Typical cyber security job titles and descriptions include:[198]

- Security analyst

- Analyzes and assesses vulnerabilities in the infrastructure (software, hardware, networks), investigates using available tools and countermeasures to remedy the detected vulnerabilities, and recommends solutions and best practices. Analyzes and assesses damage to the data/infrastructure as a result of security incidents, examines available recovery tools and processes, and recommends solutions. Tests for compliance with security policies and procedures. May assist in the creation, implementation, or management of security solutions.

- Security engineer

- Performs security monitoring, security and data/logs analysis, and forensic analysis, to detect security incidents, and mounts the incident response. Investigates and utilizes new technologies and processes to enhance security capabilities and implement improvements. May also review code or perform other security engineering methodologies.

- Security architect

- Designs a security system or major components of a security system, and may head a security design team building a new security system.

- Security administrator

- Installs and manages organization-wide security systems. May also take on some of the tasks of a security analyst in smaller organizations.

- Chief Information Security Officer (CISO)

- A high-level management position responsible for the entire information security division/staff. The position may include hands-on technical work.

- Chief Security Officer (CSO)

- A high-level management position responsible for the entire security division/staff. A newer position now deemed needed as security risks grow.

- Security Consultant/Specialist/Intelligence

- Broad titles that encompass any one or all of the other roles or titles tasked with protecting computers, networks, software, data or information systems against viruses, worms, spyware, malware, intrusion detection, unauthorized access, denial-of-service attacks, and an ever increasing list of attacks by hackers acting as individuals or as part of organized crime or foreign governments.

Student programs are also available to people interested in beginning a career in cybersecurity.[199][200] Meanwhile, a flexible and effective option for information security professionals of all experience levels to keep studying is online security training, including webcasts.[201][202][203] A wide range of certified courses are also available.[204]

In the United Kingdom, a nationwide set of cyber security forums, known as the U.K Cyber Security Forum, was established with the support of the Government's cyber security strategy[205] in order to encourage start-ups and innovation and to address the skills gap[206] identified by the U.K Government.

Terminology[edit]

The following terms, used with regard to engineering secure systems, are explained below.

- Access authorization restricts access to a computer to a group of users through the use of authentication systems. These systems can protect either the whole computer – such as through an interactive login screen – or individual services, such as an FTP server. There are many methods for identifying and authenticating users, such as passwords, identification cards, and, more recently, smart cards and biometric systems.

- Anti-virus software consists of computer programs that attempt to identify, thwart and eliminate computer viruses and other malicious software (malware).

- Applications are executable code, so general practice is to disallow users the power to install them; to install only those which are known to be reputable – and to reduce the attack surface by installing as few as possible. They are typically run with least privilege, with a robust process in place to identify, test and install any released security patches or updates for them.

- Authentication techniques can be used to ensure that communication end-points are who they say they are.

- Automated theorem proving and other verification tools can enable critical algorithms and code used in secure systems to be mathematically proven to meet their specifications.

- Backups are one or more copies kept of important computer files. Typically, multiple copies (e.g. daily, weekly and monthly) will be kept in different locations away from the original, so that they are secure from damage if the original location has its security breached by an attacker, or is destroyed or damaged by natural disasters.

- Capability and access control list techniques can be used to ensure privilege separation and mandatory access control. Their use is discussed in the section on capabilities and access control lists.

- Chain of trust techniques can be used to attempt to ensure that all software loaded has been certified as authentic by the system's designers.

- Confidentiality is the nondisclosure of information except to another authorized person.[207]

- Cryptographic techniques can be used to defend data in transit between systems, reducing the probability that data exchanged between systems can be intercepted or modified.

- Cyberwarfare is an internet-based conflict that involves politically motivated attacks on information and information systems. Such attacks can, for example, disable official websites and networks, disrupt or disable essential services, steal or alter classified data, and cripple financial systems.

- Data integrity is the accuracy and consistency of stored data, indicated by an absence of any alteration in data between two updates of a data record.[208]

- Encryption is used to protect the message from the eyes of others. Cryptographically secure ciphers are designed to make any practical attempt of breaking infeasible. Symmetric-key ciphers are suitable for bulk encryption using shared keys, and public-key encryption using digital certificates can provide a practical solution for the problem of securely communicating when no key is shared in advance.

- Endpoint security software helps networks to prevent exfiltration (data theft) and virus infection at network entry points made vulnerable by the prevalence of potentially infected portable computing devices, such as laptops and mobile devices, and external storage devices, such as USB drives.[209]

- Firewalls serve as a gatekeeper system between networks, allowing only traffic that matches defined rules. They often include detailed logging, and may include intrusion detection and intrusion prevention features. They are near-universal between company local area networks and the Internet, but can also be used internally to impose traffic rules between networks if network segmentation is configured.

- Honey pots are computers that are intentionally left vulnerable to attack by crackers. They can be used to catch crackers and to identify their techniques.

- Intrusion-detection systems can scan a network for people who are on the network but should not be there, or who are doing things that they should not be doing, for example trying a lot of passwords to gain access to the network.

- A microkernel is an approach to operating system design which has only the near-minimum amount of code running at the most privileged level – and runs other elements of the operating system such as device drivers, protocol stacks and file systems, in the safer, less privileged user space.

- Pinging. The standard "ping" application can be used to test if an IP address is in use. If it is, attackers may then try a port scan to detect which services are exposed.

- A port scan is used to probe an IP address for open ports, and hence identify network services running there.

- Social engineering is the use of deception to manipulate individuals to breach security.
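
As a minimal sketch of the data integrity entry in the list above: one common way to detect alteration of a stored record between two updates is to keep a cryptographic digest alongside it and recompute that digest before the record is used. The example uses SHA-256 from Python's standard `hashlib` module; the function names `fingerprint` and `is_unaltered` and the sample record are illustrative assumptions, not part of any standard.

```python
import hashlib
import hmac


def fingerprint(record: bytes) -> str:
    """Return the SHA-256 digest of a record as a hex string."""
    return hashlib.sha256(record).hexdigest()


def is_unaltered(record: bytes, stored_digest: str) -> bool:
    """Check a record against the digest saved at its last update."""
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(fingerprint(record), stored_digest)


# Hypothetical record and the digest stored when it was last updated.
original = b"account=42;balance=100"
saved = fingerprint(original)
```

An unkeyed digest like this only reveals a change if the digest itself is out of the attacker's reach; where an attacker could rewrite both the record and its digest, a keyed construction such as an HMAC would be the more appropriate choice.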

Cyberattack

A cyberattack is any type of offensive maneuver that targets computer information systems, infrastructures, computer networks, or personal computer devices. It may be carried out by nation-states, individuals, groups, societies or organizations, and may originate from an anonymous source. A cyberattack may steal, alter, or destroy a specified target by hacking into a susceptible system.[210]

In computers and computer networks an attack is any attempt to expose, alter, disable, destroy, steal or gain unauthorized access to or make unauthorized use of an asset.[211]

Cyberattacks can be labelled as a cyber campaign, cyberwarfare or cyberterrorism, depending on the context. They can range from installing spyware on a personal computer to attempting to destroy the infrastructure of entire nations. Cyberattacks have become increasingly sophisticated and dangerous, as the Stuxnet worm demonstrated.[212]

User behavior analytics and security information and event management (SIEM) are used to help prevent these attacks.

Legal experts are seeking to limit use of the term to incidents causing physical damage, distinguishing it from the more routine data breaches and broader hacking activities.[213]

Definitions

The Internet Engineering Task Force defines attack in RFC 2828 as:[88]

- an assault on system security that derives from an intelligent threat, i.e., an intelligent act that is a deliberate attempt (especially in the sense of a method or technique) to evade security services and violate the security policy of a system.

CNSS Instruction No. 4009, dated 26 April 2010, by the Committee on National Security Systems of the United States of America[89] defines an attack as:

- Any kind of malicious activity that attempts to collect, disrupt, deny, degrade, or destroy information system resources or the information itself.

The increasing dependence of modern society on information and computer networks (both in private and public sectors, including the military)[214][215][216] has led to new terms like cyber attack and cyberwarfare.

CNSS Instruction No. 4009[89] defines a cyber attack as:

- An attack, via cyberspace, targeting an enterprise’s use of cyberspace for the purpose of disrupting, disabling, destroying, or maliciously controlling a computing environment/infrastructure; or destroying the integrity of the data or stealing controlled information.

Cyberwarfare and cyberterrorism

Cyberwarfare uses techniques of defending and attacking information and computer networks that inhabit cyberspace, often through a prolonged cyber campaign or series of related campaigns. It denies an opponent's ability to do the same, while employing technological instruments of war to attack an opponent's critical computer systems. Cyberterrorism, on the other hand, is "the use of computer network tools to shut down critical national infrastructures (such as energy, transportation, government operations) or to coerce or intimidate a government or civilian population".[217] That means the end result of both cyberwarfare and cyberterrorism is the same: to damage critical infrastructures and computer systems linked together within the confines of cyberspace.

Factors

Three factors contribute to why cyber-attacks are launched against a state or an individual: the fear factor, spectacularity factor, and vulnerability factor.

Spectacularity factor

The spectacularity factor is a measure of the actual damage achieved by an attack, meaning that the attack creates direct losses (usually loss of availability or loss of income) and garners negative publicity. On February 8, 2000, a Denial of Service attack severely reduced traffic to many major sites, including Amazon, Buy.com, CNN, and eBay (the attack continued to affect still other sites the next day).[218] Amazon reportedly estimated the loss of business at $600,000.[218]

Vulnerability factor

The vulnerability factor reflects how vulnerable an organization or government establishment is to cyber-attacks. An organization can be vulnerable to a denial of service attack, and a government establishment can have its web pages defaced. A computer network attack disrupts the integrity or authenticity of data, usually through malicious code that alters program logic that controls data, leading to errors in output.[219]

Professional hackers to cyberterrorists

Professional hackers, either working on their own or employed by the government or military service, can find computer systems with vulnerabilities that lack the appropriate security software. Once found, they can infect systems with malicious code and then remotely control the system or computer by sending commands to view content or to disrupt other computers. For the malicious code to work, there needs to be a pre-existing flaw within the computer, such as missing antivirus protection or a faulty system configuration. Many professional hackers go on to become cyberterrorists, where a new set of rules governs their actions. Cyberterrorists have premeditated plans, and their attacks are not born of rage. They need to develop their plans step by step and acquire the appropriate software to carry out an attack. They usually have political agendas and target political structures. Cyberterrorists are hackers with a political motivation; their attacks can impact political structures through this corruption and destruction.[220] They also target civilians, civilian interests and civilian installations. Cyberterrorists attack persons or property and cause enough harm to generate fear.

Types of attack

An attack can be active or passive.[88]

- An "active attack" attempts to alter system resources or affect their operation.

- A "passive attack" attempts to learn or make use of information from the system but does not affect system resources (e.g., wiretapping).

An attack can be perpetrated by an insider or from outside the organization:[88]

- An "inside attack" is an attack initiated by an entity inside the security perimeter (an "insider"), i.e., an entity that is authorized to access system resources but uses them in a way not approved by those who granted the authorization.

- An "outside attack" is initiated from outside the perimeter, by an unauthorized or illegitimate user of the system (an "outsider"). In the Internet, potential outside attackers range from amateur pranksters to organized criminals, international terrorists, and hostile governments.

The term "attack" relates to some other basic security terms as shown in the following diagram:[88]

    + - - - - - - - - - - - - +  + - - - - +  + - - - - - - - - - - -+
    | An Attack:              |  |Counter- |  | A System Resource:   |
    | i.e., A Threat Action   |  | measure |  | Target of the Attack |
    | +----------+            |  |         |  | +-----------------+  |
    | | Attacker |<==================||<=========                 |  |
    | |   i.e.,  |   Passive  |  |         |  | |  Vulnerability  |  |
    | | A Threat |<=================>||<========>                 |  |
    | |  Agent   |  or Active |  |         |  | +-------|||-------+  |
    | +----------+   Attack   |  |         |  |         VVV          |
    |                         |  |         |  | Threat Consequences  |
    + - - - - - - - - - - - - +  + - - - - +  + - - - - - - - - - - -+

A resource (physical or logical), called an asset, can have one or more vulnerabilities that can be exploited by a threat agent in a threat action. As a result, the confidentiality, integrity or availability of resources may be compromised. Potentially, the damage may extend to resources in addition to the one initially identified as vulnerable, including further resources of the organization, and the resources of other involved parties (customers, suppliers).

These three properties, the so-called CIA triad, are the basis of information security.

An attack is active when it attempts to alter system resources or affect their operation, so it compromises integrity or availability. A passive attack attempts to learn or make use of information from the system but does not affect system resources, so it compromises confidentiality.
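
The two classifications above (active or passive, inside or outside) and their link to the CIA triad can be sketched as a small data model. This is only an illustration of the taxonomy: the class names, field names and example attacks are assumptions made here and do not come from RFC 2828.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    ACTIVE = "active"    # alters system resources or affects their operation
    PASSIVE = "passive"  # only learns or makes use of information


class Origin(Enum):
    INSIDE = "inside"    # initiated from within the security perimeter
    OUTSIDE = "outside"  # initiated by an unauthorized outsider


@dataclass(frozen=True)
class Attack:
    name: str
    mode: Mode
    origin: Origin

    def properties_at_risk(self) -> frozenset:
        """Map the attack mode to the CIA properties it compromises."""
        if self.mode is Mode.ACTIVE:
            return frozenset({"integrity", "availability"})
        return frozenset({"confidentiality"})


# Hypothetical examples of each mode.
wiretap = Attack("wiretapping", Mode.PASSIVE, Origin.OUTSIDE)
defacement = Attack("web page defacement", Mode.ACTIVE, Origin.OUTSIDE)
```

Here `wiretap.properties_at_risk()` contains only confidentiality, while the defacement example puts integrity and availability at risk, mirroring the mapping described in the text.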

A threat is a potential for violation of security, which exists when there is a circumstance, capability, action, or event that could breach security and cause harm. That is, a threat is a possible danger that might exploit a vulnerability. A threat can be either "intentional" (i.e., intelligent; e.g., an individual cracker or a criminal organization) or "accidental" (e.g., the possibility of a computer malfunctioning, or the possibility of an "act of God" such as an earthquake, a fire, or a tornado).[88]

Information security management systems (ISMS), sets of policies concerned with information security management, have been developed to manage countermeasures according to risk management principles, in order to carry out a security strategy set up under the rules and regulations applicable in a country.[221]

An attack leads to a security incident, i.e. a security event that involves a security violation: in other words, a security-relevant system event in which the system's security policy is disobeyed or otherwise breached.

The overall picture represents the risk factors of the risk scenario.[222]

An organization should take steps to detect, classify and manage security incidents. The first logical step is to set up an incident response plan and eventually a computer emergency response team.

To detect attacks, a number of countermeasures can be set up at organizational, procedural and technical levels. Computer emergency response teams, information technology security audits and intrusion detection systems are examples of these.[223]
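
One technical countermeasure named above is the intrusion detection system. As a rough sketch of the simplest kind of rule such a system might apply, the function below flags sources that produce many failed logins. The event format (pairs of source address and success flag), the threshold of five and the function name are all assumptions made for illustration; real products use far richer data and rules.

```python
from collections import Counter


def flag_password_guessing(events, threshold=5):
    """Return the sources whose failed-login count reaches the threshold.

    `events` is an iterable of (source, succeeded) pairs; both the event
    shape and the default threshold are assumptions made for this sketch.
    """
    failures = Counter(source for source, succeeded in events if not succeeded)
    return {source for source, count in failures.items() if count >= threshold}


# Hypothetical log: one source fails six times, another fails once.
log = [("203.0.113.7", False)] * 6 + [
    ("198.51.100.2", False),
    ("198.51.100.2", True),
]
suspects = flag_password_guessing(log)
```

A fixed threshold is easy to reason about but also easy to evade by guessing slowly, which is one reason such rules are usually combined with time windows and other signals.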

An attack is usually perpetrated by someone with bad intentions: black-hat attacks fall into this category, while others perform penetration testing on an organization's information system to find out whether all foreseen controls are in place.

Attacks can be classified according to their origin, i.e. whether they are conducted using one computer or several: the latter case is called a distributed attack. Botnets are used to conduct distributed attacks.
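
The origin-based classification can be expressed as a one-line rule over the set of computers observed taking part in an attack. The function name and the label "single-source" are illustrative assumptions; only the term "distributed" comes from the text.

```python
def classify_by_origin(source_addresses):
    """Label an attack by how many distinct computers took part in it."""
    distinct = set(source_addresses)
    if not distinct:
        raise ValueError("at least one source address is required")
    return "distributed" if len(distinct) > 1 else "single-source"
```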

Other classifications are according to the procedures used or the type of vulnerabilities exploited: attacks can be concentrated on network mechanisms or host features.

Some attacks are physical, i.e. theft of or damage to computers and other equipment. Others are attempts to force changes in the logic used by computers or network protocols in order to achieve a result unforeseen by the original designer but useful to the attacker. Software used for logical attacks on computers is called malware.

The following is a partial short list of attacks:

- Passive

- Network

- Active

Syntactic attacks

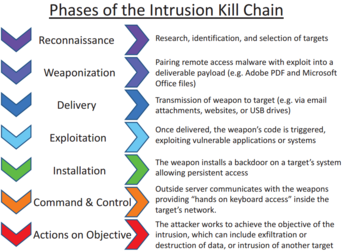

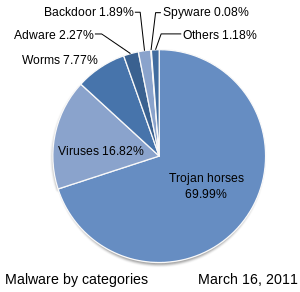

In detail, there are a number of techniques that can be used in cyber-attacks and a variety of ways to administer them to individuals or establishments on a broader scale. Attacks are broken down into two categories: syntactic attacks and semantic attacks. Syntactic attacks are straightforward: they rely on malicious software, which includes viruses, worms, and Trojan horses.

Viruses

A virus is a self-replicating program that can attach itself to another program or file in order to reproduce. The virus can hide in unlikely locations in the memory of a computer system and attach itself to whatever file it sees fit to execute its code. It can also change its digital footprint each time it replicates, making it harder to track down in the computer.

Worms

A worm does not need another file or program to copy itself; it is a self-sustaining running program. Worms replicate over a network using protocols. The latest incarnation of worms makes use of known vulnerabilities in systems to penetrate, execute their code, and replicate to other systems, as with the Code Red II worm, which infected more than 259,000 systems in less than 14 hours.[225] On a much larger scale, worms can be designed for industrial espionage, monitoring and collecting server and traffic activity and then transmitting it back to their creator.

Trojan horses