Cognitive science

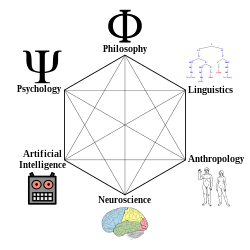

Cognitive science is the interdisciplinary, scientific study of the mind and its processes.[2] It examines the nature, the tasks, and the functions of cognition (in a broad sense). Mental faculties of concern to cognitive scientists include language, perception, memory, attention, reasoning, and emotion; to understand these faculties, cognitive scientists borrow from fields such as linguistics, psychology, artificial intelligence, philosophy, neuroscience, and anthropology.[3] The typical analysis of cognitive science spans many levels of organization, from learning and decision to logic and planning; from neural circuitry to modular brain organization. One of the fundamental concepts of cognitive science is that "thinking can best be understood in terms of representational structures in the mind and computational procedures that operate on those structures."[3]

History

The cognitive sciences began as an intellectual movement in the 1950s, called the cognitive revolution. Cognitive science has a prehistory traceable back to ancient Greek philosophical texts (see Plato's Meno and Aristotle's De Anima). Modern philosophers such as Descartes, David Hume, Immanuel Kant, Benedict de Spinoza, Nicolas Malebranche, Pierre Cabanis, Leibniz and John Locke rejected scholasticism while mostly having never read Aristotle, and they worked with an entirely different set of tools and core concepts than those of the cognitive scientist.

The modern culture of cognitive science can be traced back to the early cyberneticists in the 1930s and 1940s, such as Warren McCulloch and Walter Pitts, who sought to understand the organizing principles of the mind. McCulloch and Pitts developed the first variants of what are now known as artificial neural networks, models of computation inspired by the structure of biological neural networks.
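As a rough illustration of the kind of model described here, the following Python sketch implements a binary threshold unit in the spirit of McCulloch and Pitts. The function name `mp_neuron`, the weights, and the thresholds are illustrative assumptions rather than details from their work; the unit outputs 1 when the weighted sum of its binary inputs reaches a threshold.

```python
def mp_neuron(inputs, weights, threshold):
    """Binary threshold unit: 1 if the weighted input sum reaches the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


# Assumed parameter choices that make a single unit behave like a logic gate.
def logical_and(a, b):
    return mp_neuron([a, b], [1, 1], threshold=2)


def logical_or(a, b):
    return mp_neuron([a, b], [1, 1], threshold=1)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Changing only the threshold turns the same unit from an AND gate into an OR gate, which hints at how networks of such units can carry out logical computations.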

Another precursor was the early development of the theory of computation and the digital computer in the 1940s and 1950s. Kurt Gödel, Alonzo Church, Alan Turing, and John von Neumann were instrumental in these developments. The modern computer, or Von Neumann machine, would play a central role in cognitive science, both as a metaphor for the mind, and as a tool for investigation.

The first cognitive science experiments at an academic institution took place at the MIT Sloan School of Management. They were established by J.C.R. Licklider, who worked within the psychology department and conducted experiments using computer memory as a model for human cognition.[4] In 1959, Noam Chomsky published a scathing review of B. F. Skinner's book Verbal Behavior.[5] At the time, Skinner's behaviorist paradigm dominated the field of psychology within the United States. Most psychologists focused on functional relations between stimulus and response, without positing internal representations. Chomsky argued that in order to explain language, we needed a theory like generative grammar, which not only attributed internal representations but characterized their underlying order.

The term cognitive science was coined by Christopher Longuet-Higgins in his 1973 commentary on the Lighthill report, which concerned the then-current state of artificial intelligence research.[6] In the same decade, the journal Cognitive Science and the Cognitive Science Society were founded.[7] The founding meeting of the Cognitive Science Society was held at the University of California, San Diego in 1979, which resulted in cognitive science becoming an internationally visible enterprise.[8] In 1972, Hampshire College started the first undergraduate education program in Cognitive Science, led by Neil Stillings. In 1982, with assistance from Professor Stillings, Vassar College became the first institution in the world to grant an undergraduate degree in Cognitive Science.[9] In 1986, the first Cognitive Science Department in the world was founded at the University of California, San Diego.[8]

In the 1970s and early 1980s, as access to computers increased, artificial intelligence research expanded. Researchers such as Marvin Minsky would write computer programs in languages such as LISP to attempt to formally characterize the steps that human beings went through, for instance, in making decisions and solving problems, in the hope of better understanding human thought, and also in the hope of creating artificial minds. This approach is known as "symbolic AI".

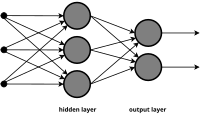

Eventually the limits of the symbolic AI research program became apparent. For instance, it seemed to be unrealistic to comprehensively list human knowledge in a form usable by a symbolic computer program. The late 1980s and 1990s saw the rise of neural networks and connectionism as a research paradigm. Under this point of view, often attributed to James McClelland and David Rumelhart, the mind could be characterized as a set of complex associations, represented as a layered network. Critics argue that there are some phenomena which are better captured by symbolic models, and that connectionist models are often so complex as to have little explanatory power. Recently symbolic and connectionist models have been combined, making it possible to take advantage of both forms of explanation.[10][11] While both connectionist and symbolic approaches have proven useful for testing various hypotheses and exploring approaches to understanding aspects of cognition and lower-level brain functions, neither is biologically realistic, and therefore both suffer from a lack of neuroscientific plausibility.[12][13][14][15][16][17][18] Connectionism has proven useful for exploring computationally how cognition emerges in development and occurs in the human brain, and has provided alternatives to strictly domain-specific or domain-general approaches. For example, scientists such as Jeff Elman, Liz Bates, and Annette Karmiloff-Smith have posited that networks in the brain emerge from the dynamic interaction between them and environmental input.[19]

Recent developments in quantum computation, including the ability to run quantum circuits on quantum computers such as IBM Quantum Platform, have accelerated work using elements from quantum mechanics in cognitive models.[20][21]

Principles

Levels of analysis

A central tenet of cognitive science is that a complete understanding of the mind/brain cannot be attained by studying only a single level. An example would be the problem of remembering a phone number and recalling it later. One approach to understanding this process would be to study behavior through direct observation, or naturalistic observation. A person could be presented with a phone number and be asked to recall it after some delay; then the accuracy of the response could be measured. Another approach to measuring cognitive ability would be to study the firings of individual neurons while a person is trying to remember the phone number. Neither of these experiments on its own would fully explain how the process of remembering a phone number works. Even if the technology to map out every neuron in the brain in real time were available, and it were known when each neuron fired, it would still be impossible to know how a particular firing of neurons translates into the observed behavior. Thus an understanding of how these two levels relate to each other is imperative. Francisco Varela, in The Embodied Mind: Cognitive Science and Human Experience, argues that "the new sciences of the mind need to enlarge their horizon to encompass both lived human experience and the possibilities for transformation inherent in human experience".[22] On the classic cognitivist view, this can be provided by a functional-level account of the process. Studying a particular phenomenon from multiple levels creates a better understanding of the processes that occur in the brain to give rise to a particular behavior. Marr[23] gave a famous description of three levels of analysis:

- The computational theory, specifying the goals of the computation;

- Representation and algorithms, giving a representation of the inputs and outputs and the algorithms which transform one into the other; and

- The hardware implementation, or how algorithm and representation may be physically realized.
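To make the distinction between the first two levels concrete, here is a minimal Python sketch. Sorting is an assumed example task chosen for illustration (it is not Marr's own example), and the function names are hypothetical. The computational level states what is computed (an ordered version of the input); the two functions are different algorithms and intermediate representations that realize that same computation; the hardware level would correspond to whatever machine executes them.

```python
def insertion_sort(items):
    """Algorithm 1: build the result by inserting each item at its sorted position."""
    result = []
    for item in items:
        i = len(result)
        while i > 0 and result[i - 1] > item:
            i -= 1
        result.insert(i, item)
    return result


def merge_sort(items):
    """Algorithm 2: split the input, sort each half recursively, then merge the halves."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left, right = merge_sort(items[:mid]), merge_sort(items[mid:])
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    return merged + left[i:] + right[j:]


if __name__ == "__main__":
    data = [5, 2, 9, 1, 5, 6]
    # Same computational-level description, two algorithmic-level realizations.
    print(insertion_sort(data) == merge_sort(data))
```

Observing only inputs and outputs cannot distinguish the two functions, which is one way to see why a single level of description may leave the underlying process underdetermined.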

Interdisciplinary nature

Cognitive science is an interdisciplinary field with contributors from various fields, including psychology, neuroscience, linguistics, philosophy of mind, computer science, anthropology and biology. Cognitive scientists work collectively in the hope of understanding the mind and its interactions with the surrounding world, much as other sciences do. The field regards itself as compatible with the physical sciences and uses the scientific method as well as simulation or modeling, often comparing the output of models with aspects of human cognition. As with the field of psychology, there is some doubt whether there is a unified cognitive science, which has led some researchers to prefer 'cognitive sciences' in the plural.[24][25]

Many, but not all, who consider themselves cognitive scientists hold a functionalist view of the mind: the view that mental states and processes should be explained by their function, that is, by what they do. According to the multiple realizability account of functionalism, even non-human systems such as robots and computers can be ascribed cognition.

Cognitive science: the term

The term "cognitive" in "cognitive science" is used for "any kind of mental operation or structure that can be studied in precise terms" (Lakoff and Johnson, 1999). This conceptualization is very broad, and should not be confused with how "cognitive" is used in some traditions of analytic philosophy, where "cognitive" has to do only with formal rules and truth-conditional semantics.

The earliest entries for the word "cognitive" in the OED take it to mean roughly "pertaining to the action or process of knowing". The first entry, from 1586, shows the word was at one time used in the context of discussions of Platonic theories of knowledge. Most in cognitive science, however, presumably do not believe their field is the study of anything as certain as the knowledge sought by Plato.[26]

Scope

Cognitive science is a large field, and covers a wide array of topics on cognition. However, it should be recognized that cognitive science has not always been equally concerned with every topic that might bear relevance to the nature and operation of minds. Classical cognitivists have largely de-emphasized or avoided social and cultural factors, embodiment, emotion, consciousness, animal cognition, and comparative and evolutionary psychologies. However, with the decline of behaviorism, internal states such as affects and emotions, as well as awareness and covert attention, became approachable again. For example, situated and embodied cognition theories take into account the current state of the environment as well as the role of the body in cognition. With the newfound emphasis on information processing, the hallmark of psychological theory was no longer observable behavior but the modeling or recording of mental states.

Below are some of the main topics that cognitive science is concerned with. This is not an exhaustive list. See List of cognitive science topics for a list of various aspects of the field.

Artificial intelligence

Artificial intelligence (AI) involves the study of cognitive phenomena in machines. One of the practical goals of AI is to implement aspects of human intelligence in computers. Computers are also widely used as a tool with which to study cognitive phenomena. Computational modeling uses simulations to study how human intelligence may be structured.[27] (See § Computational modeling.)

There is some debate in the field as to whether the mind is best viewed as a huge array of small but individually feeble elements (i.e. neurons), or as a collection of higher-level structures such as symbols, schemes, plans, and rules. The former view uses connectionism to study the mind, whereas the latter emphasizes symbolic artificial intelligence. One way to view the issue is whether it is possible to accurately simulate a human brain on a computer without accurately simulating the neurons that make up the human brain.

Attention

Attention is the selection of important information. The human mind is bombarded with millions of stimuli and it must have a way of deciding which of this information to process. Attention is sometimes seen as a spotlight, meaning one can only shine the light on a particular set of information. Experiments that support this metaphor include the dichotic listening task (Cherry, 1957) and studies of inattentional blindness (Mack and Rock, 1998). In the dichotic listening task, subjects are bombarded with two different messages, one in each ear, and told to focus on only one of the messages. At the end of the experiment, when asked about the content of the unattended message, subjects cannot report it.

The psychological construct of attention is sometimes confused with the concept of intentionality because of some semantic ambiguity in their definitions. At the beginning of experimental research on attention, Wilhelm Wundt defined the term as "that psychical process, which is operative in the clear perception of the narrow region of the content of consciousness."[28] His experiments showed the limits of attention in space and time, which were 3-6 letters during an exposure of 1/10 s.[28] Because the notion has developed within the framework of this original meaning over a hundred years of research, a definition of attention should account for the main features initially attributed to the term: it is a process of controlling thought that continues over time.[29] While intentionality is the power of minds to be about something, attention is the concentration of awareness on some phenomenon during a period of time, which is necessary to elevate the clear perception of the narrow region of the content of consciousness and which makes it feasible to control this focus in mind.

The significance of knowledge about the scope of attention for studying cognition is that it defines the intellectual functions of cognition such as apprehension, judgment, reasoning, and working memory. The development of attention scope increases the set of faculties on which the mind relies when it perceives, remembers, considers, and evaluates in making decisions.[30] The ground of this statement is that the more details (associated with an event) the mind may grasp for their comparison, association, and categorization, the closer apprehension, judgment, and reasoning of the event are in accord with reality.[31] According to Latvian professor Sandra Mihailova and professor Igor Val Danilov, the more elements of the phenomenon (or phenomena) the mind can keep in the scope of attention simultaneously, the greater the number of reasonable combinations within that event it can achieve, enhancing the probability of better understanding the features and particularity of the phenomenon (or phenomena).[31] For example, three items in the focal point of consciousness yield six possible combinations (3 factorial), and four items yield 24 (4 factorial). The number of reasonable combinations becomes significant in the case of a focal point with six items, which gives 720 possible combinations (6 factorial).[31]
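The arithmetic in the preceding paragraph can be checked with a short Python sketch. It assumes that "combinations" here means the number of distinct orderings of n items, which is what n factorial counts; the helper name `count_orderings` and the item labels are illustrative.

```python
import math
from itertools import permutations


def count_orderings(n):
    """Number of distinct orderings of n distinct items, i.e. n factorial."""
    return math.factorial(n)


# Enumerate the orderings explicitly for three illustrative items.
three_item_orderings = list(permutations(["A", "B", "C"]))

if __name__ == "__main__":
    for n in (3, 4, 6):
        print(n, "items:", count_orderings(n), "orderings")
    print(three_item_orderings)
```

The count grows quickly: going from three items to six multiplies the number of orderings by 120.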

Embodied cognition

Embodied cognition approaches to cognitive science emphasize the role of body and environment in cognition. This includes both neural and extra-neural bodily processes, and factors that range from affective and emotional processes,[32] to posture, motor control, proprioception, and kinaesthesis,[33] to autonomic processes that involve heartbeat[34] and respiration,[35] to the role of the enteric gut microbiome.[36] It also includes accounts of how the body engages with or is coupled to social and physical environments. 4E (embodied, embedded, extended and enactive) cognition[37][38] includes a broad range of views about brain-body-environment interaction, from causal embeddedness to stronger claims about how the mind extends to include tools and instruments, as well as the role of social interactions, action-oriented processes, and affordances. 4E theories range from those closer to classic cognitivism (so-called "weak" embodied cognition[39]) to stronger extended[40] and enactive versions that are sometimes referred to as radical embodied cognitive science.[41][42]

Knowledge and processing of language

The ability to learn and understand language is an extremely complex process. Language is acquired within the first few years of life, and all humans under normal circumstances are able to acquire language proficiently. A major driving force in theoretical linguistics is discovering the nature that language must have in the abstract in order to be learned in such a fashion. Some of the driving research questions in studying how the brain itself processes language include: (1) To what extent is linguistic knowledge innate or learned? (2) Why is it more difficult for adults to acquire a second language than it is for infants to acquire their first language? (3) How are humans able to understand novel sentences?

The study of language processing ranges from the investigation of the sound patterns of speech to the meaning of words and whole sentences. Linguistics often divides language processing into orthography, phonetics, phonology, morphology, syntax, semantics, and pragmatics. Many aspects of language can be studied from each of these components and from their interaction.[43]

The study of language processing in cognitive science is closely tied to the field of linguistics. Linguistics was traditionally studied as a part of the humanities, including studies of history, art and literature. In the last fifty years or so, more and more researchers have studied knowledge and use of language as a cognitive phenomenon, the main problems being how knowledge of language can be acquired and used, and what precisely it consists of.[44] Linguists have found that, while humans form sentences in ways apparently governed by very complex systems, they are remarkably unaware of the rules that govern their own speech. Thus linguists must resort to indirect methods to determine what those rules might be, if indeed rules as such exist. In any event, if speech is indeed governed by rules, they appear to be opaque to any conscious consideration.

Learning and development

Learning and development are the processes by which we acquire knowledge and information over time. Infants are born with little or no knowledge (depending on how knowledge is defined), yet they rapidly acquire the ability to use language, walk, and recognize people and objects. Research in learning and development aims to explain the mechanisms by which these processes might take place.

A major question in the study of cognitive development is the extent to which certain abilities are innate or learned. This is often framed in terms of the nature and nurture debate. The nativist view emphasizes that certain features are innate to an organism and are determined by its genetic endowment. The empiricist view, on the other hand, emphasizes that certain abilities are learned from the environment. Although both genetic and environmental input are clearly needed for a child to develop normally, considerable debate remains about how genetic information might guide cognitive development. In the area of language acquisition, for example, some (such as Steven Pinker)[45] have argued that specific information containing universal grammatical rules must be contained in the genes, whereas others (such as Jeffrey Elman and colleagues in Rethinking Innateness) have argued that Pinker's claims are biologically unrealistic. They argue that genes determine the architecture of a learning system, but that specific "facts" about how grammar works can only be learned as a result of experience.

Memory

Memory allows us to store information for later retrieval. Memory is often thought of as consisting of both a long-term and short-term store. Long-term memory allows us to store information over prolonged periods (days, weeks, years). We do not yet know the practical limit of long-term memory capacity. Short-term memory allows us to store information over short time scales (seconds or minutes).

Memory is also often grouped into declarative and procedural forms. Declarative memory, grouped into subsets of semantic and episodic forms of memory, refers to our memory for facts and specific knowledge, specific meanings, and specific experiences (e.g. "Are apples food?", or "What did I eat for breakfast four days ago?"). Procedural memory allows us to remember actions and motor sequences (e.g. how to ride a bicycle) and is often dubbed implicit knowledge or memory.

Cognitive scientists study memory just as psychologists do, but tend to focus more on how memory bears on cognitive processes, and on the interrelationship between cognition and memory. For example: what mental processes does a person go through to retrieve a long-lost memory? Or what differentiates the cognitive process of recognition (seeing hints of something before remembering it, or memory in context) from recall (retrieving a memory, as in "fill-in-the-blank")?

Perception and action

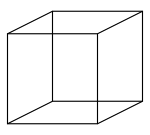

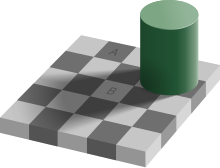

Perception is the ability to take in information via the senses, and process it in some way. Vision and hearing are two dominant senses that allow us to perceive the environment. Some questions in the study of visual perception, for example, include: (1) How are we able to recognize objects? (2) Why do we perceive a continuous visual environment, even though we only see small bits of it at any one time? One way of studying visual perception is to look at how people process optical illusions. The image on the right of a Necker cube is an example of a bistable percept, that is, the cube can be interpreted as being oriented in two different directions.

The study of haptic (tactile), olfactory, and gustatory stimuli also falls into the domain of perception.

Action is taken to refer to the output of a system. In humans, this is accomplished through motor responses. Spatial planning and movement, speech production, and complex motor movements are all aspects of action.

Consciousness

Consciousness is the awareness of experiences within oneself. This awareness gives the mind the ability to experience or feel a sense of self.

Research methods

Many different methodologies are used to study cognitive science. As the field is highly interdisciplinary, research often cuts across multiple areas of study, drawing on research methods from psychology, neuroscience, computer science and systems theory.

Behavioral experiments

In order to have a description of what constitutes intelligent behavior, one must study behavior itself. This type of research is closely tied to that in cognitive psychology and psychophysics. By measuring behavioral responses to different stimuli, one can understand something about how those stimuli are processed. Lewandowski & Strohmetz (2009) reviewed a collection of innovative uses of behavioral measurement in psychology including behavioral traces, behavioral observations, and behavioral choice.[46] Behavioral traces are pieces of evidence that indicate that behavior occurred when the actor is no longer present (e.g., litter in a parking lot or readings on an electric meter). Behavioral observations involve directly witnessing the actor engaging in the behavior (e.g., watching how close a person sits next to another person). Behavioral choices occur when a person selects between two or more options (e.g., voting behavior, choice of a punishment for another participant).

- Reaction time. The time between the presentation of a stimulus and an appropriate response can indicate differences between two cognitive processes, and can indicate some things about their nature. For example, if in a search task the reaction times vary proportionally with the number of elements, then it is evident that this cognitive process of searching involves serial instead of parallel processing.

- Psychophysical responses. Psychophysical experiments are an old psychological technique, which has been adopted by cognitive psychology. They typically involve making judgments of some physical property, e.g. the loudness of a sound. Correlation of subjective scales between individuals can show cognitive or sensory biases as compared to actual physical measurements. Some examples include:

  - sameness judgments for colors, tones, textures, etc.

  - threshold differences for colors, tones, textures, etc.

- Eye tracking. This methodology is used to study a variety of cognitive processes, most notably visual perception and language processing. The fixation point of the eyes is linked to an individual's focus of attention. Thus, by monitoring eye movements, we can study what information is being processed at a given time. Eye tracking allows us to study cognitive processes on extremely short time scales. Eye movements reflect online decision making during a task, and they provide us with some insight into the ways in which those decisions may be processed.[47]
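The reaction-time reasoning in the list above can be sketched in Python as a toy model. Everything numeric here is an assumption for illustration: the base time, the per-item cost, and the set sizes are invented values, and the two `*_rt` functions are idealized, noise-free stand-ins for serial and parallel search. The point is only that a serial process predicts a positive slope of reaction time against the number of elements, while an idealized parallel process predicts a flat one.

```python
BASE_MS = 400.0      # assumed time for everything other than the search itself
PER_ITEM_MS = 50.0   # assumed cost of inspecting one element serially


def serial_rt(set_size):
    """Idealized serial search: every element adds a fixed inspection cost."""
    return BASE_MS + PER_ITEM_MS * set_size


def parallel_rt(set_size):
    """Idealized parallel search: all elements are processed at once."""
    return BASE_MS


def slope(xs, ys):
    """Least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


set_sizes = [2, 4, 8, 16]
serial_slope = slope(set_sizes, [serial_rt(n) for n in set_sizes])
parallel_slope = slope(set_sizes, [parallel_rt(n) for n in set_sizes])

if __name__ == "__main__":
    print("serial slope (ms per item):", serial_slope)
    print("parallel slope (ms per item):", parallel_slope)
```

With real data the measurements are noisy, so the slope would be estimated with uncertainty rather than read off exactly as in this sketch.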

Brain imaging

Brain imaging involves analyzing activity within the brain while a subject performs various tasks. This allows us to link behavior and brain function to help understand how information is processed. Different types of imaging techniques vary in their temporal (time-based) and spatial (location-based) resolution. Brain imaging is often used in cognitive neuroscience.

- Single-photon emission computed tomography and positron emission tomography. SPECT and PET use radioactive isotopes, which are injected into the subject's bloodstream and taken up by the brain. By observing which areas of the brain take up the radioactive isotope, we can see which areas of the brain are more active than other areas. PET has similar spatial resolution to fMRI, but it has extremely poor temporal resolution.

- Electroencephalography. EEG measures the electrical fields generated by large populations of neurons in the cortex by placing a series of electrodes on the scalp of the subject. This technique has an extremely high temporal resolution, but a relatively poor spatial resolution.

- Functional magnetic resonance imaging. fMRI measures the relative amount of oxygenated blood flowing to different parts of the brain. More oxygenated blood in a particular region is assumed to correlate with an increase in neural activity in that part of the brain. This allows us to localize particular functions within different brain regions. fMRI has moderate spatial and temporal resolution.

- Optical imaging. This technique uses infrared transmitters and receivers to measure the amount of light reflected by blood near different areas of the brain. Since oxygenated and deoxygenated blood reflect light by different amounts, we can study which areas are more active (i.e., those that have more oxygenated blood). Optical imaging has moderate temporal resolution, but poor spatial resolution. It also has the advantage that it is extremely safe and can be used to study infants' brains.

- Magnetoencephalography. MEG measures magnetic fields resulting from cortical activity. It is similar to EEG, except that it has improved spatial resolution since the magnetic fields it measures are not as blurred or attenuated by the scalp, meninges and so forth as the electrical activity measured in EEG is. MEG uses SQUID sensors to detect tiny magnetic fields.

Computational modeling

Computational models require a mathematically and logically formal representation of a problem. Computer models are used in the simulation and experimental verification of different specific and general properties of intelligence. Computational modeling can help us understand the functional organization of a particular cognitive phenomenon. Approaches to cognitive modeling can be categorized as: (1) symbolic, on abstract mental functions of an intelligent mind by means of symbols; (2) subsymbolic, on the neural and associative properties of the human brain; and (3) across the symbolic–subsymbolic border, including hybrid.

- Symbolic modeling evolved from the computer science paradigms using the technologies of knowledge-based systems, as well as from a philosophical perspective (e.g. "Good Old-Fashioned Artificial Intelligence" (GOFAI)). Symbolic models were developed by the first cognitive researchers and later used in information engineering for expert systems. Since the early 1990s the approach has been generalized in systemics for the investigation of functional human-like intelligence models, such as personoids, and, in parallel, developed as the SOAR environment. Recently, especially in the context of cognitive decision-making, symbolic cognitive modeling has been extended to the socio-cognitive approach, including social and organizational cognition, interrelated with a sub-symbolic non-conscious layer.

- Subsymbolic modeling includes connectionist/neural network models. Connectionism relies on the idea that the mind/brain is composed of simple nodes and that its problem-solving capacity derives from the connections between them. Neural nets are textbook implementations of this approach. Some critics of this approach feel that while these models approach biological reality as a representation of how the system works, they lack explanatory power because, even in systems endowed with simple connection rules, the emerging high complexity makes them less interpretable at the connection level than they apparently are at the macroscopic level.

- Other approaches gaining in popularity include (1) dynamical systems theory, (2) mapping symbolic models onto connectionist models (neural-symbolic integration or hybrid intelligent systems), and (3) Bayesian models, which are often drawn from machine learning.

All the above approaches tend either to be generalized to the form of integrated computational models of a synthetic/abstract intelligence (i.e. cognitive architecture) in order to be applied to the explanation and improvement of individual and social/organizational decision-making and reasoning[48][49] or to focus on single simulative programs (or microtheories/"middle-range" theories) modelling specific cognitive faculties (e.g. vision, language, categorization etc.).
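The contrast between symbolic and subsymbolic modeling described in this section can be sketched in Python with a deliberately tiny task. The task (logical OR), the function names, and the training settings are all illustrative assumptions, not a model from the literature. In the symbolic version the knowledge is an explicit rule; in the subsymbolic version the same behavior is stored in connection weights adjusted from examples with the classic perceptron update.

```python
# Symbolic model: knowledge is an explicit, human-readable rule.
def symbolic_or(a, b):
    return 1 if (a == 1 or b == 1) else 0


# Subsymbolic model: knowledge lives in weights learned from examples.
def train_perceptron(examples, epochs=20, lr=1.0):
    w = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for (a, b), target in examples:
            out = 1 if (w[0] * a + w[1] * b + bias) > 0 else 0
            err = target - out
            # Perceptron rule: nudge weights toward the correct answer.
            w[0] += lr * err * a
            w[1] += lr * err * b
            bias += lr * err
    return w, bias


def perceptron_predict(w, bias, a, b):
    return 1 if (w[0] * a + w[1] * b + bias) > 0 else 0


examples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(examples)

if __name__ == "__main__":
    print("learned weights:", weights, "bias:", bias)
    for (a, b), _ in examples:
        print(a, b, symbolic_or(a, b), perceptron_predict(weights, bias, a, b))
```

Both models give the same answers on this task, but only the symbolic one states its knowledge in readable form; the learned weights have to be interpreted, which echoes the interpretability concern raised about connectionist models.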

Neurobiological methods

Research methods borrowed directly from neuroscience and neuropsychology can also help us to understand aspects of intelligence. These methods allow us to understand how intelligent behavior is implemented in a physical system.

Key findings

Cognitive science has given rise to models of human cognitive bias and risk perception, and has been influential in the development of behavioral finance, part of economics. It has also given rise to a new theory of the philosophy of mathematics (related to denotational mathematics), and many theories of artificial intelligence, persuasion and coercion. It has made its presence known in the philosophy of language and epistemology as well as constituting a substantial wing of modern linguistics. Fields of cognitive science have been influential in understanding the brain's particular functional systems (and functional deficits) ranging from speech production to auditory processing and visual perception. It has made progress in understanding how damage to particular areas of the brain affects cognition, and it has helped to uncover the root causes and results of specific dysfunctions, such as dyslexia, anopsia, and hemispatial neglect.

Notable researchers

| Name | Year of birth | Year of contribution | Contribution(s) |
|---|---|---|---|
| David Chalmers | 1966[50] | 1995[51] | Dualism, hard problem of consciousness |
| Daniel Dennett | 1942[52] | 1987 | Offered a computational systems perspective (Multiple drafts model) |
| John Searle | 1932[53] | 1980 | Chinese room |
| Douglas Hofstadter | 1945 | 1979[54] | Gödel, Escher, Bach[55] |
| Jerry Fodor | 1935[56] | 1968, 1975 | Functionalism |
| Alan Baddeley | 1934[57] | 1974 | Baddeley's model of working memory |
| Marvin Minsky | 1927[58] | 1970s, early 1980s | Wrote computer programs in languages such as LISP to attempt to formally characterize the steps that human beings go through, such as making decisions and solving problems |
| Christopher Longuet-Higgins | 1923[59] | 1973 | Coined the term cognitive science |
| Noam Chomsky | 1928[60] | 1959 | Published a review of B.F. Skinner's book Verbal Behavior which began cognitivism against then-dominant behaviorism[5] |
| George Miller | 1920 | 1956 | Wrote about the capacities of human thinking through mental representations |
| Herbert Simon | 1916 | 1956 | Co-created Logic Theory Machine and General Problem Solver with Allen Newell, EPAM (Elementary Perceiver and Memorizer) theory, organizational decision-making |
| John McCarthy | 1927 | 1955 | Coined the term artificial intelligence and organized the famous Dartmouth conference in Summer 1956, which started AI as a field |
| McCulloch and Pitts | | 1930s–1940s | Developed early artificial neural networks |
| J. C. R. Licklider | 1915[61] | | Established MIT Sloan School of Management |
| Lila R. Gleitman | 1929 | 1970s–2010s | Wide-ranging contributions to understanding the cognition of language acquisition, including syntactic bootstrapping theory[62] |
| Eleanor Rosch | 1938 | 1976 | Development of the Prototype Theory of categorisation[63] |
| Philip N. Johnson-Laird | 1936 | 1980 | Introduced the idea of mental models in cognitive science[64] |
| Dedre Gentner | 1944 | 1983 | Development of the Structure-mapping Theory of analogical reasoning[65] |
| Allen Newell | 1927 | 1990 | Development of the field of Cognitive architecture in cognitive modelling and artificial intelligence[66] |
| Annette Karmiloff-Smith | 1938 | 1992 | Integrating neuroscience and computational modelling into theories of cognitive development[67] |
| David Marr | 1945 | 1990 | Proponent of the Three-Level Hypothesis of levels of analysis of computational systems[68] |
| Peter Gärdenfors | 1949 | 2000 | Creator of the conceptual space framework used in cognitive modelling and artificial intelligence |
| Linda B. Smith | 1951 | 1993 | Together with Esther Thelen, created a dynamical systems approach to understanding cognitive development[69] |

Some of the more recognized names in cognitive science are usually either the most controversial or the most cited. Within philosophy, some familiar names include Daniel Dennett, who writes from a computational systems perspective,[70] John Searle, known for his controversial Chinese room argument,[71] and Jerry Fodor, who advocates functionalism.[72]

Others include David Chalmers, who advocates dualism and is also known for articulating the hard problem of consciousness, and Douglas Hofstadter, famous for writing Gödel, Escher, Bach, which questions the nature of words and thought.

In the realm of linguistics, Noam Chomsky and George Lakoff have been influential (both have also become notable as political commentators). In artificial intelligence, Marvin Minsky, Herbert A. Simon, and Allen Newell are prominent.

Popular names in the discipline of psychology include George A. Miller, James McClelland, Philip Johnson-Laird, Lawrence Barsalou, Vittorio Guidano, Howard Gardner and Steven Pinker. Anthropologists Dan Sperber, Edwin Hutchins, Bradd Shore, James Wertsch and Scott Atran have been involved in collaborative projects with cognitive and social psychologists, political scientists and evolutionary biologists in attempts to develop general theories of culture formation, religion, and political association.

Computational theories (with models and simulations) have also been developed by David Rumelhart, James McClelland and Philip Johnson-Laird.

Epistemics

Epistemics is a term coined in 1969 by the University of Edinburgh with the foundation of its School of Epistemics. Epistemics is to be distinguished from epistemology in that epistemology is the philosophical theory of knowledge, whereas epistemics signifies the scientific study of knowledge.

Christopher Longuet-Higgins has defined it as "the construction of formal models of the processes (perceptual, intellectual, and linguistic) by which knowledge and understanding are achieved and communicated."[73] In his 1978 essay "Epistemics: The Regulative Theory of Cognition",[74] Alvin I. Goldman claims to have coined the term "epistemics" to describe a reorientation of epistemology. Goldman maintains that his epistemics is continuous with traditional epistemology and that the new term is meant only to avoid opposition. Epistemics, in Goldman's version, differs only slightly from traditional epistemology in its alliance with the psychology of cognition; epistemics stresses the detailed study of mental processes and information-processing mechanisms that lead to knowledge or beliefs.

In the mid-1980s, the School of Epistemics was renamed the Centre for Cognitive Science (CCS). In 1998, CCS was incorporated into the University of Edinburgh's School of Informatics.[75]

Binding problem in cognitive science

One of the core aims of cognitive science is to achieve an integrated theory of cognition. This requires integrative mechanisms that explain how information processing occurring simultaneously in spatially segregated (sub-)cortical areas of the brain is coordinated and bound together to give rise to coherent perceptual and symbolic representations. One approach is to solve this "binding problem"[76][77][78] (that is, the problem of dynamically representing conjunctions of informational elements, from the most basic perceptual representations ("feature binding") to the most complex cognitive representations, such as symbol structures ("variable binding")) by means of integrative synchronization mechanisms. In other words, one of the coordinating mechanisms appears to be the temporal (phase) synchronization of neural activity based on dynamical self-organizing processes in neural networks, as described by the binding-by-synchrony (BBS) hypothesis from neurophysiology.[79][80][81][82]

Connectionist cognitive neuroarchitectures have been developed that use integrative synchronization mechanisms to solve this binding problem in perceptual cognition and in language cognition.[83][84][85] In perceptual cognition, the problem is to explain how elementary object properties and object relations, such as an object's color or form, can be dynamically bound together or integrated into a representation of the perceptual object by means of a synchronization mechanism ("feature binding", "feature linking"). In language cognition, the problem is to explain how semantic concepts and syntactic roles can be dynamically bound together or integrated into complex cognitive representations, such as systematic and compositional symbol structures and propositions, by means of a synchronization mechanism ("variable binding") (see also the "Symbolism vs. connectionism debate" in connectionism).

However, despite significant advances toward an integrated theory of cognition (specifically on the binding problem), the debate about how cognition begins is still in progress. From the different perspectives noted above, this problem can be reduced to the question of how organisms at the simple-reflexes stage of development overcome the threshold of the environmental chaos of sensory stimuli: electromagnetic waves, chemical interactions, and pressure fluctuations.[86] The so-called Primary Data Entry (PDE) thesis casts doubt on the ability of such an organism to overcome this cue threshold on its own.[87] In mathematical terms, the PDE thesis stresses the insuperably high threshold that the cacophony of environmental stimuli (the stimulus noise) poses for young organisms at the onset of life.[87] It argues that neither the temporal (phase) synchronization of neural activity based on dynamical self-organizing processes in neural networks, nor any dynamical binding or integration into a representation of the perceptual object by means of a synchronization mechanism, can help organisms distinguish the relevant cue (the informative stimulus) in order to overcome this noise threshold.[87]
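The temporal (phase) synchronization that the binding-by-synchrony hypothesis appeals to can be illustrated with a toy simulation. The sketch below uses the Kuramoto model of coupled phase oscillators, which is swapped in here purely as an assumption for illustration: it is not the model used in the cited neurophysiological work or in the connectionist neuroarchitectures mentioned above, and the function names, parameter values, and all-to-all coupling are hypothetical choices.

```python
import cmath
import math
import random


def order_parameter(phases):
    """Return r in [0, 1]: near 0 for scattered phases, 1 for full phase locking."""
    mean_vector = sum(cmath.exp(1j * p) for p in phases) / len(phases)
    return abs(mean_vector)


def simulate(phases, coupling, omega=1.0, dt=0.05, steps=2000):
    """Euler-integrate identical Kuramoto oscillators with all-to-all coupling."""
    phases = list(phases)
    n = len(phases)
    for _ in range(steps):
        updated = []
        for p_i in phases:
            # Each unit is pulled toward the phases of the units it connects to.
            pull = sum(math.sin(p_j - p_i) for p_j in phases) / n
            updated.append(p_i + dt * (omega + coupling * pull))
        phases = updated
    return phases


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    start = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(10)]
    print("initial coherence:  ", round(order_parameter(start), 3))
    print("uncoupled coherence:", round(order_parameter(simulate(start, 0.0)), 3))
    print("coupled coherence:  ", round(order_parameter(simulate(start, 2.0)), 3))
```

With the coupling set to zero the units keep their initial phase relations, whereas positive coupling pulls them into a common phase. In this toy setting, units that fire in phase could be read as "bound" to the same object, which is only a loose analogy to the hypothesis.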

See also

- Affective science

- Cognitive anthropology

- Cognitive biology

- Cognitive computing

- Cognitive ethology

- Cognitive linguistics

- Cognitive neuropsychology

- Cognitive neuroscience

- Cognitive psychology

- Cognitive science of religion

- Computational neuroscience

- Computational-representational understanding of mind

- Concept mining

- Decision field theory

- Decision theory

- Dynamicism

- Educational neuroscience

- Educational psychology

- Embodied cognition

- Embodied cognitive science

- Enactivism

- Epistemology

- Folk psychology

- Heterophenomenology

- Human Cognome Project

- Human–computer interaction

- Indiana Archives of Cognitive Science

- Informatics (academic field)

- List of cognitive scientists

- List of psychology awards

- Malleable intelligence

- Neural Darwinism

- Personal information management (PIM)

- Qualia

- Quantum cognition

- Simulated consciousness

- Situated cognition

- Society of Mind theory

- Spatial cognition

- Speech–language pathology

- Outlines

- Outline of human intelligence – topic tree presenting the traits, capacities, models, and research fields of human intelligence, and more.

- Outline of thought – topic tree that identifies many types of thoughts, types of thinking, aspects of thought, related fields, and more.

References

- ^ Miller, George A (1 March 2003). "The cognitive revolution: a historical perspective". Trends in Cognitive Sciences. 7 (3): 141–144. doi:10.1016/S1364-6613(03)00029-9. ISSN 1364-6613. PMID 12639696. S2CID 206129621. Archived from the original on 21 November 2018. Retrieved 5 February 2023.

- ^ "Ask the Cognitive Scientist". American Federation of Teachers. 8 August 2014. Archived from the original on 17 September 2014. Retrieved 25 December 2013.

Cognitive science is an interdisciplinary field of researchers from Linguistics, psychology, neuroscience, philosophy, computer science, and anthropology that seek to understand the mind.

- ^ a b Thagard, Paul, Cognitive Science Archived 15 July 2018 at the Wayback Machine, The Stanford Encyclopedia of Philosophy (Fall 2008 Edition), Edward N. Zalta (ed.).

- ^ Hafner, K.; Lyon, M. (1996). Where wizards stay up late: The origins of the Internet. New York: Simon & Schuster. p. 32. ISBN 0-684-81201-0.

- ^ a b Chomsky, Noam (1959). "Review of Verbal behavior". Language. 35 (1): 26–58. doi:10.2307/411334. ISSN 0097-8507. JSTOR 411334.

- ^ Longuet-Higgins, H. C. (1973). "Comments on the Lighthill Report and the Sutherland Reply". Artificial Intelligence: a paper symposium. Science Research Council. pp. 35–37. ISBN 0-901660-18-3.

- ^ Cognitive Science Society Archived 17 July 2010 at the Wayback Machine

- ^ a b "UCSD Cognitive Science - UCSD Cognitive Science". Archived from the original on 9 July 2015. Retrieved 8 July 2015.

- ^ "About - Cognitive Science - Vassar College". Cogsci.vassar.edu. Archived from the original on 17 September 2012. Retrieved 15 August 2012.

- ^ d'Avila Garcez, Artur S.; Lamb, Luis C.; Gabbay, Dov M. (2008). Neural-Symbolic Cognitive Reasoning. Cognitive Technologies. Springer. ISBN 978-3-540-73245-7.

- ^ Sun, Ron; Bookman, Larry, eds. (1994). Computational Architectures Integrating Neural and Symbolic Processes. Needham, MA: Kluwer Academic. ISBN 0-7923-9517-4.

- ^ "Encephalos Journal". www.encephalos.gr. Archived from the original on 25 June 2011. Retrieved 20 February 2018.

- ^ Wilson, Elizabeth A. (4 February 2016). Neural Geographies: Feminism and the Microstructure of Cognition. Routledge. ISBN 9781317958765. Archived from the original on 5 February 2023. Retrieved 16 April 2018.

- ^ Di Paolo, Ezequiel A. (2003). "Organismically-inspired robotics: homeostatic adaptation and teleology beyond the closed sensorimotor loop". In Murase, Kazuyuki; Asakura, Toshiyuki (eds.). Dynamic Systems Approach for Embodiment and Sociality: From Ecological Psychology to Robotics. Advanced Knowledge International. pp. 19–42. CiteSeerX 10.1.1.62.4813. ISBN 978-0-9751004-1-7. S2CID 15349751.

- ^ Zorzi, Marco; Testolin, Alberto; Stoianov, Ivilin P. (20 August 2013). "Modeling language and cognition with deep unsupervised learning: a tutorial overview". Frontiers in Psychology. 4: 515. doi:10.3389/fpsyg.2013.00515. ISSN 1664-1078. PMC 3747356. PMID 23970869.

- ^ Tieszen, Richard (15 July 2011). "Analytic and Continental Philosophy, Science, and Global Philosophy". Comparative Philosophy. 2 (2). doi:10.31979/2151-6014(2011).020206.

- ^ Browne, A. (1997). Neural Network Perspectives on Cognition and Adaptive Robotics. CRC Press. ISBN 0-7503-0455-3. Archived from the original on 5 February 2023. Retrieved 16 April 2018.

- ^ Pfeifer, R.; Schreter, Z.; Fogelman-Soulié, F.; Steels, L. (1989). Connectionism in Perspective. Elsevier. ISBN 0-444-59876-6. Archived from the original on 5 February 2023. Retrieved 16 April 2018.

- ^ Karmiloff-Smith, A. (2015). "An alternative to domain-general or domain-specific frameworks for theorizing about human evolution and ontogenesis". AIMS Neuroscience. 2 (2): 91–104. doi:10.3934/Neuroscience.2015.2.91. PMC 4678597. PMID 26682283.

- ^ Pothos, E. M., & Busemeyer, J. R. (2022). Quantum Cognition. Annual Review of Psychology, 73, 749–778.

- ^ Widdows, D., Rani, J., & Pothos, E. M. (2023). Quantum Circuit Components for Cognitive Decision-Making. Entropy, 25(4), 548.

- ^ Varela, F. J., Thompson, E., & Rosch, E. (1991). The embodied mind: cognitive science and human experience. Cambridge, Massachusetts: MIT Press.

- ^ Marr, D. (1982). Vision: A Computational Investigation into the Human Representation and Processing of Visual Information. W. H. Freeman.

- ^ Miller, G. A. (2003). "The cognitive revolution: a historical perspective". Trends in Cognitive Sciences. 7 (3): 141–144. doi:10.1016/S1364-6613(03)00029-9. PMID 12639696. S2CID 206129621.

- ^ Ferrés, Joan; Masanet, Maria-Jose (2017). "Communication Efficiency in Education: Increasing Emotions and Storytelling". Comunicar (in Spanish). 25 (52): 51–60. doi:10.3916/c52-2017-05. hdl:10272/14087. ISSN 1134-3478.

- ^ Shields, Christopher (2020), "Aristotle's Psychology", in Zalta, Edward N. (ed.), The Stanford Encyclopedia of Philosophy (Winter 2020 ed.), Metaphysics Research Lab, Stanford University, archived from the original on 20 October 2022, retrieved 20 October 2022

- ^ Sun, Ron (ed.) (2008). The Cambridge Handbook of Computational Psychology. Cambridge University Press, New York.

- ^ a b Wilhelm Wundt. (1912). Introduction to Psychology, trans. Rudolf Pintner (London: Allen, 1912; reprint ed., New York: Arno Press, 1973), p. 16.

- ^ Leahey, T. H. (1979). "Something old, something new: Attention in Wundt and modern cognitive psychology." Journal of the History of the Behavioral Sciences, 15(3), 242-252.

- ^ "Mind". Encyclopedia Britannica. Retrieved 20 January 2024.

- ^ a b c Val Danilov I, and Mihailova S. (2022). "A Case Study on the Development of Math Competence in an Eight-year-old Child with Dyscalculia: Shared Intentionality in Human-Computer Interaction for Online Treatment Via Subitizing." OBM Neurobiology 2022;6(2):17; doi:10.21926/obm.neurobiol.2202122

- ^ Colombetti, G.; Krueger, J. (2015). "Scaffoldings of the affective mind". Philosophical Psychology. 28 (8): 1157–1176. doi:10.1080/09515089.2014.976334. hdl:10871/15680. S2CID 73617860.

- ^ Gallagher, Shaun (2005). How the Body Shapes the Mind. Oxford: Oxford University Press.

- ^ Garfinkel, S. N.; Barrett, A. B.; Minati, L.; Dolan, R. J.; Seth, A. K.; Critchley, H. D. (2013). "What the heart forgets: Cardiac timing influences memory for words and is modulated by metacognition and interoceptive sensitivity". Psychophysiology. 50 (6): 505–512. doi:10.1111/psyp.12039. PMC 4340570. PMID 23521494.

- ^ Varga, S.; Heck, D. H. (2017). "Rhythms of the body, rhythms of the brain: respiration, neural oscillations, and embodied cognition". Consciousness and Cognition. 56: 77–90. doi:10.1016/j.concog.2017.09.008. PMID 29073509. S2CID 8448790.

- ^ Davidson, G. L.; Cooke, A. C.; Johnson, C. N.; Quinn, J. L. (2018). "The gut microbiome as a driver of individual variation in cognition and functional behaviour". Philosophical Transactions of the Royal Society B: Biological Sciences. 373 (1756): 20170286. doi:10.1098/rstb.2017.0286. PMC 6107574. PMID 30104431.

- ^ Newen, A.; De Bruin, L.; Gallagher, S., eds. (2018). The Oxford handbook of 4E cognition. Oxford: Oxford University Press.

- ^ Menary, Richard (2010). "Special Issue on 4E Cognition". Phenomenology and the Cognitive Sciences. 9 (4). doi:10.1007/s11097-010-9187-6. S2CID 143621939.

- ^ Alsmith, A. J. T.; De Vignemont, F. (2012). "Embodying the mind and representing the body" (PDF). Review of Philosophy and Psychology. 3 (1): 1–13. doi:10.1007/s13164-012-0085-4. S2CID 5823387.

- ^ Clark, Andy (2011). "Précis of Supersizing the mind: embodiment, action, and cognitive extension". Philosophical Studies. 152 (3): 413–416. doi:10.1007/s11098-010-9597-x. S2CID 170708745.

- ^ Chemero, Anthony (2011). Radical embodied cognitive science. Cambridge: MIT Press.

- ^ Hutto, Daniel D. & Erik Myin. (2012). Radicalizing enactivism: Basic minds without content. Cambridge: MIT Press.

- ^ "Linguistics: Semantics, Phonetics, Pragmatics, and Human Communication". Decoded Science. 16 February 2014. Archived from the original on 6 June 2017. Retrieved 7 February 2018.

- ^ Isac, Daniela; Charles Reiss (2013). I-language: An Introduction to Linguistics as Cognitive Science, 2nd edition. Oxford University Press. p. 5. ISBN 978-0199660179. Archived from the original on 6 July 2011. Retrieved 29 July 2008.

- ^ Pinker S., Bloom P. (1990). "Natural language and natural selection". Behavioral and Brain Sciences. 13 (4): 707–784. CiteSeerX 10.1.1.116.4044. doi:10.1017/S0140525X00081061. S2CID 6167614.

- ^ Lewandowski, Gary; Strohmetz, David (2009). "Actions can speak as loud as words: Measuring behavior in psychological science". Social and Personality Psychology Compass. 3 (6): 992–1002. doi:10.1111/j.1751-9004.2009.00229.x.

- ^ König, Peter; Wilming, Niklas; Kietzmann, Tim C.; Ossandón, Jose P.; Onat, Selim; Ehinger, Benedikt V.; Gameiro, Ricardo R.; Kaspar, Kai (1 December 2016). "Eye movements as a window to cognitive processes". Journal of Eye Movement Research. 9 (5). doi:10.16910/jemr.9.5.3.

- ^ Lieto, Antonio (2021). Cognitive Design for Artificial Minds. London, UK: Routledge, Taylor & Francis. ISBN 9781138207929.

- ^ Sun, Ron (ed.), Grounding Social Sciences in Cognitive Sciences. MIT Press, Cambridge, Massachusetts. 2012.

- ^ "David Chalmers". www.informationphilosopher.com. Archived from the original on 24 April 2017. Retrieved 24 April 2017.

- ^ "Facing Up to the Problem of Consciousness". consc.net. Archived from the original on 8 April 2011. Retrieved 24 April 2017.

- ^ "Daniel C. Dennett | American philosopher". Encyclopædia Britannica. Archived from the original on 23 July 2016. Retrieved 3 May 2017.

- ^ "John Searle". www.informationphilosopher.com. Archived from the original on 24 April 2017. Retrieved 3 May 2017.

- ^ "Gödel, Escher, Bach". Goodreads. Archived from the original on 22 May 2017. Retrieved 3 May 2017.

- ^ Somers, James. "The Man Who Would Teach Machines to Think". The Atlantic. Archived from the original on 17 September 2016. Retrieved 3 May 2017.

- ^ "Fodor, Jerry | Internet Encyclopedia of Philosophy". www.iep.utm.edu. Archived from the original on 17 October 2015. Retrieved 3 May 2017.

- ^ "Alan Baddeley in International Directory of Psychologists". 1966. Archived from the original on 5 February 2023. Retrieved 10 March 2022.

- ^ "Marvin Minsky | American scientist". Encyclopædia Britannica. Archived from the original on 28 March 2017. Retrieved 27 March 2017.

- ^ Darwin, Chris (9 June 2004). "Christopher Longuet-Higgins". The Guardian. ISSN 0261-3077. Archived from the original on 21 December 2016. Retrieved 27 March 2017.

- ^ "Noam Chomsky". chomsky.info. Archived from the original on 25 April 2017. Retrieved 24 April 2017.

- ^ "J.C.R. Licklider | Internet Hall of Fame". internethalloffame.org. Archived from the original on 28 March 2017. Retrieved 24 April 2017.

- ^ Gleitman, Lila (January 1990). "The Structural Sources of Verb Meanings". Language Acquisition. 1 (1): 3–55. doi:10.1207/s15327817la0101_2. S2CID 144713838.

- ^ Rosch, Eleanor; Mervis, Carolyn B; Gray, Wayne D; Johnson, David M; Boyes-Braem, Penny (July 1976). "Basic objects in natural categories". Cognitive Psychology. 8 (3): 382–439. CiteSeerX 10.1.1.149.3392. doi:10.1016/0010-0285(76)90013-X. S2CID 5612467.

- ^ Johnson-Laird, P. (March 1981). "Mental models in cognitive science". Cognitive Science. 4 (1): 71–115. doi:10.1016/S0364-0213(81)80005-5.

- ^ Gentner, Dedre (April 1983). "Structure-Mapping: A Theoretical Framework for Analogy". Cognitive Science. 7 (2): 155–170. doi:10.1207/s15516709cog0702_3. S2CID 5371492.

- ^ Newell, Allen. 1990. Unified Theories of Cognition. Harvard University Press, Cambridge, Massachusetts.[page needed]

- ^ Karmiloff-Smith, Annette (1992). Beyond Modularity: A Developmental Perspective on Cognitive Science. MIT Press. ISBN 9780262111690.[page needed]

- ^ Marr, D.; Poggio, T. (1976). From Understanding Computation to Understanding Neural Circuitry. Artificial Intelligence Laboratory (Report). A.I. Memo. Massachusetts Institute of Technology. hdl:1721.1/5782. AIM-357.

- ^ Smith, Linda B; Thelen, Esther (1993). A Dynamic systems approach to development: applications. MIT Press. ISBN 978-0-585-03867-4. OCLC 42854628.[page needed]

- ^ Rescorla, Michael (1 January 2017). Zalta, Edward N. (ed.). The Stanford Encyclopedia of Philosophy (Spring 2017 ed.). Metaphysics Research Lab, Stanford University. Archived from the original on 18 March 2019. Retrieved 27 March 2017.

- ^ Hauser, Larry. "Chinese Room Argument". Internet Encyclopedia of Philosophy. Archived from the original on 27 April 2016. Retrieved 27 March 2017.

- ^ "Fodor, Jerry | Internet Encyclopedia of Philosophy". www.iep.utm.edu. Archived from the original on 17 October 2015. Retrieved 27 March 2017.

- ^ Longuet-Higgins, Christopher (1977) [1969], "Epistemics", in A. Bullock & O. Stallybrass (ed.), Fontana dictionary of modern thought, London, UK: Fontana, p. 209, ISBN 9780002161497

- ^ Goldman, Alvin I. (1978). "Epistemics: The Regulative Theory of Cognition". The Journal of Philosophy. 75 (10): 509–23. doi:10.2307/2025838. JSTOR 2025838.

- ^ "The old WWW.cogsci.ed.ac.uk server". Archived from the original on 19 October 2010. Retrieved 27 August 2019.

- ^ Hardcastle, V.G. (1998). "The binding problem". In W. Bechtel & G. Graham (eds.), A Companion to Cognitive Science (pp. 555-565). Blackwell Publisher, Malden/MA, Oxford/UK.

- ^ Hummel, J. (1999). "Binding problem". In: R.A. Wilson & F.C. Keil, The MIT Encyclopedia of the Cognitive Sciences (pp. 85-86). Cambridge, MA, London: The MIT Press.

- ^ Malsburg, C. von der (1999). "The what and why of binding: The modeler's perspective". Neuron. 24: 95-104.

- ^ Gray, C. M. & Singer, W. (1989). "Stimulus-specific neuronal oscillations in orientation columns of cat visual cortex". Proceedings of the National Academy of Sciences of the United States of America. 86: 1698-1702.

- ^ Singer, W. (1999b). "Neuronal synchrony: A versatile code for the definition of relations". Neuron. 24: 49-65.

- ^ Singer, W. & A. Lazar. (2016). "Does the cerebral cortex exploit high-dimensional, non-linear dynamics for information processing?" Frontiers in Computational Neuroscience.10: 99.

- ^ Singer, W. (2018). "Neuronal oscillations: unavoidable and useful?" European Journal of Neuroscience. 48: 2389-2399.

- ^ Maurer, H. (2021). "Cognitive science: Integrative synchronization mechanisms in cognitive neuroarchitectures of the modern connectionism". CRC Press, Boca Raton/FL, ISBN 978-1-351-04352-6. https://doi.org/10.1201/9781351043526 Archived 5 February 2023 at the Wayback Machine

- ^ Maurer, H. (2016). "Integrative synchronization mechanisms in connectionist cognitive Neuroarchitectures". Computational Cognitive Science. 2: 3. https://doi.org/10.1186/s40469-016-0010-8 Archived 5 February 2023 at the Wayback Machine

- ^ Marcus, G.F. (2001). "The algebraic mind. Integrating connectionism and cognitive science". Bradford Book, The MIT Press, Cambridge, ISBN 0-262-13379-2.

- ^ Treisman A. (1999). "Solutions to the binding problem: progress through controversy and convergence." Neuron, 1999, 24(1):105-125.

- ^ a b c Val Danilov, Igor (17 February 2023). "Theoretical Grounds of Shared Intentionality for Neuroscience in Developing Bioengineering Systems". OBM Neurobiology. 7 (1): 156. doi:10.21926/obm.neurobiol.2301156.

External links

- Media related to Cognitive science at Wikimedia Commons

- Quotations related to Cognitive science at Wikiquote

- Learning materials related to Cognitive science at Wikiversity

- "Cognitive Science" on the Stanford Encyclopedia of Philosophy

- Cognitive Science Society

- Cognitive Science Movie Index: A broad list of movies showcasing themes in the Cognitive Sciences Archived 4 September 2015 at the Wayback Machine

- List of leading thinkers in cognitive science