Quantum information

Quantum information is the information of the state of a quantum system. It is the basic entity of study in quantum information theory,[1][2][3] and can be manipulated using quantum information processing techniques. The term refers both to the technical definition in terms of von Neumann entropy and to the general computational term.

It is an interdisciplinary field that involves quantum mechanics, computer science, information theory, philosophy and cryptography, among other fields.[4][5][6] Its study is also relevant to disciplines such as cognitive science, psychology and neuroscience.[7][8][9][10] Its main focus is on extracting information from matter at the microscopic scale. Observation is one of the most important ways of acquiring information in science, and measurement is required in order to quantify the observation, which makes measurement crucial to the scientific method. In quantum mechanics, due to the uncertainty principle, non-commuting observables cannot be precisely measured simultaneously, as an eigenstate in one basis is not an eigenstate in the other basis. According to the eigenstate–eigenvalue link, an observable is well-defined (definite) when the state of the system is an eigenstate of the observable.[11] Since any two non-commuting observables are not simultaneously well-defined, a quantum state can never contain definitive information about both non-commuting observables.[8]
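As a small illustration of non-commuting observables, the sketch below uses the Pauli X and Z matrices as an assumed example pair (the text does not name specific observables). It shows that their commutator is non-zero and that the Z eigenstate |0⟩ is not an eigenstate of X. The helper names `matmul`, `commutator` and `apply` are hypothetical.

```python
# Pauli X and Z as 2x2 nested lists (illustrative choice of observables).
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]


def matmul(a, b):
    """Multiply two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def commutator(a, b):
    """Return [a, b] = ab - ba; a zero matrix means the observables commute."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


def apply(matrix, vector):
    """Apply a 2x2 matrix to a 2-component state vector."""
    return [sum(matrix[i][k] * vector[k] for k in range(2)) for i in range(2)]


zero = [1, 0]                 # |0>, an eigenstate of Z with eigenvalue +1
print(commutator(X, Z))       # non-zero, so X and Z do not commute
print(apply(Z, zero))         # proportional to |0>: Z is well-defined
print(apply(X, zero))         # not proportional to |0>: X is not well-defined
```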

Information is something physical that is encoded in the state of a quantum system.[12] While quantum mechanics deals with examining properties of matter at the microscopic level,[13][8] quantum information science focuses on extracting information from those properties,[8] and quantum computation manipulates and processes information – performs logical operations – using quantum information processing techniques.[14]

Quantum information, like classical information, can be processed using digital computers, transmitted from one location to another, manipulated with algorithms, and analyzed with computer science and mathematics. Just as the basic unit of classical information is the bit, quantum information deals with qubits.[15] Quantum information can be measured using von Neumann entropy.

Recently, the field of quantum computing has become an active research area because of its potential to disrupt modern computation, communication, and cryptography.[14][16]

History and development

Development from fundamental quantum mechanics

The history of quantum information theory began at the turn of the 20th century when classical physics was revolutionized into quantum physics. The theories of classical physics were predicting absurdities such as the ultraviolet catastrophe, or electrons spiraling into the nucleus. At first these problems were brushed aside by adding ad hoc hypotheses to classical physics. Soon, it became apparent that a new theory must be created in order to make sense of these absurdities, and the theory of quantum mechanics was born.[2]

Quantum mechanics was formulated by Schrödinger using wave mechanics and by Heisenberg using matrix mechanics.[17] The equivalence of these methods was proven later.[18] Their formulations described the dynamics of microscopic systems but had several unsatisfactory aspects in describing measurement processes. Von Neumann formulated quantum theory using operator algebra in a way that described measurement as well as dynamics.[19] These studies emphasized the philosophical aspects of measurement rather than a quantitative approach to extracting information via measurements.

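See: Dynamical pictures

| Evolution of: | Schrödinger (S) | Heisenberg (H) | Interaction (I) |
| --- | --- | --- | --- |
| Ket state | $\lvert\psi_S(t)\rangle = e^{-iH_S t/\hbar}\lvert\psi_S(0)\rangle$ | constant | $\lvert\psi_I(t)\rangle = e^{iH_{0,S}t/\hbar}\lvert\psi_S(t)\rangle$ |
| Observable | constant | $A_H(t) = e^{iH_S t/\hbar}A_S e^{-iH_S t/\hbar}$ | $A_I(t) = e^{iH_{0,S}t/\hbar}A_S e^{-iH_{0,S}t/\hbar}$ |
| Density matrix | $\rho_S(t) = e^{-iH_S t/\hbar}\rho_S(0)e^{iH_S t/\hbar}$ | constant | $\rho_I(t) = e^{iH_{0,S}t/\hbar}\rho_S(t)e^{-iH_{0,S}t/\hbar}$ |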

Development from communication

In the 1960s, Stratonovich, Helstrom and Gordon[20] proposed a formulation of optical communications using quantum mechanics. This was the first historical appearance of quantum information theory. They mainly studied error probabilities and channel capacities for communication.[20][21][22] Later, Alexander Holevo obtained an upper bound on communication speed in the transmission of a classical message via a quantum channel.[23][24]

Development from atomic physics and relativity

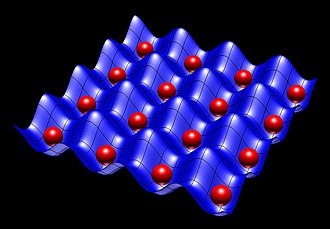

In the 1970s, techniques for manipulating single-atom quantum states, such as the atom trap and the scanning tunneling microscope, began to be developed, making it possible to isolate single atoms and arrange them in arrays. Prior to these developments, precise control over single quantum systems was not possible, and experiments utilized coarser, simultaneous control over a large number of quantum systems.[2] The development of viable single-state manipulation techniques led to increased interest in the field of quantum information and computation.

In the 1980s, interest arose in whether it might be possible to use quantum effects to disprove Einstein's theory of relativity. If it were possible to clone an unknown quantum state, it would be possible to use entangled quantum states to transmit information faster than the speed of light, disproving Einstein's theory. However, the no-cloning theorem showed that such cloning is impossible. The theorem was one of the earliest results of quantum information theory.[2]

Development from cryptography

Despite all the excitement and interest over studying isolated quantum systems and trying to find a way to circumvent the theory of relativity, research in quantum information theory became stagnant in the 1980s. However, around the same time another field began to explore quantum information and computation: cryptography. In a general sense, cryptography is the problem of doing communication or computation involving two or more parties who may not trust one another.[2]

Bennett and Brassard developed a communication channel on which it is impossible to eavesdrop without being detected, a way of communicating secretly at long distances using the BB84 quantum cryptographic protocol.[25] The key idea was the use of the fundamental principle of quantum mechanics that observation disturbs the observed, and the introduction of an eavesdropper in a secure communication line will immediately let the two parties trying to communicate know of the presence of the eavesdropper.

Development from computer science and mathematics

Alan Turing introduced the idea of a programmable computer, the Turing machine, and argued that any real-world computation can be translated into an equivalent computation on a Turing machine.[26][27] This is known as the Church–Turing thesis.

Soon enough, the first computers were made, and computer hardware grew at such a fast pace that the growth, through experience in production, was codified into an empirical relationship called Moore's law. This 'law' is a projective trend that states that the number of transistors in an integrated circuit doubles every two years.[28] As transistors began to become smaller and smaller in order to pack more power per surface area, quantum effects started to show up in the electronics resulting in inadvertent interference. This led to the advent of quantum computing, which used quantum mechanics to design algorithms.

At this point, quantum computers showed promise of being much faster than classical computers for certain specific problems. One early example is the Deutsch–Jozsa algorithm, developed by David Deutsch and Richard Jozsa, although the problem it solves has little to no practical application.[2] In 1994, Peter Shor addressed a problem of great practical importance: finding the prime factors of an integer. His algorithm solves both integer factorization and the related discrete logarithm problem efficiently on a quantum computer, whereas no efficient classical algorithm is known for either, suggesting that quantum computers can be more powerful than classical Turing machines for some tasks.

Development from information theory

Around the time computer science was making a revolution, so was information theory and communication, through Claude Shannon.[29][30][31] Shannon developed two fundamental theorems of information theory: noiseless channel coding theorem and noisy channel coding theorem. He also showed that error correcting codes could be used to protect information being sent.

Quantum information theory followed a similar trajectory. In 1995, Ben Schumacher formulated an analogue of Shannon's noiseless coding theorem using the qubit. A theory of error correction also developed, which allows quantum computers to compute efficiently in the presence of noise and to communicate reliably over noisy quantum channels.[2]

Qubits and information theory

Quantum information differs strongly from classical information, epitomized by the bit, in many striking and unfamiliar ways. While the fundamental unit of classical information is the bit, the most basic unit of quantum information is the qubit. Classical information is measured using Shannon entropy, while the quantum mechanical analogue is von Neumann entropy. Given a statistical ensemble of quantum mechanical systems with the density matrix $\rho$, it is given by $S(\rho) = -\operatorname{Tr}(\rho \log_2 \rho)$.[2] Many of the same entropy measures in classical information theory can also be generalized to the quantum case, such as Holevo entropy[32] and the conditional quantum entropy.

Unlike classical digital states (which are discrete), a qubit is continuous-valued, describable by a direction on the Bloch sphere. Despite being continuously valued in this way, a qubit is the smallest possible unit of quantum information, and its value cannot be measured precisely. Five famous theorems describe the limits on manipulation of quantum information.[2]

- no-teleportation theorem, which states that a qubit cannot be (wholly) converted into classical bits; that is, it cannot be fully "read".

- no-cloning theorem, which prevents an arbitrary qubit from being copied.

- no-deleting theorem, which prevents an arbitrary qubit from being deleted.

- no-broadcast theorem, which prevents an arbitrary qubit from being delivered to multiple recipients, although it can be transported from place to place (e.g. via quantum teleportation).

- no-hiding theorem, which demonstrates the conservation of quantum information.

These theorems are proven from unitarity, which according to Leonard Susskind is the technical term for the statement that quantum information within the universe is conserved.[33]: 94 The five theorems open up possibilities in quantum information processing.
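As an illustration of how such a result follows from unitarity, here is the standard textbook sketch of the no-cloning argument. The symbols $U$ (a hypothetical cloning unitary) and $\lvert e\rangle$ (a normalized blank state) are notation introduced here, not taken from the text above. Suppose one unitary copies two states $\lvert\psi\rangle$ and $\lvert\varphi\rangle$; because unitaries preserve inner products,

$$
\begin{aligned}
U\bigl(\lvert\psi\rangle \otimes \lvert e\rangle\bigr) &= \lvert\psi\rangle \otimes \lvert\psi\rangle, \\
U\bigl(\lvert\varphi\rangle \otimes \lvert e\rangle\bigr) &= \lvert\varphi\rangle \otimes \lvert\varphi\rangle \\
\Longrightarrow\quad \langle\psi\vert\varphi\rangle\,\langle e\vert e\rangle &= \langle\psi\vert\varphi\rangle^{2}.
\end{aligned}
$$

With $\langle e\vert e\rangle = 1$ this forces $\langle\psi\vert\varphi\rangle \in \{0, 1\}$, so a single device can copy only states that are identical or orthogonal, never an arbitrary qubit.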

Quantum information processing

The state of a qubit contains all of its information. This state is frequently expressed as a vector on the Bloch sphere, and it can be changed by applying linear transformations, or quantum gates, to it. These unitary transformations are described as rotations on the Bloch sphere. While classical gates correspond to the familiar operations of Boolean logic, quantum gates are physical unitary operators.
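A minimal sketch of a gate acting on a qubit state, using the Hadamard gate as an assumed example (the text does not single out a particular gate). States are plain two-component lists of amplitudes, and the helpers `apply_gate` and `is_unitary` are hypothetical names.

```python
import math

# Hadamard gate, chosen here only as a familiar example of a unitary.
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]


def apply_gate(gate, state):
    """Apply a 2x2 gate to a qubit state given as [amp_0, amp_1]."""
    return [sum(gate[i][k] * state[k] for k in range(2)) for i in range(2)]


def is_unitary(gate, tol=1e-12):
    """Check that (gate^dagger gate) equals the identity within tol."""
    for i in range(2):
        for j in range(2):
            entry = sum(complex(gate[k][i]).conjugate() * gate[k][j]
                        for k in range(2))
            if abs(entry - (1 if i == j else 0)) > tol:
                return False
    return True


zero = [1, 0]                               # the state |0>
plus = apply_gate(H, zero)                  # equal superposition of |0> and |1>
probabilities = [abs(a) ** 2 for a in plus]
print(is_unitary(H), probabilities)
```

Applying the same gate twice returns the original state up to rounding, which is one quick way to see that the operation is reversible.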

- Due to the volatility of quantum systems and the impossibility of copying states, the storing of quantum information is much more difficult than storing classical information. Nevertheless, with the use of quantum error correction quantum information can still be reliably stored in principle. The existence of quantum error correcting codes has also led to the possibility of fault-tolerant quantum computation.

- Classical bits can be encoded into and subsequently retrieved from configurations of qubits, through the use of quantum gates. By itself, a single qubit can convey no more than one bit of accessible classical information about its preparation. This is Holevo's theorem. However, in superdense coding a sender, by acting on one of two entangled qubits, can convey two bits of accessible information about their joint state to a receiver.

- Quantum information can be moved about, in a quantum channel, analogous to the concept of a classical communications channel. Quantum messages have a finite size, measured in qubits; quantum channels have a finite channel capacity, measured in qubits per second.

- Quantum information, and changes in quantum information, can be quantitatively measured by using an analogue of Shannon entropy, called the von Neumann entropy.

- In some cases, quantum algorithms can be used to perform computations faster than any known classical algorithm. The most famous example of this is Shor's algorithm, which can factor numbers in polynomial time, compared to the best classical algorithms, which take sub-exponential time. As factorization is an important part of the safety of RSA encryption, Shor's algorithm sparked the new field of post-quantum cryptography, which tries to find encryption schemes that remain safe even when quantum computers are in play. Other examples of algorithms that demonstrate quantum supremacy include Grover's search algorithm, where the quantum algorithm gives a quadratic speed-up over the best possible classical algorithm. The complexity class of problems efficiently solvable by a quantum computer is known as BQP.

- Quantum key distribution (QKD) allows unconditionally secure transmission of classical information, unlike classical encryption, which can always be broken in principle, if not in practice. Certain subtle points regarding the safety of QKD are still debated.

The study of all of the above topics and differences comprises quantum information theory.

Relation to quantum mechanics

Quantum mechanics is the study of how microscopic physical systems change dynamically in nature. In the field of quantum information theory, the quantum systems studied are abstracted away from any real world counterpart. A qubit might for instance physically be a photon in a linear optical quantum computer, an ion in a trapped ion quantum computer, or it might be a large collection of atoms as in a superconducting quantum computer. Regardless of the physical implementation, the limits and features of qubits implied by quantum information theory hold as all these systems are mathematically described by the same apparatus of density matrices over the complex numbers. Another important difference with quantum mechanics is that while quantum mechanics often studies infinite-dimensional systems such as a harmonic oscillator, quantum information theory is concerned with both continuous-variable systems[34] and finite-dimensional systems.[8][35][36]

Entropy and information

Entropy measures the uncertainty in the state of a physical system.[2] Entropy can be studied from the point of view of both the classical and quantum information theories.

Classical information theory

Classical information is based on the concepts of information laid out by Claude Shannon. Classical information can, in principle, be stored as strings of bits. Any system with two distinguishable states can serve as a bit.[37]

Shannon entropy

Shannon entropy is the quantification of the information gained by measuring the value of a random variable. Another way of thinking about it is by looking at the uncertainty of a system prior to measurement. As a result, entropy, as pictured by Shannon, can be seen either as a measure of the uncertainty prior to making a measurement or as a measure of information gained after making said measurement.[2]

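Shannon entropy, written as a functional of a discrete probability distribution $P(x_1), P(x_2), \ldots, P(x_n)$ associated with events $x_1, \ldots, x_n$, can be seen as the average information associated with this set of events, in units of bits:

$$H(X) = H[P(x_1), P(x_2), \ldots, P(x_n)] = -\sum_{i=1}^{n} P(x_i) \log_2 P(x_i)$$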

This definition of entropy can be used to quantify the physical resources required to store the output of an information source. The ways of interpreting Shannon entropy discussed above are usually only meaningful when the number of samples of an experiment is large.[35]
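A minimal sketch of the calculation, computing $-\sum_i p_i \log_2 p_i$ in bits for a list of probabilities. The function name `shannon_entropy` and the input validation are illustrative choices; zero-probability events are skipped, following the usual convention that $0 \log 0 = 0$.

```python
import math


def shannon_entropy(probabilities):
    """Return the Shannon entropy, in bits, of a discrete distribution."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Terms with p == 0 contribute nothing (0 * log 0 is taken as 0).
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


fair_coin = shannon_entropy([0.5, 0.5])        # one full bit of uncertainty
biased_coin = shannon_entropy([0.9, 0.1])      # less than one bit
certain = shannon_entropy([1.0, 0.0])          # no uncertainty at all
print(fair_coin, biased_coin, certain)
```

The biased coin carries less than one bit per toss, which is the sense in which entropy quantifies the resources needed to store the output of a source.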

Rényi entropy

The Rényi entropy is a generalization of Shannon entropy defined above. The Rényi entropy of order r, written as a function of a discrete probability distribution $P(x_1), \ldots, P(x_n)$ associated with events $x_1, \ldots, x_n$, is defined as:[37]

$$H_r(X) = \frac{1}{1-r} \log_2 \sum_{i=1}^{n} P^r(x_i)$$

for $0 < r < \infty$ and $r \neq 1$.

We arrive at the definition of Shannon entropy from Rényi when $r \to 1$, of Hartley entropy (or max-entropy) when $r \to 0$, and min-entropy when $r \to \infty$.
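The sketch below evaluates the Rényi entropy of order r in bits and handles the three limiting cases explicitly rather than numerically. The function name `renyi_entropy` is hypothetical, and treating `r == 0`, `r == 1` and `r == math.inf` as shorthand for the corresponding limits is a convention assumed here.

```python
import math


def renyi_entropy(probabilities, r):
    """Return the Rényi entropy of order r, in bits.

    r == 0, r == 1 and r == math.inf are interpreted as the Hartley,
    Shannon and min-entropy limits respectively.
    """
    support = [p for p in probabilities if p > 0]
    if r == 1:                      # Shannon limit
        return sum(-p * math.log2(p) for p in support)
    if r == 0:                      # Hartley (max-) entropy
        return math.log2(len(support))
    if math.isinf(r):               # min-entropy
        return -math.log2(max(support))
    return math.log2(sum(p ** r for p in support)) / (1 - r)


dist = [0.5, 0.25, 0.25]
for order in (0, 0.5, 1, 2, math.inf):
    print(order, renyi_entropy(dist, order))
```

For a non-uniform distribution the printed values decrease as the order grows, while for a uniform distribution every order gives the same value.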

Quantum information theory

Quantum information theory is largely an extension of classical information theory to quantum systems. Classical information is produced when measurements of quantum systems are made.[37]

Von Neumann entropy

One interpretation of Shannon entropy was the uncertainty associated with a probability distribution. When we want to describe the information or the uncertainty of a quantum state, the probability distributions are simply replaced by density operators $\rho$:

$$S(\rho) \equiv -\operatorname{Tr}(\rho \log_2 \rho) = -\sum_i \lambda_i \log_2 \lambda_i,$$

where $\lambda_i$ are the eigenvalues of $\rho$.

Von Neumann entropy plays a role in quantum information similar to the role Shannon entropy plays in classical information.
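As a rough sketch, the von Neumann entropy of a single qubit can be computed from the two eigenvalues of its 2×2 density matrix, which have a closed form in terms of the trace and determinant. The restriction to one qubit and the function name `qubit_von_neumann_entropy` are assumptions made here to keep the example free of external libraries.

```python
import math


def qubit_von_neumann_entropy(rho):
    """Return the von Neumann entropy, in bits, of a 2x2 density matrix.

    rho is a nested list [[a, b], [conj(b), d]] and is assumed to be
    Hermitian and positive semidefinite with unit trace.
    """
    a, d = rho[0][0].real, rho[1][1].real
    b = rho[0][1]
    trace = a + d
    determinant = a * d - abs(b) ** 2
    # Eigenvalues of a 2x2 Hermitian matrix from trace and determinant.
    root = math.sqrt(max(trace * trace - 4 * determinant, 0.0))
    eigenvalues = [(trace + root) / 2, (trace - root) / 2]
    return sum(-lam * math.log2(lam) for lam in eigenvalues if lam > 1e-15)


pure_plus = [[0.5, 0.5], [0.5, 0.5]]        # |+><+|, a pure state
maximally_mixed = [[0.5, 0.0], [0.0, 0.5]]  # I/2
print(qubit_von_neumann_entropy(pure_plus))
print(qubit_von_neumann_entropy(maximally_mixed))
```

A pure state has zero entropy and the maximally mixed qubit has one bit, mirroring the certain and fair-coin cases of Shannon entropy.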

Applications

Quantum communication

Quantum communication is one of the applications of quantum physics and quantum information. Theorems such as the no-cloning theorem illustrate important properties of quantum communication. Dense coding and quantum teleportation are also applications of quantum communication, and they are two opposite ways of communicating using qubits. Teleportation transfers one qubit from Alice to Bob by communicating two classical bits, while dense coding transfers two classical bits from Alice to Bob by sending one qubit; both assume that Alice and Bob share a Bell state in advance.
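The dense coding protocol can be sketched with a four-amplitude state vector for the two qubits, ordered as |00⟩, |01⟩, |10⟩, |11⟩ with Alice's qubit first. The encoding convention (first bit selects Z, second bit selects X) and all function names are assumptions chosen for this illustration; other equivalent conventions exist.

```python
import math

S = 1 / math.sqrt(2)


def x_on_alice(state):
    """Pauli X on Alice's qubit: swaps the |0b> and |1b> amplitudes."""
    return [state[2], state[3], state[0], state[1]]


def z_on_alice(state):
    """Pauli Z on Alice's qubit: flips the sign of the |1b> amplitudes."""
    return [state[0], state[1], -state[2], -state[3]]


def cnot_alice_to_bob(state):
    """CNOT with Alice's qubit as control: swaps |10> and |11>."""
    return [state[0], state[1], state[3], state[2]]


def hadamard_on_alice(state):
    """Hadamard on Alice's qubit."""
    return [S * (state[0] + state[2]), S * (state[1] + state[3]),
            S * (state[0] - state[2]), S * (state[1] - state[3])]


def superdense_send(bit1, bit2):
    """Encode two classical bits on Alice's half of a Bell pair, then decode."""
    state = [S, 0.0, 0.0, S]            # pre-shared Bell state (|00> + |11>)/sqrt(2)
    if bit2:
        state = x_on_alice(state)       # Alice's local encoding
    if bit1:
        state = z_on_alice(state)
    # Alice sends her single qubit to Bob, who decodes with CNOT then Hadamard.
    state = hadamard_on_alice(cnot_alice_to_bob(state))
    probabilities = [abs(amplitude) ** 2 for amplitude in state]
    outcome = max(range(4), key=lambda index: probabilities[index])
    return outcome >> 1, outcome & 1


for message in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(message, superdense_send(*message))
```

In this idealized, noiseless sketch Bob's measurement outcome is deterministic, so taking the most probable basis state stands in for the measurement.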

Quantum key distribution

One of the best known applications of quantum cryptography is quantum key distribution, which provides a theoretical solution to the security issue of a classical key. The advantage of quantum key distribution is that it is impossible to copy a quantum key because of the no-cloning theorem. If someone tries to read encoded data, the quantum state being transmitted will change. This could be used to detect eavesdropping.

BB84

The first quantum key distribution scheme, BB84, was developed by Charles Bennett and Gilles Brassard in 1984. It is usually explained as a method of securely communicating a private key from one party to another for use in one-time pad encryption.[2]
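A toy simulation can make the eavesdropping detection concrete. It assumes the usual textbook description of BB84 (two bases, here labelled `+` and `x`, with sifting on matching bases), an ideal lossless channel, and a simple intercept-and-resend attacker; none of these details come from the text above, and the function names are hypothetical.

```python
import random


def measure(bit, prepared_basis, measured_basis, rng):
    """Measuring in the preparation basis returns the bit; otherwise a coin flip."""
    return bit if prepared_basis == measured_basis else rng.randint(0, 1)


def bb84(n_photons, eavesdrop=False, seed=0):
    """Return (alice_key, bob_key, error_rate) after basis sifting."""
    rng = random.Random(seed)
    alice_key, bob_key = [], []
    for _ in range(n_photons):
        bit, alice_basis = rng.randint(0, 1), rng.choice("+x")
        in_flight_bit, in_flight_basis = bit, alice_basis
        if eavesdrop:
            # Intercept-resend: Eve measures in a random basis and resends
            # a photon prepared in her own basis with her own result.
            eve_basis = rng.choice("+x")
            in_flight_bit = measure(bit, alice_basis, eve_basis, rng)
            in_flight_basis = eve_basis
        bob_basis = rng.choice("+x")
        result = measure(in_flight_bit, in_flight_basis, bob_basis, rng)
        if bob_basis == alice_basis:      # sifting: keep matching bases only
            alice_key.append(bit)
            bob_key.append(result)
    errors = sum(a != b for a, b in zip(alice_key, bob_key))
    error_rate = errors / len(alice_key) if alice_key else 0.0
    return alice_key, bob_key, error_rate


print(bb84(4000)[2])                    # no eavesdropper
print(bb84(4000, eavesdrop=True)[2])    # intercept-resend attack
```

Without an eavesdropper the sifted keys agree; with the intercept-resend attack roughly a quarter of the sifted bits disagree, which Alice and Bob can detect by comparing a sample of their keys.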

E91

E91 was made by Artur Ekert in 1991. His scheme uses entangled pairs of photons. These two photons can be created by Alice, Bob, or by a third party including eavesdropper Eve. One of the photons is distributed to Alice and the other to Bob so that each one ends up with one photon from the pair.

This scheme relies on two properties of quantum entanglement:

- The entangled states are perfectly correlated which means that if Alice and Bob both measure their particles having either a vertical or horizontal polarization, they always get the same answer with 100% probability. The same is true if they both measure any other pair of complementary (orthogonal) polarizations. This necessitates that the two distant parties have exact directionality synchronization. However, from quantum mechanics theory the quantum state is completely random so that it is impossible for Alice to predict if she will get vertical polarization or horizontal polarization results.

- Any attempt at eavesdropping by Eve destroys this quantum entanglement in a way that Alice and Bob can detect.

B92

B92 is a simpler version of BB84.[38]

The main difference between B92 and BB84:

- B92 only needs two states

- BB84 needs 4 polarization states

As in BB84, Alice transmits to Bob a string of photons encoded with randomly chosen bits, but this time the bits Alice chooses determine the bases she must use. Bob still randomly chooses a basis in which to measure, but if he chooses the wrong basis, he will not measure anything, which is guaranteed by quantum mechanics. Bob can simply tell Alice after each bit she sends whether or not he measured it correctly.[39]

Quantum computation

The most widely used model in quantum computation is the quantum circuit, which is based on the quantum bit, or "qubit". A qubit is somewhat analogous to the bit in classical computation. Qubits can be in a 1 or 0 quantum state, or they can be in a superposition of the 1 and 0 states. However, when qubits are measured the result of the measurement is always either a 0 or a 1; the probabilities of these two outcomes depend on the quantum state that the qubits were in immediately prior to the measurement.
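How the outcome probabilities depend on the state can be sketched for a single qubit with amplitudes `alpha` and `beta` for the 0 and 1 states, using the Born rule. The function names and the normalization step are illustrative assumptions.

```python
import random


def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for the qubit state alpha|0> + beta|1>."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if norm == 0:
        raise ValueError("the zero vector is not a valid state")
    # Dividing by the norm lets callers pass unnormalized amplitudes.
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


def measure(alpha, beta, rng=random):
    """Sample one measurement outcome, always either 0 or 1."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


print(measurement_probabilities(1, 1))      # equal superposition
print(measurement_probabilities(3, 4j))     # complex amplitudes are allowed
print([measure(1, 1) for _ in range(10)])   # random string of 0s and 1s
```

Only the squared magnitudes of the amplitudes affect the statistics of this measurement, so a complex phase on `beta` does not change the probabilities.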

Any quantum computation algorithm can be represented as a network of quantum logic gates.

Quantum decoherence

If a quantum system were perfectly isolated, it would maintain coherence perfectly, but it would be impossible to test the entire system. If it is not perfectly isolated, for example during a measurement, coherence is shared with the environment and appears to be lost with time; this process is called quantum decoherence. As a result of this process, quantum behavior is apparently lost, just as energy appears to be lost by friction in classical mechanics.
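One common toy model of this loss of coherence is pure dephasing, in which the off-diagonal elements of a qubit's density matrix decay exponentially while the populations stay fixed. The exponential form, the time constant `t2` and the function names below are modelling assumptions made for illustration, not something stated in the text.

```python
import math


def dephase(rho, t, t2):
    """Damp the off-diagonal terms of a 2x2 density matrix by exp(-t / t2)."""
    decay = math.exp(-t / t2)
    return [[rho[0][0], rho[0][1] * decay],
            [rho[1][0] * decay, rho[1][1]]]


def purity(rho):
    """Return Tr(rho^2): 1 for a pure state, 1/2 for a maximally mixed qubit."""
    return sum((rho[i][j] * rho[j][i]).real
               for i in range(2) for j in range(2))


plus = [[0.5, 0.5], [0.5, 0.5]]     # pure superposition state |+><+|
for t in (0.0, 1.0, 5.0):
    print(t, purity(dephase(plus, t, t2=1.0)))
```

The populations on the diagonal never change, yet the purity falls from 1 toward 1/2, which is the sense in which quantum behavior appears to be lost.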

Quantum error correction

Quantum error correction (QEC) is used in quantum computing to protect quantum information from errors due to decoherence and other quantum noise. Quantum error correction is essential if one is to achieve fault-tolerant quantum computation that can deal not only with noise on stored quantum information, but also with faulty quantum gates, faulty quantum preparation, and faulty measurements.

Peter Shor was the first to formulate a quantum error correcting code, storing the information of one qubit in a highly entangled state of ancilla qubits. A quantum error correcting code protects quantum information against errors.
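The idea of spreading one qubit over several can be sketched with the three-qubit bit-flip code, a simpler relative of Shor's code that only protects against a single bit-flip (X) error. This is an illustrative stand-in rather than Shor's construction; states are stored as dictionaries from basis labels to amplitudes, and all names are hypothetical.

```python
def encode(alpha, beta):
    """Encode alpha|0> + beta|1> as alpha|000> + beta|111>."""
    return {(0, 0, 0): alpha, (1, 1, 1): beta}


def flip(state, qubit):
    """Apply a bit-flip (X) error or correction to one of the three qubits."""
    flipped_state = {}
    for bits, amplitude in state.items():
        new_bits = list(bits)
        new_bits[qubit] ^= 1
        flipped_state[tuple(new_bits)] = amplitude
    return flipped_state


def syndrome(state):
    """Return the parities (q0 xor q1, q1 xor q2).

    After at most one bit flip every basis term gives the same parities,
    so reading them reveals the error without revealing alpha or beta.
    """
    parities = {(bits[0] ^ bits[1], bits[1] ^ bits[2]) for bits in state}
    if len(parities) != 1:
        raise ValueError("state is outside the code's error model")
    return parities.pop()


# Which qubit to flip back for each syndrome (None means no error seen).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state):
    """Undo the single bit flip indicated by the syndrome, if any."""
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else flip(state, qubit)


logical = encode(0.6, 0.8)
damaged = flip(logical, 1)              # a bit-flip error on the middle qubit
print(syndrome(damaged), correct(damaged) == logical)
```

Two simultaneous flips produce a syndrome that points at the wrong qubit, so this small code fails in that case; tolerating more error types or more errors requires larger codes.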

Journals

Many journals publish research in quantum information science, although only a few are dedicated to this area. Among these are:

- International Journal of Quantum Information

- npj Quantum Information

- Quantum

- Quantum Information & Computation

- Quantum Information Processing

- Quantum Science and Technology

References

1. Vedral, Vlatko (2006). Introduction to Quantum Information Science. Oxford: Oxford University Press. doi:10.1093/acprof:oso/9780199215706.001.0001. ISBN 9780199215706. OCLC 822959053.

2. Nielsen, Michael A.; Chuang, Isaac L. (2010). Quantum Computation and Quantum Information (10th anniversary ed.). Cambridge: Cambridge University Press. doi:10.1017/cbo9780511976667. ISBN 9780511976667. OCLC 665137861. S2CID 59717455.

3. Hayashi, Masahito (2006). Quantum Information: An Introduction. Berlin: Springer. doi:10.1007/3-540-30266-2. ISBN 978-3-540-30266-7. OCLC 68629072.

4. Bokulich, Alisa; Jaeger, Gregg (2010). Philosophy of Quantum Information and Entanglement. Cambridge: Cambridge University Press. doi:10.1017/CBO9780511676550. ISBN 9780511676550.

5. Benatti, Fabio; Fannes, Mark; Floreanini, Roberto; Petritis, Dimitri (2010). Quantum Information, Computation and Cryptography: An Introductory Survey of Theory, Technology and Experiments. Lecture Notes in Physics. Vol. 808. Berlin: Springer. doi:10.1007/978-3-642-11914-9. ISBN 978-3-642-11914-9.

6. Benatti, Fabio (2009). "Quantum Information Theory". Quantum Entropies. Theoretical and Mathematical Physics. Dordrecht: Springer. pp. 255–315. doi:10.1007/978-1-4020-9306-7_6. ISBN 978-1-4020-9306-7.

7. Hayashi, Masahito; Ishizaka, Satoshi; Kawachi, Akinori; Kimura, Gen; Ogawa, Tomohiro (2015). Introduction to Quantum Information Science. Berlin: Springer. Bibcode:2015iqis.book.....H. doi:10.1007/978-3-662-43502-1. ISBN 978-3-662-43502-1.

8. Hayashi, Masahito (2017). Quantum Information Theory: Mathematical Foundation. Graduate Texts in Physics. Berlin: Springer. doi:10.1007/978-3-662-49725-8. ISBN 978-3-662-49725-8.

9. Georgiev, Danko D. (2017-12-06). Quantum Information and Consciousness: A Gentle Introduction. Boca Raton: CRC Press. doi:10.1201/9780203732519. ISBN 9781138104488. OCLC 1003273264. Zbl 1390.81001.

10. Georgiev, Danko D. (2020). "Quantum information theoretic approach to the mind-brain problem". Progress in Biophysics and Molecular Biology. 158: 16–32. arXiv:2012.07836. doi:10.1016/j.pbiomolbio.2020.08.002. PMID 32822698. S2CID 221237249.

11. Gilton, Marian J. R. (2016). "Whence the eigenstate–eigenvalue link?". Studies in History and Philosophy of Science Part B: Studies in History and Philosophy of Modern Physics. 55: 92–100. Bibcode:2016SHPMP..55...92G. doi:10.1016/j.shpsb.2016.08.005.

12. Preskill, John. Quantum Computation (Physics 219/Computer Science 219). Pasadena, California: California Institute of Technology.

13. Feynman, Richard Phillips; Leighton, Robert Benjamin; Sands, Matthew Linzee (2013). "Quantum behavior". The Feynman Lectures on Physics. Volume III. Quantum Mechanics. Pasadena, California: California Institute of Technology.

14. Lo, Hoi-Kwong; Popescu, Sandu; Spiller, Tim (1998). Introduction to Quantum Computation and Information. Singapore: World Scientific. Bibcode:1998iqci.book.....S. doi:10.1142/3724. ISBN 978-981-4496-35-3. OCLC 52859247.

15. Bennett, Charles H.; Shor, Peter Williston (1998). "Quantum information theory". IEEE Transactions on Information Theory. 44 (6): 2724–2742. CiteSeerX 10.1.1.89.1572. doi:10.1109/18.720553.

16. Garlinghouse, Tom (2020). "Quantum computing: Opening new realms of possibilities". Discovery: Research at Princeton: 12–17.

17. Mahan, Gerald D. (2009). Quantum Mechanics in a Nutshell. Princeton: Princeton University Press. doi:10.2307/j.ctt7s8nw. ISBN 978-1-4008-3338-2. JSTOR j.ctt7s8nw.

18. Perlman, H. S. (1964). "Equivalence of the Schroedinger and Heisenberg pictures". Nature. 204 (4960): 771–772. Bibcode:1964Natur.204..771P. doi:10.1038/204771b0. S2CID 4194913.

19. Neumann, John von (2018-02-27). Mathematical Foundations of Quantum Mechanics: New Edition. Princeton University Press. ISBN 978-0-691-17856-1.

20. Gordon, J. P. (1962). "Quantum effects in communications systems". Proceedings of the IRE. 50 (9): 1898–1908. doi:10.1109/jrproc.1962.288169. S2CID 51631629.

21. Helstrom, Carl W. (1969). "Quantum detection and estimation theory". Journal of Statistical Physics. 1 (2): 231–252. Bibcode:1969JSP.....1..231H. doi:10.1007/bf01007479. hdl:2060/19690016211. S2CID 121571330.

22. Helstrom, Carl W. (1976). Quantum Detection and Estimation Theory. Mathematics in Science and Engineering. Vol. 123. New York: Academic Press. doi:10.1016/s0076-5392(08)x6017-5. hdl:2060/19690016211. ISBN 9780080956329. OCLC 2020051.

23. Holevo, Alexander S. (1973). "Bounds for the quantity of information transmitted by a quantum communication channel". Problems of Information Transmission. 9 (3): 177–183. MR 0456936. Zbl 0317.94003.

24. Holevo, Alexander S. (1979). "On capacity of a quantum communications channel". Problems of Information Transmission. 15 (4): 247–253. MR 0581651. Zbl 0433.94008.

25. Bennett, Charles H.; Brassard, Gilles (2014). "Quantum cryptography: public key distribution and coin tossing". Theoretical Computer Science. 560 (1): 7–11. arXiv:2003.06557. doi:10.1016/j.tcs.2014.05.025. S2CID 27022972.

26. Weisstein, Eric W. "Church–Turing Thesis". mathworld.wolfram.com. Retrieved 2020-11-13.

27. Deutsch, David (1985). "Quantum theory, the Church–Turing principle and the universal quantum computer". Proceedings of the Royal Society of London A: Mathematical and Physical Sciences. 400 (1818): 97–117. Bibcode:1985RSPSA.400...97D. doi:10.1098/rspa.1985.0070. S2CID 1438116.

28. Moore, Gordon Earle (1998). "Cramming more components onto integrated circuits". Proceedings of the IEEE. 86 (1): 82–85. doi:10.1109/jproc.1998.658762. S2CID 6519532.

29. Shannon, Claude E. (1948). "A mathematical theory of communication". The Bell System Technical Journal. 27 (3): 379–423. doi:10.1002/j.1538-7305.1948.tb01338.x.

30. Shannon, Claude E. (1948). "A mathematical theory of communication". The Bell System Technical Journal. 27 (4): 623–656. doi:10.1002/j.1538-7305.1948.tb00917.x.

31. Shannon, Claude E.; Weaver, Warren (1964). The Mathematical Theory of Communication. Urbana: University of Illinois Press. hdl:11858/00-001M-0000-002C-4314-2.

32. "Alexandr S. Holevo". Mi.ras.ru. Retrieved 4 December 2018.

33. Susskind, Leonard; Friedman, Art (2014). Quantum Mechanics: The Theoretical Minimum. What You Need to Know to Start Doing Physics. New York: Basic Books. ISBN 978-0-465-08061-8. OCLC 1038428525.

34. Weedbrook, Christian; Pirandola, Stefano; García-Patrón, Raúl; Cerf, Nicolas J.; Ralph, Timothy C.; Shapiro, Jeffrey H.; Lloyd, Seth (2012). "Gaussian quantum information". Reviews of Modern Physics. 84 (2): 621–669. arXiv:1110.3234. Bibcode:2012RvMP...84..621W. doi:10.1103/RevModPhys.84.621. S2CID 119250535.

35. Watrous, John (2018). The Theory of Quantum Information. Cambridge: Cambridge University Press. doi:10.1017/9781316848142. ISBN 9781316848142. OCLC 1034577167.

36. Wilde, Mark M. (2017). Quantum Information Theory (2nd ed.). Cambridge: Cambridge University Press. arXiv:1106.1445. doi:10.1017/9781316809976. ISBN 9781316809976.

37. Jaeger, Gregg (2007). Quantum Information: An Overview. New York: Springer. doi:10.1007/978-0-387-36944-0. ISBN 978-0-387-36944-0. OCLC 255569451.

38. Bennett, Charles H. (1992). "Quantum cryptography using any two nonorthogonal states". Physical Review Letters. 68 (21): 3121–3124. Bibcode:1992PhRvL..68.3121B. doi:10.1103/PhysRevLett.68.3121. PMID 10045619. S2CID 19708593.

39. Haitjema, Mart (2007). A Survey of the Prominent Quantum Key Distribution Protocols. Washington University in St. Louis. S2CID 18346434.
