User:Boundarylayer/sandbox

Energy mix, World energy consumption

Rates of world energy usage [1]

The effect of technological progress (e.g. the Newcomen engine) on energy efficiency and thus on the consumption of energy. Blue curves show fuel consumption (kg of coal per kWh) after different innovations; the red curve shows maximum thermal efficiency (%). "Watt, p" stands for "Watt, pump" and "Watt, m" for "Watt, mill". The X axis shows years. Source: Shell (2001): Energy Needs, Choices and Possibilities - Scenarios to 2050.

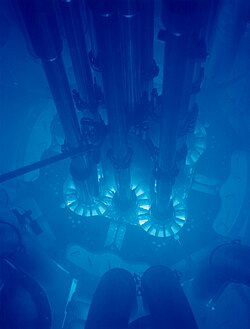

Nuclear fuel pellets and a fuel rod. Reactor-grade uranium has an energy density of 3.7x10^6 MJ/kg, compared to coal's 32.5 and diesel's 45.8. All numbers assume 100% thermal-to-electrical conversion.[2] This means reactor-grade uranium is some 100,000 times more energy dense.
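
The "some 100,000 times" figure can be checked directly from the three energy densities quoted in the caption. The sketch below is only a rough check; the dictionary and function names are made up for illustration.

```python
# Energy densities quoted in the caption above, in MJ/kg.
ENERGY_DENSITY_MJ_PER_KG = {
    "reactor-grade uranium": 3.7e6,
    "coal": 32.5,
    "diesel": 45.8,
}


def density_ratio(fuel: str, reference: str) -> float:
    """Return how many times more energy-dense `fuel` is than `reference`."""
    return ENERGY_DENSITY_MJ_PER_KG[fuel] / ENERGY_DENSITY_MJ_PER_KG[reference]


for reference in ("coal", "diesel"):
    ratio = density_ratio("reactor-grade uranium", reference)
    print(f"reactor-grade uranium vs {reference}: {ratio:,.0f}x")
```

With these inputs the ratio comes out a little above 100,000 against coal and somewhat below it against diesel, so "some 100,000 times" is a fair round number.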

| Energy use (PWh)[3] | Fossil | Nuclear | Renewable | Total |
|---|---|---|---|---|
| 1990 | 83.374 | 6.113 | 13.082 | 102.569 |
| 2000 | 94.493 | 7.857 | 15.337 | 117.687 |
| 2008 | 117.076 | 8.283 | 18.492 | 143.851 |
| Change 2000-2008 | 22.583 | 0.426 | 3.155 | 26.164 |

1 PWh = 1000 TWh

In October 2012 the IEA noted that coal accounted for half the increased energy use of the prior decade, growing faster than all renewable energy sources.[4]

In other words, since the massive growth of nuclear energy slowed practically to a stop in the late 1980s, renewable energy has done nothing to reduce the proportion of energy supplied by fossil fuels. Compare the percentage of energy generated from fossil fuels in 1990 with the latest year in the table: it has gone up, not down as environmentalists intend. Fossil fuels supplied 81.3% of all energy in 1990 and, according to the table above, 81.4% in 2008.
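
The percentages can be reproduced from the table. The following is a minimal sketch that recomputes the fossil share per year and the 2000-2008 change; the data structure and function names are hypothetical, and the numbers are simply the table values in PWh.

```python
# Table values in PWh, copied from the energy use table above.
ENERGY_USE_PWH = {
    1990: {"fossil": 83.374, "nuclear": 6.113, "renewable": 13.082},
    2000: {"fossil": 94.493, "nuclear": 7.857, "renewable": 15.337},
    2008: {"fossil": 117.076, "nuclear": 8.283, "renewable": 18.492},
}


def share_percent(year: int, source: str) -> float:
    """Share of `source` in total energy use for `year`, in percent."""
    row = ENERGY_USE_PWH[year]
    return 100.0 * row[source] / sum(row.values())


def change_pwh(source: str, start: int, end: int) -> float:
    """Absolute change in energy use of `source` between two years, in PWh."""
    return ENERGY_USE_PWH[end][source] - ENERGY_USE_PWH[start][source]


for year in sorted(ENERGY_USE_PWH):
    print(f"{year}: fossil share {share_percent(year, 'fossil'):.1f}%")

for source in ("fossil", "nuclear", "renewable"):
    print(f"{source}: {change_pwh(source, 2000, 2008):+.3f} PWh (2000-2008)")
```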

To find the newly installed and decommissioned energy sources by nameplate capacity in the US, see the EIA ES3 and ES4 tables here.[5][6]

Decommissioned nuclear power plants

- Shippingport Atomic Power Station

- Big Rock Point, 67 MW. The fifth NPP ever commissioned in the US (in 1963), it was returned to greenfield status in 2006, with the land open to visitors.[8]

- Maine Yankee, ~800 MW. The first large commercial nuclear power station decommissioned in the USA. From 1972 to 1996 it produced 119 billion kilowatt-hours of electricity before shutting down due to steam generator tube issues; the decommissioning cost was about $568 million.[9] The fuel is in casks, and the majority of the site area was converted to greenfield status.[10][11]

- Yankee Rowe

- Connecticut Yankee, 619 MW. Decommissioning finished in 2007. Great picture of the site before and after![12] The Independent Spent Fuel Storage Installation (ISFSI) is "3/4 of a mile" off site, out of view. The 43 dry casks contain reactor vessel internals as well as the plant's 1019 spent fuel assemblies.

- Rancho Seco, 913 MW. Commercial power operation began in 1975; decommissioned in 2009.

- La Crosse Boiling Water Reactor, 50 MW. Built in 1967; in SAFSTOR, decommissioning ongoing?

Almost all of the above have their spent fuel in dry cask storage on site.
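
To put the Maine Yankee figures in the list above in perspective, here is a rough back-of-the-envelope sketch. It assumes the "~800 MW" is exactly 800 MW of electrical nameplate capacity, treats 1972 to 1996 as 24 full years, and uses the $568 million figure as quoted without any inflation adjustment; the function names are illustrative only.

```python
HOURS_PER_YEAR = 8766  # average year length, including leap years


def capacity_factor(energy_kwh: float, nameplate_mw: float, years: float) -> float:
    """Fraction of the theoretical maximum output that was actually generated."""
    max_energy_kwh = nameplate_mw * 1000 * HOURS_PER_YEAR * years
    return energy_kwh / max_energy_kwh


def cost_per_kwh(cost_usd: float, energy_kwh: float) -> float:
    """Spread a one-off cost over lifetime generation, in USD per kWh."""
    return cost_usd / energy_kwh


# Figures quoted for Maine Yankee; 800 MW and 24 years are assumptions.
LIFETIME_KWH = 119e9
cf = capacity_factor(LIFETIME_KWH, nameplate_mw=800, years=24)
decom = cost_per_kwh(568e6, LIFETIME_KWH)

print(f"approximate lifetime capacity factor: {cf:.2f}")
print(f"decommissioning cost: {100 * decom:.2f} US cents per kWh generated")
```

Under these assumptions the lifetime capacity factor is about 0.71, and decommissioning works out to roughly half a US cent per kWh generated.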

What the rest of the world is planning to do with their spent nuclear fuel and the design requirements for dry cask storage vessels.[13]

What MIT thinks should be done with SNF (spent nuclear fuel): "SNF is a significant potential source of energy; however, we do not know today if LWR SNF is a waste or a valuable national resource. Because of this uncertainty, we recommend a policy that maintains fuel cycle options of long storage of SNF," of about a century. "Storage is a viable option because the quantities of SNF are small and the costs of storage are small relative to the value of electricity produced. A typical reactor produces 20 tons of SNF per year. The U.S. generates ~2000 tons of SNF per year in the process of producing ~20% of the total U.S. electricity. Total waste management costs (including SNF storage) are between 1 and 2% of the cost of electricity"... "For new reactor sites, the economically preferred option would be shipment of SNF to centralized sites after the initial cooling period, as is done in countries such as Great Britain, France, Russia, and Sweden".[14]
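
The quantities in the MIT quote lend themselves to a quick sanity check. This sketch assumes the quoted round numbers hold constant over time and takes "about a century" as exactly 100 years; the names are hypothetical.

```python
# Round numbers from the MIT quote above.
SNF_TONS_PER_REACTOR_YEAR = 20
US_SNF_TONS_PER_YEAR = 2000
STORAGE_YEARS = 100  # "about a century", taken as exactly 100 years


def implied_reactor_count() -> float:
    """Number of typical reactors implied by the U.S. annual SNF total."""
    return US_SNF_TONS_PER_YEAR / SNF_TONS_PER_REACTOR_YEAR


def accumulated_snf_tons(years: int = STORAGE_YEARS) -> int:
    """SNF accumulated over `years` at a constant generation rate, in tons."""
    return US_SNF_TONS_PER_YEAR * years


print(f"implied number of reactors: {implied_reactor_count():.0f}")
print(f"SNF accumulated over {STORAGE_YEARS} years: {accumulated_snf_tons():,} tons")
```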

"The geology of nuclear waste disposal" in 1984 (http://www.nature.com/nature/journal/v310/n5978/abs/310537a0.html), "disposal in subduction trenches and ocean sediments deserves more attention."

The Waste Isolation Pilot Plant, operational since 1999, is the underground repository to which transuranic (TRU) material from military production reactors is sent in dry casks. 4% of the waste is remote handled, while 96% is contact handled (safe for human forklift handling). Video here.[15]

"Monitored retrievable storage" appears to be the same as interim storage, as opposed to permanent storage, which is what is occurring at WIPP. ~1000 year "permanent storage" will likely be needed for fission products such as Cs-137, regardless of whether reprocessing occurs or fast breeder reactors come on line.

All transuranic waste could be burnt up in breeder reactors and/or reprocessed. Whether this happens depends on economics; it is not an issue of technical possibility.

(I have the 3 following vids in list.) Nuclear flask and dry storage flask testing: impacts with trains, and US tests including a jet fuel fire.[16]

Better video of the UK test, from reactor spent fuel pool to flask: Operation Smashhit.[17] These train tests were done to "enhance public confidence".

Better video, DOE, of spent fuel "shipping container casks" impact & burn tests.[18]

In the USA "To cover decommissioning costs, NRC requires power plant owners to set aside cleanup funds. Every two years companies must submit reports to NRC, which the commission checks to ensure the funds are safe and adequate. Money is raised through a fee on electricity ratepayers, and funds are invested, like a person’s retirement fund."..."NRC regulations allow a combination of three decommissioning options: immediate dismantlement, a delay of up to 60 years before beginning dismantling, or permanent reactor entombment in which radioactive contaminants are permanently encased on-site. To date, none of the 29 reactors being decommissioned is being entombed, NRC notes"[19]

Spent nuclear fuel/nuclear waste

link to Spent nuclear fuel shipping cask rather than Nuclear flask for the movement of nuclear fuel in countries other than the UK.

In response to the Mirror story, in the above link, about the lack of security at railway stations, where someone allegedly walked up and placed a package beside the SNF cask: a successful attack on spent nuclear fuel vessels is unlikely due to their hardened nature. Moreover, if they were encased in a stand-off spaced armor screen of double-walled polycarbonate, for example, they would be nigh impervious to attacks by individuals.

Moreover, a successful attack would be unlikely to kill anyone directly; instead it is regarded as a "weapon of mass disruption", a radiological dispersal device (RDD), most commonly termed a "dirty bomb".[20]

Terrorists are far more likely to attack soft targets with a higher chance of success, such as industrial chemical carrying train cars, chlorine, liquefied petroleum gas tanks etc.[21]

Speaking of trains, here's a blast resistant 2013 passenger train car with high speed camera video of testing, part of the SecureMetro project.[22]

The discharge isotopic composition of a fuel assembly with initial enrichment of 4.5 wt % U-235 that has accumulated 45 GWd/MTU burnup is: 93.16% uranium, 92.15% of which is U-238, 1.11% plutonium of which 0.56% is Pu-239, 0.09% minor actinides and 5.19% fission products.[23]
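
A burnup of 45 GWd/MTU can be converted into thermal energy per kilogram of initial uranium, which makes it comparable with the 3.7x10^6 MJ/kg figure quoted earlier on this page. The sketch below does that unit conversion and also turns the top-level discharge percentages into kilograms per metric ton of fuel; the names are illustrative, and only the four top-level fractions from the text are used.

```python
SECONDS_PER_DAY = 86_400
KG_PER_METRIC_TON = 1000


def burnup_to_mj_per_kg(burnup_gwd_per_mtu: float) -> float:
    """Convert burnup in GWd per metric ton of uranium to thermal MJ per kg."""
    joules_per_ton = burnup_gwd_per_mtu * 1e9 * SECONDS_PER_DAY  # W * s = J
    return joules_per_ton / KG_PER_METRIC_TON / 1e6  # J/kg -> MJ/kg


# Top-level discharge composition quoted above, in percent by mass.
DISCHARGE_PERCENT = {
    "uranium": 93.16,
    "plutonium": 1.11,
    "minor actinides": 0.09,
    "fission products": 5.19,
}


def kg_per_ton(component: str) -> float:
    """Mass of `component` in one metric ton of discharged fuel, in kg."""
    return DISCHARGE_PERCENT[component] / 100 * KG_PER_METRIC_TON


print(f"45 GWd/MTU = {burnup_to_mj_per_kg(45):.3e} MJ/kg (thermal)")
for name in DISCHARGE_PERCENT:
    print(f"{name}: {kg_per_ton(name):.1f} kg per metric ton")
```

The converted value is thermal energy, so it is only comparable with the earlier figure under that figure's own assumption of 100% thermal-to-electrical conversion.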

Contribution, in watts, of spent fuel fission product and actinide decay heat from 10 years to 10,000 years.[24] Note that after around 5–10 years the heat content is not enough to melt the fuel, so the question of which substances contribute to the high decay heat of recently scrammed nuclear fuel is still unanswered: is it the short-lived actinides or the fission products?

Increasingly, reactors are using fuel enriched to over 4% U-235 and burning it longer, to end up with less than 0.5% U-235 in the used fuel. This provides less incentive to reprocess.[25] Although there is less of an incentive according to World-nuclear, an automated reprocessing plant could theoretically remove the now high Pu-238 fraction of the spent fuel and use it for space probes and vehicles such as the Curiosity rover, although probably at a higher cost than the Np-237 + neutron route (with the Np-237 taken from spent fuel) by which it is presently produced for spacecraft. All the plutonium could be separated by a PUREX-type reprocessing method and blended in with new fuel (MOX fuel) or used in a breeder reactor. This would eliminate the proliferation problem of spent nuclear fuel (for tens of thousands of years at least) from the once-through fuel cycle of light water reactors, as the spent fuel would contain amounts of U-235 comparable to natural uranium ore and very low amounts of the fissile plutonium isotopes Pu-239 and Pu-241.

However, a question then arises. Granted, the once-through nuclear fuel cycle is now sufficiently proliferation resistant; but if reprocessing is done to get the attractive (from an energy standpoint) plutonium out and make it into MOX fuel, what is the discharge isotopic composition of that fuel? "during the burning of MOX the ratio of fissile (odd numbered) isotopes to non-fissile (even) drops from around 65% to 20%, depending on burn up. This makes any attempt to recover the fissile isotopes difficult" (MOX fuel). So it seems you end up with even more Pu-238 and Pu-240 and much less Pu-239 and Pu-241, making the spent nuclear fuel even more proliferation resistant, to the point of proliferation proof, at this stage. Efficient burning of all the plutonium, however, can only be achieved with fast breeder reactors.[26] So spent MOX fuel --> fast breeders is a possibility. However, spent LEU fuel --> fast breeders is likely more economical.

Either way, the transuranium waste streams from presently operating LWR fuel cycles, both MOX and LEU, can be burnt up in a breeder reactor operating in burner mode (as opposed to breeder, U-238 to Pu-239, mode).[27] This leaves the nuclear waste content as primarily fission products and not nuclear-weapons-usable isotopes: a major need for the future of nuclear fission power.

The fissile plutonium isotopes present in spent fuel, although dependent on a number of factors, generally do not increase significantly in percentage composition at higher burnup, while the non-fissile plutonium isotopes Pu-238, Pu-240 and Pu-242 all increase significantly.[28] Note that anything over 6 to 18 GWd/MTU burnup results in plutonium with a considerable and increasing amount of these non-fissile, and often hot, isotopes in the discharged plutonium fraction of the spent fuel, making nuclear weapon manufacture considerably difficult if not impractical. That means high-burnup spent fuel is increasingly proliferation resistant.

A typical pressurized water reactor (PWR) fuel assembly is a grid array ("assembly lattice size") of 17x17 fuel rods, approximately 14 ft long, with a mass of 700 kg. This arrangement is used in the AP1000, the US-EPR and the US-APWR; the reason is that it decreases the need for special handling, as all fuel assemblies will be identical, thus facilitating reprocessing.[29]

Some fuel rods are clad with stainless steel (SS), specifically SS-304 and SS-348H, in place of the more common and problematic zirconium-containing alloys.[30] For example, the Haddam Neck power station could be fuelled with XHN15MS and XHN15B fuel rods (page 72), which have SS and SS-304 cladding and which achieved burnups of 28,324 and 33,776 MWd/MTU respectively.[31] Further on, the document (pg 94) states that either SS-316L or Hastelloy produced fair or good cladding results for highly enriched uranium (HEU), which was previously widely used in research reactors to produce Mo-99[32] and perhaps in submarines.

Why don't they always use stainless steel as fuel rod cladding instead of zirconium? Well, to answer that you need to find out whether SS also catalyzes water electrolysis at high temperatures, and how well it contributes to the neutron economy. I believe, for example, that cobalt-60 will form in any SS containing natural cobalt-59 upon neutron bombardment, which adds to the radiological handling issues of the fuel rod. Neutron embrittlement of the material is also an issue.

Oddly, the fuel rod burnup values tabled on page 77 onwards have a massive range, from 4,907 up to 50,000 MWd/MTU. This appears to be partly explained by the low enrichment (2.23%) of the XDR06A fuel rod, although XDR06G has lower enrichment again but achieved a much higher burnup. XDR06A was used in the Dresden-1 nuclear reactor, and both XDR06A and XDR06G were Zircaloy-2 clad. What were they doing with XDR06A? Perhaps it was faulty, bursting before achieving the designed optimum burnup? Whatever the reason, the vast majority of fuel rods achieve ~30,000 MWd/MTU. I am somewhat surprised that this document is available to the public; nefarious-minded individuals could use the information to selectively target dry cask storage vessels to obtain the greatest purity of plutonium-239. Getting back to power production, the overall trend seems to be that the more modern the fuel rod, the higher the burnup, and therefore the lower the expected purity of fissile plutonium.

Sovacool biographies of living persons

https://en.wikipedia.org/w/index.php?title=Wikipedia:Biographies_of_living_persons/Noticeboard This noticeboard is intended for reporting editors who repeatedly add defamatory or libelous material to articles about living people over an extended period. However, none of the edits to his page have included anything but the status quo.

- What issues does this user have exactly with his page? Going by the talk page of the subject's article -

- http://en.wikipedia.org/wiki/Talk:Benjamin_K._Sovacool This IP address, talk, originally attempted to portray themselves as somebody who knows [Sovacool] well. Now they are claiming here to actually be Sovacool himself?

- Is it, or is it not, WP policy to try to include references for a person other than their own personal webpage? At present, the Wikipedia page for this person is almost entirely a mirror of the person's personal page. Why bother even having a Wikipedia article if it is just going to be a mirror?

- WP:BALANCE dictates that if there is a controversy surrounding a person, then this should be reflected in the article. The Yale University paper states emphatically that "life cycle GHG emissions from nuclear power are...comparable to renewable technologies." (Life Cycle Greenhouse Gas Emissions of Nuclear Electricity Generation) Sovacool, however, has instead claimed that renewable energy is "7" times more effective than nuclear power at combating climate change, and now, in their most recent paper, that wind power is "96" times more effective. This completely goes against the exhaustive findings of researchers at Yale University, the IPCC and the National Renewable Energy Laboratory. Collectively, life cycle assessment literature shows that nuclear power is similar to other renewable and much lower than fossil fuel in total life cycle GHG emissions.

- The IP user on Sovacool's talk page declares that the values of the authoritative Intergovernmental Panel on Climate Change are "unfair", and goes even further in suggesting that the IPCC's values are inferior to Sovacool's; they write "Sovacool's number is probably more complete, and accurate". This might be the IP user's opinion; however, Wikipedia policy has a preference for authoritative references, and you can't really get more authoritative than the IPCC when it comes to climate change, except for the UNCEFF. Yet this IP user talks down this authoritative organization, and also wishes to remove this material? I think the IP user also fails to understand that it is not so much the actual value Sovacool arrived at that is up for debate; a careful reading of the rebuttal of their work highlights that Sovacool did not apply his same methodology to other energy sources, and despite this omission Sovacool declared "Renewable energy is 7 times more effective at combating climate change than nuclear power". The issue raised in the criticism by Berten et al is that Sovacool declared "Wind power is 7 more effective at combating climate change than nuclear power". On the Sovacool talk page, they state that "there has been new research published in Environmental Science & Technology confirming Sovacool's numbers that should be acknowledged"; however, this paper, which the IP user presents as evidence that "Sovacool is right", is not "new research" and was printed with the assistance of well known, and highly public, anti-nuclear advocates. Benjamin K. Sovacool is the principal author, along with M. V. Ramana, Mark Z. Jacobson, Mark A. Delucchi, and Mark Diesendorf.

- As Johnfos has said, Johnfos routinely edits Wikipedia and uses Sovacool as their prime source. However, Johnfos is entirely misleading when they suggest that it is only myself who has found Sovacool, and Johnfos' promotion of his statements, to be unsuited for Wikipedia. Indeed, Johnfos has encountered another editor on Wikipedia who has found Sovacool's publications to be "silly" and unreliable: User:NPGuy. That encounter can be read here.

- For full disclosure, something that Johnfos has not provided, it must be stated that Johnfos has in the past been forced into a "retirement" for copying and pasting material from anti-nuclear websites directly into Wikipedia. They have also previously attempted to get me banned, and failed. I began editing nuclear-related pages of Wikipedia because something seriously needs to be done about the sheer amount of disinformation being spread. A quick example is here, Nuclear power#Nuclear decommissioning: in 2011 Sovacool states that "In the U.S. there are 13 reactors that have permanently shut down and are in some phase of decommissioning, and none of them have completed the process". A quick check of the Nuclear Regulatory Commission website, however, states exactly the contrary - https://forms.nrc.gov/reading-rm/doc-collections/fact-sheets/decommissioning.html

- Maine Yankee was completely decommissioned in 2005 for example.[1]

- Johnfos also falsely suggests that Sovacool is not an anti-nuclear advocate. On Sovacool's own site, he details that he supplied "legal advocacy" in India against a proposed power station. Sovacool also wrote Contesting the Future of Nuclear Power.

- Boundarylayer (talk) 02:47, 29 June 2013 (UTC)

Anti-nuclear movement.

Having once been anti-nuclear, seemingly by default, as people always tend to fear what they do not understand, I was then sucked in by the movement's sound bites and misleading statements. I've now, armed with an education, come to see them wholesale as spreaders of nothing but exaggerated, misleading fear, uncertainty and doubt. Some are more cunning in their anti-nuclear message than others, being selective or economical with the truth to fit their world-view. Frank von Hippel & Amory Lovins are two controversial proliferation talking heads.[33]

"Plutonium demilitarization, despite its intrinsic arms-control and nonproliferation value, has not gained traction; it has been caught up in an ongoing ideological dispute over nuclear power. Part of the resistance can be traced to nuclear obstructionists, who are opposed to nuclear power regardless of compensatory benefits. Others averse to nuclear power might be called nuclear abstractionists because they tend to accept some nuclear applications, while objecting to others."..."Frank von Hippel and Amory Lovins are two prominent outspoken opponents of plutonium demilitarization. Examination of their papers and presentations reveals that both tend to omit evidence and citations that contradict their position on the supposed weaponization qualities of reactor and demilitarized grades of plutonium. While short in relevant credentials, each has been actively impeding arms-control and nonproliferation measures described below."[34]

A plutonium nuclear weapon I will designate as something that can create an explosive yield greater than 1 kiloton. It is practically impossible to make such a weapon from plutonium produced in a high-burnup nuclear reactor, owing to the amount of Pu-240 and Pu-238 present.[35]

However, it is possible to make an explosion with high-burnup plutonium; the explosion will, however, have a low yield of less than 1 kiloton. Fortunately, the technical hurdles (dealing with heat generation from Pu-238, premature initiation from the spontaneous fission of Pu-240, gamma emissions, etc.) are "daunting". Therefore, the concern is "overblown" according to the authors of a paper published by the American Physical Society (who were members of the Federation of American Scientists). A rebuttal to Mark Carson's musings is presented, along with criticism of the Union of Concerned Scientists for being part of the problem with their campaign against reprocessing.[36]

The United States, for example, in 1988 considered spending a billion dollars on a laser-enrichment Special Isotope Separation facility at Idaho National Laboratory, to enrich low-grade plutonium from Hanford tailings to weapons grade, as low-grade plutonium with all its isotopic impurities is just not capable of being crafted into a weapon without undergoing isotopic enrichment.[37]

Human Research at the Bomb Tests

For continued research in this area, see the DOE Openness: Human Radiation Experiment Related Site listing.[38]

For example: The "DOE Office of International Health Programs - Marshall Islands Program homepage (http://tis.eh.doe.gov/ihp/marsh/marshall.htm) was created to provide access to documents which tell the story of U.S. nuclear weapons testing in the Marshall Islands from 1946 to 1958 and its impact on the lives of the Marshallese people, specifically the consequences of radioactive fallout on their environment and their health."

The following summary of the CONUS tests that involved humans was made after reading DOE Openness: Human Radiation Experiments: Roadmap to the Project ACHRE (Advisory Committee on Human Radiation Experiments) Report. The Defense Department's Medical Experts: Advocates of Troop Maneuvers and Human Experimentation. Chapter 10.

"The [Desert Rock] exercise was designed primarily to train and educate troops in the fighting of atomic wars. The exercise also provided an opportunity for psychological and physiological testing of the effects of the experience on the troops."[39] Bear in mind that the Desert Rock exercises coincided exactly with, and were likely highly influenced by, the then-ongoing Korean War, where there was discussion of using tactical nuclear weapons in the event that the Chinese and Soviets began to successfully push the UN completely out of the Korean peninsula.

Not to let nuclear tests go to waste, those interested in the biomedical effects of nuclear weapon detonations conducted a number of experiments while the nuclear warfare orientation training was being conducted for the majority of military participants. One of the experiments: "Twelve subjects witnessed the detonation from a darkened trailer about sixteen kilometers from the point of detonation. Each of the human "observers" placed his face in a hood; half wore protective goggles, while the other half had both eyes exposed. A fraction of a second before the explosion, a shutter opened, exposing the left eye to the flash. Two subjects incurred retinal burns, at which point the project for that test series was terminated. The final report recorded that both subjects had "completely recovered." The flashblindness experiments were the only human experiments conducted under the biomedical part of the bomb-test program and the only human experiments where immediate injury was recorded."

Consent was obtained from at least some of the flashblindness subjects. In a 1994 interview, Colonel John Pickering, who in the 1950s was an Air Force researcher with the School of Aviation Medicine, recalled participating as a subject in one of the first tests where the bomb was observed from a trailer, and his written consent was required. "When the time came for ophthalmologists to describe what they thought could or could not happen, and we were asked to sign a consent form, just as you do now in the hospital for surgery, I signed one."

Later, in the non-Desert Rock exercises of Operation Jangle, Jangle I included 8 volunteers moving into the area under a low air burst 4 hours after detonation, to assess the ability of military protective uniforms (early NBC suits) to prevent fallout contacting human tissue; these volunteers were accompanied by radiation safety monitors. The Jangle II shot included volunteers doing military maneuvers (crawling), again with protective clothing, over contaminated ground 5 days after the sub-surface detonation, along with armored tanks' air filters being tested by driving around ground zero.

Aircraft crew experiments

Flashblindness experiments were conducted on airborne crew during the Desert Rock exercises, seemingly in tandem with the ground trailer experiments. The flashblindness experiments began at the 1951 Operation Buster-Jangle, the series that included Desert Rock I, with the testing of subjects who "orbit[ed] at an altitude of 15,000 feet in an Air Force C-54 approximately 9 miles from the atomic detonation." The test subjects were exposed to three detonations during the operation, after which changes in their visual acuity were measured. Although these experiments were conducted at bomb tests that potentially exposed the subjects to ionizing radiation, the purpose of the experiment was to measure the thermal effects of the visible light flash, not the effects of ionizing radiation.

Later Operation Teapot and Operation Redwing experiments included flying aircraft through the mushroom cloud: cloud-penetration experiments minutes to hours or so after detonation. These experiments were supported by data from drone flights through the clouds, but the Air Force wanted to be 100% sure, and probably to put aircrews' worries to rest, by checking that humans would be fine as long as they just dashed through the cloud at high speed. The experiments were led by Pinson, who also flew a number of them himself; General Pinson was still alive in 1995.[40]

"What are the dangers to be encountered by the personnel who fly through the cloud?--How much radiation can they stand?--How much heat can the aircraft take?--Can the ground crews immediately service the aircraft for another flight?--If so, what precautions are necessary to insure their safety?"

Why was the Air Force interested in showing that atomic clouds could be penetrated soon after a detonation? Most important, the military wanted to assure itself that it was safe for combat pilots to fly through atomic clouds, if need arose during atomic war. But the research did not make much of a scientific contribution.

To read more go to the site and navigate to the next sections.

Nuclear power for sustainable energy[edit]

Horrible page. Low-carbon power is much cleaner and well laid out, best to link to that, although I have not added anything to that page.

Time to improve it.

A report was published in 2011 by the World Energy Council in association with Oliver Wyman, entitled Policies for the future: 2011 Assessment of country energy and climate policies, which ranks country performance according to an energy sustainability index.[41] The best performers were Switzerland, Sweden and France.

Nuclear fission fuel is inexhaustible. http://www.mcgill.ca/files/gec3/NuclearFissionFuelisInexhaustibleIEEE.pdf

Sustainable energy is the sustainable provision of energy that meets the needs of the present without compromising the ability of future generations to meet their needs. Technologies that promote sustainable energy include renewable energy sources, such as hydroelectricity, solar energy, wind energy, wave power, geothermal energy, tidal power, and nuclear power,[42] and to a lesser degree technologies designed to improve energy efficiency.

Moreover, newer nuclear reactor designs are capable of reusing what is commonly deemed "nuclear waste"/spent nuclear fuel until it is no longer (or dramatically less) dangerous, and have design features that greatly minimize the possibility of a nuclear accident. (See: Integral Fast Reactor and Passive nuclear safety)

Such fuels are produced by the electrolysis of water, or more efficiently by the thermochemical sulfur-iodine cycle, to make hydrogen that is in turn fed into the Sabatier reaction to produce methane, which may then be stored and burned later in power plants as synthetic natural gas.

Geothermal energy is produced primarily by tapping into the nuclear thermal energy created in the Earth as uranium and thorium atoms emit heat as they decay, a process known as nuclear decay. It is considered sustainable because the thermal energy is constantly replenished, and because there is an abundance of uranium and thorium in the Earth's crust.

Approximately 7% of the heat energy produced in a nuclear fission reactor comes from the nuclear decay of fission products and nuclear transmutation products, e.g. Plutonium-238, which is known as the decay heat of the reactor.[43] The majority of the energy in a reactor, around 80%, comes from the kinetic energy of the charged fission product nuclei. Essentially, the nuclear fuel becomes full of highly charged nuclei following fission events, and these like-charged particles, like little bar magnets with the same poles facing, instantly push away from each other. The kinetic energy of the charged fission products ("the little magnets") is rapidly converted into heat energy during the zillions of inelastic collisions that occur as they are repelled around within the nuclear fuel.

This is much like what you would expect if you were standing outside a hollow spherical steel tank (like an empty liquid petroleum gas tank used for cooking) that was being shot at by a machine gun from within: the skin of the tank would be hotter where it was struck by a bullet than in an area with no impacts. Multiply this by zillions of internal bullet impacts, and the steel tank would become red hot/incandescent. If the rate of impacts/collisions increased even more, it would become white hot and molten, as the kinetic energy of the bullets is converted into even more heat energy. If the rate was faster again (a prompt supercriticality chain reaction in nuclear fission's case), the internal energy of the metal shell quickly reaches, and then surpasses, that of liquid metal, going through the gas phase and finally to the plasma phase of matter, which will incandesce mostly in the X-ray region of the electromagnetic spectrum, far past the brightest visible light. That is, it equals, and then exceeds, the heat energy released by high explosive reaction products, e.g. following a TNT detonation.

See relative effectiveness factor to get a feel for just how far past it can go, depending on how long you can keep the fissile fuel from blowing itself apart when it reaches the power density of TNT. This feat is achieved with self tampering to some degree in the case of the low yield Davy Crockett, but for Fat Man high yields, heavy metal tampers and explosive detonation pressure are primarily what keep it squeezed tight as long as possible to reach much hotter internal temperatures. Finally, for even hotter temperatures, explosively driven implosive shells of fissile fuel are what keep the more efficient designs from blowing themselves apart once they achieve the internal temperature of Fat Man type devices.

In a nuclear reactor operating at peak power however, a much more subdued chain reaction occurs - a delayed criticality - in which for every nucleus fissioned, another is fissioned...and so on. There is no real net increase in the number of fissions occurring, unlike in a supercriticality event. The "delayed" part of the critical condition is in reference to the use of delayed neutrons, which are produced by the decay of some fission products, to keep the chain reaction from ending. To start a nuclear reactor, and to increase its power from zero up to say 4000 MW of thermal energy, I believe they use the inherent spontaneous fission rate and then delayed supercriticality to begin, and then to increase, the rate of fissions from zero up to the desired amount.
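The difference between a critical and a supercritical chain reaction can be sketched with a simple generation-by-generation model. This is only an illustration: the multiplication factor `k` (fissions caused by each fission in the next generation) and the example values 1.0 and 1.01 are assumptions chosen for the sketch, not figures for any real reactor.

```python
def fissions_per_generation(k, generations, start=1.0):
    """Relative number of fissions in each generation.

    k is the (assumed, illustrative) multiplication factor: how many
    fissions each fission causes in the following generation.
    """
    counts = [start]
    for _ in range(generations):
        counts.append(counts[-1] * k)
    return counts


# k = 1: critical, every fission is replaced by exactly one more.
steady = fissions_per_generation(1.0, 5)

# k slightly above 1: supercritical, the fission rate grows each generation.
growing = fissions_per_generation(1.01, 5)

print(steady)
print(growing)
```

With `k` equal to 1 the list stays flat, which is the "no real net increase" condition described above; any `k` above 1 gives geometric growth.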

Many people, including Greenpeace founder and first member Patrick Moore,[44][45][46][47] George Monbiot,[48] Bill Gates,[49] Richard Branson,[50][51][52] environmentalists and authors Stewart Brand, Gwyneth Cravens and James Lovelock,[53] NASA climate scientist James Hansen,[54][55][56] who was the recipient of the 2010 Sophie Prize for Environmental and Sustainable Development,[57] and David J. C. MacKay,[58] the chief scientific adviser of the UK Department of Energy and Climate Change, have all either specifically classified nuclear power as sustainable energy, or described conventional nuclear power as safer than alternatives, faster at addressing dangerous climate change than other technologies, and essential to power a prosperous modern world. However, critics of nuclear power, namely Greenpeace,[60][61] an organization now classified as a disinformation agent,[59] disagree.

Nuclear power, with as of 2007 a 20% share of U.S. electricity production, is the largest deployed technology among current low-carbon energy sources.[62]

Nuclear power, as of 2010, also provides two-thirds (2/3) of the twenty-seven-nation European Union's low-carbon power.[63]

According to a publication by the National Academy of Sciences,[62] presently operating nuclear power plants have a 20% share of U.S. electricity production, and therefore nuclear power is the single largest deployed low-carbon electricity generating technology in the country.[64]

In 2007, nuclear power supplied around one-seventh of the world's electricity.[65] Numerous studies and assessments (e.g., by the Intergovernmental Panel on Climate Change,[66] International Atomic Energy Agency,[67] and International Energy Agency)[68] suggest that as part of a portfolio of low-carbon energy technologies, nuclear power will continue to play a role in reducing greenhouse gas emissions.

Nuclear power

There are two sources of nuclear power: nuclear fission, which is used in all current nuclear power plants and is fueled by the metal uranium (or sometimes thorium), and nuclear fusion, which is the energy source that makes the stars shine, including our sun, and is fueled by hydrogen isotopes such as deuterium. There are also many conceptual combinations that combine the advantages of each, such as using fusion to burn up conventional spent nuclear fuel from fission reactors in nuclear fusion-fission hybrid reactors. However, only nuclear fission has as of yet provided a steady state output with a high capacity factor and a high energy return on energy invested.

As of 2013 it remains impractical to generate electricity economically from fusion reactions for use on earth, as higher than break-even fusion reactors are not yet available, with the possible sole caveat of PACER technology.[69] However, a sustainable, break-even, steady state, proof of concept fusion reactor, the International Thermonuclear Experimental Reactor (ITER), is presently being built, and is expected to begin producing more output energy than input by approximately 2025.

Moreover, pure nuclear fusion, if achieved, would become the most sustainable energy source known to humankind, instantly more sustainable than all other sustainable technologies which ultimately require absorbing solar radiation from the sun as their input energy source. This is because there is enough economically extractable fusion fuel in the form of deuterium, naturally found in sea water, to potentially power the entire earth, at the present energy demand level, for longer than the predicted life span of our parent star, the sun. Furthermore, as fusion energy emitted from the sun is ultimately the primary driver of the majority of what are commonly considered conventional sustainable energy power sources - from the solar heating of the air molecules that makes wind power possible, to the evaporation of sea water that lifts water to great heights and is eventually tapped by hydro power - the efficiency of pure fusion energy would be easily higher than all other energy sources, when it is achieved.

In terms of presently operating nuclear power technology, conventional fission power is sometimes also referred to as sustainable and renewable, as it emits a comparable amount of total life cycle greenhouse gas emissions, as wind power, but classifying nuclear power as sustainable is controversial amongst some commentators, often due to reasons irrelevant to the question of whether or not it is a sustainable energy source. As Navid Chowdhury of Stanford University noted:[70]

The IRENA (International Renewable Energy Agency), decision that it will not support nuclear energy programs because its a long, complicated process, it produces waste and is relatively risky, proves that their decision has nothing to do with having a sustainable supply of fuel.

The technology to economically extract uranium from the single largest known source of uranium in the world, the oceans, is as of 2012 approaching economic parity with uranium produced from conventional uranium resources: sea water extraction, at 2012 technological levels, is predicted to cost ~$300/kg, in comparison to conventionally mined uranium, which routinely sells for ~$60–100/kg. Until seawater extracted uranium can directly compete with conventionally produced uranium, the lower cost of conventional mining will ensure that it remains the primary source of the world's uranium supply. The world supply of uranium not only fuels the fleet of 437 fission power reactors worldwide, but also the world fleet of research reactors, which continue to supply the majority of the world's demand for medical radiopharmaceuticals, and the fleet of nuclear marine propulsion reactors.
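As a rough check on how far seawater extraction still is from parity, the prices quoted above can be compared directly. The sketch below uses only the ~$300/kg and ~$60–100/kg figures from the text; the variable and function names are illustrative.

```python
# Figures quoted in the text above, in US$ per kg of uranium.
SEAWATER_COST = 300.0      # predicted seawater extraction cost, 2012 technology
MINED_PRICE_LOW = 60.0     # low end of the conventionally mined price range
MINED_PRICE_HIGH = 100.0   # high end of the conventionally mined price range


def cost_ratio(seawater_cost, mined_price):
    """How many times more expensive seawater uranium is than mined uranium."""
    return seawater_cost / mined_price


ratio_vs_high = cost_ratio(SEAWATER_COST, MINED_PRICE_HIGH)
ratio_vs_low = cost_ratio(SEAWATER_COST, MINED_PRICE_LOW)

print(f"Seawater uranium costs {ratio_vs_high:.0f}x to {ratio_vs_low:.0f}x the mined price")
```

On these figures seawater uranium is about three to five times the price of mined uranium.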

However, despite not yet being commercialized, the continued advancement in the economics of extracting uranium from sea water is largely removing previous concerns about the long term sustainability of conventional fission nuclear power.[71] This is a sustainability issue that may have been encountered after a few centuries of continued consumption of the known land reserves of uranium, but only in a scenario where one assumes that humankind would not continue to find more conventional uranium reserves in the Earth's crust, and therefore add to the inventory of known reserves as the century unfolds, and that humanity would not improve upon the efficiency of present fission reactor technology either.

Moreover, an appraisal of the sustainability of conventional fission nuclear power by a team at MIT in 2003, updated in 2009 (which is notably before the 2012 advancement in uranium extraction from sea water), stated that:[72]

Most commentators conclude that a half century of unimpeded growth is possible, especially since resources costing several hundred dollars per kilogram($/kg) would also be economically usable...We believe that the world-wide supply of uranium ore is sufficient to fuel the deployment of 1000 reactors over the next half century.

There is, according to the OECD in 2008, excluding oceans, enough uranium in known conventional resources to power the current world fleet of 437+ nuclear reactors, which provides ~15% of the world's electricity, for 670 years at the present economically recoverable uranium rate. This is from combining all the known uranium reserves in total conventional mine resources and phosphate ores, and assuming that all the uranium will be burnt up in the most common present design of reactors and not burnt up in the more efficient generation III or generation IV reactors. Furthermore, the OECD noted that this is all 670 years of uranium recoverable at present 2008 prices, which are between 60 and 100 US$/kg of Uranium.[73]

The OECD have further noted in 2008 that, with and without the likely improvements in reactor uranium burn up efficiency:

Even if the nuclear industry expands significantly, sufficient fuel is available for centuries. If advanced breeder reactors could be designed in the future to efficiently utilize recycled or depleted uranium and all actinides, then the resource utilization efficiency would be further improved by an additional factor of eight.

Finally, the OECD have determined that with a pure fast reactor fuel cycle, with a burn up of, and recycling of, all the uranium and waste actinides, there is 160,000 years' worth of uranium in known conventional resources and phosphate ore.[74] Again, this excludes the largest, but presently unconventional, source of uranium - seawater.
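The two OECD figures quoted above (670 years with present reactors, 160,000 years with a pure fast reactor fuel cycle) can be compared with some simple arithmetic. The fleet-size scaling in this sketch is my own assumption, not something the OECD states: it assumes every reactor consumes uranium at the same rate as today's average reactor, so that supply lifetime is inversely proportional to fleet size.

```python
# Figures quoted in the text above.
ONCE_THROUGH_YEARS = 670        # known resources, present reactor designs
FAST_REACTOR_YEARS = 160_000    # same resources, pure fast reactor fuel cycle
CURRENT_FLEET = 437             # reactors in the present world fleet


def fuel_cycle_gain(fast_years=FAST_REACTOR_YEARS, base_years=ONCE_THROUGH_YEARS):
    """Factor by which the fast reactor cycle stretches the same resource."""
    return fast_years / base_years


def years_for_fleet(reactors, base_years=ONCE_THROUGH_YEARS, base_fleet=CURRENT_FLEET):
    """Supply lifetime for a different fleet size.

    ASSUMPTION (not from the OECD source): consumption scales linearly
    with the number of reactors.
    """
    return base_years * base_fleet / reactors


print(f"Fast reactor cycle gain: about {fuel_cycle_gain():.0f}x")
print(f"1000 present-design reactors: about {years_for_fleet(1000):.0f} years")
```

Under that linear assumption, a fleet of 1000 present-design reactors would still have close to three centuries of known conventional resources.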

As noted by the OECD, nuclear power has the potential to also significantly expand its renewability from a fuel and waste perspective, such as by the use of more breeder reactors, which breed more fissile fuel than they consume while also producing power(the breeding process is possible due to the reactor design making use of its excess neutrons to burn fertile fuel, much as fissile fuel is burnt in the present Light water reactors).

However, significant challenges presently exist in expanding the role of breeder reactors, and therefore also for nuclear fission to become truly renewable. One of the main issues is competing economically with other reactor designs that do not breed more fuel than they consume, but instead are dedicated solely to burning fuel. It is for this reason that, although they have been successfully built and run, uranium breeder reactors have not yet become economically competitive with light water reactor designs, and they may stay that way until, or unless, uranium scarcity occurs and uranium prices therefore increase.[75]

See talk page history of sustainable energy to see what I was replying to -

- Good catch man, predictably, wikipedia has a lot of anti-science types editing and removing material, especially anything possibly construed as pro-nuclear. I recently added a lot of detail to the nuclear power section, to give its proper amount of weight(as worldwide it is the largest sustainable energy source currently fielded). However I did not see the thorium material you reference(someone probably removed it again), if it was good stuff, could you add it again? I mean, what sort of sustainable energy page on an encyclopedia, that regards itself as respectable, doesn't include a proper treatise on Fusion? It is after all going to be the most sustainable of energy sources once it is achieved and commercialized. It'll out shine the sun in life span! so it is the epitome of sustainable, how much more sustainable do you need?

BoundaryLayer edit blanked by kim [3]

- Kim as other editors here are aware, the nuclear power section has been under consistent vandalism by numerous anti-nuclear elements. (1) Changing 'some' into 'many' is entirely consistent with the list of people who regard nuclear power as sustainable. They are not some fringe group but include NASA climate scientist James Hansen, the only fringe group in respect to nuclear power and the only 'controversy' you speak of comes from biased anti-scientific organizations - Greenpeace.

- (2) Nuclear power is subject to more half-truths and propaganda (again largely due to the likes of Greenpeace and their disinformation campaigns) than any other source of power. For this reason it requires a substantial amount of scientific weight in the article to put their anti-science propaganda to rest, and to address the reality of whether fission and fusion are sustainable. Another reason for the nuclear power section is that it is the most sustainable form of power humanity presently knows of: long after the sun dies, fusion and even some fission technologies will still have more than ample amounts of fuel to keep on going, i.e. long after the wind stops blowing and your solar panels stop working, for example. So are you really arguing the most sustainable form of power shouldn't get more than a few measly lines? If this encyclopedia wasn't infected with so much anti-nuclear bias and the sustainability technologies were ranked in descending order of sustainability, Nuclear power would be on the top. A fact that is inescapable, and you know it. Another reason for having a fleshed out nuclear power section is that, as there is plenty of uranium and thorium around, fission is sustainable for thousands of years. Not many people are aware of this, and many believe the opposite, so it is necessary to detail just exactly how much is available, which is dependent on which reactor technology is used.

- (3) You remove the sulfur-iodine cycle for reasons that are inconsistent, as nanotechnology solar panels feature in the article, - and similarly they're not really in use either Kim. So you have just demonstrated a monumental bias that has resulted in yet more censorship vandalism on this article.

- Please desist from this biased censorship conduct.

- Thank you. Boundarylayer

- Kim, please provide one scientific source that supports your POV that it is controversial to regard nuclear power as sustainable. I think you'll find that btw it is you who is not mainstream.

- MIT have stated that there is plenty of fuel for 1000 reactors to be built over the next half century. -Which I reference in the nuclear power section, a section which you have consistently censored.

- Patrick Moore more than once regards nuclear power as sustainable in the video that I provided in the section, so yes I can use that video.

- Richard Branson - The construction of modern nuclear reactors was a step that was already agreed upon in the effort to build a new system powered by sustainable energy. http://www.entrepreneur.com/article/220496

- James Hansen says it is very unfortunate that “a number of nations have indicated that they’re going to phase out nuclear power… The truth is, what we should do is use the more advanced nuclear power. Even the old nuclear power is much safer than the alternatives.”

- http://theenergycollective.com/jcwinnie/60103/social-and-decision-sciences-and-engineering-and-public-policy

- More than once he has described nuclear power as sustainable without specifically saying the word. You know this, but you're just filibustering for the sake of it at this stage.

- James Hansen is also a recipient of the 2010 Sophie Prize for Environmental and Sustainable Development. So you should ask yourself, why they would give him a sustainable development prize if he was not for sustainable energy sources.

- Read about the prize here - https://researchfunding.duke.edu/detail.asp?OppID=5241

- You ramble on quite a bit here - but you fail to realize this simple fact - this article is about sustainable energy! Yes Kim, that means that the article should include long term, after the sun dies, sustainable energy. Kim, which power source would we still have running if the sun were to stop heating the earth tomorrow? Say for example, from a volcanic winter (like the 1800s Krakatoa etc.), or indeed when the sun dies naturally in a few billion years from now? If you answer nuclear power, as any logical thinking person would, you just proved my point. Nuclear Fusion is the most sustainable source of power.

- As for Solar energy, I think I'll let Mr. Gates respond to this one. - Gates argues that nuclear power is still safer than all other energy options, rich countries aren’t spending enough on R&D, and installing solar panels on your roof is not helping to reduce CO2 emissions. It’s merely “cute.” http://www.wired.com/magazine/2011/06/mf_qagates/

- Oh, and Solar energy is not sustainable; PV definitely is not. - http://www.newscientist.com/article/dn16550-why-sustainable-power-is-unsustainable.html

- So once again you're just spreading your own misunderstandings.

- Boundarylayer (talk) 20:40, 6 March 2013 (UTC)

- You are abusing your position and you know it. There are many contradictions and falsehoods now stated in the article, once again, thanks only to you.

- Boundarylayer

- It is due to their own interpretation, and the lens through which they see the world, that they have assigned scare quotes to nuclear power as sustainable. Moreover, they have still yet to supply a single scientific reference to state nuclear power is unsustainable. They suggest they know what Branson meant and have the gist etc. etc. but I'm the one reading into what he says. Finally, if someone defines (see the definitions section in the article, if you don't know what the word means) nuclear power as sustainable, without actually saying the word, then they have described a sustainable energy source. As for WP:WEIGHT, Tidal power, Solar PV etc. etc. all get far too much weight in the article, seeing as they supply negligible amounts of energy and Solar PV isn't sustainable at all. I will supply some peer reviewed papers in the coming days that state nuclear power is more sustainable than Solar PV.

- http://gabe.web.psi.ch/pdfs/lca/Dones_EcoBalance_2006.pdf Sustainability of energy sources. Figure 2. Note that nuclear power's overall combined Economic, Environmental & Social sustainability score is higher than Solar PV, and its sustainability score would be higher still, that is, comparable to hydro power, if the hypothetical nuclear proliferation (Social sustainability) concern did not count against nuclear power's overall sustainability value. Due to Nuclear power being ranked higher than Solar PV in sustainability, it logically follows that by the WP:WEIGHT criteria, nuclear power (fission) should get a greater amount of weight in the article than Solar PV, and the nuclear power section should also be positioned closer to the top of the article, rather than pushed down to the very end of the article in the very anti-nuclear POV manner that it currently is. As you wrote yourself - Write about nuclear proportionally to how the literature of sustainable energy is focusing on it. (WP:WEIGHT)

- Another source is http://www.withouthotair.com/c24/page_161.shtml A book titled Sustainable energy by David J. C. MacKay who is the chief scientific adviser to the Department of Energy and Climate Change. Page 162 spends a great deal of time talking about the quantity of Nuclear fuel. Fuel, after all, being the major requirement for sustainable energy considerations. The book is a little old, and many advances in uranium extraction from sea water have occurred since publication. Therefore this is why I spent the majority of the nuclear power section discussing fuel supply.

- I have also noted that this entire Sustainable energy article spends most of its time talking about Renewable energy(an entirely separate topic) rather than sustainable energy. If I had the time I would fix this too, but as the nuclear power section was under-represented, and indeed, entirely misrepresented with, need I remind you, constant censorship blank out's by anti-nuclear editors, the nuclear power section demanded a greater degree of urgent attention. So it has nothing to do with advocacy, but everything to do with writing an encyclopedia.

- Nothing but opinion there friend, therefore nothing but anti-scientific reasons, I asked for scientific sources, with scientific reasoning to back them up. Instead you have linked me to a plethora of opinion pieces, none of which offer a single quantifiable reason why nuclear fission power should be classified as unsustainable.

- Moreover some of those 'references' are truly laughable - the 'Socialist register', an anti-capitalist opinion publication, and others with titles such as industry propaganda...are you serious? Whereas, on the contrary, my sources have scientifically crunched the numbers, and have ranked energy sources by their sustainability. http://gabe.web.psi.ch/pdfs/lca/Dones_EcoBalance_2006.pdf Sustainability of energy sources. Figure 2. http://www.withouthotair.com/c24/page_161.shtml A book titled Sustainable energy by David J. C. MacKay, which also discusses the quantity of nuclear waste, all of it fitting into a few swimming pools. I'd like to see the Wind or Solar industry manage to produce such a comparably small amount of waste per unit of energy generated.

- So I'm still waiting. Where is the science to back up the opinion that nuclear power is unsustainable? All I can find is that nuclear power is sustainable, more so than Solar PV for that matter.

- Indeed even some Concentrated solar power (CSP) technologies are presently unsustainable due to high water usage in areas where fresh water is scarce. Costs of reducing water use of concentrating solar power to sustainable levels: Scenarios for North Africa http://www.sciencedirect.com/science/article/pii/S0301421511003429 Scaling up CSP with wet cooling from ground water will be unsustainable in North Africa, and the paper generally discusses how dry cooling technology produces lower efficiencies and therefore CSP in Africa won't be economical, even under optimistic calculations, until ~2030.

- Solar energy is not all that sustainable; PV definitely is questionable. - http://www.newscientist.com/article/dn16550-why-sustainable-power-is-unsustainable.html

- Boundarylayer (talk) 14:49, 14 March 2013 (UTC)

A report was published in 2011 by the World Energy Council in association with Oliver Wyman, entitled Policies for the future: 2011 Assessment of country energy and climate policies, which ranks country performance according to an energy sustainability index.[41] The best performers were Switzerland, Sweden and France. All have from ~50% to 80% nuclear power in their electricity grid. There is no mention of (100% renewable) Iceland, and no mention of Brazil either, in the top three countries.

Renewable energy[edit]


Renewable energy sources, that derive their energy from the sun, either directly or indirectly, are expected to be capable of supplying humanity energy for almost another 1 billion years, at which point the predicted increase in heat from the sun is expected to make the surface of the Earth too hot for liquid water to exist.[76][77]

26 March 2013 edit that was removed. Added some info on the average rate of supply from the OECD, taken from their factbook (4.6% in 1971 and 7.6% in 2010).

According to the OECD factbook 2011-2012, worldwide, Iceland (85.6%) and Brazil (45.8%) exploit the greatest proportion of renewable energy to supply their total energy requirements (including electricity and other energy needs), with the world average percentage at 13.1%. Other countries in the OECD with a high total energy supply from renewable sources are New Zealand (38.6%), Norway (37.3%), Sweden (32.7%), Austria (26%), Finland (24.9%), Portugal (24%), Chile (22.7%), Switzerland (18.8%), Denmark (18.8%), Canada (16.5%) and Estonia (14.4%).[78] Worldwide, non-OECD nations where renewable energy supplies a higher percentage of total energy needs than the OECD average (7.6%) are Brazil (45.8%), Indonesia (34.4%), India (26.1%) and China (11.9%).[78][79]

For all OECD countries taken as a whole, the contribution of renewables to total energy supply increased from 4.8% in 1971 to 7.6% in 2010. In general, the contribution of renewables to the energy supply in non-OECD countries is higher than in OECD countries.[79] The world average percentage of total energy supplied from renewable energy (including OECD and non-OECD countries) was 13.1% in 2010.[78]
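To put the OECD change in perspective, it helps to separate the change in percentage points from the relative growth of the share. The sketch below uses only the 4.8% and 7.6% figures quoted above; the function names are illustrative.

```python
# OECD renewable share of total energy supply, as quoted in the text above.
SHARE_1971 = 4.8   # percent
SHARE_2010 = 7.6   # percent


def point_change(old, new):
    """Change in percentage points."""
    return new - old


def relative_change(old, new):
    """Relative growth of the share itself, as a fraction of the old share."""
    return (new - old) / old


points = point_change(SHARE_1971, SHARE_2010)
relative = relative_change(SHARE_1971, SHARE_2010)

print(f"Change: {points:.1f} percentage points, {relative:.0%} relative growth")
```

So over roughly four decades the OECD renewable share rose by under three percentage points, even though the share itself grew by more than half.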

debate[edit]

There is an ongoing renewable energy debate. The debate on biofuels includes the food vs fuel dilemma and the indirect land use change impacts of biofuels. The debate on hydropower installations usually revolves around the footprint of dam floodplains, with for example the Three Gorges dam, the largest renewable electricity source in the world, having displaced 1.3 million people,[80][81] and garnered environmental criticism.[82] The debate on new renewables, such as wind and solar, is usually much less intense, and focuses on their intermittent supply of electricity, higher cost of electricity, and a small but growing community opposition to the industrialized footprint of large solar and wind farm installations.[83] Renewable energy accidents with large losses of life include the Banqiao dam failure and the inhalation of particulate matter from the burning of biomass.[84]

Biomass on the renewable energy page[edit]

The proportion of truly renewable biomass in use is uncertain, as for example peat, one of the largest sources of biomass, is sometimes regarded as a renewable source of energy. However, due to peat's extraction rate in industrialized countries far exceeding its slow regrowth rate of 1mm per year,[85] and due to it being reported that peat regrowth takes place only in 30-40% of peatlands,[86] there is considerable controversy with this renewable classification.[87] Organizations tasked with assessing climate change mitigation methods differ on the subject: the UNFCCC classify peat as a fossil fuel due to the thousand plus year length of time for peat to re-accumulate after harvesting,[87] and another organization affiliated with the United Nations also classified peat as a fossil fuel.[88] However, the Intergovernmental Panel on Climate Change (IPCC) has begun to classify peat as a "slow-renewable" fuel,[89] with this also being the classification used by many in the peat industry.[87] Further controversy surrounding the classification of all biomass as "renewable" centers around the fact that, depending on the plant source, it can take from 2 to 100 years for different sources of plant energy to regrow, such as the difference between fast growing switch grass and slow growing trees. Therefore, due to the high emission intensity of plant material, researchers have suggested that if the biomass source takes longer than 20 years to regrow, it should not be regarded as renewable from a climate change mitigation standpoint.[90]
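The "thousand plus year" re-accumulation time follows directly from the 1mm per year regrowth rate. In this sketch the regrowth rate and the 20 year threshold come from the text above, while the 1 metre harvested depth is a hypothetical value chosen only for illustration.

```python
REGROWTH_MM_PER_YEAR = 1.0     # peat regrowth rate quoted in the text above
RENEWABLE_LIMIT_YEARS = 20     # regrowth threshold suggested by researchers (text above)


def regrowth_years(depth_mm, rate_mm_per_year=REGROWTH_MM_PER_YEAR):
    """Years needed to re-accumulate a harvested peat layer of the given depth."""
    return depth_mm / rate_mm_per_year


# HYPOTHETICAL example: a 1 metre (1000 mm) layer of peat is harvested.
years = regrowth_years(1000)
print(f"{years:.0f} years to regrow, limit is {RENEWABLE_LIMIT_YEARS} years")
print("renewable under the 20 year test:", years <= RENEWABLE_LIMIT_YEARS)
```

Even a layer only a few centimetres deep would exceed the 20 year threshold at that regrowth rate.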

Solar power edit[edit]

Thermoelectric, or "thermovoltaic", devices convert a temperature difference between dissimilar materials into an electric current. First proposed as a method to store solar energy by solar pioneer Mouchout in the 1800s,[91] thermoelectrics reemerged in the Soviet Union during the 1930s. Under the direction of Soviet scientist Abram Ioffe, a concentrating system was used to thermoelectrically generate power for a 1 hp engine.[92] Thermogenerators are also used in the US space program, where, in the form of radioisotope thermoelectric generators powered by the heat source plutonium-238, they serve as an energy conversion technology for powering deep space missions such as the Mars Curiosity rover, Cassini, Galileo and Viking. Research in this area of thermogenerators, which can use any heat source, is focused on raising the efficiency of these devices from 7–8% to 15–20%.[93]

Wind power, birds, material intensity & actual CO2 savings

Birds: To start with, here is a sad story, which also featured in the Telegraph "paper" in June 2013. The White-throated Needletail, the world's fastest flying bird, drew a big crowd of ornithologists in Britain hoping to see the rare bird. Shortly after it arrived on the island, they allegedly saw the bird killed by a wind turbine.[94]

Page 81 has public questions about proposed wind farms; some are more plausible than others. Worth taking a look at.[95]

Nuclear safety

originally for the article but not now, intro, if you reinsert.

To put nuclear power in context with other low-carbon sources of dependable power: catastrophic terrorist attacks are also conceivable against hydroelectric dams,[99][100] and, depending on location, could result in a death toll comparable to that of the worst conceivable nuclear attack. Furthermore, none of the numerous terrorist attacks on nuclear plants has succeeded in breaching the reactor. Attacks on hydro plants, on the other hand, have succeeded in compromising dams, with the 2010 Baksan hydroelectric power station attack being the most recent successful terrorist attack on a hydroelectric power plant.

nuclear power development and safety

The Three Mile Island accident's molten core got exactly 15 millimeters on its way to "China" before the core froze at the bottom of the reactor pressure vessel.[101]

Unlike the 1979 Three Mile Island accident, the much more serious 1986 Chernobyl accident did not increase regulations affecting Western reactors, because the RBMK reactor that caused the accident was of a design incomparable to all Western reactors: it is graphite moderated and water cooled, with the water also acting somewhat as a neutron poison.[102] This combination of graphite moderation and water cooling is not found in any other reactor design in the world.[103] Due largely to these material selections, the Chernobyl RBMK design, used only in the former Soviet Union, is unlike all Western designs, which, as of 2013, are primarily light water reactors. Precipitating the Chernobyl accident were the reactor design and specific material selections, which resulted in what is known as a large positive void coefficient, a nuclear engineering term meaning that fission reactions, and therefore the heat they produce, increase rapidly when coolant is lost. This is in direct contrast to the negative void coefficient of most Western designs, where fission reactions cease when coolant is lost.[104] Along with these reactor design flaws, the RBMK also had a slow SCRAM speed, lacked the "robust" containment buildings that are built as standard in all U.S. designs, and also lacked the 6 inch thick steel reactor vessel.[105][106][107] Approximately 10 of these RBMK reactors are still in use as of 2013. However, following the accident and the end of the Cold War, changes were made in the former Soviet Union, including improvements to the reactors themselves (use of a safer enrichment of uranium) and to the control system (prevention of disabling safety systems), to reduce the possibility of a duplicate accident, with some RBMKs now in operation reportedly achieving a negative void coefficient.[108][109][110]
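The sign convention behind the void coefficient can be illustrated with a toy calculation. This is a deliberately simplified linear sketch, not a reactor physics model: the function names, the coefficient values and the size of the coolant loss are all assumptions chosen only to show the sign of the feedback, and none of them is measured data for the RBMK or any other reactor.

```python
def reactivity_change(void_coefficient: float, void_fraction_change: float) -> float:
    """Toy linear model: reactivity change = void coefficient * change in void fraction."""
    return void_coefficient * void_fraction_change


def power_trend(delta_reactivity: float) -> str:
    """Translate the sign of a reactivity change into a qualitative trend."""
    if delta_reactivity > 0:
        return "fission rate rises"
    if delta_reactivity < 0:
        return "fission rate falls"
    return "no change"


# Illustrative coefficients in arbitrary units (assumed, not reactor data).
POSITIVE_VOID = 1.0    # same sign as the design described for the RBMK
NEGATIVE_VOID = -1.0   # same sign as most Western designs

# Assumed scenario: coolant is lost, so the void fraction increases by 0.5.
coolant_loss = 0.5

for label, alpha in [("positive void coefficient", POSITIVE_VOID),
                     ("negative void coefficient", NEGATIVE_VOID)]:
    print(label, "->", power_trend(reactivity_change(alpha, coolant_loss)))
```

With the same loss of coolant, the positive coefficient adds reactivity while the negative coefficient removes it, which is the contrast the paragraph draws between the two design families.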

to be added.

Lancet. 1988 Nov 19;2(8621):1185-6. International Physicians for the Prevention of Nuclear War: Messiahs of the nuclear age[111]

Leaders of the Nobel Peace Prize winning group International Physicians for the Prevention of Nuclear War (IPPNW) claim that their struggle against the nuclear threat may be ‘one of the significant contributions of our profession to the survival of humankind’ (Lown, B., ‘Looking back, seeing ahead’, Lancet, 1988; ii: 203-4). Citing their ‘unique knowledge and expertise’ as qualifications for working for the abolition of nuclear weapons, IPPNW urges physicians to educate the public about nuclear war and to offer sound prescriptions for nuclear war prevention (Lown, B., ‘Looking back, seeing ahead’, Lancet, 1988; ii: 203-4).

In science, good intentions and noble sentiments do not exempt one's work from critical scrutiny. Because the advocacy of IPPNW is cloaked in scientific authority, it should be (but rarely is) subjected to the usual rigors of scientific criticism.

IPPNW has indeed played a major role in educating the public about nuclear war, and consequently in gaining widespread acceptance of fallacious beliefs, some of which are repeated in the Lancet (Lown, B., ‘Looking back, seeing ahead’, Lancet, 1988; ii: 203-4). For example, Lown speaks of nuclear winter as a “discovery” rather than as a hypothesis. IPPNW has pointedly ignored the criticism (Penner, J. E., ‘Uncertainties in the smoke source term for “nuclear winter” studies’, Nature, 1986; 324: 222-226; Seitz, R., ‘Siberian fire as “nuclear winter” guide’, Nature, 1986; 323: 116-117; Seitz, R., ‘In from the cold: “nuclear winter” melts down’, National Interest, 1986; 2(1): 3-17; Chester, C. V., et al., ‘A preliminary review of the TTAPS nuclear winter scenario’, Oak Ridge, TN: Oak Ridge National Laboratory, 1984, report ORNL/TM-9223) of the original nuclear winter report, as well as the later, more sophisticated studies that have debunked the doomsday scenario ...

In referring to the Chernobyl disaster, Lown (Lown, B., ‘Looking back, seeing ahead’, Lancet, 1988; ii: 203-4) states that the odds of a meltdown were estimated to be 1 in 10,000 years, according to Soviet Life. (A mere meltdown would have been a trivial event in comparison with the graphite-fueled fire that actually occurred.) Yet American engineers recognized the danger of reactors with a positive void coefficient (like the Chernobyl reactor) as early as 1950 (Teller, E., ‘Better a shield than a sword: perspectives on defense and technology’, New York: Free Press, 1987). Why did the Soviets choose an unsafe design for a reactor built quite recently? One possible explanation is that such reactors can be refueled while in operation, permitting the production of weapons-grade plutonium as a byproduct (Cohen, B. L., ‘The nuclear reactor accident at Chernobyl, USSR’, Am. J. Phys, 1987; 55: 1076-1083).

uranium mining and nuclear spill comparable to three mile island in curies released.

Check the references.

Conventional Uranium mining via opencast mining and underground mining is largely being replaced by In-situ leaching technology, a method of extraction that does not produce the same occupational hazards, or mine tailings, as conventional mining.

The nuclear industry did rely on poorly ventilated uranium mining practices in times past, essentially before the advent of commercial nuclear power in the late 1960s, with a non-zero number of accidents and fatalities, primarily due to radon inhalation.[112] However, physical mining of uranium has increasingly been replaced by in-situ leaching extraction technology, and regulation is in place to ensure the use of high volume ventilation technology in confined space uranium mining, with both largely eliminating occupational exposure and mining deaths.[113][114][115] Moreover, all historic uranium mining deaths are negligible contributors to nuclear power's fatality rate per unit of energy generated, as this is dominated by a single event, the Chernobyl disaster.[116]

Reactor-grade plutonium

Recent edits by I.

- Reactor-grade plutonium is found in spent nuclear fuel that a nuclear reactor has irradiated (burnup/burnt up) for years before removal from the reactor, in contrast to the low burnup of weeks or months that is commonly required to produce weapons-grade plutonium. The long time in the reactor (high burnup) of reactor-grade plutonium leads to transmutation of much of the fissile, relatively long half-life isotope 239Pu into a number of other isotopes of plutonium that are less fissile or more radioactive.

- Thermal-neutron reactors (today's nuclear power stations) can reuse reactor-grade plutonium only to a limited degree as MOX fuel, and only for a second cycle; fast-neutron reactors, of which fewer than a handful are operating today, can use reactor-grade plutonium, or any other actinide material, indefinitely as a means to reduce the transuranium content of spent nuclear fuel.

- The degree to which typical high burn-up reactor-grade plutonium is less useful than weapons-grade plutonium for building nuclear weapons is somewhat debated. Many sources argue that the maximum probable yield would border on a fizzle, in the range of 0.2 to 2 kilotons in a Fat Man type device, because premature initiation from the spontaneous fission of Pu-240 would ensure a low explosive yield in such a device. That estimate assumes that the non-trivial issue of dealing with the heat generated by the higher content of non-weapons-usable Pu-238 could be overcome. The surmounting of both issues has been described as "daunting" hurdles for a Fat Man era implosion design.[117][35][34]

- Others disagree on theoretical grounds,[118][119][120] arguing that it would be "relatively easy" for a well funded entity with access to fusion boosting tritium and expertise to overcome the problem of predetonation created by Pu-240. They also argue that a remote manipulation facility could be used to assemble the highly radioactive, gamma ray emitting bomb components, coupled with a means of cooling the pit during storage to prevent the plutonium charge contained in the pit from melting, and a design that kept the implosion mechanism's high explosives from being degraded by the pit's heat.

- No information in the public domain suggests that any well funded entity has ever achieved, or seriously pursued creating, a nuclear weapon with the same isotopic composition as modern, high burn-up, reactor-grade plutonium. All nuclear weapon states have taken the more conventional path to nuclear weapons: uranium enrichment and the production of low burn-up, weapons-grade plutonium in reactors capable of operating as production reactors. The isotopic content of reactor-grade plutonium created by the most common commercial power reactor design, the pressurized water reactor, has never directly been considered for weapons use.

- In April 2012, thirty-one countries had civil nuclear power plants,[121] nine of which had nuclear weapons, and almost every nuclear weapons state began producing weapons before building commercial nuclear power plants. The re-purposing of civilian nuclear industries for military purposes would be a breach of the Non-proliferation treaty.

- (break in text not altered by I) For example, the spent nuclear fuel of a generic pressurized water reactor, following a typical Generation II reactor burnup of 45 GWd/MTU, contains 1.11% plutonium, and 0.56% of the fuel is Pu-239, which corresponds to a Pu-239 content of 50.5% of the plutonium.[23]

- The odd-numbered fissile plutonium isotopes present in spent nuclear fuel, such as Pu-239, decrease significantly as a percentage of the total composition of all plutonium isotopes (which together made up 1.11% of the fuel in the above example) as higher and higher burnups take place, while the non-fissile plutonium isotopes, e.g. Pu-238 and Pu-240, all increase in percentage.[122]

- nuclear terrorism target (of high burn-up plutonium).

- Aum Shinrikyo, which succeeded in developing sarin and VX nerve gas, is regarded as having lacked the technical expertise to develop, or steal, a nuclear weapon. Similarly, Al Qaeda was exposed to numerous scams involving the sale of radiological waste and other non-weapons-grade material. This experience possibly led terrorists to conclude that nuclear acquisition is too difficult and too costly to be worth pursuing.[123]
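The pressurized water reactor burnup example in the list above (1.11% plutonium, 0.56% Pu-239, giving 50.5%) is a simple ratio, and can be reproduced as follows. This is a minimal sketch: the function name is made up for illustration, and both percentages are read as shares of the spent fuel, which is the reading under which the quoted 50.5% follows.

```python
def isotope_share_of_plutonium(isotope_pct_of_fuel: float, plutonium_pct_of_fuel: float) -> float:
    """Percentage of the total plutonium that is made up by a single isotope."""
    if plutonium_pct_of_fuel <= 0:
        raise ValueError("plutonium content must be positive")
    return 100.0 * isotope_pct_of_fuel / plutonium_pct_of_fuel


# Figures quoted in the text for 45 GWd/MTU of burnup.
share = isotope_share_of_plutonium(isotope_pct_of_fuel=0.56, plutonium_pct_of_fuel=1.11)
print(f"Pu-239 share of plutonium: {share:.1f}%")  # Pu-239 share of plutonium: 50.5%
```

The same ratio falls at higher burnup, because the Pu-239 numerator shrinks relative to the total plutonium as the other isotopes build up.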

Design and construction of nuclear explosives based on normal reactor-grade plutonium is difficult and unreliable, but was demonstrated in 1962 using plutonium from Magnox reactors.[124]

Much popular concern about possible weapons proliferation arises from considering the fissile materials themselves. For instance, in relation to the plutonium contained in spent fuel discharged each year from the world's commercial nuclear power reactors, it is correctly but misleadingly asserted that "only a few kilograms of plutonium are required to make a bomb". Furthermore, no nation is without enough indigenous uranium to construct a few weapons (however, that uranium would have to be enriched).

Plutonium is a substance of varying properties depending on its source. It consists of several different isotopes, including Pu-238, Pu-239, Pu-240, and Pu-241. All of these are plutonium but not all are fissile – only Pu-239 and Pu-241 can undergo fission in a normal reactor. Plutonium-239 by itself is an excellent nuclear fuel. It has also been used extensively for nuclear weapons because it has a relatively low spontaneous fission rate and a low critical mass. Consequently, plutonium-239, with only a few percent of the other isotopes present, is often called "weapons-grade" plutonium. This was used in the Nagasaki bomb in 1945 and in many other nuclear weapons.