Bell's theorem

Bell's theorem is a term encompassing a number of closely related results in physics, all of which determine that quantum mechanics is incompatible with local hidden-variable theories, given some basic assumptions about the nature of measurement. "Local" here refers to the principle of locality, the idea that a particle can only be influenced by its immediate surroundings, and that interactions mediated by physical fields cannot propagate faster than the speed of light. "Hidden variables" are putative properties of quantum particles that are not included in quantum theory but nevertheless affect the outcome of experiments. In the words of physicist John Stewart Bell, for whom this family of results is named, "If [a hidden-variable theory] is local it will not agree with quantum mechanics, and if it agrees with quantum mechanics it will not be local."[1]

The term is broadly applied to a number of different derivations, the first of which was introduced by Bell in a 1964 paper titled "On the Einstein Podolsky Rosen Paradox". Bell's paper was a response to a 1935 thought experiment that Albert Einstein, Boris Podolsky and Nathan Rosen proposed, arguing that quantum physics is an "incomplete" theory.[2][3] By 1935, it was already recognized that the predictions of quantum physics are probabilistic. Einstein, Podolsky and Rosen presented a scenario that involves preparing a pair of particles such that the quantum state of the pair is entangled, and then separating the particles to an arbitrarily large distance. The experimenter has a choice of possible measurements that can be performed on one of the particles. When they choose a measurement and obtain a result, the quantum state of the other particle apparently collapses instantaneously into a new state depending upon that result, no matter how far away the other particle is. This suggests that either the measurement of the first particle somehow also interacted with the second particle faster than the speed of light, or that the entangled particles had some unmeasured property which pre-determined their final quantum states before they were separated. Therefore, assuming locality, quantum mechanics must be incomplete, as it cannot give a complete description of the particle's true physical characteristics. In other words, quantum particles, like electrons and photons, must carry some property or attributes not included in quantum theory, and the uncertainties in quantum theory's predictions would then be due to ignorance or unknowability of these properties, later termed "hidden variables".

Bell carried the analysis of quantum entanglement much further. He deduced that if measurements are performed independently on the two separated particles of an entangled pair, then the assumption that the outcomes depend upon hidden variables within each half implies a mathematical constraint on how the outcomes on the two measurements are correlated. This constraint would later be named the Bell inequality. Bell then showed that quantum physics predicts correlations that violate this inequality. Consequently, the only way that hidden variables could explain the predictions of quantum physics is if they are "nonlocal", which is to say that somehow the two particles are able to interact instantaneously no matter how widely they become separated.[4][5]

Multiple variations on Bell's theorem were put forward in the following years, introducing other closely related conditions generally known as Bell (or "Bell-type") inequalities. The first rudimentary experiment designed to test Bell's theorem was performed in 1972 by John Clauser and Stuart Freedman.[6] More advanced experiments, known collectively as Bell tests, have been performed many times since. Often, these experiments have had the goal of "closing loopholes", that is, ameliorating problems of experimental design or set-up that could in principle affect the validity of the findings of earlier Bell tests. To date, Bell tests have consistently found that physical systems obey quantum mechanics and violate Bell inequalities, which is to say that the results of these experiments are incompatible with any local hidden-variable theory.[7][8]

The exact nature of the assumptions required to prove a Bell-type constraint on correlations has been debated by physicists and by philosophers. While the significance of Bell's theorem is not in doubt, its full implications for the interpretation of quantum mechanics remain unresolved.

Theorem

There are many variations on the basic idea, some employing stronger mathematical assumptions than others.[9] Significantly, Bell-type theorems do not refer to any particular theory of local hidden variables, but instead show that quantum physics violates general assumptions behind classical pictures of nature. The original theorem proved by Bell in 1964 is not the most amenable to experiment, and it is convenient to introduce the genre of Bell-type inequalities with a later example.[10]

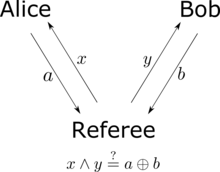

Hypothetical characters Alice and Bob stand in widely separated locations. Their colleague Victor prepares a pair of particles and sends one to Alice and the other to Bob. When Alice receives her particle, she chooses to perform one of two possible measurements (perhaps by flipping a coin to decide which). Denote these measurements by $A_0$ and $A_1$. Both $A_0$ and $A_1$ are binary measurements: the result of $A_0$ is either $+1$ or $-1$, and likewise for $A_1$. When Bob receives his particle, he chooses one of two measurements, $B_0$ and $B_1$, which are also both binary.

Suppose that each measurement reveals a property that the particle already possessed. For instance, if Alice chooses to measure $A_0$ and obtains the result $+1$, then the particle she received carried a value of $+1$ for a property $a_0$.[note 1] Consider the following combination:

$$a_0 b_0 + a_0 b_1 + a_1 b_0 - a_1 b_1 = (a_0 + a_1) b_0 + (a_0 - a_1) b_1.$$

Because both $a_0$ and $a_1$ take the values $\pm 1$, either $a_0 = a_1$ or $a_0 = -a_1$. In the former case, $(a_0 - a_1) b_1 = 0$, while in the latter case, $(a_0 + a_1) b_0 = 0$. So, one of the terms on the right-hand side of the above expression will vanish, and the other will equal $\pm 2$. Consequently, if the experiment is repeated over many trials, with Victor preparing new pairs of particles, the absolute value of the average of the combination across all the trials will be less than or equal to 2. No single trial can measure this quantity, because Alice and Bob can only choose one measurement each, but on the assumption that the underlying properties exist, the average value of the sum is just the sum of the averages for each term. Using angle brackets to denote averages,

$$|\langle A_0 B_0 \rangle + \langle A_0 B_1 \rangle + \langle A_1 B_0 \rangle - \langle A_1 B_1 \rangle| \leq 2.$$

This is a Bell inequality, specifically the CHSH inequality.
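The counting argument behind the bound of 2 can be checked by brute force. The following is a minimal sketch, not part of the original derivation: it enumerates every assignment of $\pm 1$ to four pre-existing properties (named `a0`, `a1`, `b0`, `b1` here for illustration) and collects the values that the combination $a_0 b_0 + a_0 b_1 + a_1 b_0 - a_1 b_1$ can take.

```python
from itertools import product


def chsh_combination(a0, a1, b0, b1):
    """Value of a0*b0 + a0*b1 + a1*b0 - a1*b1 for one pair of particles."""
    return a0 * b0 + a0 * b1 + a1 * b0 - a1 * b1


# Enumerate all 16 assignments of +1/-1 to the four hypothetical properties.
values = {chsh_combination(*v) for v in product((+1, -1), repeat=4)}

print(sorted(values))  # [-2, 2]
```

Since every single assignment gives $+2$ or $-2$, any average over many trials, however the assignments are distributed, must lie between $-2$ and $2$.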

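Quantum mechanics can violate the CHSH inequality, as follows. Victor prepares a pair of qubits which he describes by the Bell state

$$|\psi\rangle = \frac{|0\rangle \otimes |1\rangle - |1\rangle \otimes |0\rangle}{\sqrt{2}},$$

where $|0\rangle$ and $|1\rangle$ are the eigenstates of the Pauli operator $\sigma_z$. For suitable choices of Alice's and Bob's measurements, the combination of averages bounded by the CHSH inequality reaches $2\sqrt{2}$, which exceeds the bound of 2.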
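A numerical check of the quantum violation can be sketched with NumPy. Two things here are assumptions rather than statements from the text above: the Bell state is taken to be the singlet $(|01\rangle - |10\rangle)/\sqrt{2}$, and the observables are one standard textbook choice, $A_0 = \sigma_z$, $A_1 = \sigma_x$, $B_0 = -(\sigma_x + \sigma_z)/\sqrt{2}$, $B_1 = (\sigma_x - \sigma_z)/\sqrt{2}$.

```python
import numpy as np

# Pauli matrices and computational basis states.
sx = np.array([[0, 1], [1, 0]], dtype=complex)
sz = np.array([[1, 0], [0, -1]], dtype=complex)
ket0 = np.array([1, 0], dtype=complex)
ket1 = np.array([0, 1], dtype=complex)

# Assumed Bell state: the singlet (|01> - |10>) / sqrt(2).
psi = (np.kron(ket0, ket1) - np.kron(ket1, ket0)) / np.sqrt(2)

# Assumed measurement choices (a standard textbook selection).
A0, A1 = sz, sx
B0 = -(sx + sz) / np.sqrt(2)
B1 = (sx - sz) / np.sqrt(2)


def correlation(A, B):
    """Expectation value <psi| A (x) B |psi> for the two-qubit state psi."""
    return float(np.real(psi.conj() @ np.kron(A, B) @ psi))


chsh = (correlation(A0, B0) + correlation(A0, B1)
        + correlation(A1, B0) - correlation(A1, B1))

print(chsh)  # approximately 2.828, i.e. 2*sqrt(2)
```

Each of the four correlations has magnitude $1/\sqrt{2}$, and the signs line up so that the combination equals $2\sqrt{2}$, above the bound of 2 that holds for pre-existing properties.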

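The CHSH inequality can also be thought of as a game in which Alice and Bob try to coordinate their actions.[14][15] Victor prepares two bits, $x$ and $y$, independently and at random. He sends bit $x$ to Alice and bit $y$ to Bob. Alice and Bob win if they return answer bits $a$ and $b$ to Victor, satisfying

$$x y = a + b \mod 2.$$

Equivalently, Alice and Bob win if the logical AND of $x$ and $y$ equals the logical XOR of $a$ and $b$.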
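The best classical winning probability in this game can be found by exhaustive search. The sketch below assumes the winning condition $a \oplus b = x \wedge y$ and uniformly random inputs; it considers only deterministic strategies, which is sufficient because any strategy using shared randomness is a mixture of deterministic ones. The quantum optimum $\cos^2(\pi/8)$ is quoted as a known result rather than derived here.

```python
from itertools import product
import math


def win_probability(alice, bob):
    """Winning probability of a deterministic strategy pair.

    alice[x] is Alice's answer to input bit x; bob[y] is Bob's answer to y.
    The inputs (x, y) are uniformly distributed over the four possibilities.
    """
    wins = sum((alice[x] ^ bob[y]) == (x & y)
               for x, y in product((0, 1), repeat=2))
    return wins / 4


# A deterministic strategy is a pair of answers, one for each input bit.
strategies = list(product((0, 1), repeat=2))

best_classical = max(win_probability(a, b)
                     for a in strategies for b in strategies)
quantum_optimum = math.cos(math.pi / 8) ** 2

print(best_classical)   # 0.75
print(quantum_optimum)  # approximately 0.854
```

No classical strategy wins more than three of the four input combinations, whereas players sharing an entangled pair can win with probability of about 85%.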

Bell (1964)

Bell's 1964 paper points out that under restricted conditions, local hidden-variable models can reproduce the predictions of quantum mechanics. He then demonstrates that this cannot hold true in general.[3] Bell considers a refinement by David Bohm of the Einstein–Podolsky–Rosen (EPR) thought experiment. In this scenario, a pair of particles are formed together in such a way that they are described by a spin singlet state (which is an example of an entangled state). The particles then move apart in opposite directions. Each particle is measured by a Stern–Gerlach device, a measuring instrument that can be oriented in different directions and that reports one of two possible outcomes, representable by $+1$ and $-1$. The configuration of each measuring instrument is represented by a unit vector, and the quantum-mechanical prediction for the correlation between two detectors with settings $\vec{a}$ and $\vec{b}$ is

$$P(\vec{a}, \vec{b}) = -\vec{a} \cdot \vec{b}.$$

In particular, if the orientation of the two detectors is the same ($\vec{a} = \vec{b}$), then the outcome of one measurement is certain to be the negative of the outcome of the other, giving $P(\vec{a}, \vec{a}) = -1$. And if the orientations of the two detectors are orthogonal ($\vec{a} \cdot \vec{b} = 0$), then the outcomes are uncorrelated, and $P(\vec{a}, \vec{b}) = 0$. Bell proves by example that these special cases can be explained in terms of hidden variables, then proceeds to show that the full range of possibilities involving intermediate angles cannot.

Bell posited that a local hidden-variable model for these correlations would explain them in terms of an integral over the possible values of some hidden parameter $\lambda$:

$$P(\vec{a}, \vec{b}) = \int d\lambda \, \rho(\lambda) A(\vec{a}, \lambda) B(\vec{b}, \lambda),$$

where $\rho(\lambda)$ is a probability density function, and the functions $A(\vec{a}, \lambda) = \pm 1$ and $B(\vec{b}, \lambda) = \pm 1$ give the responses of the two detectors.
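Bell's original inequality for such a model, $1 + P(\vec{b}, \vec{c}) \geq |P(\vec{a}, \vec{b}) - P(\vec{a}, \vec{c})|$, can be compared with the singlet prediction $P(\vec{a}, \vec{b}) = -\vec{a} \cdot \vec{b}$. The sketch below uses one illustrative choice of coplanar settings (an assumption made for this example): $\vec{a}$ and $\vec{b}$ orthogonal, with $\vec{c}$ at 45° between them.

```python
import numpy as np


def singlet_correlation(a, b):
    """Quantum prediction P(a, b) = -a . b for unit vectors a and b."""
    return -float(np.dot(a, b))


def unit(theta):
    """Unit vector in the x-y plane at angle theta (radians) from the x axis."""
    return np.array([np.cos(theta), np.sin(theta), 0.0])


# Illustrative settings: a and b orthogonal, c halfway between them.
a, b, c = unit(0.0), unit(np.pi / 2), unit(np.pi / 4)

lhs = abs(singlet_correlation(a, b) - singlet_correlation(a, c))
rhs = 1 + singlet_correlation(b, c)

print(lhs, rhs)   # approximately 0.707 and 0.293
print(lhs <= rhs)  # False: the inequality is violated
```

For these settings the left-hand side is about 0.707 while the right-hand side is about 0.293, so the quantum correlations cannot come from an integral of the local hidden-variable form.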

Bell's 1964 theorem requires the possibility of perfect anti-correlations: the ability to make a probability-1 prediction about the result from the second detector, knowing the result from the first. This is related to the "EPR criterion of reality", a concept introduced in the 1935 paper by Einstein, Podolsky, and Rosen. This paper posits: "If, without in any way disturbing a system, we can predict with certainty (i.e., with probability equal to unity) the value of a physical quantity, then there exists an element of reality corresponding to that quantity."[2]

GHZ–Mermin (1990)

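Daniel Greenberger, Michael A. Horne, and Anton Zeilinger presented a four-particle thought experiment in 1990, which David Mermin then simplified to use only three particles.[17][18] In this thought experiment, Victor generates a set of three spin-1/2 particles described by the quantum state

$$|\psi\rangle = \frac{1}{\sqrt{2}}\left(|000\rangle - |111\rangle\right),$$

where $|0\rangle$ and $|1\rangle$ are eigenvectors of the Pauli operator $\sigma_z$. Victor sends one particle each to Alice, Bob, and Charlie, who each measure either $\sigma_x$ or $\sigma_y$ on their particle.

If Alice, Bob, and Charlie all perform the $\sigma_x$ measurement, then the product of their results would be $-1$. This value can be deduced from

$$(\sigma_x \otimes \sigma_x \otimes \sigma_x)|\psi\rangle = -|\psi\rangle,$$

which holds because $\sigma_x$ exchanges $|0\rangle$ and $|1\rangle$.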
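The GHZ contradiction can be sketched numerically. The code below assumes the state $(|000\rangle - |111\rangle)/\sqrt{2}$ and local measurements of $\sigma_x$ or $\sigma_y$ (both are assumptions of this example). It first evaluates the four relevant products of outcomes, then searches for pre-assigned local values $x_A, x_B, x_C, y_A, y_B, y_C = \pm 1$ that reproduce all four at once.

```python
from functools import reduce
from itertools import product

import numpy as np

sx = np.array([[0, 1], [1, 0]], dtype=complex)
sy = np.array([[0, -1j], [1j, 0]], dtype=complex)

# Assumed GHZ state (|000> - |111>) / sqrt(2) in the computational basis.
ghz = np.zeros(8, dtype=complex)
ghz[0b000] = 1 / np.sqrt(2)
ghz[0b111] = -1 / np.sqrt(2)


def expectation(*ops):
    """Expectation value of the tensor product of three single-qubit operators."""
    op = reduce(np.kron, ops)
    return float(np.real(ghz.conj() @ op @ ghz))


results = {
    "xxx": expectation(sx, sx, sx),
    "xyy": expectation(sx, sy, sy),
    "yxy": expectation(sy, sx, sy),
    "yyx": expectation(sy, sy, sx),
}
print(results)  # xxx is -1; xyy, yxy and yyx are +1 (up to rounding)

# Search for local values (xA, xB, xC, yA, yB, yC) matching all four products.
consistent = [
    v for v in product((+1, -1), repeat=6)
    if v[0] * v[4] * v[5] == +1    # xA * yB * yC
    and v[3] * v[1] * v[5] == +1   # yA * xB * yC
    and v[3] * v[4] * v[2] == +1   # yA * yB * xC
    and v[0] * v[1] * v[2] == -1   # xA * xB * xC
]
print(len(consistent))  # 0
```

The search comes back empty because multiplying the three mixed constraints gives $x_A x_B x_C = +1$ (each $y$ value appears squared), which contradicts the quantum prediction of $-1$.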

This thought experiment can also be recast as a traditional Bell inequality or, equivalently, as a nonlocal game in the same spirit as the CHSH game.[19] In it, Alice, Bob, and Charlie receive bits $x, y, z$ from Victor, promised to always have an even number of ones, that is, $x \oplus y \oplus z = 0$, and send him back bits $a, b, c$. They win the game if $a, b, c$ have an odd number of ones for all inputs except $x = y = z = 0$, when they need to have an even number of ones. That is, they win the game iff $a \oplus b \oplus c = x \lor y \lor z$. With local hidden variables the highest probability of victory they can have is 3/4, whereas using the quantum strategy above they win it with certainty. This is an example of quantum pseudo-telepathy.
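The classical limit of 3/4 for this game can be confirmed by exhaustive search. The sketch assumes the winning condition $a \oplus b \oplus c = x \lor y \lor z$, inputs drawn uniformly from the four bit triples with an even number of ones, and deterministic local strategies (mixtures of these cannot do better).

```python
from itertools import product

# Allowed inputs: bit triples with an even number of ones.
inputs = [(x, y, z) for x, y, z in product((0, 1), repeat=3)
          if (x ^ y ^ z) == 0]

# A deterministic local strategy maps each player's input bit to an answer bit.
strategies = list(product((0, 1), repeat=2))


def win_probability(alice, bob, charlie):
    """Fraction of allowed inputs on which a XOR b XOR c equals x OR y OR z."""
    wins = sum((alice[x] ^ bob[y] ^ charlie[z]) == (x | y | z)
               for x, y, z in inputs)
    return wins / len(inputs)


best_classical = max(win_probability(a, b, c)
                     for a in strategies
                     for b in strategies
                     for c in strategies)

print(inputs)          # [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
print(best_classical)  # 0.75
```

The reason no strategy wins all four cases is a parity mismatch: XOR-ing the four winning conditions makes every answer bit appear twice on the left, giving 0, while the right-hand sides XOR to 1.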

Kochen–Specker theorem (1967)

In quantum theory, orthonormal bases for a Hilbert space represent measurements that can be performed upon a system having that Hilbert space. Each vector in a basis represents a possible outcome of that measurement.[note 2] Suppose that a hidden variable $\lambda$ exists, so that knowing the value of $\lambda$ would imply certainty about the outcome of any measurement. Given a value of $\lambda$, each measurement outcome (that is, each vector in the Hilbert space) is either impossible or guaranteed. A Kochen–Specker configuration is a finite set of vectors made of multiple interlocking bases, with the property that a vector in it will always be impossible when considered as belonging to one basis and guaranteed when taken as belonging to another. In other words, a Kochen–Specker configuration is an "uncolorable set" that demonstrates the inconsistency of assuming a hidden variable $\lambda$ can be controlling the measurement outcomes.[24]: 196–201

Free will theorem

The Kochen–Specker type of argument, using configurations of interlocking bases, can be combined with the idea of measuring entangled pairs that underlies Bell-type inequalities. This was noted beginning in the 1970s by Kochen,[25] Heywood and Redhead,[26] Stairs,[27] and Brown and Svetlichny.[28] As EPR pointed out, obtaining a measurement outcome on one half of an entangled pair implies certainty about the outcome of a corresponding measurement on the other half. The "EPR criterion of reality" posits that because the second half of the pair was not disturbed, that certainty must be due to a physical property belonging to it.[29] In other words, by this criterion, a hidden variable must exist within the second, as-yet unmeasured half of the pair. No contradiction arises if only one measurement on the first half is considered. However, if the observer has a choice of multiple possible measurements, and the vectors defining those measurements form a Kochen–Specker configuration, then some outcome on the second half will be simultaneously impossible and guaranteed.

This type of argument gained attention when an instance of it was advanced by John Conway and Simon Kochen under the name of the free will theorem.[30][31][32] The Conway–Kochen theorem uses a pair of entangled qutrits and a Kochen–Specker configuration discovered by Asher Peres.[33]

Quasiclassical entanglement

As Bell pointed out, some predictions of quantum mechanics can be replicated in local hidden-variable models, including special cases of correlations produced from entanglement. This topic has been studied systematically in the years since Bell's theorem. In 1989, Reinhard Werner introduced what are now called Werner states, joint quantum states for a pair of systems that yield EPR-type correlations but also admit a hidden-variable model.[34] Werner states are bipartite quantum states that are invariant under unitaries of symmetric tensor-product form:

$$\rho_{AB} = (U \otimes U) \rho_{AB} (U^\dagger \otimes U^\dagger).$$
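The invariance under $U \otimes U$ can be illustrated for two qubits. The sketch below rests on assumptions not stated above: it uses the common parameterization of a two-qubit Werner state as a mixture of the singlet projector and the maximally mixed state, with a hypothetical mixing weight `p`, and it draws a unitary from the QR decomposition of a random complex matrix.

```python
import numpy as np

rng = np.random.default_rng(seed=0)


def random_unitary(dim):
    """A unitary matrix obtained from the QR decomposition of a random matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


def two_qubit_werner_state(p):
    """Assumed form: p * |singlet><singlet| + (1 - p) * identity / 4."""
    singlet = np.array([0, 1, -1, 0], dtype=complex) / np.sqrt(2)
    return p * np.outer(singlet, singlet.conj()) + (1 - p) * np.eye(4) / 4


rho = two_qubit_werner_state(0.4)
U = random_unitary(2)
UU = np.kron(U, U)

# Apply the same unitary to both qubits and compare with the original state.
rotated = UU @ rho @ UU.conj().T
invariant = bool(np.allclose(rotated, rho))

print(invariant)  # True
```

The check succeeds for any `p` because $U \otimes U$ maps the singlet to itself up to a phase, so its projector is unchanged, and the identity term is unchanged by any unitary.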

In 2004, Robert Spekkens introduced a toy model that starts with the premise of local, discretized degrees of freedom and then imposes a "knowledge balance principle" that restricts how much an observer can know about those degrees of freedom, thereby making them into hidden variables. The allowed states of knowledge ("epistemic states") about the underlying variables ("ontic states") mimic some features of quantum states. Correlations in the toy model can emulate some aspects of entanglement, like monogamy, but by construction, the toy model can never violate a Bell inequality.[35][36]

History

Background

The question of whether quantum mechanics can be "completed" by hidden variables dates to the early years of quantum theory. In his 1932 textbook on quantum mechanics, the Hungarian-born polymath John von Neumann presented what he claimed to be a proof that there could be no "hidden parameters". The validity and definitiveness of von Neumann's proof were questioned by Hans Reichenbach, in more detail by Grete Hermann, and possibly in conversation though not in print by Albert Einstein.[note 3] (Simon Kochen and Ernst Specker rejected von Neumann's key assumption as early as 1961, but did not publish a criticism of it until 1967.[42])

Einstein argued persistently that quantum mechanics could not be a complete theory. His preferred argument relied on a principle of locality:

- Consider a mechanical system constituted of two partial systems A and B which have interaction with each other only during limited time. Let the ψ function before their interaction be given. Then the Schrödinger equation will furnish the ψ function after their interaction has taken place. Let us now determine the physical condition of the partial system A as completely as possible by measurements. Then the quantum mechanics allows us to determine the ψ function of the partial system B from the measurements made, and from the ψ function of the total system. This determination, however, gives a result which depends upon which of the determining magnitudes specifying the condition of A has been measured (for instance coordinates or momenta). Since there can be only one physical condition of B after the interaction and which can reasonably not be considered as dependent on the particular measurement we perform on the system A separated from B it may be concluded that the ψ function is not unambiguously coordinated with the physical condition. This coordination of several ψ functions with the same physical condition of system B shows again that the ψ function cannot be interpreted as a (complete) description of a physical condition of a unit system.[43]

The EPR thought experiment is similar, also considering two separated systems A and B described by a joint wave function. However, the EPR paper adds the idea later known as the EPR criterion of reality, according to which the ability to predict with probability 1 the outcome of a measurement upon B implies the existence of an "element of reality" within B.[44]

In 1951, David Bohm proposed a variant of the EPR thought experiment in which the measurements have discrete ranges of possible outcomes, unlike the position and momentum measurements considered by EPR.[45] The year before, Chien-Shiung Wu and Irving Shaknov had successfully measured polarizations of photons produced in entangled pairs, thereby making the Bohm version of the EPR thought experiment practically feasible.[46]

By the late 1940s, the mathematician George Mackey had grown interested in the foundations of quantum physics, and in 1957 he drew up a list of postulates that he took to be a precise definition of quantum mechanics.[47] Mackey conjectured that one of the postulates was redundant, and shortly thereafter, Andrew M. Gleason proved that it was indeed deducible from the other postulates.[48][49] Gleason's theorem provided an argument that a broad class of hidden-variable theories are incompatible with quantum mechanics.[note 4] More specifically, Gleason's theorem rules out hidden-variable models that are "noncontextual". Any hidden-variable model for quantum mechanics must, in order to avoid the implications of Gleason's theorem, involve hidden variables that are not properties belonging to the measured system alone but also dependent upon the external context in which the measurement is made. This type of dependence is often seen as contrived or undesirable; in some settings, it is inconsistent with special relativity.[5][51] The Kochen–Specker theorem refines this statement by constructing a specific finite subset of rays on which no such probability measure can be defined.[5][52]

Tsung-Dao Lee came close to deriving Bell's theorem in 1960. He considered events where two kaons were produced traveling in opposite directions, and came to the conclusion that hidden variables could not explain the correlations that could be obtained in such situations. However, complications arose due to the fact that kaons decay, and he did not go so far as to deduce a Bell-type inequality.[note 5]

Bell's publications

Bell chose to publish his theorem in a comparatively obscure journal because it did not require page charges, in fact paying the authors who published there at the time. Because the journal did not provide free reprints of articles for the authors to distribute, however, Bell had to spend the money he received to buy copies that he could send to other physicists.[53] While the articles printed in the journal themselves listed the publication's name simply as Physics, the covers carried the trilingual version Physics Physique Физика to reflect that it would print articles in English, French and Russian.[41]: 92–100, 289

Prior to proving his 1964 result, Bell also proved a result equivalent to the Kochen–Specker theorem (hence the latter is sometimes also known as the Bell–Kochen–Specker or Bell–KS theorem). However, publication of this theorem was inadvertently delayed until 1966.[5][54] In that paper, Bell argued that because an explanation of quantum phenomena in terms of hidden variables would require nonlocality, the EPR paradox "is resolved in the way which Einstein would have liked least."[54]

Experiments

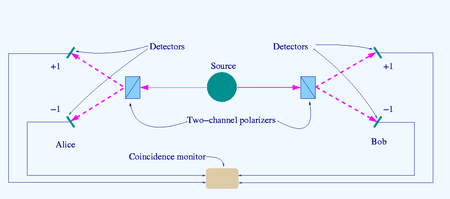

The source S produces pairs of "photons", sent in opposite directions. Each photon encounters a two-channel polariser whose orientation (a or b) can be set by the experimenter. Emerging signals from each channel are detected and coincidences of four types (++, −−, +− and −+) counted by the coincidence monitor.

In 1967, the unusual title Physics Physique Физика caught the attention of John Clauser, who then discovered Bell's paper and began to consider how to perform a Bell test in the laboratory.[55] Clauser and Stuart Freedman would go on to perform a Bell test in 1972.[56][57] This was only a limited test, because the choice of detector settings was made before the photons had left the source. In 1982, Alain Aspect and collaborators performed the first Bell test to remove this limitation.[58] This began a trend of progressively more stringent Bell tests. The GHZ thought experiment was implemented in practice, using entangled triplets of photons, in 2000.[59] By 2002, testing the CHSH inequality was feasible in undergraduate laboratory courses.[60]

In Bell tests, there may be problems of experimental design or set-up that affect the validity of the experimental findings. These problems are often referred to as "loopholes". The purpose of the experiment is to test whether nature can be described by local hidden-variable theory, which would contradict the predictions of quantum mechanics.

The most prevalent loopholes in real experiments are the detection and locality loopholes.[61] The detection loophole is opened when only a small fraction of the particles (usually photons) are detected in the experiment, making it possible to explain the data with local hidden variables by assuming that the detected particles are an unrepresentative sample. The locality loophole is opened when the detections are not done with a spacelike separation, making it possible for the result of one measurement to influence the other without contradicting relativity. In some experiments there may be additional defects that make local-hidden-variable explanations of Bell test violations possible.[62]

Although both the locality and detection loopholes had been closed in different experiments, a long-standing challenge was to close both simultaneously in the same experiment. This was finally achieved in three experiments in 2015.[63][64][65][66][67] Regarding these results, Alain Aspect writes that "no experiment ... can be said to be totally loophole-free," but he says the experiments "remove the last doubts that we should renounce" local hidden variables, and refers to examples of remaining loopholes as being "far fetched" and "foreign to the usual way of reasoning in physics."[68]

These efforts to experimentally validate violations of the Bell inequalities would later result in Clauser, Aspect, and Anton Zeilinger being awarded the 2022 Nobel Prize in Physics.[69]

Interpretations

Reactions to Bell's theorem have been many and varied. Maximilian Schlosshauer, Johannes Kofler, and Zeilinger write that Bell inequalities provide "a wonderful example of how we can have a rigorous theoretical result tested by numerous experiments, and yet disagree about the implications."[70]

The Copenhagen interpretation

Copenhagen-type interpretations generally take the violation of Bell inequalities as grounds to reject the assumption often called counterfactual definiteness or "realism", which is not necessarily the same as abandoning realism in a broader philosophical sense.[71][72] For example, Roland Omnès argues for the rejection of hidden variables and concludes that "quantum mechanics is probably as realistic as any theory of its scope and maturity ever will be".[73]: 531 Likewise, Rudolf Peierls took the message of Bell's theorem to be that, because the premise of locality is physically reasonable, "hidden variables cannot be introduced without abandoning some of the results of quantum mechanics".[74][75]

This is also the route taken by interpretations that descend from the Copenhagen tradition, such as consistent histories (often advertised as "Copenhagen done right"),[76]: 2839 as well as QBism.[77]

Many-worlds interpretation of quantum mechanics

The many-worlds interpretation, also known as the Everett interpretation, is dynamically local,[78]: 17 meaning that it does not call for action at a distance, and deterministic, because it consists of the unitary part of quantum mechanics without collapse. It can generate correlations that violate a Bell inequality because it violates an implicit assumption by Bell that measurements have a single outcome. In fact, Bell's theorem can be proven in the many-worlds framework from the assumption that a measurement has a single outcome. Therefore, a violation of a Bell inequality can be interpreted as a demonstration that measurements have multiple outcomes.[79]

The explanation it provides for the Bell correlations is that when Alice and Bob make their measurements, they split into local branches. From the point of view of each copy of Alice, there are multiple copies of Bob experiencing different results, so Bob cannot have a definite result, and the same is true from the point of view of each copy of Bob. They will obtain a mutually well-defined result only when their future light cones overlap. At this point we can say that the Bell correlation starts existing, but it was produced by a purely local mechanism. Therefore, the violation of a Bell inequality cannot be interpreted as a proof of non-locality.[78]: 28

Non-local hidden variables

Most advocates of the hidden-variables idea believe that experiments have ruled out local hidden variables.[note 6] They are ready to give up locality, explaining the violation of Bell's inequality by means of a non-local hidden variable theory, in which the particles exchange information about their states. This is the basis of the Bohm interpretation of quantum mechanics, which requires that all particles in the universe be able to instantaneously exchange information with all others. One challenge for non-local hidden variable theories is to explain why this instantaneous communication can exist at the level of the hidden variables, but it cannot be used to send signals.[82] A 2007 experiment ruled out a large class of non-Bohmian non-local hidden variable theories, though not Bohmian mechanics itself.[83]

The transactional interpretation, which postulates waves traveling both backwards and forwards in time, is likewise non-local.[84]

Superdeterminism

A necessary assumption to derive Bell's theorem is that the hidden variables are not correlated with the measurement settings. This assumption has been justified on the grounds that the experimenter has "free will" to choose the settings, and that it is necessary to do science in the first place. A (hypothetical) theory where the choice of measurement is necessarily correlated with the system being measured is known as superdeterministic.[61]

A few advocates of deterministic models have not given up on local hidden variables. For example, Gerard 't Hooft has argued that superdeterminism cannot be dismissed.[85]

Notes

- For convenience, we assume that the response of the detector to the underlying property is deterministic. This assumption can be replaced; it is equivalent to postulating a joint probability distribution over all the observables of the experiment.[11][12]

- In more detail, as developed by Paul Dirac,[20] David Hilbert,[21] John von Neumann,[22] and Hermann Weyl,[23] the state of a quantum mechanical system is a vector $|\psi\rangle$ belonging to a (separable) Hilbert space $\mathcal{H}$. Physical quantities of interest (position, momentum, energy, spin) are represented by "observables", which are self-adjoint linear operators acting on the Hilbert space. When an observable is measured, the result will be one of its eigenvalues with probability given by the Born rule: in the simplest case the eigenvalue $\eta$ is non-degenerate and the probability is given by $|\langle \eta | \psi \rangle|^2$, where $|\eta\rangle$ is its associated eigenvector. More generally, the eigenvalue is degenerate and the probability is given by $\langle \psi | P_\eta | \psi \rangle$, where $P_\eta$ is the projector onto its associated eigenspace. For the purposes of this discussion, we can take the eigenvalues to be non-degenerate.

- See Reichenbach[37] and Jammer,[38]: 276 Mermin and Schack,[39] and for Einstein's remarks, Clauser and Shimony[40] and Wick.[41]: 286

- A hidden-variable theory that is deterministic implies that the probability of a given outcome is always either 0 or 1. For example, a Stern–Gerlach measurement on a spin-1 atom will report that the atom's angular momentum along the chosen axis is one of three possible values, which can be designated $-$, $0$ and $+$. In a deterministic hidden-variable theory, there exists an underlying physical property that fixes the result found in the measurement. Conditional on the value of the underlying physical property, any given outcome (for example, a result of $+$) must be either impossible or guaranteed. But Gleason's theorem implies that there can be no such deterministic probability measure, because it proves that any probability measure must take the form of a mapping $u \to \langle \rho u, u \rangle$ for some density operator $\rho$. This mapping is continuous on the unit sphere of the Hilbert space, and since this unit sphere is connected, no continuous probability measure on it can be deterministic.[50]: §1.3

- This was reported by Max Jammer.[38]: 308 Lee is best known for his prediction with Chen-Ning Yang of the violation of parity conservation, a prediction that earned them the Nobel Prize after it was confirmed by Chien-Shiung Wu, who did not share in the Prize.

- E. T. Jaynes was one exception,[80] but Jaynes' arguments have not generally been found persuasive.[81]

References

- Bell, John S. (1987). Speakable and Unspeakable in Quantum Mechanics. Cambridge University Press. p. 65. ISBN 9780521368698. OCLC 15053677.

- Einstein, A.; Podolsky, B.; Rosen, N. (1935-05-15). "Can Quantum-Mechanical Description of Physical Reality be Considered Complete?". Physical Review. 47 (10): 777–780. Bibcode:1935PhRv...47..777E. doi:10.1103/PhysRev.47.777.

- Bell, J. S. (1964). "On the Einstein Podolsky Rosen Paradox" (PDF). Physics Physique Физика. 1 (3): 195–200. doi:10.1103/PhysicsPhysiqueFizika.1.195.

- Parker, Sybil B. (1994). McGraw-Hill Encyclopaedia of Physics (2nd ed.). McGraw-Hill. p. 542. ISBN 978-0-07-051400-3.

- Mermin, N. David (July 1993). "Hidden Variables and the Two Theorems of John Bell" (PDF). Reviews of Modern Physics. 65 (3): 803–815. arXiv:1802.10119. Bibcode:1993RvMP...65..803M. doi:10.1103/RevModPhys.65.803. S2CID 119546199.

- "The Nobel Prize in Physics 2022". Nobel Prize (Press release). The Royal Swedish Academy of Sciences. October 4, 2022. Retrieved 6 October 2022.

- The BIG Bell Test Collaboration (9 May 2018). "Challenging local realism with human choices". Nature. 557 (7704): 212–216. arXiv:1805.04431. Bibcode:2018Natur.557..212B. doi:10.1038/s41586-018-0085-3. PMID 29743691. S2CID 13665914.

- Wolchover, Natalie (2017-02-07). "Experiment Reaffirms Quantum Weirdness". Quanta Magazine. Retrieved 2020-02-08.

- Shimony, Abner. "Bell's Theorem". In Zalta, Edward N. (ed.). Stanford Encyclopedia of Philosophy.

- Nielsen, Michael A.; Chuang, Isaac L. (2010). Quantum Computation and Quantum Information (2nd ed.). Cambridge: Cambridge University Press. ISBN 978-1-107-00217-3. OCLC 844974180.

- Fine, Arthur (1982-02-01). "Hidden Variables, Joint Probability, and the Bell Inequalities". Physical Review Letters. 48 (5): 291–295. Bibcode:1982PhRvL..48..291F. doi:10.1103/PhysRevLett.48.291. ISSN 0031-9007.

- Braunstein, Samuel L.; Caves, Carlton M. (August 1990). "Wringing out better Bell inequalities". Annals of Physics. 202 (1): 22–56. Bibcode:1990AnPhy.202...22B. doi:10.1016/0003-4916(90)90339-P.

- Rau, Jochen (2021). Quantum theory : an information processing approach. Oxford University Press. ISBN 978-0-192-65027-6. OCLC 1256446911.

- Cleve, R.; Hoyer, P.; Toner, B.; Watrous, J. (2004). "Consequences and limits of nonlocal strategies". Proceedings. 19th IEEE Annual Conference on Computational Complexity, 2004. IEEE. pp. 236–249. arXiv:quant-ph/0404076. Bibcode:2004quant.ph..4076C. doi:10.1109/CCC.2004.1313847. ISBN 0-7695-2120-7. OCLC 55954993. S2CID 8077237.

- Barnum, H.; Beigi, S.; Boixo, S.; Elliott, M. B.; Wehner, S. (2010-04-06). "Local Quantum Measurement and No-Signaling Imply Quantum Correlations". Physical Review Letters. 104 (14): 140401. arXiv:0910.3952. Bibcode:2010PhRvL.104n0401B. doi:10.1103/PhysRevLett.104.140401. ISSN 0031-9007. PMID 20481921. S2CID 17298392.

- Griffiths, David J. (2005). Introduction to Quantum Mechanics (2nd ed.). Upper Saddle River, NJ: Pearson Prentice Hall. ISBN 0-13-111892-7. OCLC 53926857.

- Greenberger, D.; Horne, M.; Shimony, A.; Zeilinger, A. (1990). "Bell's theorem without inequalities". American Journal of Physics. 58 (12): 1131. Bibcode:1990AmJPh..58.1131G. doi:10.1119/1.16243.

- Mermin, N. David (1990). "Quantum mysteries revisited". American Journal of Physics. 58 (8): 731–734. Bibcode:1990AmJPh..58..731M. doi:10.1119/1.16503.

- Brassard, Gilles; Broadbent, Anne; Tapp, Alain (2005). "Recasting Mermin's multi-player game into the framework of pseudo-telepathy". Quantum Information and Computation. 5 (7): 538–550. arXiv:quant-ph/0408052. Bibcode:2004quant.ph..8052B. doi:10.26421/QIC5.7-2.

- Dirac, Paul Adrien Maurice (1930). The Principles of Quantum Mechanics. Oxford: Clarendon Press.

- Hilbert, David (2009). Sauer, Tilman; Majer, Ulrich (eds.). Lectures on the Foundations of Physics 1915–1927: Relativity, Quantum Theory and Epistemology. Springer. doi:10.1007/b12915. ISBN 978-3-540-20606-4. OCLC 463777694.

- von Neumann, John (1932). Mathematische Grundlagen der Quantenmechanik. Berlin: Springer. English translation: Mathematical Foundations of Quantum Mechanics. Translated by Beyer, Robert T. Princeton University Press. 1955.

- Weyl, Hermann (1950) [1931]. The Theory of Groups and Quantum Mechanics. Translated by Robertson, H. P. Dover. ISBN 978-0-486-60269-1. Translated from the German Gruppentheorie und Quantenmechanik (2nd ed.). S. Hirzel Verlag. 1931.

- Peres, Asher (1993). Quantum Theory: Concepts and Methods. Kluwer. ISBN 0-7923-2549-4. OCLC 28854083.

- Redhead, Michael; Brown, Harvey (1991-07-01). "Nonlocality in Quantum Mechanics". Proceedings of the Aristotelian Society, Supplementary Volumes. 65 (1): 119–160. doi:10.1093/aristoteliansupp/65.1.119. ISSN 0309-7013. JSTOR 4106773. "A similar approach was arrived at independently by Simon Kochen, although never published (private communication)."

- ^ Heywood, Peter; Redhead, Michael L. G. (May 1983). "Nonlocality and the Kochen–Specker paradox". Foundations of Physics. 13 (5): 481–499. Bibcode:1983FoPh...13..481H. doi:10.1007/BF00729511. ISSN 0015-9018. S2CID 120340929.

- ^ Stairs, Allen (December 1983). "Quantum Logic, Realism, and Value Definiteness". Philosophy of Science. 50 (4): 578–602. doi:10.1086/289140. ISSN 0031-8248. S2CID 122885859.

- ^ Brown, H. R.; Svetlichny, G. (November 1990). "Nonlocality and Gleason's lemma. Part I. Deterministic theories". Foundations of Physics. 20 (11): 1379–1387. Bibcode:1990FoPh...20.1379B. doi:10.1007/BF01883492. ISSN 0015-9018. S2CID 122868901.

- ^ Glick, David; Boge, Florian J. (2019-10-22). "Is the Reality Criterion Analytic?". Erkenntnis. 86 (6): 1445–1451. arXiv:1909.11893. Bibcode:2019arXiv190911893G. doi:10.1007/s10670-019-00163-w. ISSN 0165-0106. S2CID 202889160.

- ^ Conway, John; Kochen, Simon (2006). "The Free Will Theorem". Foundations of Physics. 36 (10): 1441. arXiv:quant-ph/0604079. Bibcode:2006FoPh...36.1441C. doi:10.1007/s10701-006-9068-6. S2CID 12999337.

- ^ Rehmeyer, Julie (2008-08-15). "Do subatomic particles have free will?". Science News. Retrieved 2022-04-23.

- ^ Thomas, Rachel (2011-12-27). "John Conway – discovering free will (part I)". Plus Magazine. Retrieved 2022-04-23.

- ^ Conway, John H.; Kochen, Simon (2009). "The strong free will theorem" (PDF). Notices of the AMS. 56 (2): 226–232.

- ^ Werner, Reinhard F. (1989-10-01). "Quantum states with Einstein–Podolsky–Rosen correlations admitting a hidden-variable model". Physical Review A. 40 (8): 4277–4281. Bibcode:1989PhRvA..40.4277W. doi:10.1103/PhysRevA.40.4277. ISSN 0556-2791. PMID 9902666.

- ^ Spekkens, Robert W. (2007-03-19). "Evidence for the epistemic view of quantum states: A toy theory". Physical Review A. 75 (3): 032110. arXiv:quant-ph/0401052. Bibcode:2007PhRvA..75c2110S. doi:10.1103/PhysRevA.75.032110. ISSN 1050-2947. S2CID 117284016.

- ^ Catani, Lorenzo; Browne, Dan E. (2017-07-27). "Spekkens' toy model in all dimensions and its relationship with stabiliser quantum mechanics". New Journal of Physics. 19 (7): 073035. arXiv:1701.07801. Bibcode:2017NJPh...19g3035C. doi:10.1088/1367-2630/aa781c. ISSN 1367-2630. S2CID 119428107.

- ^ Reichenbach, Hans (1944). Philosophic Foundations of Quantum Mechanics. University of California Press. p. 14. OCLC 872622725.

- ^ a b Jammer, Max (1974). The Philosophy of Quantum Mechanics. John Wiley and Sons. ISBN 0-471-43958-4.

- ^ Mermin, N. David; Schack, Rüdiger (2018). "Homer nodded: von Neumann's surprising oversight". Foundations of Physics. 48 (9): 1007–1020. arXiv:1805.10311. Bibcode:2018FoPh...48.1007M. doi:10.1007/s10701-018-0197-5. S2CID 118951033.

- ^ Clauser, J. F.; Shimony, A. (1978). "Bell's theorem: Experimental tests and implications" (PDF). Reports on Progress in Physics. 41 (12): 1881–1927. Bibcode:1978RPPh...41.1881C. CiteSeerX 10.1.1.482.4728. doi:10.1088/0034-4885/41/12/002. S2CID 250885175. Archived (PDF) from the original on 2017-09-23. Retrieved 2017-10-28.

- ^ a b Wick, David (1995). "Bell's Theorem". The Infamous Boundary: Seven Decades of Heresy in Quantum Physics. New York: Springer. pp. 92–100. doi:10.1007/978-1-4612-4030-3_11. ISBN 978-0-387-94726-6.

- ^ Conway, John; Kochen, Simon (2002). "The Geometry of the Quantum Paradoxes". In Bertlmann, Reinhold A.; Zeilinger, Anton (eds.). Quantum [Un]speakables: From Bell to Quantum Information. Berlin: Springer. pp. 257–269. ISBN 3-540-42756-2. OCLC 49404213.

- ^ Einstein, Albert (March 1936). "Physics and reality". Journal of the Franklin Institute. 221 (3): 349–382. Bibcode:1936FrInJ.221..349E. doi:10.1016/S0016-0032(36)91047-5.

- ^ Harrigan, Nicholas; Spekkens, Robert W. (2010). "Einstein, incompleteness, and the epistemic view of quantum states". Foundations of Physics. 40 (2): 125. arXiv:0706.2661. Bibcode:2010FoPh...40..125H. doi:10.1007/s10701-009-9347-0. S2CID 32755624.

- ^ Bohm, David (1989) [1951]. Quantum Theory (Dover reprint ed.). Prentice-Hall. pp. 614–623. ISBN 978-0-486-65969-5. OCLC 1103789975.

- ^ Wu, C.-S.; Shaknov, I. (1950). "The Angular Correlation of Scattered Annihilation Radiation". Physical Review. 77 (1): 136. Bibcode:1950PhRv...77..136W. doi:10.1103/PhysRev.77.136.

- ^ Mackey, George W. (1957). "Quantum Mechanics and Hilbert Space". The American Mathematical Monthly. 64 (8P2): 45–57. doi:10.1080/00029890.1957.11989120. JSTOR 2308516.

- ^ Gleason, Andrew M. (1957). "Measures on the closed subspaces of a Hilbert space". Indiana University Mathematics Journal. 6 (4): 885–893. doi:10.1512/iumj.1957.6.56050. MR 0096113.

- ^ Chernoff, Paul R. (2009). "Andy Gleason and Quantum Mechanics" (PDF). Notices of the AMS. 56 (10): 1253–1259.

- ^ Wilce, A. (2017). "Quantum Logic and Probability Theory". Stanford Encyclopedia of Philosophy. Metaphysics Research Lab, Stanford University.

- ^ Shimony, Abner (1984). "Contextual Hidden Variable Theories and Bell's Inequalities". British Journal for the Philosophy of Science. 35 (1): 25–45. doi:10.1093/bjps/35.1.25.

- ^ Peres, Asher (1991). "Two simple proofs of the Kochen-Specker theorem". Journal of Physics A: Mathematical and General. 24 (4): L175–L178. Bibcode:1991JPhA...24L.175P. doi:10.1088/0305-4470/24/4/003. ISSN 0305-4470.

- ^ Whitaker, Andrew (2016). John Stewart Bell and Twentieth Century Physics: Vision and Integrity. Oxford University Press. ISBN 978-0-19-874299-9.

- ^ a b Bell, J. S. (1966). "On the problem of hidden variables in quantum mechanics". Reviews of Modern Physics. 38 (3): 447–452. Bibcode:1966RvMP...38..447B. doi:10.1103/revmodphys.38.447. OSTI 1444158.

- ^ Kaiser, David (2012-01-30). "How the Hippies Saved Physics: Science, Counterculture, and the Quantum Revival [Excerpt]". Scientific American. Retrieved 2020-02-11.

- ^ Freedman, S. J.; Clauser, J. F. (1972). "Experimental test of local hidden-variable theories" (PDF). Physical Review Letters. 28 (14): 938–941. Bibcode:1972PhRvL..28..938F. doi:10.1103/PhysRevLett.28.938.

- ^ Freedman, Stuart Jay (1972-05-05). Experimental test of local hidden-variable theories (PDF) (PhD). University of California, Berkeley.

- ^ Aspect, Alain; Dalibard, Jean; Roger, Gérard (1982). "Experimental Test of Bell's Inequalities Using Time-Varying Analyzers". Physical Review Letters. 49 (25): 1804–7. Bibcode:1982PhRvL..49.1804A. doi:10.1103/PhysRevLett.49.1804.

- ^ Pan, Jian-Wei; Bouwmeester, D.; Daniell, M.; Weinfurter, H.; Zeilinger, A. (2000). "Experimental test of quantum nonlocality in three-photon GHZ entanglement". Nature. 403 (6769): 515–519. Bibcode:2000Natur.403..515P. doi:10.1038/35000514. PMID 10676953. S2CID 4309261.

- ^ Dehlinger, Dietrich; Mitchell, M. W. (2002). "Entangled photons, nonlocality, and Bell inequalities in the undergraduate laboratory". American Journal of Physics. 70 (9): 903–910. arXiv:quant-ph/0205171. Bibcode:2002AmJPh..70..903D. doi:10.1119/1.1498860. S2CID 49487096.

- ^ a b Larsson, Jan-Åke (2014). "Loopholes in Bell inequality tests of local realism". Journal of Physics A: Mathematical and Theoretical. 47 (42): 424003. arXiv:1407.0363. Bibcode:2014JPhA...47P4003L. doi:10.1088/1751-8113/47/42/424003. S2CID 40332044.

- ^ Gerhardt, I.; Liu, Q.; Lamas-Linares, A.; Skaar, J.; Scarani, V.; et al. (2011). "Experimentally faking the violation of Bell's inequalities". Physical Review Letters. 107 (17): 170404. arXiv:1106.3224. Bibcode:2011PhRvL.107q0404G. doi:10.1103/PhysRevLett.107.170404. PMID 22107491. S2CID 16306493.

- ^ Merali, Zeeya (27 August 2015). "Quantum 'spookiness' passes toughest test yet". Nature News. 525 (7567): 14–15. Bibcode:2015Natur.525...14M. doi:10.1038/nature.2015.18255. PMID 26333448. S2CID 4409566.

- ^ Markoff, Jack (21 October 2015). "Sorry, Einstein. Quantum Study Suggests 'Spooky Action' Is Real". New York Times. Retrieved 21 October 2015.

- ^ Hensen, B.; et al. (21 October 2015). "Loophole-free Bell inequality violation using electron spins separated by 1.3 kilometres". Nature. 526 (7575): 682–686. arXiv:1508.05949. Bibcode:2015Natur.526..682H. doi:10.1038/nature15759. PMID 26503041. S2CID 205246446.

- ^ Shalm, L. K.; et al. (16 December 2015). "Strong Loophole-Free Test of Local Realism". Physical Review Letters. 115 (25): 250402. arXiv:1511.03189. Bibcode:2015PhRvL.115y0402S. doi:10.1103/PhysRevLett.115.250402. PMC 5815856. PMID 26722906.

- ^ Giustina, M.; et al. (16 December 2015). "Significant-Loophole-Free Test of Bell's Theorem with Entangled Photons". Physical Review Letters. 115 (25): 250401. arXiv:1511.03190. Bibcode:2015PhRvL.115y0401G. doi:10.1103/PhysRevLett.115.250401. PMID 26722905. S2CID 13789503.

- ^ Aspect, Alain (16 December 2015). "Closing the Door on Einstein and Bohr's Quantum Debate". Physics. 8: 123. Bibcode:2015PhyOJ...8..123A. doi:10.1103/Physics.8.123.

- ^ Ahlander, Johan; Burger, Ludwig; Pollard, Niklas (2022-10-04). "Nobel physics prize goes to sleuths of 'spooky' quantum science". Reuters. Retrieved 2022-10-04.

- ^ Schlosshauer, Maximilian; Kofler, Johannes; Zeilinger, Anton (2013-01-06). "A Snapshot of Foundational Attitudes Toward Quantum Mechanics". Studies in History and Philosophy of Science Part B: Studies in History and Philosophy of Modern Physics. 44 (3): 222–230. arXiv:1301.1069. Bibcode:2013SHPMP..44..222S. doi:10.1016/j.shpsb.2013.04.004. S2CID 55537196.

- ^ Werner, Reinhard F. (2014-10-24). "Comment on 'What Bell did'". Journal of Physics A: Mathematical and Theoretical. 47 (42): 424011. Bibcode:2014JPhA...47P4011W. doi:10.1088/1751-8113/47/42/424011. ISSN 1751-8113. S2CID 122180759.

- ^ Żukowski, Marek (2017). "Bell's Theorem Tells Us Not What Quantum Mechanics is, but What Quantum Mechanics is Not". In Bertlmann, Reinhold; Zeilinger, Anton (eds.). Quantum [Un]Speakables II. The Frontiers Collection. Cham: Springer International Publishing. pp. 175–185. arXiv:1501.05640. doi:10.1007/978-3-319-38987-5_10. ISBN 978-3-319-38985-1. S2CID 119214547.

- ^ Omnès, R. (1994). The Interpretation of Quantum Mechanics. Princeton University Press. ISBN 978-0-691-03669-4. OCLC 439453957.

- ^ Peierls, Rudolf (1979). Surprises in Theoretical Physics. Princeton University Press. pp. 26–29. ISBN 0-691-08241-3.

- ^ Mermin, N. D. (1999). "What Do These Correlations Know About Reality? Nonlocality and the Absurd". Foundations of Physics. 29: 571–587. arXiv:quant-ph/9807055. Bibcode:1998quant.ph..7055M. doi:10.1023/A:1018864225930.

- ^ Hohenberg, P. C. (2010-10-05). "Colloquium: An introduction to consistent quantum theory". Reviews of Modern Physics. 82 (4): 2835–2844. arXiv:0909.2359. Bibcode:2010RvMP...82.2835H. doi:10.1103/RevModPhys.82.2835. ISSN 0034-6861. S2CID 20551033.

- ^ Healey, Richard (2016). "Quantum-Bayesian and Pragmatist Views of Quantum Theory". In Zalta, Edward N. (ed.). Stanford Encyclopedia of Philosophy. Metaphysics Research Lab, Stanford University. Archived from the original on 2021-08-17. Retrieved 2021-09-16.

- ^ a b Brown, Harvey R.; Timpson, Christopher G. (2016). "Bell on Bell's Theorem: The Changing Face of Nonlocality". In Bell, Mary; Gao, Shan (eds.). Quantum Nonlocality and Reality: 50 years of Bell's theorem. Cambridge University Press. pp. 91–123. arXiv:1501.03521. doi:10.1017/CBO9781316219393.008. ISBN 9781316219393. S2CID 118686956.

- ^ Deutsch, David; Hayden, Patrick (2000). "Information flow in entangled quantum systems". Proceedings of the Royal Society A. 456 (1999): 1759–1774. arXiv:quant-ph/9906007. Bibcode:2000RSPSA.456.1759D. doi:10.1098/rspa.2000.0585. S2CID 13998168.

- ^ Jaynes, E. T. (1989). "Clearing up Mysteries — the Original Goal". Maximum Entropy and Bayesian Methods (PDF). pp. 1–27. CiteSeerX 10.1.1.46.1264. doi:10.1007/978-94-015-7860-8_1. ISBN 978-90-481-4044-2. Archived (PDF) from the original on 2011-10-28. Retrieved 2011-10-18.

- ^ Gill, Richard D. (2002). "Time, Finite Statistics, and Bell's Fifth Position". Proceedings of the Conference Foundations of Probability and Physics - 2: Växjö (Småland), Sweden, June 2-7, 2002. Vol. 5. Växjö University Press. pp. 179–206. arXiv:quant-ph/0301059.

- ^ Wood, Christopher J.; Spekkens, Robert W. (2015-03-03). "The lesson of causal discovery algorithms for quantum correlations: causal explanations of Bell-inequality violations require fine-tuning". New Journal of Physics. 17 (3): 033002. arXiv:1208.4119. Bibcode:2015NJPh...17c3002W. doi:10.1088/1367-2630/17/3/033002. ISSN 1367-2630. S2CID 118518558.

- ^ Gröblacher, Simon; Paterek, Tomasz; Kaltenbaek, Rainer; Brukner, Časlav; Żukowski, Marek; Aspelmeyer, Markus; Zeilinger, Anton (2007). "An experimental test of non-local realism". Nature. 446 (7138): 871–5. arXiv:0704.2529. Bibcode:2007Natur.446..871G. doi:10.1038/nature05677. PMID 17443179. S2CID 4412358.

- ^ Kastner, Ruth E. (May 2010). "The quantum liar experiment in Cramer's transactional interpretation". Studies in History and Philosophy of Science Part B: Studies in History and Philosophy of Modern Physics. 41 (2): 86–92. arXiv:0906.1626. Bibcode:2010SHPMP..41...86K. doi:10.1016/j.shpsb.2010.01.001. S2CID 16242184. Archived from the original on 2018-06-24. Retrieved 2021-09-16.

- ^ 't Hooft, Gerard (2016). The Cellular Automaton Interpretation of Quantum Mechanics. Fundamental Theories of Physics. Vol. 185. Springer. doi:10.1007/978-3-319-41285-6. ISBN 978-3-319-41284-9. OCLC 951761277. S2CID 7779840. Archived from the original on 2021-12-29. Retrieved 2020-08-27.

Further reading

The following are intended for general audiences.

- Aczel, Amir D. (2001). Entanglement: The greatest mystery in physics. New York: Four Walls Eight Windows.

- Afriat, A.; Selleri, F. (1999). The Einstein, Podolsky and Rosen Paradox. New York and London: Plenum Press.

- Baggott, J. (1992). The Meaning of Quantum Theory. Oxford University Press.

- Gilder, Louisa (2008). The Age of Entanglement: When Quantum Physics Was Reborn. New York: Alfred A. Knopf.

- Greene, Brian (2004). The Fabric of the Cosmos. Vintage. ISBN 0-375-72720-5.

- Mermin, N. David (1981). "Bringing home the atomic world: Quantum mysteries for anybody". American Journal of Physics. 49 (10): 940–943. Bibcode:1981AmJPh..49..940M. doi:10.1119/1.12594. S2CID 122724592.

- Mermin, N. David (April 1985). "Is the moon there when nobody looks? Reality and the quantum theory". Physics Today. 38 (4): 38–47. Bibcode:1985PhT....38d..38M. doi:10.1063/1.880968.

The following are more technically oriented.

- Aspect, A.; et al. (1981). "Experimental Tests of Realistic Local Theories via Bell's Theorem". Phys. Rev. Lett. 47 (7): 460–463. Bibcode:1981PhRvL..47..460A. doi:10.1103/physrevlett.47.460.

- Aspect, A.; et al. (1982). "Experimental Realization of Einstein–Podolsky–Rosen–Bohm Gedankenexperiment: A New Violation of Bell's Inequalities". Phys. Rev. Lett. 49 (2): 91–94. Bibcode:1982PhRvL..49...91A. doi:10.1103/physrevlett.49.91.

- Aspect, A.; Grangier, P. (1985). "About resonant scattering and other hypothetical effects in the Orsay atomic-cascade experiment tests of Bell inequalities: a discussion and some new experimental data". Lettere al Nuovo Cimento. 43 (8): 345–348. doi:10.1007/bf02746964. S2CID 120840672.

- Bell, J. S. (1971). "Introduction to the hidden variable question". Proceedings of the International School of Physics 'Enrico Fermi', Course IL, Foundations of Quantum Mechanics. pp. 171–81.

- Bell, J. S. (2004). "Bertlmann's Socks and the Nature of Reality". Speakable and Unspeakable in Quantum Mechanics. Cambridge University Press. pp. 139–158.

- D'Espagnat, B. (1979). "The Quantum Theory and Reality" (PDF). Scientific American. 241 (5): 158–181. Bibcode:1979SciAm.241e.158D. doi:10.1038/scientificamerican1179-158. Archived (PDF) from the original on 2009-03-27. Retrieved 2009-03-18.

- Fry, E. S.; Walther, T.; Li, S. (1995). "Proposal for a loophole-free test of the Bell inequalities" (PDF). Phys. Rev. A. 52 (6): 4381–4395. Bibcode:1995PhRvA..52.4381F. doi:10.1103/physreva.52.4381. hdl:1969.1/126533. PMID 9912775. Archived from the original on 2021-12-29. Retrieved 2018-03-19.

- Fry, E. S.; Walther, T. (2002). "Atom based tests of the Bell Inequalities — the legacy of John Bell continues". In Bertlmann, R. A.; Zeilinger, A. (eds.). Quantum [Un]speakables. Berlin-Heidelberg-New York: Springer. pp. 103–117.

- Goldstein, Sheldon; et al. (2011). "Bell's theorem". Scholarpedia. 6 (10): 8378. Bibcode:2011SchpJ...6.8378G. doi:10.4249/scholarpedia.8378.

- Griffiths, R. B. (2001). Consistent Quantum Theory. Cambridge University Press. ISBN 978-0-521-80349-6. OCLC 1180958776.

- Hardy, L. (1993). "Nonlocality for 2 particles without inequalities for almost all entangled states". Physical Review Letters. 71 (11): 1665–1668. Bibcode:1993PhRvL..71.1665H. doi:10.1103/physrevlett.71.1665. PMID 10054467. S2CID 11839894.

- Matsukevich, D. N.; Maunz, P.; Moehring, D. L.; Olmschenk, S.; Monroe, C. (2008). "Bell Inequality Violation with Two Remote Atomic Qubits". Phys. Rev. Lett. 100 (15): 150404. arXiv:0801.2184. Bibcode:2008PhRvL.100o0404M. doi:10.1103/physrevlett.100.150404. PMID 18518088. S2CID 11536757.

- Rieffel, Eleanor G.; Polak, Wolfgang H. (4 March 2011). "4.4 EPR Paradox and Bell's Theorem". Quantum Computing: A Gentle Introduction. MIT Press. pp. 60–65. ISBN 978-0-262-01506-6.

- Sulcs, S. (2003). "The Nature of Light and Twentieth Century Experimental Physics". Foundations of Science. 8 (4): 365–391. doi:10.1023/A:1026323203487. S2CID 118769677.

- van Fraassen, B. C. (1991). Quantum Mechanics: An Empiricist View. Clarendon Press. ISBN 978-0-198-24861-3. OCLC 22906474.